- The paper demonstrates a force-centric imitation learning approach that integrates a novel ForceCapture system with HybridIL, enabling precise contact-rich manipulation.

- It introduces a low-cost, sensor-based data collection method that efficiently captures natural human force interactions, reducing data collection time by two-thirds compared to teleoperation.

- On a vegetable peeling task, HybridIL outperforms the baseline models, reaching a 100% success rate on initial task execution and 85% when success is also judged by peel length.

Introduction

The paper "ForceMimic: Force-Centric Imitation Learning with Force-Motion Capture System for Contact-Rich Manipulation" introduces a robot learning framework for force-centric manipulation tasks. Human manipulation in contact-rich environments often requires precise force application, a capability that is under-explored in current robotic systems, which primarily focus on trajectory-based tasks. ForceMimic addresses this gap with a force-centric data collection and imitation learning system aimed at tasks that require accurate force management, such as vegetable peeling.

System Overview

The core of this research is the ForceCapture system and the Hybrid Imitation Learning (HybridIL) algorithm. ForceCapture is a handheld, robot-free device that uses a SLAM camera and a force sensor to collect force-centric manipulation data in a natural way. This data is then used to train the HybridIL model, which predicts both position and wrench parameters and executes them through a hybrid control strategy that combines force and position control primitives.

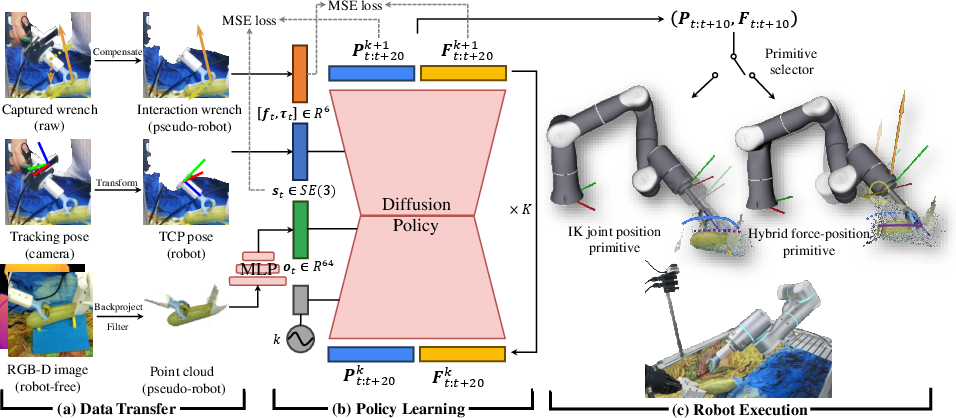

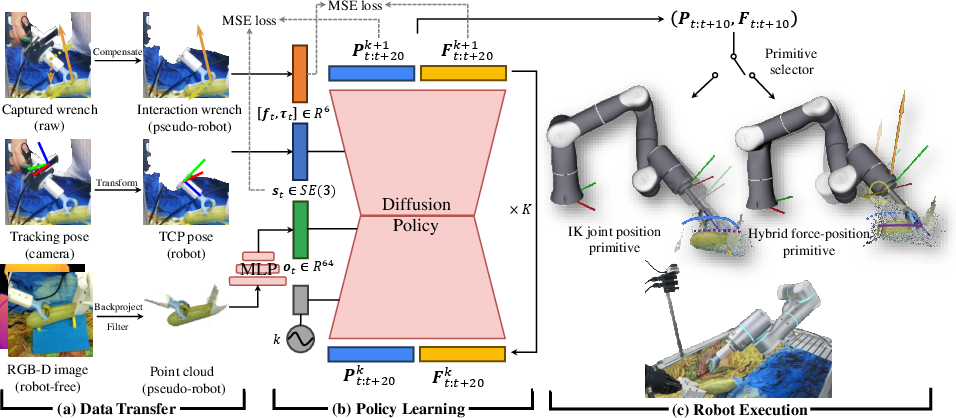

Figure 1: Overview of the pipeline for ForceMimic, illustrating the transfer of robot-free data to pseudo-robot data, the learning of a diffusion-based policy, and the execution of actions through hybrid force-position control.

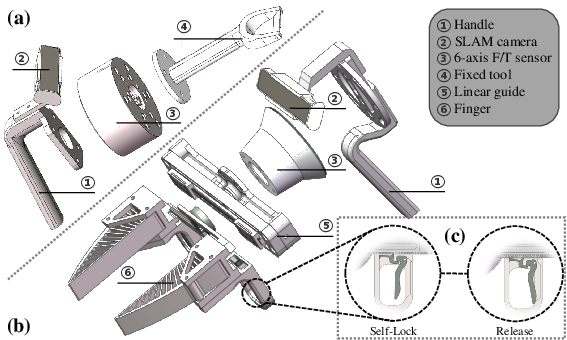

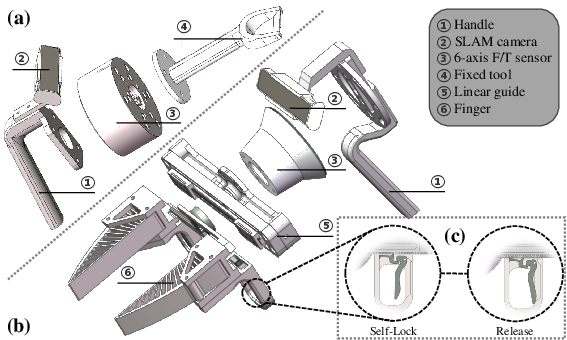

Hardware Design: ForceCapture

ForceCapture is designed to overcome challenges in collecting natural force interaction data. It employs a low-cost, scalable design that integrates a six-axis force sensor and a SLAM camera, allowing intuitive recording of manipulation data without requiring direct robot involvement.

Figure 2: Structure of ForceCapture showcasing both fixed-tool and movable gripper versions, including the unique self-lock function.

Methodology

Data Transfer

Raw force readings are compensated for gravitational and inertial forces to isolate the true interaction forces. RGB-D images captured during manipulation are converted into voxelized point clouds, which gives the robot learning models a consistent input format.
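The two processing steps described above can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `compensate_wrench` and `voxelize`, the sign convention (the sensor reports the load the tool transmits to it), the known tool mass and centre of mass, and the centroid-per-voxel rule are all hypothetical choices. Angular inertial terms are ignored for brevity, and back-projecting RGB-D pixels into 3D points is assumed to have happened already.

```python
import numpy as np

GRAVITY_W = np.array([0.0, 0.0, -9.81])  # world-frame gravity in m/s^2 (assumed z-up)


def compensate_wrench(wrench_s, R_ws, accel_w, tool_mass, com_s):
    """Subtract the tool's gravitational and linear inertial load from a raw wrench.

    wrench_s  : (6,) raw [fx, fy, fz, tx, ty, tz] in the sensor frame
    R_ws      : (3, 3) orientation of the sensor frame expressed in the world frame
    accel_w   : (3,) linear acceleration of the tool in the world frame
    tool_mass : mass of everything mounted past the sensor, in kg (assumed known)
    com_s     : (3,) centre of mass of that tool in the sensor frame, in m
    """
    # Load the sensor sees with no contact: weight minus the inertial term m*a.
    load_w = tool_mass * (GRAVITY_W - accel_w)
    load_s = R_ws.T @ load_w                 # express the load in the sensor frame
    torque_s = np.cross(com_s, load_s)       # moment of that load about the sensor origin
    return wrench_s - np.concatenate([load_s, torque_s])


def voxelize(points, voxel_size):
    """Reduce an (N, 3) point cloud to one centroid per occupied voxel."""
    keys = np.floor(points / voxel_size).astype(np.int64)   # integer voxel index per point
    _, inverse = np.unique(keys, axis=0, return_inverse=True)
    inverse = inverse.reshape(-1)            # keep 1-D across NumPy versions
    n_voxels = int(inverse.max()) + 1
    sums = np.zeros((n_voxels, 3))
    np.add.at(sums, inverse, points)         # accumulate the points falling in each voxel
    counts = np.bincount(inverse, minlength=n_voxels)
    return sums / counts[:, None]
```

With the tool held still and out of contact, the compensated wrench should be close to zero, which is a convenient sanity check when calibrating the assumed mass and centre of mass.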

HybridIL Learning Algorithm

HybridIL utilizes a diffusion policy framework to learn from force-centric demonstration data. The model predicts force-position actions, which are executed using custom control primitives that adapt the robot's actions based on the predicted interaction forces and trajectories.

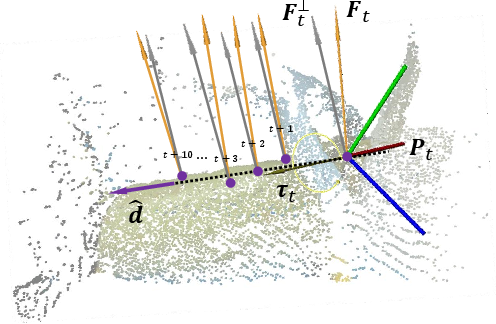

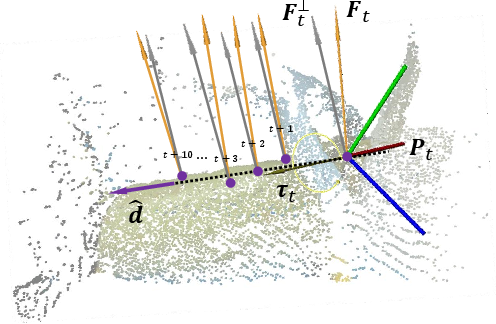

Figure 3: Illustration of how HybridIL interfaces with the control primitives, showing when hybrid force-position control is active and how the orthogonal components are calculated.
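One common way to realize a hybrid force-position primitive is to regulate force along the predicted force direction and track position in the orthogonal directions. The sketch below illustrates that idea only. The activation rule (a force-magnitude threshold), the proportional force gain, and the name `hybrid_command` are assumptions made for illustration; the summary does not specify the authors' controller. Both forces are taken to be the force the tool applies to the environment, expressed in the same frame.

```python
import numpy as np


def hybrid_command(pos_delta, force_pred, force_meas, force_threshold=2.0, kf=0.001):
    """Combine a predicted motion step and a predicted contact force into one command.

    pos_delta       : (3,) translation requested by the policy for this step, in m
    force_pred      : (3,) predicted force the tool should apply, in N
    force_meas      : (3,) force the tool currently applies, in N
    force_threshold : magnitude in N above which hybrid control is active (hypothetical)
    kf              : proportional gain in m/N for the force loop (hypothetical)

    Returns (active, delta): whether hybrid control is active, and the
    translation to command.
    """
    f_norm = np.linalg.norm(force_pred)
    if f_norm < force_threshold:
        # Little or no contact predicted: plain position tracking.
        return False, pos_delta

    n = force_pred / f_norm                      # unit vector along the predicted force
    # Position-controlled part: remove the motion component along n.
    delta_orth = pos_delta - np.dot(pos_delta, n) * n
    # Force-controlled part: step along n in proportion to the force error.
    force_error = f_norm - np.dot(force_meas, n)
    delta_force = kf * force_error * n
    return True, delta_orth + delta_force
```

For example, with a predicted force of 5 N straight down and a measured 3 N, the command keeps the sideways motion from the policy and replaces the vertical motion with a small downward step that increases the contact force.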

Experiments

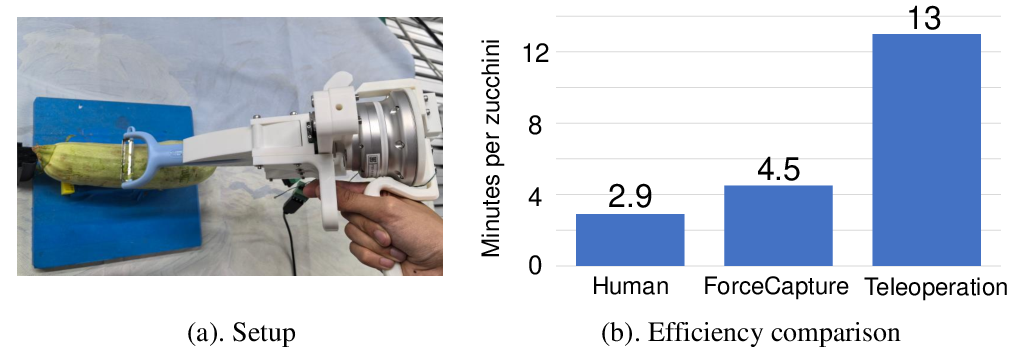

Data Collection Efficiency

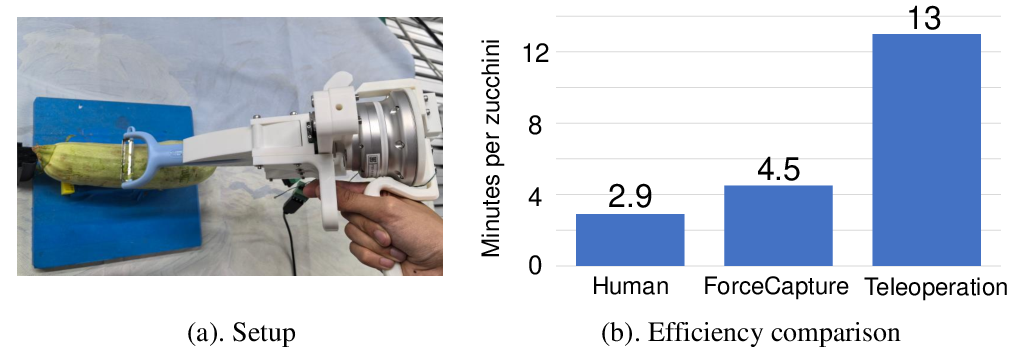

The study compared the data collection efficiency of ForceCapture with that of traditional teleoperation. On a zucchini peeling task, ForceCapture required only a third of the time needed by teleoperation, a result attributed to its natural handling and minimal training requirements.

Figure 4: Experimental setup showing the comparison of data collection efficiency between ForceCapture and conventional teleoperation approaches.

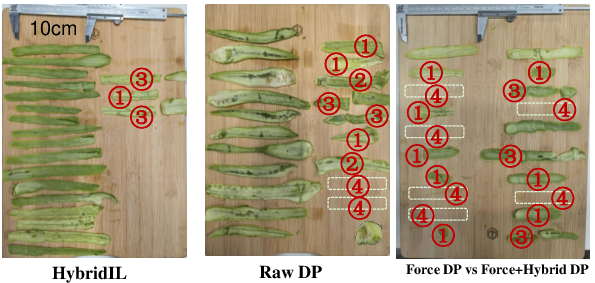

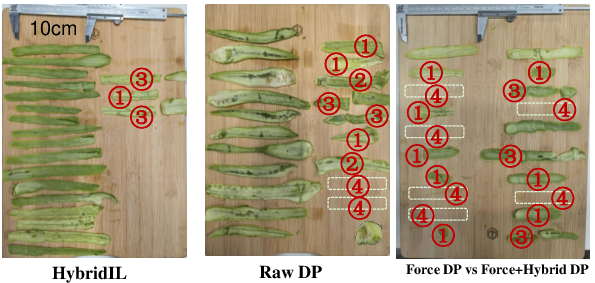

The HybridIL model was evaluated using a vegetable peeling task. The results indicate that HybridIL significantly outperforms baseline models, achieving a 100% success rate in initial task execution and an 85% success rate when considering peel length, demonstrating robustness in contact-rich manipulation scenarios.

Figure 5: Visualization of peeled skins from different models, highlighting the uniform success of HybridIL.

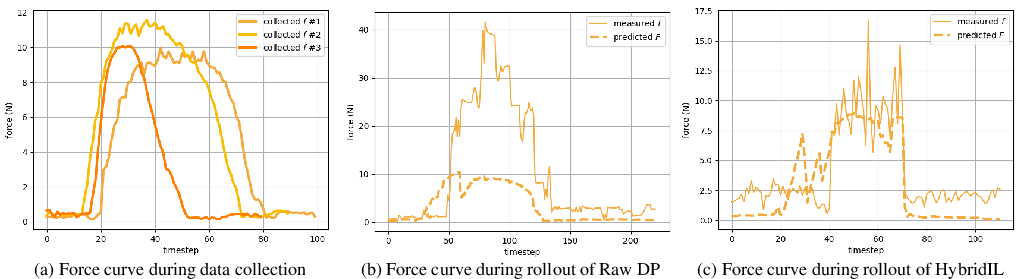

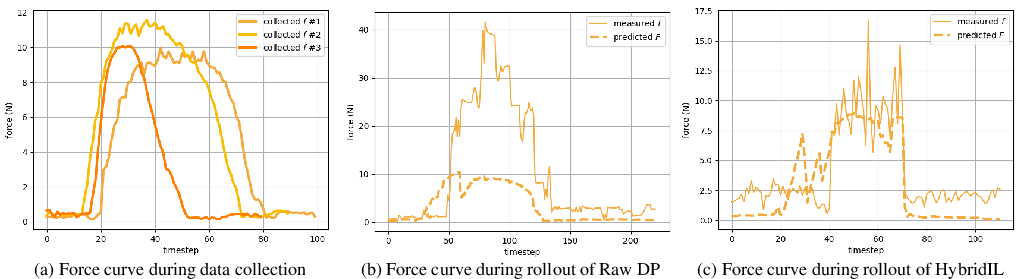

Figure 6: Recorded force curves for HybridIL showing consistency and alignment with dataset statistics, enabling effective manipulation without excessive force.

Conclusion

ForceMimic represents a significant step towards effective force-centric robotic manipulation, offering a complete system for capturing human force interactions and translating them into robotic tasks. Future directions include extending the approach to a broader range of tasks, enhancing data representation capabilities, and exploring dynamic control primitives that adaptively choose strategies based on real-time environmental interactions. Such advances could improve the precision and adaptability of robots in contact-rich environments.