Toward Understanding In-context vs. In-weight Learning

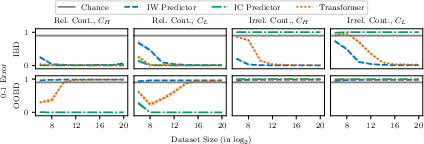

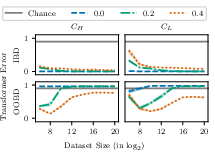

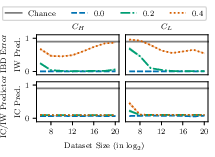

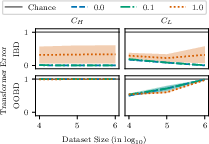

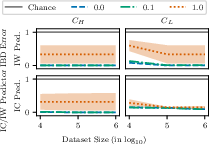

Abstract: It has recently been demonstrated empirically that in-context learning emerges in transformers when certain distributional properties are present in the training data, but this ability can also diminish upon further training. We provide a new theoretical understanding of these phenomena by identifying simplified distributional properties that give rise to the emergence and eventual disappearance of in-context learning. We do so by first analyzing a simplified model that uses a gating mechanism to choose between an in-weight and an in-context predictor. Through a combination of a generalization error and regret analysis we identify conditions where in-context and in-weight learning emerge. These theoretical findings are then corroborated experimentally by comparing the behaviour of a full transformer on the simplified distributions to that of the stylized model, demonstrating aligned results. We then extend the study to a full LLM, showing how fine-tuning on various collections of natural language prompts can elicit similar in-context and in-weight learning behaviour.

- A mechanism for sample-efficient in-context learning for sparse retrieval tasks. In International Conference on Algorithmic Learning Theory (ALT), pages 3–46.

- Many-shot in-context learning. arXiv:2404.11018.

- What learning algorithm is in-context learning? investigations with linear models. In International Conference on Learning Representations (ICLR).

- Transformers as statisticians: Provable in-context learning with in-context algorithm selection. In Advances in Neural Information Processing Systems (NeurIPS), volume 36, pages 57125–57211.

- Concentration inequalities: A nonasymptotic theory of independence. Clarendon Press.

- Language models are few-shot learners. In Advances in Neural Information Processing Systems (NeurIPS), volume 33, pages 1877–1901.

- On the generalization ability of on-line learning algorithms. In Advances in Neural Information Processing Systems. MIT Press.

- Prediction, Learning, and Games. Cambridge University Press, New York, NY, USA.

- Data distributional properties drive emergent in-context learning in transformers. In Advances in Neural Information Processing Systems (NeurIPS), volume 35, pages 18878–18891.

- Transformers generalize differently from information stored in context vs in weights. arXiv:2210.05675.

- Unveiling induction heads: Provable training dynamics and feature learning in transformers. In ICML Workshop on Theoretical Foundations of Foundation Models.

- The evolution of statistical induction heads: In-context learning markov chains. arXiv:2402.11004.

- The evolution of statistical induction heads: In-context learning markov chains.

- What can transformers learn in-context? a case study of simple function classes. In Advances in Neural Information Processing Systems (NeurIPS), volume 35.

- Gemini Team, Google (2023). Gemini: A family of highly capable multimodal models. arXiv preprint arXiv:2312.11805. [Online; accessed 01-February-2024].

- Context is environment. In International Conference on Learning Representations (ICLR).

- Hazan, E. (2023). Introduction to online convex optimization.

- Deep residual learning for image recognition. In 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 770–778.

- In-context learning creates task vectors. In Bouamor, H., Pino, J., and Bali, K., editors, Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 9318–9333.

- Human-level concept learning through probabilistic program induction. Science, 350(6266):1332–1338.

- Transformers as algorithms: Generalization and stability in in-context learning. In International Conference on Machine Learning (ICML), pages 19565–19594.

- How transformers learn causal structure with gradient descent. In International Conference on Machine Learning (ICML).

- In-context learning and induction heads. arXiv:2209.11895.

- Radford, A. (2018). Improving language understanding by generative pre-training.

- Language models are unsupervised multitask learners.

- Reddy, G. (2023). The mechanistic basis of data dependence and abrupt learning in an in-context classification task. In International Conference on Learning Representations (ICLR).

- One-layer transformers fail to solve the induction heads task. arXiv:2408.14332.

- Why larger language models do in-context learning differently? In International Conference on Machine Learning (ICML).

- The transient nature of emergent in-context learning in transformers. In Advances in Neural Information Processing Systems (NeurIPS), volume 36, pages 27801–27819.

- What needs to go right for an induction head? a mechanistic study of in-context learning circuits and their formation. In International Conference on Machine Learning (ICML).

- Attention is all you need. In Guyon, I., Luxburg, U. V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., and Garnett, R., editors, Advances in Neural Information Processing Systems, volume 30.

- Transformers learn in-context by gradient descent. In International Conference on Machine Learning (ICML).

- Larger language models do in-context learning differently. arXiv:2303.03846.

- How many pretraining tasks are needed for in-context learning of linear regression? In The Twelfth International Conference on Learning Representations.

- An explanation of in-context learning as implicit bayesian inference. In International Conference on Learning Representations (ICLR).

- Trained transformers learn linear models in-context. Journal of Machine Learning Research, 25:1–55.

- Trained transformers learn linear models in-context. Journal of Machine Learning Research, 25(49):1–55.

- Zinkevich, M. (2003). Online convex programming and generalized infinitesimal gradient ascent. In Proceedings of the Twentieth International Conference on Machine Learning, pages 928–936.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.