- The paper demonstrates that integrating 29 domain-specific tools significantly improves performance on specialized chemistry tasks such as molecular conversion and property prediction.

- It uses a two-part benchmark, pairing specialized tasks from SMolInstruct with general chemistry exam questions, and finds that tool augmentation can impair reasoning on more abstract problems.

- The study classifies errors into tool, reasoning, and grounding errors, and suggests refinements that would reduce the cognitive load on tool-assisted language agents.

Introduction

Integrating large language models (LLMs) with specialized tools is meant to extend their capabilities on domain-specific tasks. The paper "ChemToolAgent: The Impact of Tools on Language Agents for Chemistry Problem Solving" (2411.07228) investigates this paradigm in chemistry. Existing agents such as ChemCrow and Coscientist show the potential of tool-augmented LLMs, but their evaluations are narrow and cover only a few tasks. This study introduces ChemAgent, a tool-augmented agent that extends ChemCrow, and evaluates it across a diverse set of chemistry tasks.

Methodology

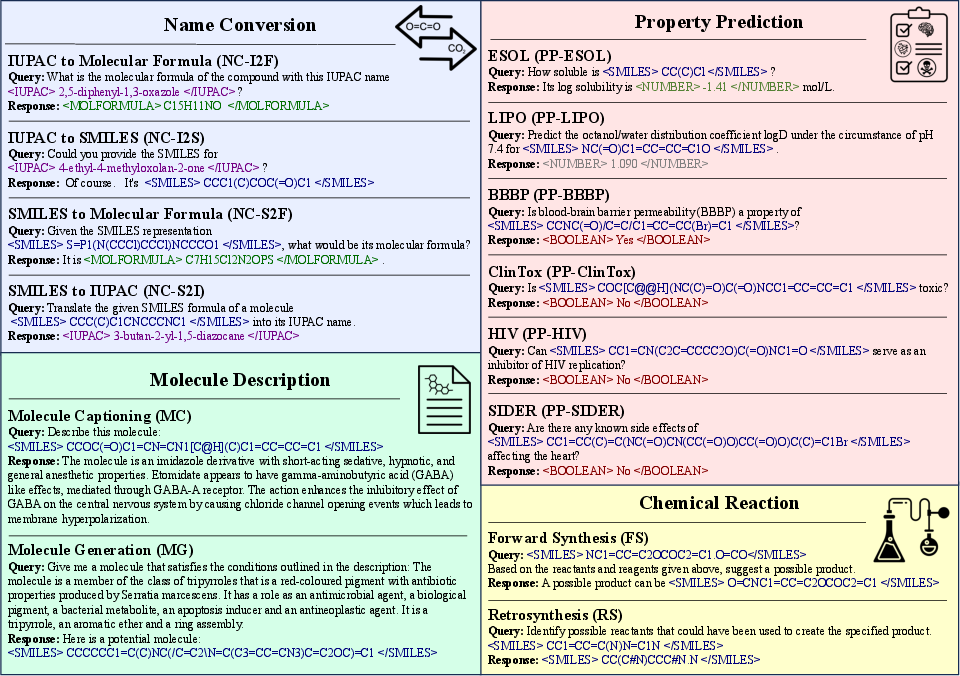

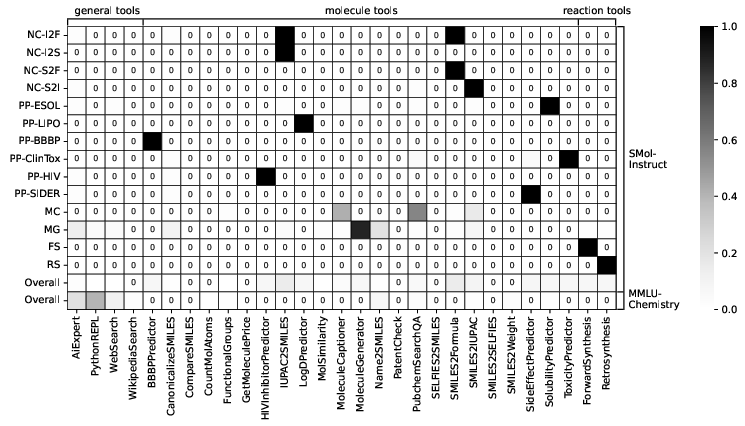

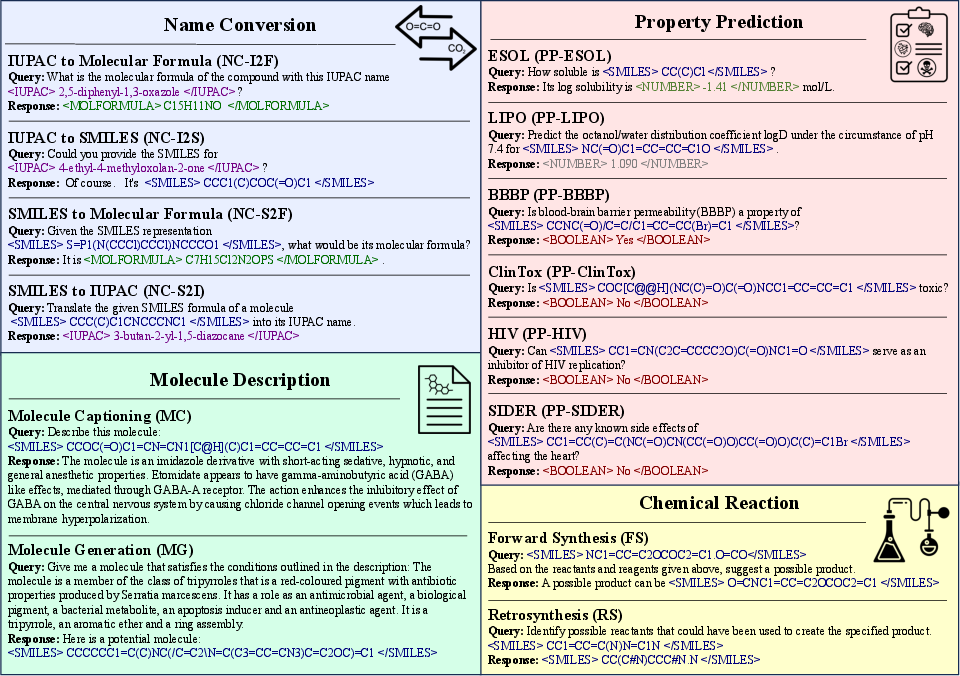

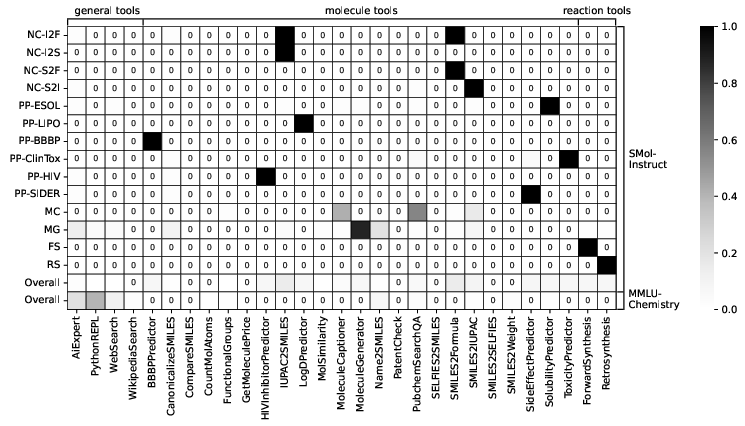

ChemAgent extends ChemCrow. It follows the ReAct framework, in which the model interleaves reasoning steps with tool calls, and it has access to 29 tools, including molecular property predictors and synthesis predictors. The evaluation combines 14 specialized tasks from SMolInstruct with general chemistry questions drawn from subsets of MMLU and GPQA. Covering both categories makes it possible to compare ChemAgent's performance on narrow, tool-friendly tasks with its performance on broader, exam-style questions.
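The ReAct pattern described above can be sketched as a loop that alternates a reasoning step (choose an action) with an acting step (call a tool and record the observation). The sketch below is a minimal illustration under stated assumptions: the `MolarMass` tool, `scripted_policy`, and `react_loop` are hypothetical names, and a scripted policy stands in for the LLM so the example runs offline. It is not ChemAgent's or ChemCrow's implementation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


# Hypothetical tool: a tiny lookup table standing in for a real property tool.
# Neither the name nor the behavior comes from ChemAgent's tool set.
def molar_mass_lookup(formula: str) -> str:
    table = {"H2O": "18.015", "CO2": "44.009"}  # g/mol
    return table.get(formula.strip(), "unknown formula")


TOOLS: dict[str, Callable[[str], str]] = {"MolarMass": molar_mass_lookup}


@dataclass
class Step:
    thought: str       # the model's free-text reasoning for this step
    action: str        # a tool name, or "Finish" to stop
    action_input: str  # tool argument, or the final answer when action == "Finish"


def scripted_policy(question: str, history: list[tuple[Step, str]]) -> Step:
    """Stand-in for the LLM. A real agent would prompt a model with the
    question and the (thought, action, observation) history so far."""
    if not history:
        return Step("I should look up the molar mass of water.", "MolarMass", "H2O")
    last_observation = history[-1][1]
    return Step("The tool result answers the question.", "Finish", last_observation)


def react_loop(
    question: str,
    policy: Callable[[str, list[tuple[Step, str]]], Step],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> tuple[Optional[str], list[tuple[Step, str]]]:
    """Alternate reasoning (policy) and acting (tool calls) until Finish or max_steps."""
    history: list[tuple[Step, str]] = []
    for _ in range(max_steps):
        step = policy(question, history)
        if step.action == "Finish":
            return step.action_input, history
        tool = tools.get(step.action)
        # Unknown tools yield an error observation instead of crashing the loop.
        observation = tool(step.action_input) if tool else f"Error: unknown tool {step.action!r}"
        history.append((step, observation))
    return None, history  # step budget exhausted without a final answer


answer, trace = react_loop("What is the molar mass of water?", scripted_policy, TOOLS)
print(answer)  # 18.015
```

Feeding tool errors back as observations, rather than raising, mirrors how a ReAct agent is expected to notice a bad call and try again on the next step.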

Figure 1: Tasks in SMolInstruct illustrating molecule- and reaction-centric challenges.

Results

Specialized Chemistry Tasks

On specialized tasks, ChemAgent outperformed both the base LLMs and ChemCrow, showing the value of domain-specific tools. For instance, it achieved high accuracy on molecular conversion tasks such as I2F (IUPAC name to molecular formula) and I2S (IUPAC name to SMILES), as well as on property prediction tasks, often surpassing non-LLM state-of-the-art models.
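To make "accuracy" on a task like I2F concrete, a predicted formula can be scored by comparing element counts rather than raw strings, so that "H6C6O" and "C6H6O" count as the same answer. The scorer below is a hypothetical sketch (`parse_formula`, `formula_match`, and `accuracy` are illustrative names); SMolInstruct's own evaluation may normalize formulas differently, and this parser assumes flat formulas without parentheses, hydrates, isotopes, or charges.

```python
import re
from collections import Counter

# One element symbol (capital + optional lowercase) followed by an optional count.
_TOKEN = re.compile(r"([A-Z][a-z]?)(\d*)")


def parse_formula(formula: str) -> Counter:
    """Parse a flat formula such as 'C6H12O6' into element counts.

    Assumption: no parentheses, hydrates, isotopes, or charges.
    Raises ValueError on anything it cannot tokenize."""
    formula = formula.replace(" ", "")
    counts: Counter = Counter()
    pos = 0
    for m in _TOKEN.finditer(formula):
        if m.start() != pos:  # characters were skipped between tokens
            raise ValueError(f"unparseable formula: {formula!r}")
        element, digits = m.groups()
        counts[element] += int(digits) if digits else 1
        pos = m.end()
    if pos != len(formula):  # trailing characters that are not tokens
        raise ValueError(f"unparseable formula: {formula!r}")
    return counts


def formula_match(predicted: str, gold: str) -> bool:
    """Order-insensitive exact match: equal element counts, any element order."""
    try:
        return parse_formula(predicted) == parse_formula(gold)
    except ValueError:
        return False  # a malformed formula on either side counts as a mismatch


def accuracy(predictions: list[str], golds: list[str]) -> float:
    """Fraction of predictions whose element counts match the gold formula."""
    if not golds or len(predictions) != len(golds):
        raise ValueError("need equal-length, non-empty prediction and gold lists")
    return sum(formula_match(p, g) for p, g in zip(predictions, golds)) / len(golds)


print(formula_match("H6C6O", "C6H6O"))                    # True
print(accuracy(["C2H6O", "CH4"], ["C2H6O", "C2H6"]))       # 0.5
```

Parsing both sides also makes a malformed model output fail cleanly instead of being compared as an arbitrary string.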

General Chemistry Questions

Contrary to expectations, ChemAgent did not consistently outperform base LLMs on general chemistry exam-style questions. This suggests that while tool augmentation can substantially improve performance on tasks that require specific domain tools, it can also add complexity that impairs the agent's reasoning on more abstract, general problems.

Figure 2: The statistics of tool usage by ChemAgent (GPT), indicating tool selection preferences across tasks.

Error Analysis

The error analysis identified three primary error types: reasoning, grounding, and tool errors. On specialized tasks, most errors were tool errors, stemming from the inherent inaccuracies of neural-network-based tools. On general questions, reasoning errors dominated, suggesting that tool integration increases the agent's cognitive load. This underperformance on general questions points to the need for agents that can better avoid subtle reasoning mistakes.
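An analysis like this amounts to labeling each failed example with an error type and comparing the distribution across task categories. The sketch below shows one way to tabulate such labels; `ErrorType`, `error_distribution`, and `dominant_error` are hypothetical names, it assumes a single primary label per failure, and the example labels are made up for illustration, not taken from the paper.

```python
from collections import Counter
from enum import Enum


class ErrorType(Enum):
    REASONING = "reasoning"
    GROUNDING = "grounding"
    TOOL = "tool"


def error_distribution(annotations):
    """Map each task category to the fraction of failures of each error type.

    `annotations` is an iterable of (task_category, ErrorType) pairs, one per
    failed example (assumption: a single primary label per failure)."""
    by_category: dict[str, Counter] = {}
    for category, error in annotations:
        by_category.setdefault(category, Counter())[error] += 1
    return {
        category: {error: n / sum(counts.values()) for error, n in counts.items()}
        for category, counts in by_category.items()
    }


def dominant_error(distribution):
    """Most frequent error type per category (ties go to the type seen first)."""
    return {cat: max(fracs, key=fracs.get) for cat, fracs in distribution.items()}


# Made-up labels for illustration only; these are not the paper's counts.
example = [
    ("specialized", ErrorType.TOOL),
    ("specialized", ErrorType.TOOL),
    ("specialized", ErrorType.GROUNDING),
    ("general", ErrorType.REASONING),
    ("general", ErrorType.REASONING),
    ("general", ErrorType.TOOL),
]
dist = error_distribution(example)
print(dominant_error(dist))
```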

Implications and Future Directions

This study shows that tool integration affects LLM-based agents in nuanced ways, and it argues for selecting tools according to the characteristics of each task. Task-specific tools can improve performance, but they can complicate reasoning on tasks that require broad knowledge and inference.

Future chemistry agents could benefit from strategies that reduce cognitive load, such as multi-agent systems that distribute reasoning across agents, or better information verification mechanisms for resolving discrepancies between tool outputs. Such strategies could let an agent keep the precision that tools provide on well-defined subtasks without overloading its reasoning on complex problems.
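One simple form of the verification idea above is to query several tools for the same numeric property and flag disagreement before the result reaches the agent's reasoning. The sketch below is one possible approach under stated assumptions, not the paper's method: `cross_check` and the `predictor_*` tools are hypothetical, the returned values are placeholders rather than real property predictions, and the median with a relative tolerance is just one reasonable consensus rule.

```python
from statistics import median
from typing import Callable, Optional


def cross_check(
    query: str,
    tools: dict[str, Callable[[str], float]],
    rel_tol: float = 0.05,
) -> tuple[Optional[float], str]:
    """Query several tools for the same numeric property and flag disagreement.

    Returns (consensus value, status). The consensus is the median of the
    tools that returned a value; any tool farther than rel_tol * |median|
    from it is reported as an outlier."""
    results: dict[str, float] = {}
    for name, tool in tools.items():
        try:
            results[name] = float(tool(query))
        except Exception:
            continue  # a failing tool abstains rather than aborting the check
    if not results:
        return None, "no tool returned a value"
    consensus = median(results.values())
    tolerance = rel_tol * max(abs(consensus), 1e-12)
    outliers = sorted(n for n, v in results.items() if abs(v - consensus) > tolerance)
    if outliers:
        return consensus, "disagreement: " + ", ".join(outliers)
    return consensus, "consistent"


# Hypothetical predictors with placeholder outputs for the query "CCO".
tools = {
    "predictor_a": lambda smiles: 1.0,
    "predictor_b": lambda smiles: 1.02,
    "predictor_c": lambda smiles: 1.5,
}
print(cross_check("CCO", tools))  # (1.02, 'disagreement: predictor_c')
```

Returning a short status string, instead of every raw output, is meant to hand the agent a compact signal to act on, in keeping with the goal of reducing cognitive load.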

Conclusion

ChemAgent, an enhanced chemistry-focused language agent, illustrates both the potential and the limitations of tool-augmented LLMs. The study offers practical guidance on integrating tools in complex domains such as chemistry: tool complexity must be balanced against the LLM's own reasoning capabilities. Future research can build on these findings to refine how domain-specific tools interact with general reasoning.

In summary, this work clarifies the practical implications of tool-augmented LLMs and argues for agent frameworks that improve task performance across diverse domains.