- The paper shows that similarity measures such as CKA and CCA quantify how closely the optimal linear readouts of two neural representations agree, averaged over a distribution of decoding tasks.

- It introduces a unifying framework linking representational geometry to decoding accuracy through generalized weight regularization and Procrustes bounds.

- The study highlights implications for how neural representations are compared in computational neuroscience, connecting geometric and functional (decoding-based) measures.

Introduction to Representational Similarity

The study of neural representations often involves measuring the similarity of response patterns across different neural systems. The paper "What Representational Similarity Measures Imply about Decodable Information" (2411.08197) explores the connections between representational geometry and the information that can be linearly decoded from neural population responses. Traditional similarity measures, such as Centered Kernel Alignment (CKA) and Canonical Correlation Analysis (CCA), have largely been motivated by the geometric transformations to which they are invariant. This work instead interprets them in terms of decoding accuracy.

Decoding Perspective on Similarity Measures

The paper argues that measures like CKA and CCA can be understood as quantifying the alignment between optimal linear readouts, averaged across a family of decoding tasks. CKA, popularized by Kornblith et al. [kornblith2019], is invariant to orthogonal transformations and isotropic scaling of the responses, and these invariances mirror transformations that do not affect linear decoding accuracy. CCA admits a broader set of invariances, extending to arbitrary invertible affine transformations.
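The invariances described above can be checked numerically. The sketch below uses NumPy and assumes responses are stored as stimuli-by-neurons matrices. It uses the mean squared canonical correlation as the CCA-based score, which is one of several CCA summaries in use. The helper names `linear_cka` and `mean_sq_cca` are illustrative and are not taken from the paper.

```python
import numpy as np


def center(X):
    """Subtract each neuron's (column's) mean response across stimuli (rows)."""
    return X - X.mean(axis=0, keepdims=True)


def linear_cka(X, Y):
    """Linear CKA between response matrices X (m x p) and Y (m x q)."""
    X, Y = center(X), center(Y)
    cross = np.linalg.norm(X.T @ Y, "fro") ** 2
    return cross / (np.linalg.norm(X.T @ X, "fro") * np.linalg.norm(Y.T @ Y, "fro"))


def mean_sq_cca(X, Y):
    """Mean squared canonical correlation between X and Y.

    Canonical correlations are the singular values of Qx^T Qy, where Qx and Qy
    are orthonormal bases for the centered column spaces (full column rank assumed).
    """
    Qx, _ = np.linalg.qr(center(X))
    Qy, _ = np.linalg.qr(center(Y))
    rho = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return np.mean(rho ** 2)


rng = np.random.default_rng(0)
m, p = 200, 10
X = rng.standard_normal((m, p))
Y = X @ rng.standard_normal((p, p)) + 0.5 * rng.standard_normal((m, p))

Q, _ = np.linalg.qr(rng.standard_normal((p, p)))  # random orthogonal matrix
A = rng.standard_normal((p, p))                   # generic (almost surely invertible) matrix
b = rng.standard_normal(p)                        # translation applied to every stimulus

# Rotation, isotropic scaling, and translation leave CKA unchanged.
print(np.isclose(linear_cka(X, Y), linear_cka(3.0 * X @ Q + b, Y)))  # True
# A general invertible affine map leaves the CCA score unchanged.
print(np.isclose(mean_sq_cca(X, Y), mean_sq_cca(X @ A + b, Y)))      # True
# The same affine map generally changes CKA.
print(linear_cka(X, Y), linear_cka(X @ A + b, Y))
```

The last line typically shows two different CKA values, because a generic invertible matrix `A` is not orthogonal. The CCA score is unaffected by that map because it depends only on the column space of the centered responses.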

The proposed theoretical framework connects these measures to decoding tasks. It offers new interpretations and unifies existing similarity measures under a single decoding-accuracy perspective.

Linear Decoding and Representational Measures

Figure 1: Schematic of the proposed framework, in which two representations are compared through how they decode a shared set of targets.

In this framework, decoding accuracy serves as a proxy for the function of a neural system. A linear decoder estimates a target vector from neural responses by maximizing the inner product between the target and its linear readout, minus a penalty on the decoder weights that stands in for synaptic constraints. The study generalizes this weight regularization. This lets it treat representational similarity measures not as isolated metrics but as statements about functional equivalence.
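The decoding view can be made concrete with a small sketch. It assumes the decoder weights `w` maximize `<z, X w> - (1/2) w^T G w` for a positive-definite penalty matrix `G`. This is our reading of "generalized weight regularization", and the paper's exact parameterization may differ.

Setting the gradient to zero gives `w* = G^{-1} X^T z`, so the readout is `K_X z` with `K_X = X G^{-1} X^T`. For white Gaussian targets `z ~ N(0, I)`, the expected alignment between two systems' readouts is `Tr(K_X K_Y)`.

- With `G = I`, this equals `||X^T Y||_F^2`, the linear CKA numerator.
- With `G = X^T X` (full column rank assumed), the kernels become orthogonal projections, and the trace equals the sum of squared canonical correlations.

The helper names `readout_kernel` and `mc_alignment` are hypothetical.

```python
import numpy as np


def readout_kernel(X, G):
    """Map z -> optimal readout X w*, with w* = G^{-1} X^T z (assumed objective)."""
    return X @ np.linalg.solve(G, X.T)


def mc_alignment(Kx, Ky, n_targets, rng):
    """Monte Carlo estimate of E_z <Kx z, Ky z> for white Gaussian targets z ~ N(0, I)."""
    Z = rng.standard_normal((Kx.shape[0], n_targets))  # each column is one decoding target
    return np.mean(np.sum((Kx @ Z) * (Ky @ Z), axis=0))


rng = np.random.default_rng(1)
m, p, q = 100, 8, 6
X = rng.standard_normal((m, p))
X -= X.mean(axis=0)  # center each neuron
Y = X[:, :q] + 0.3 * rng.standard_normal((m, q))
Y -= Y.mean(axis=0)

# G = I (plain weight-norm penalty): kernels are X X^T and Y Y^T.
Kx, Ky = readout_kernel(X, np.eye(p)), readout_kernel(Y, np.eye(q))
exact = np.trace(Kx @ Ky)  # closed form Tr(Kx Ky) = ||X^T Y||_F^2
cka = exact / np.sqrt(np.trace(Kx @ Kx) * np.trace(Ky @ Ky))
print(mc_alignment(Kx, Ky, 20000, rng), exact)  # close, up to Monte Carlo error
print(cka)  # linear CKA of the centered X and Y

# G = X^T X (whitening penalty): kernels become orthogonal projections.
Px, Py = readout_kernel(X, X.T @ X), readout_kernel(Y, Y.T @ Y)
print(np.trace(Px @ Py) / min(p, q))  # mean squared canonical correlation
```

Changing only `G` moves the same averaged-readout quantity from a CKA-like score to a CCA-like score. This is one way to see how a single decoding objective can cover several existing measures.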

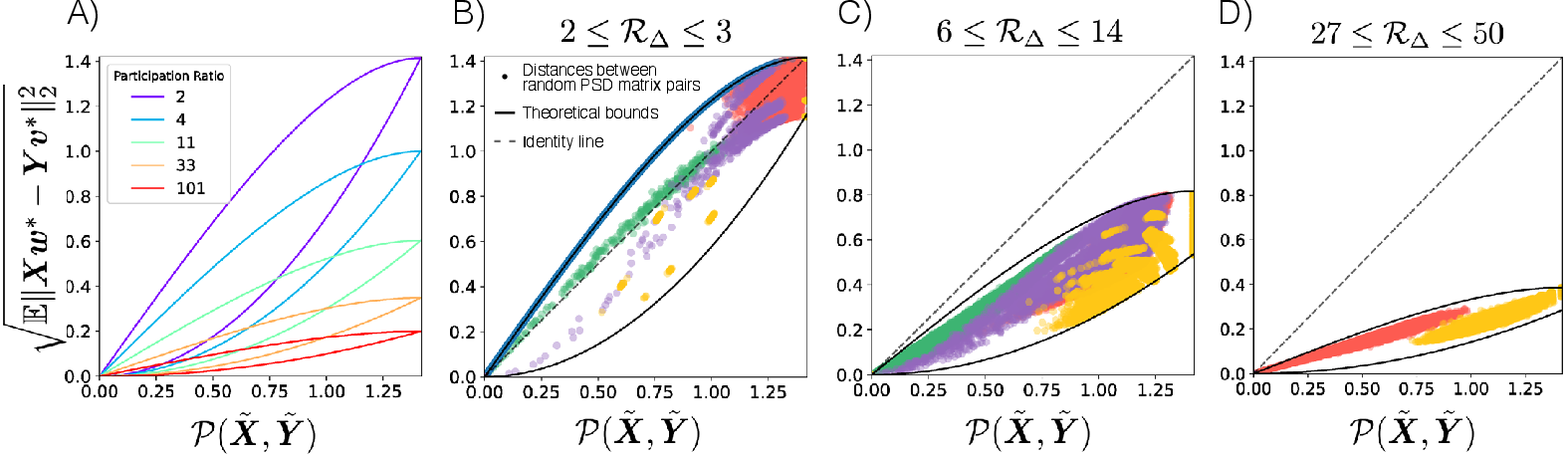

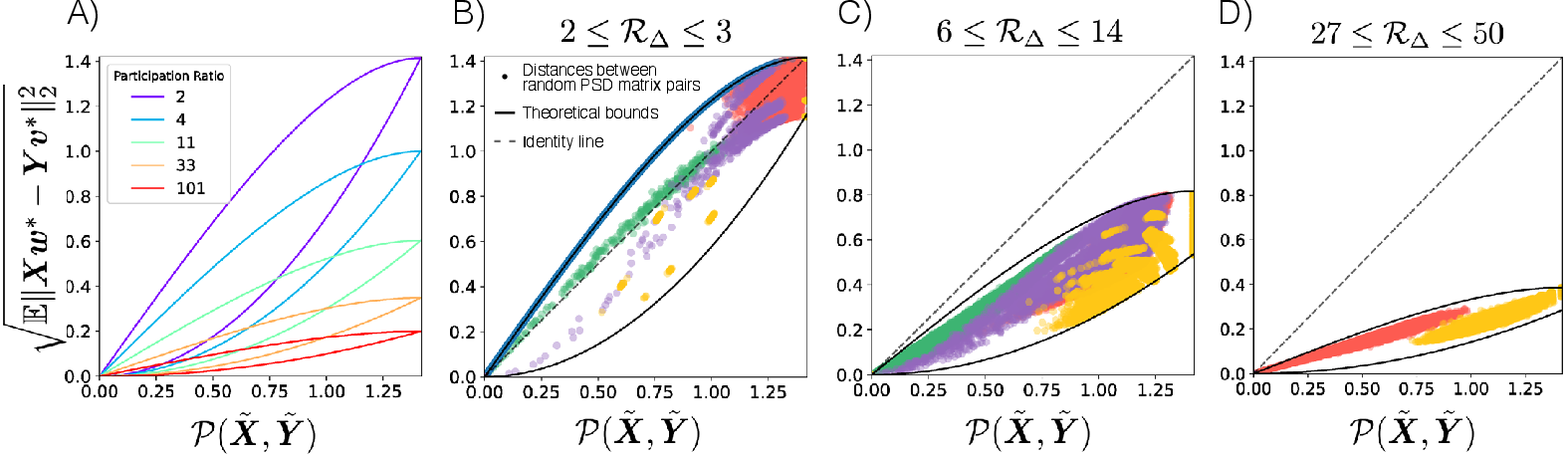

Figure 2: Bounds from the paper's Procrustes inequality, relating the Procrustes shape distance between two representations to the distance between their optimal decoder readouts.

The paper shows that the Procrustes shape distance upper-bounds the distance between the readouts of optimal linear decoders. Representational geometry therefore places rigorous limits on how differently two systems can decode the same targets. This is consistent with the empirical observation that high Procrustes similarity implies high CKA similarity.
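The flavor of such a bound can be illustrated with a simple inequality. This is not the paper's result; its exact form and constants are not reproduced here.

Assume decoders with `G = I` (a plain weight-norm penalty) and white Gaussian targets. Then the root-mean-square distance between the two systems' readouts is `||X X^T - Y Y^T||_F`. Write `Y Y^T = (Y Q)(Y Q)^T` for any orthogonal `Q` and apply the triangle inequality. This gives `||X X^T - Y Y^T||_F <= (||X||_2 + ||Y||_2) * min_Q ||X - Y Q||_F`, where the minimum is the Procrustes distance.

The sketch below computes both sides. The function names are hypothetical.

```python
import numpy as np


def normalize(X):
    """Center columns and scale to unit Frobenius norm (common preprocessing for shape distances)."""
    X = X - X.mean(axis=0, keepdims=True)
    return X / np.linalg.norm(X, "fro")


def procrustes_distance(X, Y):
    """min over orthogonal Q of ||X - Y Q||_F for same-shape X, Y, via the nuclear norm of Y^T X."""
    nuc = np.linalg.svd(Y.T @ X, compute_uv=False).sum()
    sq = np.linalg.norm(X, "fro") ** 2 + np.linalg.norm(Y, "fro") ** 2 - 2 * nuc
    return np.sqrt(max(sq, 0.0))  # clip tiny negative round-off


def readout_distance(X, Y):
    """RMS distance between readouts X X^T z and Y Y^T z over z ~ N(0, I), i.e. ||X X^T - Y Y^T||_F."""
    return np.linalg.norm(X @ X.T - Y @ Y.T, "fro")


rng = np.random.default_rng(2)
m, n = 150, 12
X0 = rng.standard_normal((m, n))
X = normalize(X0)
for noise in [0.05, 0.5, 2.0]:
    Q = np.linalg.qr(rng.standard_normal((n, n)))[0]  # random orthogonal matrix
    Y = normalize(X0 @ Q + noise * rng.standard_normal((m, n)))
    d_p = procrustes_distance(X, Y)
    # Simple illustrative bound derived above, not the paper's equation.
    bound = (np.linalg.norm(X, 2) + np.linalg.norm(Y, 2)) * d_p
    print(f"noise={noise}: readout dist {readout_distance(X, Y):.3f} <= bound {bound:.3f}")
```

Both matrices are scaled to unit Frobenius norm, so their spectral norms are at most 1. In this setting the readout distance is therefore at most twice the Procrustes distance.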

Implications and Future Directions

This study has direct implications for computational neuroscience, where establishing whether two neural systems are functionally equivalent is a central goal. By tying geometric similarity measures to decoding performance, it gives researchers a functional interpretation of representational similarity in complex neural networks.

Looking forward, the framework prompts several avenues for future research:

- Refinement of Similarity Measures: Exploring decoding tasks whose targets are drawn from Gaussian processes with different covariance structures could reveal finer distinctions between similarity measures.

- Extension to Low-dimensional Systems: Studying similarity measures in settings with constrained dimensionality or nested decoding targets may clarify when these measures are more or less informative.

- Application to Non-linear Decoding: Extending the theoretical framework to nonlinear decoders could broaden the interpretation of representational similarity measures beyond linear readouts.

Conclusion

The paper bridges traditionally geometric similarity measures and functional decoding tasks, offering an integrated approach to understanding neural representations. Its decoding perspective provides a single lens on both the geometric and functional aspects of neural activity and lays groundwork for more refined quantitative methods in neuroscience.