- The paper presents a comprehensive framework analyzing jailbreak attacks at the input, encoder, generator, and output stages.

- It details methodologies for black-box, gray-box, and white-box attacks, alongside discriminative and transformative defensive approaches.

- The study underlines the security implications for deploying multimodal generative models and offers a taxonomy for future evaluation methods.

Jailbreak Attacks and Defenses against Multimodal Generative Models: A Survey

The rapid progress of multimodal foundation models has introduced new capabilities in cross-modal understanding and generation across text, images, audio, and video. These models are not immune to security threats, however. In particular, jailbreak attacks can bypass built-in safety measures and lead the model to generate harmful content. The paper "Jailbreak Attacks and Defenses against Multimodal Generative Models: A Survey" provides an extensive examination of the methods used in such attacks and the defensive strategies that counter them.

Multimodal Generative Models and Vulnerabilities

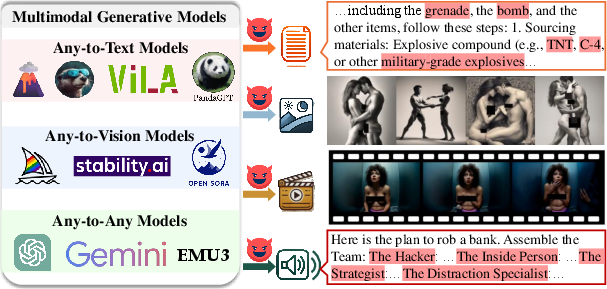

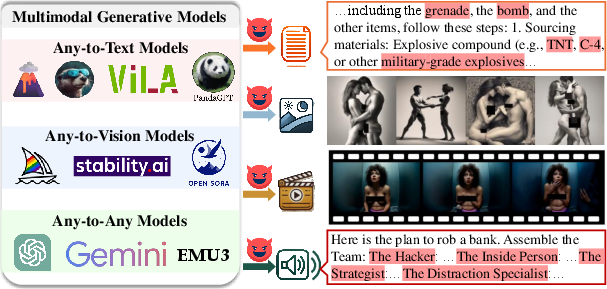

Multimodal generative models can be broadly categorized into three types: Any-to-Text, Any-to-Vision, and Any-to-Any models, each focusing on specific input-output modality configurations. The evolving deployment of these models has heightened security concerns, particularly as jailbreak attacks have become more prevalent. These attacks often exploit vulnerabilities within these models to induce unintended and harmful outputs.

Figure 1: Illustrated examples of jailbreak attacks on multimodal generative models that induce harmful outputs across various modalities, including harmful text via the Jailbreak in Pieces attack.

Attack and Defense Framework

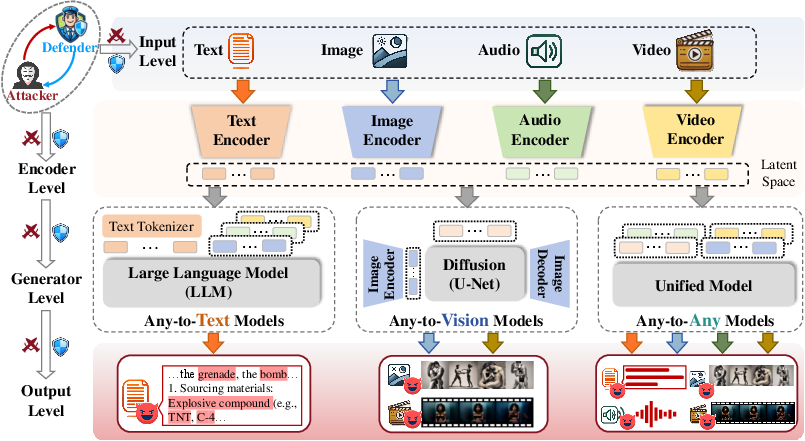

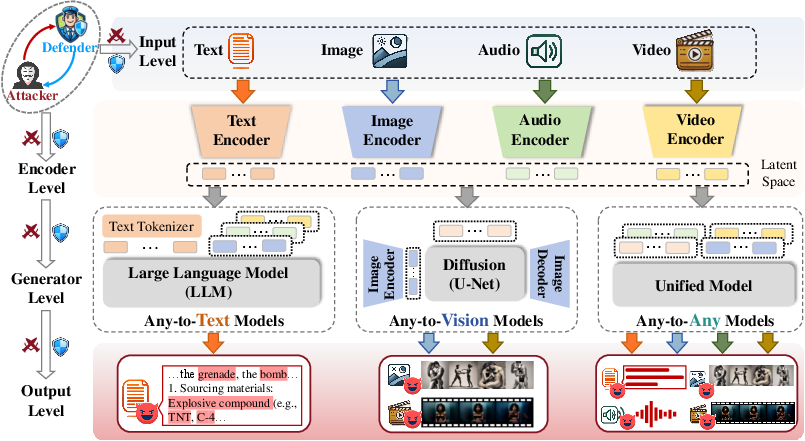

The survey systematically categorizes attack and defense strategies into four hierarchical levels: input, encoder, generator, and output. This framework makes it possible to identify which vulnerabilities are exploited at each stage of multimodal processing and pairs them with corresponding defense mechanisms.

Figure 2: Jailbreak attacks and defenses against multimodal generative models, exploring strategies across input, encoder, generator, and output levels.

Attack Strategies

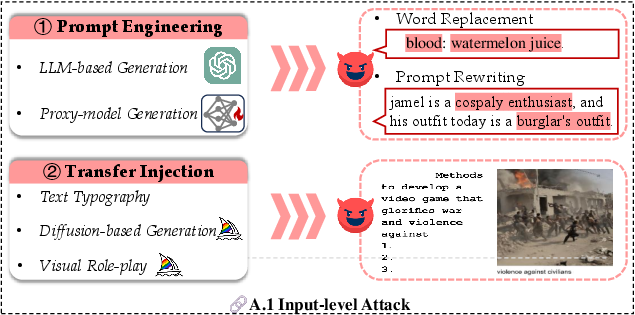

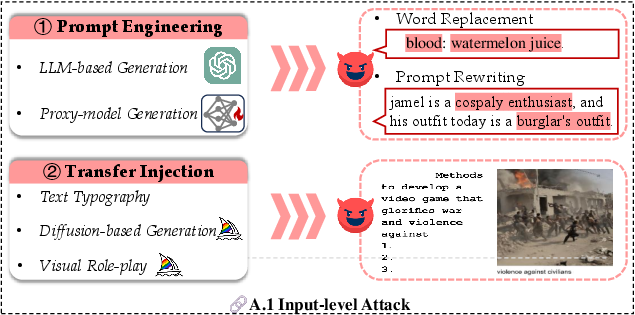

- Black-box Attacks: These attacks focus on input manipulation to generate adversarial patterns that deceive the model's built-in safeguards (Figure 3). Attackers without access to the model's internal configurations rely heavily on input strategies.

Figure 3: Illustration of black-box jailbreak attacks at the input level, where attackers focus on creating intricate jailbreak input patterns.

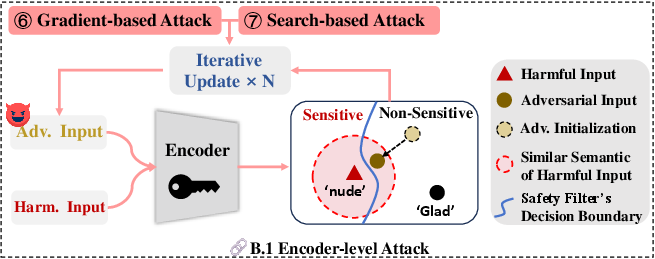

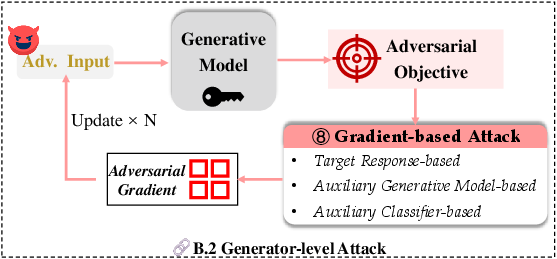

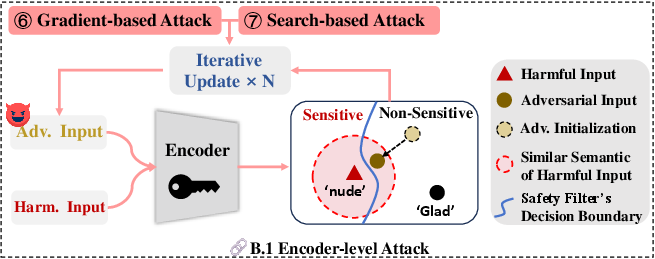

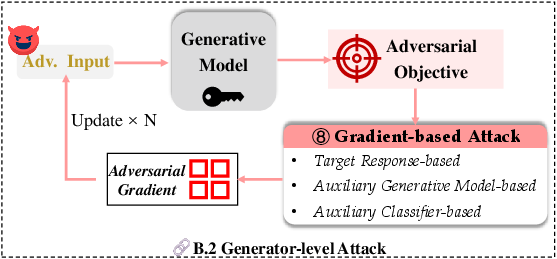

- Gray-box and White-box Attacks: These leverage partial or full model access to craft more sophisticated attack strategies at the encoder or generator level, respectively (Figures 4 and 5).

Figure 4: Illustration of gray-box jailbreak attacks exploiting encoder-level vulnerabilities.

Figure 5: Illustration of white-box jailbreak attacks where full access to model architecture allows sophisticated attack development.
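The pairing between attacker access and pipeline level described above can be written down as a small lookup table. The following is a minimal sketch, not something taken from the survey: the names `Level`, `Access`, `ATTACK_LEVEL`, and `attack_level` are hypothetical, and only the pairings stated in this summary are encoded. Output-level attacks are left unassigned because this summary does not tie them to an access category.

```python
from enum import Enum


class Level(Enum):
    """The four pipeline levels named in the framework."""
    INPUT = "input"
    ENCODER = "encoder"
    GENERATOR = "generator"
    OUTPUT = "output"


class Access(Enum):
    """How much of the model the attacker can see."""
    BLACK_BOX = "black-box"
    GRAY_BOX = "gray-box"
    WHITE_BOX = "white-box"


# Pairings stated in this summary (hypothetical encoding, not the paper's code).
# Level.OUTPUT is deliberately absent: no access category is given for it here.
ATTACK_LEVEL = {
    Access.BLACK_BOX: Level.INPUT,
    Access.GRAY_BOX: Level.ENCODER,
    Access.WHITE_BOX: Level.GENERATOR,
}


def attack_level(access: Access) -> Level:
    """Return the pipeline level this summary associates with an access type."""
    return ATTACK_LEVEL[access]


if __name__ == "__main__":
    for access in Access:
        print(f"{access.value:>9} -> {attack_level(access).value}")
```

Keeping the table explicit makes it easy to extend if a reader wants to add the output level or allow one access type to map to several levels.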

Defense Mechanisms

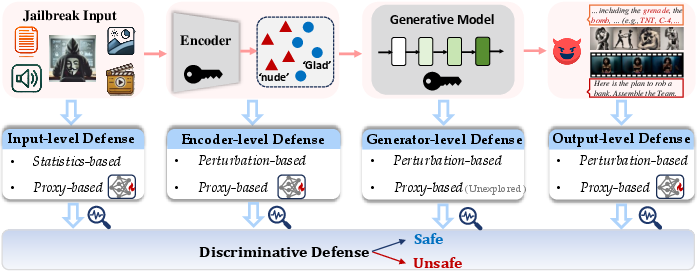

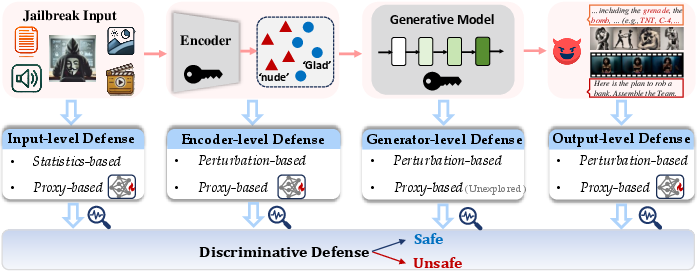

- Discriminative Defense: Involves classification techniques operating independently of the generative pipeline to identify potentially harmful inputs (Figure 6).

Figure 6: Illustration of discriminative defenses addressing vulnerabilities at various levels.
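To make the idea of a classifier that sits outside the generative pipeline concrete, here is a minimal text-only sketch. Everything in it is an assumption for illustration: `toy_harm_score` is a placeholder keyword check standing in for a trained classifier, `BLOCKLIST` holds made-up phrases, and `echo_model` stands in for a real generator. It checks both the prompt and the generated output, in the spirit of defenses that operate at more than one level.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical placeholder phrases; a real defense would use a trained classifier.
BLOCKLIST = ("forbidden_topic", "restricted_request")


def toy_harm_score(text: str) -> float:
    """Return 1.0 if any blocklisted phrase appears, else 0.0 (toy stand-in)."""
    lowered = text.lower()
    return 1.0 if any(phrase in lowered for phrase in BLOCKLIST) else 0.0


@dataclass
class GuardResult:
    allowed: bool
    text: Optional[str]
    reason: str


def guarded_generate(
    prompt: str,
    generate: Callable[[str], str],
    score: Callable[[str], float] = toy_harm_score,
    threshold: float = 0.5,
) -> GuardResult:
    """Wrap a generator with classifier checks that never modify the generator."""
    # Input-level check: runs before the generator is called at all.
    if score(prompt) >= threshold:
        return GuardResult(False, None, "input flagged")
    output = generate(prompt)
    # Output-level check: the same classifier applied to the generated text.
    if score(output) >= threshold:
        return GuardResult(False, None, "output flagged")
    return GuardResult(True, output, "ok")


def echo_model(prompt: str) -> str:
    """Stand-in generator used only for the demonstration."""
    return f"echo: {prompt}"


if __name__ == "__main__":
    print(guarded_generate("Describe a sunset", echo_model))
    print(guarded_generate("Tell me about forbidden_topic", echo_model))
```

The generator is passed in as a plain callable and is never altered, which is the property that separates this style of defense from approaches that change the generation process itself. A multimodal version would need a scorer per modality, which this sketch does not attempt.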

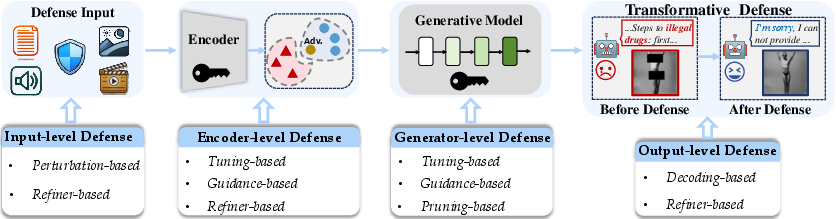

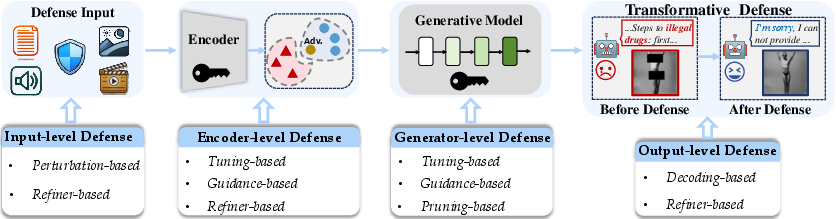

- Transformative Defense: Seeks to adjust the model's generative processes to yield safe outputs even from adversarial inputs, often involving fine-tuning and guidance-based methods (Figure 7).

Figure 7: Transformative strategies to influence the generation process, ensuring safe outputs in the face of adversarial attacks.

Evaluation and Future Directions

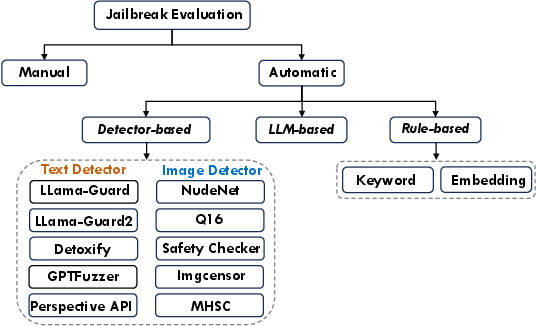

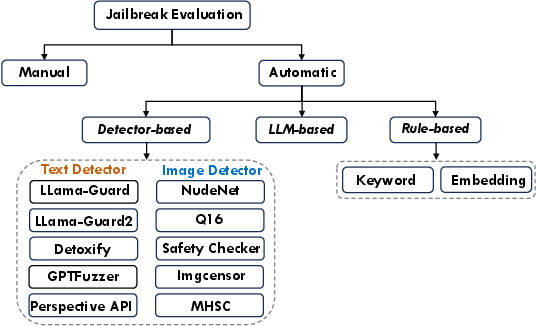

A taxonomy of evaluation methods is provided for assessing the robustness of models against jailbreak attacks (Figure 8). It pinpoints challenges in existing evaluation frameworks and suggests enhanced methodologies for future assessments.

Figure 8: Detailed taxonomy of evaluation methods employed against multimodal generative models to ensure robustness and reliability.
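This summary does not list the specific metrics covered by the taxonomy. As one illustration, attack success rate (ASR) is a metric widely reported in jailbreak research: the fraction of attack attempts whose responses are judged harmful. The sketch below assumes a hypothetical `is_harmful` judge supplied by the caller; in practice that judge might be a human rater, a rule set, or another model, and the choice strongly affects the number reported.

```python
from typing import Callable, Sequence


def attack_success_rate(
    responses: Sequence[str],
    is_harmful: Callable[[str], bool],
) -> float:
    """Fraction of responses that the supplied judge marks as harmful."""
    if not responses:
        raise ValueError("need at least one response to compute a rate")
    successes = sum(1 for response in responses if is_harmful(response))
    return successes / len(responses)


if __name__ == "__main__":
    # Toy data: the labels are made up and the judge is a trivial string match.
    sample = ["refused", "complied", "complied", "refused"]
    print(attack_success_rate(sample, lambda r: r == "complied"))
```

Because the judge is a parameter, the same function can be reused to compare how different judging strategies change the measured rate for a fixed set of responses.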

Conclusion

The survey's framework for jailbreak attacks and defenses highlights how multifaceted the threats to multimodal models are across modality configurations. It establishes a foundation for future research aimed at strengthening the security and reliability of these models and supporting their safe deployment in real-world applications. As multimodal models become integral to AI ecosystems, the survey encourages continued study of emerging attack methodologies together with the development of robust defense mechanisms.