- The paper introduces a semantic-aware video tokenizer that integrates LLM-based codebooks for effective spatial-temporal compression.

- The paper leverages a cross-attention query autoencoder and a curriculum learning strategy to decouple and encode visual features efficiently.

- The paper achieves comparable reconstruction on UCF-101 using only 25% of the tokens required by state-of-the-art methods, while improving video generation metrics by 32.9%.

Semantic-Aware Spatial-Temporal Tokenization

Introduction

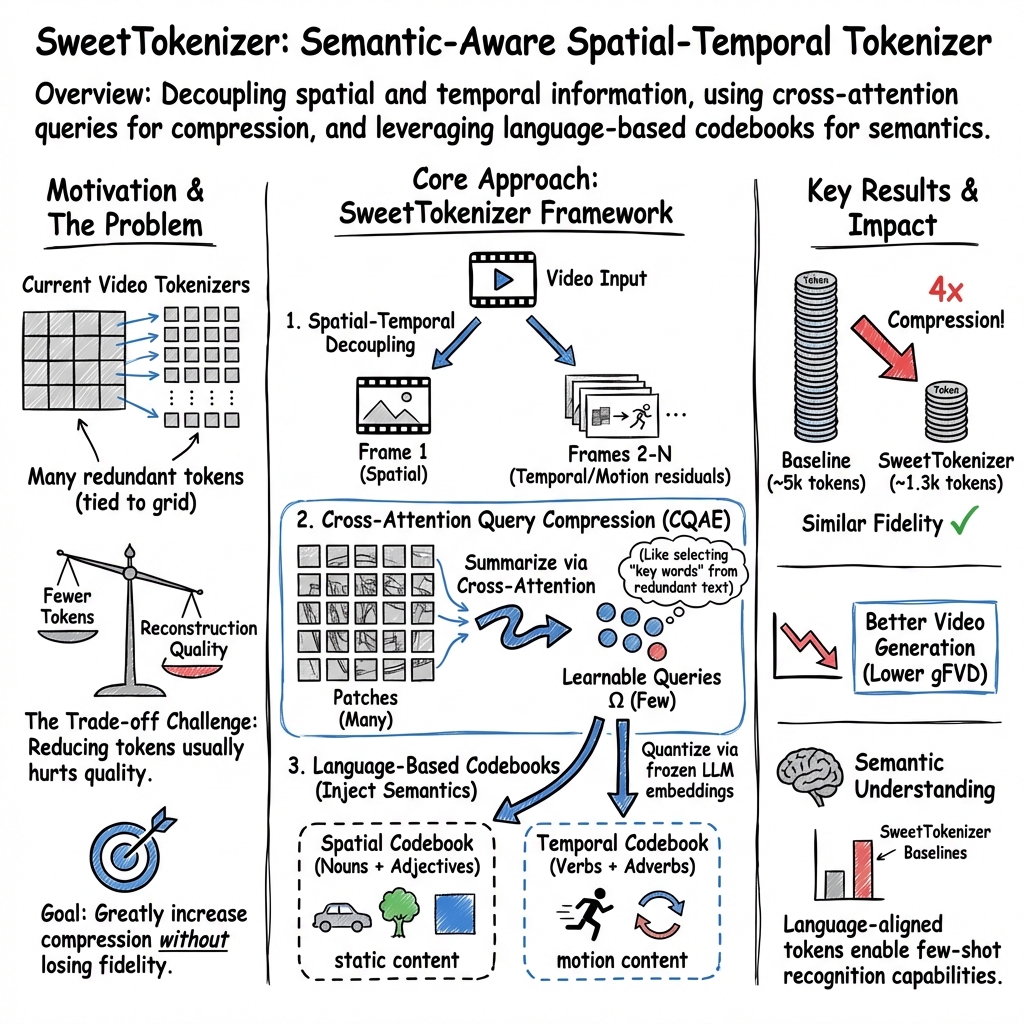

The paper "SweetTok: Semantic-Aware Spatial-Temporal Tokenizer for Compact Video Discretization" (2412.10443) presents a tokenizer framework that aims to raise the compression ratio of video tokenization while maintaining reconstruction fidelity. It builds on the VQ-VAE paradigm and adds two mechanisms: decoupling the spatial and temporal dimensions, and injecting semantic information through specialized codebooks derived from LLM embeddings. According to the reported results, the resulting representation is more compact, preserves reconstruction fidelity, and improves downstream video generation.

Methodology Overview

Cross-attention Query AutoEncoder (CQAE)

Existing video tokenizers tend to have low compression ratios because their spatial and temporal representations are redundant. The CQAE addresses this by decoupling spatial and temporal information and encoding each into its own set of learnable query tokens through cross-attention. Compressing the two dimensions into separate queries is intended to capture motion information more directly and to align better with the decoder. The result is a significantly smaller number of tokens that still preserves essential temporal dynamics.
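The idea of compressing many patch features into a few learnable queries can be sketched with plain NumPy. This is a minimal illustration, not the paper's architecture: the sizes, the random "learnable" queries, the single attention head without projection matrices, and the choice of what each query set attends to (first-frame patches for spatial queries, frame-to-frame differences for temporal queries) are all assumptions made for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention_weights(queries, features):
    # Scaled dot-product scores: each of K queries scores each of N features.
    d = queries.shape[-1]
    return softmax(queries @ features.T / np.sqrt(d), axis=-1)  # (K, N)

def cross_attend(queries, features):
    # Single-head cross-attention without learned projections (a simplification).
    return attention_weights(queries, features) @ features      # (K, D)

rng = np.random.default_rng(0)
T, N, D = 4, 64, 16                      # hypothetical: frames, patches per frame, channels
patches = rng.normal(size=(T, N, D))     # stand-in for encoder patch features

# Hypothetical query counts; in a real model these would be trained parameters.
spatial_queries = rng.normal(size=(16, D))
temporal_queries = rng.normal(size=(8, D))

# Assumed inputs: appearance from the first frame, motion from frame differences.
spatial_input = patches[0]                                    # (64, D)
temporal_input = (patches[1:] - patches[:-1]).reshape(-1, D)  # (192, D)

spatial_tokens = cross_attend(spatial_queries, spatial_input)
temporal_tokens = cross_attend(temporal_queries, temporal_input)

total_queries = len(spatial_tokens) + len(temporal_tokens)
print(f"{T * N} patch features -> {total_queries} query tokens")
```

The number of output tokens is set by the number of queries rather than by the video resolution or length, which is what lets a query-based design control the compression ratio directly.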

Language-based Latent Codebook

To give the discrete latent space semantic structure, the tokenizer builds its codebooks from pre-trained LLM embeddings. Two distinct codebooks are constructed: one for spatial information, drawn from nouns and adjectives, and another for temporal motion, drawn from verbs and adverbs. Because the compressed queries are quantized to language-derived codes, they are meant to integrate readily into downstream visual understanding tasks that build on LLMs.
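A rough sketch of the two-codebook idea is shown below. Everything concrete here is hypothetical: the six-word vocabulary, the hand-assigned part-of-speech tags, and the random vectors that stand in for LLM word embeddings. The quantization step is ordinary nearest-neighbor lookup as used in VQ-VAE-style models; any extra projection the paper applies to the embeddings is not modeled.

```python
import numpy as np

# Hypothetical toy vocabulary with hand-assigned part-of-speech tags.
VOCAB = [
    ("dog", "noun"), ("red", "adjective"), ("tree", "noun"),
    ("run", "verb"), ("quickly", "adverb"), ("jump", "verb"),
]

rng = np.random.default_rng(0)
D = 8
# Random vectors stand in for pre-trained LLM word embeddings (assumption).
embedding = {word: rng.normal(size=D) for word, _ in VOCAB}

def build_codebook(pos_tags):
    # Keep only the words whose tag is in pos_tags; stack their embeddings.
    words = [w for w, pos in VOCAB if pos in pos_tags]
    return words, np.stack([embedding[w] for w in words])

spatial_words, spatial_codebook = build_codebook({"noun", "adjective"})
temporal_words, temporal_codebook = build_codebook({"verb", "adverb"})

def quantize(tokens, codebook):
    # Nearest-neighbor lookup by squared Euclidean distance.
    d2 = ((tokens[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)  # (K, V)
    idx = d2.argmin(axis=1)
    return idx, codebook[idx]

# Two slightly perturbed codebook rows play the role of encoder outputs.
spatial_query = spatial_codebook[[2, 0]] + 1e-3
idx, quantized = quantize(spatial_query, spatial_codebook)
print([spatial_words[i] for i in idx])
```

Spatial queries would be quantized against `spatial_codebook` and temporal queries against `temporal_codebook`, so each discrete token index corresponds to a word of the matching part of speech.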

Curriculum Learning Strategy

To stabilize training, a curriculum learning strategy is employed. Training proceeds in three progressive stages, moving from spatial pre-training on image data to joint spatial-temporal training on video data, so that the final model can decode full spatiotemporal content.
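A staged schedule of this kind can be expressed as a small lookup from training step to active stage. The sketch below is only illustrative: the step counts are invented, and because the text names the first and last stages but does not describe the middle one, the second entry is an explicit placeholder rather than a description of the paper's procedure.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Stage:
    name: str
    data: str
    steps: int  # hypothetical duration; not taken from the paper

SCHEDULE = [
    Stage("spatial_pretraining", "images", 1000),
    Stage("intermediate", "not specified in the text", 1000),  # placeholder
    Stage("joint_spatial_temporal", "videos", 2000),
]

def stage_at(step, schedule=SCHEDULE):
    """Return the stage that is active at a given global training step."""
    start = 0
    for stage in schedule:
        if step < start + stage.steps:
            return stage
        start += stage.steps
    return schedule[-1]  # past the end: stay in the final stage

for step in (0, 1500, 3000):
    print(step, stage_at(step).name)
```

A training loop would call `stage_at(step)` to decide which dataset to sample from and which modules to update at that point.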

Experimental Results

Video Reconstruction and Generation

SweetTokenizer improves the trade-off between compression and reconstruction fidelity. On the UCF-101 dataset, it achieves reconstruction fidelity comparable to state-of-the-art methods while using only 25% of their tokens. For video generation, it reports a 32.9% improvement in generation metrics, which points to the benefit of efficient token utilization combined with semantic integration.
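To make the two headline figures concrete, the arithmetic can be sketched as follows. The baseline token count and the scores are made-up numbers chosen only to reproduce the percentages, and treating the 32.9% as a relative reduction in a lower-is-better score is an assumption about how the improvement is computed.

```python
def token_budget(baseline_tokens, fraction):
    # Tokens used when keeping a given fraction of a baseline budget.
    return round(baseline_tokens * fraction)

def relative_improvement(baseline_score, new_score):
    # Relative reduction of a lower-is-better metric (assumed convention).
    return (baseline_score - new_score) / baseline_score

BASELINE_TOKENS = 1024  # hypothetical baseline tokens per clip
compact_tokens = token_budget(BASELINE_TOKENS, 0.25)
print(compact_tokens)  # 256

# Hypothetical scores: 100.0 for a baseline, 67.1 for the compact tokenizer.
gain = relative_improvement(100.0, 67.1)
print(f"{gain:.1%}")  # 32.9%
```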

Image Reconstruction

The tokenizer also performs well on image data, showing substantial improvements in reconstruction FID (rFID) on datasets like ImageNet-1K; rFVD, its video counterpart, applies to the video results rather than to images. By maintaining a semantically rich latent space, SweetTokenizer supports high-quality reconstruction and lays the groundwork for visual token generation and comprehension.

Implications and Future Work

SweetTokenizer is relevant to computer vision tasks that require compact yet effective visual representations, with potential applications ranging from generative models to visual recognition systems. Future work may explore strengthening its semantic capabilities through contrastive learning, further refining the balance between compression and fidelity, and tailoring tokenization methods to diverse visual data types.

Conclusion

SweetTokenizer discretizes video and image data through semantically enhanced compression. Its ability to reduce token counts while maintaining high reconstruction fidelity makes it a promising direction for research on visual tokenization at the intersection of computer vision and natural language processing.