- The paper demonstrates that DOME's dynamic hierarchical outline and memory-enhancement approach significantly improves long-form story coherence and reduces context conflicts.

- The methodology integrates macro and micro planning via a novel DHO mechanism coupled with a temporal knowledge graph-based memory module for efficient content retrieval.

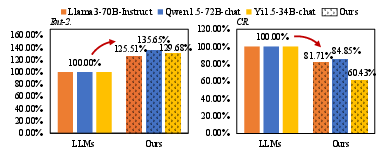

- Experimental results indicate that DOME outperforms existing methods in fluency, diversity, and plot consistency in long narrative generation.

Generating Long-form Story Using Dynamic Hierarchical Outlining with Memory-Enhancement

This essay reviews the research paper "Generating Long-form Story Using Dynamic Hierarchical Outlining with Memory-Enhancement," which introduces the Dynamic Hierarchical Outlining with Memory-Enhancement (DOME) method for long-form story generation. This method addresses the challenges of maintaining contextual consistency and coherent plot development in long-form stories generated by LLMs.

Introduction

The paper tackles the task of long-form story generation, a complex NLP problem requiring creativity and long-term planning. Existing methods often rely on fixed outlines or lack overarching macro-level planning, which makes it difficult to ensure both contextual consistency and coherent plot development. DOME is introduced as a solution to these issues, enhancing story coherence from both plot and expression perspectives.

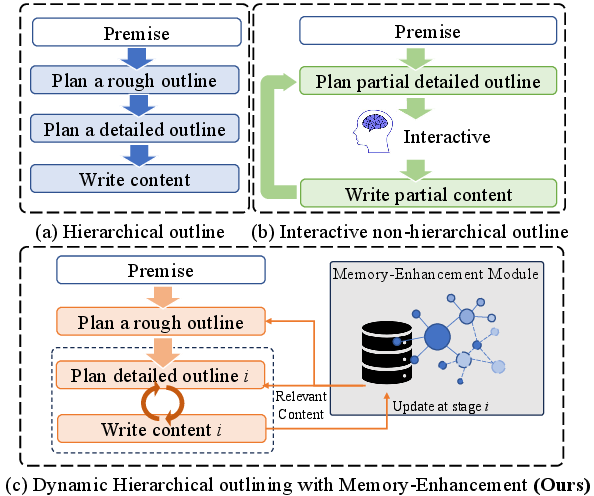

Figure 1: Illustration and comparison of three strategies of long-form story generation.

DOME Methodology

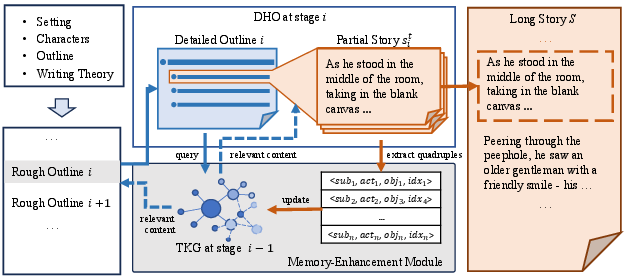

Dynamic Hierarchical Outline (DHO) Mechanism

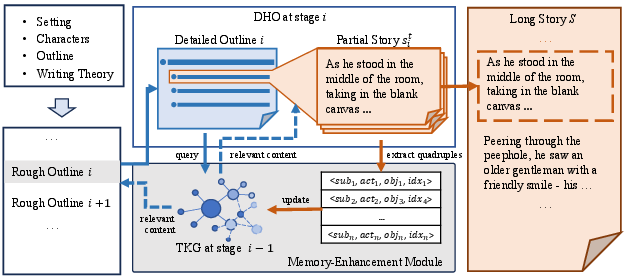

The DHO mechanism fuses the planning and writing stages and incorporates novel-writing theory to generate coherent plot structures. It dynamically expands a rough outline as the story is written, so the plan can adapt to uncertainties that arise during generation. Integrating macro- and micro-level planning helps maintain plot completeness and fluent plot development.
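To make the idea of interleaved planning and writing concrete, the following is a minimal sketch, not the authors' implementation. It assumes a two-level outline in which each rough node is expanded into detailed nodes only when the writer reaches it, so the expansion can see what has already been written. The names `OutlineNode`, `expand`, `write_story`, `toy_planner`, and `toy_writer` are hypothetical, and the two toy functions stand in for LLM calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class OutlineNode:
    """One outline entry; children hold the finer-grained plan (hypothetical structure)."""
    summary: str
    children: List["OutlineNode"] = field(default_factory=list)


def expand(node: OutlineNode, context: List[str],
           planner: Callable[[str, List[str], int], str], fanout: int = 2) -> None:
    """Expand a rough node into `fanout` detailed child nodes, given the text written so far."""
    for i in range(fanout):
        node.children.append(OutlineNode(planner(node.summary, context, i)))


def write_story(rough_outline: List[OutlineNode],
                planner: Callable[[str, List[str], int], str],
                writer: Callable[[str, List[str]], str]) -> List[str]:
    """Interleave planning and writing: each rough node is expanded only when it is reached."""
    story: List[str] = []
    for node in rough_outline:
        expand(node, story, planner)          # micro-level plan, conditioned on prior text
        for child in node.children:
            story.append(writer(child.summary, story))
    return story


# Toy stand-ins for LLM calls (assumptions for illustration only).
def toy_planner(summary: str, context: List[str], i: int) -> str:
    return f"{summary} / beat {i + 1} (after {len(context)} written passages)"


def toy_writer(summary: str, context: List[str]) -> str:
    return f"[passage for: {summary}]"


rough = [OutlineNode("Setup"), OutlineNode("Conflict")]
story = write_story(rough, toy_planner, toy_writer)
```

In this sketch the second rough node is planned only after the first two passages exist, which is the property that distinguishes a dynamic outline from a fixed one.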

Memory-Enhancement Module (MEM)

MEM utilizes temporal knowledge graphs to store and access previously generated content, aiding in reducing contextual conflicts and improving coherence. This module provides relevant content for outline planning and sequential story writing, ensuring consistency and fluidity in the narrative.

Figure 2: General diagram of proposed DOME.
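The memory module described above can be illustrated with a small sketch of a temporal knowledge graph. This is an assumption-laden illustration rather than the paper's design: facts are stored as (subject, relation, object, chapter) records, and retrieval returns the facts that mention an entity from earlier chapters, newest first. The class and field names (`Fact`, `TemporalMemory`, `chapter`) are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Fact:
    """A timestamped triple; `chapter` plays the role of the time index (assumed)."""
    subject: str
    relation: str
    obj: str
    chapter: int


class TemporalMemory:
    """Toy temporal knowledge graph indexed by entity name."""

    def __init__(self) -> None:
        self._by_entity: Dict[str, List[Fact]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str, chapter: int) -> None:
        fact = Fact(subject, relation, obj, chapter)
        self._by_entity[subject].append(fact)
        if obj != subject:
            self._by_entity[obj].append(fact)

    def retrieve(self, entity: str, before_chapter: int) -> List[Fact]:
        """Return facts mentioning `entity` from chapters earlier than `before_chapter`, newest first."""
        facts = [f for f in self._by_entity.get(entity, []) if f.chapter < before_chapter]
        return sorted(facts, key=lambda f: f.chapter, reverse=True)


mem = TemporalMemory()
mem.add("Mira", "lives_in", "Harbor Town", chapter=1)
mem.add("Jon", "mentor_of", "Mira", chapter=2)
mem.add("Mira", "lives_in", "the Capital", chapter=4)
recent = mem.retrieve("Mira", before_chapter=5)
```

Keeping the chapter index on every fact lets a writer or planner prefer the most recent state of an entity (here, Mira's latest residence) while still seeing older facts that a new passage must not contradict.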

Experimental Evaluation

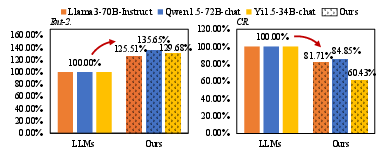

Experiments demonstrate that DOME significantly improves the fluency, coherence, and quality of long-form stories compared to state-of-the-art methods. The authors present both automatic evaluations (e.g., entropy metrics) and human evaluations, which highlight DOME's ability to produce long stories with reduced conflicts and enhanced diversity.

Figure 3: Scalability results of applying DOME on different LLMs.
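The summary above mentions entropy metrics without giving a formula. As a hedged illustration, the sketch below computes Shannon entropy over the n-gram distribution of a token sequence, a common way to quantify lexical diversity. The paper's exact definition may differ, and the function name `ngram_entropy` is hypothetical.

```python
import math
from collections import Counter
from typing import List


def ngram_entropy(tokens: List[str], n: int) -> float:
    """Shannon entropy (in bits) of the empirical n-gram distribution of `tokens`."""
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0  # sequence shorter than n: no n-grams to measure
    counts = Counter(ngrams)
    total = len(ngrams)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


repetitive = "a b a b".split()
varied = "a b c d e".split()
```

Higher values indicate that n-grams are spread more evenly, which is why such a metric is often read as a proxy for diversity in generated text.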

Implications and Future Directions

The DOME method provides a framework for improving story generation with LLMs. Natural directions for future research include refining the memory mechanisms and exploring applications beyond narrative generation. The combination of dynamic outlining and memory enhancement could serve as a template for other generative tasks that require sustained coherence and thematic consistency.

Conclusion

The DOME framework advances automatic long-form story generation by improving plot coherence and reducing contextual conflicts. Its modular design and applicability across different LLMs suggest wide-ranging implications for future research in AI-driven narrative construction.

By leveraging the strengths of hierarchical outlining and advanced memory mechanisms, this research presents a compelling approach to overcoming longstanding challenges in the domain of automatic story generation.