- The paper presents a model that calibrates long- and short-term contexts using BERT-tiny to enhance narrative coherence in long story generation.

- It employs discourse tokens to structure narratives from introduction to conclusion, ensuring complete and orderly story progression.

- Experimental results across diverse datasets demonstrate significant improvements in coherence, completeness, and relevance compared to baseline models.

LongStory: Coherent, Complete and Length Controlled Long Story Generation

Introduction

The task of generating coherent and complete long stories has been a significant challenge in NLP. Human authors can write stories of varying lengths while maintaining coherence and ending appropriately, a feat that current LLMs struggle to achieve. This paper introduces a novel model, LongStory, designed to address these challenges by ensuring coherence, completeness, and length control in generated stories.

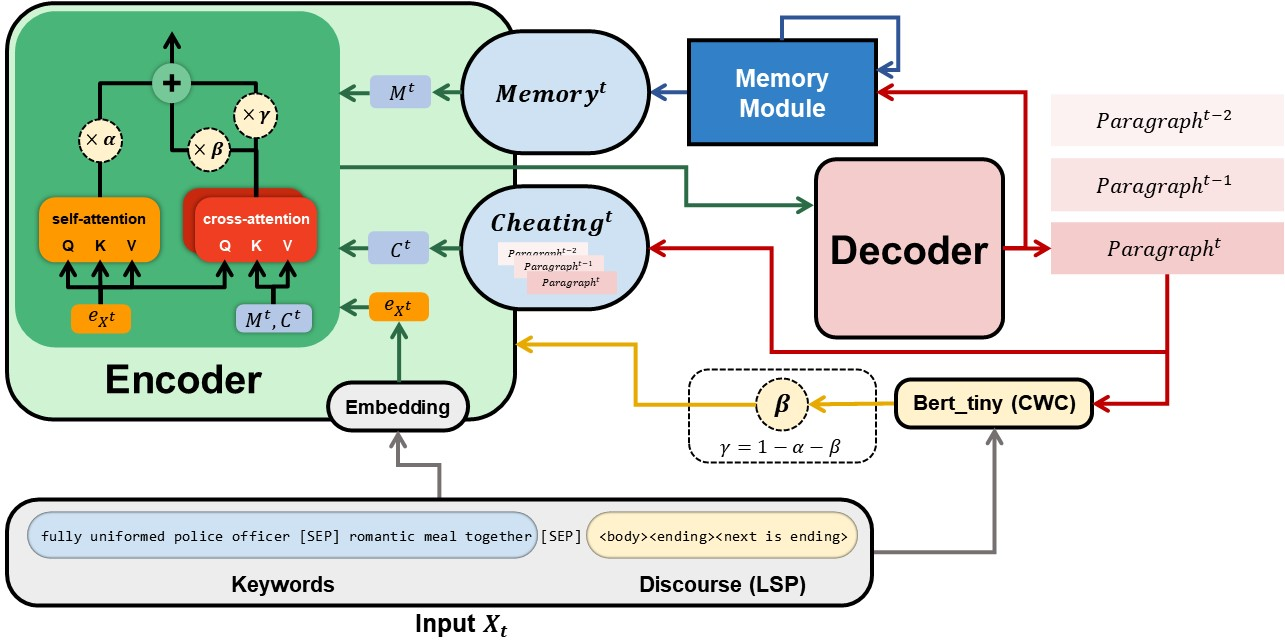

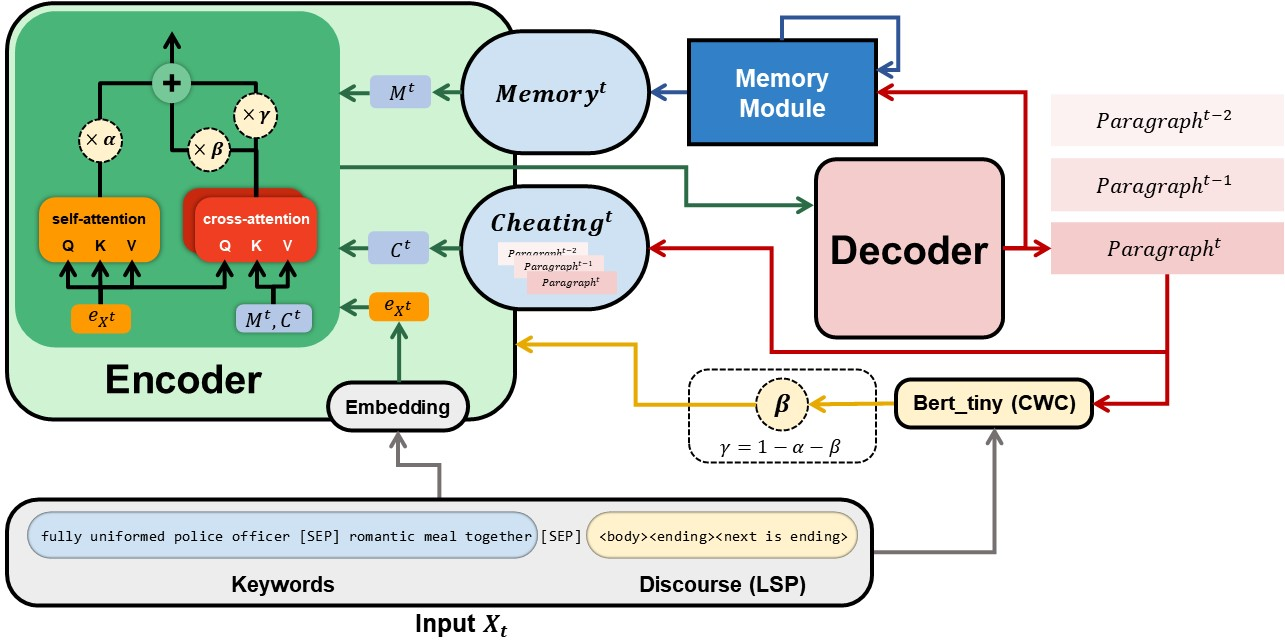

LongStory employs two methodologies: the long- and short-term contexts weight calibrator (CWC) and long story structural positions (LSP). CWC adjusts the weights given to the long-term context (Memory) and the short-term context (Cheating), since the two contribute differently to story coherence and continuity. LSP uses discourse tokens to mark each paragraph's structural position within the story, enabling efficient paragraph generation.

Methodology

LongStory's architecture uses BERT-tiny as a calibrator that refines the balance between long-term and short-term context. This is meant to preserve continuity and coherence across recursively generated paragraphs, a common difficulty in long story generation.

Figure 1: Model architecture. LongStory utilizes keywords and discourse tokens through the CWC and LSP, employing BERT-tiny to balance long-term and short-term contexts.

The methodology relies on recursive paragraph generation: each output paragraph becomes part of the context for the paragraphs that follow. Coherence is maintained through calibrated context weights β and γ, derived from the long-term memory $M_t$ and the short-term context $C_t$.
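The recursive loop can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not LongStory's implementation: `generate` is a hypothetical stand-in for the language model, $M_t$ is simplified to the list of all earlier paragraphs, $C_t$ to the immediately preceding paragraph, and the calibrated weights are left out.

```python
from typing import Callable, List

# Hypothetical model interface (assumption): (keywords, memory, cheating) -> next paragraph.
Generator = Callable[[str, List[str], str], str]


def generate_recursively(keywords: str, num_paragraphs: int, generate: Generator) -> List[str]:
    """Generate paragraphs one at a time, feeding each output back in as context."""
    paragraphs: List[str] = []
    for _ in range(num_paragraphs):
        memory = list(paragraphs)                        # M_t (simplified): all earlier paragraphs
        cheating = paragraphs[-1] if paragraphs else ""  # C_t (simplified): the previous paragraph
        paragraphs.append(generate(keywords, memory, cheating))
    return paragraphs


def toy_generate(keywords: str, memory: List[str], cheating: str) -> str:
    """Placeholder 'model' that only reports how many paragraphs precede it."""
    return f"paragraph {len(memory) + 1} about {keywords}"


if __name__ == "__main__":
    for p in generate_recursively("dragon, castle", 3, toy_generate):
        print(p)
```

Swapping `toy_generate` for a real model call keeps the loop unchanged; only the context construction inside the loop would need to reflect how LongStory actually represents $M_t$ and $C_t$.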

Long- and Short-Term Contexts Weight Calibrator (CWC): LongStory uses BERT-tiny to calibrate the context weights for each paragraph. The learnable weight β controls the long-term context (Memory, $M_t$), while the short-term context is supplied through Cheating ($C_t$). This calibration helps keep the narrative coherent from one paragraph to the next.
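To make the weighting concrete, here is a small Python sketch of a calibrated blend of the two contexts. Several pieces are assumptions rather than details given in this section: BERT-tiny is replaced by a hypothetical linear scorer with a sigmoid (`calibrate`, `w_beta`, `w_gamma`), γ is assumed to be the short-term weight, and the contexts are assumed to be combined as $\beta M_t + \gamma C_t$ over embedding vectors.

```python
import math
from typing import List, Tuple

Vector = List[float]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def calibrate(features: Vector, w_beta: Vector, w_gamma: Vector) -> Tuple[float, float]:
    """Hypothetical stand-in for the BERT-tiny calibrator.

    Scores the paragraph features linearly and squashes each score to (0, 1),
    giving beta (long-term weight) and gamma (short-term weight).
    """
    beta = sigmoid(sum(f * w for f, w in zip(features, w_beta)))
    gamma = sigmoid(sum(f * w for f, w in zip(features, w_gamma)))
    return beta, gamma


def weighted_context(memory: Vector, cheating: Vector, beta: float, gamma: float) -> Vector:
    """Blend M_t and C_t element-wise as beta * M_t + gamma * C_t (assumed form)."""
    if len(memory) != len(cheating):
        raise ValueError("memory and cheating must have the same dimension")
    return [beta * m + gamma * c for m, c in zip(memory, cheating)]


if __name__ == "__main__":
    beta, gamma = calibrate([0.5, -1.0], w_beta=[2.0, 0.5], w_gamma=[-1.0, 1.0])
    print(round(beta, 3), round(gamma, 3))
    print(weighted_context([2.0, 4.0], [1.0, 0.0], beta, gamma))
```

Because each weight comes from its own sigmoid, this sketch can emphasize both contexts at once or suppress both; a softmax over the two scores would instead force them to trade off and sum to one.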

Long Story Structural Positions (LSP): Discourse tokens give each paragraph a structural position within the story. Tokens such as <intro>, <body>, and <tail>, among others, help maintain a consistent narrative flow by signaling to the model where the current paragraph falls in the progression from beginning to end.
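One way to assign structural-position tokens is sketched below in Python. The rule (first paragraph `<intro>`, last `<tail>`, everything between `<body>`), the input format, and the helper names are illustrative assumptions; the other tokens the section alludes to are ignored. Because `<tail>` is placed at a chosen paragraph count, a scheme like this also hints at how structural positions can support length control.

```python
from typing import List


def discourse_tokens(num_paragraphs: int) -> List[str]:
    """Map each paragraph index to an assumed structural-position token.

    Under this simple rule a one-paragraph story receives only <intro>.
    """
    if num_paragraphs < 1:
        raise ValueError("num_paragraphs must be at least 1")
    tokens: List[str] = []
    for i in range(num_paragraphs):
        if i == 0:
            tokens.append("<intro>")
        elif i == num_paragraphs - 1:
            tokens.append("<tail>")
        else:
            tokens.append("<body>")
    return tokens


def prefix_inputs(keywords: str, num_paragraphs: int) -> List[str]:
    """Prepend each paragraph's token to the keywords (hypothetical input format)."""
    return [f"{token} {keywords}" for token in discourse_tokens(num_paragraphs)]


if __name__ == "__main__":
    print(discourse_tokens(4))
    print(prefix_inputs("lost map, island", 3))
```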

Experimental Results

LongStory was trained on diverse datasets, including WritingPrompts, BookSum, and Reedsy Prompts, which cover a range of story lengths. The results show that LongStory outperforms existing models, including PlotMachines, on coherence, completeness, and relevance, and that it produces less repetitive text.
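This section does not define how repetitiveness is scored. As a hedged illustration only, the Python sketch below computes one common proxy, the share of word n-grams that duplicate an earlier n-gram; it is not necessarily the metric used to evaluate LongStory, and `repeated_ngram_ratio` is a hypothetical name.

```python
from collections import Counter
from typing import List


def repeated_ngram_ratio(tokens: List[str], n: int = 3) -> float:
    """Fraction of n-grams that repeat an earlier n-gram (0.0 means no repetition)."""
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0
    counts = Counter(ngrams)
    # Every occurrence beyond the first counts as one repetition.
    repeats = sum(c - 1 for c in counts.values())
    return repeats / len(ngrams)


if __name__ == "__main__":
    print(repeated_ngram_ratio("the cat sat the cat sat".split()))
```

Lower values indicate less repetitive text, so under a proxy like this a model that "outperforms" on repetitiveness would score lower.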

The results also shed light on context utilization: LongStory generates more coherent stories than the baselines. It also exploits narrative structure, and its high completeness scores indicate that the final paragraphs properly conclude the generated stories.

Further Analysis

Zero-shot Testing: In zero-shot tests on datasets not seen during training, LongStory generalizes well, which underscores its adaptability and robustness in story generation tasks.

Ablated Methodology Impact: Ablated versions of LongStory that drop Memory (LongStory¬M), Cheating (LongStory¬C), or discourse tokens (LongStory¬D) highlight the role of each component. In particular, including the Memory and Cheating contexts clearly improves coherence and relevance, supporting the effectiveness of the full model.
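The ablated variants can be pictured as switches on what each paragraph is conditioned on. The Python sketch below assumes a plain text-concatenation input with a `|` separator, both hypothetical simplifications; it only shows which component each variant removes.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Variant:
    name: str
    use_memory: bool = True      # M: long-term context (Memory)
    use_cheating: bool = True    # C: short-term context (Cheating)
    use_discourse: bool = True   # D: discourse tokens


VARIANTS = [
    Variant("LongStory"),
    Variant("LongStory¬M", use_memory=False),
    Variant("LongStory¬C", use_cheating=False),
    Variant("LongStory¬D", use_discourse=False),
]


def build_input(v: Variant, token: str, keywords: str, memory: str, cheating: str) -> str:
    """Assemble one paragraph's conditioning text, dropping the ablated parts."""
    parts: List[str] = []
    if v.use_discourse:
        parts.append(token)
    parts.append(keywords)
    if v.use_memory:
        parts.append(memory)
    if v.use_cheating:
        parts.append(cheating)
    return " | ".join(parts)


if __name__ == "__main__":
    for v in VARIANTS:
        print(v.name, "->", build_input(v, "<body>", "keywords", "memory", "cheating"))
```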

Conclusion

LongStory advances long story generation by maintaining coherence and completeness across lengthy narratives. Its CWC and LSP methods enable structured story progression and effective context management. The results across varied datasets suggest potential for other NLP tasks involving lengthy text generation and point toward more refined, contextually aware models.