- The paper proposes a multi-agent Story Generator that integrates LLMs, dual memory storage, and knowledge graph-driven twist generation.

- It employs long-term and short-term memory strategies to maintain thematic coherence and mitigate theme drift in extended narratives.

- Experiments demonstrate significant improvements in narrative quality, with enhanced interestingness, logical consistency, and readability over baseline models.

Long Story Generation via Knowledge Graph and Literary Theory

Introduction

The paper "Long Story Generation via Knowledge Graph and Literary Theory" (2508.03137) proposes an approach to generating long-form stories that targets two persistent problems in long text generation (LTG): theme drift and plot inconsistency. Previous methods have relied primarily on outline-based generation, which often loses thematic coherence and produces unappealing storylines. The authors introduce a multi-agent Story Generator structure with LLMs as its core components, aiming to improve narrative quality and logical consistency in extended stories.

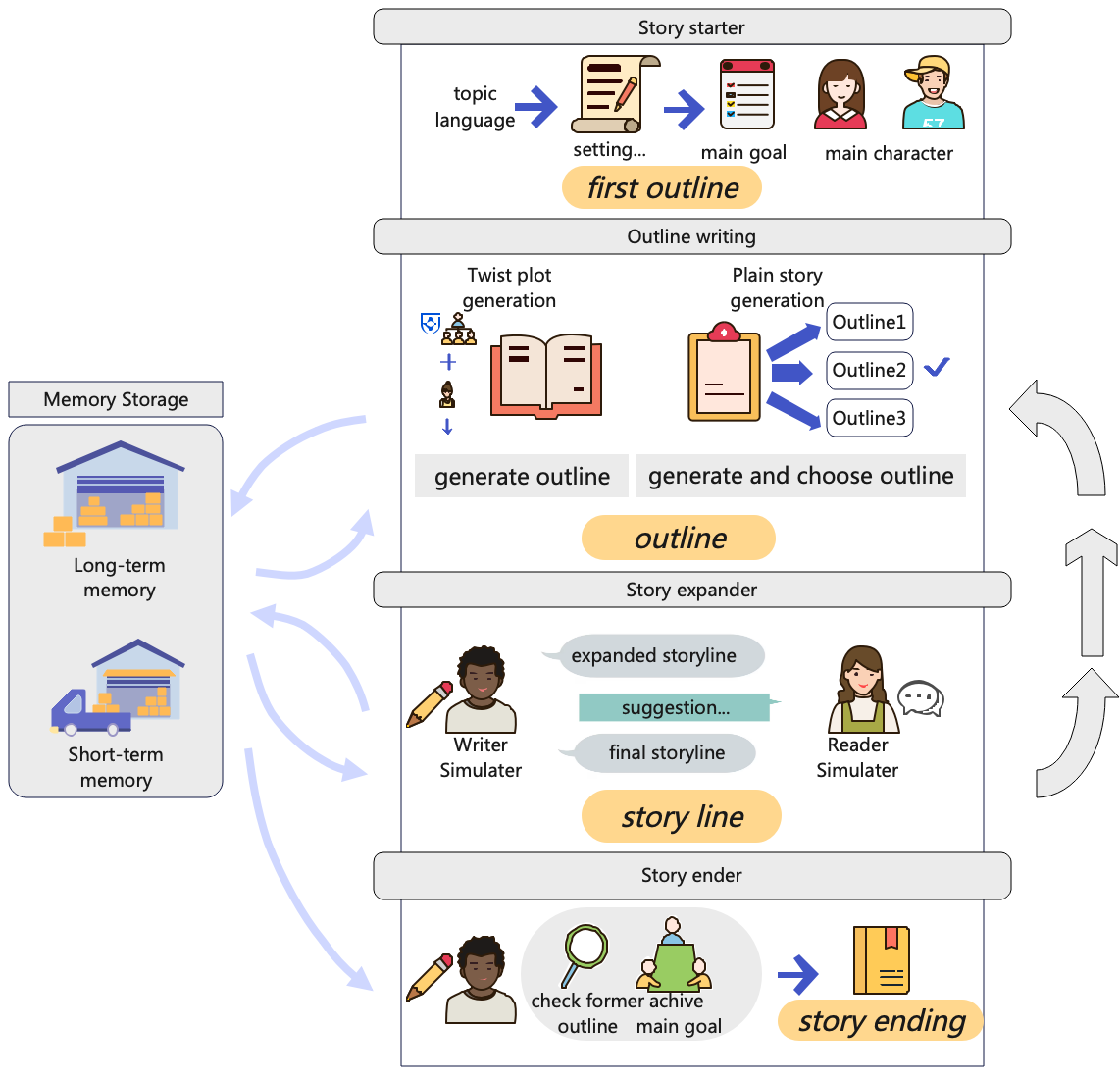

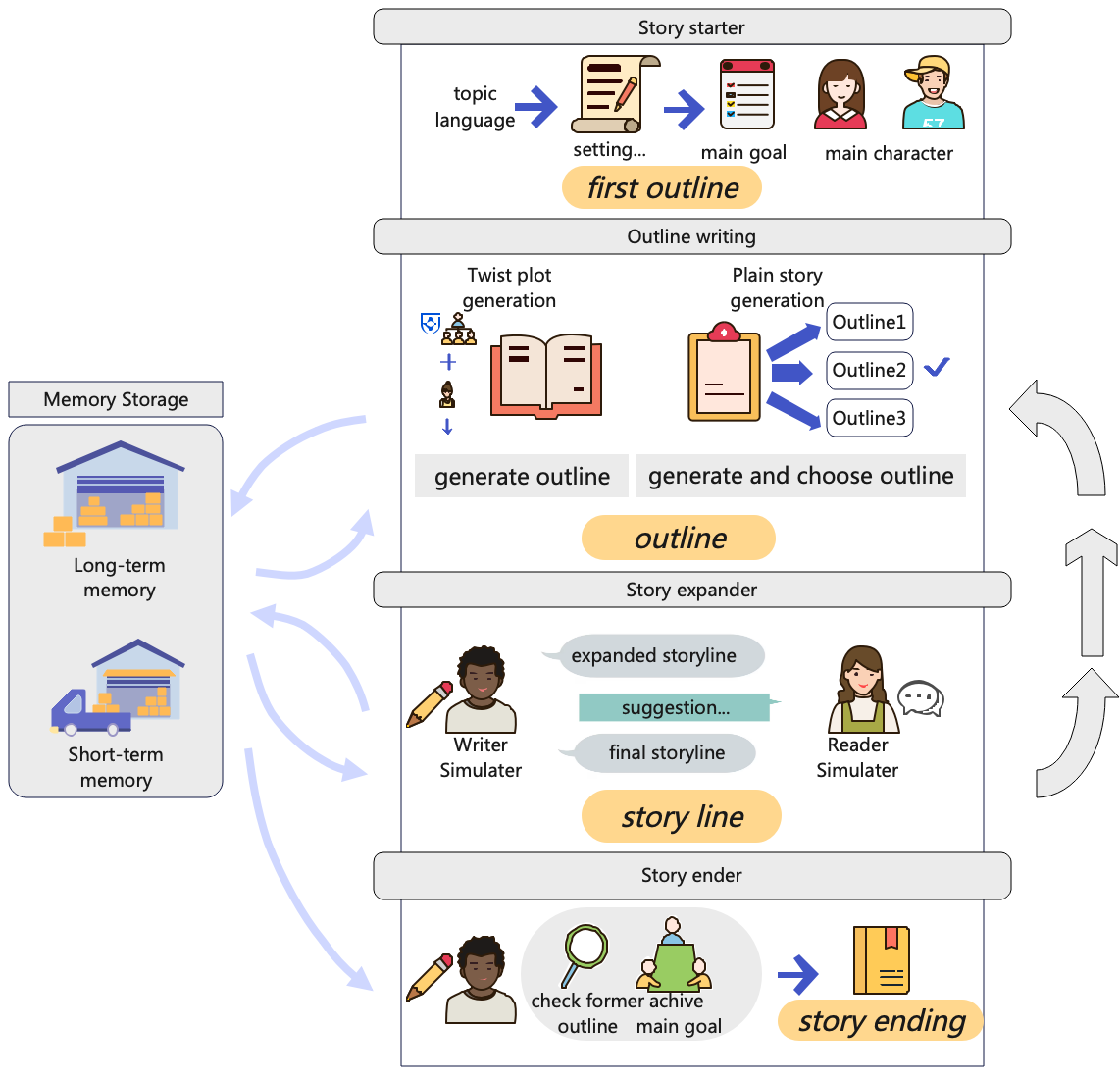

Figure 1: Overview of the Story Generator structure.

Story Generator Architecture

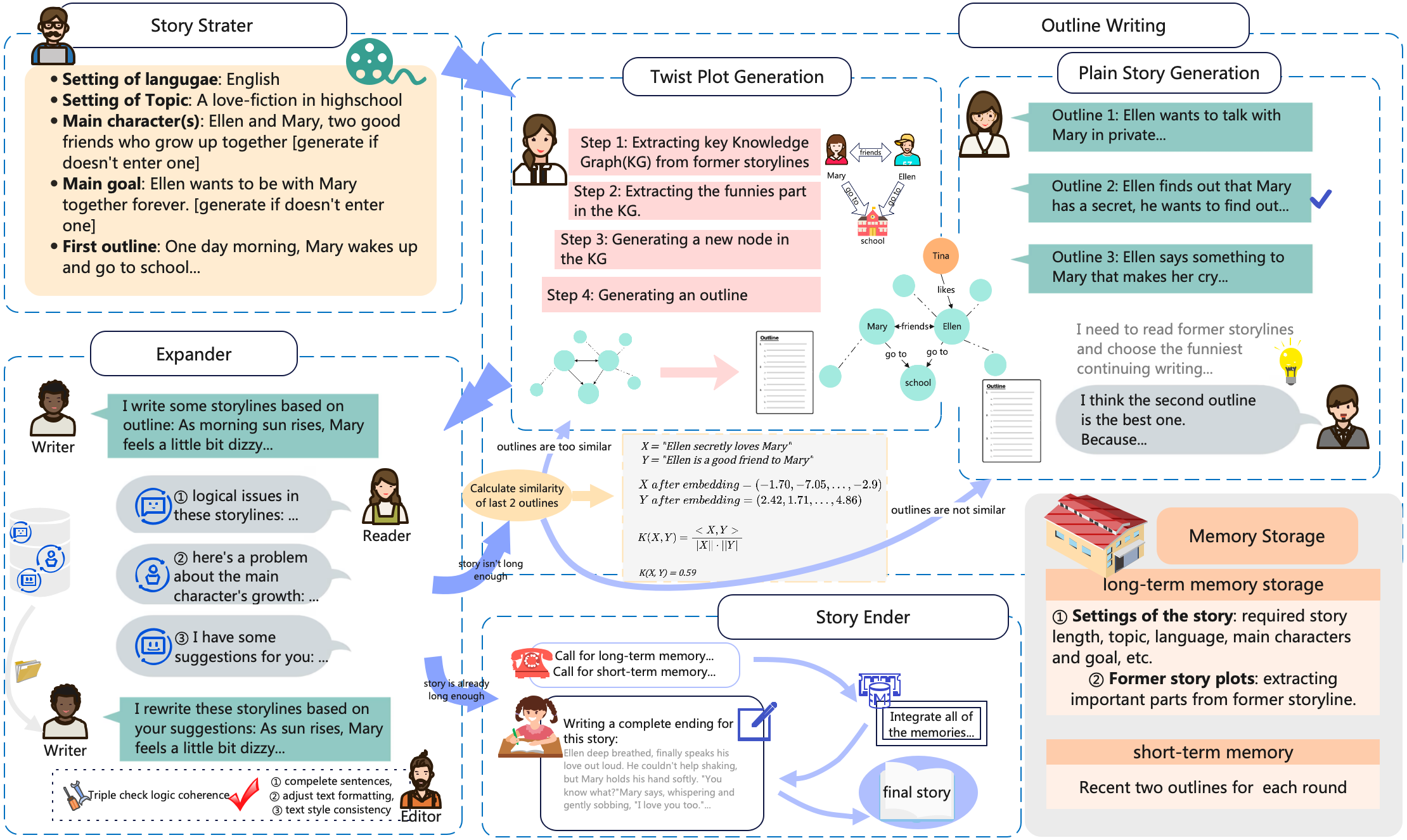

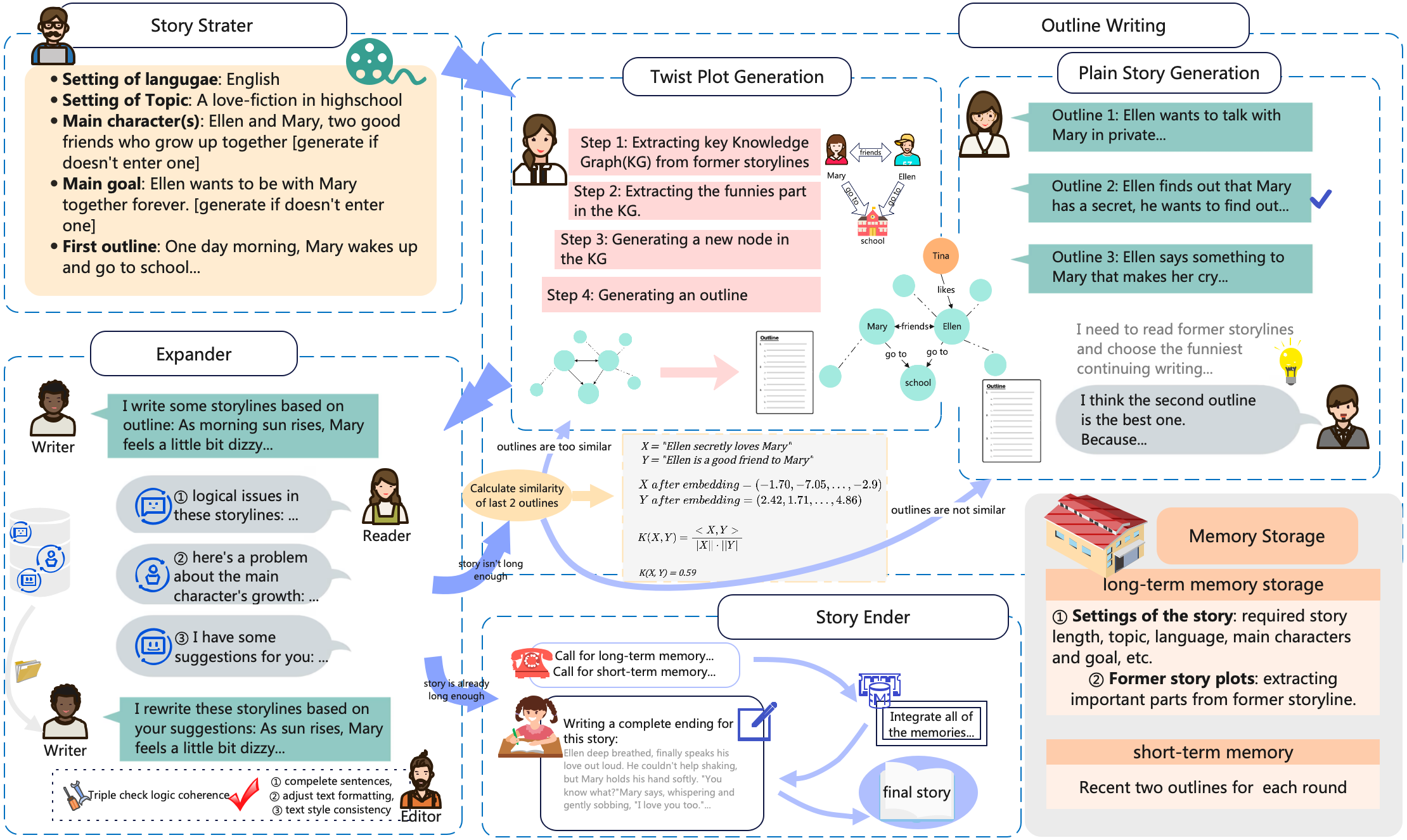

The Story Generator operates through four structured stages: Story Starter, Outline Writing, Story Expander, and Story Ender. Each stage is crafted to ensure thematic consistency and engagement through the strategic use of memory storage and multi-agent interactions.
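The four stages can be pictured as a simple sequential pipeline. The following is a minimal sketch under stated assumptions, not the authors' implementation: the `LLM` callable, the prompt strings, the `num_outlines` parameter, and the `echo_llm` stub are all hypothetical and only illustrate how the stages hand work to each other.

```python
from typing import Callable

# Hypothetical stand-in for an LLM call: takes a prompt, returns text.
LLM = Callable[[str], str]


def generate_story(premise: str, llm: LLM, num_outlines: int = 3) -> str:
    """Run the four stages in order and join their outputs."""
    # Stage 1: Story Starter produces the opening from the premise.
    opening = llm(f"Write an opening for: {premise}")
    # Stage 2: Outline Writing produces one outline per planned segment.
    outlines = [
        llm(f"Outline {i + 1} continuing: {premise}") for i in range(num_outlines)
    ]
    # Stage 3: Story Expander turns each outline into full text.
    chapters = [llm(f"Expand: {outline}") for outline in outlines]
    # Stage 4: Story Ender closes the story.
    ending = llm(f"Write an ending for: {premise}")
    return "\n\n".join([opening, *chapters, ending])


def echo_llm(prompt: str) -> str:
    """Toy model (assumption): wraps the prompt so the flow is visible."""
    return f"[{prompt}]"


if __name__ == "__main__":
    print(generate_story("a lost key", echo_llm, num_outlines=2))
```

In a real system each stage would also consult the memory storage and the agent interactions described in this summary; the sketch leaves those out to keep the control flow visible.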

Memory Storage Strategy

To counter theme drift, the authors propose a memory storage framework comprising long-term and short-term memory components. Long-term memory anchors key story elements, while short-term memory retains recent outlines to maintain context continuity across segments. This dual memory approach mitigates the "Lost in the Middle" phenomenon, ensuring logical narrative flow over extended text spans.
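One way to picture the dual memory is a persistent store for anchored story elements plus a bounded window of recent outlines. The sketch below is an assumption-laden illustration: the class name `StoryMemory`, its method names, and the window size are hypothetical, and the paper's actual storage format is not described in this summary.

```python
from collections import deque
from typing import Deque, Dict


class StoryMemory:
    """Toy dual memory: long-term anchors plus a short-term outline window."""

    def __init__(self, short_term_size: int = 3) -> None:
        # Long-term memory: key story elements that persist for the whole story.
        self.long_term: Dict[str, str] = {}
        # Short-term memory: only the most recent outlines are kept.
        self.short_term: Deque[str] = deque(maxlen=short_term_size)

    def anchor(self, key: str, value: str) -> None:
        """Store or update a key story element in long-term memory."""
        self.long_term[key] = value

    def remember_outline(self, outline: str) -> None:
        """Append an outline; the oldest one is dropped once the window is full."""
        self.short_term.append(outline)

    def build_context(self) -> str:
        """Combine both memories into text that could be prepended to a prompt."""
        anchors = "; ".join(f"{k}: {v}" for k, v in self.long_term.items())
        recent = " | ".join(self.short_term)
        return f"Anchors: {anchors}\nRecent outlines: {recent}"


if __name__ == "__main__":
    memory = StoryMemory(short_term_size=2)
    memory.anchor("theme", "redemption")
    for outline in ["o1", "o2", "o3"]:
        memory.remember_outline(outline)
    print(memory.build_context())
```

Because the anchors are re-stated with every call while the outline window stays small, the prompt keeps the theme in view without growing with story length.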

Literary Theory Application

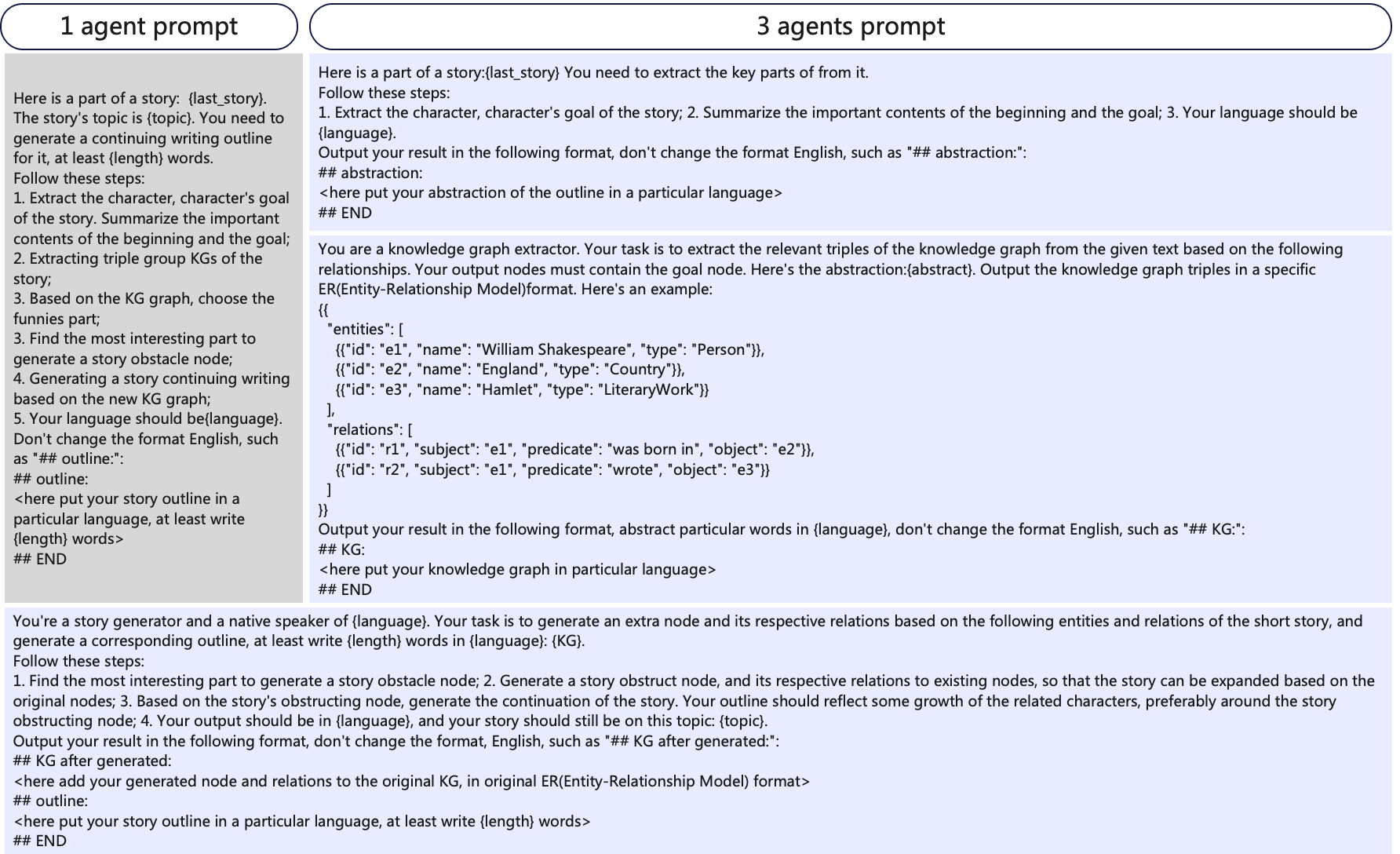

Leveraging literary narratology, the paper introduces a framework for generating story twists driven by plot mechanics rather than mere chronological sequence. By constructing a knowledge graph (KG) centered on character goals, new nodes are introduced to weave complex, engaging plot twists aligned with structural closure theory. This strategy enriches the narrative by maintaining thematic integrity while introducing unpredictability and depth.
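A goal-centered graph of this kind can be sketched with plain triples. This is only an illustration under assumptions: the `GoalGraph` class, the relation names `pursues` and `blocks`, and the prompt serialization are hypothetical, and the paper's actual KG schema and its use of structural closure theory are not specified in this summary.

```python
from typing import List, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)


class GoalGraph:
    """Toy knowledge graph centered on one character's goal."""

    def __init__(self, character: str, goal: str) -> None:
        self.character = character
        self.goal = goal
        self.triples: List[Triple] = [(character, "pursues", goal)]

    def add_twist(self, node: str, relation_to_goal: str = "blocks") -> Triple:
        """Introduce a new node and link it to the goal, producing a twist."""
        triple = (node, relation_to_goal, self.goal)
        self.triples.append(triple)
        return triple

    def twists(self) -> List[str]:
        """Return every node attached to the goal other than the character."""
        return [h for h, _, t in self.triples if t == self.goal and h != self.character]

    def to_prompt(self) -> str:
        """Serialize the graph as text that an outline prompt could include."""
        return "\n".join(f"({h}) -[{r}]-> ({t})" for h, r, t in self.triples)


if __name__ == "__main__":
    graph = GoalGraph("Mira", "find the map")
    graph.add_twist("a forged copy")
    print(graph.to_prompt())
```

Tying every new node to the goal is what keeps a twist on theme in this sketch: a node that has no relation to the character's goal never enters the graph.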

Figure 2: The structure of the Story Generator. Generation begins in the Story Starter stage and proceeds to Outline Writing. The Story Expander expands outlines and triple-checks storylines. The Story Ender gives the story a good ending. Memory Storage follows the whole generation process and returns responses when the generators call it.

Experiments

Experimental Setup

The authors ran comparative experiments with GPT-3.5-turbo and Claude-3-7-sonnet as the core LLMs. Stories across various genres were assessed through human evaluation supported by Auto-J, a generative judge designed to approximate human preferences.

Results

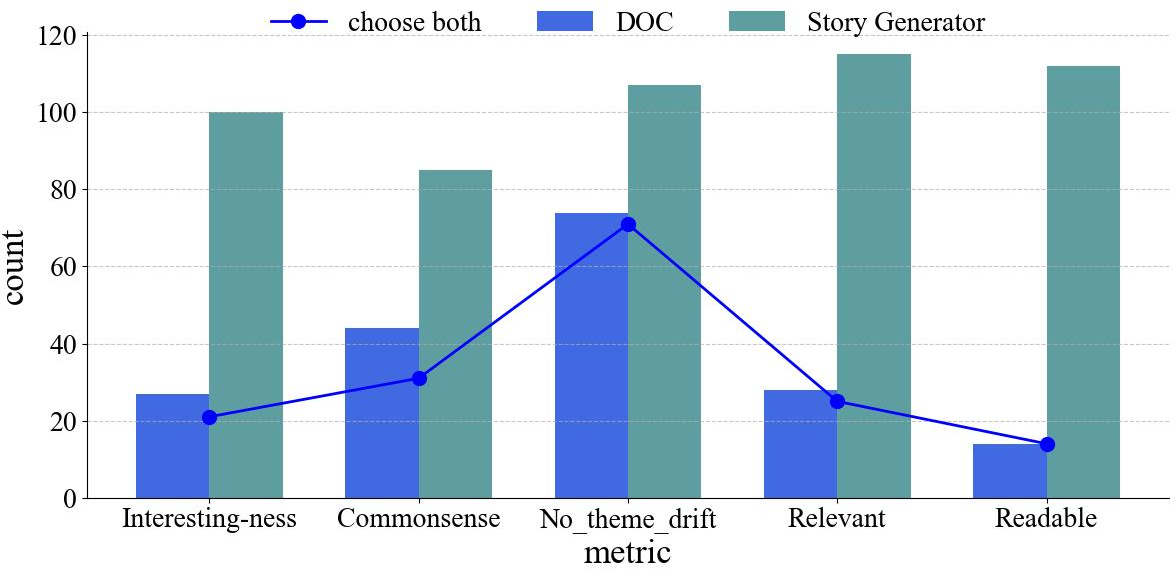

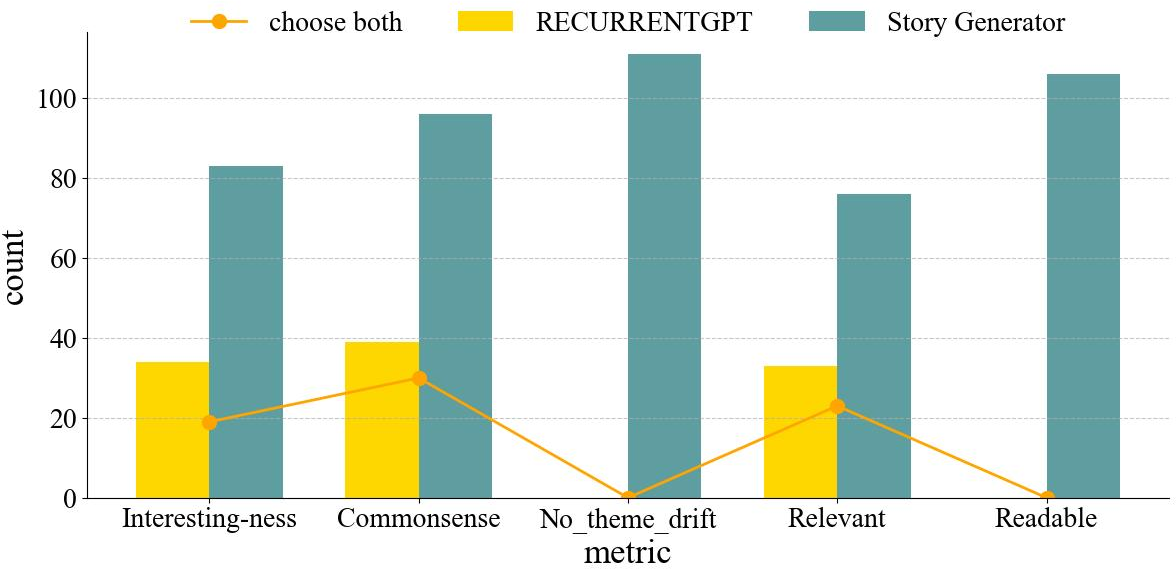

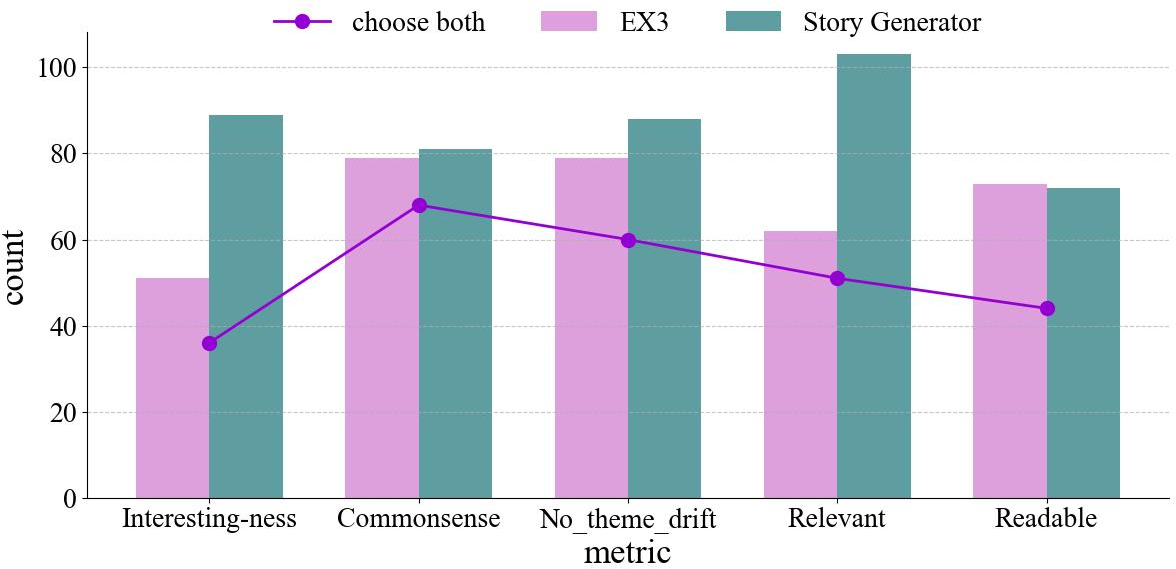

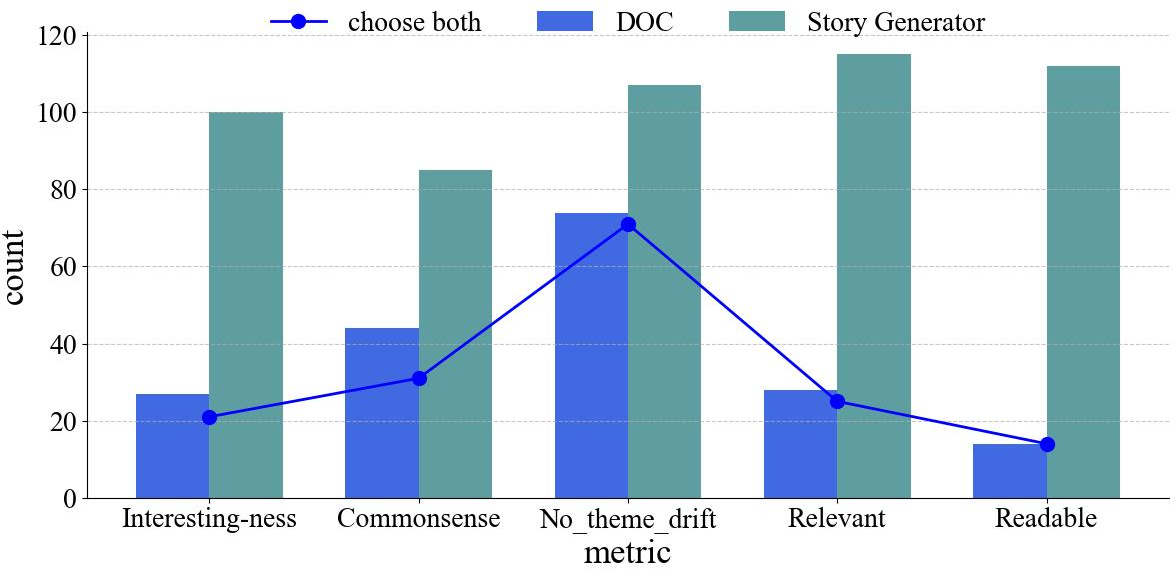

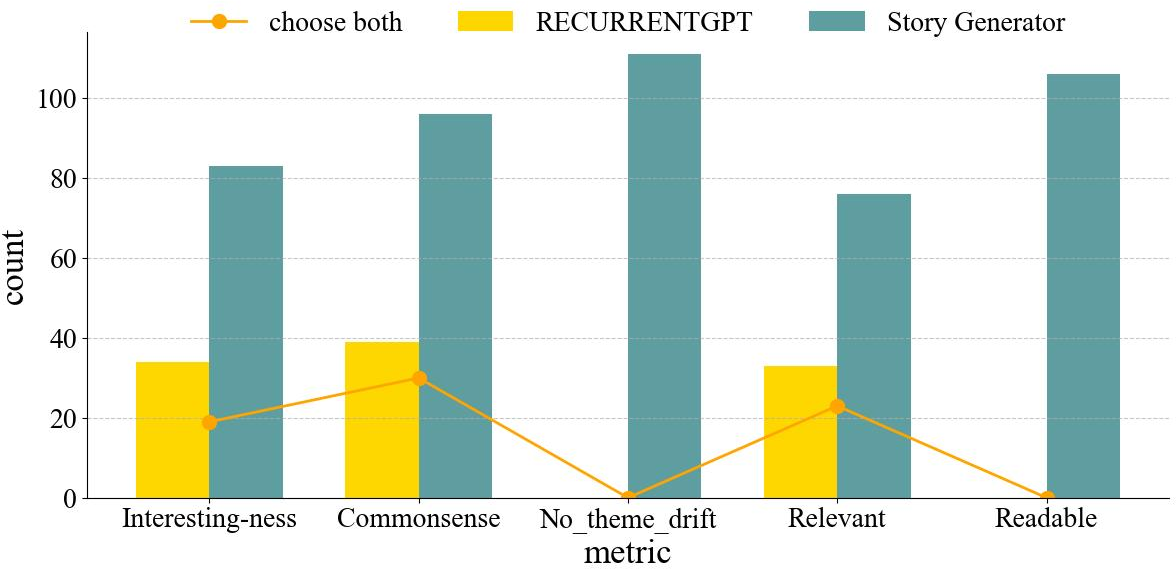

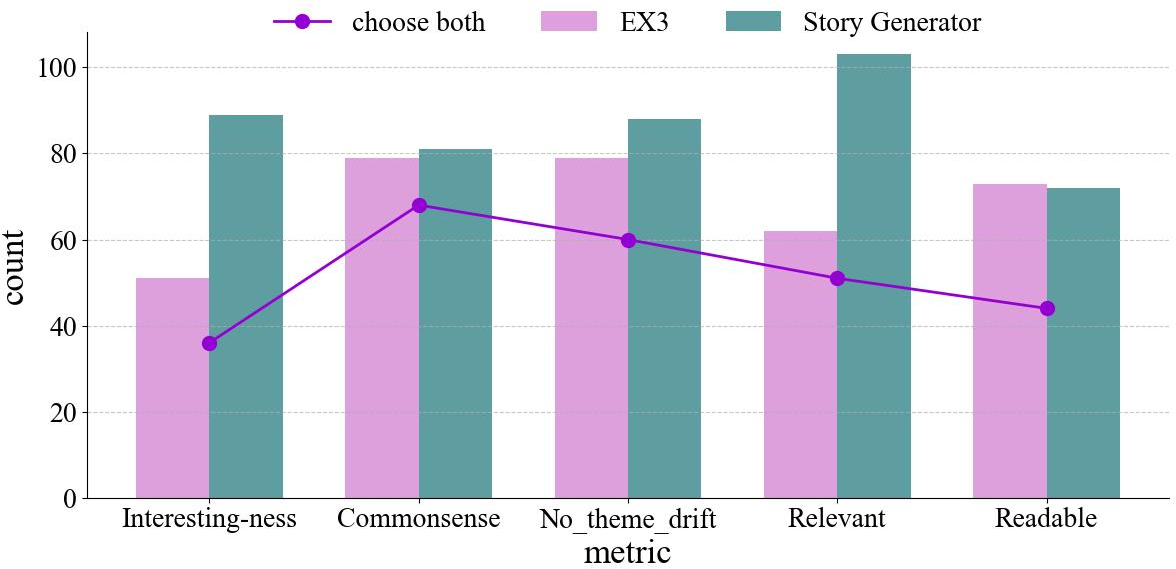

The Story Generator outperformed the baseline models DOC, RECURRENTGPT, and EX3 on every evaluated dimension: interestingness, commonsense logic, thematic consistency, relevance, and readability. The authors take these results as support for the efficacy of the multi-agent structure and the memory storage mechanisms.

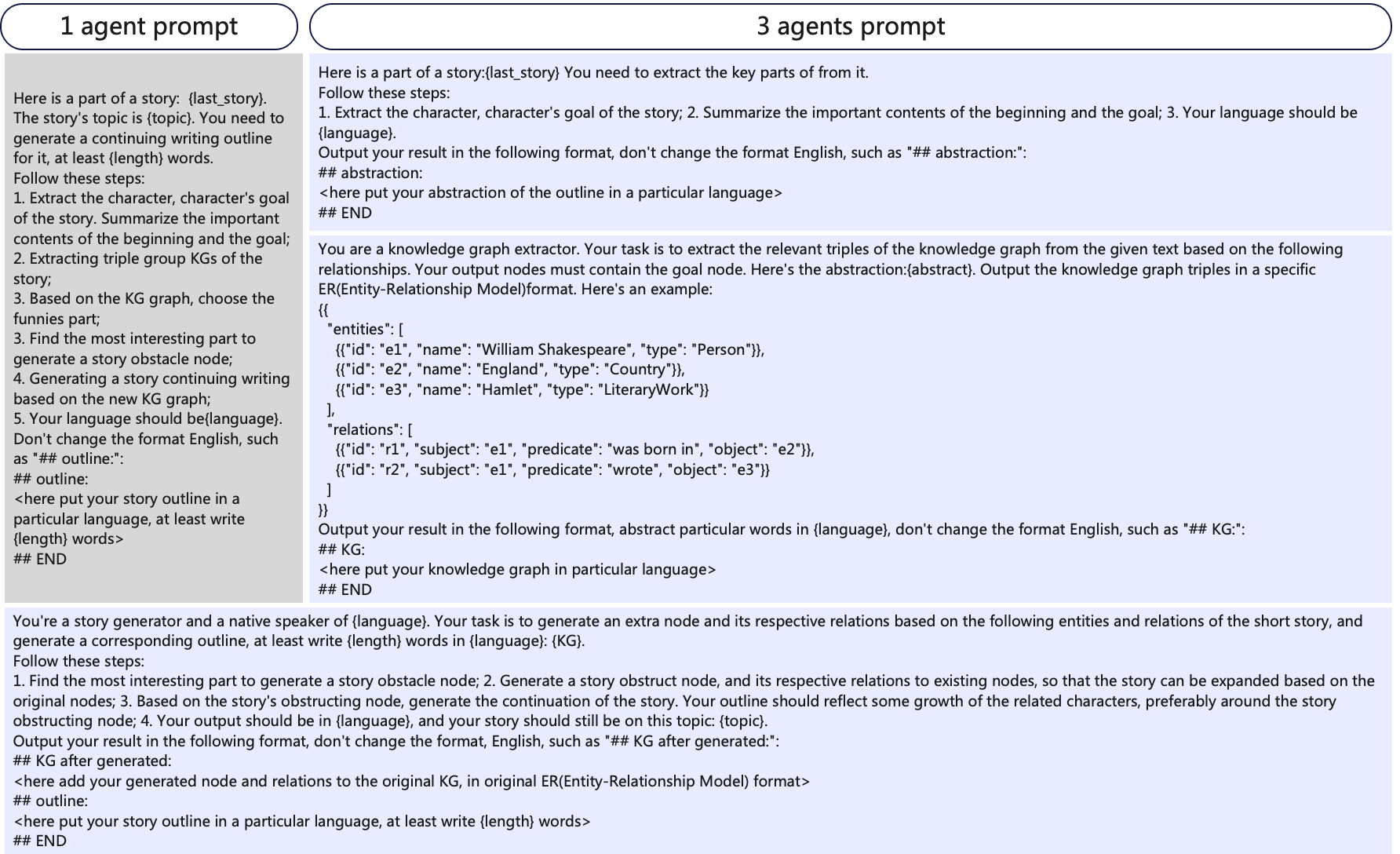

Figure 3: Prompts of two different KG-driven outline generation methods.

Ablation Studies

The results of ablation studies underscore the significance of both the KG-driven twist generator and the multi-agent interaction model. Removing the KG-driven component resulted in less interesting plots and greater theme drift, while excluding multi-agent interaction led to reduced readability and logical consistency. These findings affirm the necessity of both elements in achieving high-quality story generation.

Figure 4: Comparing DOC and Story Generator. Counting identifies certain dimensions of the story.

Analysis and Discussion

The integration of memory mechanisms and narratological theory yields significant improvements in LTG. By anchoring key narrative components in memory, the Story Generator maintains thematic coherence, while KG-driven twist generation keeps plot development engaging. Multi-agent interactions refine the narrative through simulated writer-reader dialogues, which improves logical consistency and readability. The architecture serves as a framework for long-form story generation that narrows the gap between narratives generated by domain-specific LLM systems and those written by humans.
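A writer-reader dialogue of this kind can be pictured as a bounded revision loop. The sketch below is a hypothetical illustration, not the paper's protocol: the `refine` function, the `Writer` and `Reader` signatures, the `max_rounds` limit, and the toy agents are all assumptions.

```python
from typing import Callable, Optional

Writer = Callable[[str, str], str]      # (draft, feedback) -> revised draft
Reader = Callable[[str], Optional[str]]  # draft -> feedback, or None if satisfied


def refine(draft: str, writer: Writer, reader: Reader, max_rounds: int = 3) -> str:
    """Alternate reader feedback and writer revision until the reader is satisfied."""
    for _ in range(max_rounds):
        feedback = reader(draft)
        if feedback is None:
            break  # The reader has no further objections.
        draft = writer(draft, feedback)
    return draft


def toy_reader(draft: str) -> Optional[str]:
    """Toy reader (assumption): only checks that the passage ends with a full stop."""
    return None if draft.endswith(".") else "End the passage with a full stop."


def toy_writer(draft: str, feedback: str) -> str:
    """Toy writer (assumption): applies the single fix the toy reader asks for."""
    return draft + "."


if __name__ == "__main__":
    print(refine("She opened the door", toy_writer, toy_reader))
```

The round limit matters in practice: two LLM agents can keep exchanging feedback indefinitely, so a cap bounds the cost of each refinement pass.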

Conclusion

The Story Generator structure addresses the main challenges of LTG by combining strategic memory retention, KG-based twist generation, and multi-agent collaboration. Having significantly outperformed existing models in human evaluations, the approach represents a notable advance in automated narrative generation, with potential applications in entertainment, education, and virtual reality story crafting.