- The paper introduces a novel loss function that integrates error mean and variance to address high localized errors in PINNs.

- It demonstrates improved accuracy in diverse PDE problems, including Poisson, Burgers', elasticity, and Navier-Stokes equations.

- The approach maintains computational efficiency while providing a promising enhancement for complex physical system modeling.

Physics-informed neural networks (PINNs) have emerged as a compelling approach for solving partial differential equations (PDEs): they leverage the approximation capabilities of neural networks, integrate physical laws into the learning process, and reduce the need for extensive training datasets. The paper "Improved Physics-informed neural networks loss function regularization with a variance-based term" (2412.13993) introduces a novel regularization method that addresses one of the critical challenges faced by PINNs: localized high-error regions that can significantly degrade overall prediction accuracy.

Background and Problem Statement

The standard loss function in machine learning, mean squared error (MSE), is effective at reducing the average error. However, it often fails to suppress localized outliers because it implicitly assumes uniform variance across the error distribution. This is particularly problematic for PINNs when the solution has regions with sharp gradients or discontinuities. Such localized discrepancies call for a loss that promotes a more uniform error distribution and, in turn, more accurate predictions.

Methodology

To overcome the limitations of traditional loss functions, the authors propose a loss function that combines the mean and the standard deviation of the error metric. The combined loss aims to reduce both the mean and the variability of the errors:

$\mathcal{L} = \alpha \frac{1}{N}\sum_{i=1}^N e_i + (1-\alpha)\sqrt{\frac{\sum_{i=1}^N (e_i - \bar{e})^2}{N}}$

where $e_i$ denotes the error at point $i$, $\bar{e}$ is the average error, $N$ is the number of training points, and $\alpha$ is a hyperparameter moderating the trade-off between mean and variance in the loss function.
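To make the formula concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the authors' implementation: the function name `variance_regularized_loss` is hypothetical, the errors are assumed to be non-negative pointwise values (for example squared residuals) that have already been computed, and the two error profiles are invented for the comparison. A real PINN would evaluate the same expression inside an automatic-differentiation framework.

```python
import math


def variance_regularized_loss(errors, alpha):
    """Return alpha * mean(e) + (1 - alpha) * std(e) for pointwise errors e.

    `errors` is assumed to hold non-negative pointwise errors, such as
    squared residuals at the training points (an assumption of this sketch).
    """
    n = len(errors)
    mean = sum(errors) / n
    # Population standard deviation: divide by N, matching the formula.
    std = math.sqrt(sum((e - mean) ** 2 for e in errors) / n)
    return alpha * mean + (1.0 - alpha) * std


# Two hypothetical error profiles with the same mean (0.1).
uniform_errors = [0.1, 0.1, 0.1, 0.1]    # error spread evenly
localized_errors = [0.0, 0.0, 0.0, 0.4]  # error concentrated at one point

for alpha in (1.0, 0.8):
    print(
        alpha,
        round(variance_regularized_loss(uniform_errors, alpha), 4),
        round(variance_regularized_loss(localized_errors, alpha), 4),
    )
```

With $\alpha = 1$ the loss reduces to the mean error, so both profiles score the same and the localized spike goes unpenalized. With $\alpha = 0.8$ the standard-deviation term raises the loss of the localized profile (about 0.115 versus about 0.08), which is the behavior the variance-based term is meant to encourage.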

Numerical Examples and Results

The paper evaluates the proposed variance-based loss function across multiple PDE problems of varying complexity, including:

- 1D Poisson Equation:

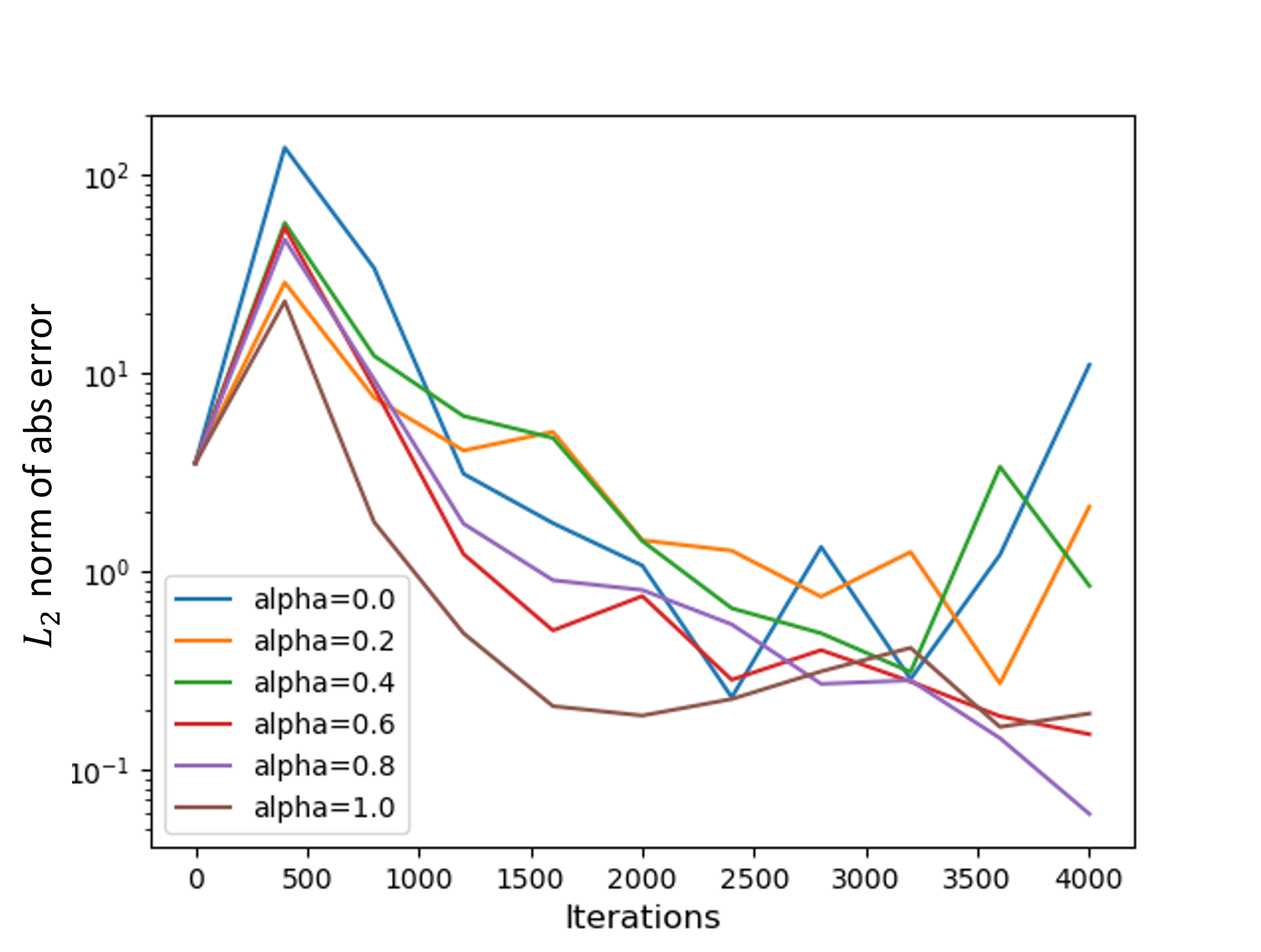

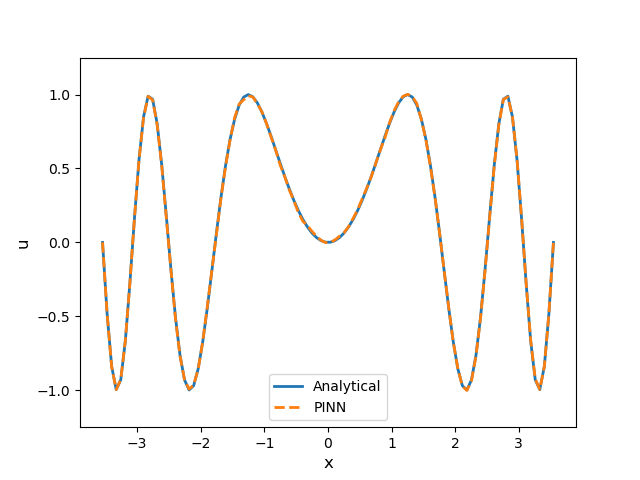

- The addition of error variance improved accuracy, especially in scenarios where the solution is more complex.

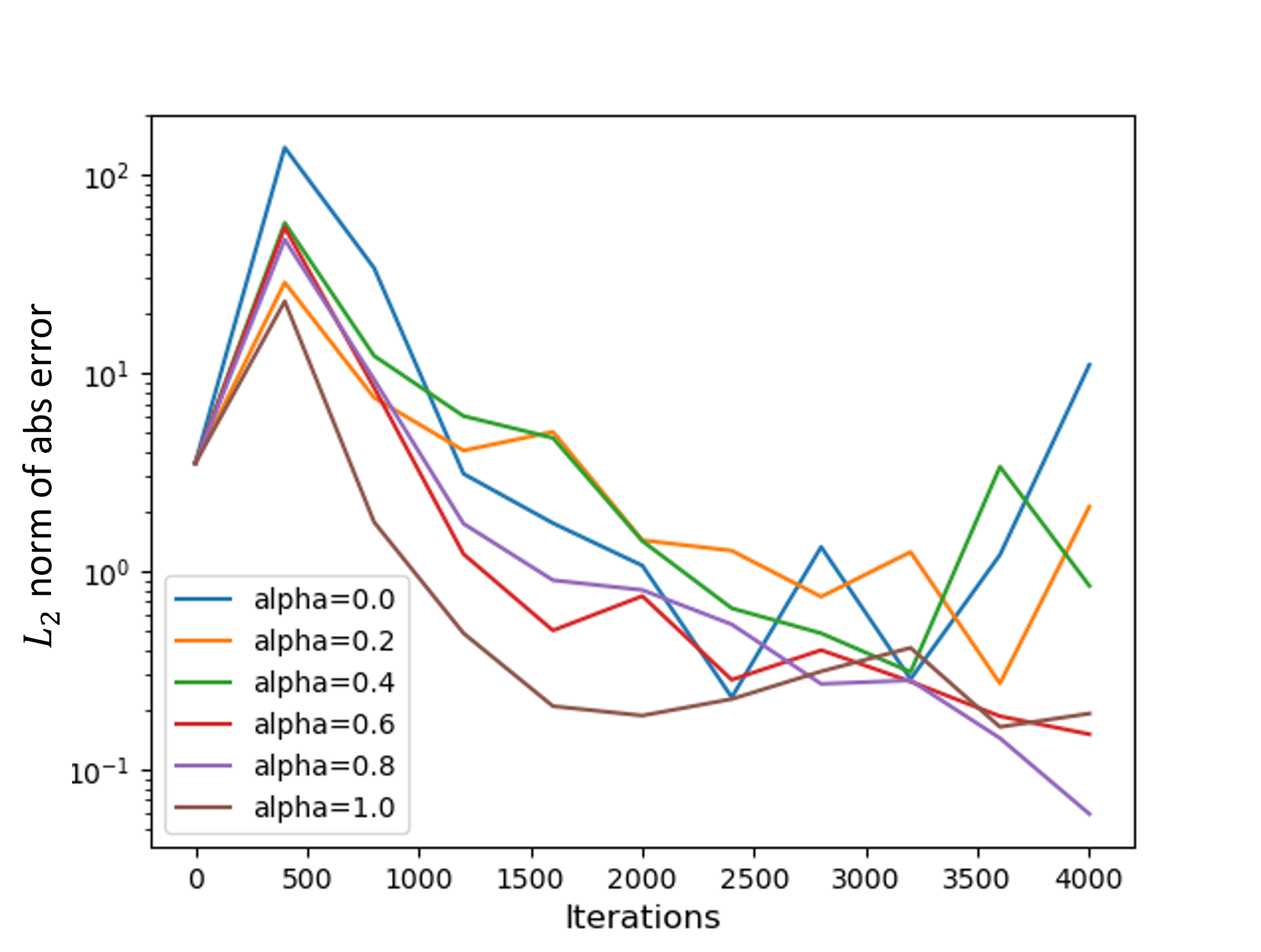

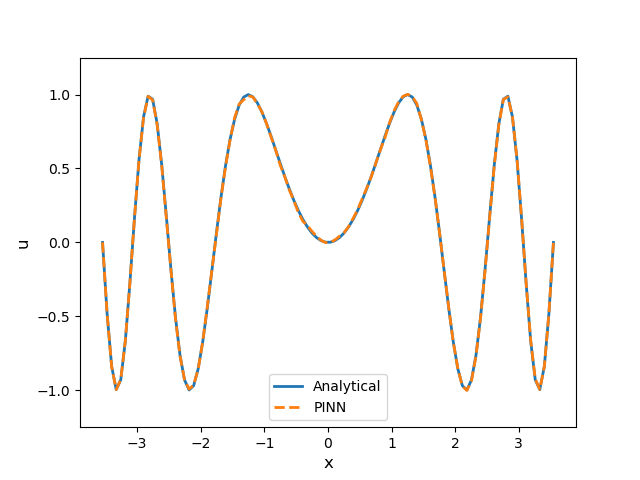

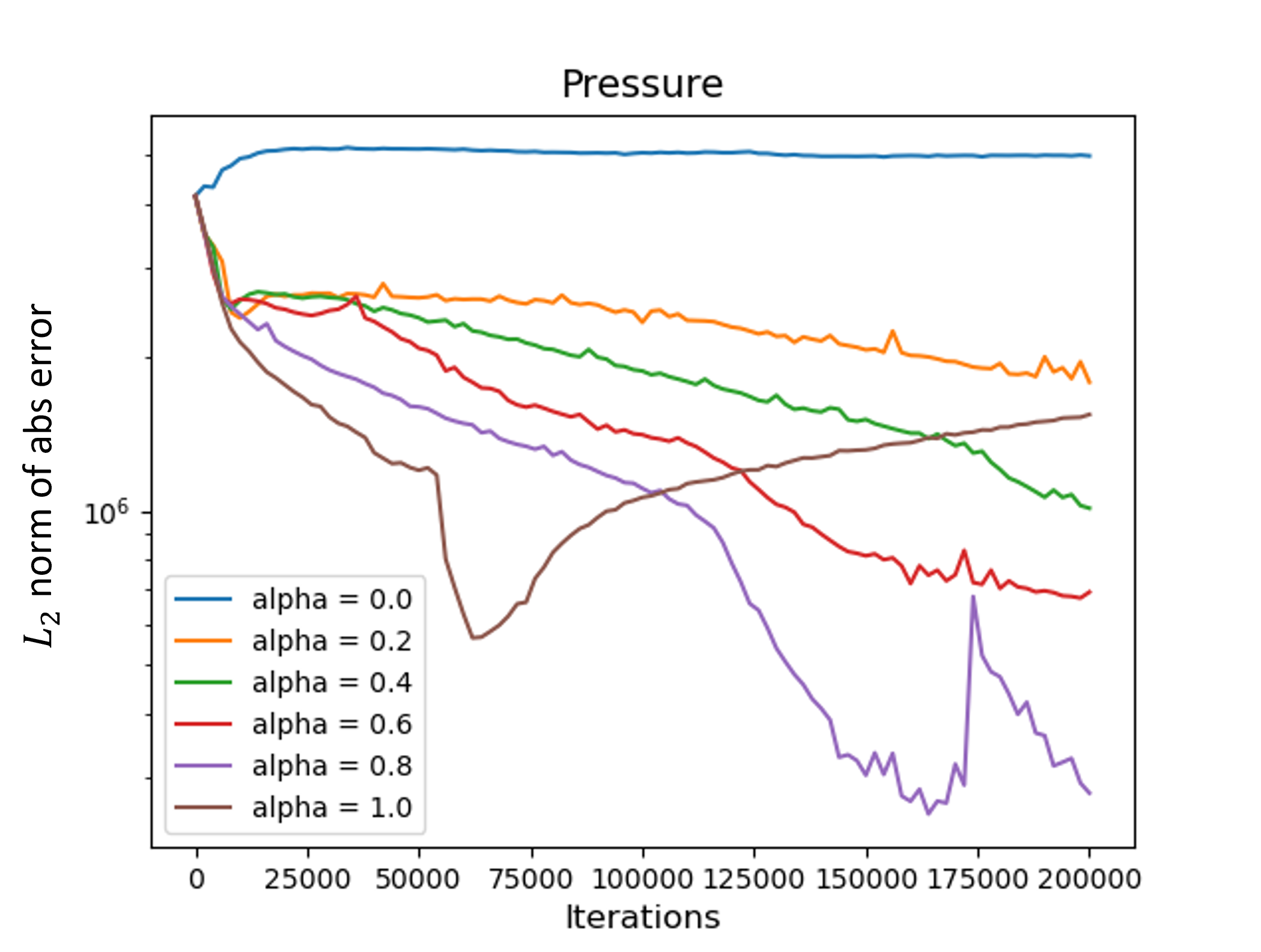

Figure 1: On the left, the L2 norm of the absolute error vs. the number of iterations for different values of alpha for the 1D Poisson example. On the right, a plot of the analytical solution along with the PINN solution for the best alpha (0.8).

- Unsteady Burgers' Equation:

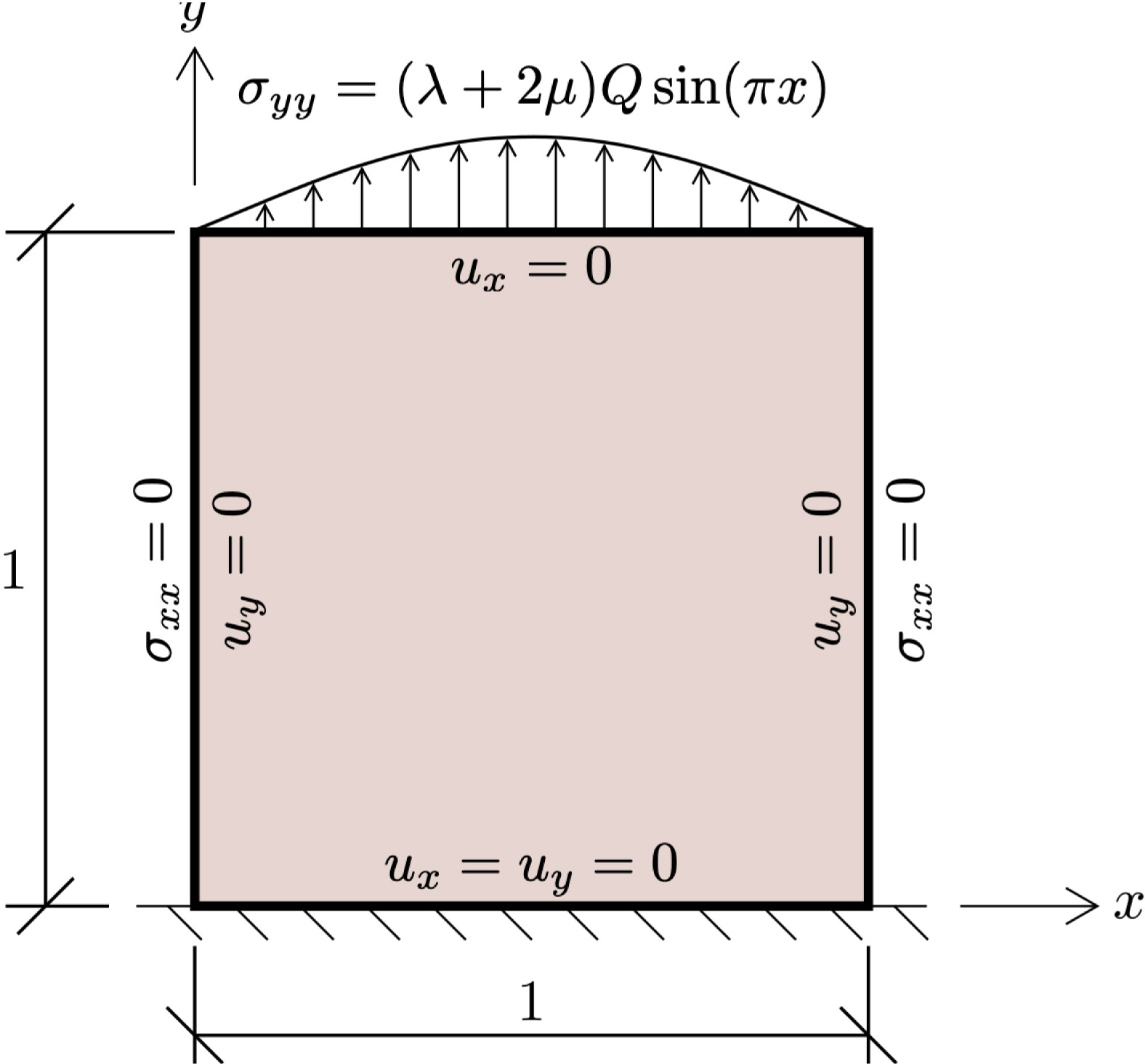

- 2D Linear Elasticity Example:

- 2D Steady Navier-Stokes Equations:

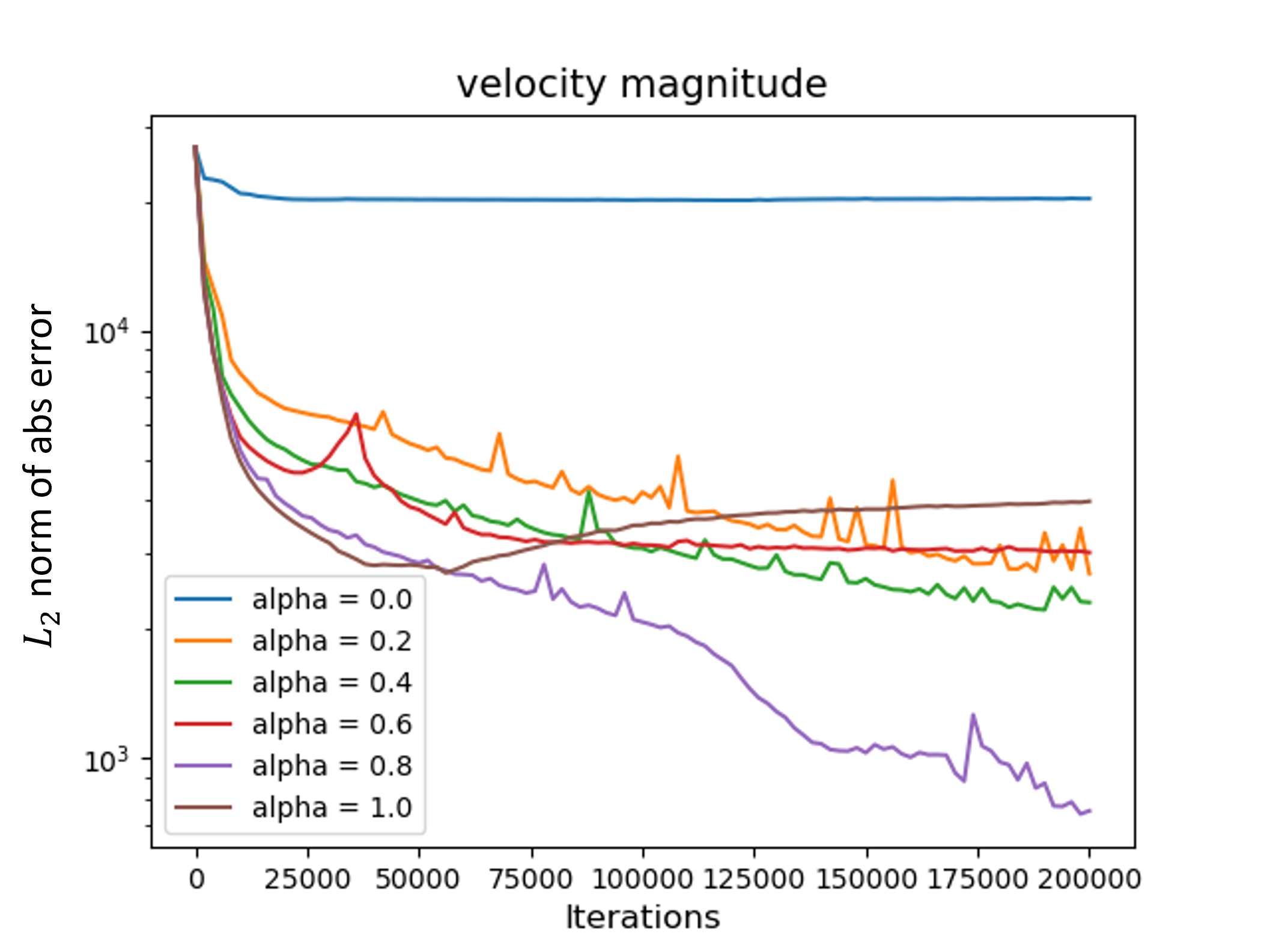

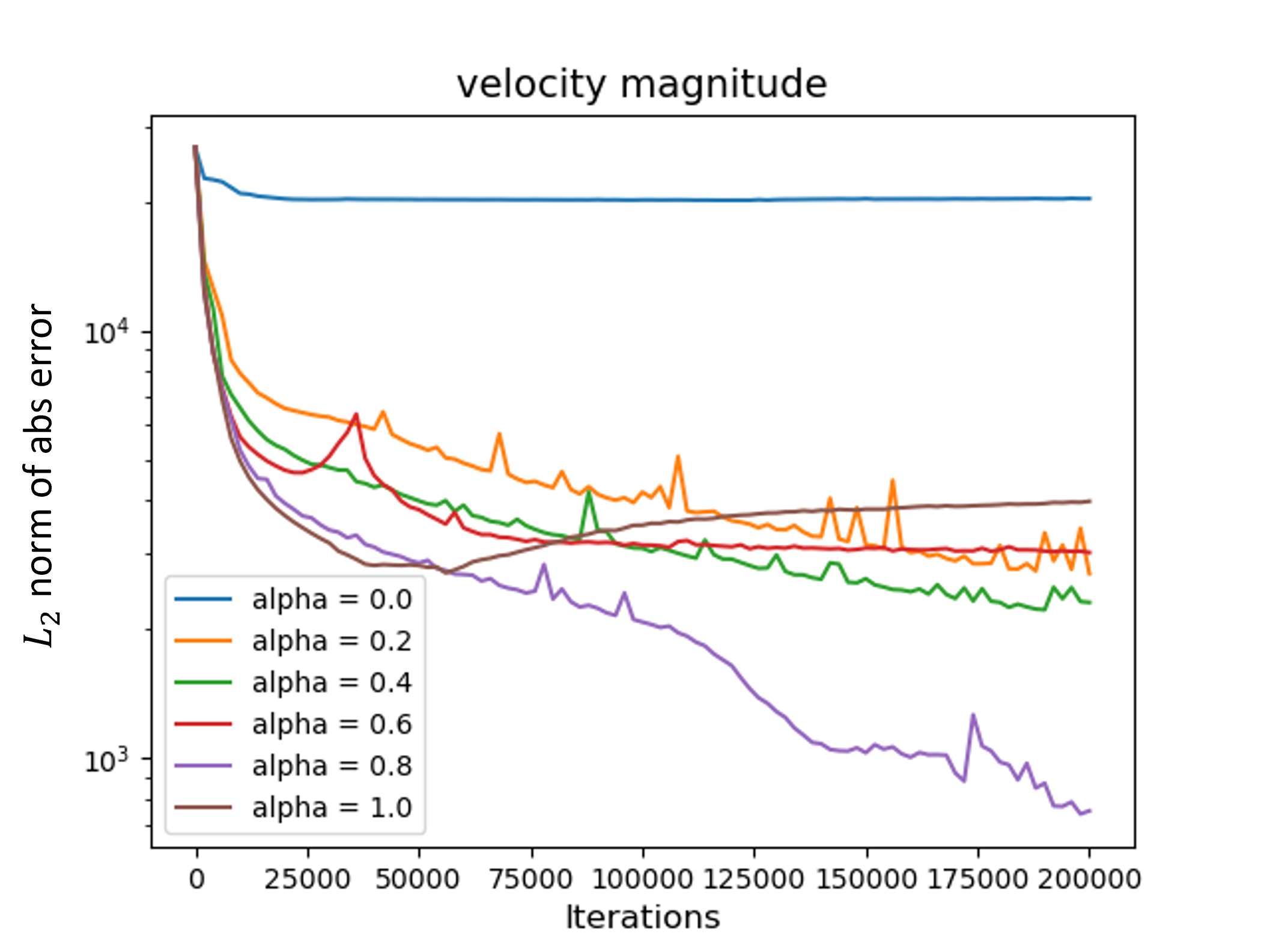

- The variance-based term enabled improved velocity field prediction with a reduction in maximum error compared to MSE.

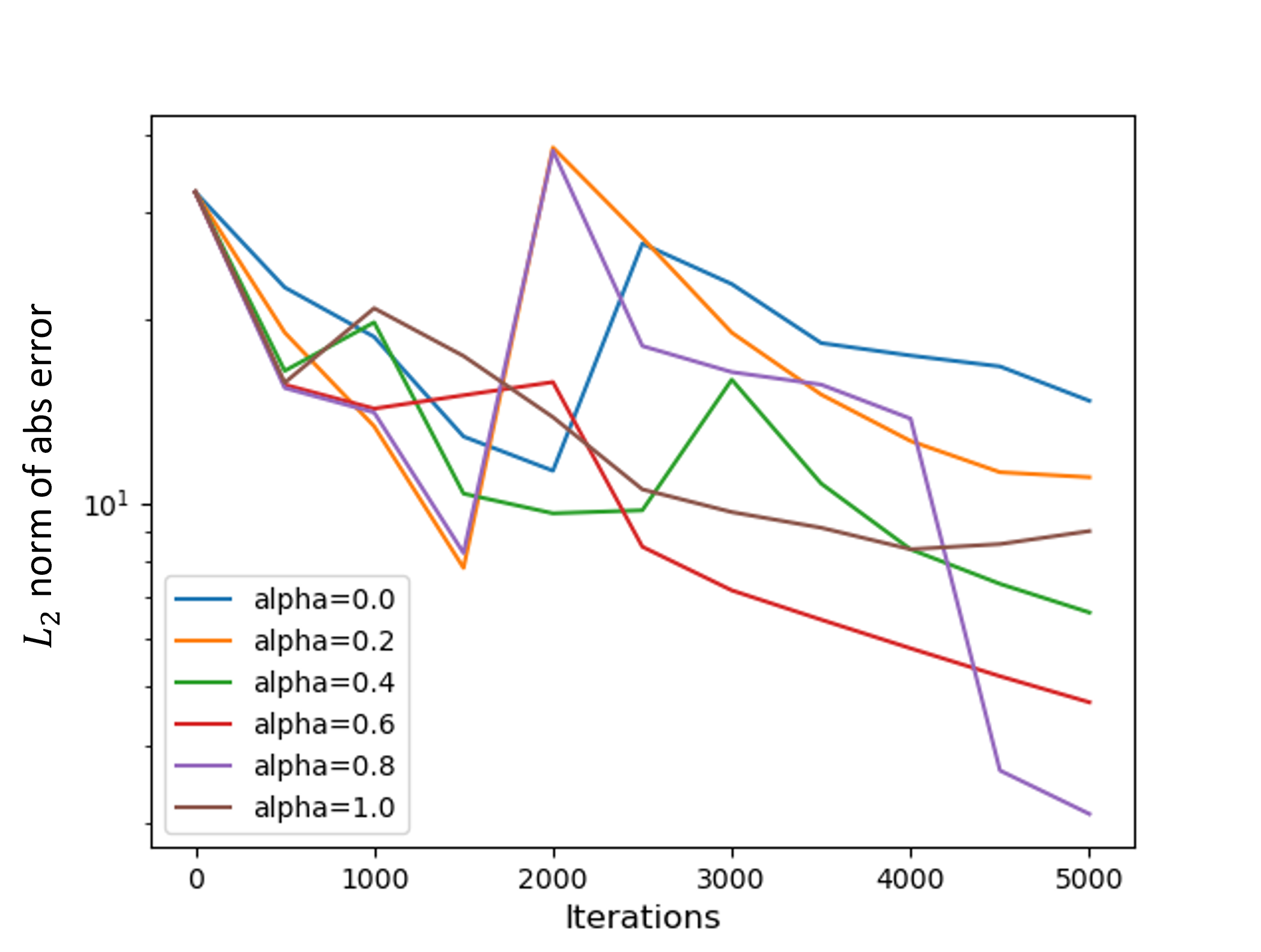

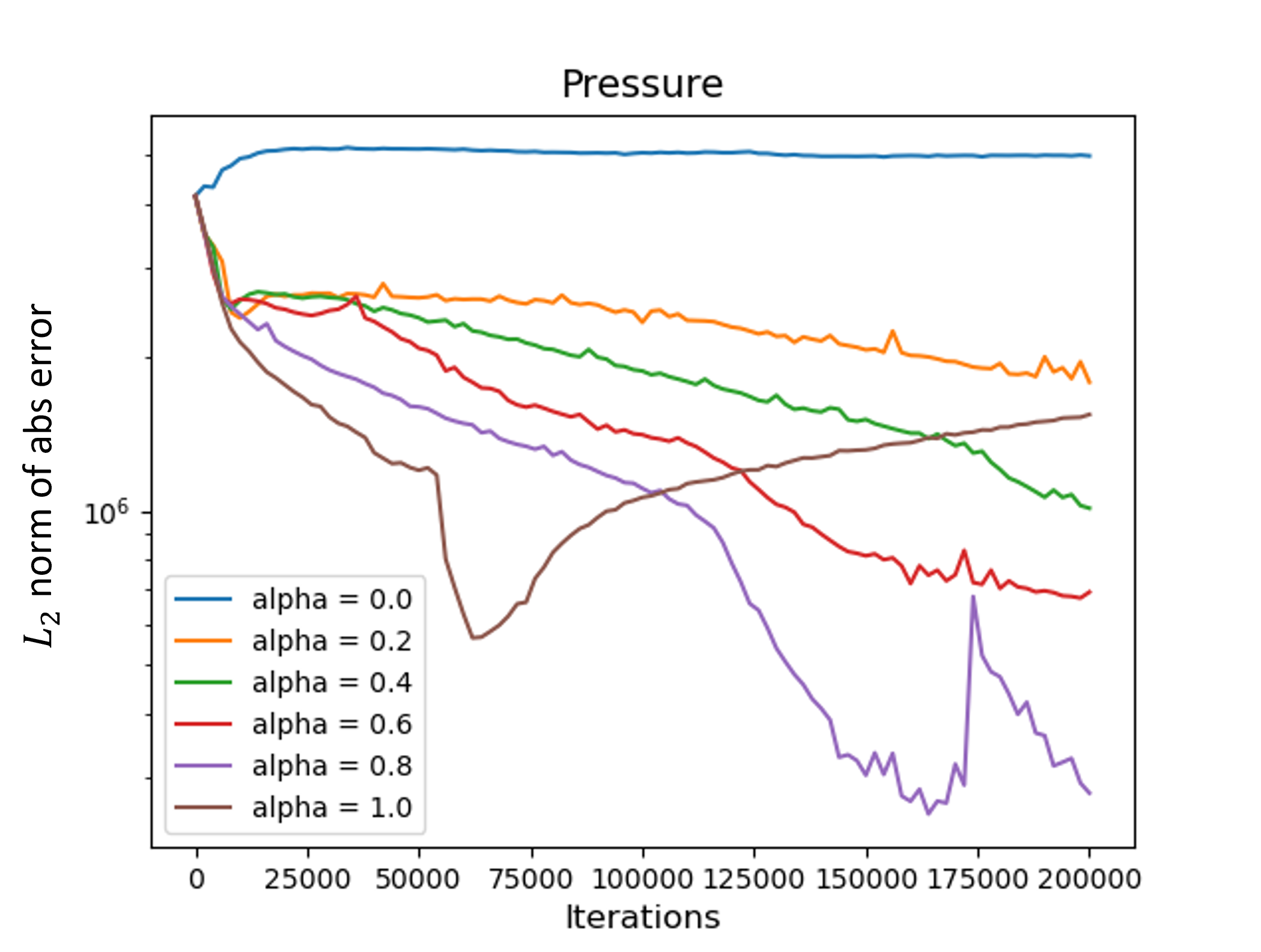

Figure 4: On the left, the L2 norm of the absolute error of the pressure vs. the number of iterations for different values of alpha for the 2D Navier-Stokes example. On the right, the L2 norm of the absolute error of velocity magnitude vs. the number of iterations for different values of alpha.

Implications and Future Directions

Adding a variance-based term to the PINN loss function considerably reduces the impact of localized errors without substantial computational overhead, enabling more precise modeling of complex systems governed by PDEs. This enhancement is relevant to PINN applications across various engineering fields, including fluid mechanics, solid mechanics, and beyond.

Future research may focus on a theoretical assessment of the optimal value of α and on extending the methodology to more sophisticated optimization algorithms, such as BFGS, alongside typical methods like Adam. Exploring advanced neural architectures that exploit the variance-based loss for high-dimensional PDE problems could further broaden the applicability and effectiveness of PINNs.

Conclusion

The variance-based loss function is a targeted improvement to the regularization of PINNs: it addresses localized high-error regions and improves prediction accuracy. These findings indicate that the approach is a promising path toward efficient and accurate neural-network solvers for the PDEs that govern complex physical systems.