- The paper introduces a metric based on causal optimal transport that effectively captures noisy, dynamic neural trajectories.

- It demonstrates the method by distinguishing preparatory versus movement-related neural dynamics with improved sensitivity.

- Applied to both motor systems and diffusion models, the metric reveals nuanced differences in statistical and temporal neural patterns.

Summary of "Comparing Noisy Neural Population Dynamics Using Optimal Transport Distances"

Introduction to Neural Representation Comparisons

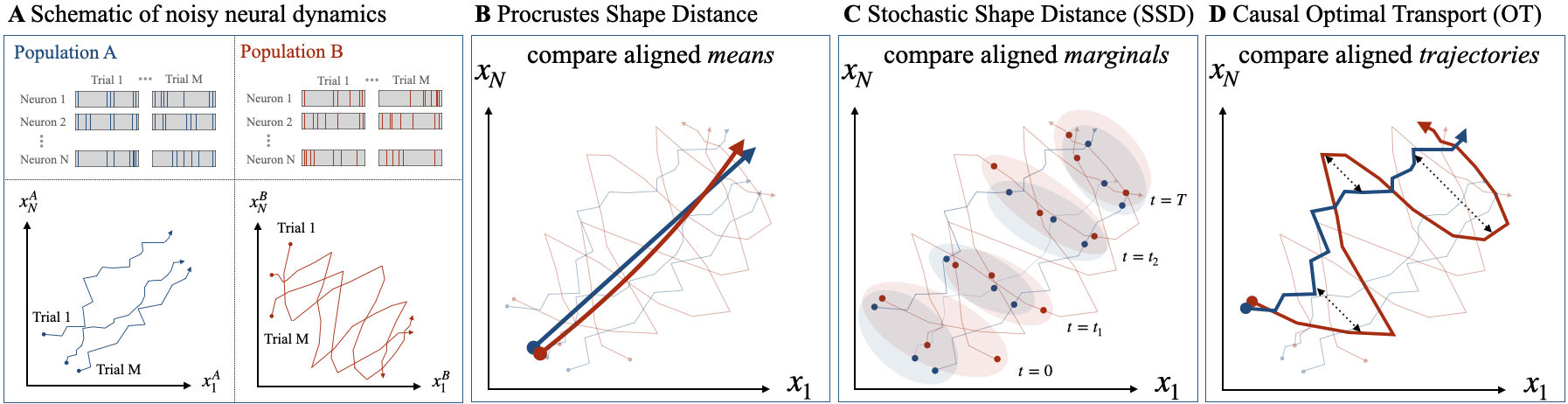

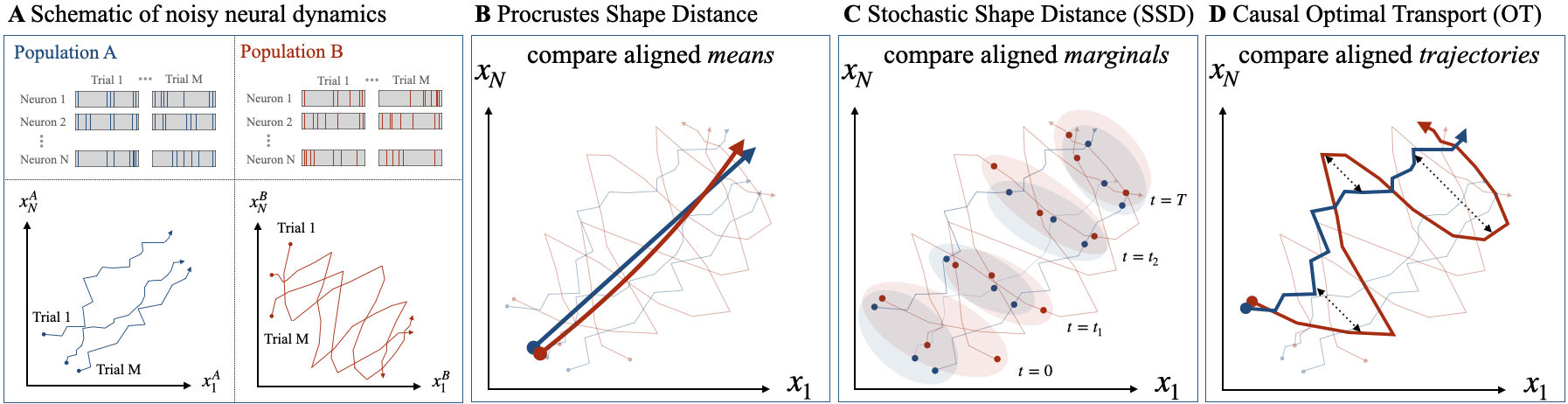

The paper presents an approach for comparing high-dimensional neural representations across biological and artificial neural systems that explicitly accounts for noisy and dynamic responses. Traditional methods treat responses as deterministic and static, even though noise and temporal dynamics can substantially influence a system's computational capabilities. To address this limitation, the authors propose a metric derived from optimal transport distances between Gaussian processes that captures both aspects of neural population dynamics.

Limitations of Existing Metrics

Current approaches such as RSA, CKA, CCA, and Procrustes shape distance largely assume deterministic and static neural responses, an assumption that real neural data often violate. In biological systems, such as sensory neurons, responses to identical stimuli vary from trial to trial and unfold over time. The paper argues that existing metrics fail to account for recurrent dynamics and noise correlations, and advocates for methods that incorporate both.
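To make this limitation concrete, here is a minimal sketch (not taken from the paper) of a Procrustes-style distance computed on trial-averaged responses only. The two toy systems, their sizes, and the names `mean_a`, `mean_b`, `cov_a`, and `cov_b` are illustrative assumptions. The systems share the same mean geometry up to a rotation, so the distance is essentially zero, even though their noise correlations differ strongly.

```python
import numpy as np

def procrustes_distance(X, Y):
    """Min over orthogonal Q of ||X - Y Q||_F for (conditions x neurons) mean responses."""
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    # The optimal rotation contributes the nuclear norm of Y^T X to the cross term.
    singular_values = np.linalg.svd(Y.T @ X, compute_uv=False)
    d2 = np.sum(X**2) + np.sum(Y**2) - 2.0 * singular_values.sum()
    return float(np.sqrt(max(d2, 0.0)))

rng = np.random.default_rng(0)
n_conditions, n_neurons = 8, 5  # hypothetical sizes

# Two toy systems whose mean responses differ only by a rotation of neural space.
mean_a = rng.normal(size=(n_conditions, n_neurons))
Q, _ = np.linalg.qr(rng.normal(size=(n_neurons, n_neurons)))
mean_b = mean_a @ Q

# Noise covariances with identical per-neuron variance but different correlations.
cov_a = np.eye(n_neurons)                                             # independent noise
cov_b = 0.2 * np.eye(n_neurons) + 0.8 * np.ones((n_neurons, n_neurons))  # shared noise

mean_distance = procrustes_distance(mean_a, mean_b)
# Eigenvalue spectra are rotation invariant, so a gap here cannot be rotated away.
noise_gap = float(np.linalg.norm(np.linalg.eigvalsh(cov_a) - np.linalg.eigvalsh(cov_b)))

print(f"distance on mean responses: {mean_distance:.2e}")
print(f"gap between noise spectra:  {noise_gap:.3f}")
```

A metric that only sees `mean_a` and `mean_b` would treat these systems as equivalent, which is the kind of blind spot the paper attributes to deterministic comparisons.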

Proposed Metric: Causal Optimal Transport

The authors introduce a metric leveraging causal optimal transport (OT) distances, designed to compare entire trajectories rather than isolated statistical moments. The causality condition embedded within this metric is crucial for maintaining temporal consistency, allowing the metric to differentiate systems with complex statistics evolving across time. This is particularly valuable in scenarios where neural trajectories are spatially proximate yet vary significantly in informational content across different temporal stages.
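The effect of a causality constraint can be illustrated with a small numerical sketch. It is not the paper's metric: it assumes scalar-valued, discrete-time Gaussian processes with AR(1)-style covariances, and it evaluates the cost of one particular causal coupling (both processes driven by the same innovations through their Cholesky factors), which is only an upper bound on a causal OT distance. The classical 2-Wasserstein distance between the same Gaussians, which ignores temporal order, is shown for comparison. All function and variable names are hypothetical.

```python
import numpy as np

def ar1_covariance(rho, n_steps):
    """Covariance rho^|s-t| of a unit-variance AR(1)-style process."""
    idx = np.arange(n_steps)
    return rho ** np.abs(idx[:, None] - idx[None, :])

def sqrtm_psd(S):
    """Symmetric square root of a positive semidefinite matrix."""
    w, V = np.linalg.eigh(S)
    return (V * np.sqrt(np.clip(w, 0.0, None))) @ V.T

def w2_squared(m_a, cov_a, m_b, cov_b):
    """Classical squared 2-Wasserstein distance between two Gaussians (no causality)."""
    root_a = sqrtm_psd(cov_a)
    cross = sqrtm_psd(root_a @ cov_b @ root_a)
    return float(np.sum((m_a - m_b) ** 2)
                 + np.trace(cov_a) + np.trace(cov_b) - 2.0 * np.trace(cross))

def shared_innovation_cost(m_a, cov_a, m_b, cov_b):
    """Cost of the causal coupling X = m_a + L_a e, Y = m_b + L_b e with shared noise e."""
    L_a = np.linalg.cholesky(cov_a)  # lower triangular: X_t depends on e_1..e_t only
    L_b = np.linalg.cholesky(cov_b)
    return float(np.sum((m_a - m_b) ** 2) + np.sum((L_a - L_b) ** 2))

n_steps = 6
mean = np.zeros(n_steps)
cov_slow = ar1_covariance(0.9, n_steps)  # strongly correlated across time
cov_fast = ar1_covariance(0.1, n_steps)  # nearly independent across time

w2 = w2_squared(mean, cov_slow, mean, cov_fast)
causal_cost = shared_innovation_cost(mean, cov_slow, mean, cov_fast)

print("same marginal variances:", np.allclose(np.diag(cov_slow), np.diag(cov_fast)))
print(f"classical W2^2:            {w2:.4f}")
print(f"causal coupling cost (UB): {causal_cost:.4f}")
```

Both processes have identical means and identical variance at every time step, so any comparison of per-time-point marginals sees no difference. The two distances are nonzero because they depend on the full temporal covariance, and the causal coupling cost can never fall below the unconstrained value because it is the cost of one admissible coupling.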

Application in Motor and Diffusion Models

The paper applies the proposed metric to simplified models of motor system activity, demonstrating that it can distinguish preparatory neural dynamics from purely motor outputs. The metric is also used to compare latent diffusion models for text-to-image synthesis, where it accounts for the stochasticity of the generative process. Together, these applications show that the metric is useful for both biological and artificial neural systems.

Analysis of Experimental Results

The experiments reveal structured differences captured by the causal OT metric that are not apparent in comparisons based solely on mean trajectories or marginal covariances. For instance, neural systems with tuned preparatory and movement-related dynamics are distinguishable under the causal OT metric, whereas simpler metrics fail to reveal these distinctions. In diffusion models, the metric also identifies subtle differences in conditional latent modes, underscoring its sensitivity to both statistical and dynamic properties.

Figure 1: Metrics for comparing the shapes of noisy neural processes. A) Spike trains for two populations of neurons with noisy dynamic responses. Each trial can be represented as a trajectory in neural (firing rate) state space.

Implications and Future Directions

The proposed metric improves the analysis of neural dynamics by incorporating temporal structure and noise, making comparisons between systems more reliable. Potential future developments include extending the metric to non-Gaussian processes and developing estimation methods that need less data to recover trajectory statistics. The research provides a foundation for further work on neural computation across diverse systems.
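The data requirement can be made concrete with a rough sketch, under the assumption that trajectory statistics means the mean trajectory plus the full covariance across all time steps and neurons. The trial counts, dimensions, and simulated random-walk data below are hypothetical and only serve to show how quickly the number of covariance entries outgrows the number of trials.

```python
import numpy as np

rng = np.random.default_rng(0)
n_trials, n_steps, n_neurons = 20, 10, 4  # hypothetical recording sizes

# Simulated noisy trajectories: a random walk per neuron, one per trial.
trials = rng.normal(size=(n_trials, n_steps, n_neurons)).cumsum(axis=1)

# Flatten each trial so time and neuron indices form one long vector.
flat = trials.reshape(n_trials, n_steps * n_neurons)

mean_trajectory = flat.mean(axis=0).reshape(n_steps, n_neurons)
full_cov = np.cov(flat, rowvar=False)  # (T*N) x (T*N) sample covariance

dim = n_steps * n_neurons
n_free_params = dim * (dim + 1) // 2   # distinct entries of a symmetric matrix
rank = int(np.linalg.matrix_rank(full_cov))

print(f"covariance entries to estimate: {n_free_params}")
print(f"trials available:               {n_trials}")
print(f"rank of sample covariance:      {rank} of {dim}")
```

With fewer trials than dimensions, the sample covariance is rank deficient, so some form of regularization or structural assumption would be needed before it can be used reliably.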

Conclusion

In summary, the paper defines an approach to neural representation comparison based on causal optimal transport distances that account for stochastic and dynamic elements, addressing limitations of traditional metrics. The framework holds promise for advancing our understanding of complex neural systems in both computational neuroscience and artificial intelligence.