- The paper identifies that over-reliance on generative AI can diminish human critical thinking in complex knowledge work.

- It demonstrates a prototype that integrates reflective provocations within AI interfaces to stimulate rigorous evaluation.

- The study underscores the need to redesign AI tools so that they actively promote user engagement and prevent cognitive complacency.

Generative AI and Knowledge Work: Risks and Solutions

The paper "When Copilot Becomes Autopilot: Generative AI's Critical Risk to Knowledge Work and a Critical Solution" explores the far-reaching effects of generative AI in professional settings, focusing particularly on its impact on human critical thinking. It highlights a critical dimension overlooked in most AI discussions: the potential for AI to not just aid but also inadvertently degrade human cognitive capabilities if undue reliance is placed on its outputs. This paper argues for designing AI interfaces that encourage sustained critical engagement rather than passive consumption of AI-generated solutions.

Risks of Generative AI in Knowledge Work

Generative AI systems, such as advanced LLMs, carry inherent risks that extend beyond the commonly discussed problem of hallucinations, where AI models generate incorrect or misleading content. The paper argues that the gravest risk lies in the potential diminishment of human critical thinking abilities. As AI systems take on more complex tasks, there is a tendency for users to become passive recipients of AI-generated content, leading to a mechanized convergence in knowledge work where diversity of thought and critical assessment are bypassed.

This concern with cognitive engagement shifts the focus from ensuring the accuracy of AI output to fostering critical assessment skills among users. The shift is crucial because knowledge work often involves qualitative tasks that lack definitive solutions and demand a nuanced assessment that AI systems are not equipped to handle autonomously.

To mitigate these risks, the paper proposes designing AI systems that actively incorporate elements fostering critical thinking. The authors introduce a prototype system integrated into spreadsheet applications, which not only functions as a decision aid but also includes mechanisms to provoke critical assessment of AI suggestions. The prototype generates factors and corresponding provocations: critiques designed to stimulate deeper consideration and evaluation of AI-suggested solutions.

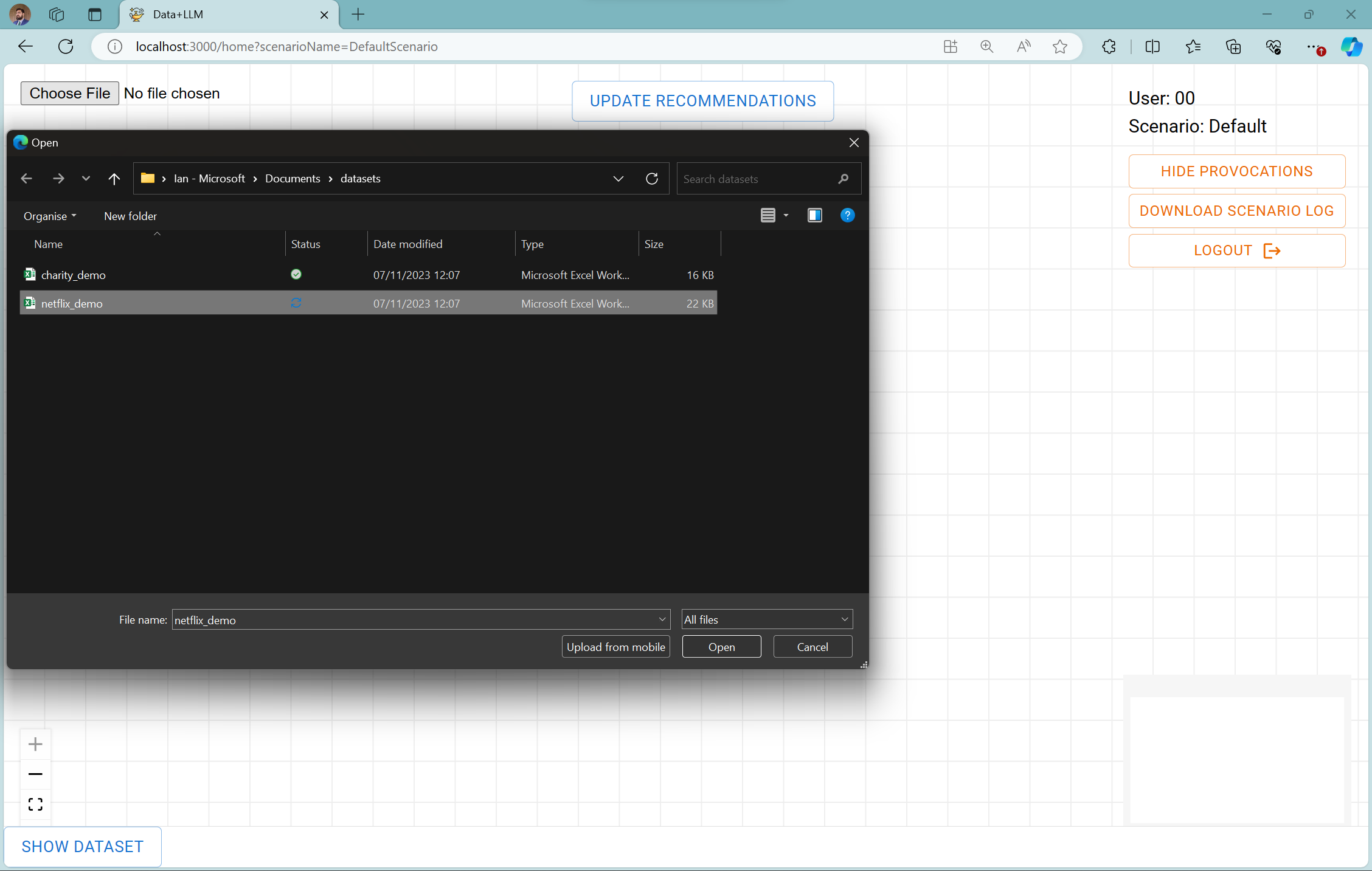

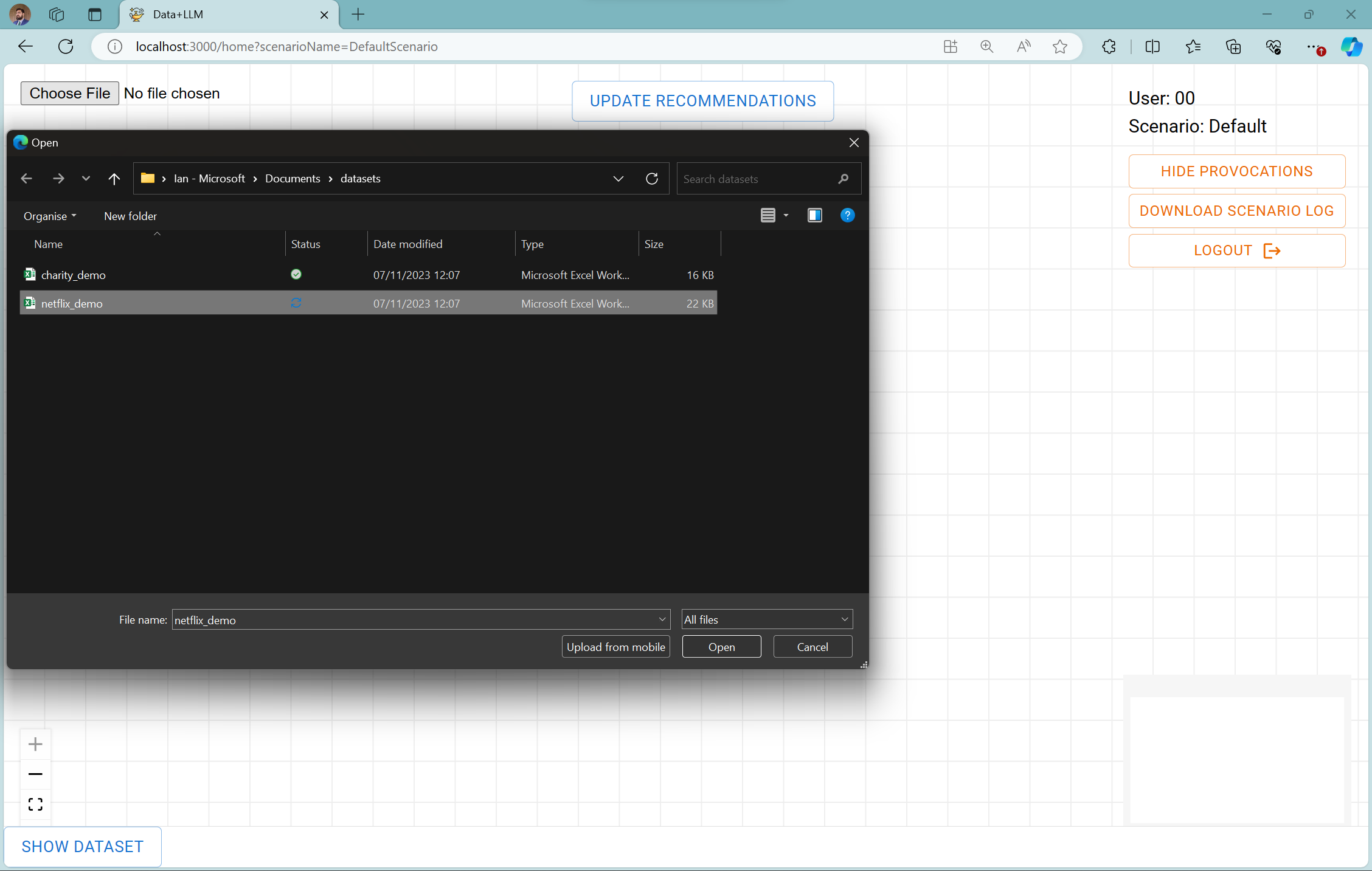

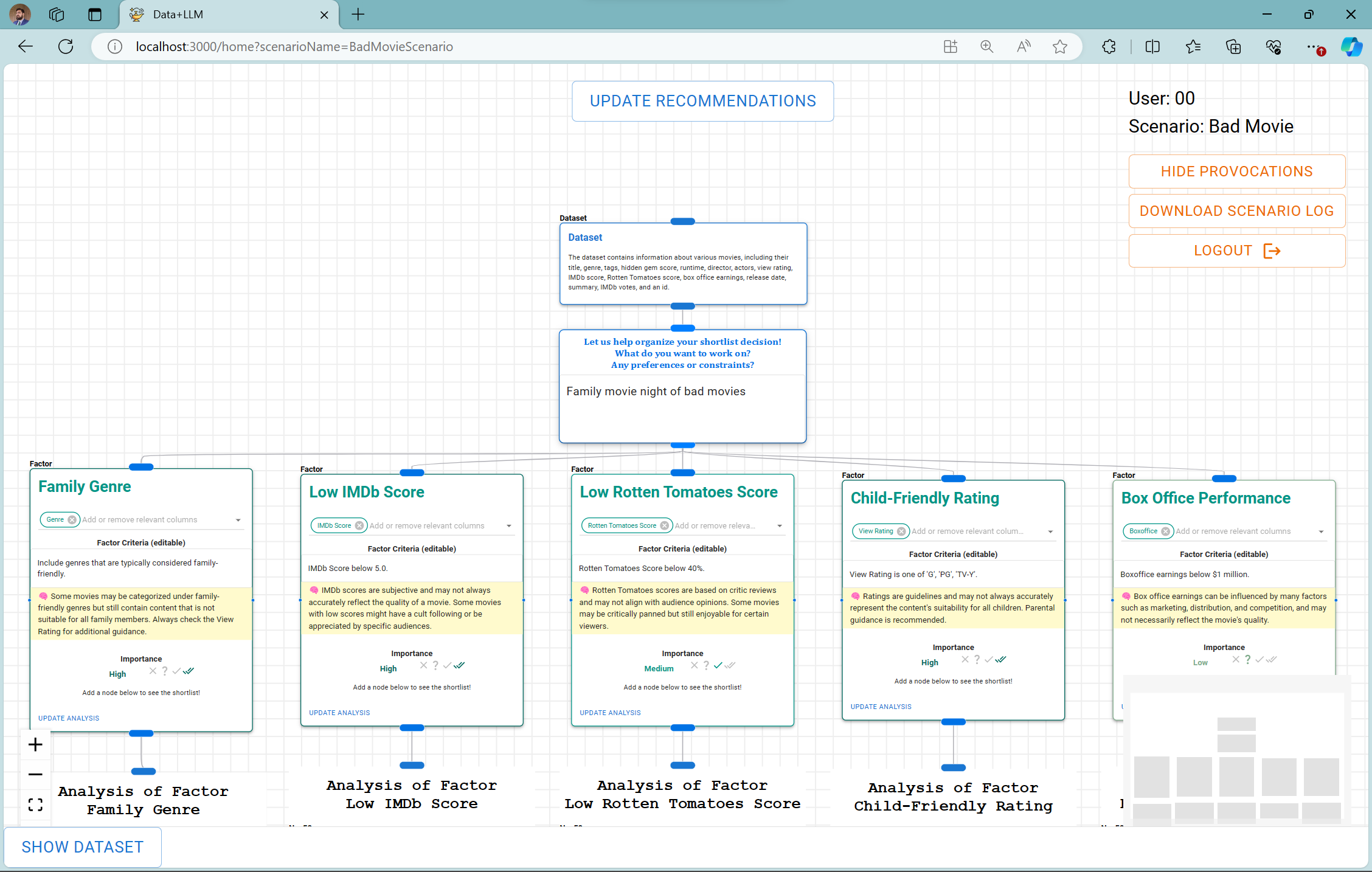

Figure 1: Dataset loading and initial prompt.

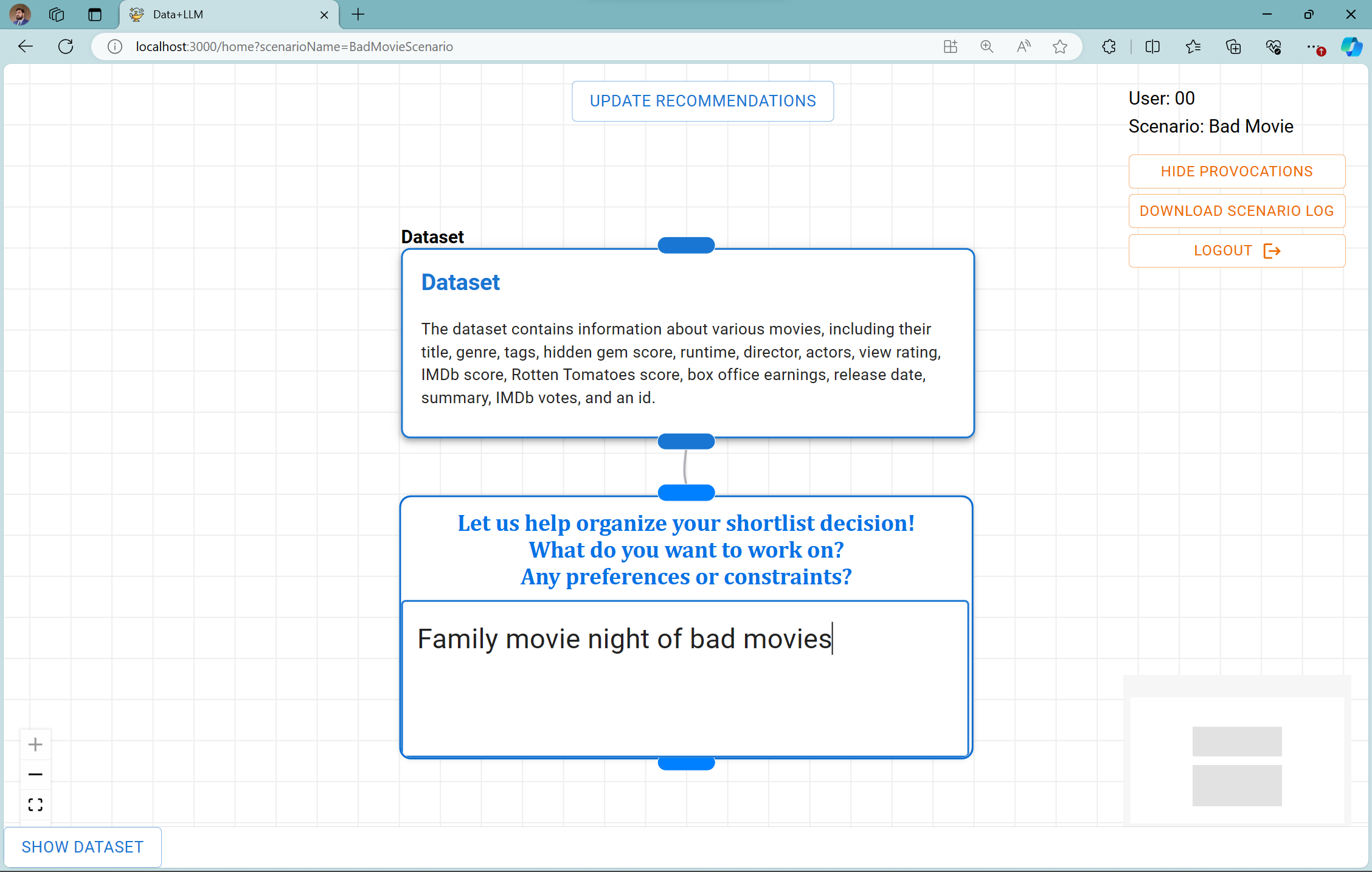

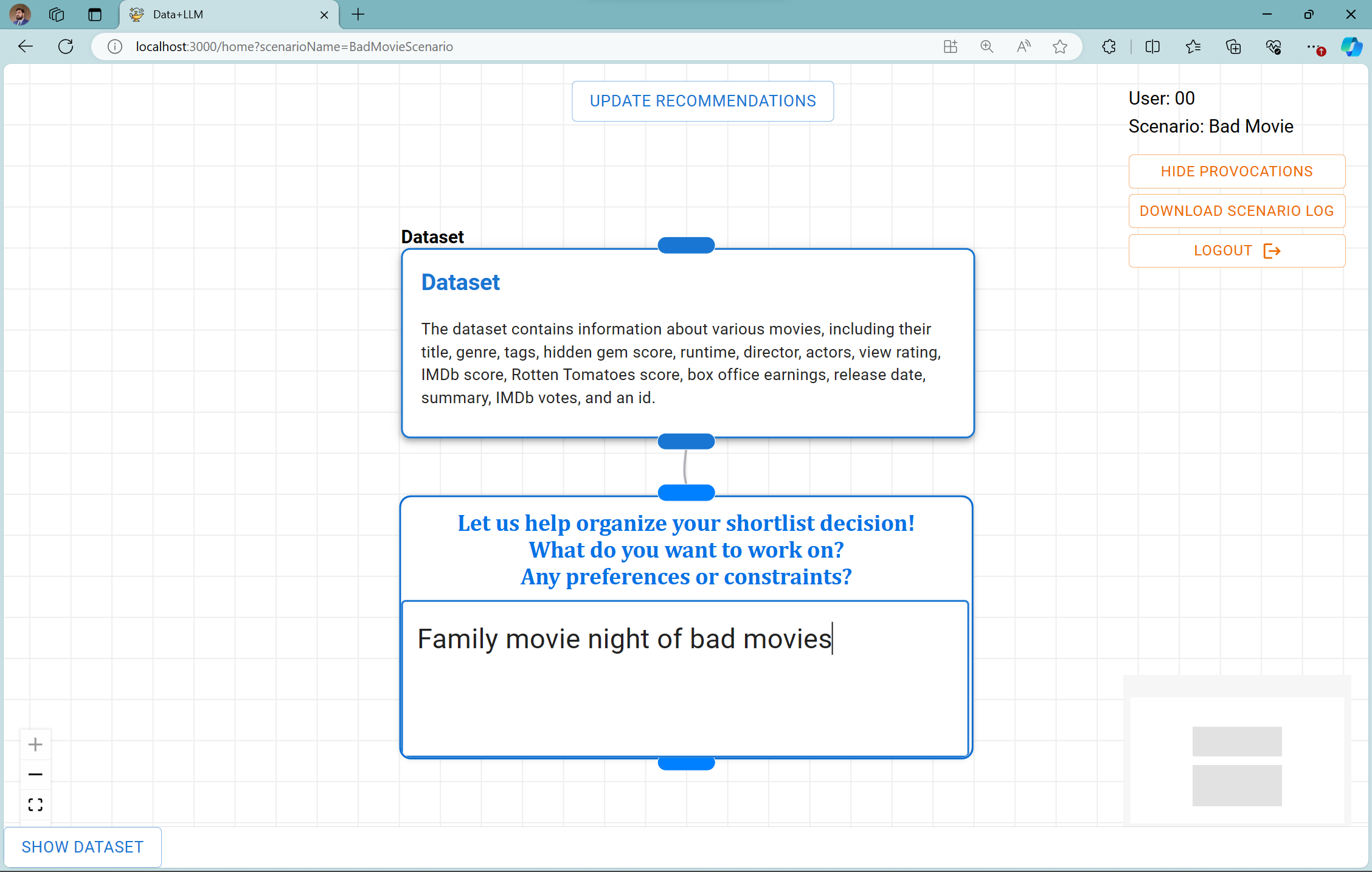

This approach is exemplified by its application in spreadsheet shortlisting tasks where users must select the most suitable items from a dataset based on AI-suggested criteria. Unlike standard AI recommendations, this system generates provocative statements highlighting potential shortcomings or alternative viewpoints, thus compelling users to reflect critically on the AI outputs.

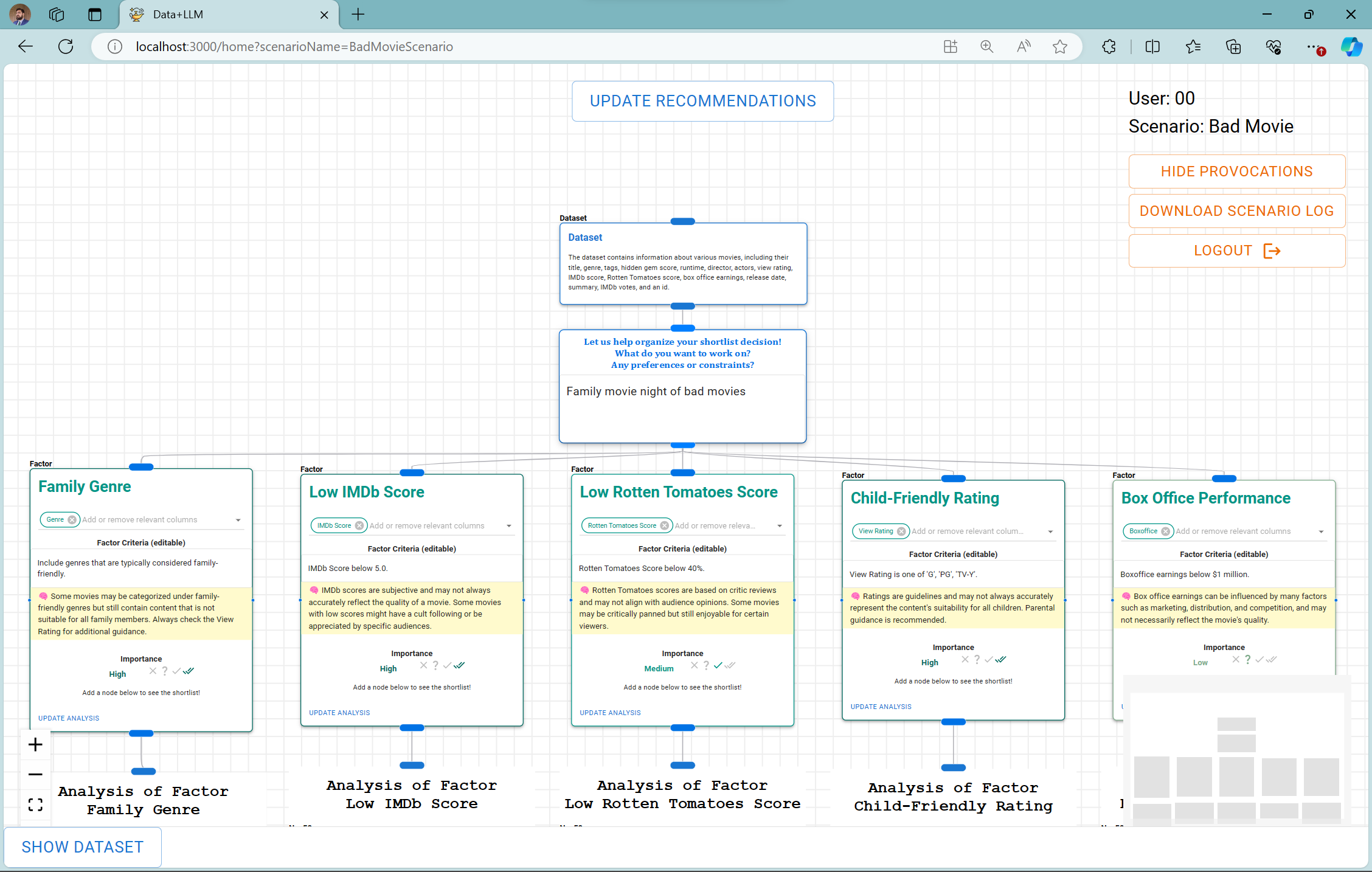

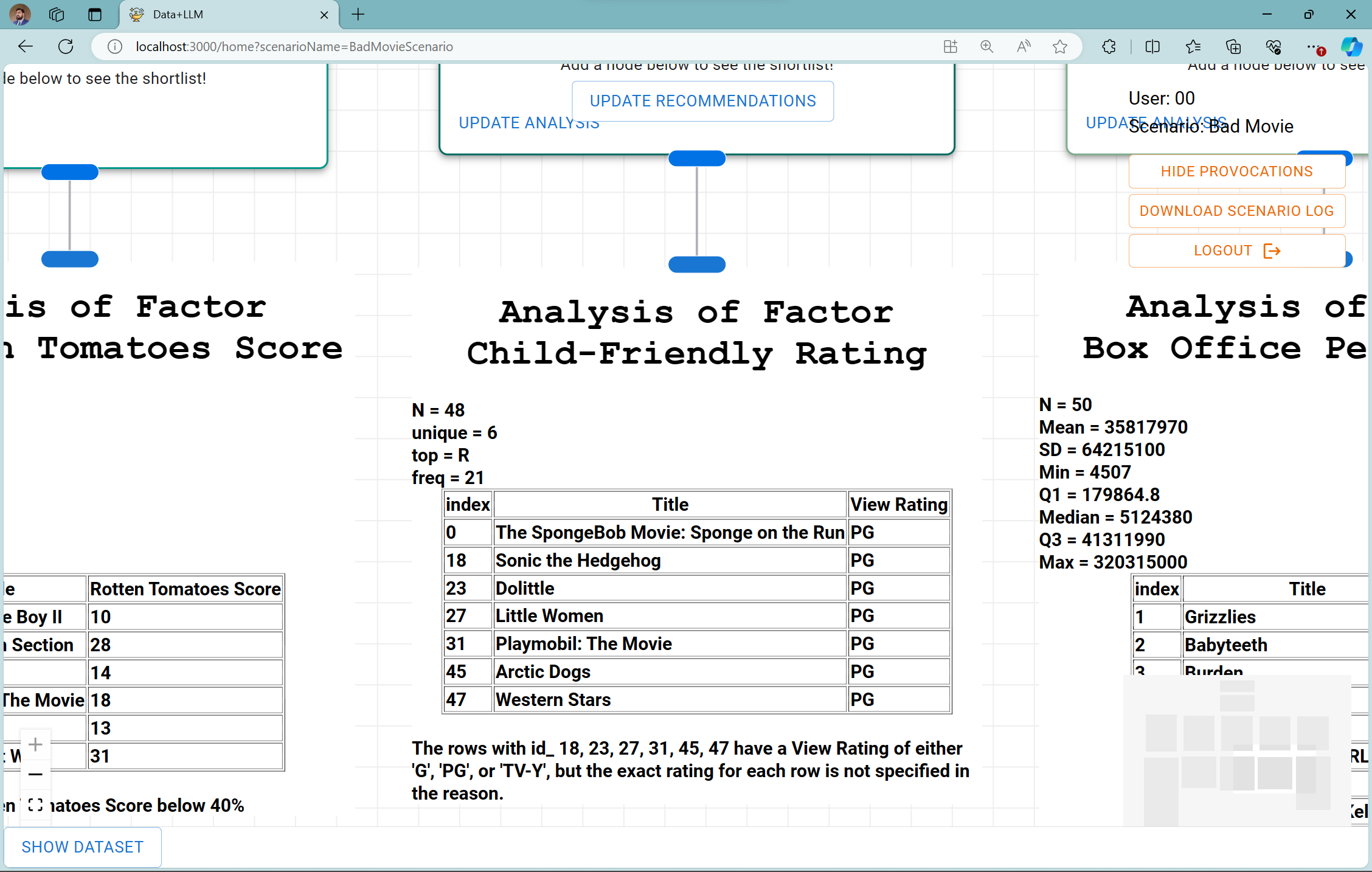

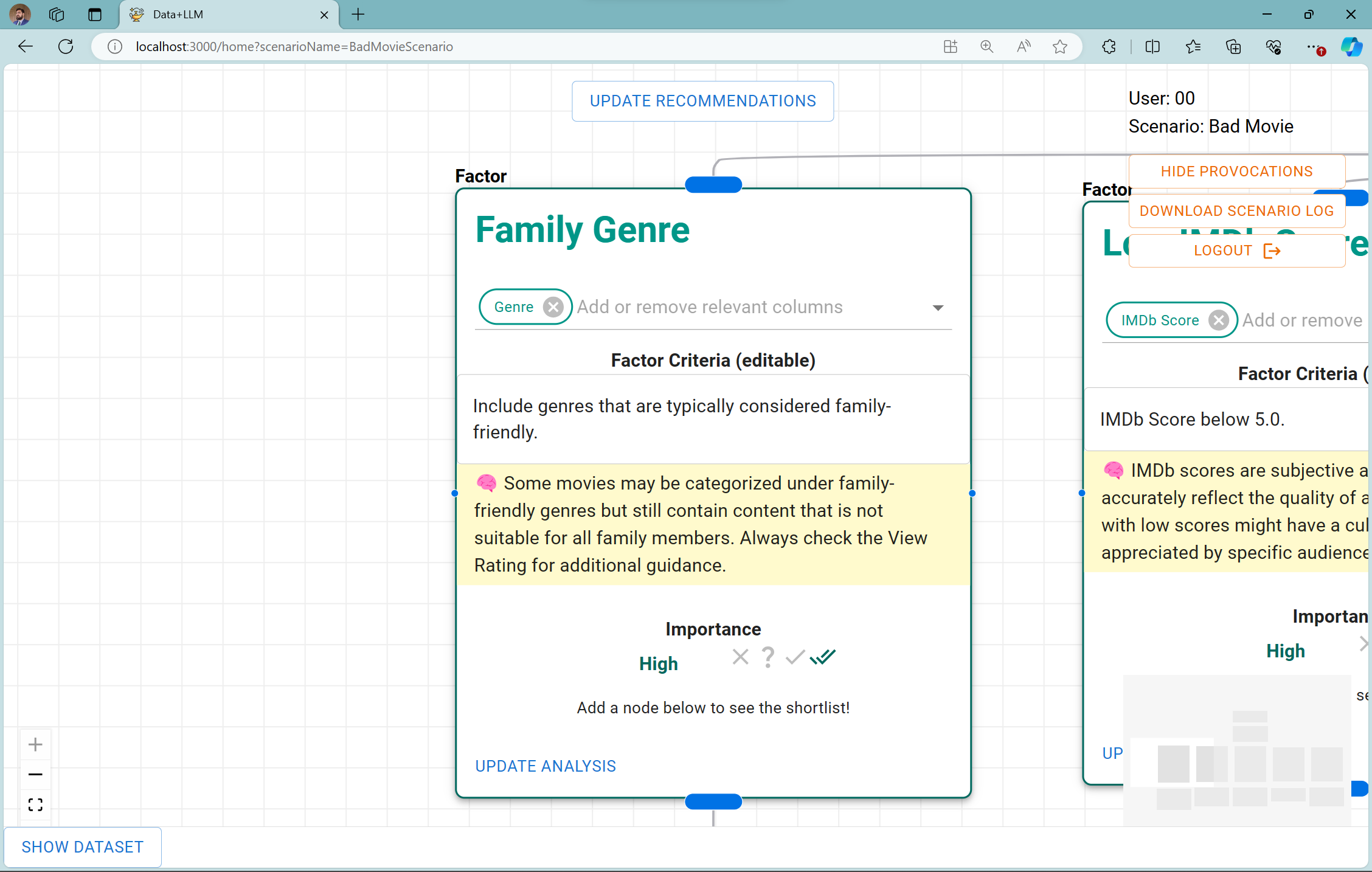

Figure 2: Factor cards

Prototype System and User Interaction

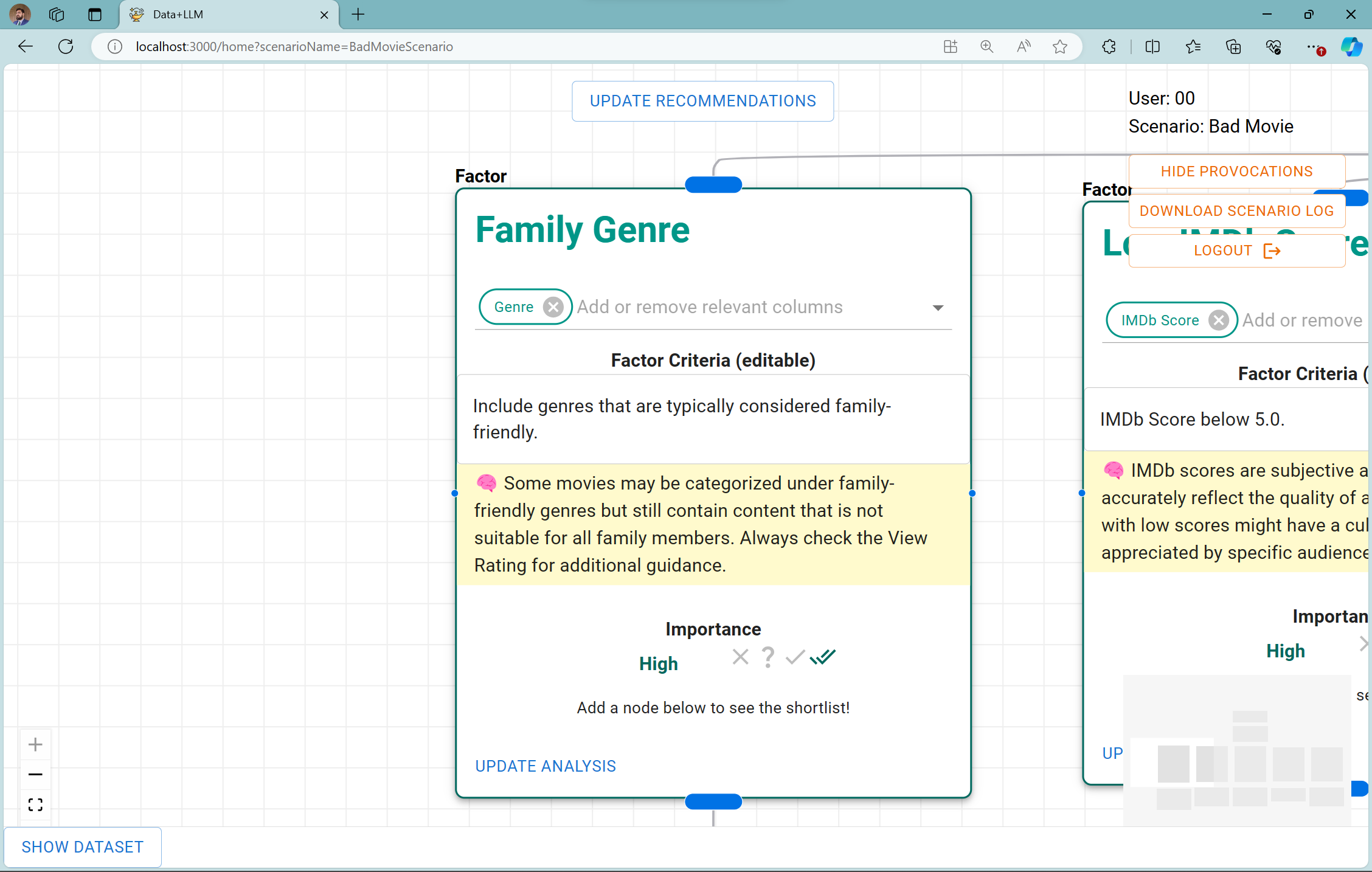

The paper details the prototype's functional mechanisms, where users interact with AI-generated factor cards (Figure 2), each accompanied by a provocation designed to induce critical thought (Figure 2, Figure 3). These provocations pose reflective questions or notes on the generated factors, alerting users to bias, data issues, or alternative approaches. Such interactions are aimed at reinforcing users' critical faculties, ensuring they remain engaged as active decision-makers.
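The pairing of a factor with its provocation can be pictured as a small data structure. The following is a minimal sketch, not the prototype's actual implementation: the `FactorCard` class, its field names, the `render_card` and `cards_missing_provocation` helpers, and the sample text are all hypothetical, and the AI generation step is replaced by hard-coded strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FactorCard:
    """Hypothetical model of one AI-suggested shortlisting factor."""
    name: str         # the suggested criterion, e.g. "Low price"
    rationale: str    # why the AI proposed this criterion
    provocation: str  # critique shown next to the factor


def render_card(card: FactorCard) -> str:
    """Format a card so the provocation is always shown with the factor."""
    return (
        f"Factor: {card.name}\n"
        f"Why: {card.rationale}\n"
        f"Provocation: {card.provocation}"
    )


def cards_missing_provocation(cards: list[FactorCard]) -> list[str]:
    """Return names of cards whose provocation is empty or whitespace."""
    return [c.name for c in cards if not c.provocation.strip()]


# Sample content is invented for illustration only.
card = FactorCard(
    name="Low price",
    rationale="Cheaper items fit a limited budget.",
    provocation="Does the price column include shipping or taxes?",
)
print(render_card(card))
```

Keeping the provocation as a required field of the card, rather than an optional add-on, mirrors the idea that the critique travels with the suggestion instead of being hidden behind an extra click.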

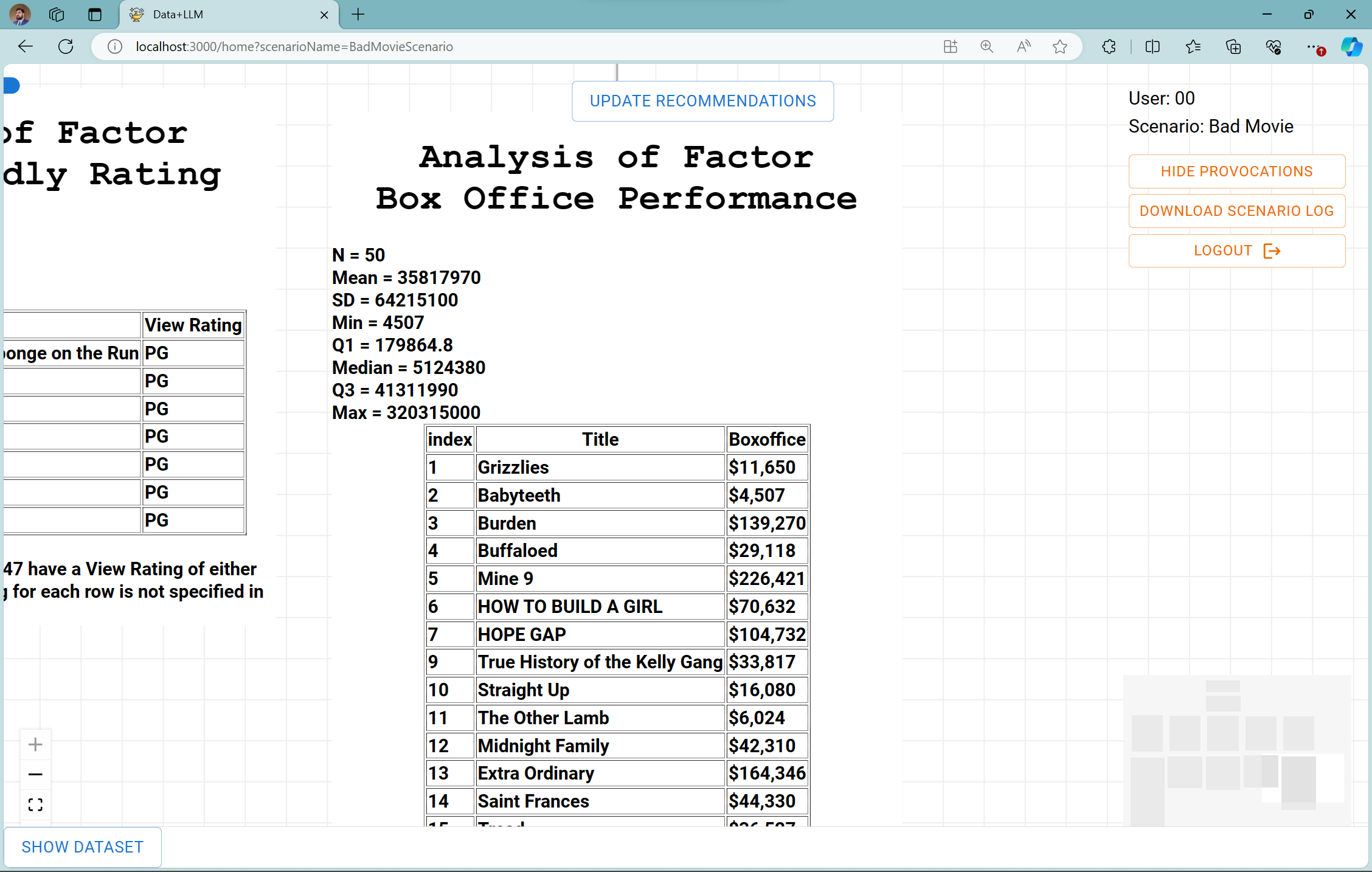

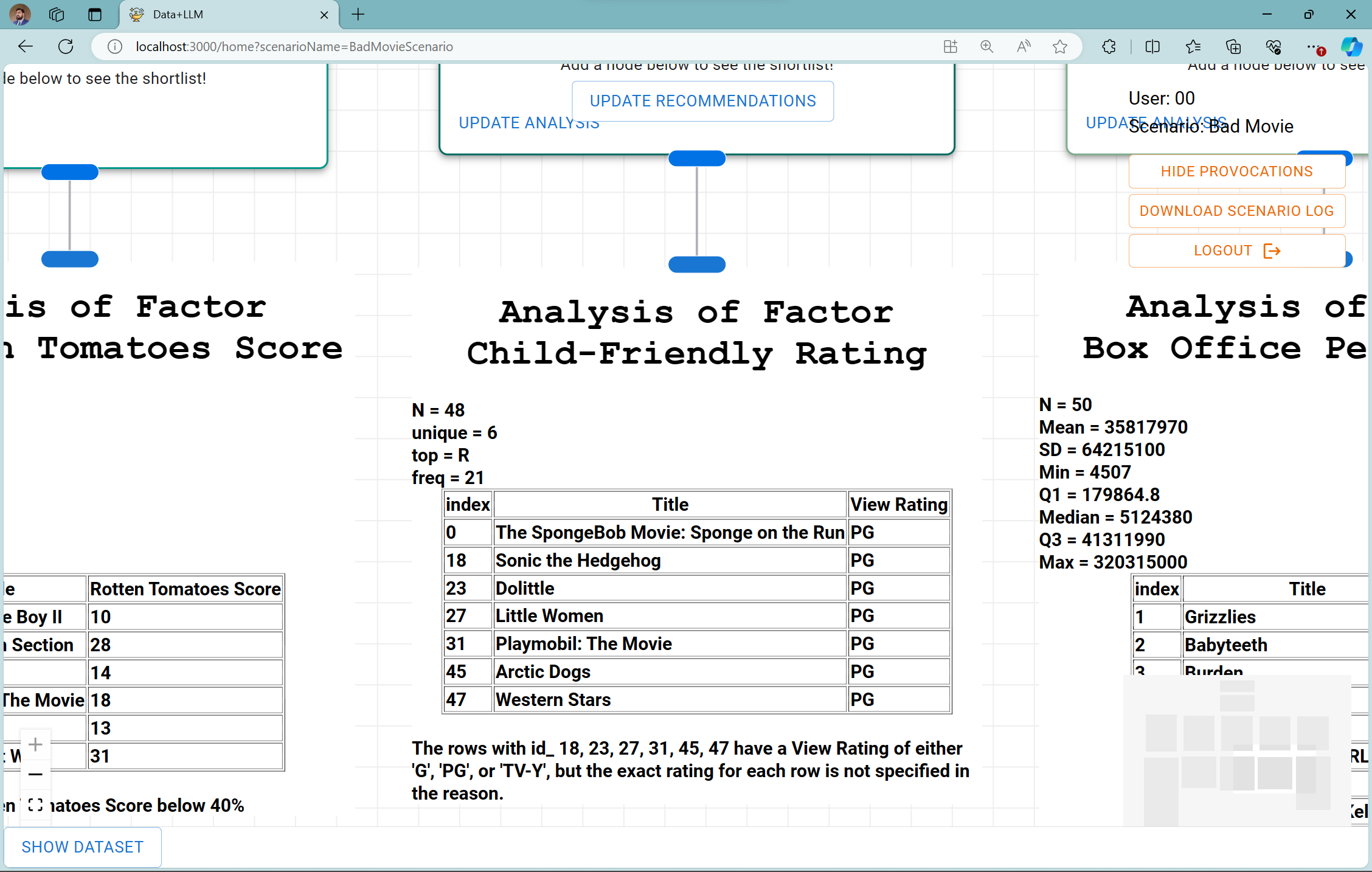

Figure 3: Factor analysis

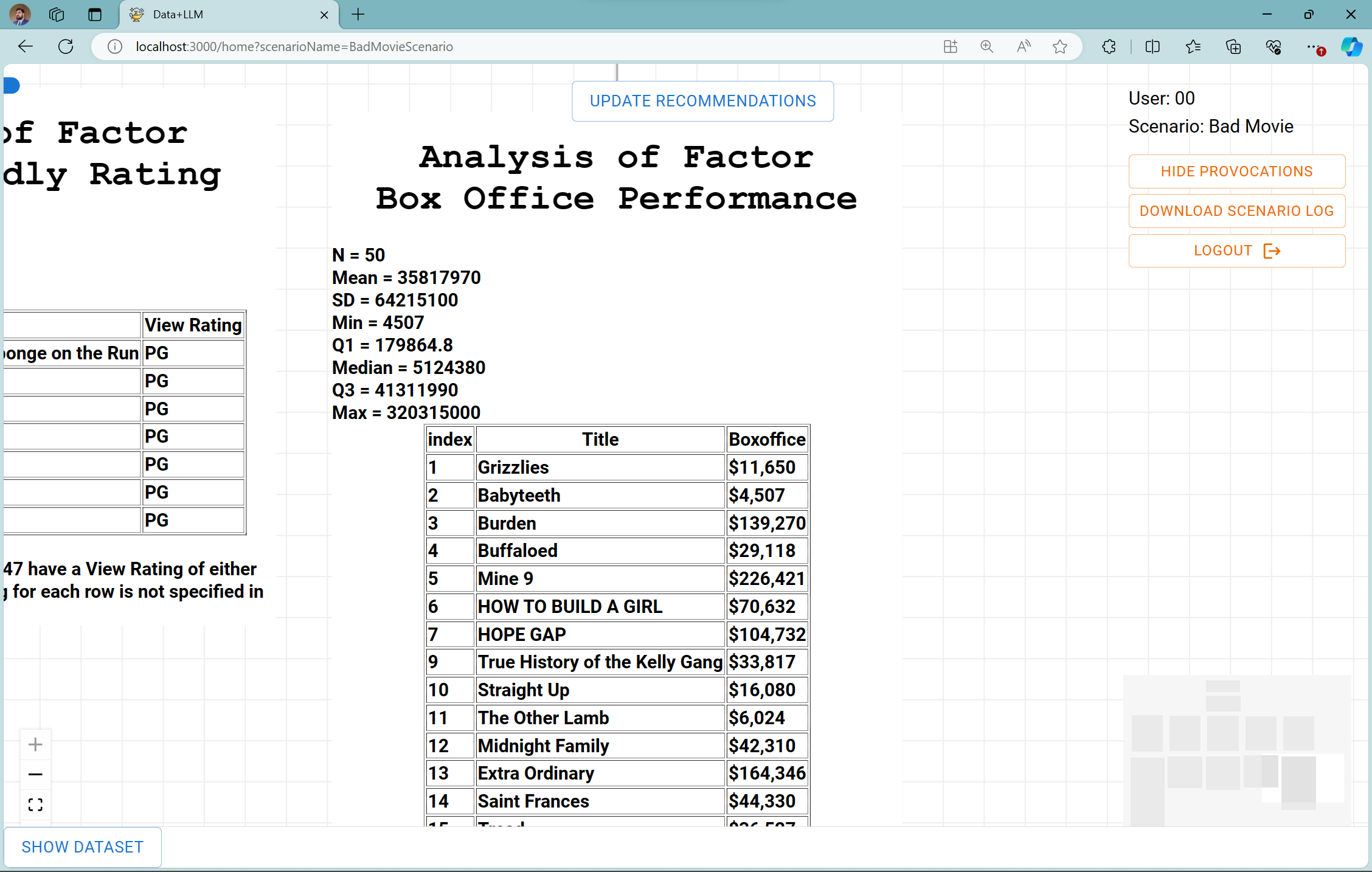

The system further employs dynamic interfaces (Figure 4) for data analysis, allowing users to assess both global and factor-local shortlists. This design demonstrates how AI tools can serve as facilitators of cognitive engagement rather than mere tools for efficiency.

Figure 4: Global list
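To make the distinction between a factor-local and a global shortlist concrete, here is a minimal sketch under stated assumptions. How the prototype actually computes either list is not described here; the scoring functions, the rank-sum aggregation used for the global list, and the sample rows are all assumptions chosen for illustration.

```python
from typing import Callable

Row = dict
Scorer = Callable[[Row], float]  # higher score means a better match


def factor_local_shortlist(rows: list[Row], score: Scorer, k: int) -> list[Row]:
    """Top-k rows according to a single factor."""
    return sorted(rows, key=score, reverse=True)[:k]


def global_shortlist(rows: list[Row], factors: dict[str, Scorer], k: int) -> list[Row]:
    """Top-k rows across all factors, combined by summing per-factor ranks.

    Rank-sum aggregation is an assumption of this sketch, not the
    paper's method. A lower total rank is better.
    """
    total = [0] * len(rows)
    for score in factors.values():
        order = sorted(range(len(rows)), key=lambda i: score(rows[i]), reverse=True)
        for rank, i in enumerate(order):
            total[i] += rank
    best = sorted(range(len(rows)), key=lambda i: total[i])[:k]
    return [rows[i] for i in best]


# Invented sample data.
rows = [
    {"name": "A", "price": 10, "rating": 4.5},
    {"name": "B", "price": 5, "rating": 3.0},
    {"name": "C", "price": 8, "rating": 4.9},
]
factors = {
    "cheap": lambda r: -r["price"],
    "well_rated": lambda r: r["rating"],
}

for name, score in factors.items():
    print(name, [r["name"] for r in factor_local_shortlist(rows, score, 2)])
print("global", [r["name"] for r in global_shortlist(rows, factors, 2)])
```

Comparing the per-factor lists with the combined list shows where a single criterion dominates the outcome, which is the kind of discrepancy a provocation could draw the user's attention to.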

Implications and Future Directions

The implementation of critical thinking tools as described in this paper sets a precedent for future AI systems in knowledge work applications. It suggests a design paradigm shift where AI systems are crafted not merely as intelligent aids but as catalysts for maintaining and enhancing human cognitive capacities. The incorporation of reflective interactions within generative AI systems holds promise for broad applicability across domains such as data analysis, creative content generation, and decision support systems.

The research proposes an agenda for further exploration of AI design frameworks and interaction modalities that cultivate critical analysis skills. This aligns with a broader vision to extend the role of AI from merely solving problems to redefining how problems are conceived and critically approached by human users.

Conclusion

By emphasizing AI's potential role as an intellectual provocateur rather than a passive cog, the paper sheds light on addressing the nuanced risks AI poses to human cognitive processes. It calls for concerted efforts in AI design to foster environments where critical thinking is not only preserved but enhanced. This approach promises richer, more resilient interactions between humans and AI, safeguarding against the risks of cognitive complacency in AI-assisted workflows.