- The paper presents a novel approach (OG-RAG) that integrates domain-specific ontologies to enhance the accuracy of responses from large language models.

- It employs a hypergraph representation with optimized selection of hyperedges to maintain complex relationships and improve retrieval efficiency.

- OG-RAG demonstrates a 55% boost in fact recall and 40% improvement in response correctness, facilitating better fact-based reasoning in specialized domains.

OG-RAG: Ontology-Grounded Retrieval-Augmented Generation For LLMs

Introduction

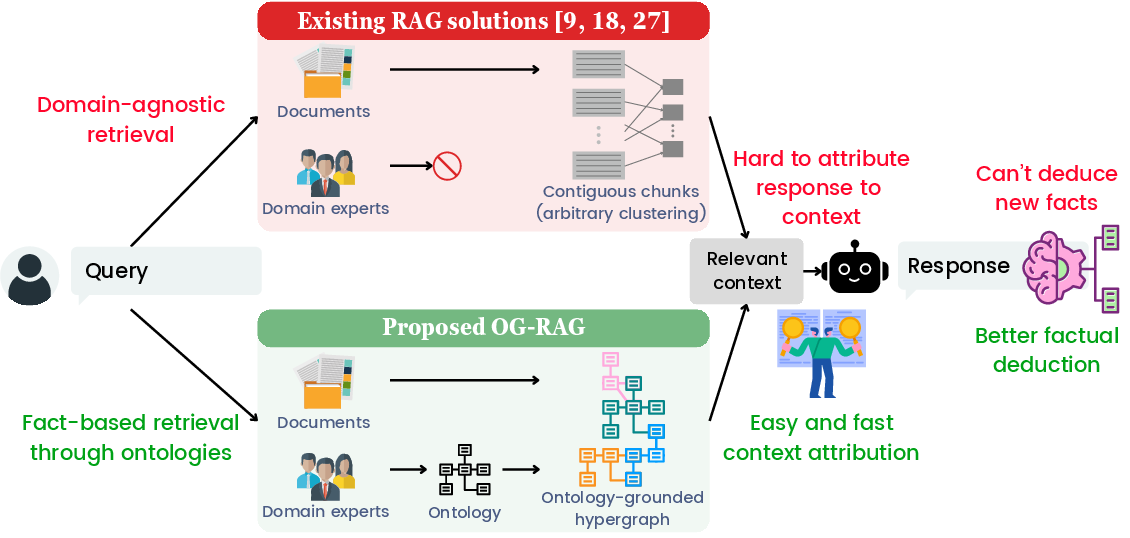

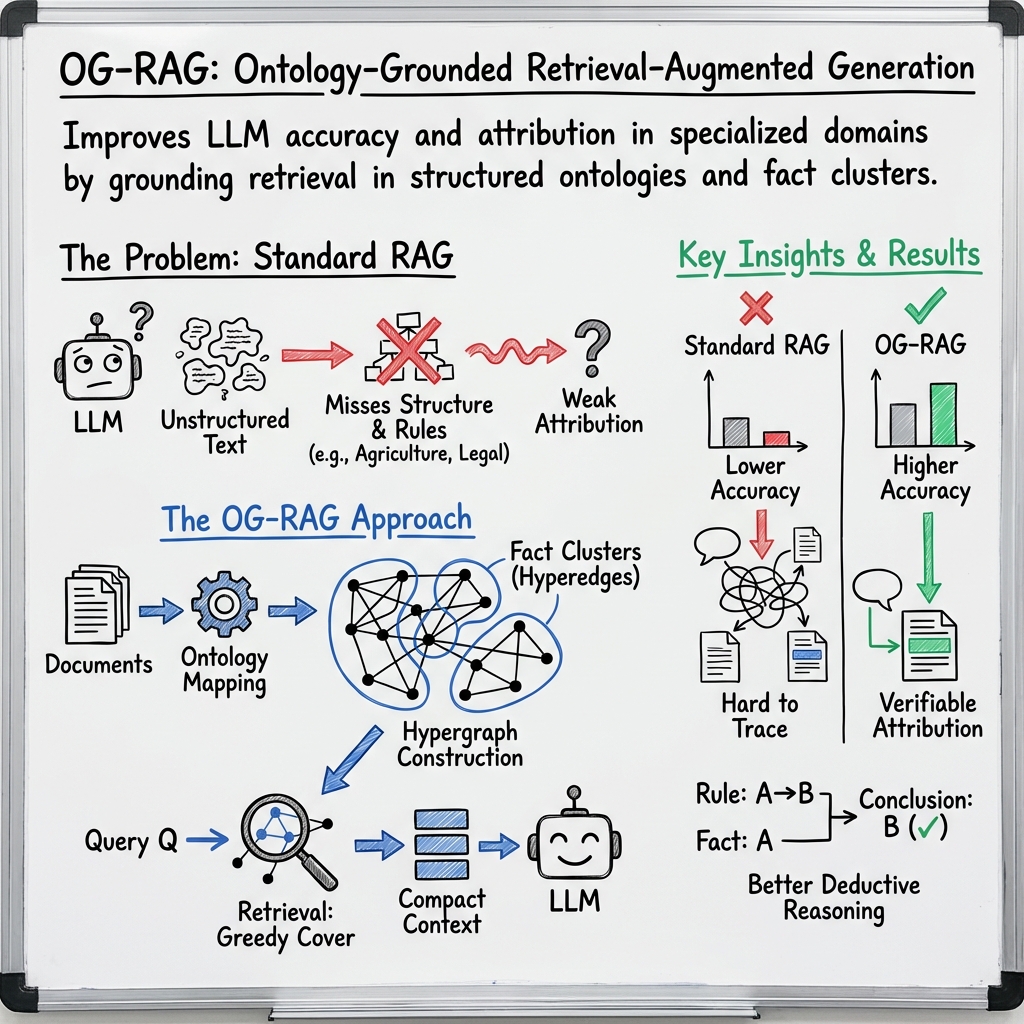

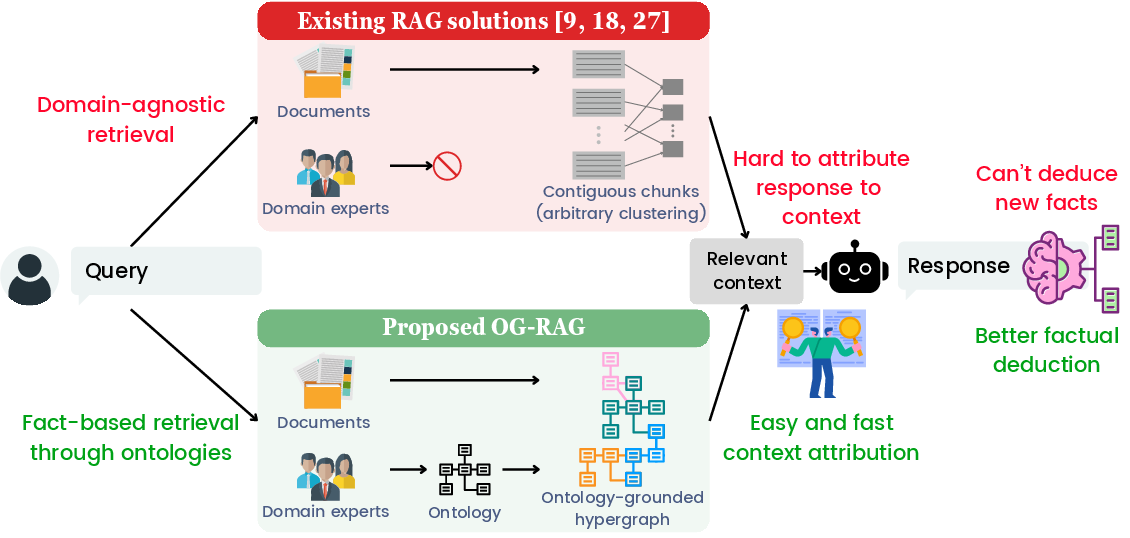

The paper "OG-RAG: Ontology-Grounded Retrieval-Augmented Generation For LLMs" (2412.15235) introduces OG-RAG, an approach that aims to improve the adaptability and fact-based reasoning of large language models (LLMs) by integrating domain-specific ontologies into the retrieval process. General-purpose LLMs struggle to apply their knowledge to specialized domains, such as healthcare or agriculture, without costly fine-tuning. OG-RAG addresses this by using ontological structures to guide the retrieval of relevant information, thereby improving the contextual accuracy of generated responses.

Figure 1: Comparison of the proposed OG-RAG with existing retrieval-augmented generation (RAG) solutions.

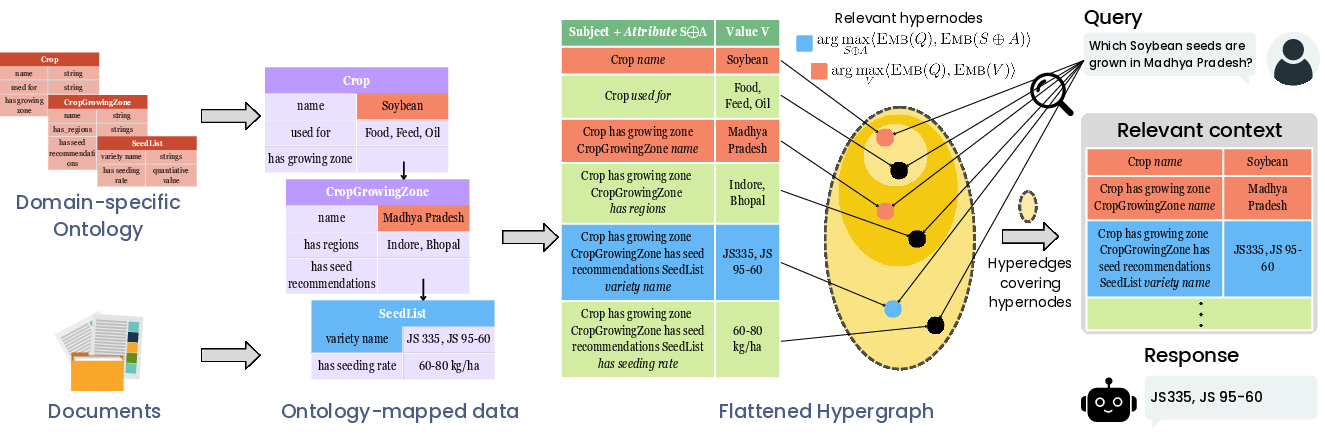

Core Methodology

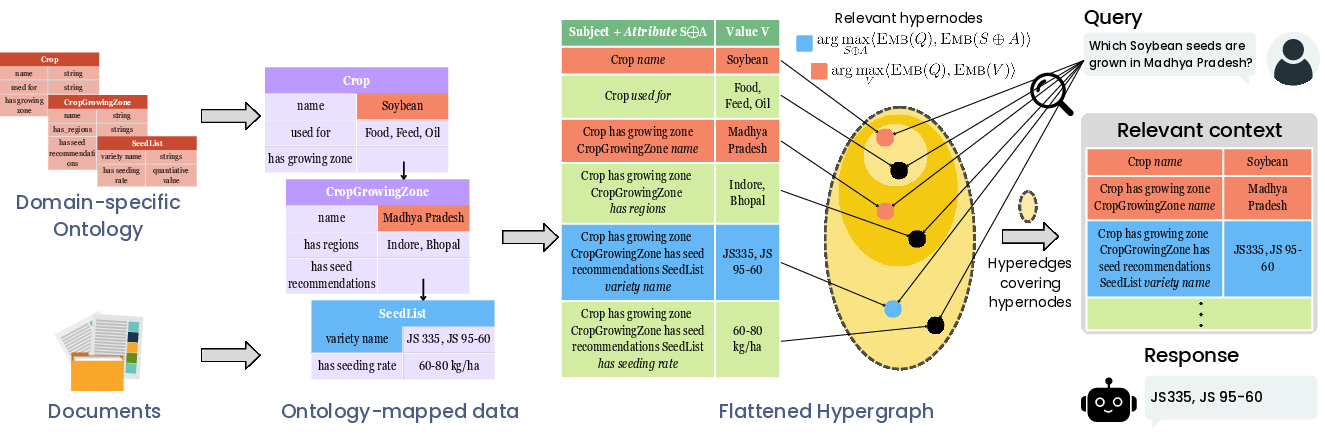

OG-RAG constructs a hypergraph representation of domain documents, where entities and their interrelationships are defined using domain-specific ontologies. These ontologies provide a structured framework that enhances the retrieval of contextually relevant information. Each hyperedge in this hypergraph encapsulates clusters of factual knowledge grounded on the ontology, thus enabling precise contextual support for LLM-generated responses.
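To make the data structure concrete, the following is a minimal sketch of how one ontology-shaped record might be turned into a hyperedge. The encoding is an assumption for illustration, not the paper's implementation: each hypernode is modeled as an (attribute path, value) pair, each hyperedge as the set of hypernodes derived from one record, and the names `flatten`, `build_hyperedge`, and the sample record are hypothetical.

```python
from dataclasses import dataclass

# Assumption: a hypernode is an (attribute path, value) pair.
Hypernode = tuple[str, str]


@dataclass(frozen=True)
class Hyperedge:
    """A cluster of facts that belong together under the ontology."""
    nodes: frozenset


def flatten(record: dict, prefix: str = "") -> set:
    """Walk a nested record and emit one hypernode per leaf value."""
    nodes = set()
    for key, value in record.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            nodes |= flatten(value, path)  # recurse into nested entities
        else:
            nodes.add((path, str(value)))
    return nodes


def build_hyperedge(record: dict) -> Hyperedge:
    """Group all facts from one ontology-mapped record into a hyperedge."""
    return Hyperedge(nodes=frozenset(flatten(record)))


# Hypothetical agriculture record, already mapped onto ontology attributes.
record = {"crop": {"name": "soybean", "sowing": {"season": "kharif"}}}
edge = build_hyperedge(record)
```

Keeping all facts from one record in a single hyperedge is what lets a retriever return them together, rather than as isolated text chunks.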

Retrieval is framed as an optimization problem: for a given query, OG-RAG selects a minimal set of hyperedges that together form a compact context. This retains the complex relationships between entities while supporting efficient retrieval across diverse domains. The hypergraph model lets LLMs adapt to specialized workflows and decision-making tasks without the heavy computational burden of model fine-tuning.
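One way to picture the selection of a small set of hyperedges is as a set-cover problem solved greedily. The sketch below is an illustration under assumptions, not the method as specified in this summary: hyperedges are plain sets of hypernode identifiers, the query-relevant hypernodes are assumed to be given, and `select_hyperedges` and `max_edges` are hypothetical names.

```python
def select_hyperedges(hyperedges, relevant_nodes, max_edges):
    """Greedy set cover: repeatedly take the hyperedge that covers the
    most still-uncovered relevant nodes, up to max_edges hyperedges."""
    uncovered = set(relevant_nodes)
    selected = []
    while uncovered and len(selected) < max_edges:
        # Pick the hyperedge with the largest overlap with what is left.
        best = max(hyperedges, key=lambda edge: len(uncovered & edge))
        if not uncovered & best:
            break  # no remaining hyperedge adds anything new
        selected.append(best)
        uncovered -= best
    return selected


# Toy example with hypothetical hypernode identifiers.
edges = [
    frozenset({"a", "b"}),
    frozenset({"b", "c", "d"}),
    frozenset({"d"}),
]
context = select_hyperedges(edges, {"a", "b", "c", "d"}, max_edges=5)
```

Greedy selection does not guarantee the smallest possible cover, but it is a common heuristic for this kind of problem and keeps the resulting context compact.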

Figure 2: OG-RAG: Ontology-Grounded Retrieval-Augmented Generation.

Results and Evaluation

The paper reports that OG-RAG increases the recall of accurate facts by 55% and improves response correctness by 40% over baseline methods across four different LLMs. It also enables 30% faster attribution of responses to their supporting context and increases fact-based reasoning accuracy by 27%. These results were validated across various domains, such as healthcare, legal, and agricultural sectors, demonstrating the flexibility and effectiveness of ontology-grounded retrieval methods.

The evaluation compares OG-RAG against existing retrieval-augmented generation baselines, including standard RAG. The comparisons indicate substantial improvements in retrieving and applying domain-specific knowledge, with OG-RAG outperforming the other systems at grounding responses in contextually relevant facts.

Implications and Future Directions

Integrating ontology-grounded retrieval into LLM systems marks a significant step towards more adaptable and accurate AI applications in specialized domains. This research underscores the potential of combining structured ontologies with LLM capabilities to improve the factual accuracy and contextual relevance of generated responses.

A key implication is the possibility for OG-RAG to facilitate more reliable decision-making processes in industries that demand high accuracy and specialized knowledge, such as healthcare diagnostics, legal reasoning, and agricultural planning. The system’s ability to attribute responses to their factual context also enhances transparency and trust in AI systems.

Future research could focus on refining the ontology-driven retrieval algorithms to further increase their efficiency and extend their applicability. Additionally, exploring automated ontology construction techniques could broaden the utility of OG-RAG by enabling end-to-end, domain-specific retrieval-augmented models for a wider range of applications.

Conclusion

OG-RAG presents a compelling approach to improving the factual alignment and precision of LLMs by grounding retrieval in domain-specific ontologies. The reported performance gains suggest it can ease the integration of LLMs into domain-specific tasks, offering both practical improvements in response generation and a contribution to retrieval-augmented model design. Its adaptability and efficiency underline the importance of ontologies in bridging the gap between general-purpose AI tools and domain-focused applications, paving the way for more informed and accurate decision support systems.