- The paper introduces DO-RAG, a novel QA framework integrating multi-level knowledge graph construction with retrieval-augmented generation to boost factual accuracy.

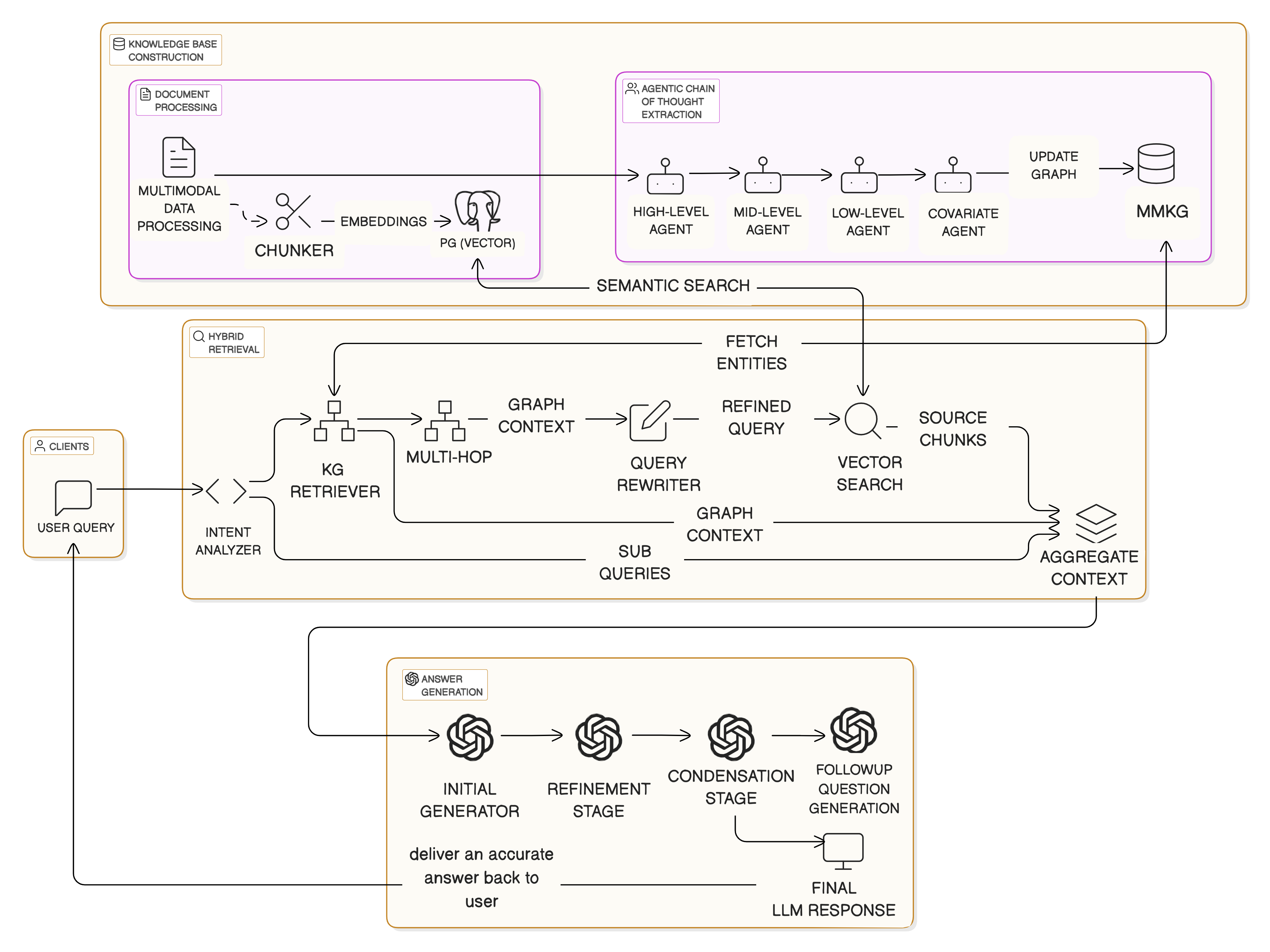

- It employs a hybrid retrieval process that combines semantic vector search with graph traversal over a knowledge graph built from multimodal document parsing, so that queries are resolved against both unstructured and structured evidence.

- Empirical evaluations show improvements of up to 33.38% in answer relevancy and contextual precision over baseline RAG frameworks, supporting its use in high-precision QA applications.

DO-RAG: A Domain-Specific QA Framework Using Knowledge Graph-Enhanced Retrieval-Augmented Generation

Introduction

The paper introduces DO-RAG, a domain-specific question answering (QA) framework that integrates knowledge graph (KG) construction with Retrieval-Augmented Generation (RAG) to improve the factual consistency and contextual understanding of closed-domain QA systems. Closed-domain QA requires high precision and often depends on structured expert knowledge that generic models struggle to incorporate. Although advances in LLMs have improved fluency, these models still hallucinate, especially when handling specialized terminology and complex reasoning, a problem that has also been observed in retrieval-augmented frameworks.

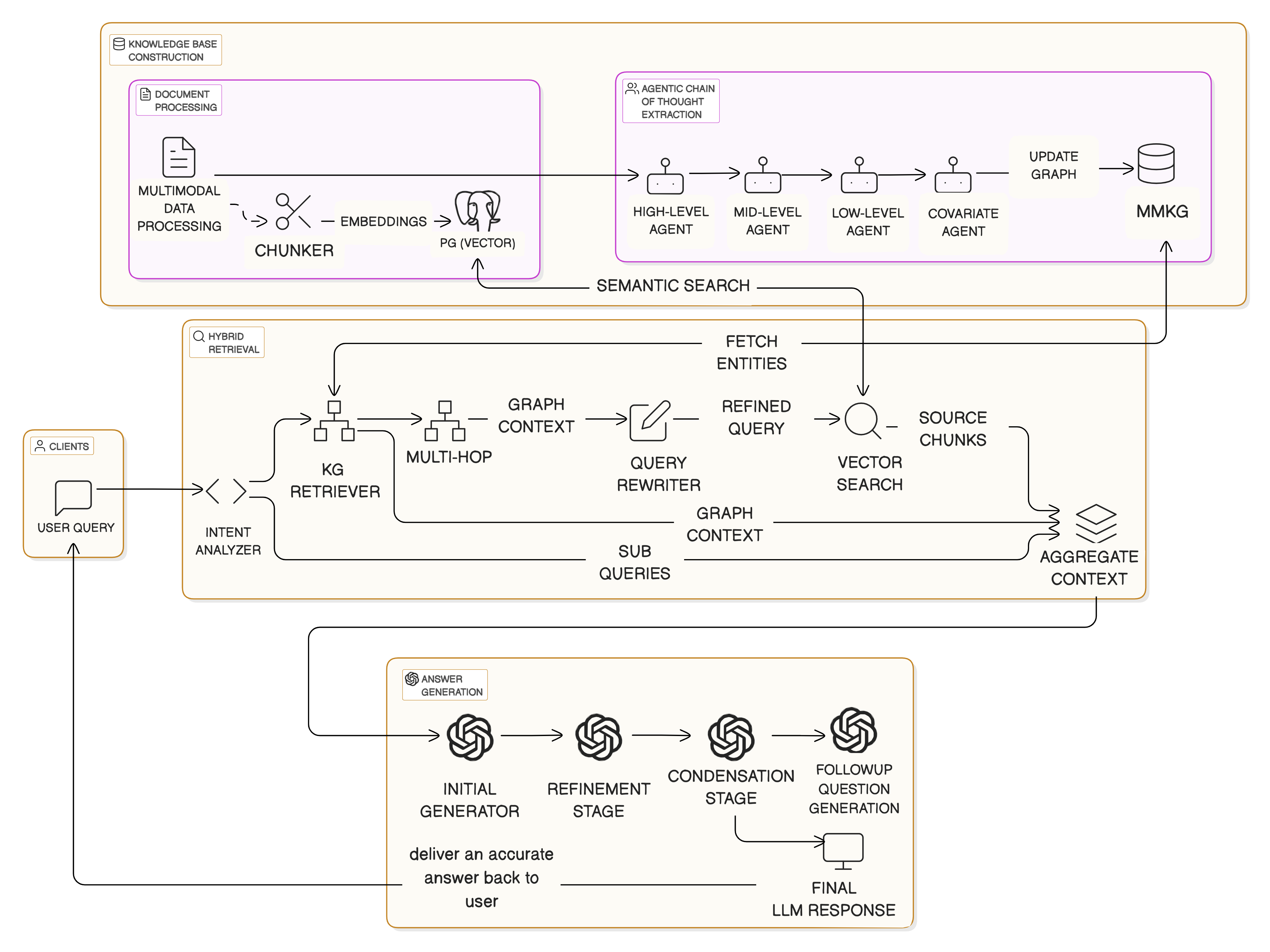

Figure 1: High-level overview of DO-RAG methodology.

Proposed Approach: DO-RAG

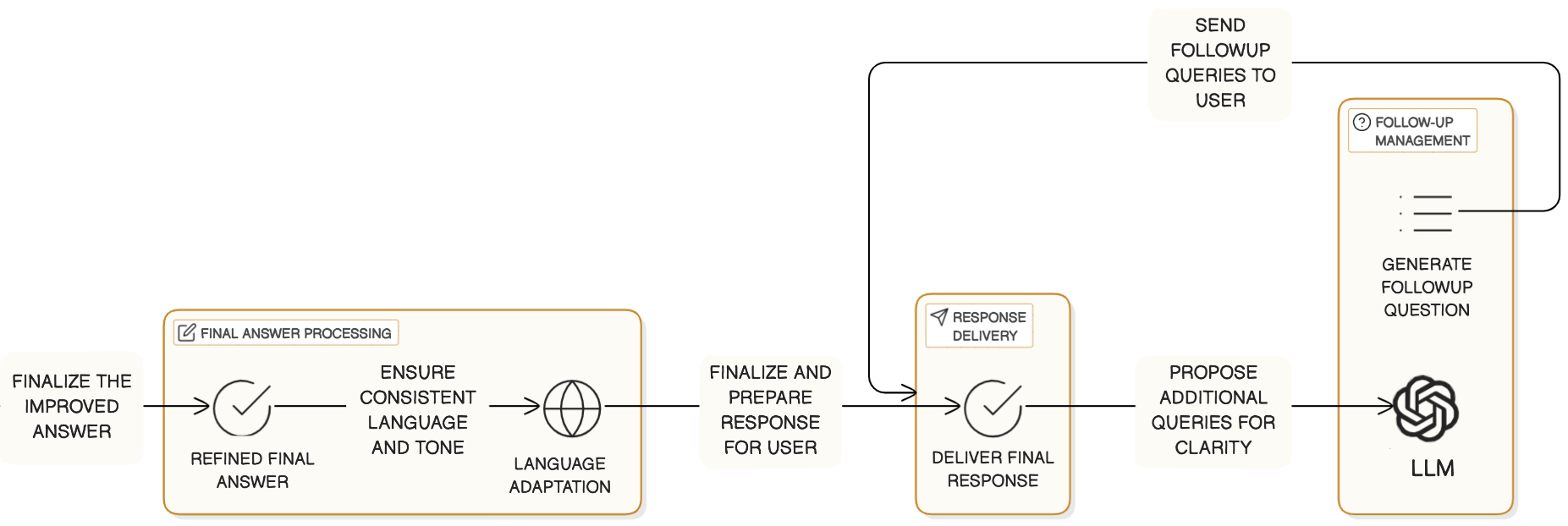

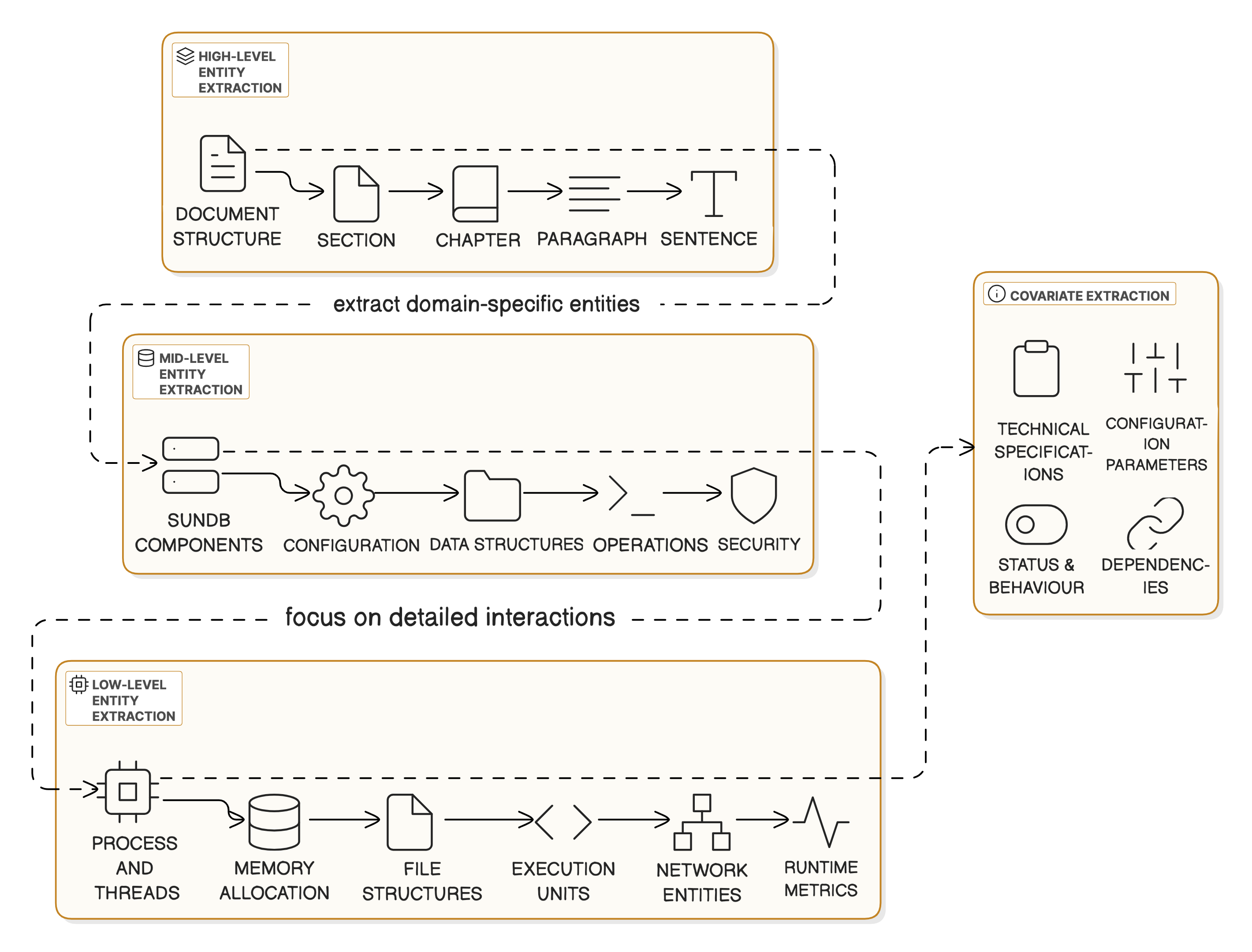

DO-RAG employs a hybrid methodology combining multimodal document parsing and dynamic KG construction through a multi-level entity-relation extraction pipeline. Key steps include:

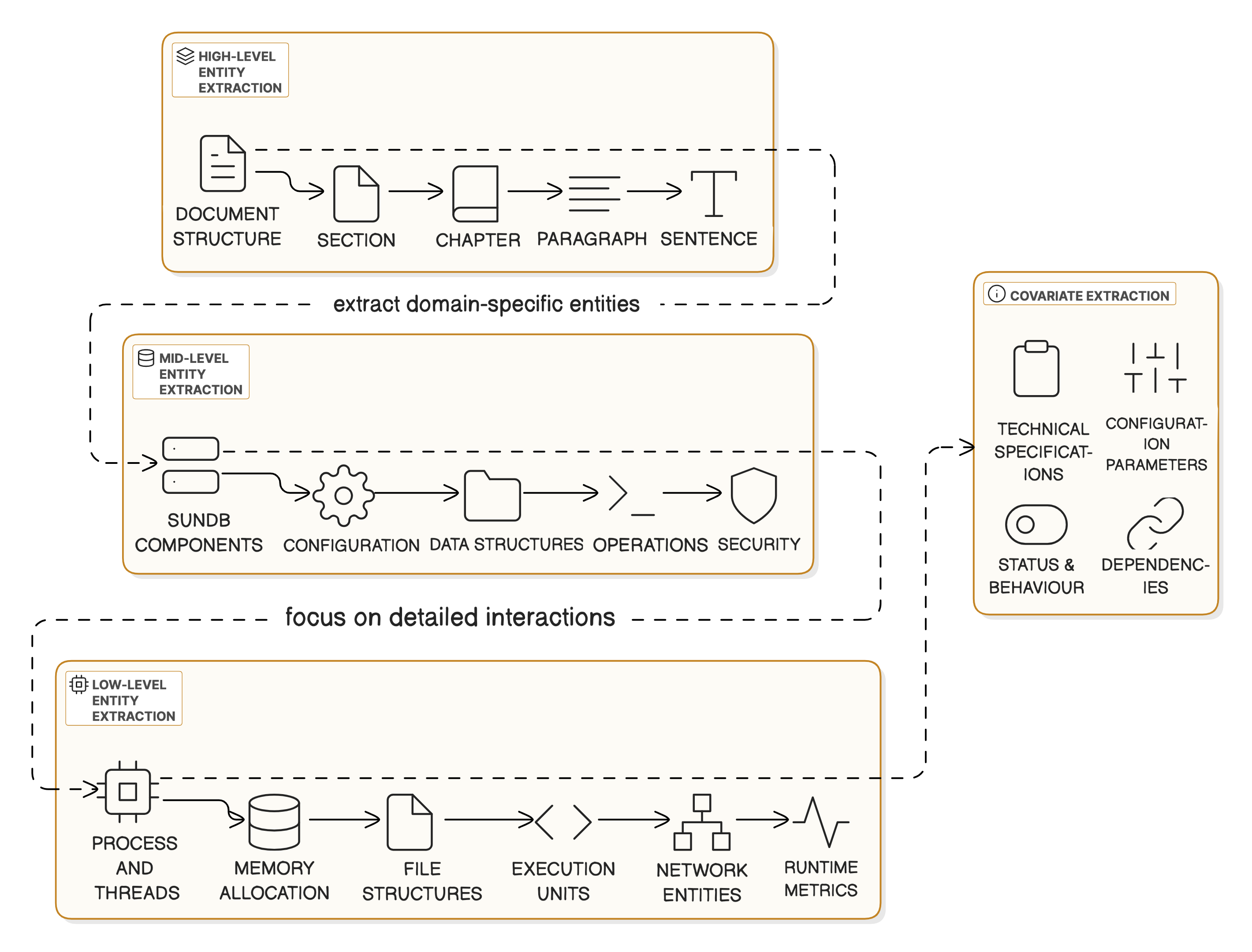

- Document Ingestion and KG Construction: Using an agentic chain-of-thought process, DO-RAG ingests documents (text, tables, images) and constructs a multimodal KG. High-level agents identify document structure, mid-level agents extract domain-specific entities, and low-level agents capture finer-grained relationships.

Figure 2: Multi-level entity-relation extraction.
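As a rough illustration of how outputs from the three extraction levels could be merged into one graph, here is a minimal sketch. It is not the paper's implementation: the `KnowledgeGraph` class, the `build_kg` helper, and the database-themed entities are all hypothetical, and the agents' LLM calls are replaced by hard-coded outputs.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeGraph:
    nodes: dict = field(default_factory=dict)  # node name -> extraction level
    edges: set = field(default_factory=set)    # (head, relation, tail) triples

    def add_node(self, name, level):
        # Keep the first level assigned if a node is reported twice.
        self.nodes.setdefault(name, level)

    def add_edge(self, head, relation, tail):
        if head not in self.nodes or tail not in self.nodes:
            raise KeyError(f"unknown node in edge: {head!r} -> {tail!r}")
        self.edges.add((head, relation, tail))

    def neighbors(self, name):
        # Treat edges as undirected when listing neighbors.
        out = set()
        for head, _, tail in self.edges:
            if head == name:
                out.add(tail)
            elif tail == name:
                out.add(head)
        return out


def build_kg(level_outputs):
    """Merge per-level (entities, relations) outputs into one graph."""
    kg = KnowledgeGraph()
    # First pass: register every entity so edges may cross levels.
    for level, (entities, _) in level_outputs.items():
        for name in entities:
            kg.add_node(name, level)
    # Second pass: add the relations reported at each level.
    for _, relations in level_outputs.values():
        for head, relation, tail in relations:
            kg.add_edge(head, relation, tail)
    return kg


# Hypothetical agent outputs standing in for LLM extraction results.
level_outputs = {
    "high": (["Indexing chapter"], []),
    "mid": (["B-tree index"],
            [("Indexing chapter", "describes", "B-tree index")]),
    "low": (["fill factor"],
            [("B-tree index", "has_parameter", "fill factor")]),
}
kg = build_kg(level_outputs)
```

Registering all nodes before any edges lets a low-level relation point at a higher-level entity, which is one plausible way to link the levels into a single graph.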

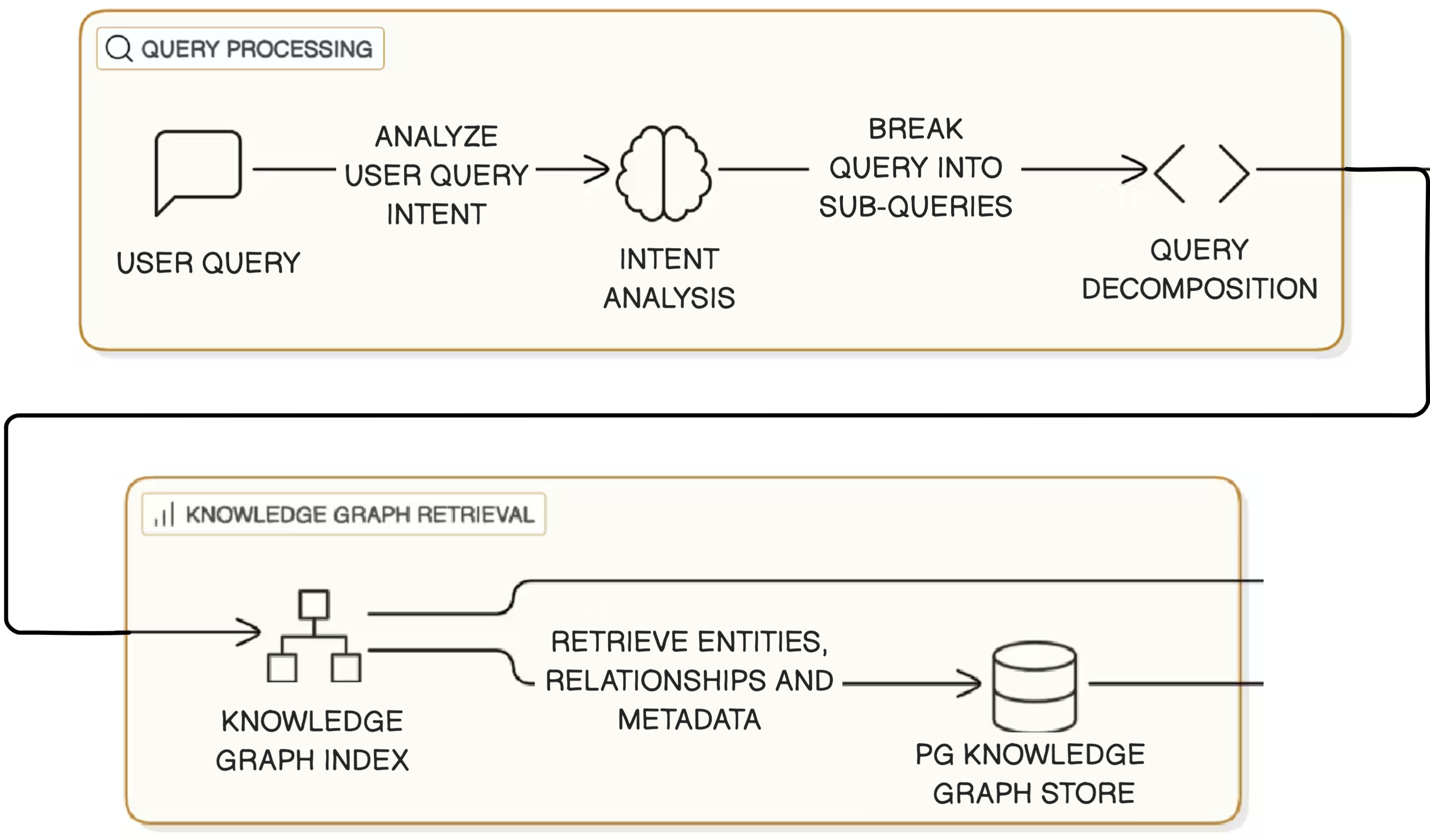

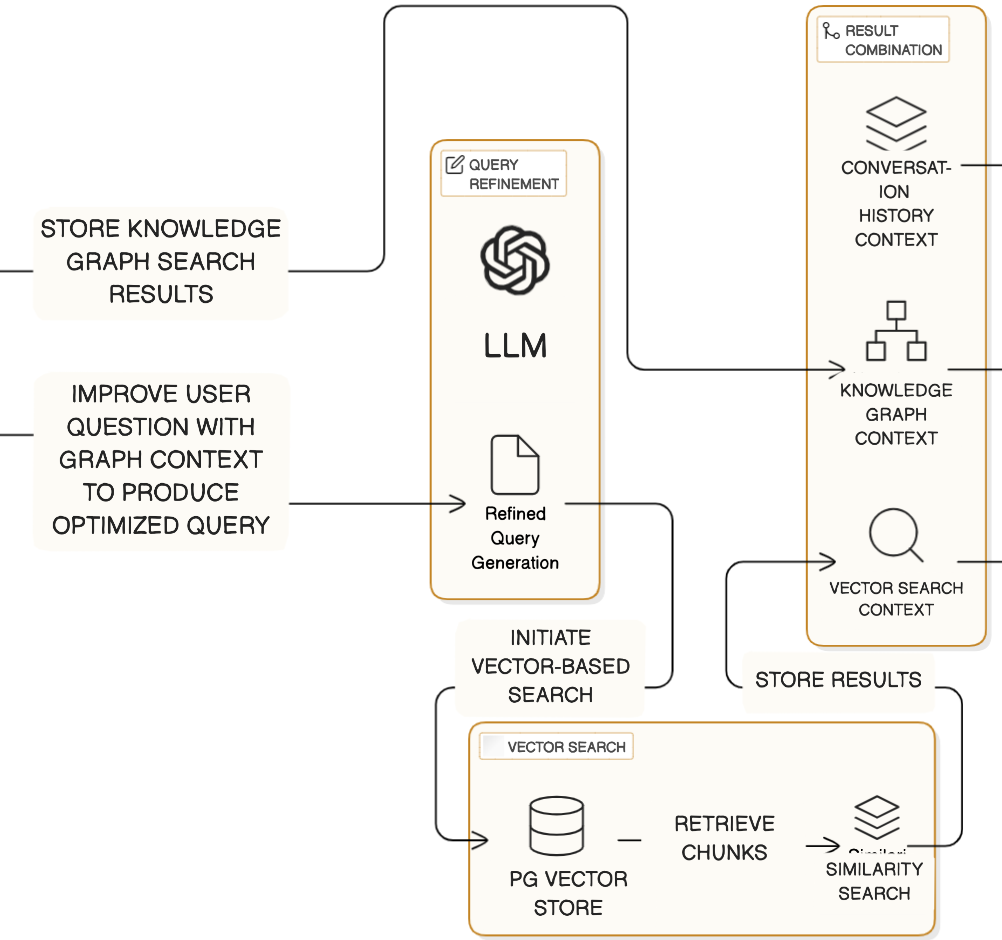

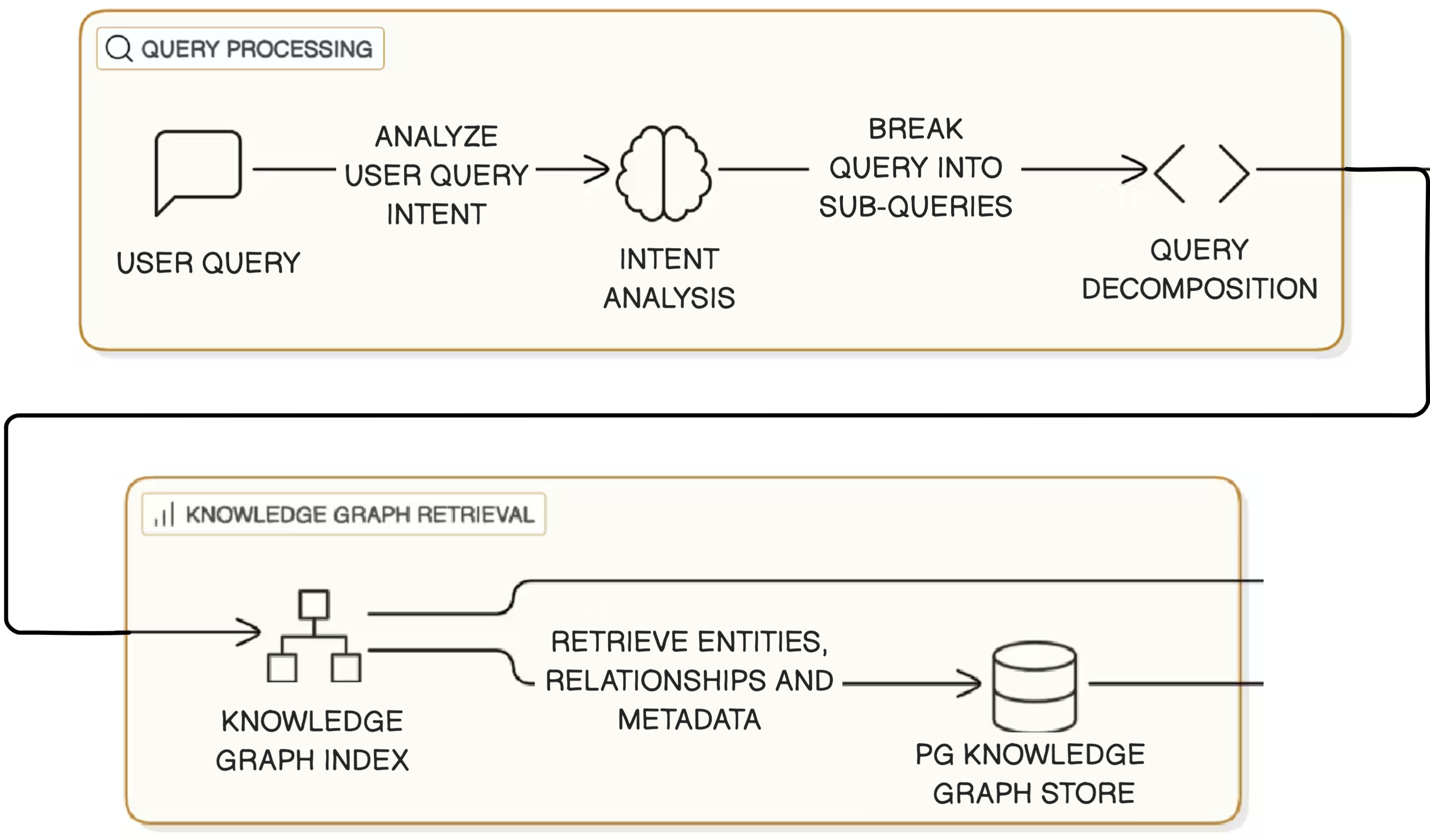

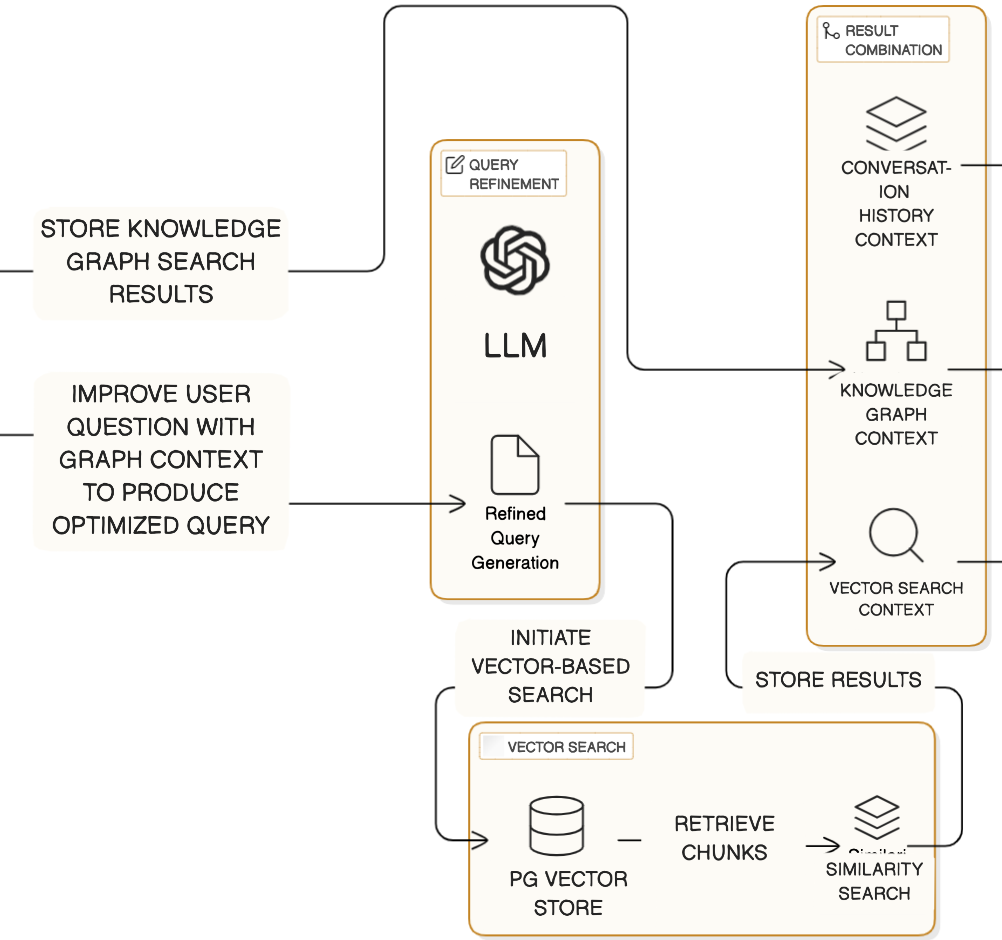

- Hybrid Retrieval Process: The query is decomposed using LLM-based intent analysis, followed by KG traversal and semantic vector search to consolidate evidence. The framework balances graph and vector search with a unified retrieval score, blending traditional retrieval relevance with structural insights from the KG.

Figure 3: Query processing and knowledge graph retrieval.
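The summary does not give the formula behind the unified retrieval score, so the following is only a sketch under stated assumptions: a linear blend of cosine similarity and a graph-proximity term `1 / (1 + hops)`, weighted by a hypothetical parameter `alpha`. The function names, the chunk layout, and the toy data are illustrative and not taken from the paper.

```python
import math
from collections import deque


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def hop_distances(adjacency, sources):
    """Breadth-first search: hops from any query entity to each KG node."""
    dist = {s: 0 for s in sources}
    queue = deque(dist)
    while queue:
        node = queue.popleft()
        for nxt in adjacency.get(node, ()):
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


def hybrid_rank(query_vec, query_entities, chunks, adjacency, alpha=0.5):
    """Rank chunks by alpha * semantic + (1 - alpha) * graph proximity.

    The blend and the 1 / (1 + hops) term are assumptions for illustration.
    """
    dist = hop_distances(adjacency, query_entities)
    scored = []
    for chunk in chunks:
        semantic = cosine(query_vec, chunk["vec"])
        hops = min((dist[e] for e in chunk["entities"] if e in dist),
                   default=None)
        graph = 0.0 if hops is None else 1.0 / (1 + hops)
        scored.append((alpha * semantic + (1 - alpha) * graph, chunk["id"]))
    return sorted(scored, reverse=True)


# Toy data: two-dimensional "embeddings" and a three-node graph.
adjacency = {"index": ["btree"], "btree": ["index"]}
chunks = [
    {"id": "A", "vec": [1.0, 0.0], "entities": ["index"]},
    {"id": "B", "vec": [0.0, 1.0], "entities": ["unrelated"]},
    {"id": "C", "vec": [0.0, 1.0], "entities": ["btree"]},
]
ranked = hybrid_rank([1.0, 0.0], ["index"], chunks, adjacency)
```

In this toy run, chunk C has no semantic overlap with the query but still outranks chunk B because it mentions an entity one hop away from the query entity, which is the kind of structural signal a KG can add to vector search.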

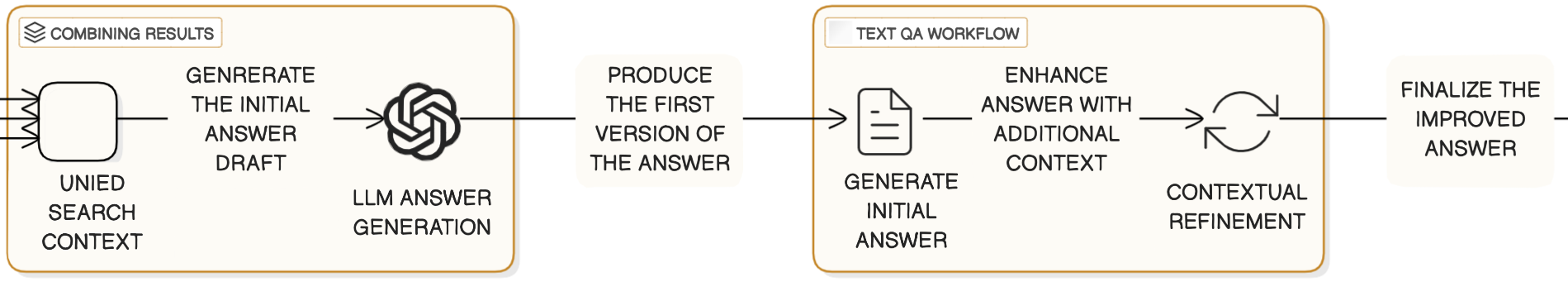

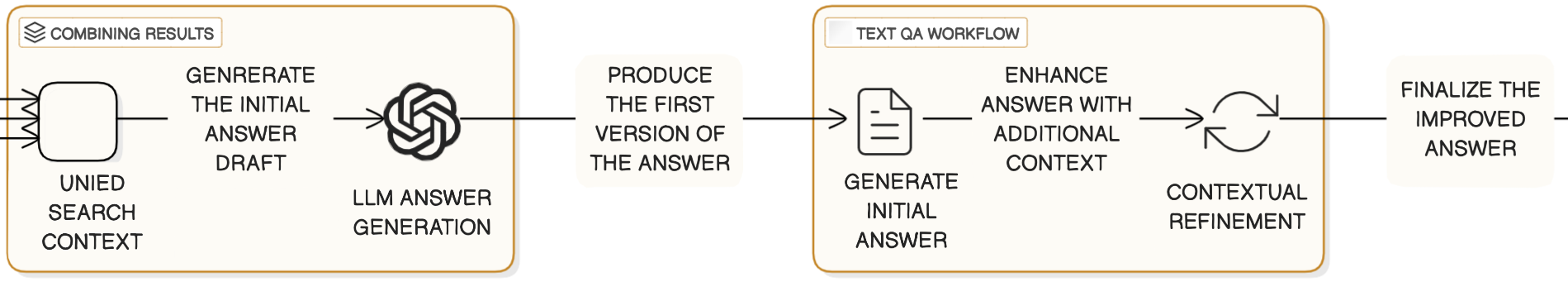

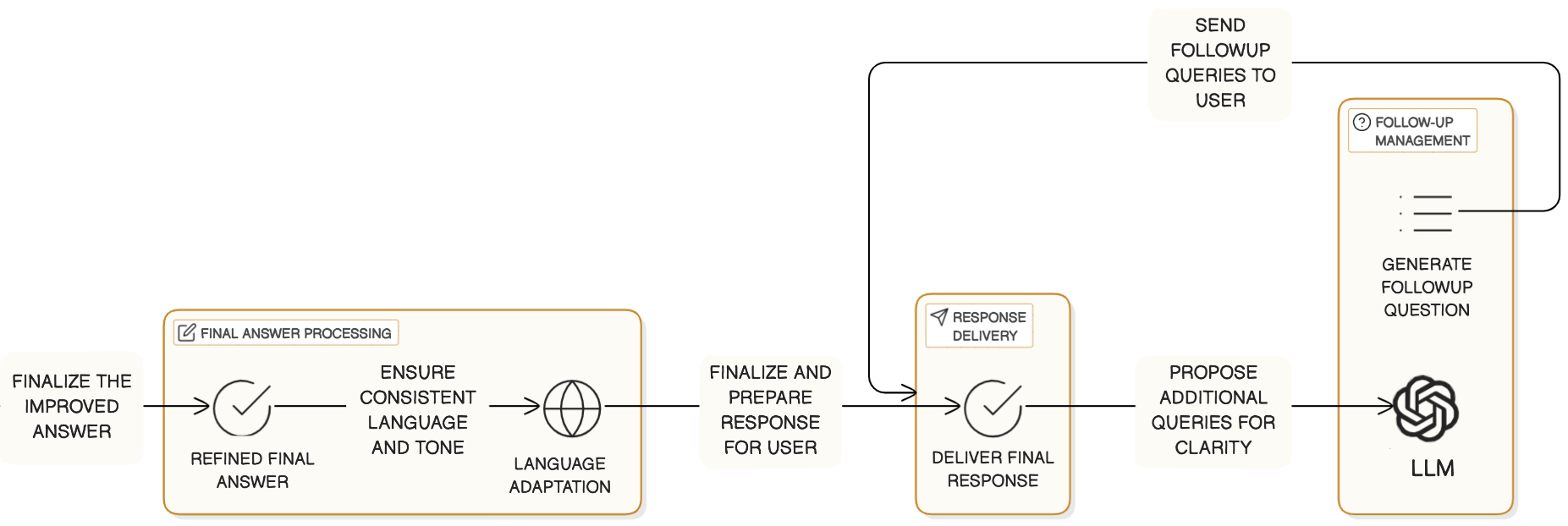

- Grounded Response Generation: The framework generates an initial response, then refines and condenses it to keep the answer consistent with the retrieved evidence. A notable contribution is this post-generation refinement step, which iteratively cross-verifies the output against the KG and corrects hallucinations.

Figure 4: Combining results and Text QA workflow.
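The refinement loop can be pictured as checking each claim in a draft answer against the KG and revising until every remaining claim is supported. The sketch below assumes claims are already expressed as `(head, relation, tail)` triples and replaces the LLM-based revision with a simple KG lookup; `refine`, `revise_from_kg`, and the example triples are hypothetical and not the paper's procedure.

```python
def revise_from_kg(claims, unsupported, kg_triples):
    """Stand-in for an LLM revision step.

    An unsupported claim is replaced by a KG triple with the same head and
    relation when one exists, and dropped otherwise. If the KG holds several
    tails for one (head, relation) pair, an arbitrary one is used.
    """
    by_key = {(h, r): (h, r, t) for h, r, t in kg_triples}
    revised = []
    for claim in claims:
        if claim not in unsupported:
            revised.append(claim)
        elif (claim[0], claim[1]) in by_key:
            revised.append(by_key[(claim[0], claim[1])])
    return revised


def refine(draft_claims, kg_triples, revise=revise_from_kg, max_rounds=3):
    """Iteratively verify claims against the KG and revise unsupported ones."""
    claims = list(draft_claims)
    for _ in range(max_rounds):
        unsupported = [c for c in claims if c not in kg_triples]
        if not unsupported:
            break
        claims = revise(claims, unsupported, kg_triples)
    # Anything still unsupported after the last round is removed.
    return [c for c in claims if c in kg_triples]


# Made-up KG facts and draft claims for illustration only.
kg_triples = {
    ("B-tree", "supports", "range scan"),
    ("hash index", "supports", "equality lookup"),
}
draft = [
    ("B-tree", "supports", "range scan"),      # supported as written
    ("hash index", "supports", "range scan"),  # contradicts the KG
    ("bitmap index", "supports", "joins"),     # absent from the KG
]
grounded = refine(draft, kg_triples)
```

Passing `revise` as a parameter keeps the verification loop separate from the revision strategy, so an LLM-backed reviser could be swapped in without changing the loop.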

Experimental Evaluation

Empirical evaluations in the database and electrical engineering domains show marked improvements over existing frameworks. DO-RAG achieves near-perfect contextual recall and answer relevancy exceeding 94%, outperforming comparable RAG systems such as FastGPT, TiDB.AI, and Dify.AI by up to 33.38%.

Figure 5: Composite score comparison across frameworks and LLMs.

Key Results and Discussion

DO-RAG's KG-enhanced retrieval method improves Answer Relevancy and Contextual Precision relative to the baseline frameworks. The hybrid architecture allows it to maintain high recall and precision while integrating multimodal data effectively. These gains underscore DO-RAG's potential for deployment in QA systems where domain-specific accuracy is critical.

Nonetheless, some challenges remain, particularly the computational cost of DO-RAG's multi-agent extraction process and the residual risk of hallucination in LLMs biased toward creative generation. Future work could mitigate these issues through improved prompt engineering and could address scalability through distributed processing.

Conclusion

DO-RAG exemplifies a scalable, high-precision solution for closed-domain QA that unifies structured knowledge representations with generative models. Its agentic approach shows promise both for near-term domain applications and for the broader goal of building adaptable QA systems grounded in factual accuracy and retrieval precision. By combining dynamic KG construction with RAG, the framework offers a pathway toward QA systems capable of reliable multi-domain support.