- The paper introduces DSRAG, which constructs a multimodal knowledge graph from text, images, and tables to mitigate factual and contextual errors in domain-specific tasks.

- The paper details a dual-stage retrieval process combining graph-guided semantic pruning with vector-based search to optimize domain-specific information retrieval.

- The paper demonstrates that integrating both Concept and Instance Knowledge Graphs significantly improves performance over traditional RAG models in technical question answering.

DSRAG: A Domain-Specific Retrieval Framework Based on Document-derived Multimodal Knowledge Graph

Introduction

Large language models (LLMs) often struggle with domain-specific tasks because they lack specialized knowledge and tend to hallucinate facts. Retrieval-Augmented Generation (RAG) addresses this by integrating external knowledge bases. However, existing RAG methods, including graph-based variants such as GraphRAG, remain limited in domain knowledge accuracy and context modeling. DSRAG (Domain-Specific RAG) is a graph-enhanced framework that incorporates multimodal knowledge graphs (MMKGs) to address these limitations.

DSRAG builds a multimodal knowledge graph from the text, images, and tables in domain-specific documents, capturing both a conceptual layer and an instance layer. Semantic pruning and structured subgraph retrieval over this graph help the LLM generate more reliable responses. The framework is evaluated with the Langfuse multidimensional scoring mechanism, which shows improved accuracy on domain-specific question answering tasks.

Methodology

Framework of DSRAG

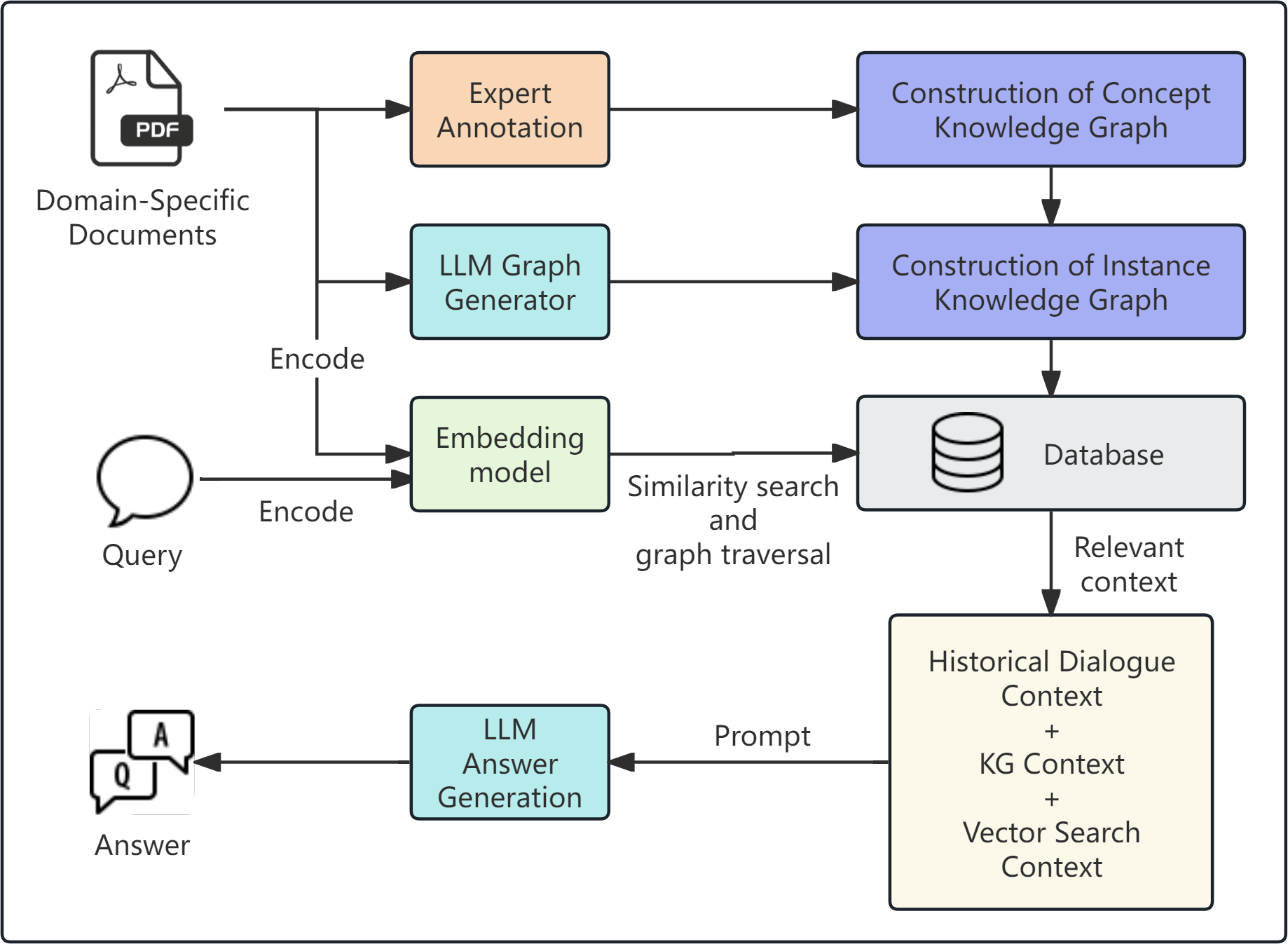

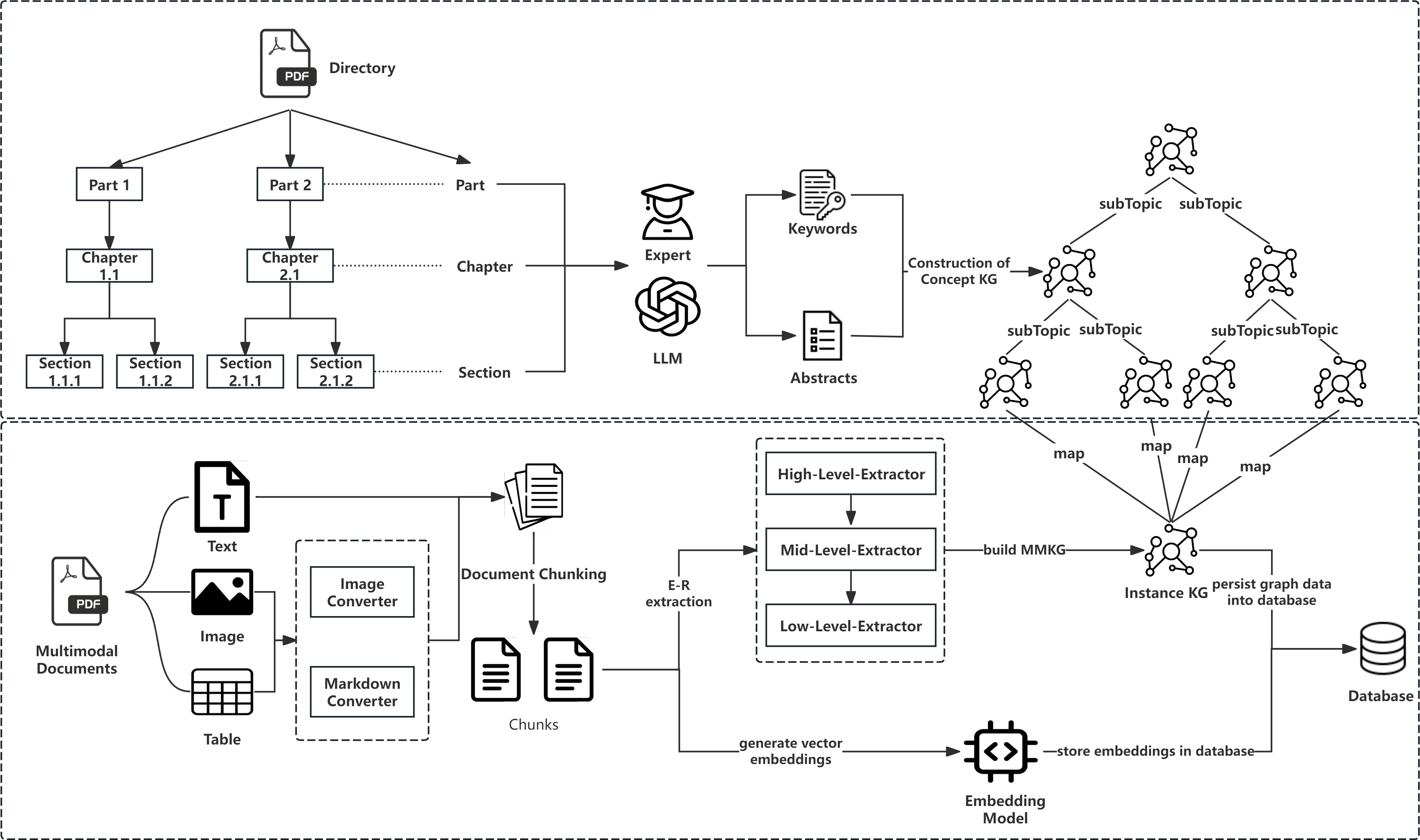

The overall structure of DSRAG employs a domain-specific multimodal knowledge graph (DSKG) to mitigate semantic heterogeneity and factual errors in question answering. DSRAG constructs a hierarchical knowledge graph from domain documents, divided into Concept KG and Instance KG. This framework integrates structured graph-based information with vector-based retrieval methods.
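To make the two-layer structure concrete, the following is a minimal sketch of a hierarchical graph with a Concept KG and an Instance KG. All class, field, and method names here (`ConceptNode`, `InstanceNode`, `DomainKG`, `instances_of`) are hypothetical and chosen for illustration; the paper's actual schema is not given in this summary.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ConceptNode:
    """A node in the Concept KG (hypothetical schema)."""
    name: str
    children: list[str] = field(default_factory=list)


@dataclass
class InstanceNode:
    """A node in the Instance KG, linked to one concept (hypothetical schema)."""
    node_id: str
    concept: str   # name of the Concept KG node this instance belongs to
    modality: str  # "text", "image", or "table"
    content: str


@dataclass
class DomainKG:
    concepts: dict[str, ConceptNode] = field(default_factory=dict)
    instances: dict[str, InstanceNode] = field(default_factory=dict)

    def add_concept(self, name: str, parent: str | None = None) -> None:
        self.concepts[name] = ConceptNode(name)
        if parent is not None:
            self.concepts[parent].children.append(name)

    def add_instance(self, node: InstanceNode) -> None:
        if node.concept not in self.concepts:
            raise KeyError(f"unknown concept: {node.concept}")
        self.instances[node.node_id] = node

    def instances_of(self, concept: str) -> list[InstanceNode]:
        """Return instances attached to `concept` or any of its descendants."""
        wanted: set[str] = set()
        stack = [concept]
        while stack:
            current = stack.pop()
            if current in wanted:
                continue
            wanted.add(current)
            stack.extend(self.concepts[current].children)
        return [n for n in self.instances.values() if n.concept in wanted]
```

Keeping instances keyed to concept names means that a query resolved to a concept can pull in every instance beneath it, which is the kind of structured lookup the hierarchical design is meant to support.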

Figure 1: The overall framework of DSRAG.

DSKG Construction

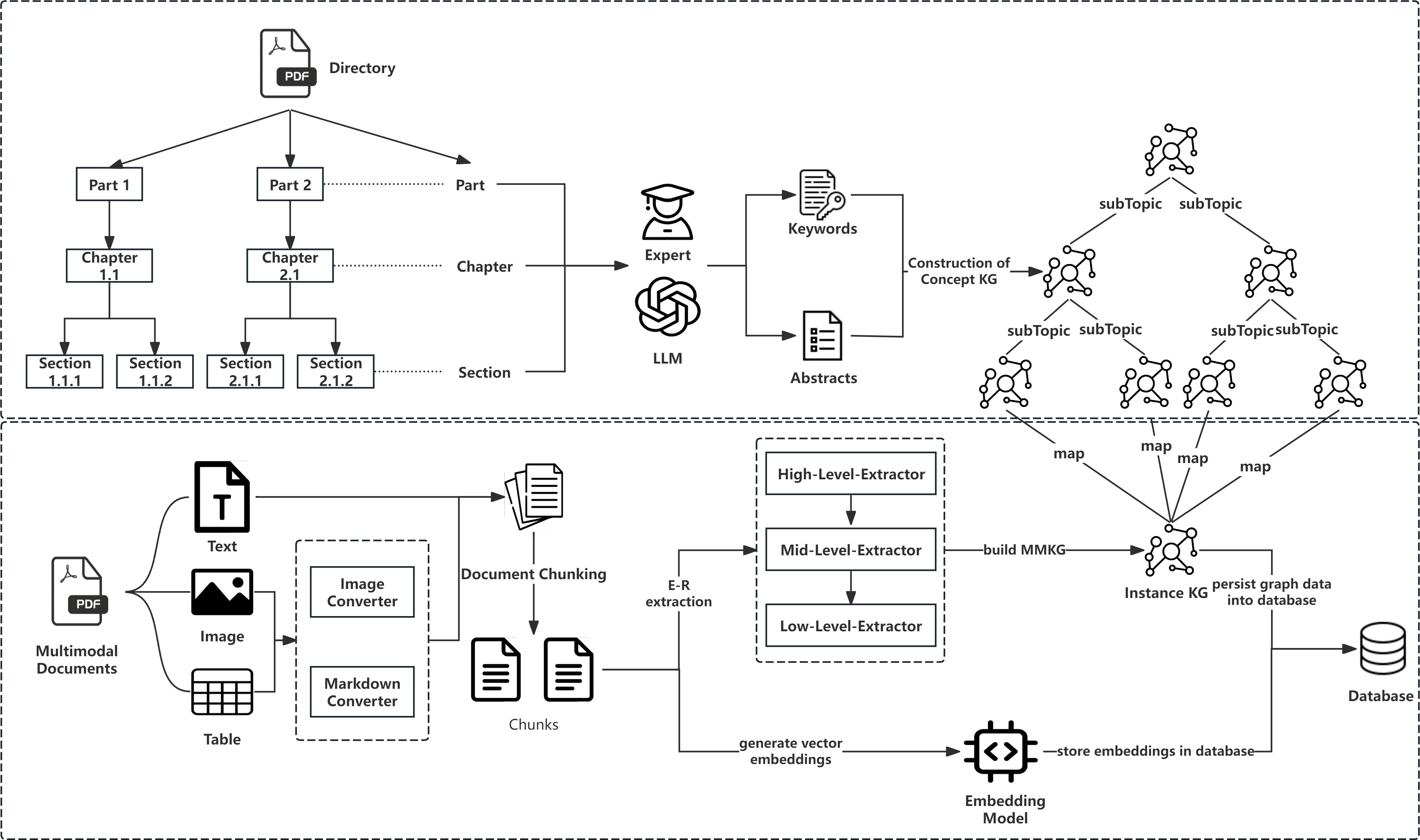

The construction of DSKG involves several stages:

- Data Preprocessing: Utilizes tools for semantic parsing and conversion of documents to a unified format, employing OCR for tables and images.

- Concept KG Construction: Conceptualizes core domain concepts and relationships from the structure of the documents, using expert annotations and LLMs.

- Instance KG Construction: Builds upon the Concept KG framework to extract fine-grained data and relationships, employing a layered extraction architecture for comprehensive modeling.
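The stages above can be sketched in a heavily simplified form. The example assumes the unified format is Markdown with `#` headings, derives the concept hierarchy from heading depth, and attaches each body line to the nearest heading as an instance. This is a stand-in only: the OCR step, the expert annotations, and the LLM-based extraction described above are not modeled, and the function name `build_concept_tree` is hypothetical.

```python
def build_concept_tree(lines):
    """Derive concept parents from heading depth and collect instance text.

    Returns (parents, instances) where `parents` maps each concept to its
    parent concept (or None for top-level concepts) and `instances` is a list
    of (concept, text) pairs.
    """
    parents = {}
    instances = []
    stack = []  # open headings as (depth, name), outermost first

    for raw in lines:
        line = raw.strip()
        if not line:
            continue
        if line.startswith("#"):
            depth = len(line) - len(line.lstrip("#"))
            name = line.lstrip("#").strip()
            # Close headings at the same or deeper level.
            while stack and stack[-1][0] >= depth:
                stack.pop()
            parents[name] = stack[-1][1] if stack else None
            stack.append((depth, name))
        elif stack:
            # Body text becomes an instance of the innermost open concept.
            instances.append((stack[-1][1], line))

    return parents, instances
```

In this sketch the Concept KG comes from document structure and the Instance KG is layered on top of it, mirroring the order of the stages listed above.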

Figure 2: Flow Diagram of DSKG Construction from Original Documents to MMKG.

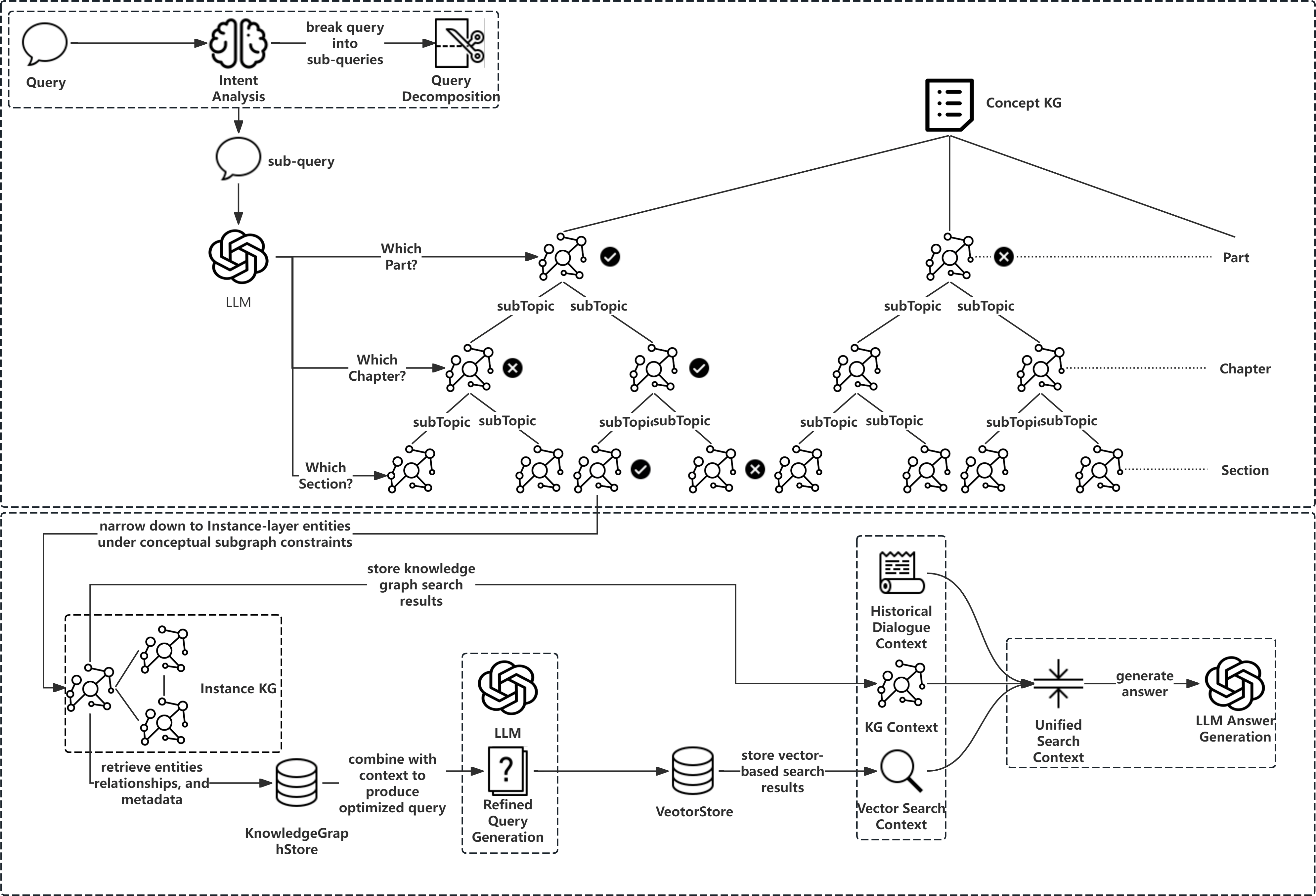

DSKG-Enhanced Retrieval

DSKG optimizes the retrieval process through a dual-stage strategy that combines graph-guided semantic pruning with vector-based search.
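A minimal sketch of a dual-stage retrieval of this kind follows, assuming that pruning runs first and vector search runs over the surviving candidates. The concept matching (exact token match against concept labels), the bag-of-words `embed` function, and the name `dual_stage_retrieve` are all illustrative assumptions; a real system would use a learned embedding model and the graph itself for pruning.

```python
import math
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def dual_stage_retrieve(query, chunks, top_k=2):
    """Retrieve text chunks for `query`.

    `chunks` is a list of (concept_label, text) pairs.
    """
    query_tokens = set(query.lower().split())

    # Stage 1: graph-guided pruning, approximated here by keeping only
    # chunks whose concept label is mentioned in the query.
    matched = {concept for concept, _ in chunks if concept.lower() in query_tokens}
    candidates = [(c, t) for c, t in chunks if c in matched]
    if not candidates:
        candidates = list(chunks)  # no concept matched: search everything

    # Stage 2: vector search over the pruned candidate set.
    query_vec = embed(query)
    ranked = sorted(
        candidates,
        key=lambda pair: cosine(query_vec, embed(pair[1])),
        reverse=True,
    )
    return [text for _, text in ranked[:top_k]]
```

Pruning before ranking keeps chunks from unrelated concepts out of the candidate set even when they share surface vocabulary with the query.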

Experiments and Results

Dataset and Environment

The evaluation used a comprehensive dataset from the database domain, featuring multimodal elements like text and tables. Experiments were conducted on technical questions using domain-specific documentation as knowledge sources.

DSRAG outperformed the baseline models (NaiveRAG, TiDB AutoFlow, and RAGFlow) on faithfulness, answer relevancy, and contextual precision. This result underscores the value of integrating multimodal KGs into RAG frameworks for domain-specific tasks.

Ablation Study

An ablation study confirmed that including both the Concept KG and the Instance KG significantly improves the performance metrics. The complete DSRAG outperformed configurations with limited or no KG integration.

Conclusion

DSRAG adapts retrieval-augmented generation to domain-specific settings by leveraging a multimodal knowledge graph. The framework surpasses traditional RAG models in producing factually consistent, contextually accurate responses. Future research could explore more complex graph structures and intermodal data integration to extend the approach to a broader range of intelligent domain-specific question answering systems.