- The paper presents IGC, a novel module inserted at an early layer of an LLM to perform arithmetic tasks reliably.

- It computes results with non-differentiable tensor operations on the GPU and uses an auxiliary loss to train the part of the module that gradients cannot reach through the calculator.

- The IGC architecture achieves 98-99% accuracy on the BigBench Arithmetic benchmark with minimal parameter overhead and improved interpretability.

Integrating a Gated Calculator into LLMs for Arithmetic Task Solutions

Introduction

The paper presents the Integrated Gated Calculator (IGC), an architecture that adds arithmetic computation capabilities to LLMs. LLMs such as GPT-3 and GPT-4 have historically struggled with arithmetic, especially on multi-digit numbers. Despite advances in NLP, these tasks remain challenging and typically require external tools or multi-step procedures such as Chain of Thought (CoT). The IGC module is integrated directly into the LLM and handles arithmetic without that overhead, achieving significant improvements on arithmetic benchmarks.

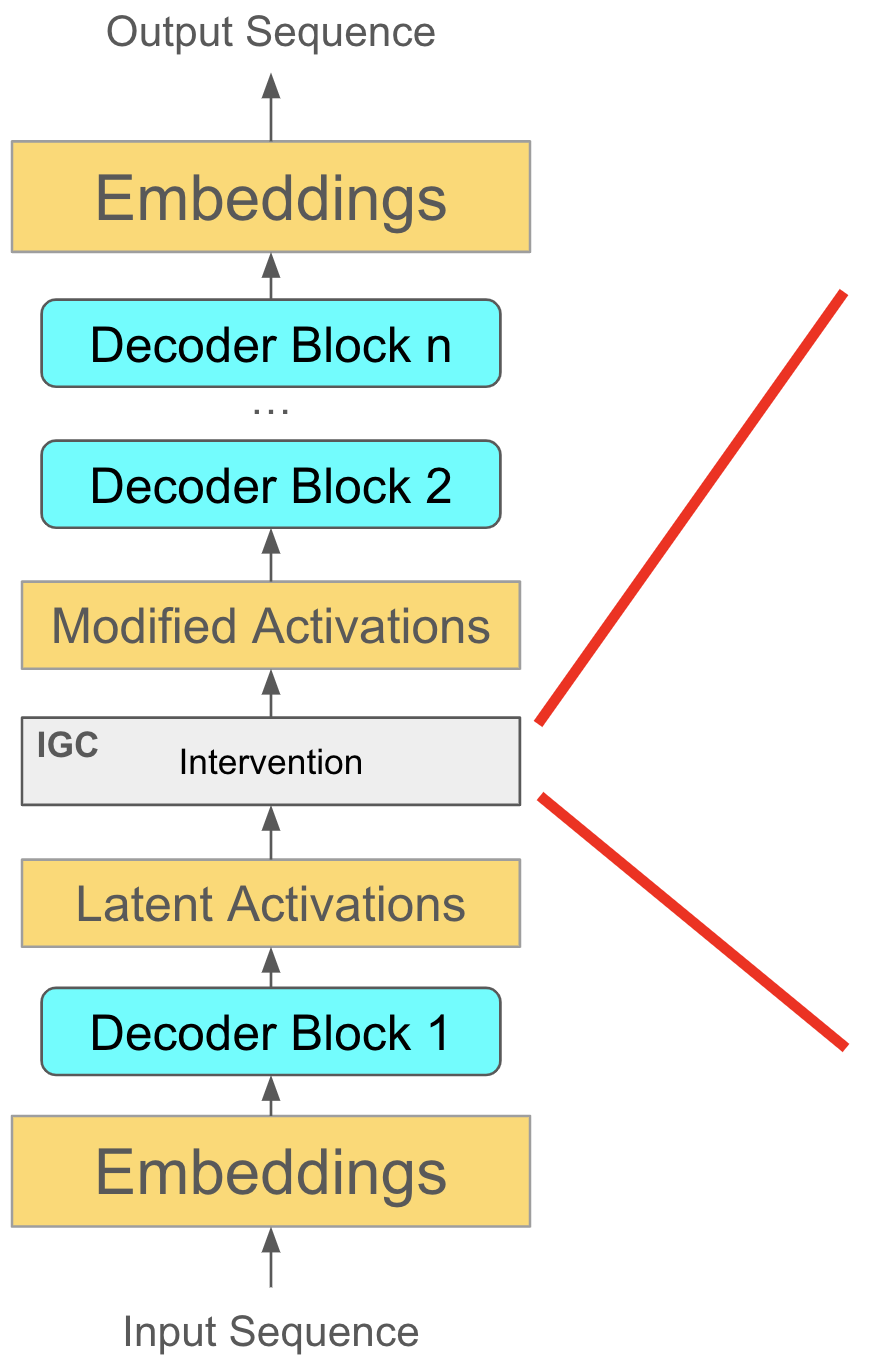

IGC Architecture and Integration

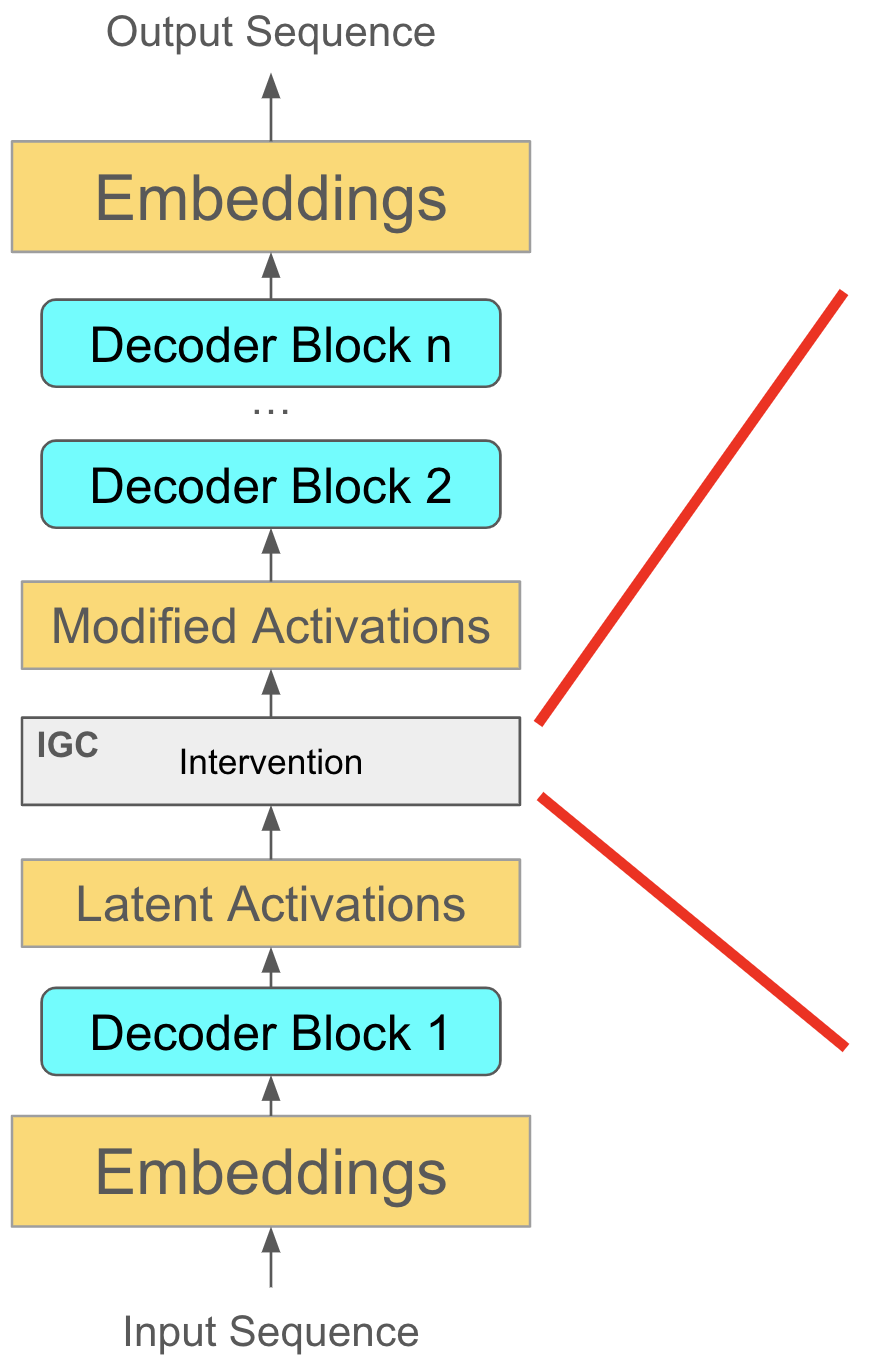

The IGC is inserted into a pretrained LLM at a fixed, early layer (such as layer 1). It modifies intermediate activations to facilitate arithmetic computations by splitting inputs around an anchor token and processing them through a sequence of components.

Figure 1: The IGC architecture illustrating its insertion after a fixed layer in a pretrained LLM and its operation during training.
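The split around the anchor token can be pictured with a minimal sketch. The function name `split_around_anchor`, the toy activations, and the choice to keep the anchor on the left-hand side are all assumptions made for illustration; the summary does not specify how the anchor is chosen or which side it belongs to.

```python
from typing import List, Sequence, Tuple


def split_around_anchor(
    activations: Sequence[List[float]], anchor_index: int
) -> Tuple[List[List[float]], List[List[float]]]:
    """Split per-token activations at the anchor.

    Returns (context, continuation): the tokens up to and including the
    anchor, and the tokens after it. Keeping the anchor on the left side
    is an assumption of this sketch.
    """
    if not 0 <= anchor_index < len(activations):
        raise IndexError("anchor_index is outside the sequence")
    context = [list(v) for v in activations[: anchor_index + 1]]
    continuation = [list(v) for v in activations[anchor_index + 1:]]
    return context, continuation


# Toy example: five tokens with 2-dimensional activations, anchor at index 3.
toy_activations = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6], [0.7, 0.8], [0.9, 1.0]]
context, continuation = split_around_anchor(toy_activations, anchor_index=3)
print(len(context), len(continuation))  # 4 1
```

In this picture, the context side would feed the Input Mapping and the continuation side is where the Output Mapping writes its result.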

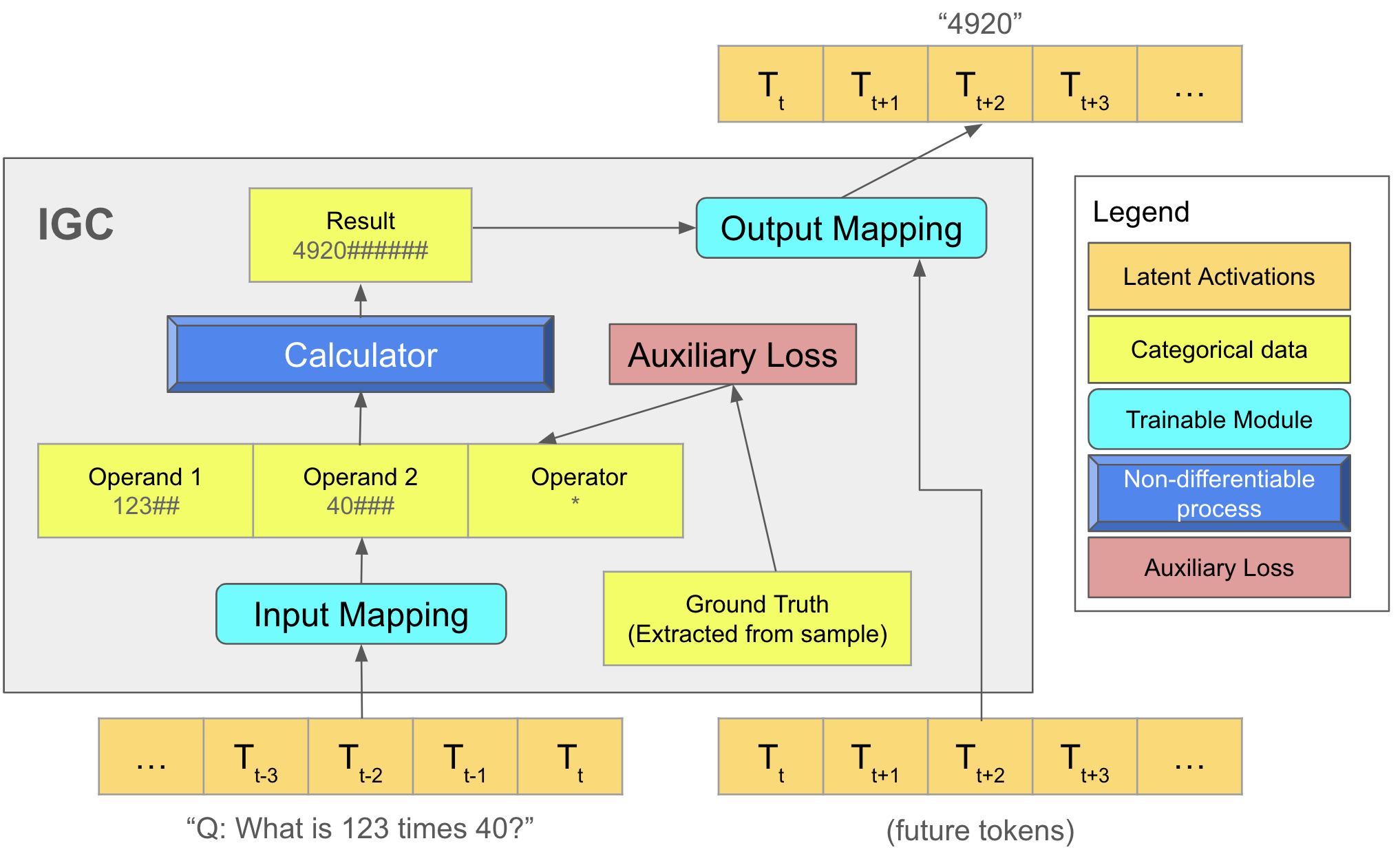

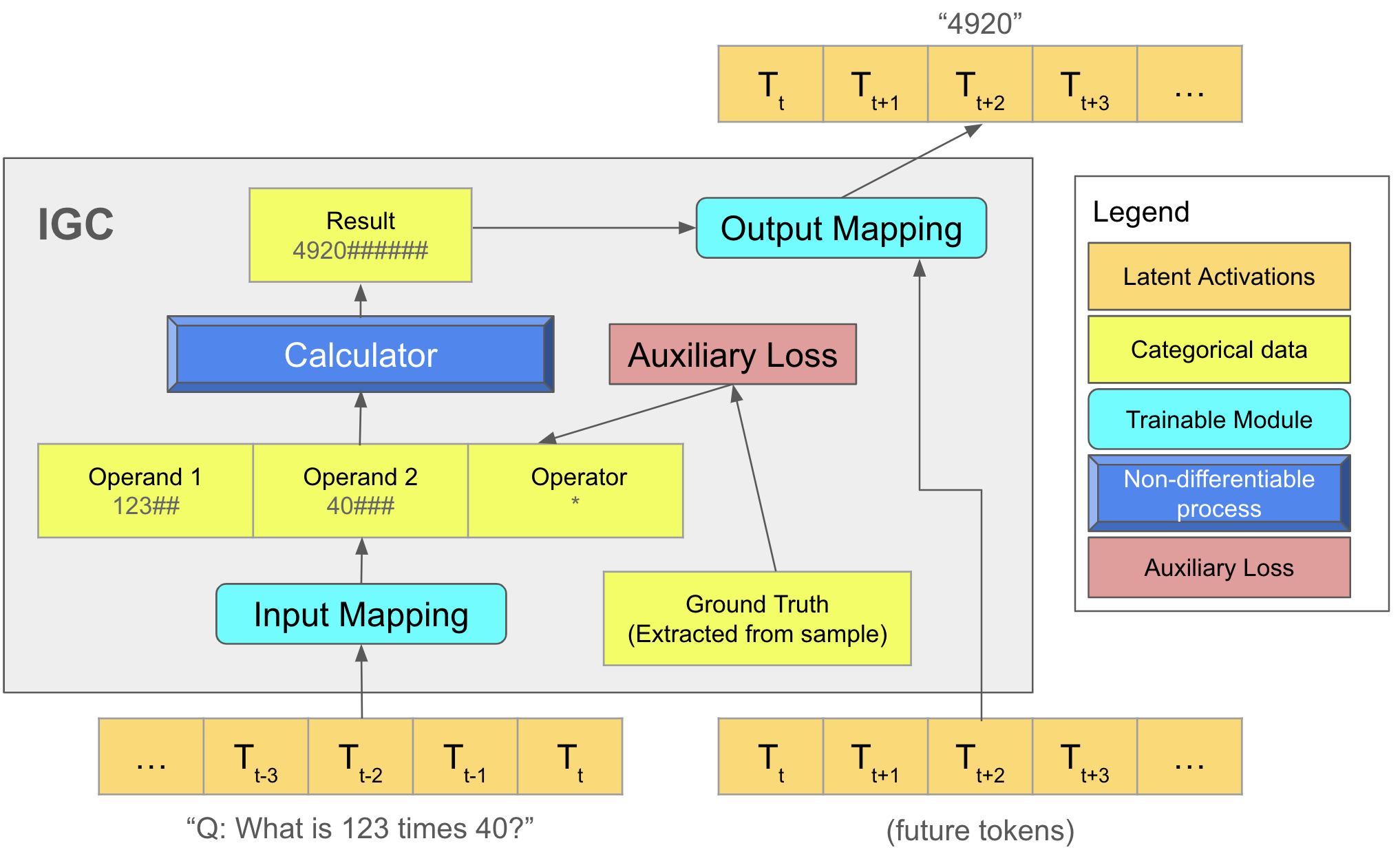

The module comprises three main components:

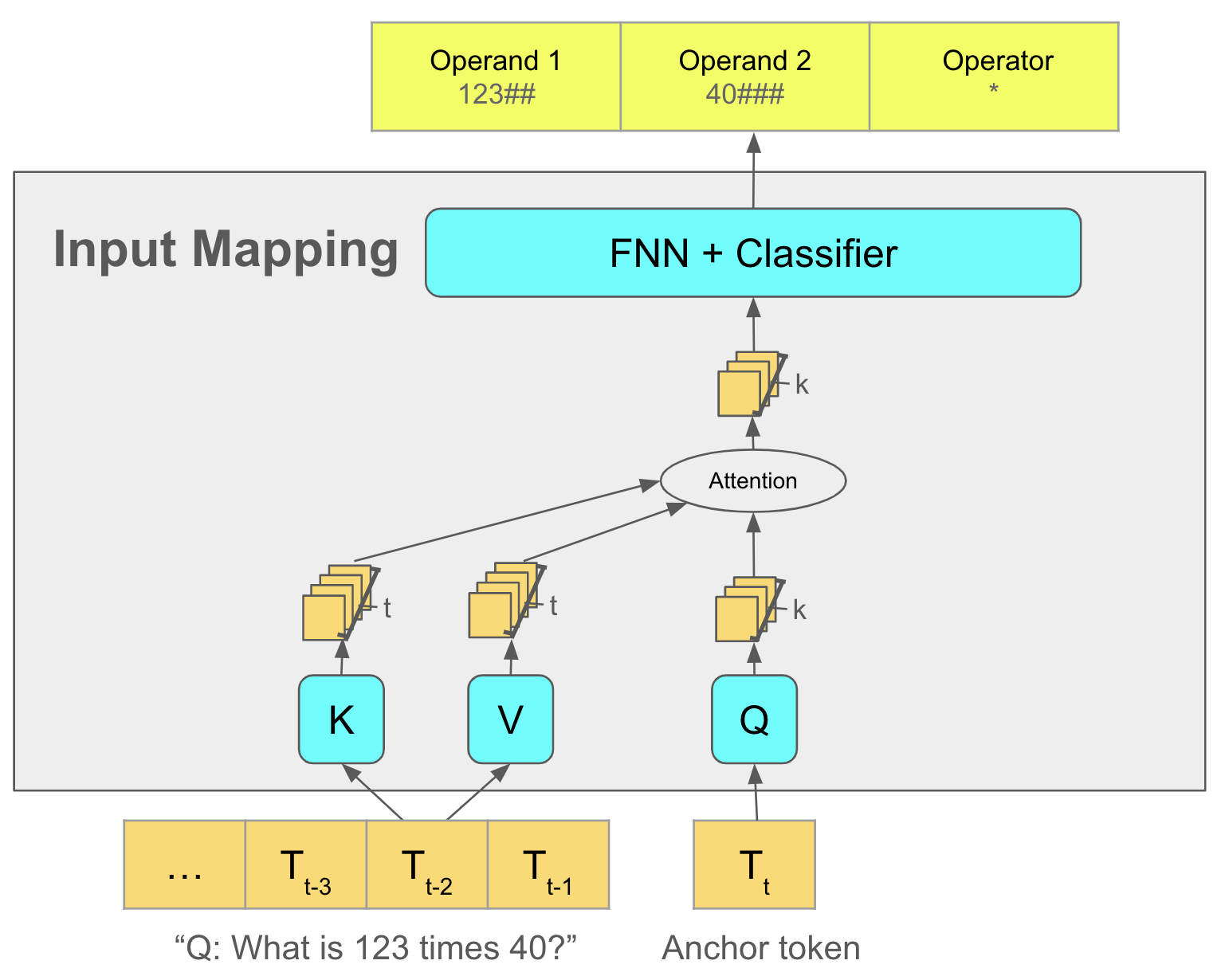

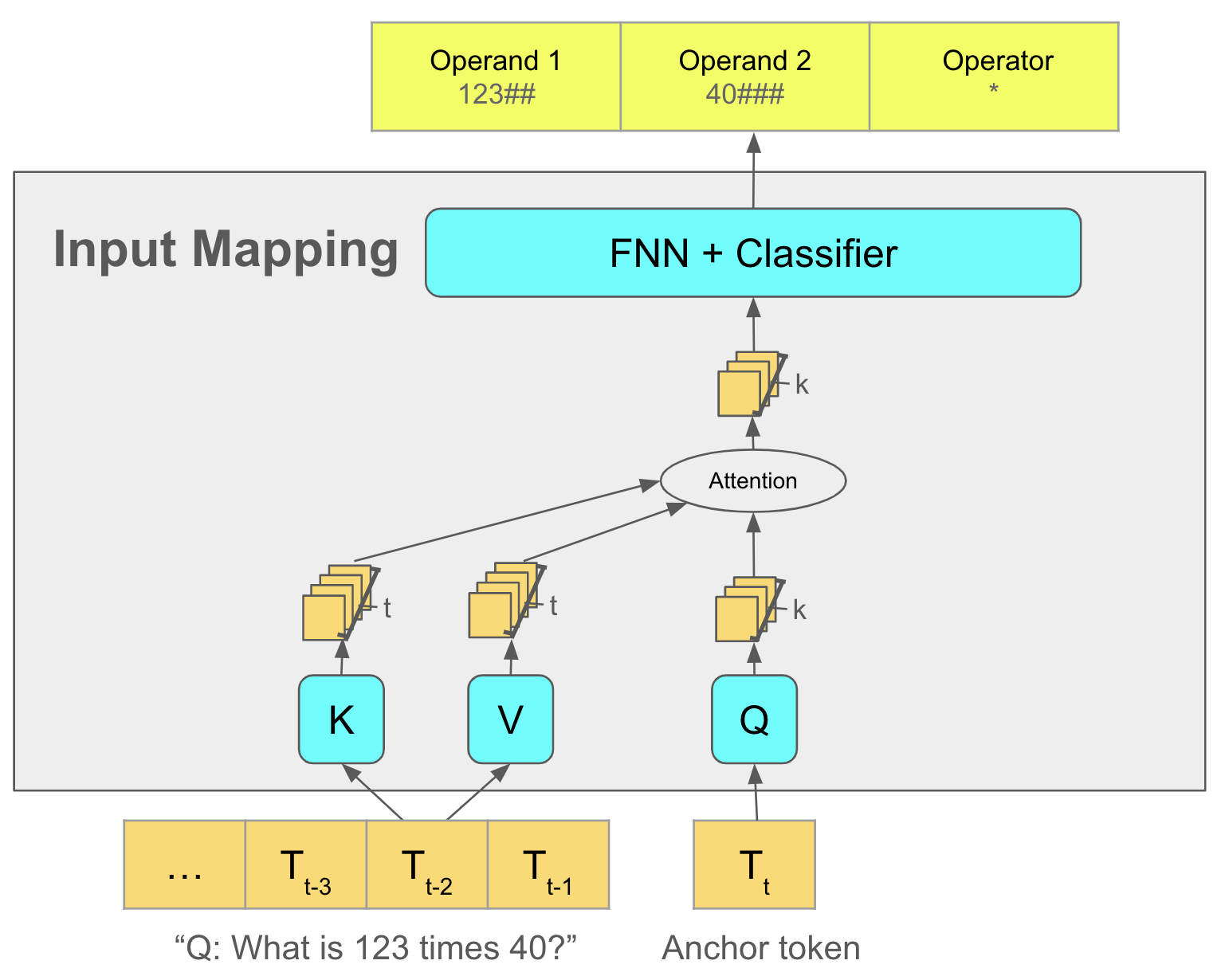

- Input Mapping Submodule: Extracts numbers and operators from variable-length token embeddings and formats them into fixed-length categorical data.

- Calculator: Operates on the GPU, performing arithmetic operations using non-differentiable tensor operations. It emulates arithmetic directly, bypassing token generation during calculations.

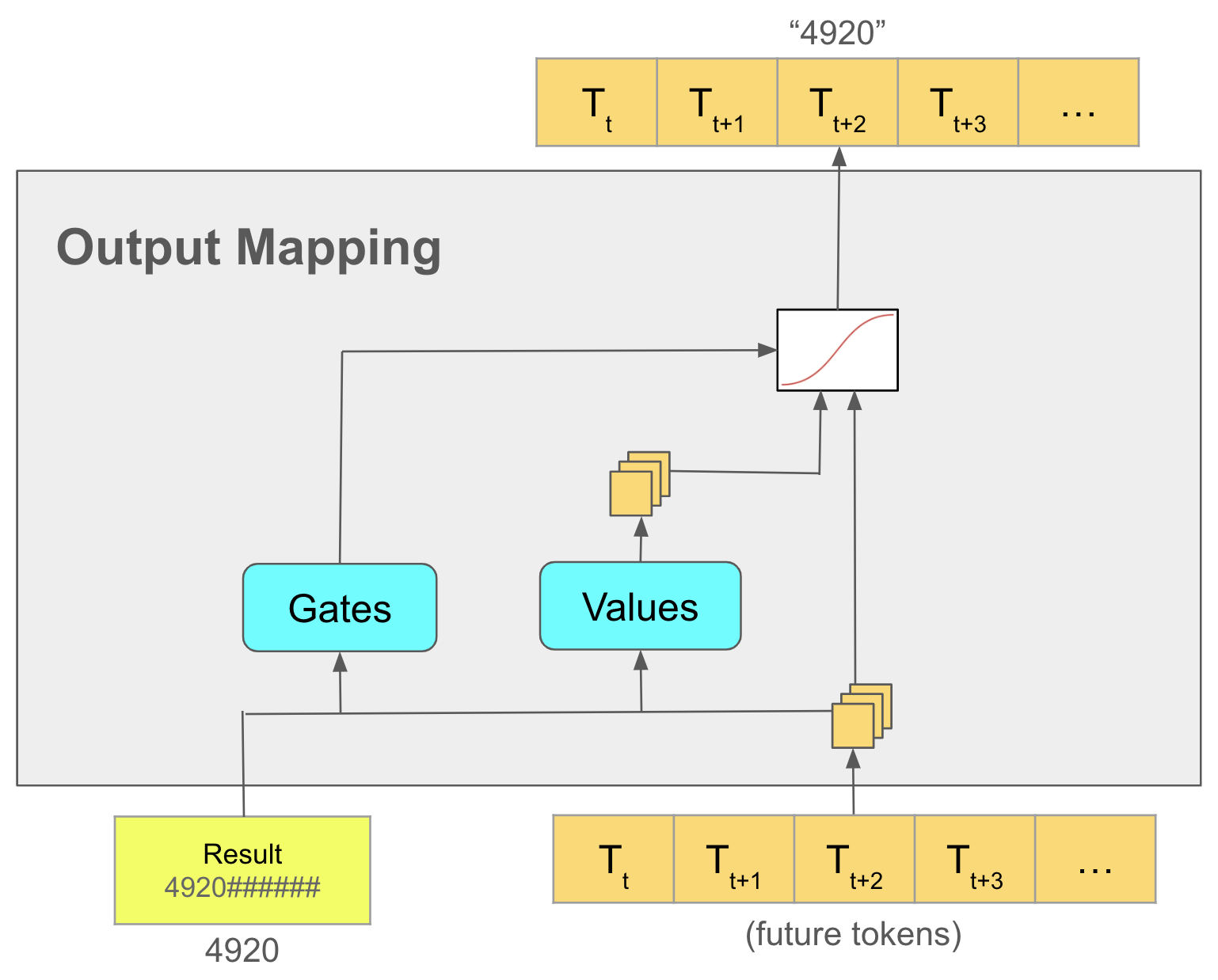

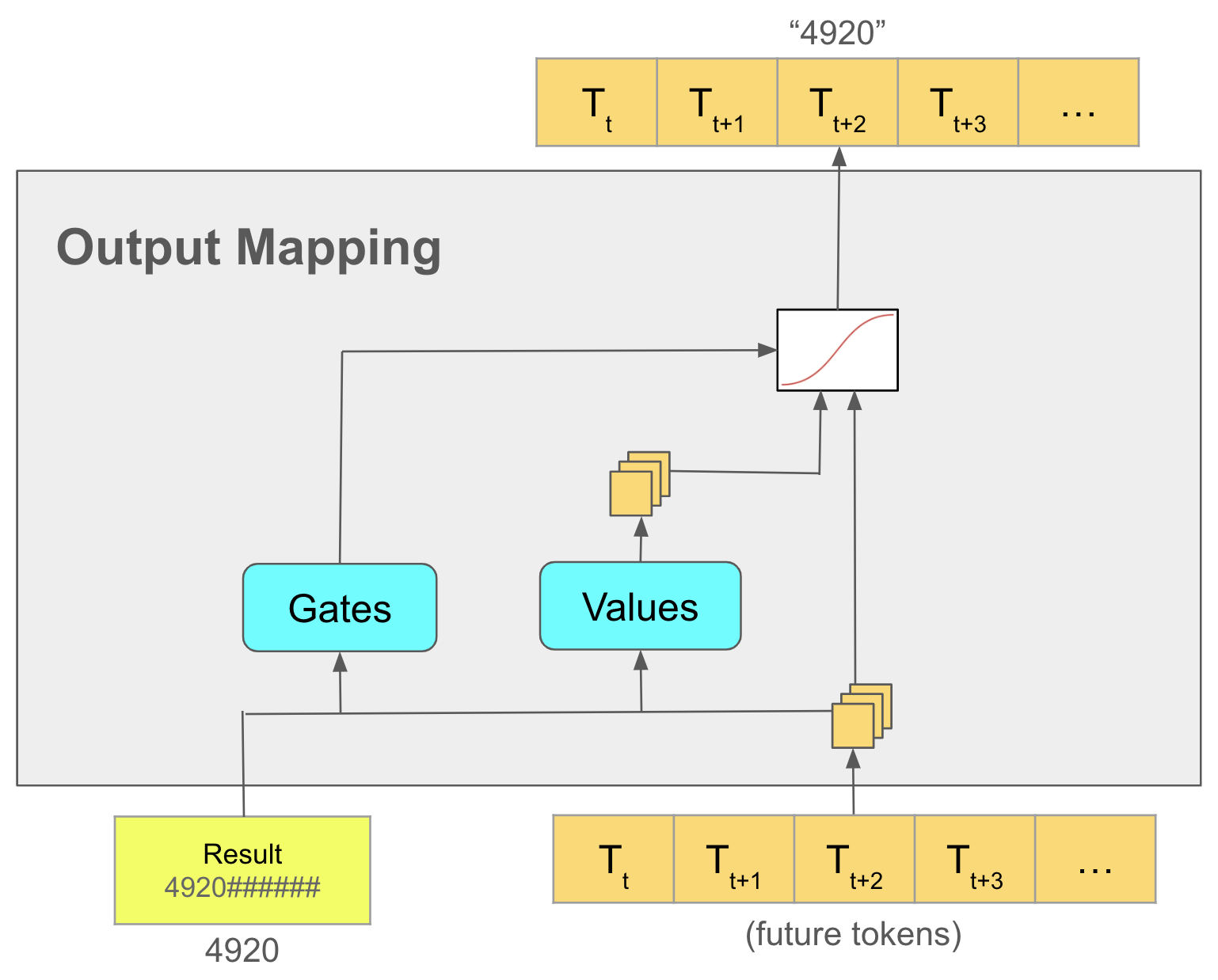

- Output Mapping Submodule: Modifies output tokens using the calculated results, gated by learned weights to adaptively filter changes, preventing interference in non-arithmetic contexts.

Figure 2: Detailed function of the Input and Output Mapping submodules within the IGC architecture.
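The three components listed above can be sketched end to end in a few lines. This is an illustration under stated assumptions, not the paper's implementation: plain Python lists stand in for GPU tensors, and the operand width `NUM_DIGITS`, the operator class indices, and the additive gate `hidden + gate * update` are all hypothetical choices.

```python
from typing import Callable, Dict, List, Tuple

NUM_DIGITS = 6  # hypothetical fixed operand width

# Hypothetical operator classes produced by the Input Mapping.
OPERATORS: Dict[int, Callable[[int, int], int]] = {
    0: lambda a, b: a + b,
    1: lambda a, b: a - b,
    2: lambda a, b: a * b,
}


def to_digits(value: int, width: int) -> List[int]:
    """Encode a non-negative integer as `width` digit classes, least significant first."""
    if value < 0 or value >= 10 ** width:
        raise ValueError("value does not fit in the fixed width")
    return [(value // 10 ** i) % 10 for i in range(width)]


def from_digits(digits: List[int]) -> int:
    """Decode digit classes (least significant first) back to an integer."""
    return sum(d * 10 ** i for i, d in enumerate(digits))


def calculator(a_digits: List[int], op_class: int, b_digits: List[int]) -> Tuple[int, List[int]]:
    """Discrete, non-differentiable step: exact arithmetic on categorical inputs.

    Returns a sign (+1 or -1) and a fixed-length digit encoding of the magnitude.
    """
    result = OPERATORS[op_class](from_digits(a_digits), from_digits(b_digits))
    sign = -1 if result < 0 else 1
    # 2 * NUM_DIGITS slots are enough for the product of two NUM_DIGITS-digit numbers.
    return sign, to_digits(abs(result), 2 * NUM_DIGITS)


def gated_output(hidden: List[float], update: List[float], gate: float) -> List[float]:
    """Blend an update into an activation; a gate near 0 leaves it unchanged."""
    return [h + gate * u for h, u in zip(hidden, update)]


# Input Mapping output (assumed): "123 * 45" as fixed-length categorical data.
a = to_digits(123, NUM_DIGITS)
b = to_digits(45, NUM_DIGITS)
sign, digits = calculator(a, 2, b)
print(sign, from_digits(digits))  # 1 5535

# With the gate closed, a non-arithmetic context passes through untouched.
print(gated_output([1.0, 2.0], [0.5, -0.5], gate=0.0))  # [1.0, 2.0]
```

The fixed-length encoding is what lets the calculator run as a single batched operation regardless of how many tokens the operands originally occupied.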

Methodology

Because the Calculator is non-differentiable, gradients cannot flow through it, so the IGC requires a dedicated training strategy:

- Training Setup: An auxiliary loss trains the Input Mapping directly, since no gradient can reach it through the non-differentiable Calculator. The loss is computed against predefined ground-truth annotations for the arithmetic tasks involved.

- Discrete Execution: The calculation is executed once, only after the entire arithmetic context is available, which improves learning efficiency and avoids redundant computation.
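One common way to realize an auxiliary loss of the kind described above is a cross-entropy between the Input Mapping's predicted class scores and the annotated classes, averaged over the fixed-length slots. The sketch below assumes that form; the function names, the slot layout, and the ten-class digit encoding are hypothetical, and the paper's actual loss may differ.

```python
import math
from typing import List


def log_softmax(logits: List[float]) -> List[float]:
    """Numerically stable log-softmax over one slot's class scores."""
    m = max(logits)
    log_total = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_total for x in logits]


def auxiliary_loss(slot_logits: List[List[float]], target_classes: List[int]) -> float:
    """Mean cross-entropy between predicted slot classes and annotated classes.

    slot_logits[i] holds the scores for slot i (for example, ten digit classes);
    target_classes[i] is the ground-truth class index for that slot.
    """
    if len(slot_logits) != len(target_classes):
        raise ValueError("one target class is required per slot")
    losses = [-log_softmax(logits)[t] for logits, t in zip(slot_logits, target_classes)]
    return sum(losses) / len(losses)


# An undecided slot (uniform scores over 10 classes) costs log(10).
uniform = auxiliary_loss([[0.0] * 10], [3])

# A slot that strongly favors the annotated class costs much less.
confident_logits = [0.0] * 10
confident_logits[3] = 8.0
confident = auxiliary_loss([confident_logits], [3])

print(confident < uniform)  # True
```

Because this loss is applied to the Input Mapping's output, it supplies a training signal that does not depend on differentiating through the Calculator.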

Experimental Evaluation

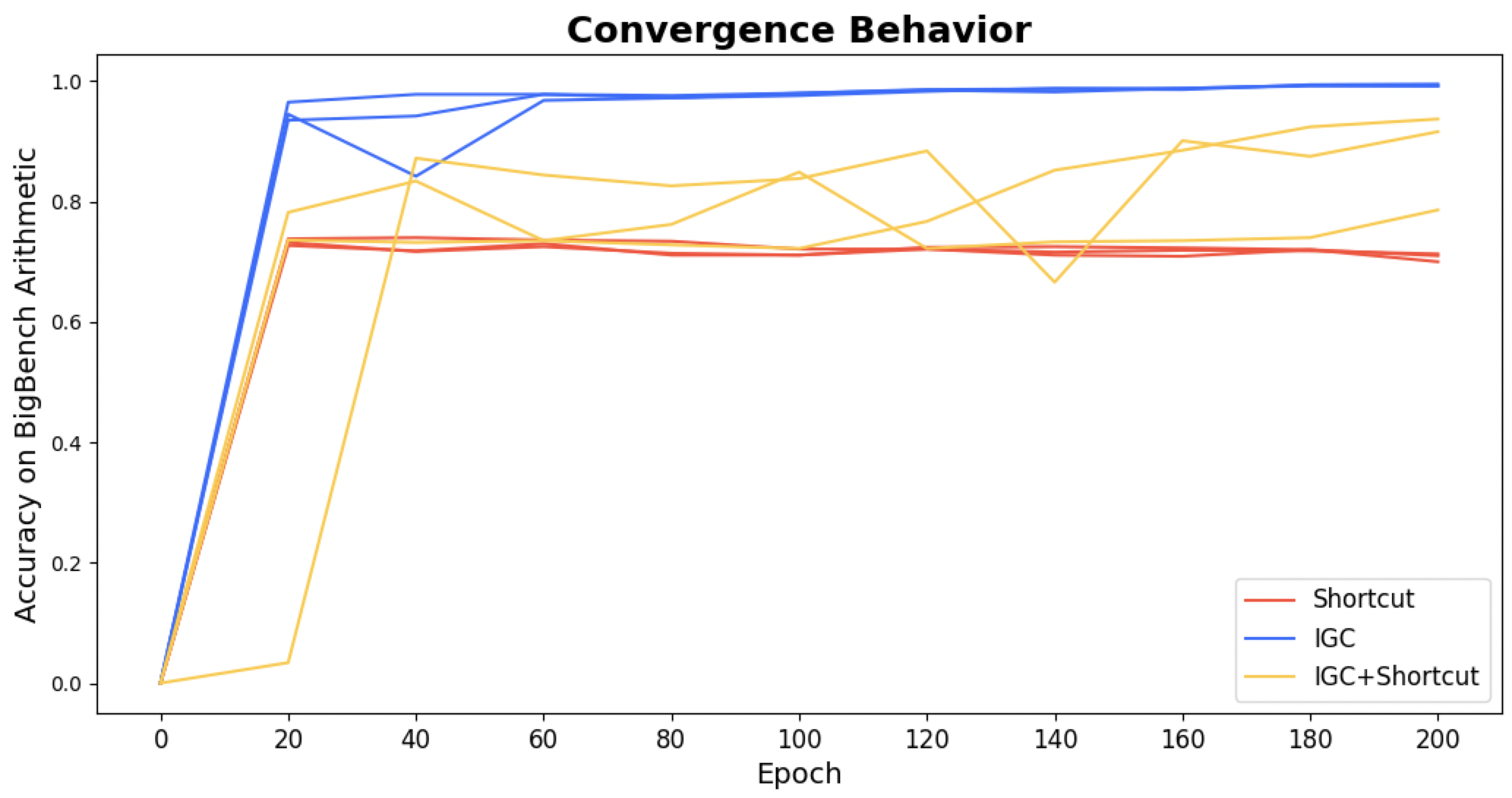

The IGC was tested on the BigBench Arithmetic benchmark, where it outperformed contemporary models. Using a finetuned LLaMA model as a base, the IGC achieved near-perfect accuracy, outperforming significantly larger models such as PaLM 540B.

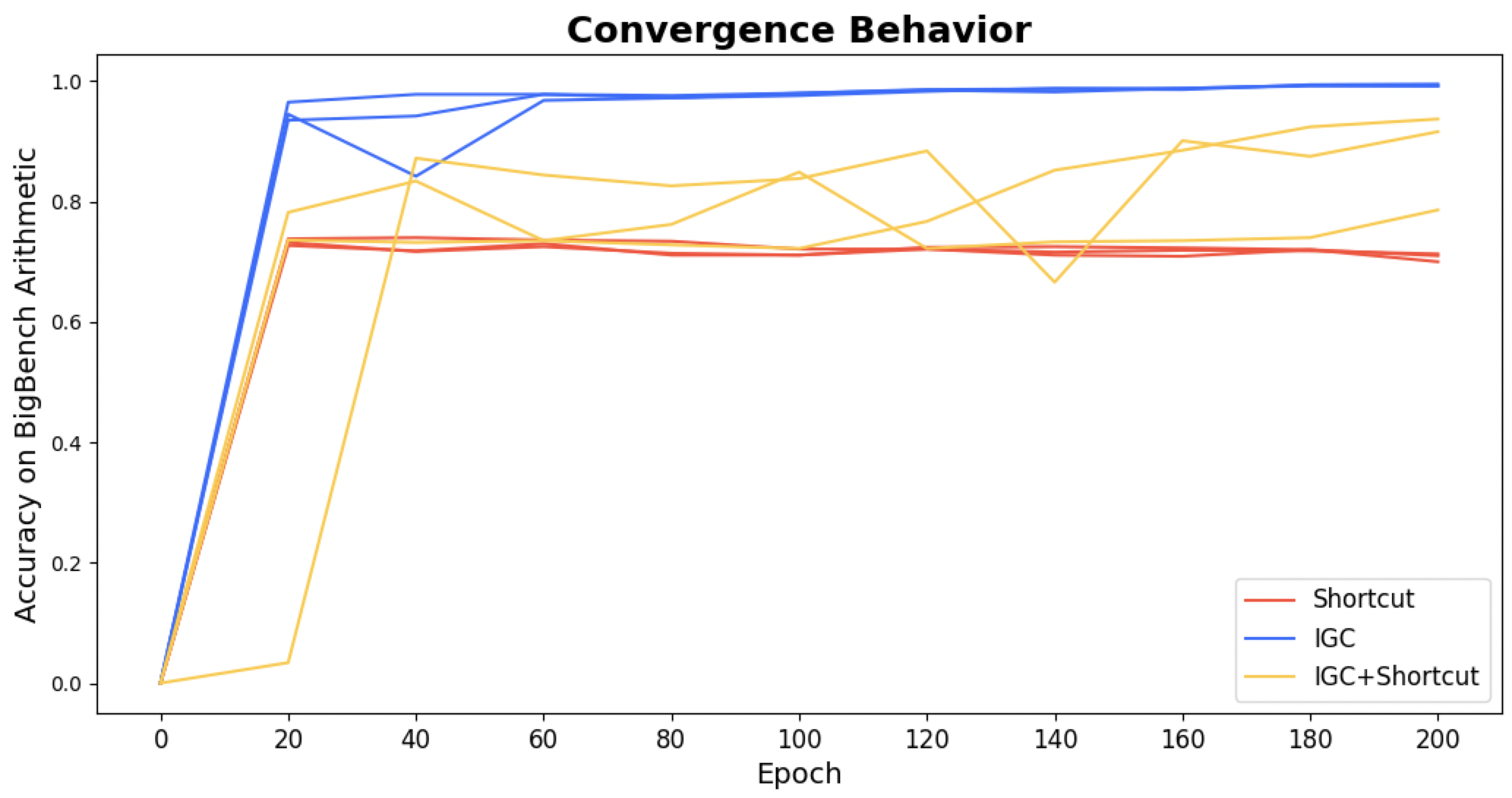

Figure 3: Performance of various architectures on the BigBench Arithmetic benchmark, highlighting IGC's superior training convergence and consistency.

- Performance Metrics: Achieved 98-99% accuracy across various arithmetic operations, with multiplication tasks demonstrating notable improvements over existing models.

- Model Efficiency: The IGC adds only 17 million parameters to an 8-billion-parameter base model (about 0.2%) and is efficient to train, converging in a few days on a minimal dataset.

Comparison with Existing Methods

IGC stands out for its:

- Capability: Handles complex arithmetic efficiently within the LLM, avoiding both the CPU-GPU communication required by external tools and the intermediate token generation required by CoT.

- Integration and Interpretability: Offers internal processing, preserving the LLM’s framework integrity while providing explicit intermediary representations for diagnostic purposes.
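To illustrate what an explicit intermediate representation offers for diagnostics, the sketch below decodes a fixed-length categorical extraction back into a readable expression. The digit layout (least significant first), the operator class indices, and the helper name `describe_extraction` are assumptions made for this example.

```python
from typing import Dict, List

# Hypothetical operator class indices.
OPERATOR_SYMBOLS: Dict[int, str] = {0: "+", 1: "-", 2: "*"}


def describe_extraction(a_digits: List[int], op_class: int, b_digits: List[int]) -> str:
    """Render the extracted operands and operator as a human-readable expression."""

    def as_int(digits: List[int]) -> int:
        # Digits are stored least significant first.
        return sum(d * 10 ** i for i, d in enumerate(digits))

    return f"{as_int(a_digits)} {OPERATOR_SYMBOLS[op_class]} {as_int(b_digits)}"


print(describe_extraction([3, 2, 1, 0], 2, [5, 4, 0, 0]))  # 123 * 45
```

If a final answer is wrong, comparing a readout like this against the prompt would show whether the error arose while extracting the operands or later, when the result is written back.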

Future Directions and Limitations

- Pretraining Potential: IGC could be incorporated directly during LLM pretraining, allowing the model to learn arithmetic as a foundational capability rather than a post-hoc addition.

- Task Generalization: Beyond arithmetic, the IGC architecture conceptually extends to other discrete tasks, such as database lookups or operand-specific logic operations, positioning it as a versatile LLM enhancement tool.

Conclusion

The IGC module shows that exact arithmetic can be integrated directly into an LLM, with gains in both accuracy and efficiency. It lays a foundation for LLMs that support arithmetic natively, reducing their dependence on external computational aids. More broadly, its architecture suggests that other non-differentiable computations could be embedded in LLMs in the same way.