- The paper investigates transitioning from centralized to federated learning to mitigate privacy risks in educational mental health research.

- It details how decentralized model training enables secure analysis of mental health indicators without compromising sensitive student data.

- The study outlines a roadmap combining short-term implementations and long-term innovations to advance AI-driven mental health solutions in academia.

The Transition from Centralized Machine Learning to Federated Learning for Mental Health in Education: A Survey of Current Methods and Future Directions

Integrating artificial intelligence (AI) and machine learning (ML) into mental health support in education offers opportunities to improve student well-being while addressing the privacy challenges posed by conventional centralized learning frameworks. This paper presents federated learning (FL) as a paradigm that reduces privacy risks by enabling decentralized model training in which data remains local. It surveys existing methodologies, identifies current gaps in applying FL, and proposes a roadmap for effective, privacy-conscious AI-driven mental health solutions in educational settings.

Overview of Mental Health Issues in Education

Mental health issues, such as anxiety, stress, depression, attention-deficit/hyperactivity disorder (ADHD), and substance use disorders (SUD), significantly impact students' academic performance and overall well-being. The paper highlights the importance of early detection and intervention in mitigating these challenges. It also emphasizes the complexity of mental health assessments, which traditionally rely on subjective experiences rather than objective measures. Diverse analytical methods, including qualitative, quantitative, and mixed approaches, are employed to gain insights into mental health conditions, with quantitative methods playing a pivotal role in statistical analysis and trend identification.

Machine Learning Applications in Mental Health

AI/ML has been successfully deployed across various aspects of mental health in the educational sector. These technologies facilitate a range of tasks, including diagnosing mental health conditions, providing personalized treatment plans, predicting academic performance based on mental health metrics, and enabling automated systems such as chatbots for immediate student support. Despite the capabilities of centralized ML frameworks, privacy concerns persist due to the need for aggregating sensitive data on centralized servers.

Federated Learning: A Solution to Privacy Concerns

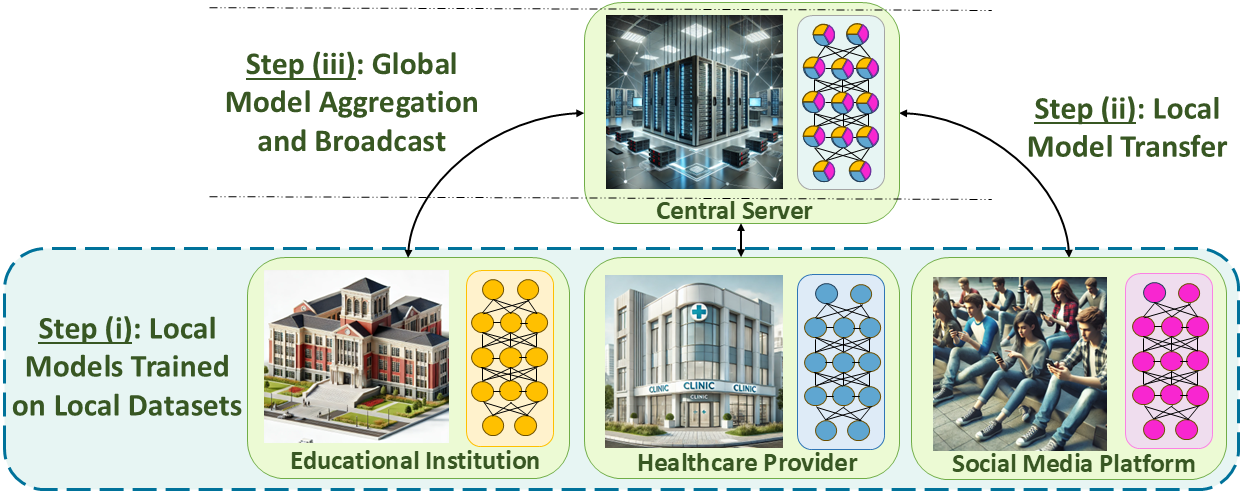

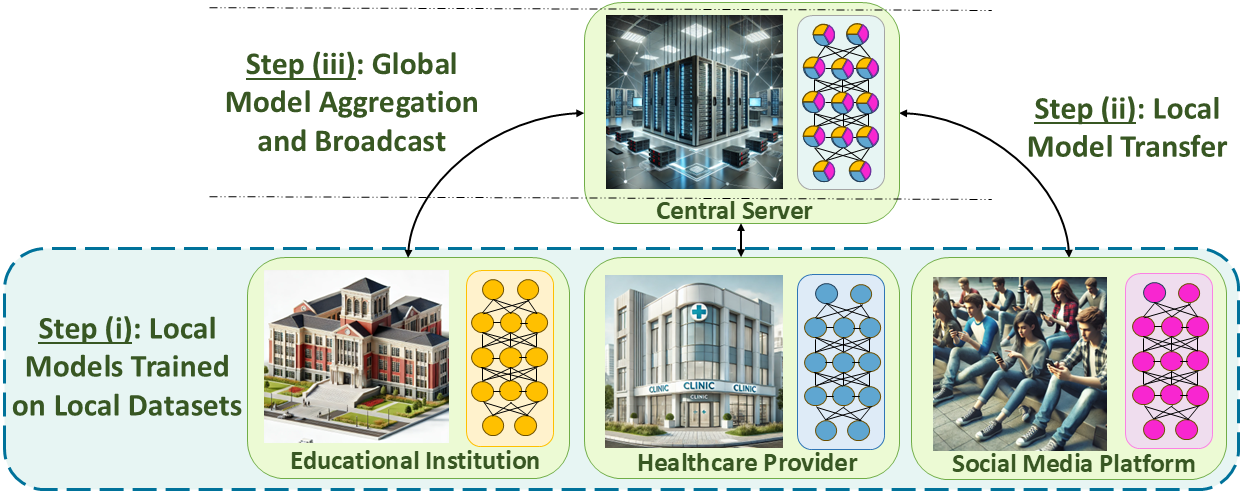

FL addresses privacy issues in conventional ML by keeping raw data at individual institutions instead of transferring it to a central server. Each institution trains a model locally, and the local models are then aggregated into a global one, so that only model updates, not raw records, are shared (Figure 1).

Figure 1: A schematic of FL model training architecture.
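The train-locally-then-aggregate loop described above can be illustrated with a minimal sketch. It assumes a sample-weighted average of client models (the widely used FedAvg rule) and a toy one-feature linear model. The summarized paper does not prescribe this particular aggregation rule, and the names `local_train`, `fed_avg`, and `run_rounds` are hypothetical, introduced here only for illustration.

```python
from typing import List, Tuple

Weights = List[float]                 # [slope, intercept] of a toy linear model
Dataset = List[Tuple[float, float]]   # (feature, target) pairs held by one institution


def local_train(global_w: Weights, data: Dataset,
                lr: float = 0.05, epochs: int = 20) -> Weights:
    """Run a few gradient-descent steps on one client's private data."""
    w, b = global_w
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error for y ≈ w * x + b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


def fed_avg(client_weights: List[Weights], client_sizes: List[int]) -> Weights:
    """Aggregate client models, weighting each by its number of samples."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(cw[i] * n for cw, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def run_rounds(clients: List[Dataset], rounds: int = 50) -> Weights:
    """Server loop: broadcast the global model, collect local models, average."""
    global_w = [0.0, 0.0]
    for _ in range(rounds):
        # Only model parameters leave each client; raw data stays local.
        updates = [local_train(global_w, data) for data in clients]
        global_w = fed_avg(updates, [len(data) for data in clients])
    return global_w
```

In this sketch the server only ever sees the two model parameters from each client. A real deployment would add secure communication, client sampling, and a far richer model.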

Although the application of FL in mental health research is nascent, recent studies demonstrate its potential to deliver privacy-preserving assessments for stress, anxiety, and depression. The paper summarizes these studies, highlighting their success while also identifying the limited scope of existing implementations, particularly within educational contexts.

Proposed Research Directions

To unlock the full potential of FL in mental health within education, the paper outlines an extensive roadmap, categorized into short- and long-term strategies:

Short-Term Vision

- Conventional FL Applications: Leverage decentralized datasets for stress detection and mental health diagnoses; tackle issues like data heterogeneity.

- Heterogeneous Dataset Integration: Develop methods that enable seamless integration of FL across datasets with varying feature spaces.

Long-Term Vision

- Vertical FL (VFL): Facilitate privacy-conscious, multi-domain data integration, such as educational and healthcare data, to enhance mental health analysis.

- FL with Complementary Learning: Exploit complementary server-side learning to enrich model training by combining centralized and decentralized datasets.

- Personalized and Multi-Task FL: Develop personalized FL models that account for the unique attributes of minority populations and support multi-task learning across diverse institutions.

- Integration of Large Language Models (LLMs): Deploy LLMs within FL frameworks as virtual counselors for personalized mental health support, considering ethical implications and the need for expert supervision.

- Explainable AI (XAI): Enhance model transparency through XAI within FL. Address challenges like feature complexity and privacy concerns.

- Multi-Modal Federated Learning (MMFL): Address the integration challenges of multi-modal data, ensuring efficient, privacy-conscious training.

- Federated Unlearning: Implement mechanisms for data removal to comply with privacy regulations like GDPR while preserving model utility.

- Security and Privacy Enhancements: Explore differential privacy and cryptographic methods for robust data protection.

- Alternative FL Architectures: Investigate decentralized and hierarchical FL architectures to overcome limitations of the central-server design, such as single point of failure (SPoF) vulnerabilities.

- FL under Data/Concept Drift: Study adaptive learning algorithms to ensure model robustness in dynamic environments with changing data distributions.
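As one illustration of the differential privacy direction listed above, the sketch below clips a client's model update to a maximum L2 norm and adds Gaussian noise before it is sent to the server. This is a common pattern in differentially private FL, not a method taken from the summarized paper. The function names and the `max_norm` and `noise_multiplier` parameters are hypothetical, and the privacy guarantee actually obtained depends on privacy accounting that is not shown here.

```python
import math
import random
from typing import List, Optional


def clip_update(update: List[float], max_norm: float) -> List[float]:
    """Scale the update down so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(v * v for v in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in update]


def privatize_update(update: List[float], max_norm: float,
                     noise_multiplier: float,
                     rng: Optional[random.Random] = None) -> List[float]:
    """Clip the update, then add Gaussian noise to each coordinate.

    The noise standard deviation is noise_multiplier * max_norm, so the
    noise is calibrated to the largest possible clipped contribution.
    """
    rng = rng or random.Random()
    clipped = clip_update(update, max_norm)
    sigma = noise_multiplier * max_norm
    return [v + rng.gauss(0.0, sigma) for v in clipped]
```

Clipping bounds how much any single client can influence the aggregate, and the noise masks individual contributions. Larger values of `noise_multiplier` strengthen privacy at the cost of model accuracy.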

Conclusion

The transition from centralized to federated learning in mental health research for educational settings offers significant advancements in AI-driven methodologies, emphasizing both privacy preservation and model efficiency. By comprehensively reviewing existing approaches and projecting future research directions, the paper establishes a foundation for the continued exploration of FL, ensuring it addresses the nuanced needs of the education sector. As FL continues to evolve, it presents opportunities for enhancing mental health outcomes through collaborative, privacy-conscious frameworks tailored to the complexities of educational and human-centered domains.