- The paper details testing practices in research software, identifying key challenges and diverse methods employed by developers.

- It emphasizes the contrast between systematic and ad-hoc approaches, with unit and regression testing emerging as predominant strategies.

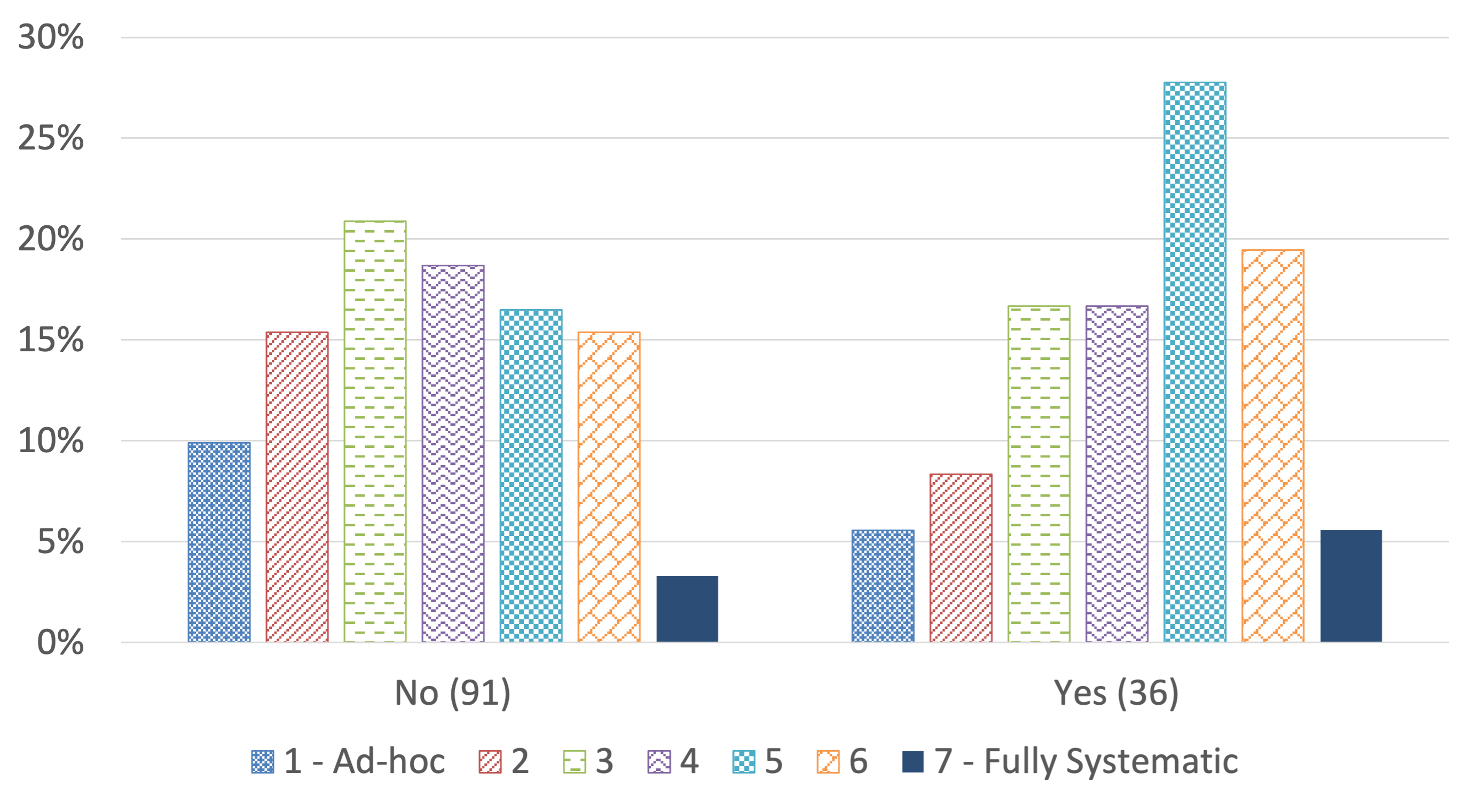

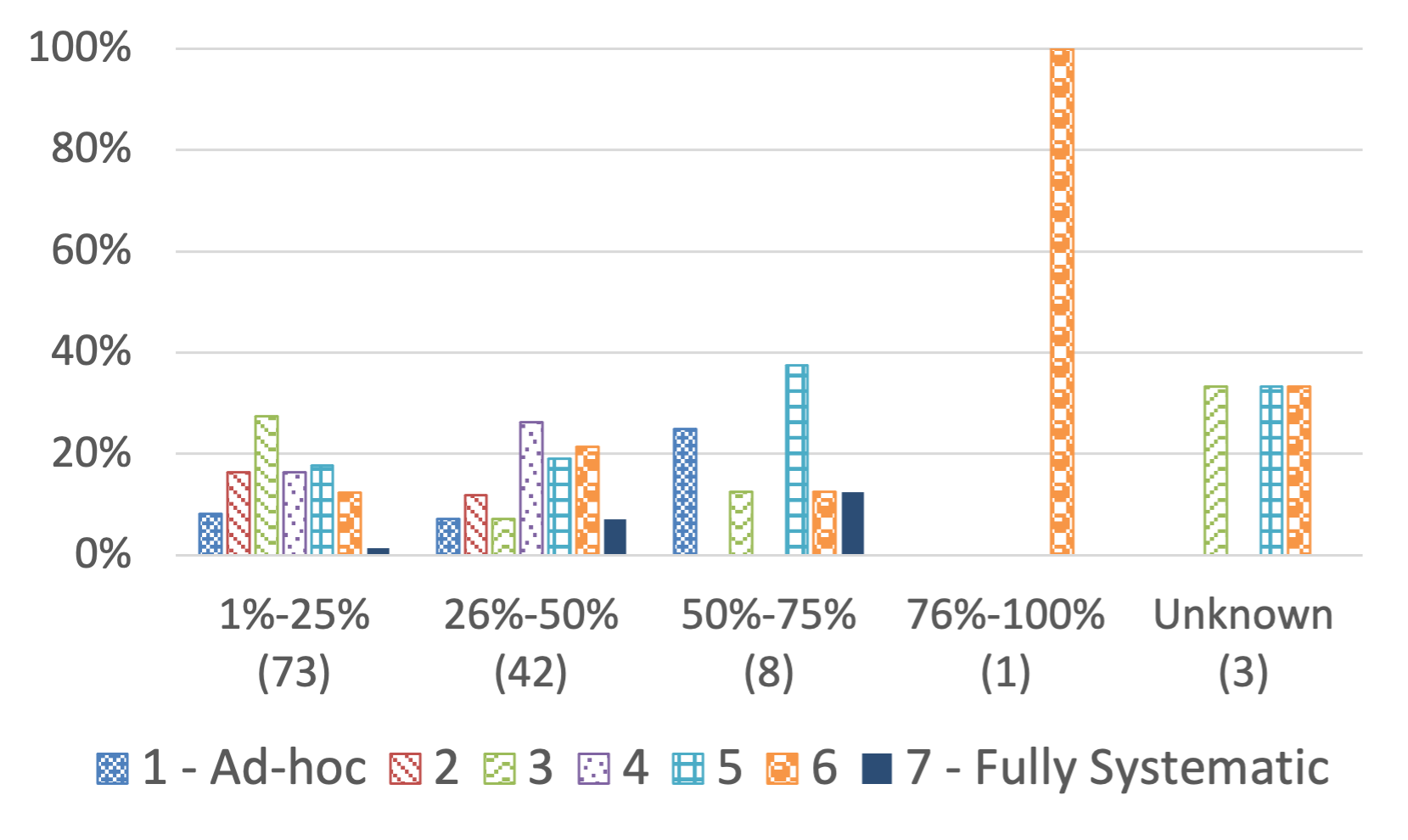

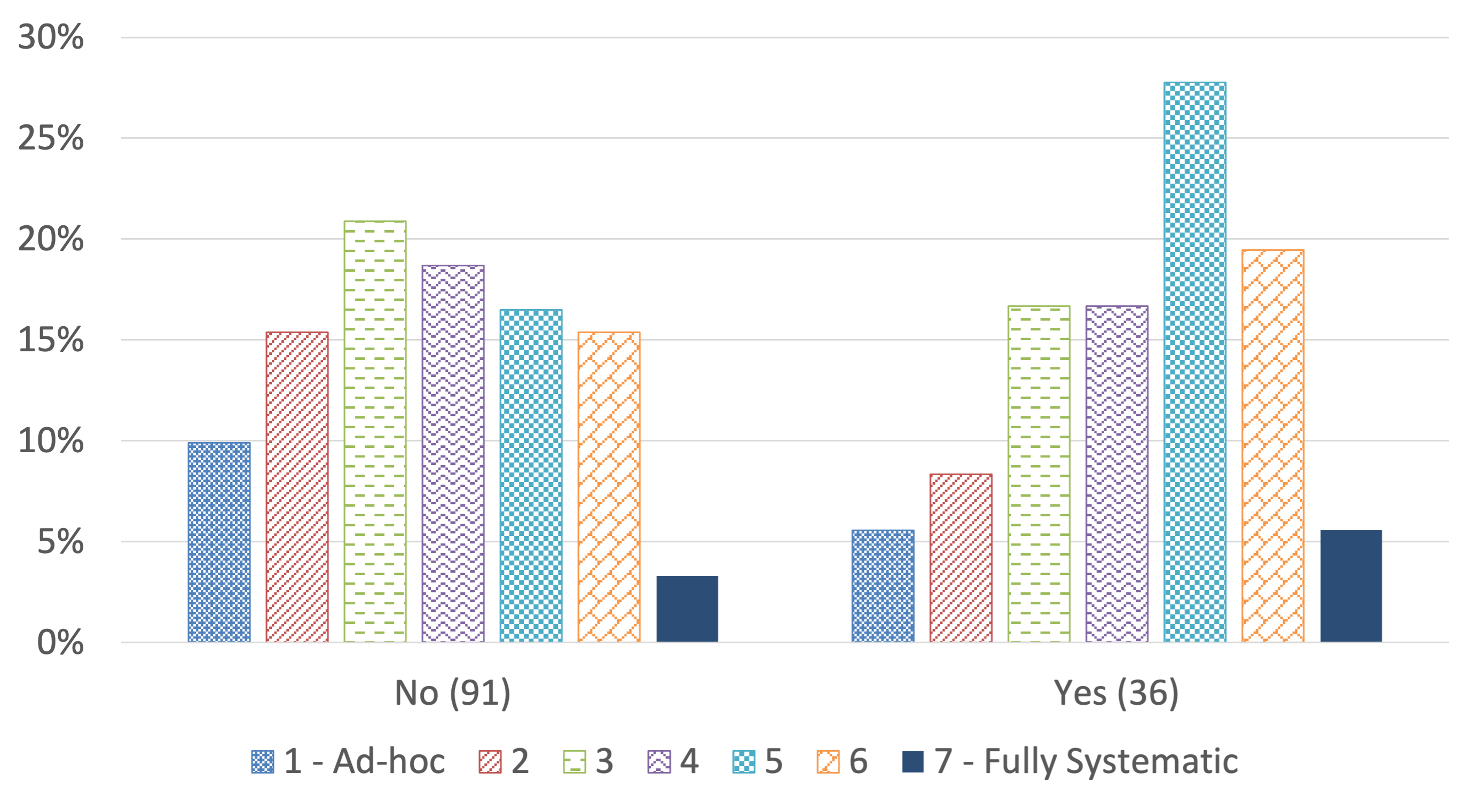

- Survey findings indicate demographic factors and specialized resource allocation significantly affect testing quality and reproducibility.

This essay examines the paper titled "Testing Research Software: An In-Depth Survey of Practices, Methods, and Tools" (2501.17739). The paper investigates how research software is tested, and what makes that testing difficult, through an extensive survey of research software developers. Research software plays a critical role across scientific disciplines, and its complexity, combined with the demands of scientific rigor, calls for robust testing practices. The study focuses on the varied approaches taken by developers, the challenges they face, and the demographic factors influencing testing practices.

Survey Design and Methodology

The survey was designed to capture detailed insights into the testing practices of research software developers. It combined multiple-choice and open-ended questions covering test case design, challenges with expected outputs, quality metrics, execution methods, tools used, and demographic influences. It was distributed through diverse channels to reach a wide sample of research software developers. Responses were collected with the Qualtrics survey tool and analyzed using both quantitative and qualitative methods.

Characteristics of the Testing Process

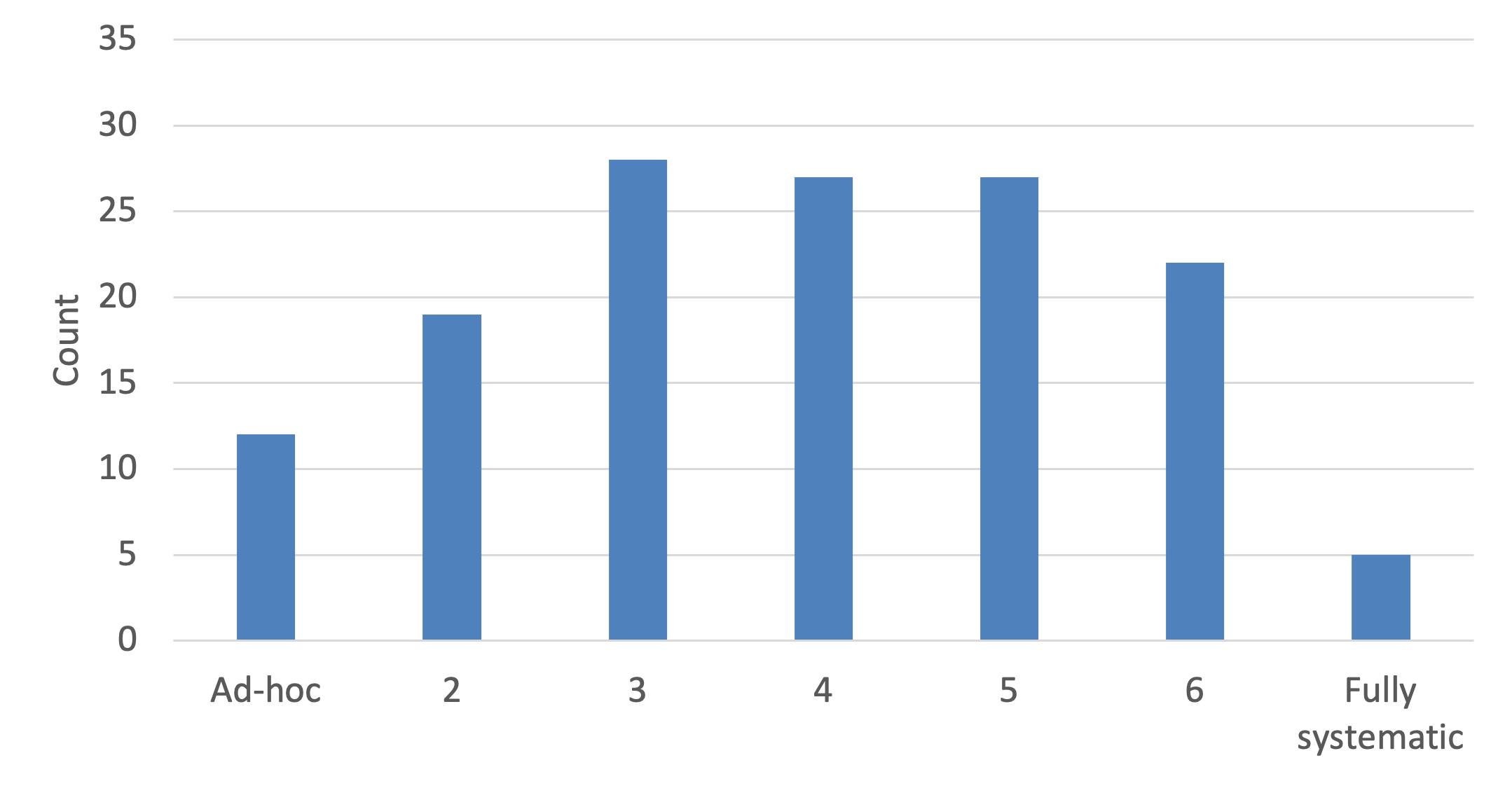

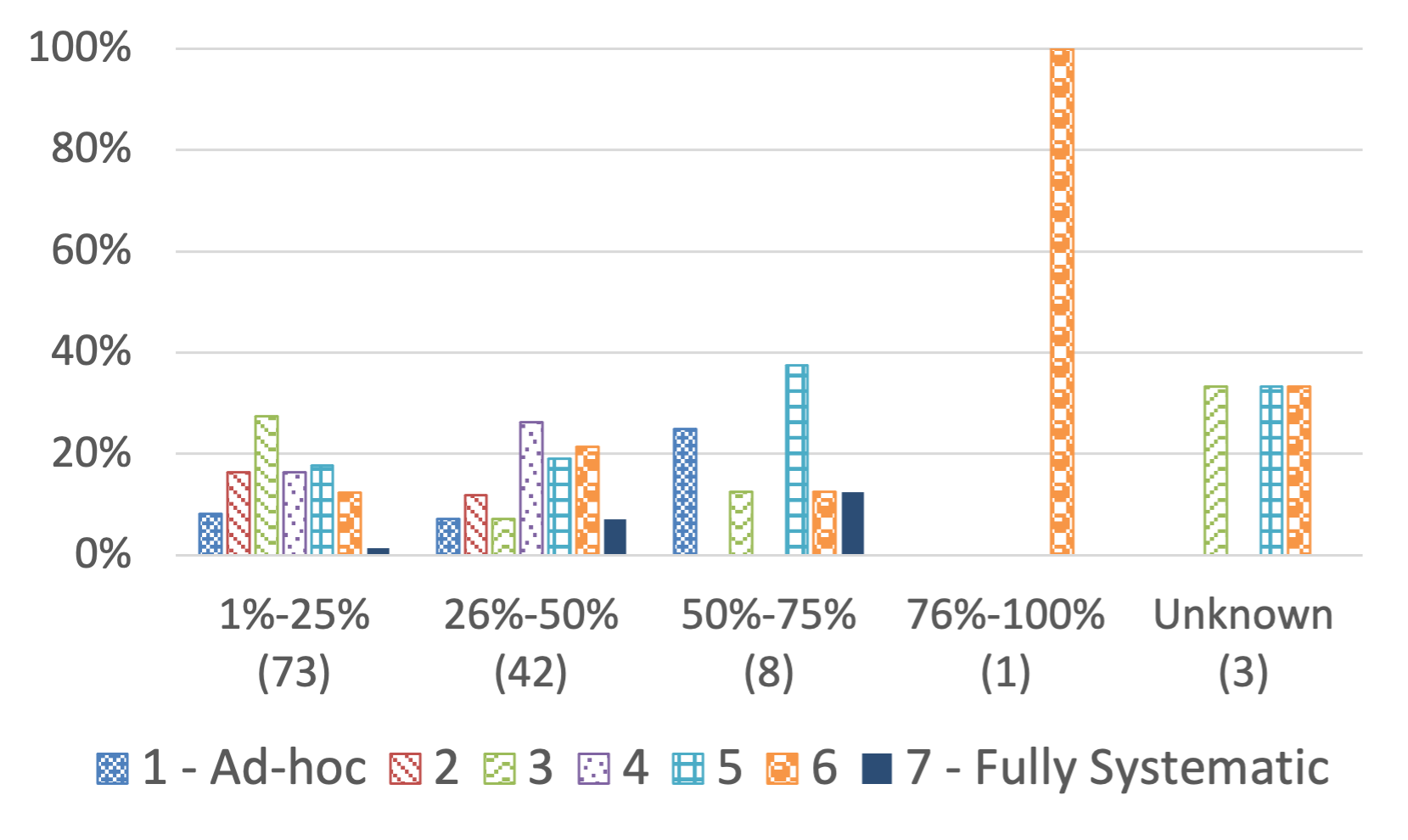

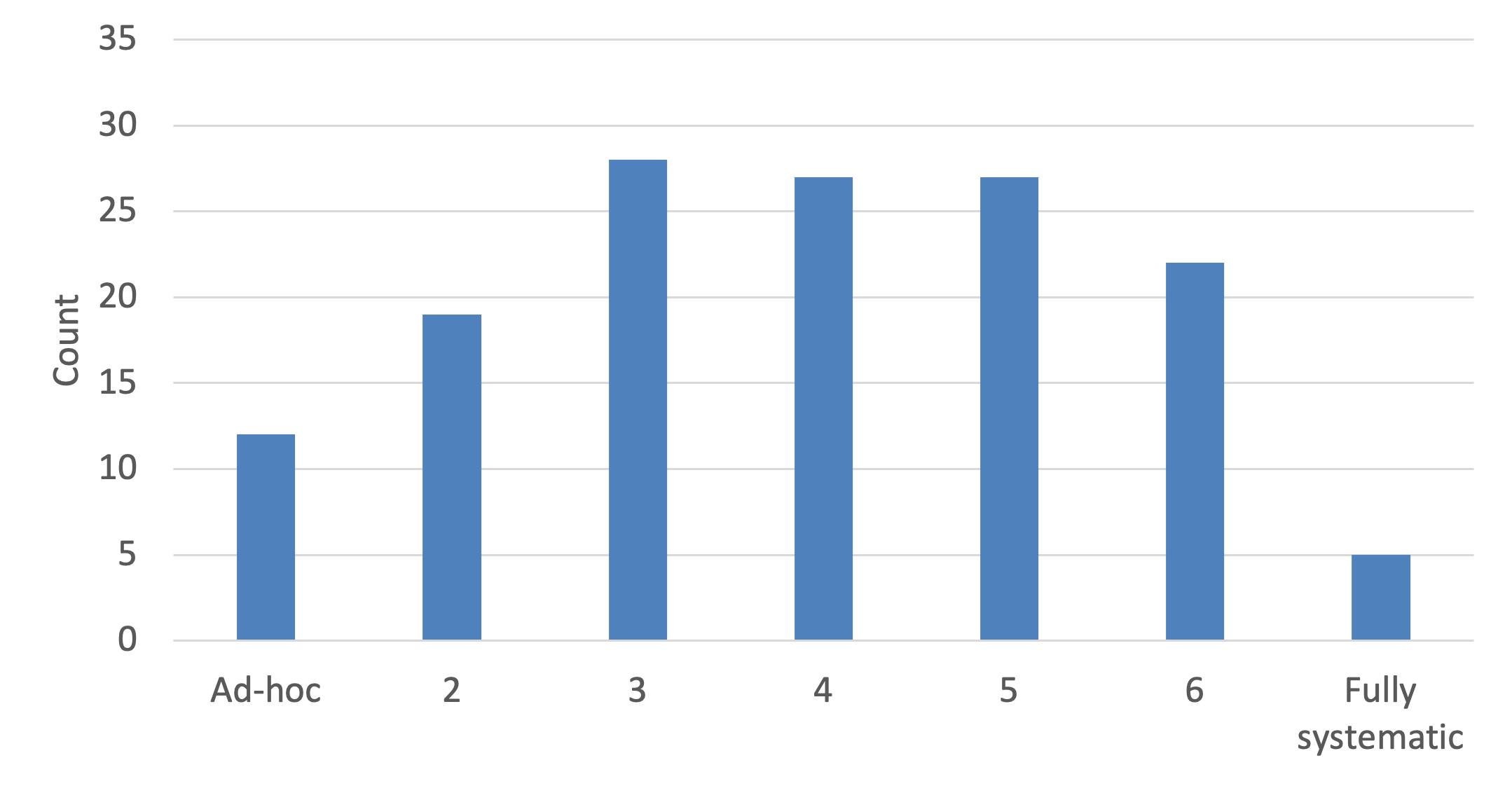

The paper reveals significant variability in the automation and systematic nature of testing processes among research software projects, with a general lean towards more automated practices. Unit testing and regression testing emerged as the predominant testing strategies. However, most projects lacked documented test specifications, indicating a potential gap in standardization and process rigor which could affect overall software quality.
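To make the distinction between the two predominant strategies concrete, the following is a minimal sketch rather than anything taken from the paper. The function `trapezoid`, the helper `drifted_results`, and the baseline key `sin_0_pi` are hypothetical names chosen for illustration. The unit test checks one function against a value known analytically, while the regression check compares current results against values recorded from an earlier, trusted run.

```python
import math


def trapezoid(f, a, b, n=1000):
    """Approximate the integral of f over [a, b] using n trapezoids."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


def test_unit_linear_integrand():
    # Unit test: the trapezoid rule is exact for linear integrands
    # (up to rounding), and the integral of 2x + 1 over [0, 3] is 12.
    result = trapezoid(lambda x: 2 * x + 1, 0.0, 3.0)
    assert math.isclose(result, 12.0, rel_tol=1e-9)


def drifted_results(current, baseline, rel_tol=1e-9):
    """Regression check: names whose current value left the baseline."""
    return [
        name
        for name, expected in baseline.items()
        if not math.isclose(current[name], expected, rel_tol=rel_tol)
    ]


def test_regression_against_baseline():
    # Assumption: in a real project the baseline would be loaded from a
    # file recorded by a trusted earlier run; it is recomputed here only
    # to keep the sketch self-contained.
    baseline = {"sin_0_pi": trapezoid(math.sin, 0.0, math.pi)}
    current = {"sin_0_pi": trapezoid(math.sin, 0.0, math.pi)}
    assert drifted_results(current, baseline) == []
```

Both functions use plain `assert` statements, so a runner such as pytest could collect them, and they can also be called directly. A regression check of this kind does not establish that the baseline is correct; it only flags unintended changes.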

Figure 1: Whether the testing process is ad-hoc or systematic: 1 - ad-hoc and 7 - systematic (Q11).

Industrial contexts often feature more structured testing protocols, suggesting a need for research software projects to adopt similar practices to ensure better quality control and reliability.

Challenges in Testing Research Software

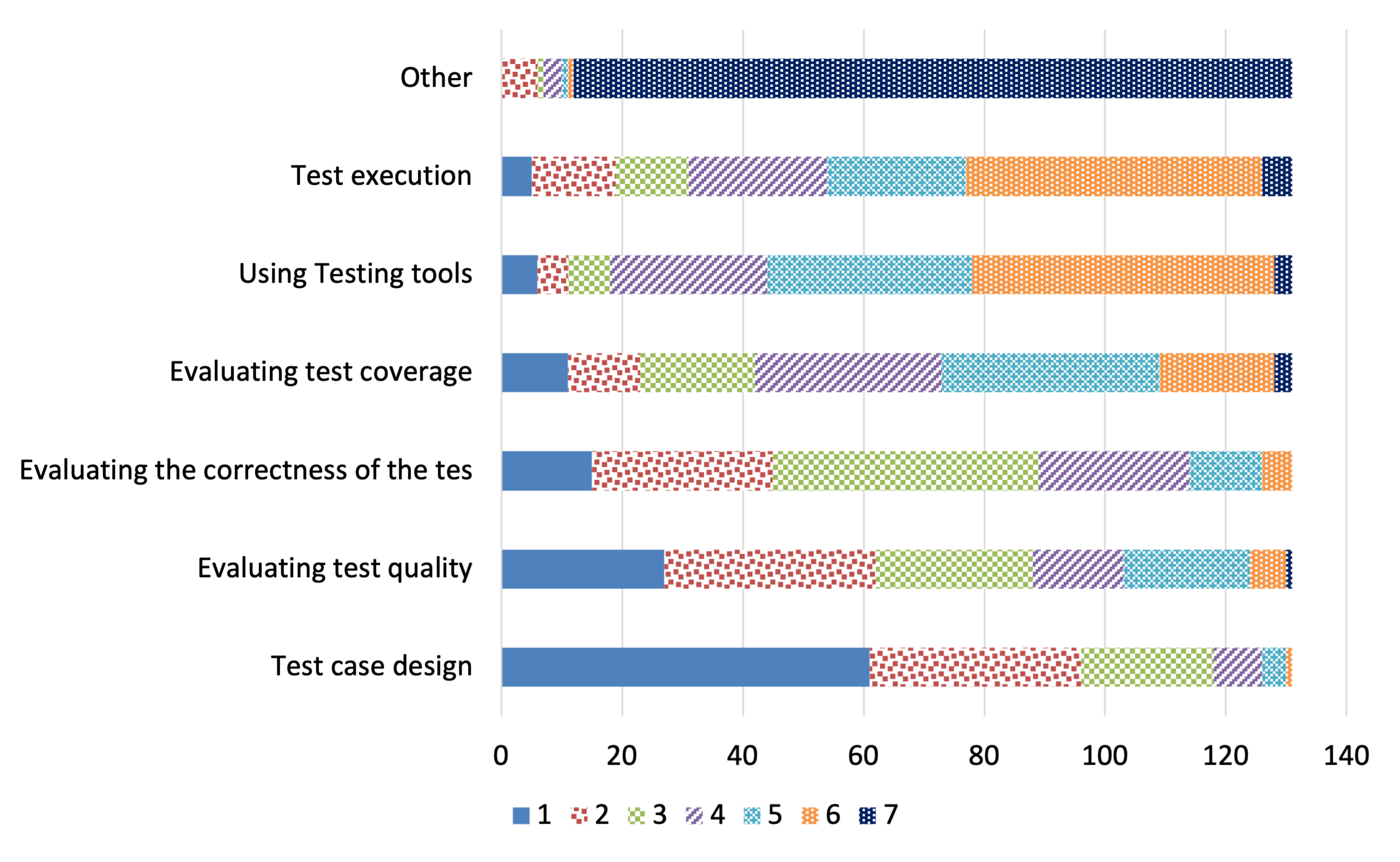

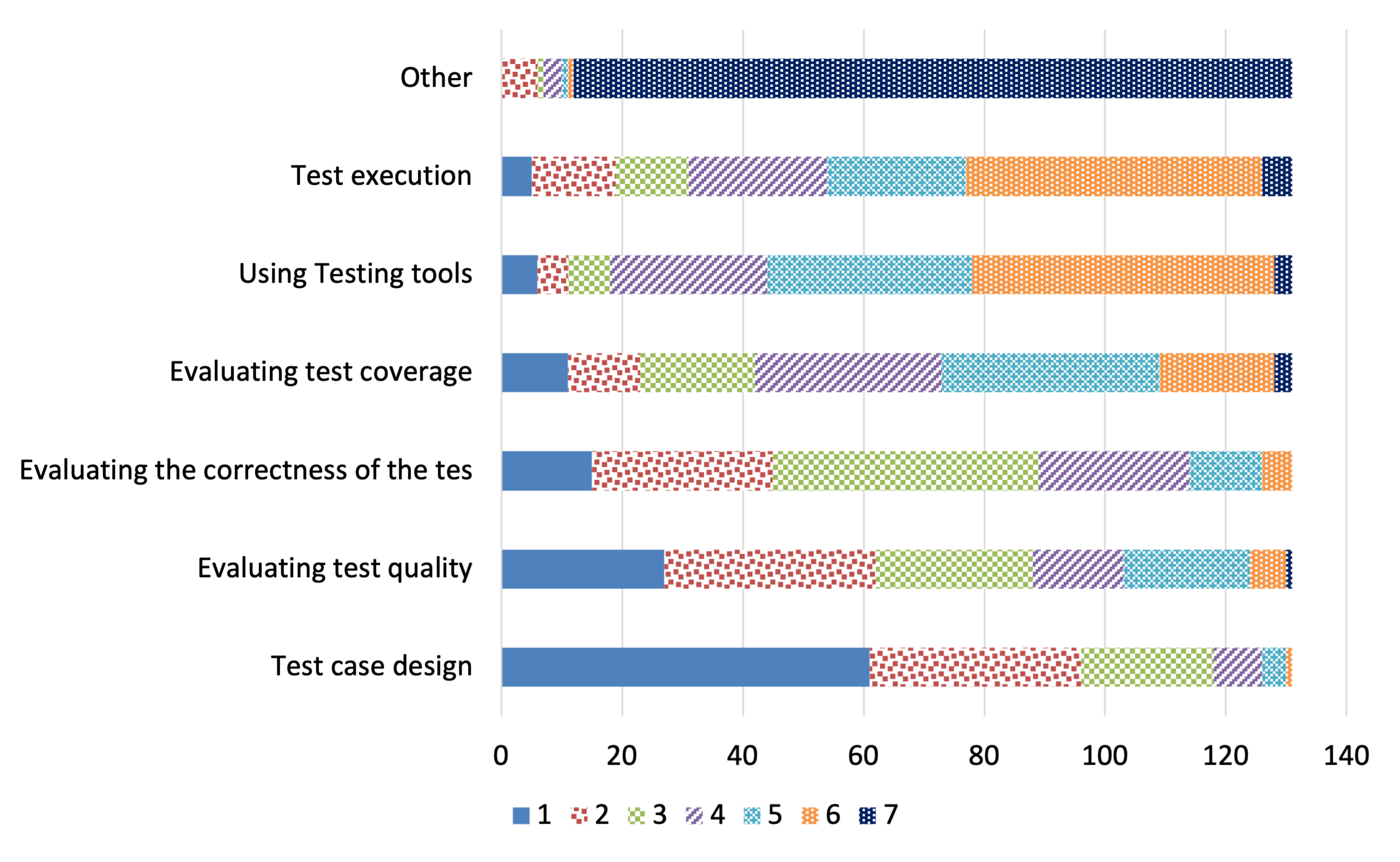

Test case design was identified as a significant challenge, largely due to the oracle problem (the difficulty of knowing what the correct output for a given input should be) and resource constraints. Unlike in industrial software environments, these challenges in research software stem from non-deterministic outputs and complex algorithms inherent to scientific computations. The study suggests that strategies such as metamorphic testing could mitigate some of these issues by allowing testing in the absence of well-defined expected outcomes.
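As an illustration of the metamorphic testing idea, the sketch below checks relations between outputs of related inputs instead of comparing against a known expected value. It is not drawn from the paper: the function under test (`sample_std`), the helper `check_metamorphic_relations`, and the three relations are assumptions chosen because they hold for any correct standard deviation.

```python
import math
import random
import statistics


def sample_std(values):
    """Function under test: sample standard deviation."""
    return statistics.stdev(values)


def check_metamorphic_relations(func, values, shift=10.0, scale=3.0,
                                rel_tol=1e-9):
    """Evaluate three metamorphic relations; no expected value is needed."""
    base = func(values)
    shifted = func([v + shift for v in values])
    scaled = func([v * scale for v in values])
    permuted = func(list(reversed(values)))
    return {
        # Adding a constant to every value must not change the spread.
        "shift_invariance": math.isclose(base, shifted, rel_tol=rel_tol),
        # Multiplying every value by k must scale the result by |k|.
        "scale_equivariance": math.isclose(abs(scale) * base, scaled,
                                           rel_tol=rel_tol),
        # Reordering the values must not change the result.
        "permutation_invariance": math.isclose(base, permuted,
                                               rel_tol=rel_tol),
    }


def buggy_std(values):
    """Deliberately wrong variant: forgets to subtract the mean."""
    return math.sqrt(sum(v * v for v in values) / (len(values) - 1))


rng = random.Random(0)  # fixed seed keeps the check reproducible
data = [rng.uniform(-1.0, 1.0) for _ in range(50)]
good = check_metamorphic_relations(sample_std, data)
bad = check_metamorphic_relations(buggy_std, data)
```

Here `good` should report every relation as satisfied, while `bad` should fail the shift relation even though the buggy variant still satisfies the other two. That is the general trade-off: each relation catches only the faults that violate it, so several complementary relations are usually needed.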

Figure 2: Ranking of testing challenges. Numbers on the right indicate the ranking given to a particular testing task based on its challenge level by the respondents (Q15).

Addressing these challenges is imperative for improving software reliability and aiding scientific validation processes.

The manual design of test inputs dominates, with ad-hoc methods also prevalent, highlighting a need for greater adoption of automated test input generation techniques. Determining expected outputs presents substantial difficulties, primarily due to the oracle problem. This issue is exacerbated by the complexity and non-determinism intrinsic to many research software applications.
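One lightweight alternative to hand-written inputs is seeded random generation combined with a property that every valid output must satisfy. The sketch below is an assumption-laden illustration, not a technique the paper prescribes: `normalize`, `generate_vectors`, and `find_failures` are hypothetical names, and the property (a normalized vector has Euclidean length 1) stands in for whatever invariant a real scientific code offers.

```python
import math
import random


def normalize(vec):
    """Function under test: scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def generate_vectors(n_cases, seed=0, max_dim=5):
    """Yield reproducible random test inputs of varying dimension."""
    rng = random.Random(seed)  # a fixed seed makes failures replayable
    for _ in range(n_cases):
        dim = rng.randint(1, max_dim)
        yield [rng.uniform(-1e3, 1e3) for _ in range(dim)]


def find_failures(func, cases, rel_tol=1e-9):
    """Return the inputs whose output violates the unit-length property."""
    failures = []
    for vec in cases:
        out = func(vec)
        length = math.sqrt(sum(x * x for x in out))
        if not math.isclose(length, 1.0, rel_tol=rel_tol):
            failures.append(vec)
    return failures


def l1_normalize(vec):
    """Deliberately wrong variant: divides by the L1 norm instead."""
    total = sum(abs(x) for x in vec)
    return [x / total for x in vec]


failures_ok = find_failures(normalize, generate_vectors(200))
failures_bug = find_failures(l1_normalize, generate_vectors(200))
```

Because the generator is seeded, any failing input can be regenerated and turned into a permanent regression case. Property-based testing libraries automate this pattern further, but the standard library is enough to show the idea.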

Figure 3: Systematic testing process (Q11) vs. percentage of time spent on testing (Q10).

These observations underline the necessity for specialized tools that accommodate the unique requirements of scientific software testing.

The study reports inconsistent use of testing and software quality metrics. Statement coverage is the most utilized metric, while tools for input generation and execution, such as pytest and GitHub Actions, are frequently mentioned. However, the lack of a standardized toolset across projects poses a challenge for knowledge sharing and collaboration.
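For readers unfamiliar with the metric, statement coverage is the fraction of executable statements that a test suite actually runs. The sketch below computes a line-based approximation for a single function using only the standard library. It is a teaching aid under stated assumptions (the helpers `executable_lines` and `statement_coverage` and the example `classify` are hypothetical, and nested functions or one-line function definitions are not handled); real projects would normally rely on a dedicated tool such as coverage.py.

```python
import dis
import sys


def classify(x):
    if x < 0:
        return "negative"
    if x == 0:
        return "zero"
    return "positive"


def executable_lines(func):
    """Line numbers in func's body that carry bytecode."""
    code = func.__code__
    lines = {line for _, line in dis.findlinestarts(code) if line is not None}
    # The "def" line itself is not a statement of the body.
    lines.discard(code.co_firstlineno)
    return lines


def statement_coverage(func, inputs):
    """Fraction of func's body lines executed over all given inputs."""
    code = func.__code__
    hit = set()

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # ignore frames that belong to other functions
        if event == "line":
            hit.add(frame.f_lineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        for value in inputs:
            func(value)
    finally:
        sys.settrace(previous)  # always restore the earlier tracer

    lines = executable_lines(func)
    return len(hit & lines) / len(lines)


partial = statement_coverage(classify, [5])
full = statement_coverage(classify, [5, -1, 0])
```

With a single positive input, two of the `return` statements never run, so `partial` stays below 1; adding a negative value and zero exercises every line. High statement coverage shows only that code was executed, not that its results were checked, which is one reason the metric is usually paired with meaningful assertions.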

Implications for Research Software Development

Demographic factors such as domain and team size significantly influence testing practices. Projects with full-time equivalents (FTEs) dedicated to testing exhibited more rigorous testing approaches, underscoring the benefit of allocating specialized resources to improve testing quality.

Figure 4: Systematic testing process (Q11) vs. having FTEs devoted to testing (Q8).

Encouraging the adoption of systematic testing processes and fostering community-driven tool development could improve research software testing practices.

Conclusion

The in-depth survey of practices, methods, and tools utilized in testing research software reveals a landscape characterized by variability, complexity, and unique challenges. The study's findings highlight the need for enhanced tool support and systematic testing methods tailored for scientific software. By addressing the identified challenges and leveraging demographic insights, stakeholders can improve the quality and reliability of research software, thereby advancing scientific discovery and ensuring robust, reproducible results.