Foundational Models for 3D Point Clouds: A Survey and Outlook

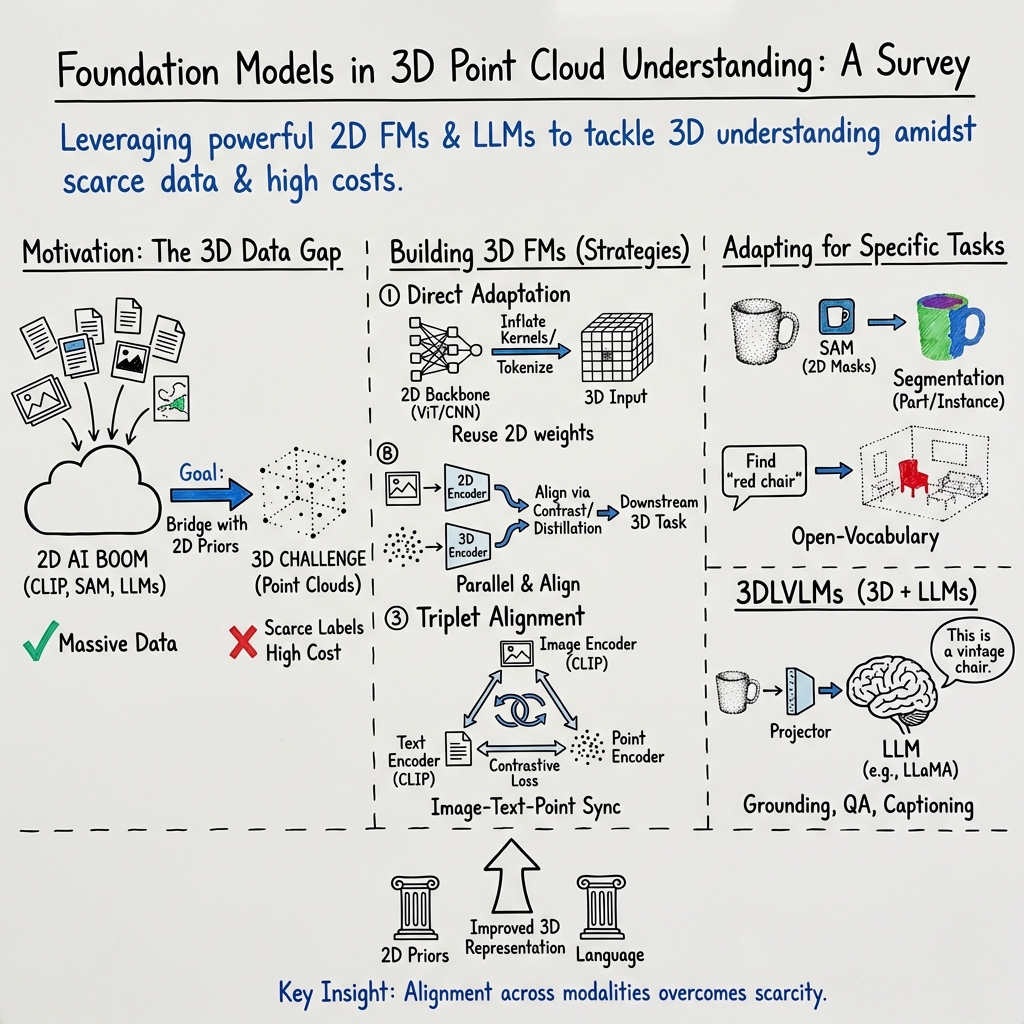

Abstract: The 3D point cloud representation plays a crucial role in preserving the geometric fidelity of the physical world, enabling more accurate modeling of complex 3D environments. While humans naturally comprehend the intricate relationships between objects and their variations through a multisensory system, AI systems have yet to fully replicate this capacity. To bridge this gap, it becomes essential to incorporate multiple modalities. Models that can seamlessly integrate and reason across these modalities are known as foundation models (FMs). The development of FMs for 2D modalities, such as images and text, has seen significant progress, driven by the abundant availability of large-scale datasets. However, the 3D domain has lagged due to the scarcity of labelled data and high computational overheads. In response, recent research has begun to explore the potential of applying FMs to 3D tasks, overcoming these challenges by leveraging existing 2D knowledge. Additionally, language, with its capacity for abstract reasoning and description of the environment, offers a promising avenue for enhancing 3D understanding through large pre-trained language models (LLMs). Despite the rapid development and adoption of FMs for 3D vision tasks in recent years, comprehensive and in-depth literature reviews are still lacking. This article aims to address this gap by presenting a comprehensive overview of the state-of-the-art methods that utilize FMs for 3D visual understanding. We start by reviewing the strategies employed in building various 3D FMs. Then we categorize and summarize the use of different FMs for tasks such as perception. Finally, the article offers insights into future directions for research and development in this field. To help readers, we have curated a list of relevant papers on the topic: https://github.com/vgthengane/Awesome-FMs-in-3D.

Explain it Like I'm 14

Overview

This paper is a big guide to a fast-growing area in artificial intelligence: teaching computers to understand the 3D world using “point clouds.” A point cloud is like a huge set of dots in space that form the shape of things (imagine lots of LEGO studs floating in the air that outline a chair, a room, or a street). The paper explains how powerful “foundation models” — the same kind of models that made chatbots and image tools smart — can be used to make sense of these 3D dots. It reviews what people have tried so far, what works, what’s hard, and where the field is headed.

Key Questions the Paper Tries to Answer

The paper looks at simple but important questions:

- How can we use big, pre-trained models from 2D data (like images and text) to boost understanding of 3D point clouds?

- What kinds of training tricks and model designs help when 3D datasets are small or expensive to make?

- How do large language models (LLMs, the kind that write and understand text) help computers reason about 3D scenes?

- Which methods work best for common 3D tasks, like recognizing objects, finding parts, and detecting things in scenes?

How the Research Was Done (Methods and Approaches)

This is a survey paper, which means the authors didn’t run new experiments of their own. Instead, they carefully read and compared many recent studies to organize ideas, methods, and results into a clear “map” of the field. They also explain key background topics so newcomers can follow along.

Here are the building blocks they cover, explained in everyday terms:

- Point clouds: A point cloud is a set of 3D points (dots) with coordinates like (x, y, z). Sometimes each point also has extra information, like color or brightness. Unlike pictures, there’s no fixed “grid” — it’s just an unordered collection of points.

- Foundation models (FMs): Think of these as giant, general-purpose AI “toolboxes” trained on massive datasets. They learn broad patterns (like shapes, textures, and words) so they can adapt to many tasks later. Examples include:

  - Vision models: CLIP, ViT, DINO, SAM (great at understanding and segmenting images).

  - LLMs: GPT, LLaMA, and similar (great at understanding and producing text).

  - Vision-language models (VLMs): Combine pictures and text, like CLIP and BLIP-2.

- Pre-training: Like practicing on a huge pile of examples so the model becomes good at spotting useful patterns.

- Adaptation: Taking the pre-trained model and adjusting it for a new task (like adding a small “head” for classification or tuning a few parameters). Modern tricks like LoRA and QLoRA let you adapt giant models without needing a supercomputer.
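
To make the adaptation idea concrete, here is a minimal sketch of the low-rank update behind LoRA, written in plain Python. The layer sizes, the rank, and the helper names (`matmul`, `transpose`, `lora_forward`) are illustrative assumptions and do not come from the survey. The pre-trained weight `w` stays frozen; only the two small matrices `a` and `b` would be trained, and the layer behaves as if its weight were `w + b @ a`.

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def lora_forward(x, w, a, b, scale=1.0):
    """Apply a linear layer whose weight is w + scale * (b @ a).

    x: (batch, d_in), w: (d_out, d_in) frozen,
    a: (rank, d_in) and b: (d_out, rank) are the small trainable matrices.
    The full-size update b @ a is never materialised.
    """
    base = matmul(x, transpose(w))                             # frozen path
    low_rank = matmul(matmul(x, transpose(a)), transpose(b))   # trainable path
    return [[p + scale * q for p, q in zip(r1, r2)]
            for r1, r2 in zip(base, low_rank)]

# Hypothetical sizes, chosen only for illustration.
d_in, d_out, rank = 64, 64, 4
rng = random.Random(0)
w = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
a = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(rank)]
b = [[0.0] * rank for _ in range(d_out)]  # b starts at zero, so the update starts at zero

full_params = d_out * d_in            # weights touched by full fine-tuning
lora_params = rank * (d_in + d_out)   # weights touched by the low-rank update

x = [[rng.gauss(0, 1) for _ in range(d_in)]]
y = lora_forward(x, w, a, b)
print(full_params, lora_params)
```

With these toy sizes the low-rank path has 512 trainable values against 4096 for the full matrix, and the gap widens quickly as the layer grows. Because `b` is initialised to zero, the adapted layer initially reproduces the frozen layer exactly.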

To make sense of how 2D knowledge helps in 3D, the paper groups the methods into three easy-to-grasp strategies:

- Direct adaptation: Start with a 2D model and tweak it to handle point clouds (for example, turning 2D filters into 3D ones, or projecting 3D points into 2D views).

- Dual encoders: Use two “encoders” side by side — one for images and one for point clouds — and teach them to align, so the point-cloud encoder learns from the image encoder’s strong features.

- Triplet alignment: Make three encoders (text, image, and point cloud) “speak the same language” by aligning their features. This often uses CLIP-style training so that descriptions, pictures, and 3D shapes match each other.
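
The alignment idea in the last two strategies can be sketched with a CLIP-style symmetric contrastive loss. This is a toy version in plain Python, not the training code of any method in the survey: the function names, the two-dimensional embeddings, and the temperature of 0.07 are assumptions made for illustration. Row `i` of each batch is treated as a matching pair, and the loss is low when matching pairs are more similar than mismatched ones.

```python
import math

def normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cross_entropy_rows(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum = m + math.log(sum(math.exp(z - m) for z in row))
        total += log_sum - row[i]
    return total / len(logits)

def contrastive_loss(point_emb, image_emb, temperature=0.07):
    """Symmetric contrastive loss between two batches of paired embeddings."""
    p = [normalize(v) for v in point_emb]
    q = [normalize(v) for v in image_emb]
    logits = [[sum(a * b for a, b in zip(pi, qj)) / temperature for qj in q]
              for pi in p]
    logits_t = [list(col) for col in zip(*logits)]
    # Average the point-to-image and image-to-point directions.
    return 0.5 * (cross_entropy_rows(logits) + cross_entropy_rows(logits_t))

points = [[1.0, 0.0], [0.0, 1.0]]
aligned = contrastive_loss(points, [[0.9, 0.1], [0.1, 0.9]])    # pairs match
shuffled = contrastive_loss(points, [[0.1, 0.9], [0.9, 0.1]])   # pairs swapped
print(aligned < shuffled)
```

One plausible way to extend this to three encoders is to sum the same loss over point-image and point-text pairs, so that all three embeddings are pulled into one shared space.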

They also describe datasets used in 3D research:

- Object-level datasets (like ShapeNet and ModelNet) contain individual items (chairs, tables, etc.).

- Scene-level datasets (like ScanNet and nuScenes) contain full rooms or outdoor scenes with many objects.

- New multimodal datasets add language and images to 3D data, so models can learn both what things look like and how we talk about them.

Main Findings and Why They Matter

The survey highlights several important lessons:

- 2D knowledge transfers surprisingly well to 3D. Since images and 3D shapes both describe the same physical world, features learned from millions of images help a lot with point clouds. For example, models can project 3D points into multiple 2D views, run them through a strong image encoder, and combine the results.

- Aligning image, text, and 3D representations is powerful. When a model learns that “a red chair” links to certain images and certain 3D shapes, it can recognize chairs in point clouds even without many 3D labels. This enables “zero-shot” tasks, where the model performs well on new categories it wasn’t explicitly trained on in 3D.

- LLMs enable 3D reasoning. By connecting point-cloud features to text embeddings, models can do tasks like:

  - 3D captioning: describing objects and scenes with words.

  - Grounding: finding the exact object someone mentions (“the small blue mug on the left shelf”).

  - Question answering: responding to questions about a 3D scene.

- Practical adaptation matters. Fully fine-tuning giant models is expensive. Parameter-efficient methods (like LoRA and prompt tuning) let researchers adapt big models to 3D tasks with less memory and compute.

- Data remains the biggest challenge. High-quality 3D data is costly to collect and label. Scene-level datasets are harder than object-level ones. Multimodal datasets that link 3D with images and text are helping, but there’s still a need for more diverse, well-annotated 3D data.
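
As a rough illustration of the first lesson, projecting 3D points into several 2D views, the sketch below renders a tiny point cloud into low-resolution depth images from a few angles. Everything here is an assumption for illustration: the orthographic camera looking along +z, the rotation about the vertical axis, the 8×8 resolution, and the function names. A real pipeline would pass each view through a pre-trained image encoder and pool the per-view features; that step is left out.

```python
import math

def rotate_y(points, angle):
    """Rotate (x, y, z) points about the vertical y-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x + s * z, y, -s * x + c * z) for x, y, z in points]

def depth_image(points, size=8):
    """Orthographic projection onto the xy-plane.

    Assumes coordinates lie roughly in [-1, 1]. Each pixel keeps the
    smallest z that lands in it (the point nearest the assumed camera);
    pixels that receive no point stay None.
    """
    img = [[None] * size for _ in range(size)]
    for x, y, z in points:
        col = min(size - 1, max(0, int((x + 1) / 2 * size)))
        row = min(size - 1, max(0, int((1 - y) / 2 * size)))
        if img[row][col] is None or z < img[row][col]:
            img[row][col] = z
    return img

def multi_view(points, n_views=4, size=8):
    """Render the cloud from n_views evenly spaced angles around the y-axis."""
    return [depth_image(rotate_y(points, 2 * math.pi * k / n_views), size)
            for k in range(n_views)]

# A point cloud is just an unordered list of (x, y, z) coordinates.
cloud = [(0.5, 0.5, -0.5), (0.5, 0.5, 0.5), (-0.5, -0.5, 0.0)]
views = multi_view(cloud)
print(len(views), len(views[0]), len(views[0][0]))
```

In the front view the first two points fall on the same pixel, and only the nearer one survives. This is the kind of information loss that motivates using several views rather than one.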

Overall, the paper’s main contribution is a clear, structured “roadmap” showing how different methods connect, what they achieve, and where they struggle. It also curates a list of relevant works so researchers can quickly find what they need.

What This Means for the Future

This research is important because many real-world tasks happen in 3D:

- Safer self-driving cars and smarter home robots need to understand complex 3D scenes.

- AR/VR and gaming benefit from accurate 3D recognition and scene understanding.

- Industries like construction, mapping, and healthcare rely on precise 3D data.

By leveraging strong 2D models and LLMs, we can speed up progress in 3D without always needing huge 3D datasets. The paper suggests future work should focus on:

- Better multimodal datasets that combine 3D with images and language at scale.

- Smarter ways to adapt big models to 3D tasks with limited compute.

- Stronger integration of LLMs for planning, reasoning, and interactive 3D tasks.

- More robust methods for full scenes, not just single objects.

In simple terms: this paper shows that teaching computers about 3D using the skills they mastered in 2D and text is not only possible — it’s already working well. If researchers keep building on this foundation, we’ll get AI systems that understand our 3D world more like we do, making everyday technology smarter and more helpful.
