- The paper introduces ELIR, leveraging latent consistency flow matching to achieve a fourfold reduction in model size and faster inference than previous methods.

- The methodology employs a convolution-centered architecture with Tiny AutoEncoder and RRDB blocks, bypassing transformers for enhanced efficiency.

- Experiments demonstrate ELIR's competitive performance on tasks like blind face restoration and super-resolution, with improved metrics such as FID, PSNR, and FPS.

Efficient Image Restoration via Latent Consistency Flow Matching

Abstract and Introduction

The paper introduces ELIR (Efficient Latent Image Restoration), a novel approach to image restoration that emphasizes efficiency in both model size and computational cost while maintaining high performance in image quality metrics. The motivation stems from recent advances in generative image restoration (IR), which often require large models and significant computational resources, making deployment on edge devices challenging.

ELIR addresses the distortion-perception trade-off in the latent space using a latent consistency flow-based model. It is designed to be 4 times smaller than, and faster than, existing state-of-the-art diffusion and flow-based methods for tasks like blind face restoration, enabling deployment on resource-constrained devices. The method shows competitive performance across various IR tasks, including blind face restoration, super-resolution, denoising, and inpainting, while substantially reducing model size and computational demands.

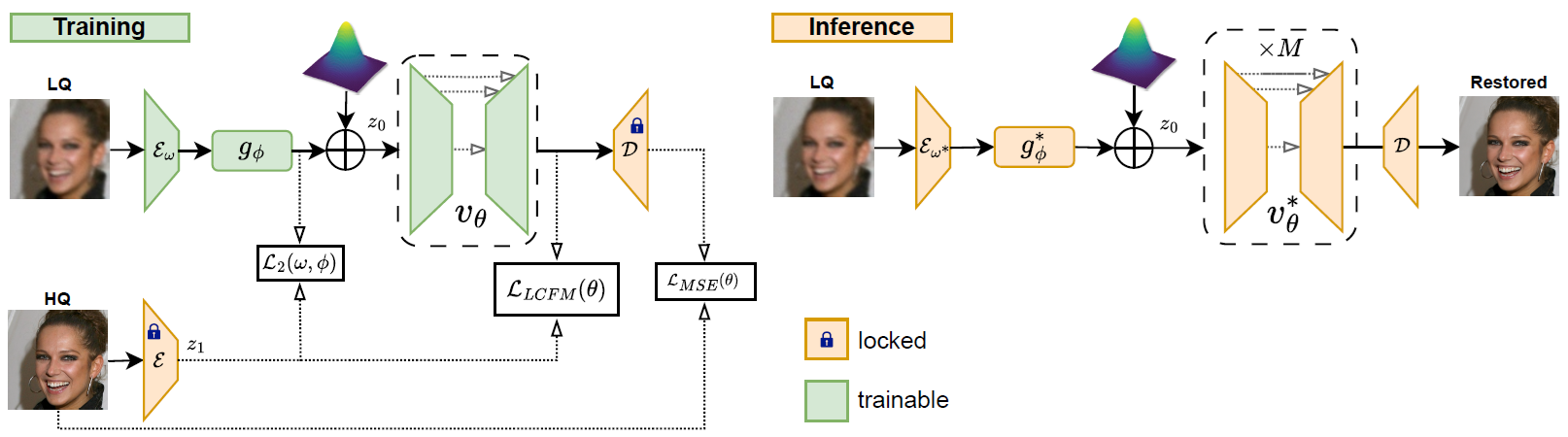

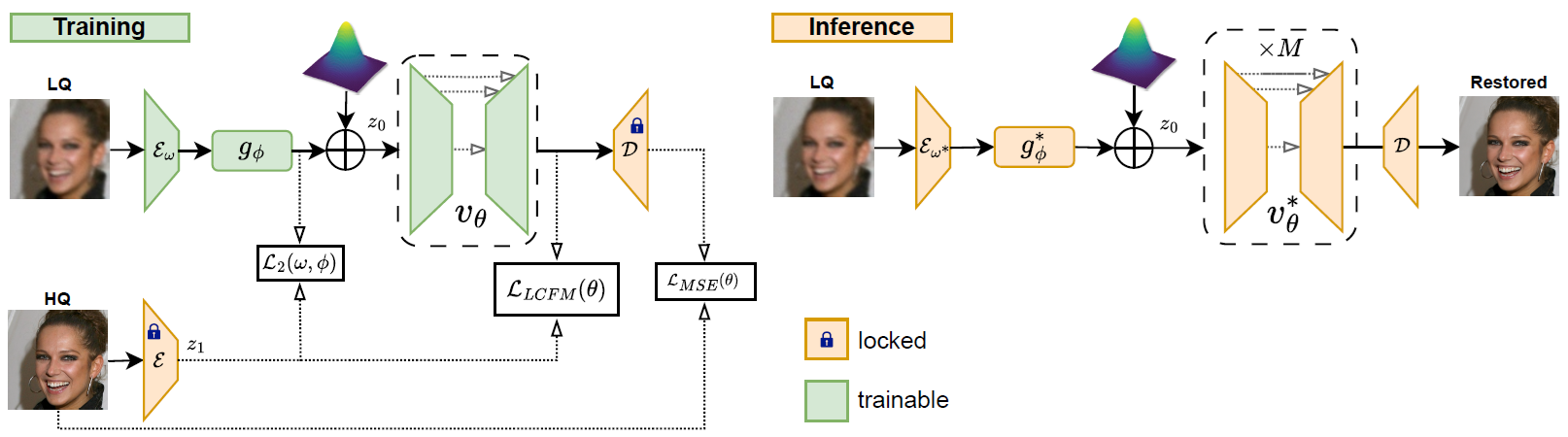

Figure 1: ELIR Overview. During training, we optimize the encoder Eω, coarse estimator gϕ, and the vector field vθ for a specific IR task.

GAN-based Methods

Previous GAN-based techniques like BSRGAN, GFPGAN, and GPEN have shown effectiveness in tasks such as blind super-resolution and face restoration. These methods leverage GAN priors to enhance image restoration capabilities but often suffer from large model sizes and computational inefficiencies.

Diffusion-based Methods

Diffusion models, such as DDRM and GDP, offer stronger generative capabilities than GANs, yet their deployment is hindered by high computational and memory costs, largely because inference requires many neural function evaluations (NFEs).

Flow-based Methods

Flow-based approaches, including PMRF and FlowIE, focus on image enhancement but encounter similar deployment challenges due to resource-intensive operations. ELIR builds upon these by introducing Latent Consistency Flow Matching (LCFM) to improve efficiency.

Methodology

ELIR integrates latent flow matching and consistency flow matching to form LCFM, optimizing the transport between latent representations of source and target distributions. Training involves minimizing encoder-decoder errors alongside matching latent flows to balance distortion and perception in image restoration.
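To make the idea of transporting a source latent to a target latent concrete, here is a minimal sketch of generic flow matching on a straight-line path, followed by few-step Euler integration. It is an illustration under stated assumptions, not ELIR's actual LCFM objective: the function names (`interpolate`, `target_velocity`, `flow_matching_loss`, `euler_sample`) are hypothetical, latents are plain Python lists, and the consistency term that LCFM adds is omitted.

```python
# Generic straight-path flow matching (illustrative; not ELIR's exact LCFM loss).
# All names here are hypothetical; latents are flat lists of floats.

def interpolate(z0, z1, t):
    """Point at time t on the straight path from source latent z0 to target latent z1."""
    return [(1.0 - t) * a + t * b for a, b in zip(z0, z1)]

def target_velocity(z0, z1):
    """Velocity of the straight path; it is constant in t."""
    return [b - a for a, b in zip(z0, z1)]

def flow_matching_loss(v, z0, z1, t):
    """Mean squared error between the model velocity v(z_t, t) and the path velocity."""
    zt = interpolate(z0, z1, t)
    pred = v(zt, t)
    tgt = target_velocity(z0, z1)
    return sum((p - q) ** 2 for p, q in zip(pred, tgt)) / len(tgt)

def euler_sample(v, z0, num_steps):
    """Integrate dz/dt = v(z, t) from t=0 to t=1 with fixed-size Euler steps."""
    z = list(z0)
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt
        vel = v(z, t)
        z = [zi + dt * vi for zi, vi in zip(z, vel)]
    return z

# Toy check: an "oracle" field that already knows the straight-path velocity.
z_src, z_tgt = [0.0, 2.0], [1.0, -2.0]
oracle = lambda z, t: target_velocity(z_src, z_tgt)
loss = flow_matching_loss(oracle, z_src, z_tgt, t=0.5)
z_out = euler_sample(oracle, z_src, num_steps=4)
```

With a perfectly straight field, the number of Euler steps does not change the endpoint, which is the intuition behind using few function evaluations at inference. A learned field is only approximately straight, so the step count becomes a quality versus speed knob.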

The architecture avoids transformer-based designs in favor of a convolution-centered one built from a Tiny AutoEncoder and RRDB blocks, which keeps the model small and inference fast enough for practical deployment on edge devices. Combined with LCFM, this lets ELIR run at higher frames per second (FPS) with a smaller model than existing methods.
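As a rough feel for how model size accumulates in a convolutional design, the sketch below counts the parameters of one RRDB block. It assumes the ESRGAN-style layout (three dense blocks, each with five 3x3 convolutions, 64 feature channels, and 32 growth channels); the summary does not state ELIR's actual channel widths or block counts, so these defaults and the helper names are assumptions for illustration only.

```python
# Parameter count for an ESRGAN-style RRDB (assumed layout; ELIR's sizes may differ).

def conv2d_params(c_in, c_out, kernel=3, bias=True):
    """Weights plus optional bias of a single 2D convolution."""
    return kernel * kernel * c_in * c_out + (c_out if bias else 0)

def dense_block_params(features=64, growth=32, layers=5):
    """Dense block: layer i sees the input plus all earlier growth outputs."""
    total = 0
    for i in range(layers):
        c_in = features + i * growth
        # The last layer maps back to the feature width; the others emit `growth` channels.
        c_out = features if i == layers - 1 else growth
        total += conv2d_params(c_in, c_out)
    return total

def rrdb_params(features=64, growth=32, dense_blocks=3):
    """Residual-in-residual dense block: a stack of dense blocks (skips add no parameters)."""
    return dense_blocks * dense_block_params(features, growth)

print(rrdb_params())  # parameter count of one block under the assumed defaults
```

Because the count scales with the product of input and output channels, narrowing the channel widths is a direct lever on model size.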

Experiments

Blind Face Restoration

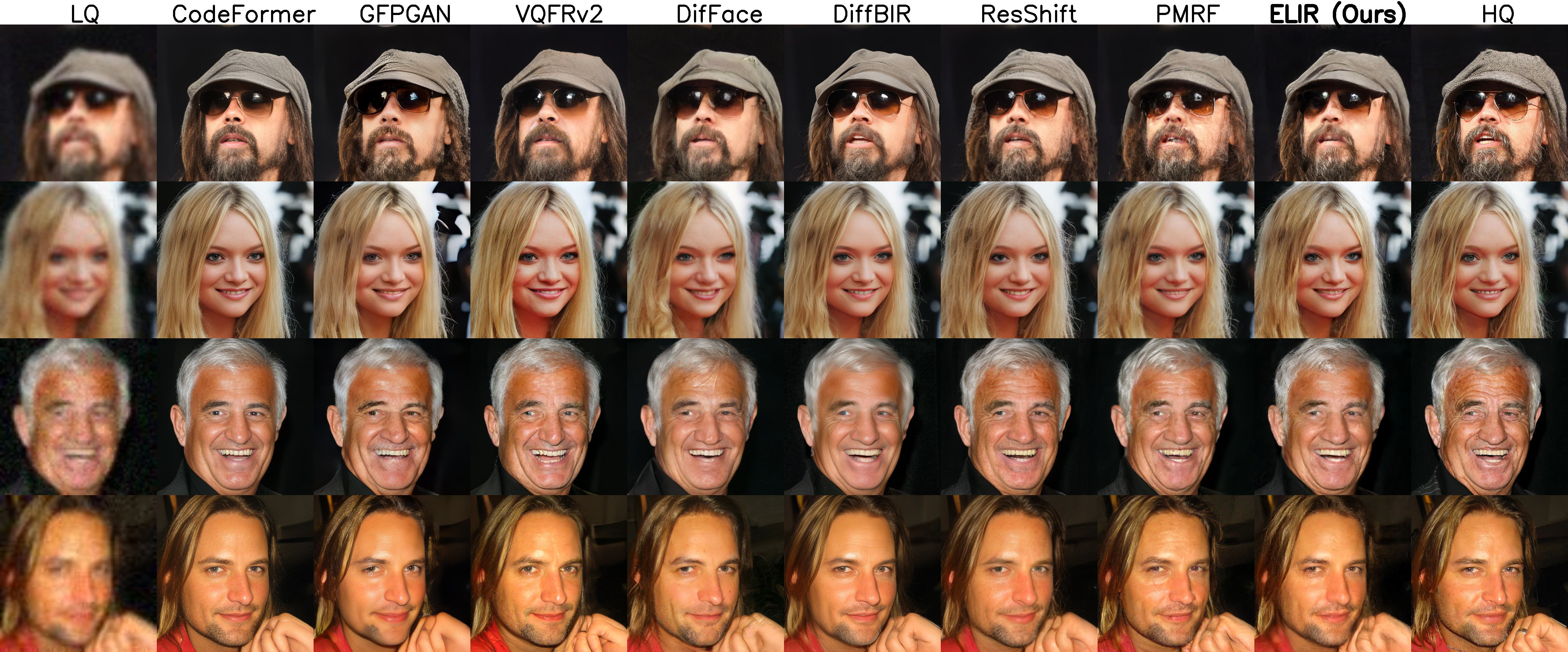

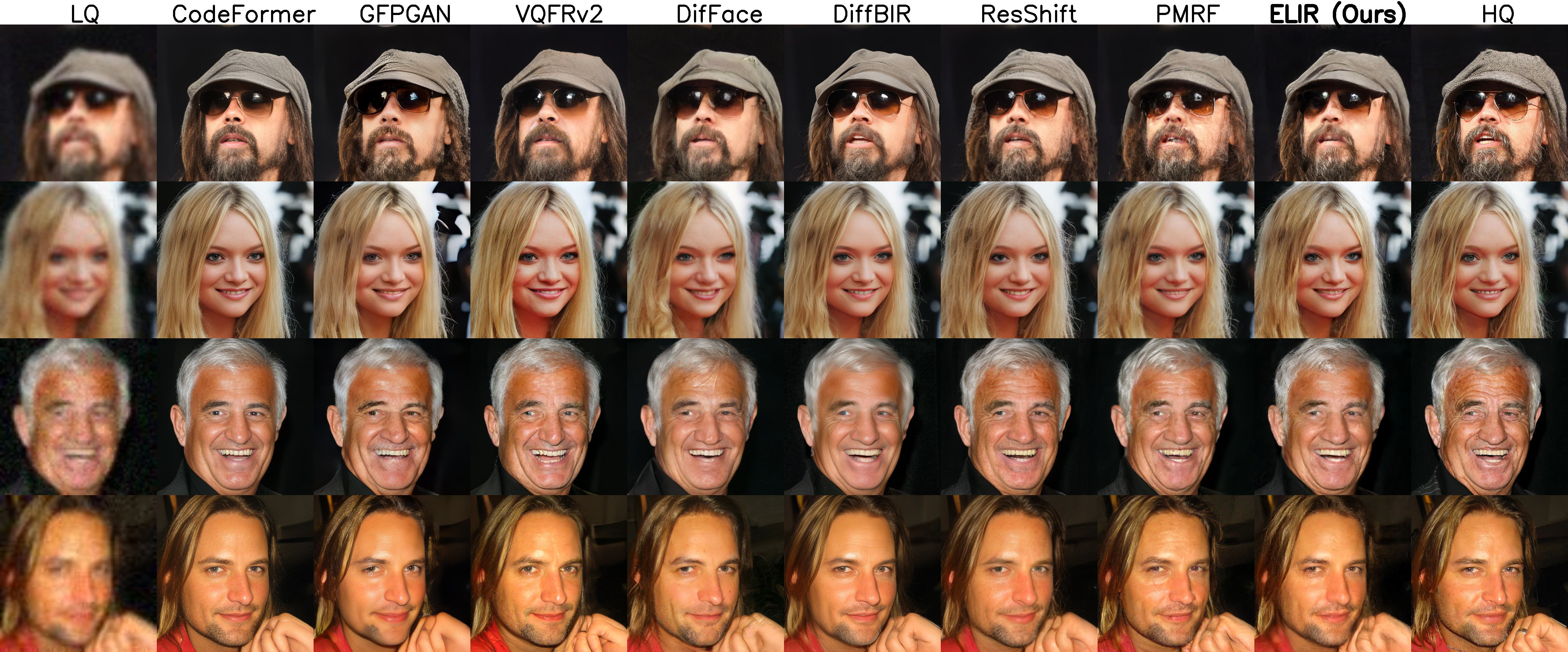

ELIR shows competitive results against models like GFPGAN, VQFR, and PMRF, balancing perceptual quality (measured by metrics such as FID) against distortion (measured by metrics such as PSNR). Its efficiency gains show up as a smaller model and higher FPS than diffusion-based methods.
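For reference, PSNR follows directly from the mean squared error between a reference image and its restoration. The sketch below uses the standard definition on flat lists of pixel values; the function name `psnr` and the 8-bit default `max_value=255.0` are illustrative choices, not details taken from the paper's evaluation code.

```python
import math

def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences."""
    if len(reference) != len(restored) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((r - s) ** 2 for r, s in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images: no distortion
    return 10.0 * math.log10(max_value ** 2 / mse)

# Toy example: every pixel is off by exactly one gray level, so MSE = 1.
value = psnr([100, 100, 100, 100], [101, 99, 101, 99])
```

Higher PSNR means lower distortion. FID, by contrast, compares feature statistics of whole image sets and needs a pretrained network, so it is not sketched here.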

Figure 2: BFR Visual Results. Visual comparisons between ELIR and baseline models sampled from CelebA-Test for blind face restoration.

Image Restoration Tasks

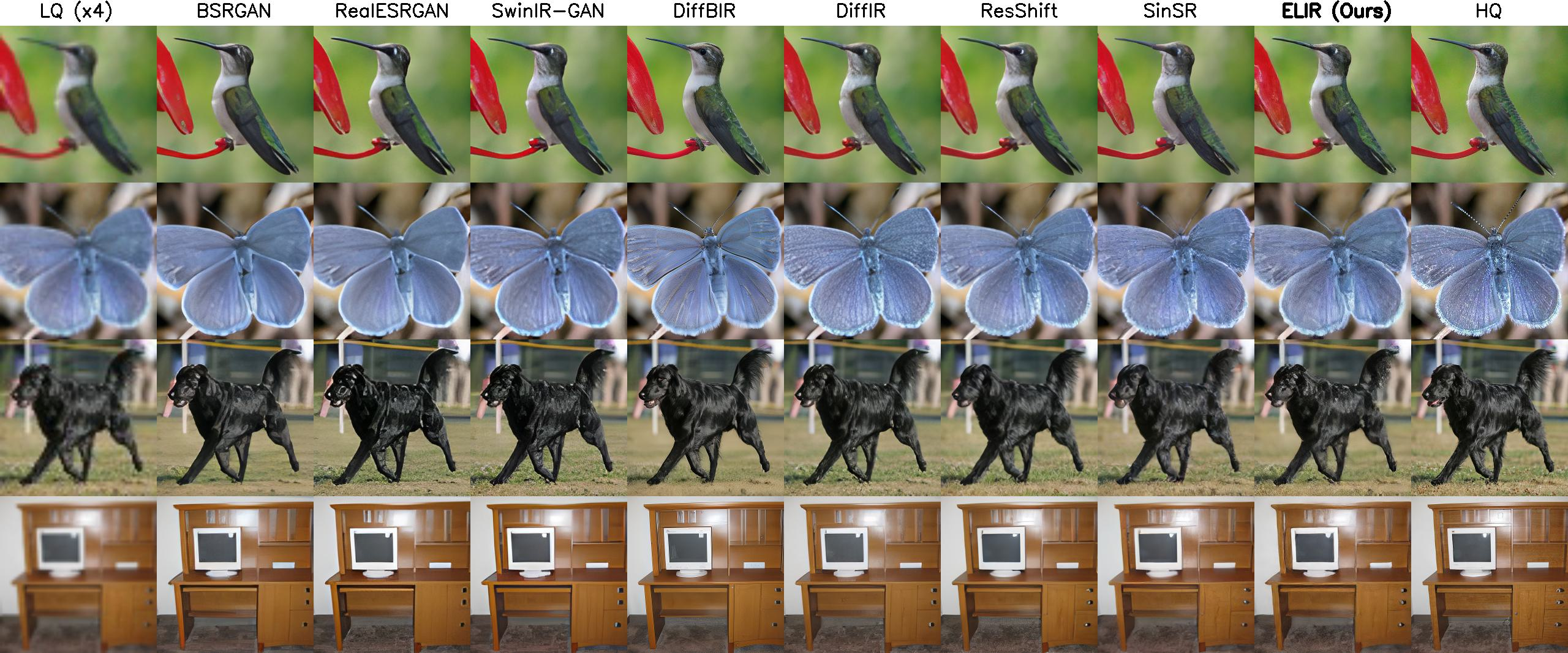

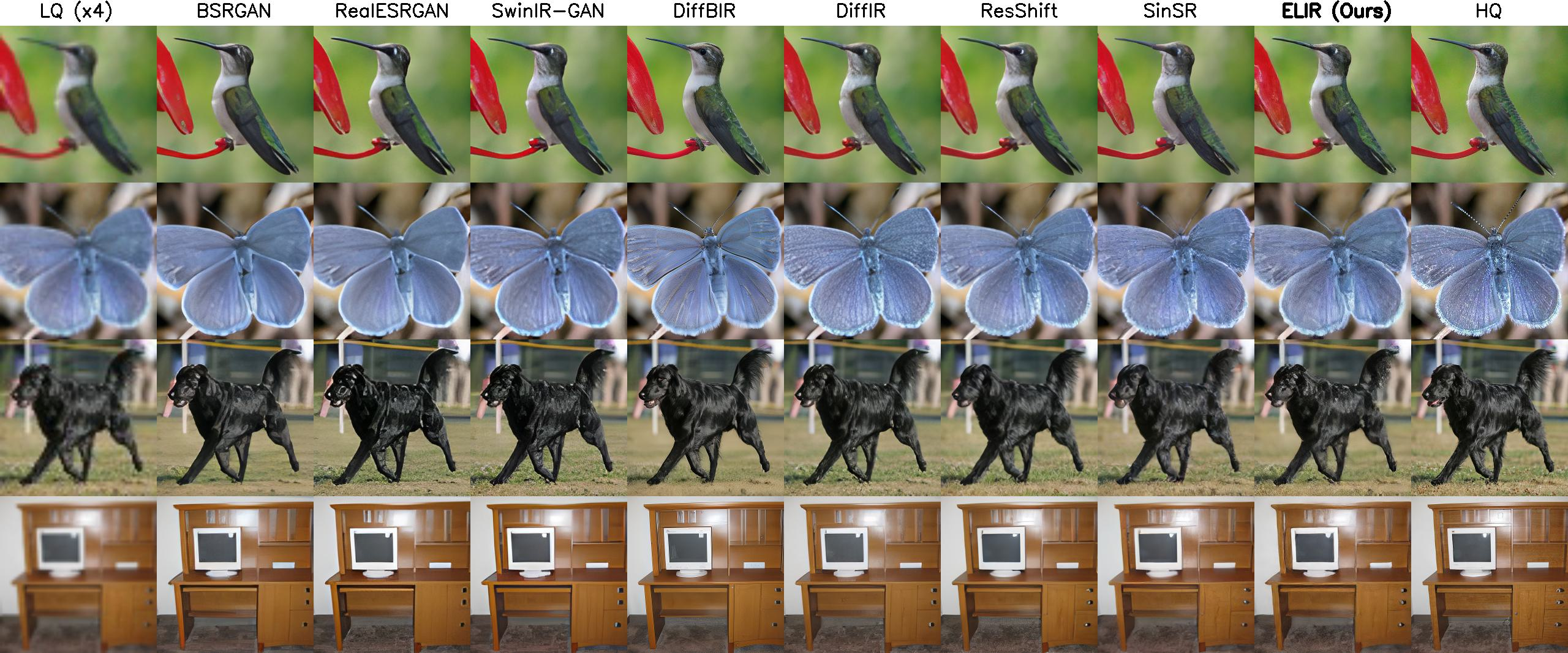

In tasks like super-resolution and inpainting, ELIR demonstrates competitive performance with PMRF, exhibiting significant model size reduction and latency improvements. These results confirm ELIR's suitability for deployment in real-time applications where computational resources are limited.
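Latency and FPS figures like those discussed above are typically obtained by timing repeated forward passes after a short warm-up. The following is a minimal sketch of such a harness, assuming a zero-argument callable `fn` that stands in for one restoration pass; the helper names and the default run counts are hypothetical and are not the paper's benchmarking protocol.

```python
import time

def measure_latency_ms(fn, warmup=3, runs=20):
    """Average wall-clock time of fn() in milliseconds, after warm-up calls."""
    for _ in range(warmup):
        fn()  # warm-up runs are excluded from the timing
    start = time.perf_counter()
    for _ in range(runs):
        fn()
    elapsed = time.perf_counter() - start
    return 1000.0 * elapsed / runs

def fps_from_latency(latency_ms, batch_size=1):
    """Frames per second implied by a per-batch latency in milliseconds."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 * batch_size / latency_ms

# Placeholder workload standing in for a model forward pass.
latency = measure_latency_ms(lambda: sum(range(1000)), warmup=1, runs=5)
```

On accelerators that execute asynchronously, the device would also need to be synchronized before reading the clock; that step is left out of this CPU-only sketch.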

Figure 3: BSR Visual Results. Visual comparisons between ELIR and baseline models sampled from ImageNet-Validation for blind super-resolution.

Conclusions

ELIR introduces a robust approach to efficient image restoration by leveraging latent representations and consistency flow matching. Its compact architecture and optimized training process allow deployment on resource-constrained devices while maintaining competitive image restoration performance. Future work could explore further architectural adjustments and real-world deployment scenarios to enhance ELIR's applicability.