- The paper's main contribution is the Transformer Flow Approximation Theorem, showing that transformer layers approximate an ODE solution via forward Euler discretization.

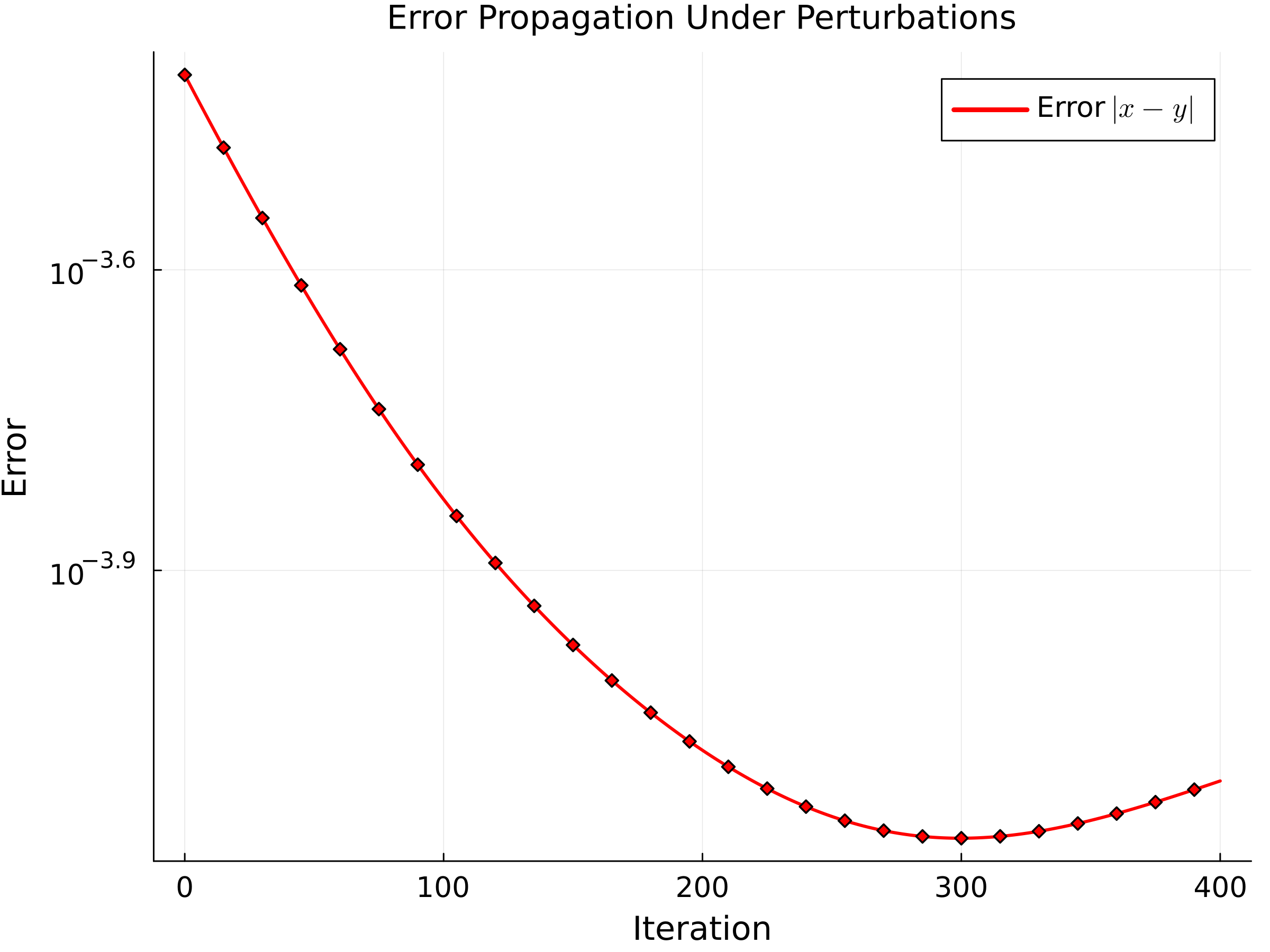

- It demonstrates contractive dynamics under one-sided Lipschitz conditions, ensuring exponential decay of perturbations across layers.

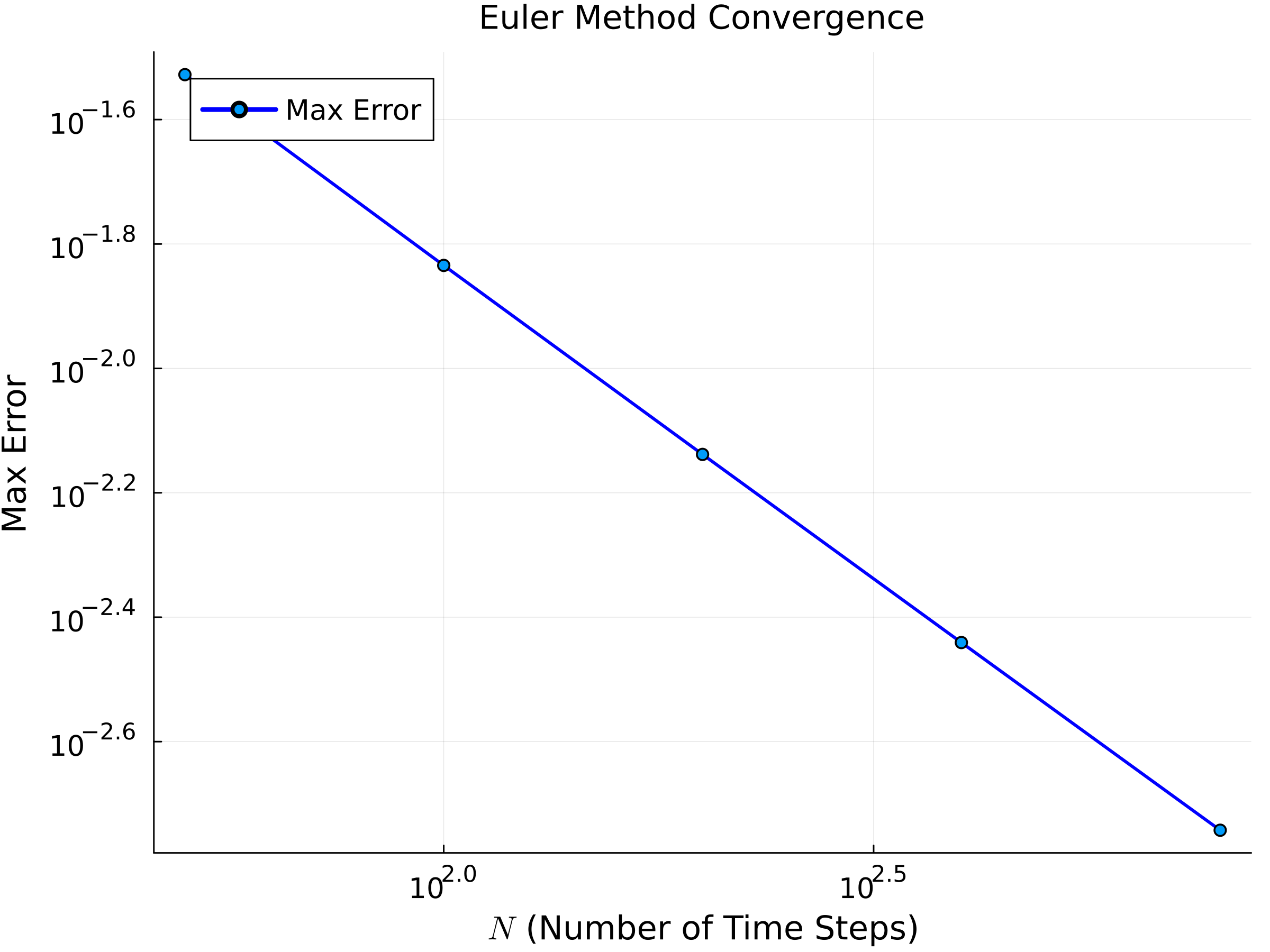

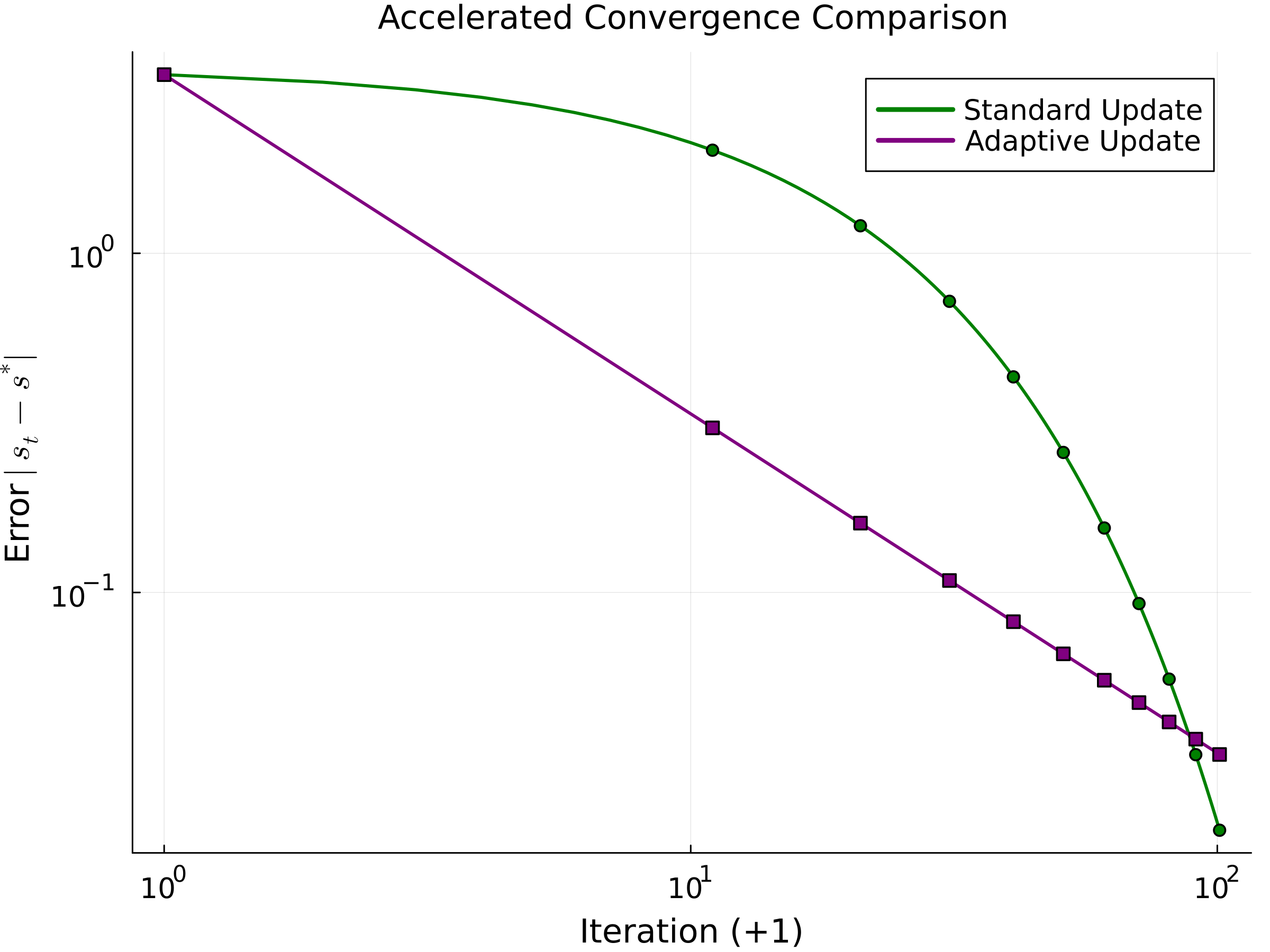

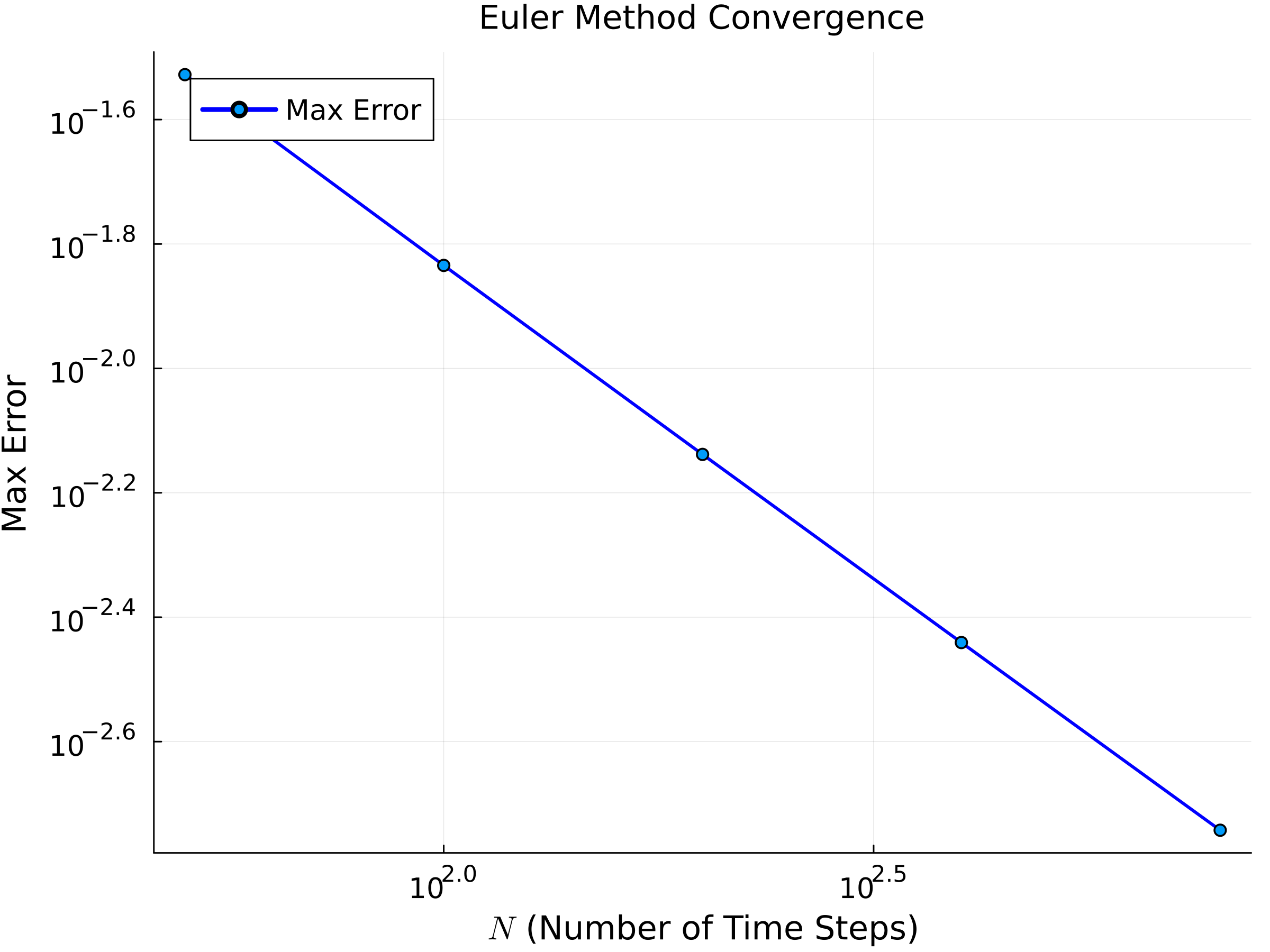

- Empirical validation confirms an O(1/N) convergence rate and accelerated convergence with adaptive mechanisms, linking theory with practical performance.

Introduction

This paper provides a formal analysis of transformer architectures by framing them within the context of continuous dynamical systems. It posits that discrete transformer updates naturally correspond to a forward Euler discretization of an ordinary differential equation (ODE). This interpretation leads to the main theoretical result, the Transformer Flow Approximation Theorem, which states that token representations across transformer layers converge to the solution of the ODE as the number of layers increases. The paper further explores the implications of one-sided Lipschitz continuity, demonstrating contractive properties that ensure exponential decay of perturbations across layers.
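The correspondence described above can be written out explicitly. The block below is a sketch in assumed notation (the symbols x_n, F, h, T, L and M are chosen here for illustration and are not taken from the paper): a residual-style layer update with step size h = T/N is one forward Euler step of the ODE, and the classical global error bound for Euler's method gives the first-order rate.

```latex
% Layer update read as a forward Euler step (assumed notation)
x_{n+1} = x_n + h\,F(x_n), \qquad h = \frac{T}{N}, \qquad n = 0, 1, \dots, N-1
\quad\longleftrightarrow\quad
\frac{dx}{dt} = F\bigl(x(t)\bigr), \qquad x(0) = x_0 .

% Classical Euler bound: F Lipschitz with constant L, \|x''(t)\| \le M on [0, T]
\max_{0 \le n \le N} \bigl\| x_n - x(nh) \bigr\|
\;\le\; \frac{hM}{2L}\,\bigl(e^{LT} - 1\bigr)
\;=\; O\!\left(\frac{1}{N}\right).
```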

The central contribution of the paper is the Transformer Flow Approximation Theorem. Under standard Lipschitz assumptions, transformer layers approximate a continuous flow described by an ODE. The theorem asserts:

- Convergence: As the number of layers grows, the token representations converge uniformly to the unique solution of the ODE derived from the layer update.

- Contractive Dynamics: If the underlying mapping has a one-sided Lipschitz condition with a negative constant, the dynamics are contractive. Perturbations thus decay exponentially across layers.
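The contractive claim follows from a short standard argument, sketched here in assumed notation (μ > 0 is the magnitude of the negative one-sided Lipschitz constant; x(t) and y(t) are two solutions of the same ODE started from different initial states):

```latex
% One-sided Lipschitz condition with negative constant -\mu
\langle F(x) - F(y),\; x - y \rangle \;\le\; -\mu\,\|x - y\|^2 , \qquad \mu > 0 .

% Differentiate the squared distance between two solutions
\frac{d}{dt}\,\|x(t) - y(t)\|^2
= 2\,\bigl\langle F(x(t)) - F(y(t)),\; x(t) - y(t) \bigr\rangle
\;\le\; -2\mu\,\|x(t) - y(t)\|^2 .

% Gronwall's inequality then gives exponential decay of the perturbation
\|x(t) - y(t)\| \;\le\; e^{-\mu t}\,\|x(0) - y(0)\| .
```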

Figure 1: Log-log plot of the maximum error E(N) versus the number of time steps N for the Euler method. The observed O(1/N) convergence rate verifies the theoretical analysis.
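The first-order rate in Figure 1 can be reproduced on a toy problem. The following is a minimal sketch, not the paper's experiment: it assumes the scalar test equation dx/dt = -x on [0, 1] (chosen here because its exact solution is known), and the function name `euler_max_error` is hypothetical.

```python
import math

def euler_max_error(n_steps, t_end=1.0, x0=1.0):
    """Integrate dx/dt = -x with forward Euler; return max |x_n - exact(t_n)|."""
    h = t_end / n_steps
    x = x0
    max_err = 0.0
    for n in range(1, n_steps + 1):
        x = x + h * (-x)                  # one Euler step, analogous to one layer update
        exact = x0 * math.exp(-n * h)     # closed-form solution at t_n = n * h
        max_err = max(max_err, abs(x - exact))
    return max_err

step_counts = [10, 20, 40, 80, 160, 320]
errors = [euler_max_error(n) for n in step_counts]

# Least-squares slope of log E(N) against log N; a first-order method gives about -1.
log_n = [math.log(n) for n in step_counts]
log_e = [math.log(e) for e in errors]
mean_n = sum(log_n) / len(log_n)
mean_e = sum(log_e) / len(log_e)
slope = (sum((a - mean_n) * (b - mean_e) for a, b in zip(log_n, log_e))
         / sum((a - mean_n) ** 2 for a in log_n))

for n, e in zip(step_counts, errors):
    print(f"N={n:4d}  E(N)={e:.3e}")
print(f"fitted log-log slope: {slope:.3f}")
```

Doubling N should roughly halve the error, which is what a slope near -1 on a log-log plot expresses.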

The theorem ties the layer update to broader iterative reasoning frameworks, which suggests routes to accelerated convergence and makes its predictions testable in controlled experiments.

The paper connects its findings with existing research in several areas:

- Transformer Architectures: The continuous perspective offers insights into the stability and expressivity of transformers.

- Neural ODEs: Transformers are shown to be discrete realizations of continuous flows akin to Neural ODEs.

- Dynamical Systems in Deep Learning: Building on prior studies, the paper establishes conditions under which transformer dynamics are contractive.

- Iterative Reasoning Frameworks: By relating transformer updates to iterative methods like mirror descent, the analysis suggests strategies for improved convergence rates.

Empirical Validation

The paper includes experimental results that confirm the theoretical predictions regarding convergence rates and stability:

- Euler Method Convergence: Validated with synthetic data, showing the expected O(1/N) convergence rate.

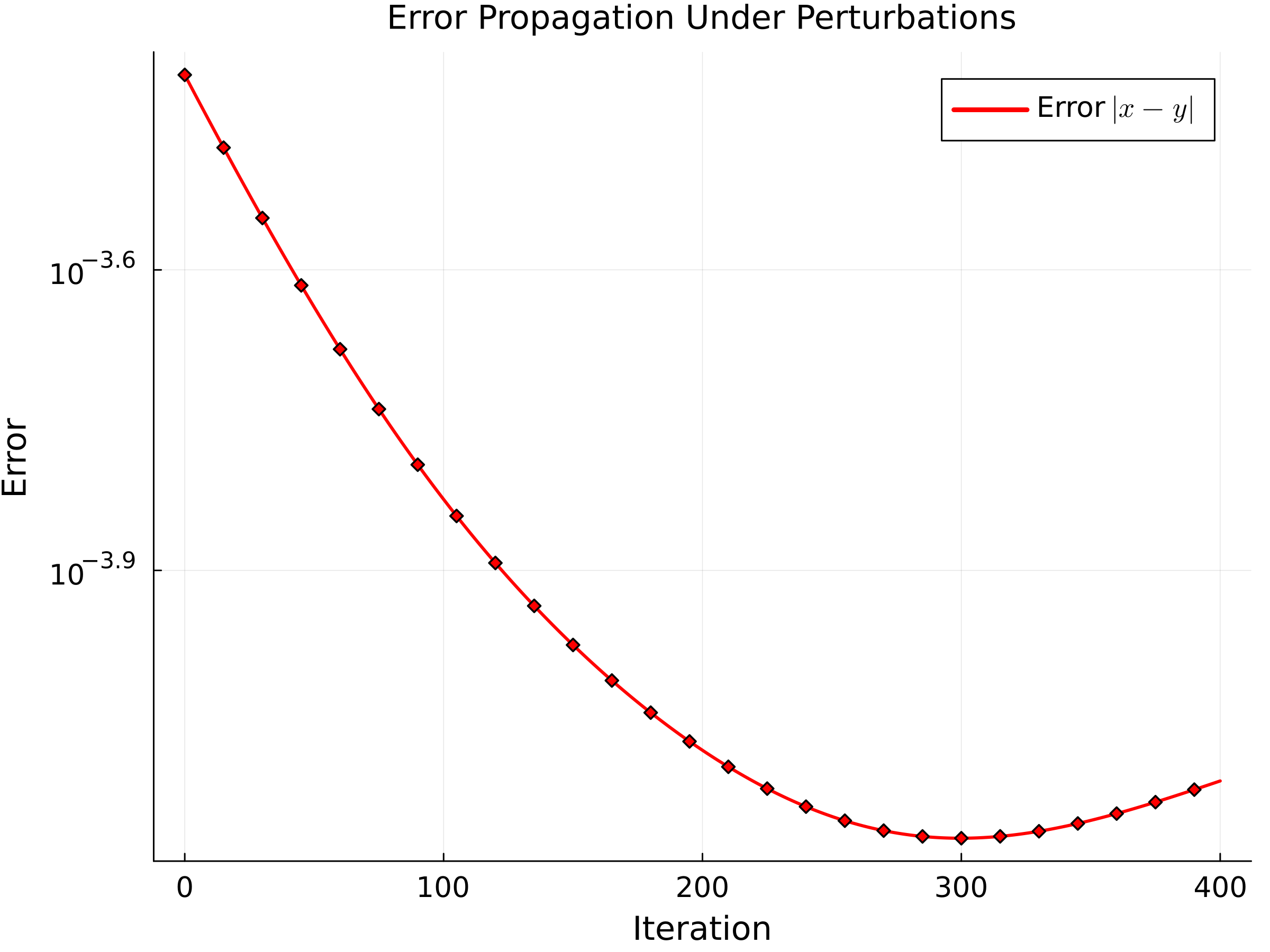

- Robustness to Perturbations: Experiments demonstrate exponential decay of perturbations, supporting theoretical stability results.

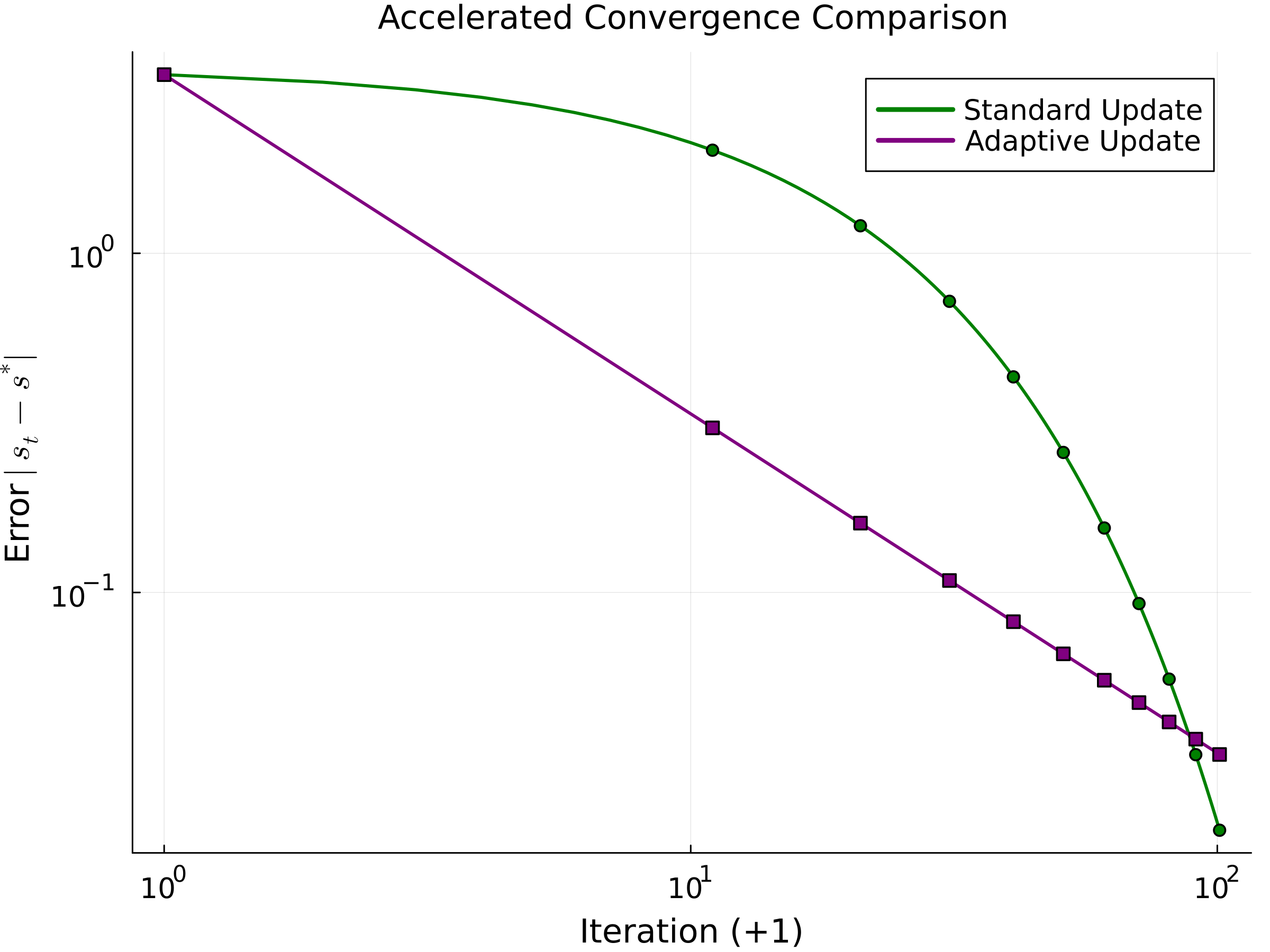

- Accelerated Convergence: Adaptively tuned averaging parameters yield faster convergence in practice, demonstrating the efficacy of iterative feedback mechanisms.

Figure 2: Semilog-y plot of the error ϵ_n versus iteration n. The exponential decay confirms that perturbations are attenuated, consistent with the contractivity of the update.
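The decay shown in Figure 2 can be illustrated with a small numerical sketch. The vector field `F`, the step size `h`, and the initial perturbation below are all assumptions made for this example, not the paper's setup. `F` is chosen so that its derivative lies in [-1, -0.5], which makes it one-sided Lipschitz with constant -0.5.

```python
import math

def F(x):
    # Hypothetical vector field: F'(x) = -1 + 0.5 / cosh(x)^2 lies in [-1, -0.5],
    # so F is one-sided Lipschitz with constant -mu, where mu = 0.5.
    return -x + 0.5 * math.tanh(x)

h = 0.1                    # step size (assumed)
mu = 0.5                   # contraction constant implied by F above
x, y = 1.0, 1.0 + 1e-3     # reference state and perturbed state
eps = [abs(x - y)]         # eps[n] is the perturbation size after n updates

for _ in range(100):
    x = x + h * F(x)       # same update applied to both trajectories
    y = y + h * F(y)
    eps.append(abs(x - y))

# Each step shrinks the gap by a factor of at most (1 - mu * h), so
# eps[n] <= (1 - mu * h)**n * eps[0]: a straight line on a semilog-y plot.
bounds = [eps[0] * (1 - mu * h) ** n for n in range(len(eps))]

for n in (0, 25, 50, 100):
    print(f"n={n:3d}  eps_n={eps[n]:.3e}  bound={bounds[n]:.3e}")
```

The per-step factor follows from the mean value theorem: the update map x + h F(x) has derivative 1 + h F'(x), which stays between 0.9 and 0.95 for this choice of `F` and `h`.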

Figure 3: Log-log plot comparing the convergence errors of the standard update and the adaptive (accelerated) update. The adaptive update achieves an O(1/t^2) convergence rate, indicating faster convergence.
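The gap between a standard and an accelerated update can be sketched on a toy problem. This summary does not specify the paper's adaptive averaging rule, so the example below substitutes Nesterov-style momentum with weight (k - 1)/(k + 2) as a stand-in. The quadratic objective, its dimension, and the function names are assumptions for illustration only.

```python
# Convex quadratic f(x) = 0.5 * sum(lam_i * x_i^2); its minimum value is 0 at x = 0.
dim = 50
lams = [10 ** (-4 + 4 * i / (dim - 1)) for i in range(dim)]   # curvatures in [1e-4, 1]
L = max(lams)                                                  # gradient Lipschitz constant

def f(x):
    return 0.5 * sum(l * xi * xi for l, xi in zip(lams, x))

def grad(x):
    return [l * xi for l, xi in zip(lams, x)]

def standard(x0, steps):
    """Plain gradient step with fixed step size 1/L."""
    x = list(x0)
    history = []
    for _ in range(steps):
        x = [xi - gi / L for xi, gi in zip(x, grad(x))]
        history.append(f(x))
    return history

def accelerated(x0, steps):
    """Nesterov-style update: gradient step from y, then extrapolate with momentum."""
    x = list(x0)
    y = list(x0)
    history = []
    for k in range(1, steps + 1):
        x_new = [yi - gi / L for yi, gi in zip(y, grad(y))]
        beta = (k - 1) / (k + 2)          # iteration-dependent averaging weight
        y = [xn + beta * (xn - xo) for xn, xo in zip(x_new, x)]
        x = x_new
        history.append(f(x))
    return history

x0 = [1.0] * dim
steps = 200
std = standard(x0, steps)
acc = accelerated(x0, steps)

for t in (10, 50, 200):
    print(f"t={t:3d}  standard={std[t - 1]:.3e}  accelerated={acc[t - 1]:.3e}")
```

On this badly conditioned quadratic the accelerated error should fall well below the standard one by t = 200, in line with the O(1/t^2) versus O(1/t) rates that hold in general for smooth convex objectives.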

Practical and Theoretical Implications

The paper opens several avenues for future research and application:

- Architectural Innovations: It suggests incorporating adaptive mechanisms and higher-order discretization methods to improve convergence and stability.

- Model Scalability: Extending these theoretical insights to large-scale systems could enhance performance and interpretability.

- Algorithmic Improvements: Leveraging contractive dynamics in architectural designs could mitigate training complexity and increase robustness.
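To illustrate what a higher-order discretization buys, the sketch below compares forward Euler with Heun's method (a second-order Runge-Kutta scheme) on the assumed test equation dx/dt = -x. Heun's method is picked here only as a familiar example; the summary does not say which higher-order scheme the paper has in mind, and all function names are hypothetical.

```python
import math

def F(x):
    return -x                              # assumed test vector field

def euler_step(x, h):
    return x + h * F(x)                    # first-order update

def heun_step(x, h):
    k1 = F(x)                              # slope at the current state
    k2 = F(x + h * k1)                     # slope at the Euler-predicted state
    return x + 0.5 * h * (k1 + k2)         # average the two slopes

def final_error(step, n_steps, t_end=1.0, x0=1.0):
    """Error at t_end after n_steps applications of the given update."""
    h = t_end / n_steps
    x = x0
    for _ in range(n_steps):
        x = step(x, h)
    return abs(x - x0 * math.exp(-t_end))

step_counts = [10, 20, 40]
euler_errors = [final_error(euler_step, n) for n in step_counts]
heun_errors = [final_error(heun_step, n) for n in step_counts]

for n, e1, e2 in zip(step_counts, euler_errors, heun_errors):
    print(f"N={n:3d}  Euler={e1:.3e}  Heun={e2:.3e}")
```

Doubling the number of steps should roughly halve the Euler error and quarter the Heun error, at the cost of two evaluations of `F` per step.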

Conclusion

The continuous dynamical system approach offers a unified perspective on transformer architectures, linking them to well-studied iterative and feedback-driven processes. This analysis provides theoretical support for both the robustness and expressivity of transformers, suggesting practical strategies for accelerated convergence and architectural enhancements. The results point to concrete directions for further work, including adaptive update mechanisms and higher-order discretization methods.