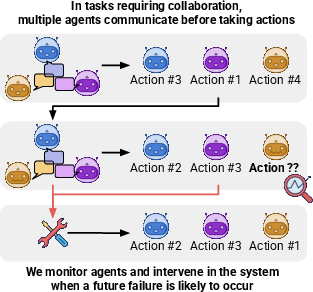

- The paper introduces a live-monitoring system that predicts failures by measuring action prediction uncertainty using entropy metrics.

- The intervention mechanism resets or rolls back communication when the success probability falls below a defined threshold.

- Experimental results show collaboration improvements of up to 20% in diverse settings, highlighting the robustness of the approach.

Preventing Rogue Agents Improves Multi-Agent Collaboration

The paper "Preventing Rogue Agents Improves Multi-Agent Collaboration" (arXiv:2502.05986) addresses the challenge of keeping multi-agent systems robust by detecting rogue agents and mitigating their impact. It proposes monitoring individual agents during action prediction and intervening when a potential error is detected. Experiments in both newly introduced and existing environments show substantial performance improvements, supporting the effectiveness of the method.

Introduction to Multi-Agent Systems and Challenges

Multi-agent systems offer distributed problem solving, with tasks divided among specialized agents. This improves modularity and helps in handling complex environments. A critical drawback is that the failure of a single agent can cause systemic failure because the agents depend on one another. The paper focuses on preemptively identifying rogue agents (agents that tend to deviate and may cause failure) and intervening before they can adversely affect the entire system.

Methodology: Monitoring and Intervention

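The proposed methodology continuously monitors agents to predict potential task failures. It has two components: a live monitor that estimates the probability of task success from the uncertainty of each agent's action predictions, measured with entropy metrics, and an intervention that resets or rolls back communication when that probability falls below a defined threshold.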
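As a rough illustration of entropy-based monitoring with a threshold-triggered intervention, here is a minimal Python sketch. The logistic mapping from mean entropy to success probability, its `weight` and `bias` values, and the function names are assumptions made for this example; they are not taken from the paper, whose monitor may use different features and a different classifier.

```python
import math
from typing import Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of a discrete action distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def success_probability(entropies: Sequence[float],
                        weight: float = -2.0,
                        bias: float = 2.0) -> float:
    """Hypothetical monitor: logistic function of the mean entropy so far.

    The weight and bias are illustrative placeholders; in practice they
    would be fitted on trajectories labeled with task success or failure.
    """
    mean_h = sum(entropies) / len(entropies)
    return 1.0 / (1.0 + math.exp(-(weight * mean_h + bias)))


def should_intervene(entropies: Sequence[float], threshold: float = 0.5) -> bool:
    """Signal an intervention (e.g. a reset) when predicted success is low."""
    return success_probability(entropies) < threshold


# A confident agent (peaked distribution) versus an uncertain one (uniform).
confident = [entropy([0.97, 0.01, 0.01, 0.01])]
uncertain = [entropy([0.25, 0.25, 0.25, 0.25])]
print(should_intervene(confident), should_intervene(uncertain))
```

In this sketch a low-entropy (confident) action distribution keeps the predicted success probability above the threshold, while a near-uniform distribution pushes it below and triggers the intervention.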

Experimental Framework: WhoDunitEnv

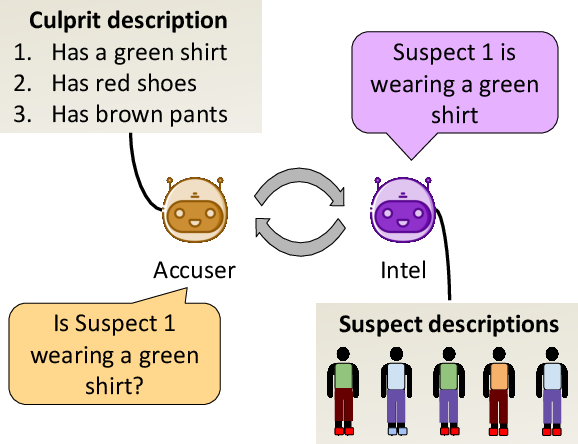

To evaluate the methodology, the paper introduces WhoDunitEnv, a modular environment that simulates multi-agent collaboration tasks inspired by the game "Guess Who." Agents in this environment share information to collectively deduce the identity of a suspect from a lineup. The environment's design allows for control over complexity and communication structure.

Two variants of WhoDunitEnv are tested: one with asymmetric agent roles and another with symmetric roles, each varying in complexity based on suspect numbers, attribute counts, and turn limits.
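To make the "Guess Who" style deduction concrete, the following toy Python sketch narrows a lineup as attribute facts about the culprit are shared. It is not the WhoDunitEnv implementation: the suspect attributes, the `filter_lineup` helper, and the example lineup are all hypothetical.

```python
from typing import Dict, List

Suspect = Dict[str, str]


def filter_lineup(lineup: List[Suspect], attribute: str, value: str,
                  culprit_has_it: bool) -> List[Suspect]:
    """Keep only the suspects consistent with one shared fact about the culprit."""
    return [s for s in lineup if (s.get(attribute) == value) == culprit_has_it]


# Hypothetical lineup with two attributes per suspect.
lineup = [
    {"name": "A", "hat": "red", "glasses": "yes"},
    {"name": "B", "hat": "blue", "glasses": "yes"},
    {"name": "C", "hat": "red", "glasses": "no"},
]

# Fact 1: the culprit wears a red hat. Fact 2: the culprit has no glasses.
remaining = filter_lineup(lineup, "hat", "red", culprit_has_it=True)
remaining = filter_lineup(remaining, "glasses", "yes", culprit_has_it=False)
print([s["name"] for s in remaining])
```

Raising the number of suspects or attributes, or limiting how many facts can be exchanged per turn, would correspond to the complexity controls described above.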

Figure 2: An illustration of WhoDunitEnv. Accuser and Intel collaborate to identify the culprit from a lineup of suspects. Accuser, knowing the culprit's identity, can query and accuse. Intel chooses what and how much information to provide about the suspects.

Results and Analysis

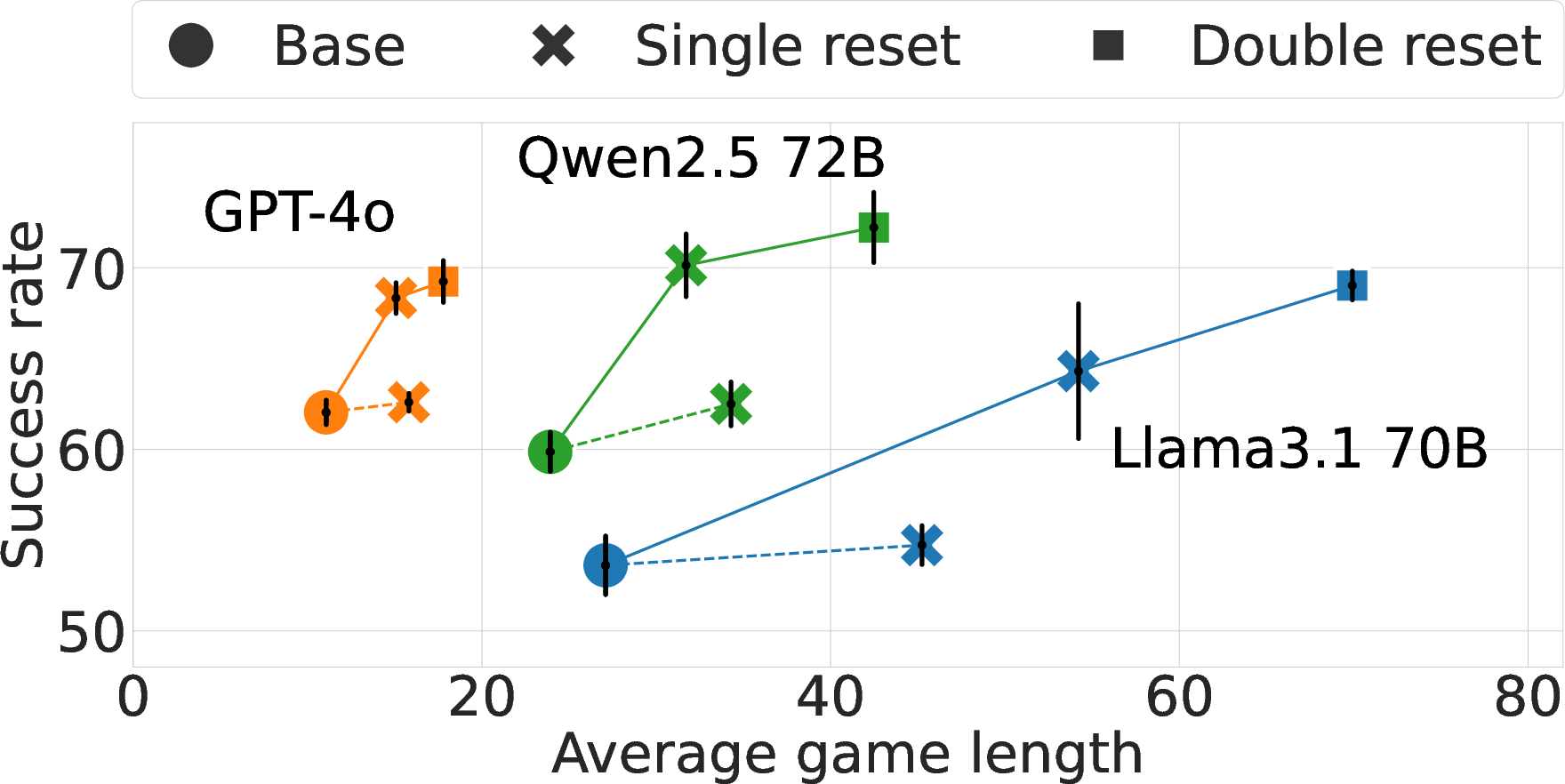

The experiments conducted show significant improvements in collaboration success rates across multiple settings and agent configurations. Key findings include:

- Success-rate improvements of up to 17.4% in WhoDunitEnv and up to 20.0% in other settings such as CodeGen and GovSim.

- Effective identification of potential failure scenarios through the monitoring system, leading to timely interventions.

- Performance maintained under varying complexities and task difficulties, demonstrating the robustness and adaptability of the approach.

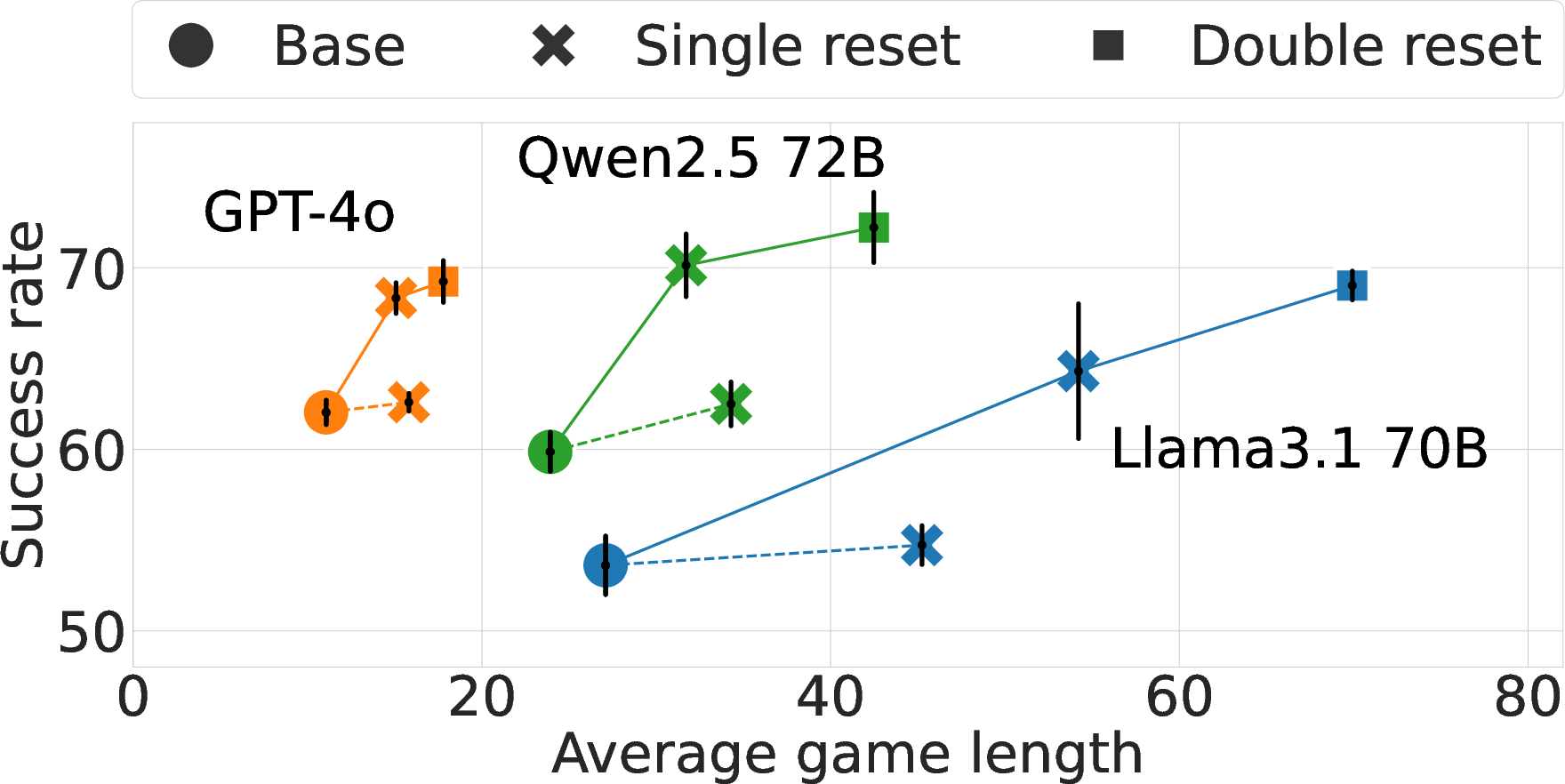

Figure 3: Main results for WhoDunitEnv. Live interventions lead to significant Success-Rate gains for three strong LLMs of up to 10 points, outperforming the random baseline (a cross connected by a dashed line). Resetting twice leads to additional, yet diminishing gains. Black lines indicate standard error.
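For readers who want to reproduce error bars of this kind, the sketch below computes a success rate and its standard error using the usual binomial formula, sqrt(p(1 - p) / n). Whether the paper used exactly this estimator is an assumption, and the function name and the counts in the example are hypothetical.

```python
import math
from typing import Tuple


def success_rate_with_se(successes: int, trials: int) -> Tuple[float, float]:
    """Return the success rate and its binomial standard error."""
    rate = successes / trials
    se = math.sqrt(rate * (1.0 - rate) / trials)
    return rate, se


# Hypothetical counts: 50 successful games out of 100.
rate, se = success_rate_with_se(50, 100)
print(f"success rate = {rate:.2f} +/- {se:.2f}")
```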

Future Implications and Developments

This research has substantial implications for the development of reliable multi-agent systems where robustness against rogue agent behavior is crucial. The method of live monitoring and intervention opens new avenues for enhancing multi-agent system reliability, particularly in high-stakes domains such as automated driving, collaborative robotics, and distributed AI systems.

Future research could focus on refining the detection and intervention mechanisms to further increase efficiency and lower computational overhead. Additionally, extending the framework to more diverse and complex real-world scenarios will be essential to validate and enhance the generalizability of this approach.

Conclusion

The study demonstrates that proactive monitoring coupled with strategic intervention significantly enhances the robustness of multi-agent collaboration systems. The introduction of the WhoDunitEnv environment provides a valuable framework for further experimental studies. This research opens new pathways to build more resilient, reliable AI systems capable of operating effectively in dynamic and complex environments.