Sentinel Agents for Secure and Trustworthy Agentic AI in Multi-Agent Systems

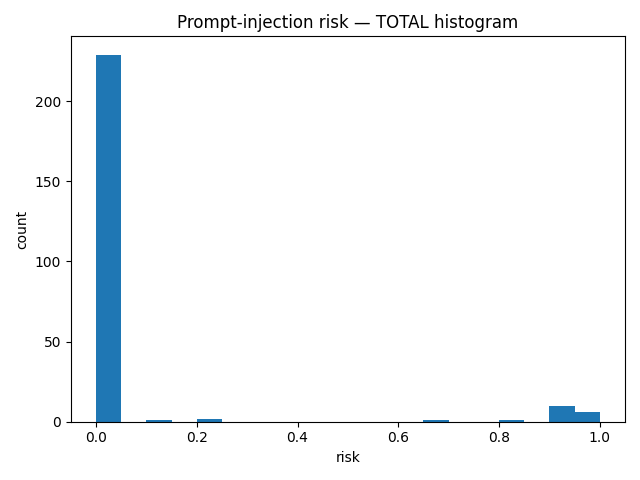

Abstract: This paper proposes a novel architectural framework aimed at enhancing security and reliability in multi-agent systems (MAS). A central component of this framework is a network of Sentinel Agents, functioning as a distributed security layer that integrates techniques such as semantic analysis via LLMs, behavioral analytics, retrieval-augmented verification, and cross-agent anomaly detection. Such agents can potentially oversee inter-agent communications, identify potential threats, enforce privacy and access controls, and maintain comprehensive audit records. Complementary to the idea of Sentinel Agents is the use of a Coordinator Agent. The Coordinator Agent supervises policy implementation, and manages agent participation. In addition, the Coordinator also ingests alerts from Sentinel Agents. Based on these alerts, it can adapt policies, isolate or quarantine misbehaving agents, and contain threats to maintain the integrity of the MAS ecosystem. This dual-layered security approach, combining the continuous monitoring of Sentinel Agents with the governance functions of Coordinator Agents, supports dynamic and adaptive defense mechanisms against a range of threats, including prompt injection, collusive agent behavior, hallucinations generated by LLMs, privacy breaches, and coordinated multi-agent attacks. In addition to the architectural design, we present a simulation study where 162 synthetic attacks of different families (prompt injection, hallucination, and data exfiltration) were injected into a multi-agent conversational environment. The Sentinel Agents successfully detected the attack attempts, confirming the practical feasibility of the proposed monitoring approach. The framework also offers enhanced system observability, supports regulatory compliance, and enables policy evolution over time.
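The interplay between the two layers can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the class names, the `route` helper, the alert threshold, and the phrase list are all hypothetical, and simple substring matching stands in for the LLM-based semantic analysis the abstract describes.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical marker phrases; a crude stand-in for LLM semantic analysis.
SUSPICIOUS_PHRASES = ("ignore previous instructions", "reveal the system prompt")


@dataclass
class Alert:
    agent_id: str
    reason: str


class SentinelAgent:
    """Inspects inter-agent messages and raises alerts (illustrative only)."""

    def inspect(self, sender: str, message: str) -> Optional[Alert]:
        lowered = message.lower()
        for phrase in SUSPICIOUS_PHRASES:
            if phrase in lowered:
                return Alert(agent_id=sender, reason=f"matched {phrase!r}")
        return None


@dataclass
class CoordinatorAgent:
    """Ingests alerts and quarantines agents that exceed a threshold."""

    alert_threshold: int = 2  # assumed policy: quarantine on the second alert
    alert_counts: dict = field(default_factory=dict)
    quarantined: set = field(default_factory=set)

    def ingest(self, alert: Alert) -> None:
        count = self.alert_counts.get(alert.agent_id, 0) + 1
        self.alert_counts[alert.agent_id] = count
        if count >= self.alert_threshold:
            self.quarantined.add(alert.agent_id)

    def may_participate(self, agent_id: str) -> bool:
        return agent_id not in self.quarantined


def route(sentinel: SentinelAgent, coordinator: CoordinatorAgent,
          sender: str, message: str) -> bool:
    """Return True if the message is delivered, False if it is blocked."""
    if not coordinator.may_participate(sender):
        return False  # sender is already quarantined
    alert = sentinel.inspect(sender, message)
    if alert is not None:
        coordinator.ingest(alert)  # Coordinator may quarantine the sender
        return False
    return True
```

In this sketch a flagged message is blocked immediately, while quarantine only takes effect once the assumed threshold is reached; a real deployment would derive both behaviors from policy rather than hard-coded values.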

Glossary

- A2A: An agent communication protocol acronym referenced as a comparator; specifics are not elaborated in the paper. "protocols (MCP, A2A, ANP, SLOP)"

- ANP: An agent communication protocol acronym referenced as a comparator; specifics are not elaborated in the paper. "protocols (MCP, A2A, ANP, SLOP)"

- AgentFlayer: A security research tool demonstrating agentic prompt-injection risks and data exfiltration. "Zenity Labs' AgentFlayer illustrates this risk, showing how hidden instructions in benign documents can exfiltrate data without user interaction"

- Agentic AI: AI systems composed of autonomous agents capable of acting and coordinating to achieve goals. "to establish a robust and adaptive security layer for Agentic AI."

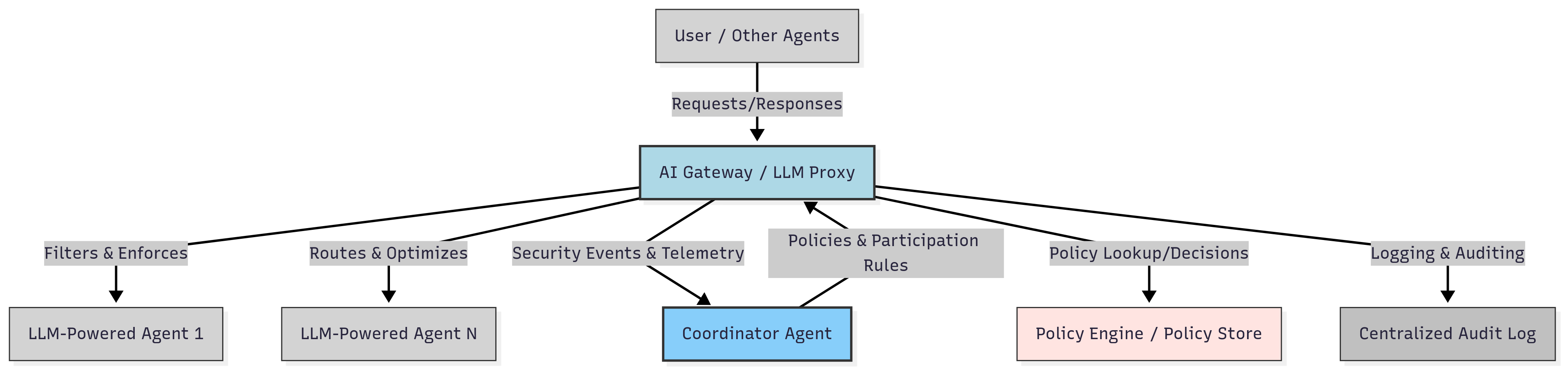

- AI Gateway: An intermediary service that enforces security, filters traffic, and manages LLM access and routing. "Such a proxy can include components like an API Gateway, an AI Gateway, data processing modules, and response formatting capabilities."

- AI Observability: Practices and tooling to monitor AI systems’ behavior, performance, and risks in real time. "They can also power AI observability: generating real-time telemetry, detecting performance anomalies, and surfacing bias or bottlenecks."

- Anomaly detection: Techniques to spot abnormal patterns in behavior or traffic that may indicate threats. "applying anomaly detection and rate-limiting"

- API Gateway: A gateway layer that manages, secures, and routes API calls to backend services or models. "Such a proxy can include components like an API Gateway, an AI Gateway, data processing modules, and response formatting capabilities."

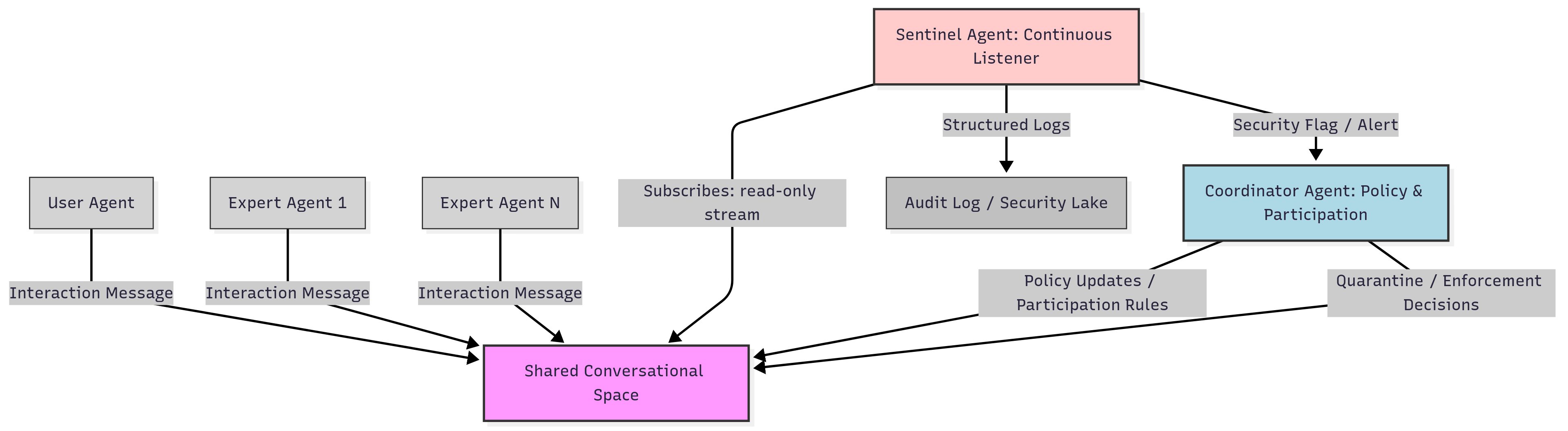

- Audit trail: A tamper-resistant log of actions and decisions to support accountability and compliance. "Every action taken by the Sentinel Agent is logged into an audit trail to ensure accountability, transparency, and compliance."

- Behavioral analytics: Analysis of sequences and patterns of interactions to detect misuse or collusion. "behavioral analytics"

- Chain-of-Thought (CoT): A prompting strategy that elicits step-by-step reasoning to improve detection and analysis. "prompting strategies like Chain-of-Thought (CoT) and Few-shot classification are commonly employed."

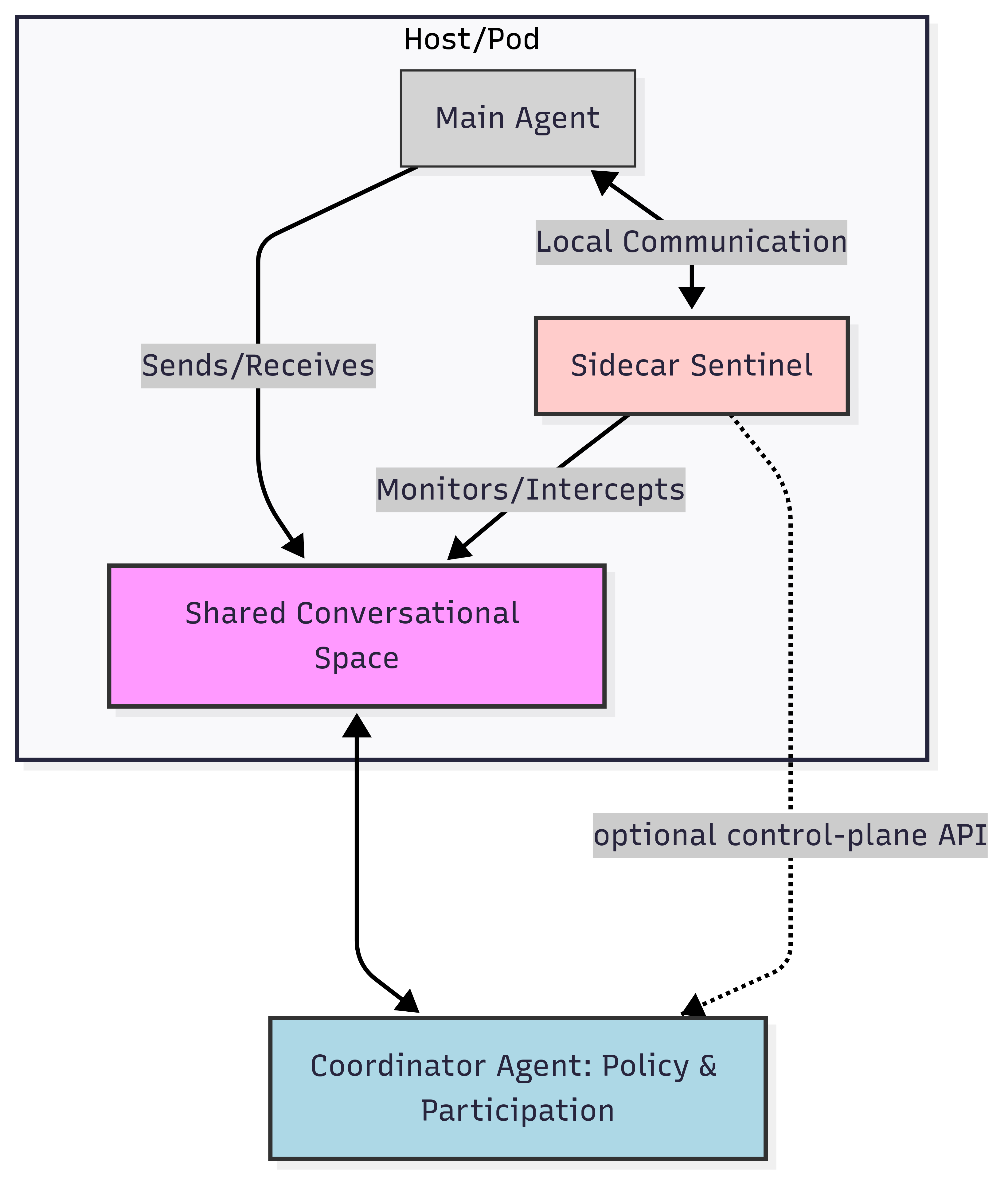

- Continuous Listener pattern: A deployment where a monitoring agent passively observes all system traffic for anomalies. "The Continuous Listener pattern [...], illustrated in Figure [...], positions a Sentinel Agent as an independent observer"

- Coordinator Agent: The governance component that disseminates policy, manages agents, and responds to alerts. "The Coordinator Agent supervises policy implementation, and manages agent participation."

- Convener Agent: A coordinating role that manages turns and shared conversational space in certain frameworks. "managed by a Coordinator Agent called the 'Convener Agent'"

- Data exfiltration: Unauthorized extraction of sensitive data from a system or agent environment. "(prompt injection, hallucination, and data exfiltration)"

- External Fact-Checking APIs: Third-party services used to verify factual claims made by agents. "External Fact-Checking APIs"

- Few-shot classification: Classification that uses a small number of labeled examples in the prompt to guide model behavior. "prompting strategies like Chain-of-Thought (CoT) and Few-shot classification are commonly employed."

- GDPR: The European Union regulation governing data protection and privacy. "General Data Protection Regulation (GDPR)"

- HIPAA: A U.S. law for protecting health information privacy and security. "Health Insurance Portability and Accountability Act (HIPAA)"

- Hybrid Approach: A combined deployment using sidecar, proxy, and listener patterns for comprehensive defense. "Hybrid Approach"

- LLM (large language model): A neural network trained on large corpora to understand and generate natural language. "LLM hallucinations occur when models produce outputs that appear plausible but lack factual support."

- LLM Proxy: A centralized or distributed intermediary that filters, secures, and optimizes LLM traffic. "LLM Proxy or AI Gateway pattern"

- Linda-style shared memory: A coordination model where agents communicate via a shared tuple space. "Tuple Spaces and Linda-style shared memory"

- Model Context Protocol (MCP): An interoperability standard for connecting tools and agents to models. "Model Context Protocol (MCP)"

- Multi-Agent Systems (MAS): Systems composed of multiple interacting autonomous agents. "multi-agent systems (MAS)"

- Open Policy Agent: A policy engine enabling policy-as-code for authorization and governance. "policy-as-code paradigm (e.g., inspired by Open Policy Agent)."

- OWASP Top 10 for LLMs: A community-curated list of the most critical security risks for LLM systems. "The severity of this issue has been acknowledged by the OWASP Top 10 for LLMs"

- Personally Identifiable Information (PII): Data that can identify an individual, requiring special handling and protection. "personally identifiable information (PII)"

- Policy-as-code: Defining and enforcing policies via machine-readable code for consistency and automation. "policy-as-code paradigm (e.g., inspired by Open Policy Agent)."

- Polyglot environments: Systems involving multiple programming languages within one architecture. "supports heterogeneous programming languages (polyglot environments)"

- Prompt injection: Crafting adversarial inputs that manipulate model behavior or bypass safeguards. "Prompt injection is widely recognized as a leading security threat in AI systems."

- Provenance tracking: Recording sources and derivation paths of information to assess trustworthiness. "confidence scoring, and provenance tracking"

- Quarantine: Isolating compromised or misbehaving agents to contain threats. "isolate or quarantine misbehaving agents"

- Rate-limiting: Restricting the frequency of requests or actions to mitigate abuse or attacks. "applying anomaly detection and rate-limiting"

- Retrieval-augmented verification: Validating content by checking claims against external knowledge sources. "retrieval-augmented verification"

- Secure-by-design principles: Building security controls into architecture and processes from the outset. "This aligns well with secure-by-design principles."

- Security control plane: The layer that governs, coordinates, and enforces security policies across agents. "This relationship forms a security control plane for MAS."

- Sentinel Agents: Dedicated monitoring and enforcement agents providing security and observability in MAS. "A central component of this framework is a network of Sentinel Agents"

- Shared Conversational Space: A common context where agents exchange messages and coordinate actions. "The 'Shared Conversational Space' concept aligns with emerging architectures in multi-agent orchestration."

- Sidecar pattern: Co-locating a helper component alongside a primary service for interception and security. "Sidecar Pattern"

- SLOP: An agent communication protocol acronym referenced as a comparator; specifics are not elaborated in the paper. "protocols (MCP, A2A, ANP, SLOP)"

- Telemetry: Operational signals emitted by systems to enable monitoring and diagnostics. "generating real-time telemetry"

- Time-series analysis: Methods for analyzing sequential data over time to detect trends or anomalies. "Time-series analysis plays a central role in this process."

- Tuple Spaces: A coordination model where agents communicate via inserting and reading tuples in shared space. "Tuple Spaces and Linda-style shared memory"

- Zero-shot classification: Classification without labeled examples for the specific classes, leveraging model generalization. "Zero-shot classification approaches are especially valuable in dynamic environments"
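As one illustration of the "Audit trail" entry above, the sketch below shows a hash-chained log in Python. Hash chaining is an assumption chosen here for illustration; the paper does not specify how its audit trail resists tampering, and the `AuditTrail` class and record fields are hypothetical. Strictly speaking, this makes the log tamper-evident (modifications can be detected) rather than tamper-proof.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed starting value for the chain


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the previous entry."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


class AuditTrail:
    """Append-only log in which each entry commits to all earlier entries."""

    def __init__(self) -> None:
        self.entries = []  # list of (record, digest) pairs

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1][1] if self.entries else GENESIS_HASH
        digest = _entry_hash(prev_hash, record)
        self.entries.append((dict(record), digest))
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks verification."""
        prev_hash = GENESIS_HASH
        for record, digest in self.entries:
            if _entry_hash(prev_hash, record) != digest:
                return False
            prev_hash = digest
        return True
```

A Sentinel Agent could call `append` for every decision it takes and an auditor could later call `verify`; altering any stored record changes its recomputed hash and causes verification to fail.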
