- The paper introduces CaDDi, unifying sequential and temporal modeling via a non-Markovian discrete diffusion framework to enhance expressiveness and control.

- The paper employs a hybrid approach with independent state corruption and causal inference to mitigate error accumulation and optimize denoising.

- The paper demonstrates superior benchmark performance, highlighting improved control, efficiency, and adaptability in complex sequence generation tasks.

Non-Markovian Discrete Diffusion with Causal LLMs

Introduction

The paper introduces CaDDi, a causal discrete diffusion model that unifies sequential and temporal modeling in a single framework. Discrete diffusion models have historically lagged behind autoregressive transformers in expressiveness for sequence modeling, and CaDDi is aimed at closing that gap. By extending discrete diffusion to a non-Markovian process and leveraging pretrained LLMs, the approach enables more expressive and controllable sequence generation without requiring architectural changes.

Non-Markovian Discrete Diffusion Framework

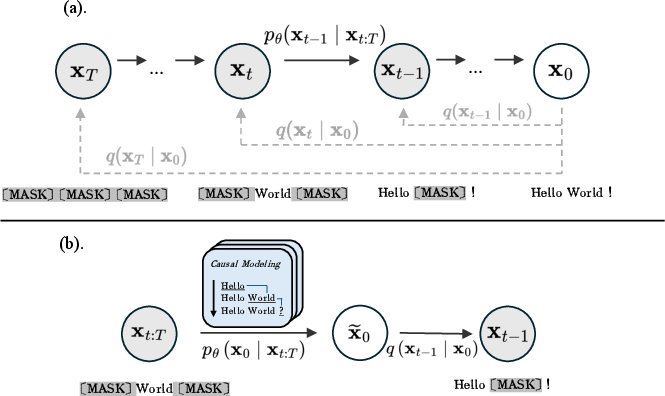

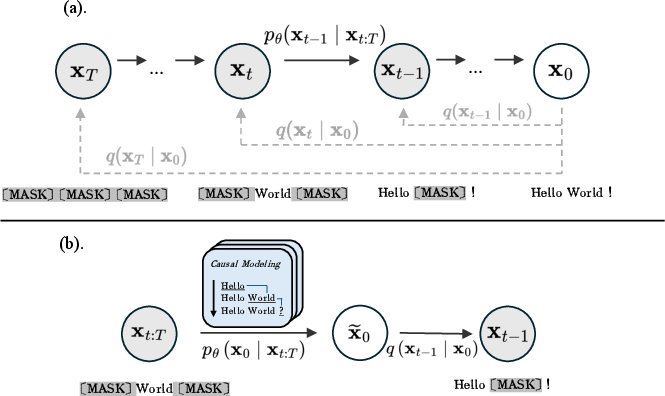

Traditional diffusion models rely on a Markovian assumption: each timestep depends only on the immediately preceding state. CaDDi drops this assumption and conditions each denoising step on the entire generative trajectory, which makes inference more robust and aligns the model with causal language modeling.

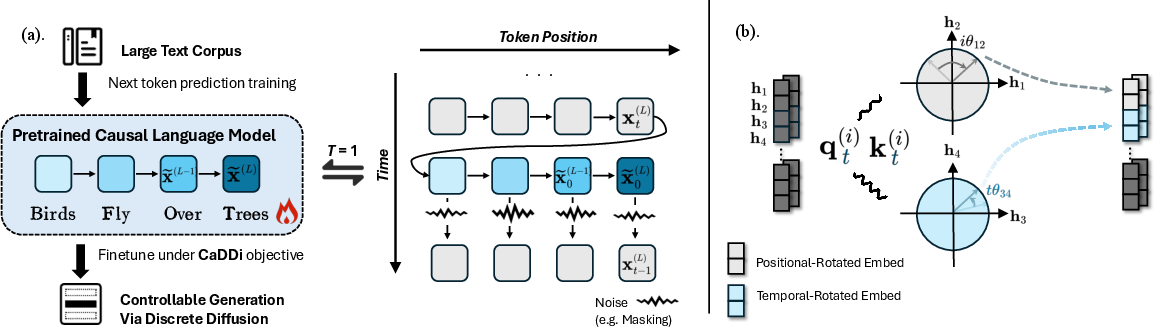

Figure 1: Framework of non-Markovian discrete diffusion, where noise is added independently to the initial state at each timestep.

Instead of modeling one Markovian step at a time, the framework conditions on the whole trajectory generated so far. This mitigates the error accumulation inherent in step-by-step denoising and improves the denoising process. It also aligns CaDDi naturally with autoregressive models, which correspond to the special case T=1.
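The independent noising described in Figure 1 can be sketched as follows. This is a minimal illustration, not the paper's implementation: the linear schedule `keep_prob`, the `MASK` id, and the use of a purely absorbing kernel are assumptions made for the example.

```python
import numpy as np

MASK = -1  # hypothetical id for the absorbing (mask) token


def keep_prob(t, T):
    """Hypothetical linear schedule: probability that a token survives at step t."""
    return 1.0 - t / T


def forward_trajectory(x0, T, rng):
    """Return [x_1, ..., x_T], where every x_t is a corruption of x0 itself."""
    x0 = np.asarray(x0)
    states = []
    for t in range(1, T + 1):
        # Fresh noise at every step: x_t is NOT derived from x_{t-1}.
        keep = rng.random(x0.shape) < keep_prob(t, T)
        states.append(np.where(keep, x0, MASK))
    return states


rng = np.random.default_rng(0)
x0 = np.array([5, 2, 7, 1, 3, 8])
traj = forward_trajectory(x0, T=4, rng=rng)
for t, x_t in enumerate(traj, start=1):
    print(t, x_t)
```

Because each state is drawn from the clean sequence directly, a token masked at one step can be visible again at a later, noisier step. That is why the full trajectory carries more information than its most recent state alone.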

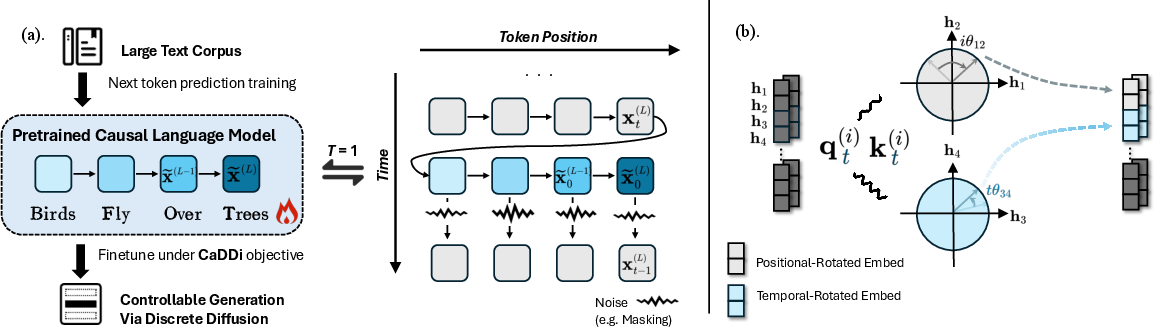

Implementation and Inference

CaDDi uses a hybrid non-Markovian forward process. Rather than building each state solely on its immediate predecessor, later states complement the information carried by earlier ones. Corruption is applied independently at each timestep, which produces diverse noisy states that better preserve informative patterns. The noising step combines absorbing and uniform kernels.
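One way to combine an absorbing kernel with a uniform kernel is shown below. The mixing rule and the parameter names (`keep_p`, `mask_frac`, `mask_id`) are illustrative assumptions; the text above does not specify how the two kernels are weighted.

```python
import numpy as np


def hybrid_corrupt(x0, keep_p, mask_frac, vocab_size, mask_id, rng):
    """Corrupt x0 token-wise with a mixture of absorbing and uniform noise.

    Each token is kept with probability keep_p. A corrupted token becomes
    mask_id with probability mask_frac (absorbing kernel), otherwise it is
    replaced by a uniformly random vocabulary token (uniform kernel).
    """
    x0 = np.asarray(x0)
    keep = rng.random(x0.shape) < keep_p
    absorb = rng.random(x0.shape) < mask_frac
    random_tokens = rng.integers(0, vocab_size, size=x0.shape)
    noised = np.where(absorb, mask_id, random_tokens)
    return np.where(keep, x0, noised)


rng = np.random.default_rng(0)
vocab_size, mask_id = 10, 10  # hypothetical: mask id sits just past the vocabulary
x0 = np.array([5, 2, 7, 1, 3, 8])
x_t = hybrid_corrupt(x0, keep_p=0.5, mask_frac=0.7,
                     vocab_size=vocab_size, mask_id=mask_id, rng=rng)
print(x_t)
```

Setting `mask_frac=1.0` recovers a purely absorbing kernel and `mask_frac=0.0` a purely uniform one, so the sketch covers both endpoints of the hybrid.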

In addition, the inference model is causal and predicts the clean data directly under an x0-parameterization, which yields an efficient denoising path. Training maximizes the ELBO under the variational framework, giving a robust objective for reconstructing data over a long trajectory.
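The x0-parameterization can be written out as a reverse step. The following is a sketch under one assumption: since noise is added independently to the initial state at each timestep, $x_{t-1}$ is taken to be conditionally independent of $x_{t:T}$ given $x_0$. The symbols $q$, $p_\theta$, and $\hat{x}_0$ are notation chosen here for illustration.

```latex
% Reverse step: predict the clean sequence from the trajectory, then re-noise it.
p_\theta(x_{t-1} \mid x_{t:T})
  = \sum_{\hat{x}_0} q(x_{t-1} \mid \hat{x}_0)\, p_\theta(\hat{x}_0 \mid x_{t:T})
```

Under this form the network only has to output a distribution over clean sequences. The step to $x_{t-1}$ reuses the known forward kernel $q$.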

Figure 2: Inference paradigm for a standard causal LLM versus CaDDi with autoregressive denoising.

Inference proceeds autoregressively: each denoising step conditions on the states generated so far along the non-Markovian path, which improves generation expressiveness and the handling of temporal dependencies.
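The timestep-level loop can be sketched as below. It is a simplified illustration: `denoiser` is a hypothetical callable standing in for the causal LM, the linear `keep_prob` schedule and `MASK` id are assumptions, and token-level autoregressive decoding inside each step is omitted.

```python
import numpy as np

MASK = -1  # hypothetical id for the absorbing (mask) token


def keep_prob(t, T):
    """Hypothetical linear schedule: probability that a token is visible at step t."""
    return 1.0 - t / T


def sample(denoiser, seq_len, T, rng):
    """Denoise from x_T down to x_0, conditioning on the whole trajectory so far."""
    trajectory = [np.full(seq_len, MASK)]       # x_T: fully masked
    for t in range(T, 0, -1):
        x0_hat = denoiser(trajectory)           # predict clean data from x_{t:T}
        keep = rng.random(seq_len) < keep_prob(t - 1, T)
        x_prev = np.where(keep, x0_hat, MASK)   # re-noise the prediction to level t-1
        trajectory.append(x_prev)               # context grows: x_T, ..., x_{t-1}
    return trajectory[-1], trajectory


# Toy stand-in for the model: always predicts a fixed target sequence
# and records how many states it was shown on each call.
target = np.array([4, 1, 3, 2])
calls = []


def oracle(trajectory):
    calls.append(len(trajectory))
    return target


rng = np.random.default_rng(0)
x0_final, trajectory = sample(oracle, seq_len=4, T=3, rng=rng)
print(x0_final, calls)
```

The denoiser is called once per timestep, so T in this sketch plays the role of the number of function evaluations, and the context passed to the model grows by one state per call.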

Evaluation

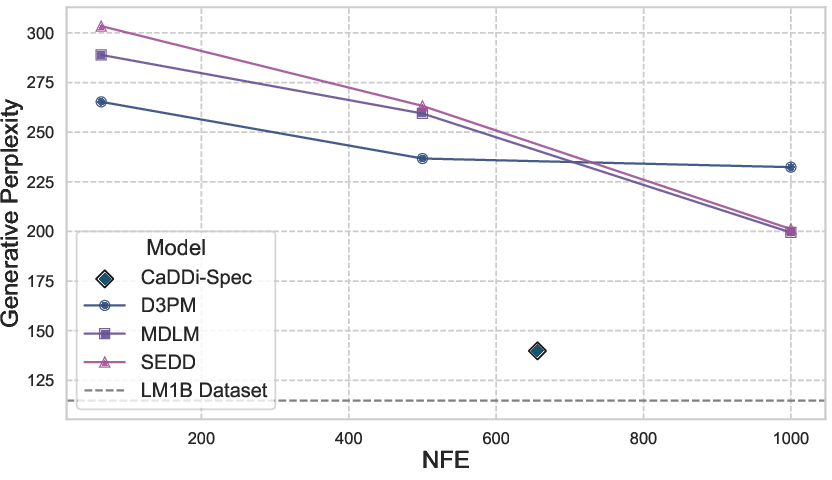

CaDDi is evaluated on several benchmarks covering both biological sequences and natural language tasks, where it is reported to outperform competing methods. The model also allows finer control over generated outputs, supporting tasks such as text infilling and generation from arbitrary positions, which traditional autoregressive models struggle with.

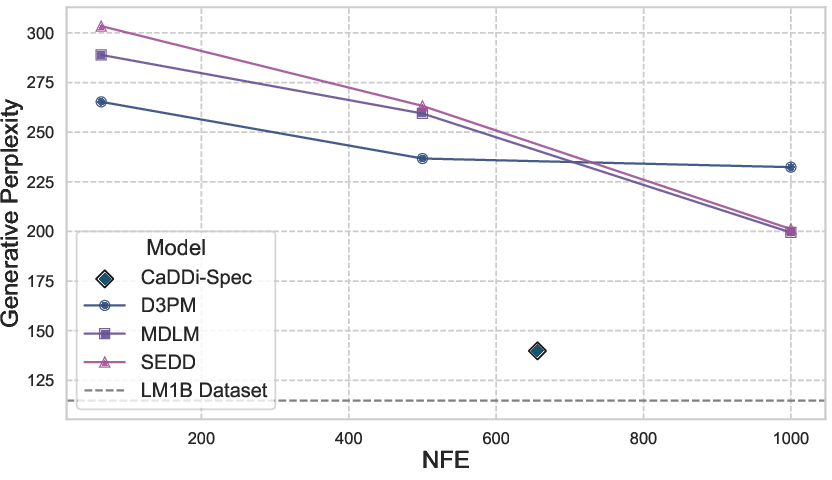

Figure 3: Performance comparison of several discrete diffusion models on LM1B across the number of function evaluations (NFE), with CaDDi showing improved perplexity as NFE increases.

The model achieves superior scores across a range of metrics, reflecting its control and precision in sequence modeling tasks. Its semi-speculative decoding strategy keeps this from coming at the cost of inference speed.

Implications and Future Directions

Combining non-Markovian diffusion with causal language modeling, as CaDDi does, offers a promising path for improving structured sequence modeling. Because it can draw on large pretrained LLMs without structural changes, CaDDi could play an important role in future work on controllable and expressive sequence generation.

The work also opens several directions for further study: refining the hybrid noising procedure, extending diffusion models to domains traditionally served by autoregressive models, and improving computational efficiency through further optimization of semi-speculative decoding.

Conclusion

CaDDi bridges discrete diffusion models and autoregressive modeling, enabling structured, controlled, and expressive sequence generation that builds on existing LLM capabilities. The approach improves the balance between expressiveness and inference efficiency and provides a foundation for further research on sequence modeling.