KGGen: Extracting Knowledge Graphs from Plain Text with Language Models

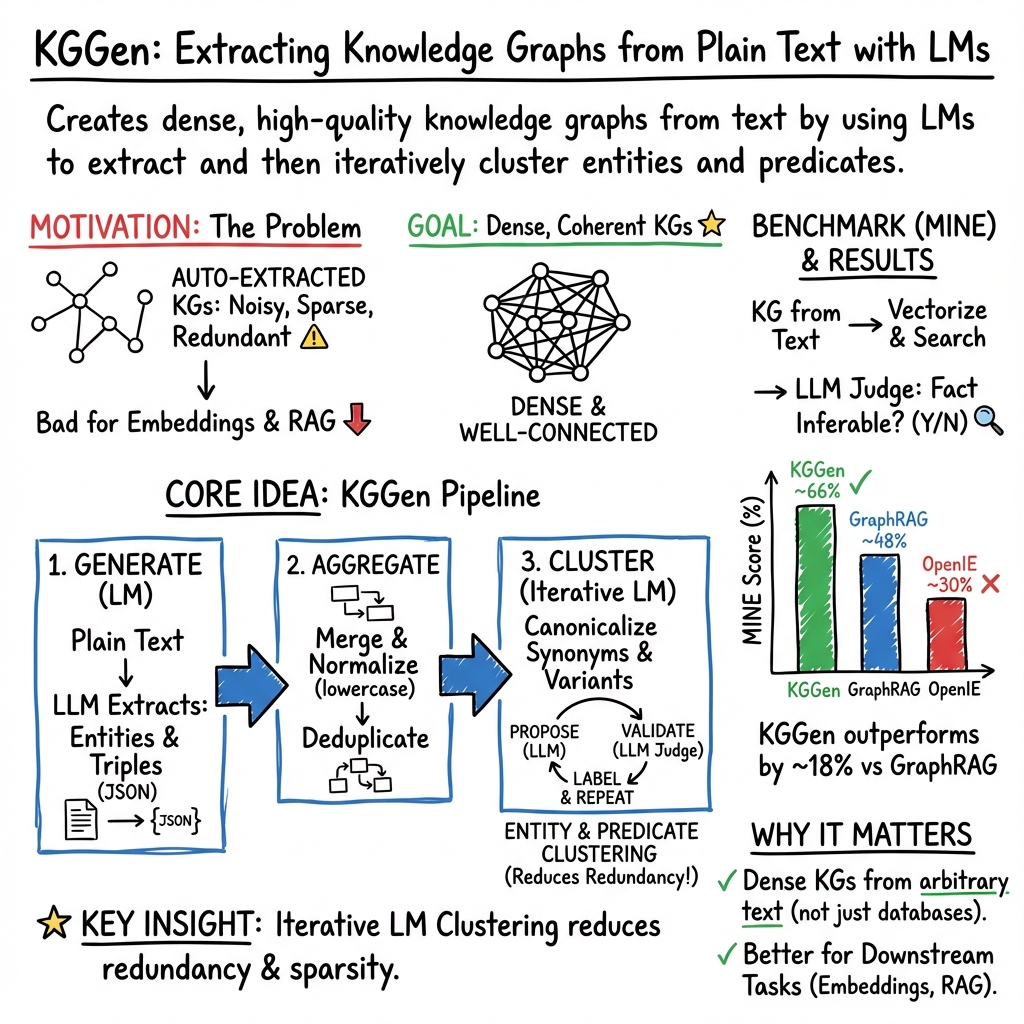

Abstract: Recent interest in building foundation models for KGs has highlighted a fundamental challenge: knowledge-graph data is relatively scarce. The best-known KGs are primarily human-labeled, created by pattern-matching, or extracted using early NLP techniques. While human-generated KGs are in short supply, automatically extracted KGs are of questionable quality. We present a solution to this data scarcity problem in the form of a text-to-KG generator (KGGen), a package that uses LLMs to create high-quality graphs from plaintext. Unlike other KG extractors, KGGen clusters related entities to reduce sparsity in extracted KGs. KGGen is available as a Python library (pip install kg-gen), making it accessible to everyone. Along with KGGen, we release the first benchmark, Measure of Information in Nodes and Edges (MINE), that tests an extractor's ability to produce a useful KG from plain text. We benchmark our new tool against existing extractors and demonstrate far superior performance.

Explain it Like I'm 14

What is this paper about?

This paper introduces KGGen, a tool that turns plain text (like articles or documents) into a “knowledge graph.” A knowledge graph is like a big web of facts. Each fact is written as a triple: subject–predicate–object. For example: “Mars — is a — planet.” These triples connect together, forming a map of related ideas that computers can use to search, reason, and answer questions.
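As a minimal sketch of the triple idea (not KGGen's own data model), facts can be held as plain Python tuples and indexed by subject. The two extra facts about the Sun and the helper name `build_adjacency` are illustrative assumptions, not taken from the paper.

```python
from collections import defaultdict

# Each fact is a (subject, predicate, object) triple.
# Only the first triple comes from the text; the others are illustrative.
triples = [
    ("Mars", "is a", "planet"),
    ("Mars", "orbits", "Sun"),
    ("Sun", "is a", "star"),
]


def build_adjacency(triples):
    """Index triples by subject so outgoing facts can be looked up directly."""
    adjacency = defaultdict(list)
    for subject, predicate, obj in triples:
        adjacency[subject].append((predicate, obj))
    return dict(adjacency)


graph = build_adjacency(triples)
print(graph["Mars"])
```

Following the edge from "Mars" to "Sun" and then from "Sun" to "star" is the kind of multi-step lookup that a connected set of triples makes possible.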

KGGen solves a major problem: good, complete knowledge graphs are hard to find and often incomplete. The authors show how to generate high-quality graphs automatically from text, and they also create a new test, called MINE, to measure how well different tools do this job.

What questions did the researchers ask?

To make their work useful and measurable, the authors focused on simple, practical goals:

- Can we automatically build a useful, accurate knowledge graph from plain text?

- Can we reduce messy duplicates and synonyms so the graph is dense and well-connected?

- Can we create a fair benchmark (MINE) to compare different text-to-graph tools?

- Does KGGen beat popular existing methods on this benchmark?

How did they do it?

Think of building a knowledge graph like organizing a huge set of sticky notes across a wall:

- Each sticky note is an “entity” (like “New York City” or “photosynthesis”).

- Strings connecting sticky notes represent “relations” (like “is located in,” “causes,” or “depends on”).

KGGen uses an LLM (a smart text-reading AI) in three stages:

Step 1: Extract entities and relationships (“generate”)

- First, the AI reads the text and lists important entities.

- Next, it reads the text again and writes down relations between those entities as triples (subject–predicate–object).

- Doing this in two passes helps keep the entities and their relations consistent.

Step 2: Combine graphs from multiple sources (“aggregate”)

- If you have multiple documents, KGGen collects all the entities and relations, merges duplicates like capitalization differences (“NASA” vs “nasa”), and creates one big graph.

- This makes the graph less repetitive and easier to use.
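The aggregation step can be pictured with a small sketch, assuming that normalization means lowercasing and trimming whitespace. The function name `aggregate` and the sample triples are hypothetical and do not reproduce the library's implementation.

```python
def aggregate(graphs):
    """Merge per-document triple lists, treating labels that differ only by case as equal."""
    merged = set()
    for triples in graphs:
        for subject, predicate, obj in triples:
            # Assumed normalization: lowercase and strip surrounding whitespace.
            merged.add((
                subject.lower().strip(),
                predicate.lower().strip(),
                obj.lower().strip(),
            ))
    return sorted(merged)


doc_a = [("NASA", "launched", "Voyager 1")]
doc_b = [
    ("nasa", "launched", "voyager 1"),
    ("nasa", "is headquartered in", "Washington, D.C."),
]

combined = aggregate([doc_a, doc_b])
for triple in combined:
    print(triple)
```

The two "launched" triples collapse into one. The same lowercasing would also merge labels such as "Apple" and "apple", so a real pipeline may need a more careful rule.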

Step 3: Cluster similar entities and edges (“cluster”)

- This is the special sauce. The AI groups different words that mean the same thing—like “NYC” and “New York City,” or “weaknesses,” “vulnerabilities,” and “being vulnerable.”

- It does this iteratively, like a careful referee: propose a cluster, check it, label it, repeat.

- The same idea applies to relations: it merges overly specific or similar relation types into clearer, more general ones.

- The result is a denser, cleaner graph with fewer isolated nodes, which helps other algorithms learn from it.
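Once clusters have been decided, applying them to a graph is mechanical. The sketch below assumes the clusters are already given as hand-written alias sets; in KGGen an LLM proposes and checks them, which is not reproduced here. The name `apply_clusters` and the sample data are hypothetical.

```python
def apply_clusters(triples, entity_clusters, relation_clusters):
    """Rewrite triples so every alias is replaced by its cluster's canonical label."""
    entity_alias = {
        alias: canonical
        for canonical, aliases in entity_clusters.items()
        for alias in aliases
    }
    relation_alias = {
        alias: canonical
        for canonical, aliases in relation_clusters.items()
        for alias in aliases
    }
    rewritten = set()
    for subject, predicate, obj in triples:
        rewritten.add((
            entity_alias.get(subject, subject),
            relation_alias.get(predicate, predicate),
            entity_alias.get(obj, obj),
        ))
    return sorted(rewritten)


# Hand-written clusters standing in for the LLM's proposals (assumption).
entity_clusters = {"New York City": {"NYC", "New York City"}}
relation_clusters = {"is located in": {"is located in", "is situated in"}}

triples = [
    ("Central Park", "is located in", "NYC"),
    ("Central Park", "is situated in", "New York City"),
    ("NYC", "is located in", "New York State"),
]

clustered = apply_clusters(triples, entity_clusters, relation_clusters)
for triple in clustered:
    print(triple)
```

Three input triples become two, and both now share the single node "New York City" instead of being split across two spellings.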

Why is “dense” important? Imagine a city map with lots of roads connecting places versus a map with almost no roads. The dense map lets you actually get from A to B. Dense graphs help AI systems retrieve facts and reason better.
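One way to put a number on "dense" is the standard undirected graph density: the share of possible node pairs that are actually connected. This metric and the helper `graph_stats` are assumptions chosen for illustration; the text does not say which density measure the authors use.

```python
def graph_stats(entities, triples):
    """Return isolated nodes and undirected density (connected pairs / possible pairs)."""
    connected = set()
    pairs = set()
    for subject, _, obj in triples:
        connected.update((subject, obj))
        if subject != obj:
            pairs.add(frozenset((subject, obj)))
    n = len(entities)
    possible = n * (n - 1) / 2
    return {
        "isolated": sorted(set(entities) - connected),
        "density": len(pairs) / possible if possible else 0.0,
    }


# Before clustering: "NYC" and "New York City" are separate nodes.
before = graph_stats(
    ["NYC", "New York City", "Central Park", "Hudson River"],
    [
        ("Central Park", "is located in", "NYC"),
        ("Hudson River", "flows past", "New York City"),
    ],
)

# After clustering: both spellings are merged into one node.
after = graph_stats(
    ["New York City", "Central Park", "Hudson River"],
    [
        ("Central Park", "is located in", "New York City"),
        ("Hudson River", "flows past", "New York City"),
    ],
)

print(before["density"], after["density"])
```

Merging the two spellings keeps the same two facts but removes a node, so density rises from one third to two thirds and the park and the river become reachable from each other.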

What did they find?

The authors created a new benchmark called MINE (Measure of Information in Nodes and Edges) to fairly test how well a tool captures facts from text.

Here’s how MINE works, simply:

- They generated 100 short articles (about 1,000 words each) on varied topics.

- From each article, they collected 15 true facts.

- They built knowledge graphs from the articles using different tools.

- They then asked: “Can this graph help infer each fact?” and scored how often the answer was yes.
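The scoring loop can be sketched as follows. This is a simplified illustration, not the benchmark code: the two-dimensional vectors stand in for sentence embeddings, `judge` is a stub in place of the LLM judge, and the values of `k` and `hops` are assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def top_k_nodes(fact_vec, node_vecs, k):
    """Return the k node labels most similar to the fact vector."""
    ranked = sorted(node_vecs, key=lambda n: cosine(fact_vec, node_vecs[n]), reverse=True)
    return ranked[:k]


def neighborhood(triples, seeds, hops=2):
    """Collect every triple within `hops` edges of the seed nodes."""
    frontier, seen, kept = set(seeds), set(seeds), set()
    for _ in range(hops):
        reached = set()
        for subject, predicate, obj in triples:
            if subject in frontier or obj in frontier:
                kept.add((subject, predicate, obj))
                reached.update((subject, obj))
        frontier = reached - seen
        seen |= reached
    return kept


def mine_score(facts, node_vecs, triples, judge, k=2):
    """Fraction of facts the judge considers inferable from the retrieved subgraph."""
    hits = 0
    for fact_text, fact_vec in facts:
        context = neighborhood(triples, top_k_nodes(fact_vec, node_vecs, k))
        hits += 1 if judge(fact_text, context) else 0
    return hits / len(facts)


# Toy data (assumption): hand-made vectors instead of real embeddings.
node_vecs = {"mars": [1.0, 0.0], "planet": [0.9, 0.1], "sun": [0.0, 1.0]}
triples = [("mars", "is a", "planet"), ("mars", "orbits", "sun")]
facts = [("Mars is a planet", [1.0, 0.0]), ("Jupiter has moons", [0.0, 1.0])]

# Stub judge (assumption): a fact counts only if its supporting triple was retrieved.
required = {
    "Mars is a planet": ("mars", "is a", "planet"),
    "Jupiter has moons": ("jupiter", "has", "moons"),
}


def judge(fact_text, context):
    return required[fact_text] in context


score = mine_score(facts, node_vecs, triples, judge)
print(score)
```

Here one of the two facts is supported by the graph, so the score is 0.5. The per-article scores reported below are the same kind of fraction, computed over 15 facts.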

On this test:

- KGGen scored about 66% on average.

- GraphRAG (a popular method) scored about 48%.

- OpenIE (an earlier method) scored about 30%.

So KGGen scored roughly 18 percentage points higher than the next-best tool (66% vs. 48%) and far higher than the older method.

They also inspected the graphs by eye:

- GraphRAG sometimes produced very small or sparse graphs that missed important connections.

- OpenIE often produced messy, overly long or generic nodes (like “it” and “are”), making the graph less useful.

- KGGen tended to produce cleaner, denser graphs that captured more meaningful relationships.

Why does this matter?

- Better knowledge graphs help AI systems answer questions more accurately, especially when they need to connect ideas across multiple documents. This is crucial for retrieval-augmented generation (RAG), where a chatbot or assistant looks up external knowledge before answering.

- Dense, high-quality graphs make it easier to train “graph foundation models”—special AI systems built to understand and search across many knowledge graphs.

- Because KGGen reduces duplicates and merges synonyms, downstream tools can learn patterns more reliably and avoid getting confused by minor wording differences.

Looking ahead

The authors note two main areas for improvement:

- Smarter clustering: sometimes the tool merges too much or too little. Refining this step could make graphs even cleaner.

- Bigger tests: MINE focuses on shorter articles right now. Future benchmarks could use longer, real-world collections to match how knowledge graphs are used in practice.

In short, KGGen makes it easier to turn everyday text into a useful map of knowledge, and it sets a new standard for testing how well different tools can do this.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of concrete gaps and open questions the paper leaves unresolved, aimed to guide future research and engineering work.

- Benchmark realism and external validity

- MINE uses 100 short (~1k words) LLM-generated “Wikipedia-like” articles; evaluate on real-world, human-authored, multi-document corpora (e.g., Wikipedia, PubMed, news, legal) with heterogeneous writing styles, noise, and discourse structure.

- Assess performance on longer and larger-scale corpora (books, codebases, organizational document lakes) to test extraction fidelity, coverage, and system scalability.

- Extend beyond English to multilingual and code-mixed datasets; quantify language-specific failure modes.

- Fairness and robustness of evaluation

- Replace or augment LLM-generated articles and LLM-as-judge with human-authored articles and human evaluation to avoid model-family bias and potential circularity.

- Report inter-annotator agreement for fact verification and judging; quantify judge reliability and calibration.

- Provide statistical significance tests (e.g., paired tests, confidence intervals) for reported performance differences.

- Conduct sensitivity analyses for MINE hyperparameters (encoder choice, top-k, hop count, LLM judge prompts) and show that rankings are stable.

- Baseline completeness and comparability

- Include modern IE/RE baselines (e.g., REBEL, spaCy/AllenNLP pipelines, SOTA joint BERT/Transformer models, schema-guided IE) and rule-plus-ML hybrids.

- Ensure hardware/model parity across LLM-based methods (same model family/size, decoding settings) to isolate algorithmic gains from model quality.

- Downstream utility beyond MINE

- Demonstrate that KGGen improves downstream tasks (link prediction, KG completion, QA, recommendation) relative to baselines using standard datasets (FB15k-237, WN18RR, OpenBioLink).

- Quantify benefits for GraphRAG end-to-end QA (answer accuracy, faithfulness, latency), not just extraction quality.

- Clustering correctness and control

- Provide quantitative error analysis of over-clustering (merging non-equivalent concepts) and under-clustering (failing to merge synonyms), including human-labeled coreference/equivalence datasets.

- Analyze order dependence and stability of the iterative LLM clustering (do different orderings/seeds produce different clusters?).

- Introduce transitivity and consistency checks (e.g., merge graph closure) to avoid contradictory cluster assignments.

- Evaluate polysemy/homonym handling (e.g., “Turkey” country vs. animal), and add explicit word-sense disambiguation or context-aware disambiguation strategies.

- Assess temporal semantics in clustering (e.g., “is president” vs. “was president”) to prevent collapsing time-scoped facts into timeless entities.

- Ontology/schema alignment and relation semantics

- Add ontology induction or schema alignment to standard vocabularies (e.g., Wikidata/Schema.org), and evaluate canonicalization against gold relations.

- Measure semantic drift introduced by edge “consolidation” (are nuanced relations over-generalized, harming downstream reasoning?).

- Incorporate and evaluate type and domain/range constraints to improve relation quality.

- Entity linking and provenance

- Integrate entity linking to external KGs (Wikidata IDs) for disambiguation, deduplication, and interoperability; quantify EL accuracy.

- Attach provenance (document IDs, sentence spans) and confidence scores to triples, and evaluate their utility for trust, auditing, and debugging.

- Retrieval and graph-query design in MINE

- Justify and ablate the two-hop neighborhood and top-k retrieval strategy; test varying k/hops and neighborhood construction (edge weights, relation filtering).

- Evaluate alternative similarity encoders (e.g., E5, GTE, bge-large) and graph-aware retrieval (e.g., personalized PageRank, path ranking) for fact matching.

- Examine failure cases where facts are not retrieved despite being encoded in the graph; provide targeted improvements.

- Reproducibility and open science

- Release all prompts, seeds, and inference settings; quantify variability across runs and models.

- Provide deterministic pathways (constrained decoding, rule-backed steps) to reduce stochasticity in outputs.

- Scalability, cost, and efficiency

- Characterize computational complexity and cost (token usage, wall-clock time) of the multi-stage LLM pipeline and iterative clustering as a function of entity/edge count.

- Explore approximate or hybrid clustering (e.g., blocking, embedding-based candidate generation + LLM verification) for million-scale graphs.

- Benchmark memory/latency trade-offs and parallelization strategies on realistic document lakes.

- Error analysis and safety

- Provide granular error taxonomy (entity boundary errors, relation hallucinations, negation/uncertainty mishandling, temporal mis-scoping).

- Assess robustness to adversarial or noisy inputs (OCR artifacts, contradictory statements, misinformation); design mitigation strategies.

- Data and model dependence

- Evaluate portability to open-source LLMs (e.g., Llama, DeepSeek, Mistral) and quantify performance/cost trade-offs relative to GPT-4o.

- Test domain transfer (biomedical, legal, financial) and identify domain-adaptation techniques needed (domain prompts, few-shot examples, schema constraints).

- Graph quality metrics beyond MINE

- Report structural metrics (degree distribution, clustering coefficients, connectivity, redundancy) and their correlation with downstream performance.

- Compare against established KG quality measures (LP-Measure, KGTtm, DiffQ) to triangulate quality assessments.

- Information fidelity and semantics

- Evaluate handling of negation, modality, and quantifiers (e.g., “may cause,” “not associated with,” “some vs. all”) to prevent incorrect fact extraction.

- Preserve case-sensitive distinctions (e.g., “Apple” vs. “apple”); analyze unintended consequences of lowercasing during aggregation.

- Graph representation and standards

- Provide RDF/OWL exports, SPARQL compatibility, and integration with triple stores; measure performance impacts of representation choices.

- Consider reification/n-ary relation modeling (events, time, qualifiers) and their effects on expressivity and retrieval.

- Confidence, calibration, and uncertainty

- Attach and calibrate confidence to triples and clusters (e.g., via self-consistency, cross-model agreement); evaluate uncertainty-aware retrieval.

- Practical deployment considerations

- Document the operational envelope (cost per GB of text, throughput), privacy/security considerations for sensitive corpora, and update/refresh strategies (incremental clustering, versioning).

- Head-to-head with GraphRAG databases

- Compare performance when GraphRAG uses KGGen-generated graphs vs. its native extractor, controlling for retrieval and summarization settings; measure end-user QA gains.

- Extended ablations

- Quantify the contribution of each pipeline stage (entity extraction, relation extraction, aggregation, entity clustering, edge clustering) via ablations.

- Study prompt and instruction sensitivity (e.g., cluster-instruction string, batch size b, number of loops n) and provide recommended defaults with rationale.

Practical Applications

Overview

This paper introduces KGGen, a Python toolkit for extracting dense, high-quality knowledge graphs (KGs) from plain text using LLMs, and MINE, the first benchmark for evaluating text-to-KG extraction via downstream retrieval tasks. KGGen’s multi-stage generate–aggregate–cluster pipeline reduces sparsity and redundancy, improving utility for embeddings (e.g., TransE/TransR) and graph-based RAG systems. Below are practical, real-world applications organized by immediacy, with sector links, tooling/workflows, and feasibility notes.

Immediate Applications

- Domain-specific Graph RAG on private corpora (industry)

- Sectors: software, legal, finance, healthcare, manufacturing, cybersecurity

- What: Replace GraphRAG’s default KG construction with KGGen to build richer, less sparse KGs for multi-hop retrieval over internal documents (policies, reports, manuals, tickets).

- Tools/Workflows: pip install kg-gen; use generate→aggregate→cluster; persist graph in Neo4j or RDF/SPARQL; index nodes/edges with embeddings (e.g., SentenceTransformers); integrate with GraphRAG or similar retrievers.

- Assumptions/Dependencies: Access to LMs (GPT-4o or DSPy-supported alternatives), data privacy controls, prompt tuning for domain language, monitoring of over/under-clustering.

- Data cleaning and entity resolution for enterprise knowledge management (industry)

- Sectors: data engineering, BI/analytics

- What: Use KGGen’s clustering to collapse synonyms, variants, and noise across glossaries, catalogs, and logs to unify entity definitions and reduce duplication.

- Tools/Workflows: Run cluster module on existing entity lists; label canonical clusters; map to enterprise ontology; export to data catalogs.

- Assumptions/Dependencies: Ontology alignment guidelines; LLM-as-judge validation budget; human-in-the-loop for high-stakes entities.

- Building KGs from healthcare notes for decision support (industry/academia)

- Sectors: healthcare, biomedical informatics

- What: Extract clinically relevant entities/relations from EMR notes and guidelines to support reasoning, cohort discovery, and clinical Q&A.

- Tools/Workflows: KGGen with specialized prompts; secure deployment; link to terminologies (SNOMED, ICD, RxNorm); store in HIPAA-compliant graph DB.

- Assumptions/Dependencies: Strict privacy/security, clinician review, domain prompt calibration, regulatory compliance.

- Cybersecurity threat intelligence graphs (industry/policy)

- Sectors: cybersecurity

- What: Create KGs from CVEs, alerts, reports; cluster “vulnerabilities/weaknesses/exploits” to unify triage and response; support ATT&CK mapping.

- Tools/Workflows: KGGen extraction from feeds; integrate with SIEM/SOAR; ATT&CK ontology alignment; run graph queries for incident correlation.

- Assumptions/Dependencies: Timely ingestion, domain-specific relation schemas, false-positive mitigation.

- Contract and compliance knowledge bases (industry/policy)

- Sectors: legal, governance, risk, compliance

- What: Build KGs from contracts, policies, and regulatory texts to answer “who/what/when” and compliance coverage queries.

- Tools/Workflows: KGGen; store as RDF/Neo4j; define canonical relation types (“obligates”, “prohibits”, “requires”); integrate with audit workflows.

- Assumptions/Dependencies: Document normalization, legal expert validation, versioning for updates.

- Improved training data for KG embeddings and link prediction (academia/industry)

- Sectors: AI/ML

- What: Use KGGen to densify graphs before training TransE/TransR/LightGCN; evaluate link prediction and completion on less sparse corpora.

- Tools/Workflows: KGGen preprocessing; off-the-shelf KG embedding libraries; LP-Measure or DiffQ for post-hoc KG quality checks.

- Assumptions/Dependencies: Sufficient relational density; careful consolidation to avoid merging distinct entities.

- Benchmarking and tool selection using MINE (academia/industry)

- Sectors: AI/ML, data engineering

- What: Use MINE to compare KG extraction pipelines on retrieval efficacy; select prompts/models/settings for your domain.

- Tools/Workflows: Run MINE on representative documents; evaluate top-k neighborhood inference; LLM-as-judge scoring.

- Assumptions/Dependencies: LLM evaluation reliability, chosen k/neighborhood depth, domain representativeness of test set.

- Educational concept maps and curriculum graphs (academia/daily life)

- Sectors: education

- What: Convert textbooks/lecture notes into KGs to surface prerequisite relations and multi-hop connections across topics.

- Tools/Workflows: KGGen with education-focused relation schema; visualize in graph tools; use for study aids and tutoring systems.

- Assumptions/Dependencies: Quality of source notes; instructor review for canonicalization.

- Personal knowledge management (daily life)

- Sectors: productivity

- What: Turn personal notes, articles, and bookmarks into navigable KGs; discover links between ideas, projects, and tasks.

- Tools/Workflows: KGGen on notes; local graph store; optional RAG for recall; periodic clustering to unify labels.

- Assumptions/Dependencies: Cost of LLM calls; small-scale deployment; minimal safety concerns.

Long-Term Applications

- Training graph foundation models with large, diverse synthetic KGs (academia/industry)

- Sectors: AI/ML

- What: Use KGGen at scale to generate rich training corpora for graph retrievers (e.g., GFM-RAG-like systems), improving generalization on noisy/incomplete KGs.

- Tools/Workflows: Distributed KGGen pipelines; synthetic corpus generation; curated relation schemas; large-scale training infrastructure.

- Assumptions/Dependencies: Compute/budget for generation and training; dataset curation; benchmark expansion beyond MINE’s short articles.

- Continual organizational KGs from streaming data (industry)

- Sectors: enterprise software

- What: Auto-build and update KGs from emails, chats, docs, tickets in near real-time to power internal copilots and search.

- Tools/Workflows: Streaming ingestion; incremental generate→aggregate→cluster; change detection; access control; feedback loops.

- Assumptions/Dependencies: Privacy/safety gating, robust deduplication, drift detection, human oversight.

- Web-scale KG-based QA and fact-checking (industry/policy)

- Sectors: media, public policy, search

- What: Construct large topical KGs (health, climate, economics) to ground LLM answers and support verification pipelines for public-facing systems.

- Tools/Workflows: Multi-source ingestion; conflict resolution; provenance tracking; KG-backed QA; audit trails.

- Assumptions/Dependencies: Source reliability, bias management, provenance enforcement, scalable LLM evaluation.

- Scientific literature mining and hypothesis generation (academia)

- Sectors: biomedical, materials, social science

- What: Build cross-paper KGs linking entities (genes, compounds, phenomena) to accelerate discovery and meta-analyses.

- Tools/Workflows: KGGen with domain ontologies; canonicalization against controlled vocabularies; multi-hop query tools; RAG for synthesis.

- Assumptions/Dependencies: Domain adaptation (prompts, relation schemas), expert curation, handling of contradictory findings.

- Cross-lingual, multilingual knowledge graphs (industry/academia)

- Sectors: global enterprises, localization

- What: Extend KGGen to multi-language corpora to unify concepts across languages and regions for consistent analytics and search.

- Tools/Workflows: Multilingual LMs; cross-lingual embeddings; language-aware clustering; mapping to global ontologies.

- Assumptions/Dependencies: Multilingual model quality, standardized entity labels, regional privacy laws.

- KG-driven robotics and embedded reasoning (industry)

- Sectors: robotics, IoT

- What: Use KGs as structured world models linking tasks, objects, and constraints to support planning and explainability in autonomous systems.

- Tools/Workflows: KGGen for textual manuals/instructions; integrate with planners; continual updates from sensor logs.

- Assumptions/Dependencies: Multi-modal integration beyond text, reliable grounding to physical state, safety certification.

- Energy and infrastructure asset graphs for predictive maintenance (industry)

- Sectors: energy, transportation, utilities

- What: Create KGs from maintenance logs, incident reports, and technical specs to support link prediction and risk analysis.

- Tools/Workflows: KGGen ingestion; relation schemas for assets/failures/causes; embeddings for failure risk scoring; dashboards.

- Assumptions/Dependencies: Quality/coverage of historical data, correct clustering of technical terms, integration with existing CMMS.

- Policy evidence graphs and stakeholder analysis (policy/academia)

- Sectors: public administration, NGOs

- What: Build KGs from public comments, reports, and hearings to trace claims, evidence, and actors across policy debates.

- Tools/Workflows: Provenance and stance relations; conflict detection; RAG-backed briefings; transparency portals.

- Assumptions/Dependencies: Bias and framing mitigation, rigorous provenance, legal constraints on data use.

Notes on Feasibility and Risk

- Model access and cost: KGGen has so far been demonstrated with GPT-4o via DSPy; organizations may substitute open or hosted LMs, with potential quality trade-offs.

- LLM-as-judge reliability: Both clustering validation and MINE’s scoring depend on LLM evaluators; mitigate with calibrated prompts, majority voting, or human audits.

- Over/under-clustering: Domain-specific tuning and optional human-in-the-loop reviews are recommended for critical applications (healthcare, legal).

- Privacy and compliance: For sensitive corpora, deploy within secure environments, enforce data minimization, and maintain audit trails for KG changes.

- Ontology alignment: Maximize downstream utility by mapping KGGen outputs to established ontologies and relation schemas in each domain.

- Benchmark scope: MINE currently focuses on short articles; for production deployments, extend evaluation to large corpora and multi-document reasoning scenarios.

Glossary

- all-MiniLM-L6-v2: A compact SentenceTransformers model used to embed text into vectors for similarity search. "The nodes of the resulting KGs are then vectorized using the all-MiniLM-L6-v2 model from SentenceTransformers, enabling us to use cosine similarity to assess semantic closeness between the short sentence information and the nodes in the graph."

- community (graph communities): A tightly connected group of nodes in a graph, often used for summarization or compression. "GraphRAG aggregates well-connected nodes into 'communities' and generates a summary for each community to remove redundancy."

- cosine similarity: A measure of similarity between two vectors based on the cosine of the angle between them. "enabling us to use cosine similarity to assess semantic closeness between the short sentence information and the nodes in the graph."

- dependency parse: A syntactic analysis that represents grammatical relations between words in a sentence as a directed graph. "It first generates a 'dependency parse' for each sentence using the Stanford CoreNLP pipeline."

- DiffQ: A differential-testing framework that evaluates KG quality via embedding-based comparisons and assigns a quality score. "In contrast, DiffQ uses embedding models to evaluate the KG's quality and assign a DiffQ Score, resulting in improved KG quality assessment."

- DSPy: A framework for programming LLM pipelines with typed prompts (“signatures”) and structured outputs. "We use the DSPy framework throughout these stages to define signatures that ensure that LLM responses are consistent JSON-formatted outputs."

- entity embeddings: Vector representations of entities in a knowledge graph used for tasks like link prediction and reasoning. "TransE represents relationships as vector translations between entity embeddings and has demonstrated strong performance in link prediction when trained on large KGs"

- entity resolution: The process of identifying and merging different mentions that refer to the same real-world entity. "Inspired by crowd-sourcing strategies for entity resolution, the clustering stage has an LM examine the set of extracted nodes and edges to identify which ones refer to the same underlying entities or concepts."

- few-shot prompting: Providing a model with a small number of examples in the prompt to guide its behavior on a task. "Throughout this extraction, few-shot prompting provides the LM with examples of 'good' extractions."

- GFM-RAG: A “Graph Foundation Model for RAG” that trains on many KGs to generalize graph-based retrieval. "For example, GFM-RAG (Graph Foundation Model for RAG) trains a dedicated graph neural network on an extensive collection of KGs"

- graph neural network: A neural architecture that operates on graph-structured data to learn from nodes and edges. "trains a dedicated graph neural network on an extensive collection of KGs"

- GraphRAG: A method that integrates graph-based retrieval with LLMs and can also construct KGs from text. "Microsoft developed GraphRAG, which integrated graph-based knowledge retrieval with LMs"

- KG embeddings: Learned vector representations of entities and relations in a knowledge graph for downstream tasks. "The lack of domain-specific and verified graph data poses a serious challenge for downstream tasks such as KG embeddings, graph RAG, and synthetic graph training data."

- KGGen: A text-to-KG generator that uses LLMs and clustering to produce dense, high-quality knowledge graphs. "We present a solution to this data scarcity problem in the form of a text-to-KG generator (KGGen), a package that uses LLMs to create high-quality graphs from plaintext."

- KGEval: A method for approximating KG accuracy via sampling with statistical guarantees. "This led to accuracy approximation methods like KGEval"

- KGrEaT: A framework evaluating KGs via performance on downstream tasks rather than only correctness. "frameworks like Knowledge Graph Evaluation via Downstream Tasks (KGrEaT) and DiffQ (differential testing) emerged."

- KGTtm: A model for measuring the trustworthiness/coherence of triples in a KG. "The KGTtm model, on the other hand, evaluates the coherence of triples within a knowledge graph"

- Knowledge graph (KG): A graph of entities (nodes) and relations (edges), typically represented as triples. "Knowledge graph (KG) applications and Graph Retrieval-Augmented Generation (RAG) systems are increasingly bottlenecked by the scarcity and incompleteness of available KGs."

- LLM: A large language model (e.g., GPT-4o) used here for extraction, clustering, and evaluation. "a language model (LM) like GPT-4o is used to extract a KG from a text corpus automatically"

- link prediction: The task of inferring missing edges (relations) between entities in a knowledge graph. "has demonstrated strong performance in link prediction when trained on large KGs"

- LLM-as-a-Judge: Using an LLM to evaluate or validate outputs, often with constrained responses. "Validate the single cluster using an LLM-as-a-Judge call with a binary response."

- LP-Measure: A link-prediction-based metric for assessing KG quality without human labels. "LP-Measure assesses the quality of a KG through link prediction tasks, eliminating the need for human labor or a gold standard"

- Measure of Information in Nodes and Edges (MINE): A benchmark that evaluates how well text-to-KG methods capture facts for RAG. "we produce the Measure of Information in Nodes and Edges (MINE), the first benchmark that measures a knowledge-graph extractor's ability to capture and distill a body of text into a KG."

- Monte-Carlo search: A stochastic search method, here used to assist interactive annotation of KG triples. "leading to methods like Monte-Carlo search being used for the interactive annotation of triples"

- multi-hop reasoning: Reasoning over multiple edges/relations in a KG to connect distant facts. "This structured, graph-based augmentation has been shown to improve multi-hop reasoning and synthesis of information across documents"

- natural logic inference: A rule-based reasoning approach operating directly on natural language forms. "then, Angeli et al. run natural logic inference to extract the most representative entities and relations from the identified clauses."

- Open Information Extraction (OpenIE): An approach that extracts relational tuples from text without a fixed schema. "Open Information Extraction (OpenIE) was implemented by Stanford CoreNLP based on Angeli et al. (2015)."

- ontology: A structured specification of concepts and their relationships within a domain. "Interest in automated methods to produce structured text to store ontologies dates back to at least 2001"

- Retrieval-Augmented Generation (RAG): A paradigm where generation is grounded by retrieving external knowledge. "Consider retrieval-augmented generation (RAG) with a language model (LM) -- this requires a rich external knowledge source to ground its responses."

- SentenceTransformers: A library for producing sentence and text embeddings for similarity and retrieval. "The nodes of the resulting KGs are then vectorized using the all-MiniLM-L6-v2 model from SentenceTransformers"

- Stanford CoreNLP: A suite of NLP tools providing parsing, extraction, and analysis capabilities. "Open Information Extraction (OpenIE) was implemented by Stanford CoreNLP"

- subject-predicate-object triples: The canonical KG representation of a fact as (head, relation, tail). "KGs consist of a set of subject-predicate-object triples, and have become a fundamental data structure for information retrieval"

- top-k: Selecting the k highest-scoring items (e.g., most similar nodes) for a query. "We do this by determining the top-k nodes most semantically similar to each fact."

- TransE: A KG embedding model that treats relations as translations in embedding space. "Embedding algorithms such as TransE rely on abundant relational data to learn high-quality KG representations."

- TransR: A KG embedding model that embeds entities and relations in separate spaces with projections. "embedding algorithms such as TransE and TransR."

- Two-State Weight Clustering Sampling (TWCS): A sampling-based method to estimate KG accuracy with less annotation. "Two-State Weight Clustering Sampling (TWCS)"

- vector translations: The modeling of relations as translation vectors applied to entity embeddings. "TransE represents relationships as vector translations between entity embeddings"
