WorldCraft: Photo-Realistic 3D World Creation and Customization via LLM Agents

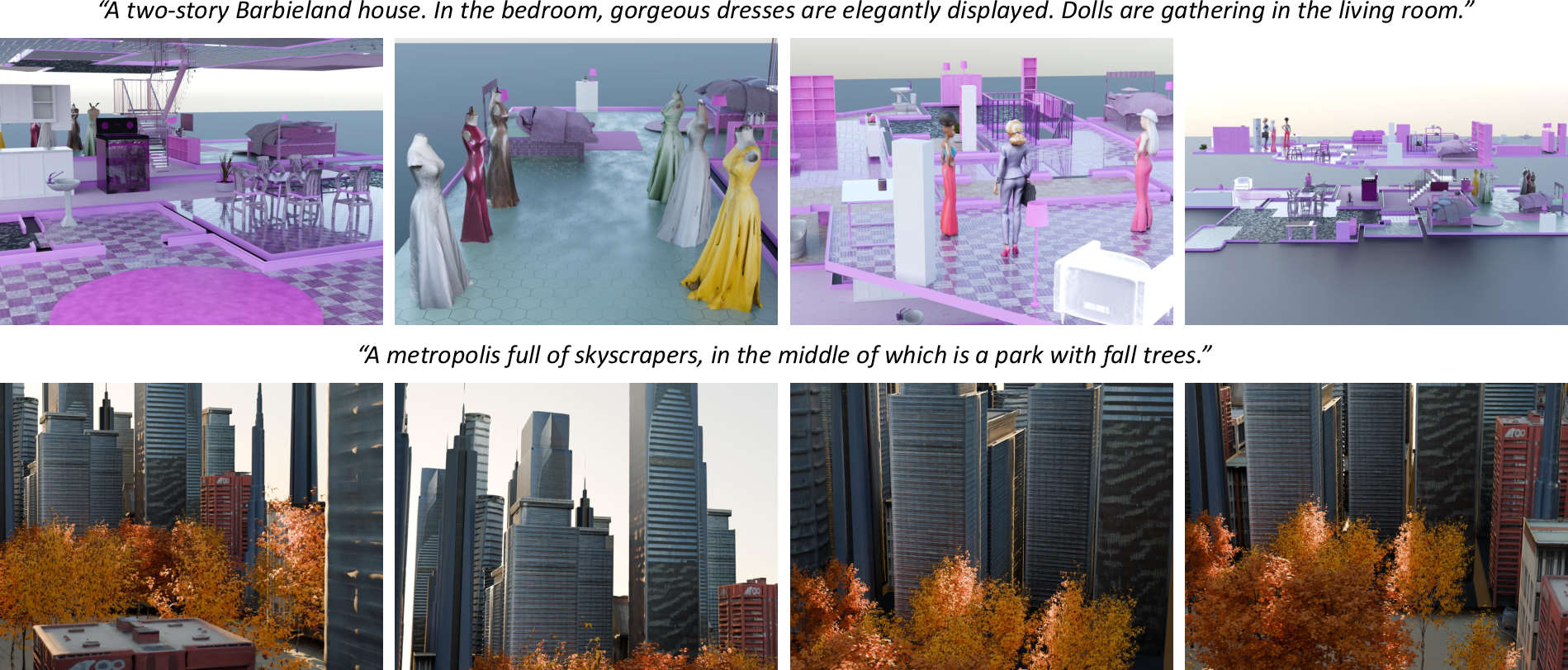

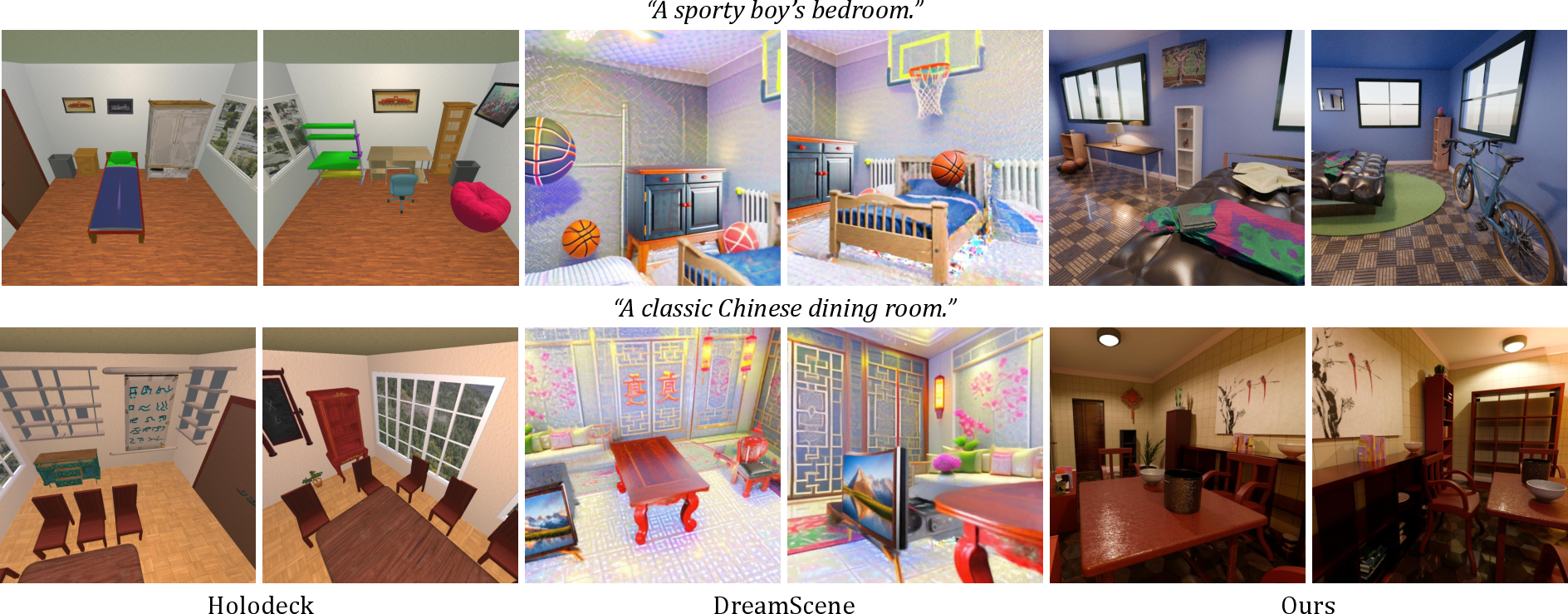

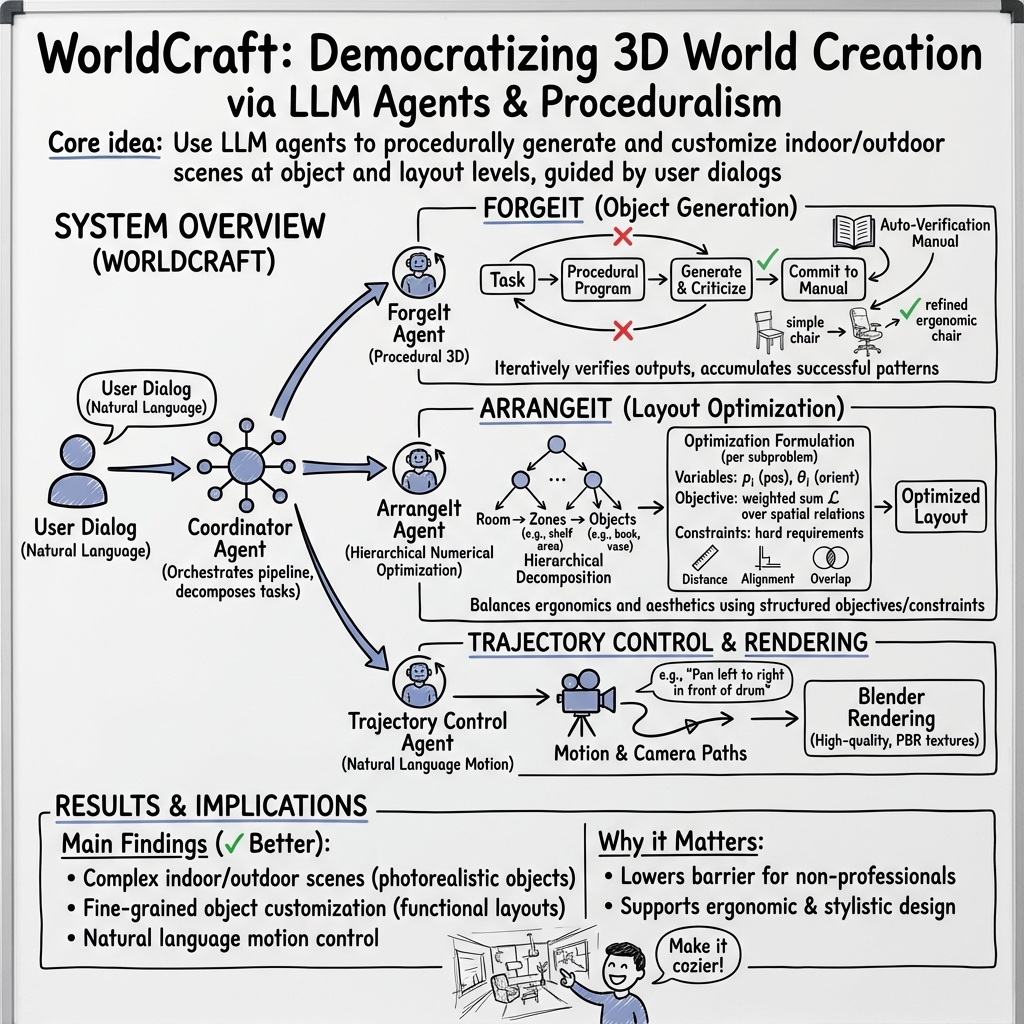

Abstract: Constructing photorealistic virtual worlds has applications across various fields, but it often requires the extensive labor of highly trained professionals to operate conventional 3D modeling software. To democratize this process, we introduce WorldCraft, a system where LLM agents leverage procedural generation to create indoor and outdoor scenes populated with objects, allowing users to control individual object attributes and the scene layout using intuitive natural language commands. In our framework, a coordinator agent manages the overall process and works with two specialized LLM agents to complete the scene creation: ForgeIt, which integrates an ever-growing manual through auto-verification to enable precise customization of individual objects, and ArrangeIt, which formulates hierarchical optimization problems to achieve a layout that balances ergonomic and aesthetic considerations. Additionally, our pipeline incorporates a trajectory control agent, allowing users to animate the scene and operate the camera through natural language interactions. Our system is also compatible with off-the-shelf deep 3D generators to enrich scene assets. Through evaluations and comparisons with state-of-the-art methods, we demonstrate the versatility of WorldCraft, ranging from single-object customization to intricate, large-scale interior and exterior scene designs. This system empowers non-professionals to bring their creative visions to life.

Explain it Like I'm 14

What is this paper about?

This paper introduces WorldCraft, an AI-powered system that can build realistic 3D worlds (like rooms, houses, parks, or city scenes) from simple text instructions. Instead of needing a professional 3D artist, you can type what you want, and the system creates it, lets you customize individual objects, arranges them in smart ways, and even animates the scene—all through natural language.

Think of it like having a team of smart helpers who can design a photorealistic “Minecraft-like” world, but with real-world materials and lighting, just by talking to them.

What questions did the researchers ask?

The researchers wanted to know:

- Can an AI understand a person’s text instructions well enough to build detailed and realistic 3D scenes?

- Can it give users fine control over individual objects (like changing the size, color, or shape of a sofa) and the overall layout (like where things go in a room)?

- Can it arrange scenes so they look good and work well (for example, placing a pool table in the center with enough space to play)?

- Can it let users animate objects and the camera by just describing the motion in words?

- Can non-experts use it easily and get high-quality results?

How does WorldCraft work?

WorldCraft uses several AI “agents,” each with a job, guided by a main “coordinator.” Here’s the idea in everyday terms:

The Coordinator: the project manager

This agent reads your request, breaks it into smaller steps, and tells the other agents what to do. It also asks you follow-up questions and lets you tweak things along the way.

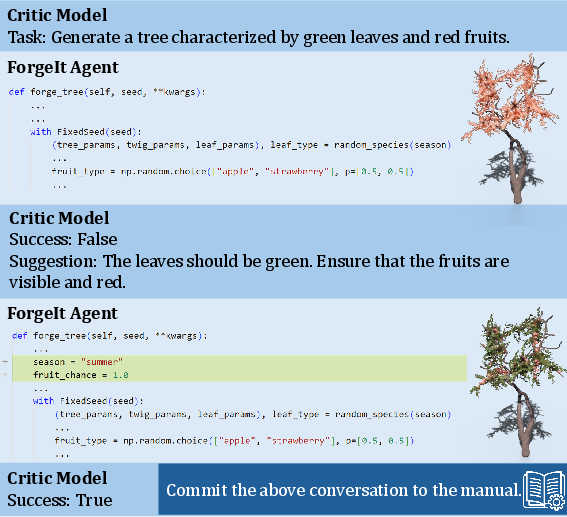

ForgeIt: the object maker

ForgeIt is the agent that creates individual 3D objects (like chairs, bookshelves, trees) using “procedural generation.” Procedural generation means building things using rules and code, like a recipe, instead of drawing them by hand. This makes objects clean, editable, and efficient to render.
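To make the "recipe" idea concrete, here is a minimal sketch of a procedural object in plain Python. It is not ForgeIt's or Infinigen's actual code: `TableRecipe`, `build_table`, and every parameter name are hypothetical, and the "mesh" is only a list of boxes. The point is that changing a few numbers in the recipe yields a different, still well-formed object.

```python
from dataclasses import dataclass


@dataclass
class TableRecipe:
    """Hypothetical parameters for a simple four-legged table, in meters."""
    width: float = 1.2
    depth: float = 0.8
    height: float = 0.75
    top_thickness: float = 0.04
    leg_size: float = 0.06


def build_table(recipe):
    """Return the table as a list of boxes: (name, center_xyz, size_xyz)."""
    r = recipe
    leg_height = r.height - r.top_thickness
    boxes = [("top",
              (0.0, 0.0, leg_height + r.top_thickness / 2),
              (r.width, r.depth, r.top_thickness))]
    # Place one leg at each corner, inset so it stays under the tabletop.
    for i, sx in enumerate((-1, 1)):
        for j, sy in enumerate((-1, 1)):
            x = sx * (r.width / 2 - r.leg_size / 2)
            y = sy * (r.depth / 2 - r.leg_size / 2)
            boxes.append((f"leg_{i}{j}",
                          (x, y, leg_height / 2),
                          (r.leg_size, r.leg_size, leg_height)))
    return boxes


default_table = build_table(TableRecipe())
# Same rules, different numbers: a low coffee table.
coffee_table = build_table(TableRecipe(width=0.9, depth=0.5, height=0.4))
```

Both tables come from the same rules, which is why procedurally generated objects stay easy to edit after they are made.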

To get really good at making objects, ForgeIt uses “auto-verification”:

- Imagine ForgeIt keeps a growing notebook of recipes. It tries to make an object based on your description.

- A “critic” AI looks at the result (from different camera angles) and says whether it matches the description.

- If it’s not right, the critic suggests fixes. ForgeIt updates the recipe and tries again.

- When it gets it right, the recipe is saved. Over time, ForgeIt builds a big, reliable manual it can reuse.

This process helps ForgeIt master complex tools and control hundreds of tiny settings without a human constantly correcting it.
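The try, critique, and save cycle described above can be sketched as a short loop. This is an illustration under stated assumptions, not the paper's implementation: `auto_verify`, `generate`, `critic`, and the dictionary-based `manual` are hypothetical names, and the toy generator and critic stand in for LLM agents and rendered views so the example runs on its own.

```python
def auto_verify(description, generate, critic, manual, max_rounds=5):
    """Refine a recipe until the critic accepts it, then save it to the manual.

    generate(description, feedback) -> recipe        (hypothetical generator agent)
    critic(description, recipe) -> (ok, feedback)    (hypothetical critic agent)
    """
    feedback = None
    for round_no in range(1, max_rounds + 1):
        recipe = generate(description, feedback)
        ok, feedback = critic(description, recipe)
        if ok:
            manual[description] = recipe  # only verified recipes are stored
            return recipe, round_no
    return None, max_rounds  # stop after max_rounds instead of looping forever


# Toy stand-ins so the sketch runs without any LLM or renderer.
def toy_generate(description, feedback):
    # Start with a guess, then apply the height the critic suggested.
    height = 1.0 if feedback is None else feedback["suggested_height"]
    return {"object": description, "height": height}


def toy_critic(description, recipe):
    target = 0.45  # pretend the description implies a 0.45 m seat height
    if abs(recipe["height"] - target) < 1e-6:
        return True, None
    return False, {"suggested_height": target}


manual = {}
recipe, rounds = auto_verify("low stool", toy_generate, toy_critic, manual)
```

The `max_rounds` cap is a design choice of this sketch: it gives the loop a way to stop when the critic never accepts a result.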

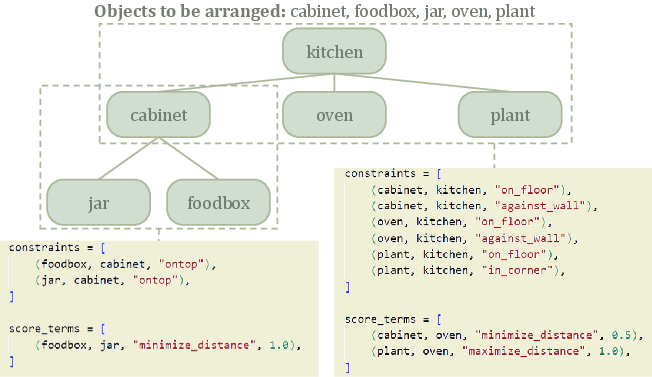

ArrangeIt: the layout planner

ArrangeIt decides where everything goes in the scene so it’s practical and looks good. It treats the problem like arranging furniture in a room:

- It breaks big tasks into smaller ones (for example, first place a shelf, then arrange the books on it).

- It uses a “score” to judge how good a layout is. The score checks things like:

  - Is there enough space to walk?

  - Are objects visible and reachable?

  - Are similar items lined up neatly?

  - Is the style pleasing?

- It tries many small changes, keeps the better ones, and gradually improves the arrangement. You can think of this like trying different furniture positions, then getting pickier over time until it looks and feels right.

Under the hood, this is called “numerical optimization,” but the idea is simple: keep testing and improving until the layout fits the rules and your instructions.
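The "try small changes and get pickier over time" idea is what simulated annealing does. The sketch below is a toy version under loud assumptions: the cost function (keep objects inside a square room and at least `min_gap` apart), the cooling schedule, and all names are made up for illustration, and the real ArrangeIt objective is not reproduced here.

```python
import math
import random


def layout_cost(positions, room=5.0, min_gap=1.0):
    """Lower is better: penalize crowding and leaving the room (toy terms)."""
    cost = 0.0
    for i, (x, y) in enumerate(positions):
        # Penalty for leaving the square room [0, room] x [0, room].
        cost += sum(max(0.0, -v) + max(0.0, v - room) for v in (x, y))
        for (u, v) in positions[i + 1:]:
            # Penalty when two objects are closer than min_gap.
            cost += max(0.0, min_gap - math.hypot(x - u, y - v))
    return cost


def anneal(positions, steps=5000, t_start=1.0, t_end=0.001, seed=0):
    rng = random.Random(seed)
    current = list(positions)
    cur_cost = layout_cost(current)
    best, best_cost = list(current), cur_cost
    for k in range(steps):
        # Geometric cooling: the temperature shrinks from t_start to t_end.
        t = t_start * (t_end / t_start) ** (k / (steps - 1))
        i = rng.randrange(len(current))
        x, y = current[i]
        candidate = list(current)
        candidate[i] = (x + rng.gauss(0, 0.3), y + rng.gauss(0, 0.3))
        cand_cost = layout_cost(candidate)
        delta = cand_cost - cur_cost
        # Metropolis rule: always accept improvements; accept a worse layout
        # with a probability that falls as the temperature drops.
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            current, cur_cost = candidate, cand_cost
            if cur_cost < best_cost:
                best, best_cost = list(current), cur_cost
    return best, best_cost


start = [(2.5, 2.5)] * 4  # four objects stacked in the middle of the room
start_cost = layout_cost(start)
best, best_cost = anneal(start)
```

Early on, the high temperature lets the search accept some worse layouts so it can escape bad arrangements; near the end it accepts almost only improvements.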

Trajectory Control: the animation director

Once the scene is built, you can animate the camera and objects by describing motions, like “Move the camera from left to right in front of the drum,” or “Make the lamp tilt toward the desk.” The system turns your words into motion commands and places them correctly in the 3D world.
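As a rough picture of what a motion command might turn into, the sketch below builds camera keyframes for "move the camera from left to right in front of" an object, anchored to the object's bounding box. It skips the language step entirely, and everything in it is an assumption for illustration: the `camera_pan` name, the axis conventions, and the keyframe format are not taken from the paper.

```python
def camera_pan(bbox_min, bbox_max, distance=2.0, frames=5):
    """Keyframes moving left to right in front of an axis-aligned bounding box.

    Assumed conventions (illustrative only): x is left-right, y is depth with
    the object's front face at bbox_min's y, and z is up.
    """
    (x0, y0, z0), (x1, y1, z1) = bbox_min, bbox_max
    target = ((x0 + x1) / 2, (y0 + y1) / 2, (z0 + z1) / 2)
    keyframes = []
    for f in range(frames):
        s = f / (frames - 1)        # 0.0 at the first frame, 1.0 at the last
        x = x0 + s * (x1 - x0)      # linear interpolation along the front edge
        position = (x, y0 - distance, target[2])
        keyframes.append({"frame": f, "position": position, "look_at": target})
    return keyframes


# A drum occupying a 1 m cube centered on the origin's x and y.
drum_path = camera_pan((-0.5, -0.5, 0.0), (0.5, 0.5, 1.0))
```

Anchoring the path to the bounding box means the same command still works if the object is moved or resized.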

Extra assets from deep 3D generators

WorldCraft can also plug in other 3D generation tools to add artistic or complex objects. These tools are optional add-ons that enrich the scene when needed.

What did they find?

The researchers tested WorldCraft on many scenes—from single objects to large houses and outdoor areas—and compared it with other state-of-the-art methods. They found:

- It creates more realistic and detailed scenes that match the user’s text better.

- Layouts respect real-world needs (like not blocking pathways, giving space around a pool table, and avoiding odd placements like a basketball hoop over a bed).

- It gives fine control over individual objects and their materials, making them look “photorealistic” under realistic lighting.

- It supports multi-step conversations: you can ask for changes, and the system updates the scene accordingly.

- It often runs faster than methods that rely heavily on large image diffusion models.

- Users and automated evaluations rated its results higher for consistency with the prompt, visual appeal, and functionality.

Why does this matter?

- It makes high-quality 3D world creation accessible to anyone—no advanced software skills needed.

- It can help game developers, filmmakers, and VR/AR creators quickly prototype environments.

- It can build training worlds for robots and AI, speeding up research in robotics and embodied AI.

- It lets people design, customize, and animate their ideas using simple language, turning imagination into visual worlds more easily.

In short, WorldCraft shows that a team of AI agents, guided by natural language, can design and animate realistic 3D environments. This could significantly lower the barrier to professional-quality 3D content creation and open up new creative and practical applications.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single consolidated list of what remains missing, uncertain, or unexplored in the paper, phrased to be actionable for future research.

- Quantitative reliability of the critic LLM in ForgeIt: no measurement of accuracy, bias, or failure modes in judging 3D object compliance from 2D renders; unclear how often critic misclassifications lead to suboptimal manuals.

- Convergence and sample-efficiency of the “ever-growing manual”: no guarantees or analysis of how many iterations/tasks are required to reach competence for a category, nor how performance scales as the manual grows.

- Coverage and generalization of the manual: undefined breadth across object categories and styles; unclear how well learned procedures transfer to novel categories or new versions of procedural generators.

- Manual maintenance and versioning: no strategy for updating or deprecating manual entries when procedural generator APIs change; missing mechanisms to detect conflicts or stale entries.

- Storage, retrieval, and conditioning of manual entries: no description of indexing, retrieval, or context selection when generating code; unclear how the agent chooses relevant manual entries in complex multi-turn edits.

- Objective failures in ForgeIt’s auto-verification loop: no fallback strategies when iterative refinement stalls, oscillates, or overfits to critic biases; no exploration of safeguards against infinite loops.

- Robustness to ambiguous or underspecified user instructions: no methods for uncertainty handling, clarification queries, or probabilistic interpretation of vague descriptions in asset and layout generation.

- Physical plausibility and physics integration: ArrangeIt’s constraints do not include physics (gravity, support, collisions, stability); absence of a physics engine or simulation to verify feasibility of arrangements and animations.

- Ergonomics modeling depth: ergonomics is treated heuristically (distance, visibility) without validated human factors models (reachability, comfort zones, ADA compliance, safety clearances); no empirical validation.

- Automatic lighting, materials, and global illumination: the pipeline does not model physically accurate lighting design beyond PBR; no constraints or optimization for lighting placement, exposure, or visual balance.

- Orientation representation and numerical stability: ArrangeIt uses Euler angles with potential gimbal lock; no investigation of quaternion-based optimization or manifold-aware rotation handling.

- Weight selection for objective terms: λ weights are not learned or systematically tuned; no methods for multi-objective balancing or user-controllable trade-offs with guarantees.

- Optimization algorithm choice and scalability: reliance on simulated annealing lacks comparative analysis with gradient-based, mixed-integer, or sampling-based planners; scalability to hundreds/thousands of objects is untested.

- Hierarchical decomposition reliability: object-tree construction relies on LLM inference without formal rules; no metrics for correctness of inferred containment/attachment relationships or handling cyclic dependencies.

- Constraint satisfaction guarantees: no formal guarantees for hard constraints (e.g., non-overlap, minimum clearance); no post-optimization validation or repair steps reported.

- Trajectory control collision and smoothness: camera/object paths lack explicit collision avoidance, jerk minimization, or cinematic style constraints; no handling of occlusions or motion-induced visibility losses.

- Temporal consistency and multi-object coordination: no support for coordinated, constraint-coupled motions (e.g., group choreography, object-camera co-optimization) or temporal logic constraints.

- Asset integration consistency: off-the-shelf 3D generator assets may have scale, coordinate system, topology, or material mismatches; no automatic normalization, retopology, or PBR alignment steps documented.

- Style coherence across heterogeneous assets: no mechanism to enforce a consistent artistic style, material palette, or design language when mixing procedural and deep-generated assets.

- Real-time interactivity and performance: the pipeline targets offline Blender rendering; no assessment of latency, LOD, or real-time constraints for XR/game engines; hardware and runtime scaling details are sparse.

- Evaluation rigor and metrics: reliance on GPT-4 and user ratings without inter-rater reliability, statistical significance, or standardized benchmarks; CLIP may not capture layout/functionality—no specialized spatial/ergonomic metrics used.

- Benchmarking breadth: limited baselines (Holodeck, DreamScene) and scenarios; no tests on standardized scene-layout datasets or broad cross-domain benchmarks (e.g., offices, factories, public spaces).

- Failure case analysis: absent systematic cataloging of failure cases in asset generation, layout optimization, and trajectory control, and no diagnostic tooling for error attribution.

- Safety and security of code generation: no discussion of sandboxing, permissioning, or adversarial prompt handling for tool execution (Blender/Infinigen) to prevent harmful scripts or resource abuse.

- Reproducibility and determinism: dependence on proprietary GPT-4-0314 and closed APIs (Meshy) without reproducibility paths; no assessment of variability across LLM temperature/seeds or model upgrades.

- Multilingual and cross-cultural design support: pipeline appears English-only; style interpretations may be culturally biased; no evaluation on multilingual prompts or culturally diverse ergonomic norms.

- Multi-user collaboration and version control: no mechanisms for concurrent editing, conflict resolution, or scene graph diffs across users; unclear how iterative edits are tracked and merged.

- Scene connectivity and circulation: decomposition into sub-spaces does not address inter-room connectivity (doors, corridors), circulation flow, or global functional constraints across spaces.

- Environmental dynamics: weather, day-night cycles, and time-varying lighting are not modeled; open question how dynamic scene conditions affect arrangement and rendering quality.

- Integration with robotics/simulation: claims of relevance to embodied AI but no coupling with physics engines, task planners, or evaluation on robot navigation/manipulation tasks.

- Legal and licensing considerations: use of third-party generators and assets raises licensing/IP questions; no policy for asset provenance and redistribution.

- Dataset release and open-source artifacts: the manual, agent prompts, code, and assets are not reported as publicly available; replicability and community benchmarking are hindered.

- Scalability of user-in-the-loop workflows: no measurement of user effort, cognitive load, or guidance strategies (e.g., progressive disclosure) for large, complex scenes.

- Anchoring and perception from 2D renders: critic judgments use eight views; no analysis of view selection, occlusion effects, or 3D property misperception from 2D projections.

- Post-processing and quality assurance: no automated checks for mesh quality (self-intersections, normals, UVs), material correctness, or render artifacts; lacks validation pipelines before final export.

- Ethical considerations and bias: LLM-driven design decisions may encode biases (style, object choice); no audits or fairness checks in asset/layout choices or evaluation.
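The orientation item in the list above mentions gimbal lock. A small numerical check can show what that means, assuming one common convention (R = Rz(yaw) · Ry(pitch) · Rx(roll)); the paper's exact convention is not stated here, so treat the choice as an assumption. At a pitch of 90 degrees, yaw and roll stop being independent: only their difference matters, so an optimizer loses one degree of freedom.

```python
import math


def rot_x(a):
    c, s = math.cos(a), math.sin(a)
    return [[1, 0, 0], [0, c, -s], [0, s, c]]


def rot_y(a):
    c, s = math.cos(a), math.sin(a)
    return [[c, 0, s], [0, 1, 0], [-s, 0, c]]


def rot_z(a):
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def euler_to_matrix(yaw, pitch, roll):
    """R = Rz(yaw) @ Ry(pitch) @ Rx(roll); one of several common conventions."""
    return matmul(rot_z(yaw), matmul(rot_y(pitch), rot_x(roll)))


def max_abs_diff(a, b):
    return max(abs(a[i][j] - b[i][j]) for i in range(3) for j in range(3))


half_pi = math.pi / 2
# Different yaw and roll but the same (yaw - roll): at pitch = 90 degrees
# the two triples describe the same rotation.
locked_gap = max_abs_diff(euler_to_matrix(0.3, half_pi, 0.1),
                          euler_to_matrix(0.5, half_pi, 0.3))
# Away from 90 degrees the same two triples give different rotations.
free_gap = max_abs_diff(euler_to_matrix(0.3, 0.2, 0.1),
                        euler_to_matrix(0.5, 0.2, 0.3))
```

Here `locked_gap` is zero up to floating-point error while `free_gap` is clearly nonzero, which is the degeneracy that quaternion-based parameterizations avoid.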

Practical Applications

Immediate Applications

The items below outline where WorldCraft’s current capabilities can be deployed today, along with sectors, potential tools/workflows, and assumptions/dependencies affecting feasibility.

- **Game and XR environment prototyping and dressing**

- Sectors: gaming, XR/VR, software

- What you can do: Rapid grayboxing-to-polish of indoor/outdoor levels; populate scenes with controllable PBR assets; iterate layouts and camera paths via text.

- Tools/products/workflows: “WorldCraft for Unreal/Unity” exporter; Blender add-on for ArrangeIt and Trajectory Control; navmesh bake post-process.

- Assumptions/dependencies: Engine export stability (FBX/USD/GLTF), platform poly/LOD budgets, IP/licensing for any deep 3D generator assets.

- **Film/animation previsualization and set dressing**

- Sectors: media/entertainment, virtual production

- What you can do: Previz sets, block scenes, generate repeatable camera moves, iterate props and layouts for beats and coverage.

- Tools/products/workflows: “WorldCraft Previz Toolkit” for Blender with shot templates; USD pipeline handoff to DCC/VP stages.

- Assumptions/dependencies: Not a substitute for final hero assets/lighting; consistency with studio color/asset namespaces; render farm scheduling.

- **Interior design and real-estate staging**

- Sectors: AEC (early-stage), real estate, e-commerce

- What you can do: Text-guided room layouts honoring ergonomic constraints; style-consistent furnishing; quick alternative schemes; virtual tours.

- Tools/products/workflows: “Realtor Stager AI” workflow; IFC/GLTF import of floorplans; ArrangeIt constraint presets (clearances, sightlines).

- Assumptions/dependencies: Accurate room dimensions required; not code-compliant engineering; manual review for safety/egress.

- **Product lifestyle imagery and 3D catalog scenes**

- Sectors: retail/e-commerce, marketing

- What you can do: Background scene generation around a hero product; consistent PBR lighting/camera packs; variant materials for A/B tests.

- Tools/products/workflows: “PBR Staging Studio” using ForgeIt + Trajectory Control; template packs (tabletop, kitchen, outdoor).

- Assumptions/dependencies: Accurate product geometry/materials; brand guideline enforcement; rights for any background assets.

- **Robotics simulation environment creation**

- Sectors: robotics, academia, software

- What you can do: Procedurally generate functionally plausible homes/warehouses; enforce spatial constraints; domain randomize assets and layouts.

- Tools/products/workflows: Exporters to Habitat, iGibson, SAPIEN, AI2-THOR; ArrangeIt scenario parameter sweeps.

- Assumptions/dependencies: Physics and affordances must be configured in the simulator; sim-to-real gap persists; object semantics require mapping.

- **Synthetic dataset generation for vision/embodied AI**

- Sectors: academia, software

- What you can do: Generate labeled scenes with controlled layout factors (distances, symmetries) for benchmarking perception/planning.

- Tools/products/workflows: “ArrangeIt Experiment Generator” with factor tags; automatic multi-view renders and annotations.

- Assumptions/dependencies: CLIP-aligned text may bias distributions; need careful validation against real-world statistics.

- **Education and teaching 3D fundamentals**

- Sectors: education

- What you can do: Classroom-friendly scene creation and camera exercises from text; interactive lessons on geometry, lighting, and ergonomics.

- Tools/products/workflows: “Teacher Mode” with safe prompts, age-appropriate content filters, and scaffolded tasks.

- Assumptions/dependencies: Hardware for rendering; content moderation; accessibility features (voice input) for inclusive classrooms.

- **SMB marketing content and explainer videos**

- Sectors: marketing/advertising, media

- What you can do: One-off product demos with simple camera paths; iterate messaging via scene edits without a full studio.

- Tools/products/workflows: “One-Click Ad Scenes” presets; batch render pipelines; caption-to-camera choreography.

- Assumptions/dependencies: Render time budgeting; music/voiceover licensing outside scope; QC for realism.

- **UGC worldbuilding for social 3D platforms**

- Sectors: social/gaming platforms

- What you can do: Text-to-environment creation for creator communities; asset customization and layout editing by chat.

- Tools/products/workflows: Platform SDK integration; content moderation hooks; poly-reduction and LOD pipelines.

- Assumptions/dependencies: Platform API availability; performance constraints; safety policies for user prompts.

- **Parametric 3D asset authoring and marketplace QA**

- Sectors: software, creative marketplaces

- What you can do: Generate asset families via ForgeIt recipes; use auto-verification renders for QA and tagging.

- Tools/products/workflows: “ForgeIt Recipes” repository; critic-verified thumbnails; natural-language search tags.

- Assumptions/dependencies: PBR compatibility; IP provenance; reviewer oversight for borderline cases.

- **Accessibility-first creative tooling**

- Sectors: accessibility, daily life, education

- What you can do: Voice/text-driven scene editing for users with motor impairments; simplified workflows for home planning.

- Tools/products/workflows: Screen-reader friendly UI; voice command packs; shareable scene links.

- Assumptions/dependencies: High-quality ASR; latency tolerance; privacy for home layouts.

- **Script-to-storyboard pipelines**

- Sectors: media/entertainment, education

- What you can do: Convert script beats into 3D blockouts with camera trajectories, output storyboard frames.

- Tools/products/workflows: Trajectory Control “shot grammar” translator; auto shot list export (CSV/JSON).

- Assumptions/dependencies: Coverage heuristics may need director input; style transfer to 2D line-art requires external tools.

Long-Term Applications

These opportunities likely require additional research, scaling, integration, or policy development before broad deployment.

- **End-to-end playable world generation**

- Sectors: gaming, software, robotics (sim)

- What you can do: From text to navigable, gameplay-ready levels (navmesh, occlusion, streaming, quest logic).

- Tools/products/workflows: “WorldCraft Game Builder” with engine plug-ins; asset LOD/instancing; automated playtesting agents.

- Assumptions/dependencies: Engine-specific integration; robust validation for progression and fairness; content safety.

- **BIM-aware architectural design co-pilot**

- Sectors: AEC, energy

- What you can do: Layouts that honor codes (egress, ADA), materials, costs; export IFC; early energy/daylight optimization.

- Tools/products/workflows: “WorldCraft for BIM” solver (multi-objective); code rulebase; connections to EnergyPlus/Radiance.

- Assumptions/dependencies: Formalized building codes; accurate dimensions/tolerances; liability and professional oversight.

- **Urban planning and participatory design at civic scale**

- Sectors: public policy, urban planning, transportation

- What you can do: Scenario authoring (zoning, density, green space), rapid visuals for public engagement, ADA/traffic/heat modeling hooks.

- Tools/products/workflows: “Civic WorldCraft” with GIS ingestion; constraint templates (setbacks, FAR); public comment integration.

- Assumptions/dependencies: Up-to-date GIS and zoning data; model transparency; risk of miscommunication requires facilitation.

- **Digital twins for operations and resilience**

- Sectors: energy, utilities, smart cities, manufacturing

- What you can do: Procedurally generated, richly parameterized twins for what-if analyses (energy loads, evacuation, supply chains).

- Tools/products/workflows: Twin orchestration via ArrangeIt constraints; telemetry overlays; anomaly simulation.

- Assumptions/dependencies: Semantic fidelity of geometry; synchronization with sensor data; cyber-physical security.

- **Closed-loop robotics curriculum generation**

- Sectors: robotics, academia

- What you can do: Auto-generate scenes targeted to failure modes; progressive curricula based on performance signals.

- Tools/products/workflows: Reward-aware scene generator; affordance tagging; sim2real transfer monitors.

- Assumptions/dependencies: Realistic physics and contact; domain gap mitigation; safety for eventual real deployment.

- **Real-time virtual production on LED volumes**

- Sectors: media/entertainment, hardware

- What you can do: On-stage world generation and set re-dressing with synchronized camera/lens metadata.

- Tools/products/workflows: USD-stageable WorldCraft nodes; lens calibration; nDisplay/Disguise integration.

- Assumptions/dependencies: Real-time performance at high resolution; consistent color pipeline; on-set network reliability.

- **Personalized AR room configuration and shopping**

- Sectors: retail, daily life, mobile

- What you can do: On-device text-to-scene for one’s own room scans; instant furniture arrangement comparisons and cost roll-ups.

- Tools/products/workflows: Mobile AR SDKs; scene scale calibration; material recognition.

- Assumptions/dependencies: Device compute and battery; privacy safeguards; accurate room scans.

- **Safety and compliance checking as constraints**

- Sectors: policy, AEC, manufacturing

- What you can do: Encode OSHA/ADA/fire codes as hard constraints; auto-flag violations and suggest fixes.

- Tools/products/workflows: Compliance rule engines; explainable constraint reports; audit trails.

- Assumptions/dependencies: Rule formalization and versioning; legal responsibility; third-party certification.

- **“Dynamic manual” generalization to other complex tools**

- Sectors: software engineering, CAD/CAM, lab automation, DevOps

- What you can do: Apply ForgeIt’s auto-verification to teach LLMs other procedural/parameterized systems (CNC, EDA flows, lab robots).

- Tools/products/workflows: “AutoManual SDK” with critic evaluators and test harnesses per domain.

- Assumptions/dependencies: Reliable, automated oracles for verification; safe sandboxes; cost of failed iterations.

- **Multi-agent co-creative platforms with provenance**

- Sectors: software, creative industries

- What you can do: Designers collaborate with specialized agents (asset, layout, camera) with versioning and rights tracking.

- Tools/products/workflows: Agent orchestration layer; data provenance/watermarking; repository of verified recipes.

- Assumptions/dependencies: IP governance; interoperability standards; human-in-the-loop UX.

- **Standards and policy for generative 3D provenance and IP**

- Sectors: policy, legal, platforms

- What you can do: Watermarking, licensing frameworks, disclosure norms for synthetic scenes used in ads/real estate/public policy.

- Tools/products/workflows: C2PA-like extensions for 3D; platform policy toolkits; audit logs.

- Assumptions/dependencies: Cross-industry buy-in; enforceability; alignment with privacy laws.

- **Training data for future 3D foundation models**

- Sectors: AI/ML research, software

- What you can do: Generate diverse, labeled 3D corpora (assets, layouts, trajectories) for pretraining and alignment.

- Tools/products/workflows: Large-scale procedural data farms; curriculum scheduling; bias/coverage dashboards.

- Assumptions/dependencies: Diversity and realism sufficient to improve downstream performance; careful bias mitigation.

Common assumptions and dependencies across applications

- Quality/realism: Photorealism is high but not equivalent to engineering-grade tolerances; physics is simulator-dependent.

- Toolchain: Access to Blender, Infinigen, and optional deep 3D generators/APIs; stable exporters (USD/GLTF/FBX).

- LLM availability: Performance and cost depend on access to capable LLMs (or strong open-source alternatives) and latency budgets.

- IP/compliance: Generated content must respect licensing, trademarks, and safety regulations; moderation is needed for public-facing use.

- Compute/cost: Scene creation is efficient relative to diffusion models but still non-trivial at scale; rendering pipelines dominate runtime for video deliverables.

Glossary

- 3DGS (3D Gaussian Splatting): A point-based 3D representation using Gaussian primitives for fast rendering. "NeRF and 3DGS representations."

- Auto-verification: An automated feedback loop where a critic model evaluates outputs and guides iterative improvements. "ForgeIt dynamically constructs a manual through an auto-verification mechanism."

- Autoregressive text-to-trajectory model: A sequence model that converts language into motion commands by generating trajectories token-by-token. "use an autoregressive text-to-trajectory model to translate them into trajectory commands."

- Autoregressive transformer: A transformer that generates sequences by conditioning on previously produced tokens, used for object or layout synthesis. "generates indoor scenes using an autoregressive transformer"

- Bounding box: An axis-aligned box enclosing an object, used for spatial reasoning, constraints, and anchoring trajectories. "based on their bounding boxes."

- CLIP score: A metric from the CLIP model quantifying image–text semantic similarity. "For consistency, we additionally report a CLIP score measuring the similarity between the rendered image of the generated scene and the input text."

- Denoising diffusion models: Generative models that synthesize data by reversing a noise-adding process through iterative denoising. "denoising diffusion models"

- Embodied agents: Agents situated in environments (simulated or physical) that perceive and act, often used for robotics training. "to train embodied agents"

- Euler angles: A rotation parameterization for 3D orientation using sequential rotations around coordinate axes. "denotes its orientation in Euler angles."

- GANs (Generative Adversarial Networks): Generative models trained via adversarial objectives between generator and discriminator. "using GANs to render novel viewpoints"

- Hierarchical numerical optimization: An approach that decomposes a complex arrangement into subproblems and optimizes them with objectives and constraints. "formulates scene arrangement as a hierarchical numerical optimization problem"

- Infinigen: A procedural generator for diverse 3D assets and scenes with extensive controllable parameters. "Procedural generators like Infinigen offer a glimpse of potential in LLM-based scene generation."

- Marching Tetrahedra: A mesh extraction algorithm that reconstructs surfaces from volumetric data using tetrahedral cells. "mesh extraction using algorithms like Marching Tetrahedra"

- Metropolis-Hastings criterion: A Monte Carlo acceptance rule used within stochastic optimization and sampling methods. "with the Metropolis-Hastings criterion"

- NeRF (Neural Radiance Fields): A neural volumetric representation modeling radiance and density for novel-view synthesis. "NeRF and 3DGS representations."

- Panoramic images: Wide-field images (often 360°) used as intermediates for creating 3D scene representations. "create panoramic images from textual inputs"

- Physically Based Rendering (PBR) textures: Material textures designed under PBR principles to achieve realistic lighting and shading. "PBR textures."

- Procedural generation: Rule-based, programmatic synthesis of content (objects, scenes) through parameterized algorithms. "leverage procedural generation to create indoor and outdoor scenes"

- Radiance fields: Continuous functions representing emitted light and density throughout 3D space. "translated 3D semantic labels into radiance fields."

- Scene graph: A structured representation of objects and their relations used for compositional scene creation. "create a scene graph for compositional 3D scene creation."

- Simulated annealing: A stochastic optimization technique inspired by physical annealing, used to search layout configurations. "employ simulated annealing"

- Trajectory control: Directing object or camera motion paths via commands, often generated from natural language. "a trajectory control agent"
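To make the CLIP score entry above more tangible: at its core it is a cosine similarity between a text embedding and an image embedding. The sketch below uses made-up 4-dimensional vectors in place of real CLIP embeddings, which have hundreds of dimensions and come from the CLIP model itself, so the numbers are purely illustrative. Some implementations also rescale or clamp the value, and that step is left out here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up vectors standing in for CLIP embeddings (assumption, not real data).
text_embedding = [0.2, 0.9, 0.1, 0.4]
matching_image = [0.25, 0.85, 0.05, 0.45]
unrelated_image = [0.9, -0.1, 0.4, -0.2]

match_score = cosine_similarity(text_embedding, matching_image)
mismatch_score = cosine_similarity(text_embedding, unrelated_image)
```

A render that matches the prompt should land closer to the text embedding, so `match_score` comes out higher than `mismatch_score`.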
