Scaling Laws in Scientific Discovery with AI and Robot Scientists

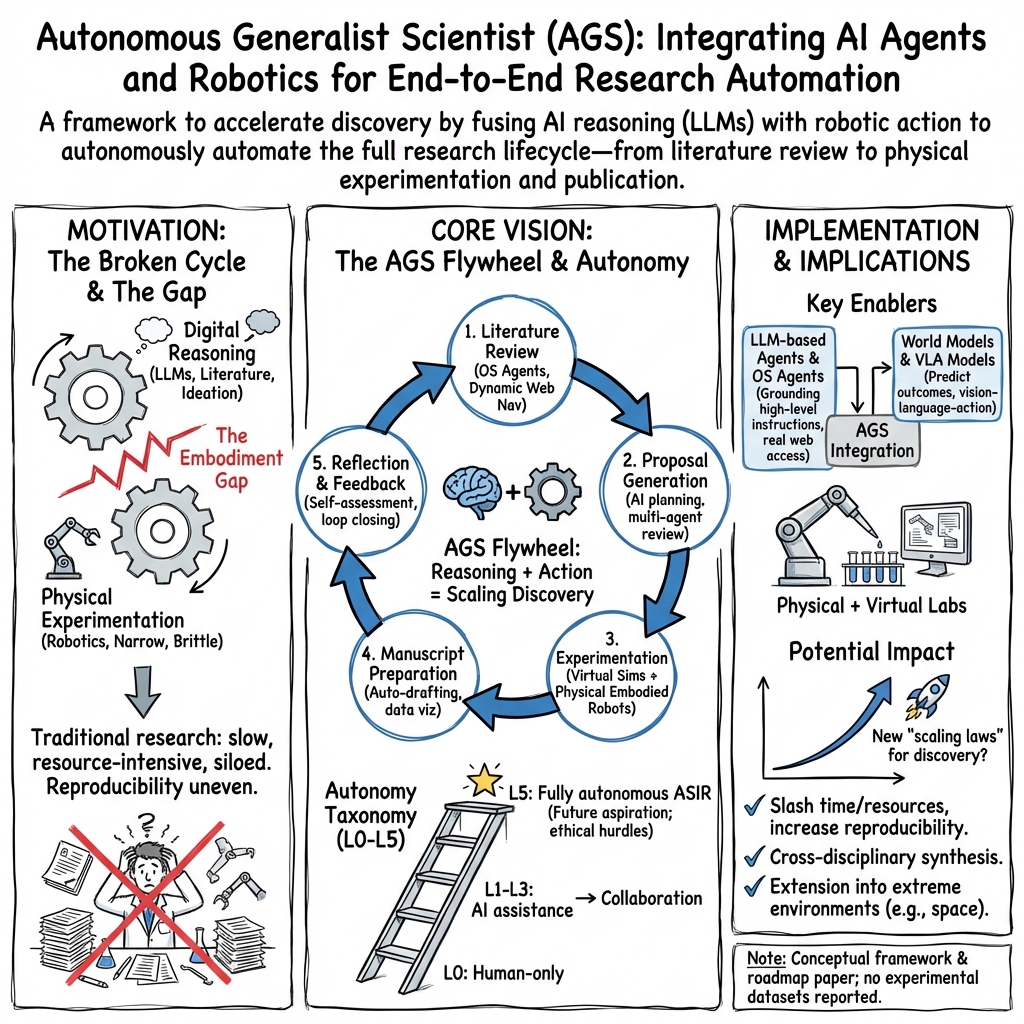

Abstract: Scientific discovery is poised for rapid acceleration driven by advanced robotics and artificial intelligence. Current scientific practices face substantial limitations: manual experimentation remains time-consuming and resource-intensive, while multidisciplinary research demands knowledge integration beyond the boundaries of individual researchers' expertise. Here, we envision an autonomous generalist scientist (AGS), a concept that combines agentic AI and embodied robotics to automate the entire research lifecycle. This system could dynamically interact with both physical and virtual environments while facilitating the integration of knowledge across diverse scientific disciplines. By deploying these technologies throughout every research stage (spanning literature review, hypothesis generation, experimentation, and manuscript writing) and incorporating internal reflection alongside external feedback, this system aims to significantly reduce the time and resources needed for scientific discovery. Building on the evolution from virtual AI scientists to versatile generalist AI-based robot scientists, AGS holds groundbreaking potential. As these autonomous systems become increasingly integrated into the research process, we hypothesize that scientific discovery might adhere to new scaling laws, potentially shaped by the number and capabilities of these autonomous systems, offering novel perspectives on how knowledge is generated and evolves. The adaptability of embodied robots to extreme environments, paired with the flywheel effect of accumulating scientific knowledge, holds the promise of continually pushing beyond both physical and intellectual frontiers.


Explain it Like I'm 14

Overview

This paper imagines a “robot scientist” that can do most parts of research on its own. The authors call it an Autonomous Generalist Scientist (AGS). Think of AGS as a smart team made of:

- AI “brains” that read, plan, and write, and

- robot “hands” that run real experiments in the lab.

The main idea is that combining powerful AI with capable robots could make scientific discovery much faster, cheaper, and more reliable. The authors suggest that, as more of these systems are built and improved, science might follow new “scaling laws,” meaning the pace of discovery could grow in a predictable way with the number and capabilities of these systems.

Key Objectives and Questions

The paper explores simple but big questions:

- How can we automate the full research cycle—from finding ideas, to testing them, to writing up results?

- What “levels” of automation make sense, from no AI at all to fully independent robot scientists?

- What tools are needed for getting the latest information online, doing safe and accurate lab work, and writing papers?

- How should science handle papers written or discovered by AI/robots—do we need special platforms?

- Do robot scientists need to look like humans to be useful in human-designed labs?

- Can robot scientists work in extreme places, like space, where humans can’t easily go?

Methods and Approach (Explained Simply)

The paper doesn’t run one big experiment; it proposes a framework and roadmap. Here’s how they break it down, in everyday terms:

- Levels of automation: The authors define six levels (0–5) to describe how involved AI and robots can be:

  - Level 0: Humans do everything with simple tools (like spreadsheets).

  - Level 1: AI helps with basic tasks (like searching for papers), but humans decide and control.

  - Level 2: AI “agents” can research online more independently, but still need strong human guidance.

  - Level 3: AI works with robots to run physical experiments, collaborating with humans.

  - Level 4: Semi-independent AI+robots do complex research with minimal supervision.

  - Level 5: Fully autonomous super-intelligent AI+robots lead new discoveries on their own (this is more a future idea than reality).

- Getting information (literature review): The paper suggests using “OS agents.” Think of them as super-smart assistants that operate a computer the way a person would: they browse the web, click, read, and collect fresh information instead of relying only on fixed databases. This helps the system stay up-to-date.

- Planning research: AI looks at what’s known and what’s missing, then proposes ideas and steps to test them. It refines plans by simulating different paths and getting feedback (from humans or other AI agents).

- Doing experiments: Robots act as the “hands” that follow the AI’s plan. They can handle lab equipment, measure things precisely, and repeat steps the same way each time. Before touching real materials, the AI runs virtual simulations—like practicing in a video game—to reduce mistakes and save time.

- Writing papers: AI turns data into charts and tables, organizes sections (introduction, methods, results, discussion), manages references, and prepares the manuscript for submission. It also supports peer review by checking for errors and asking specialized “critic” agents to review parts of the paper.

- Safety, ethics, and communication: The paper stresses safe robot behavior around people and lab equipment, ethical data use, and human oversight. It also suggests strong feedback loops—each step informs the next, like a well-coordinated team.

- Publishing machine-generated research: The authors propose a platform (aiXiv) for AI/robot-created papers and proposals to be reviewed by both humans and AI, with clear rules on responsibility and accuracy.
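The six levels above form an ordered scale, so they map naturally onto an enumeration. The sketch below encodes them as a Python `IntEnum` and assigns a level from a few capability flags. The flags (`uses_ai`, `acts_independently_online`, `runs_physical_experiments`, `human_oversight`) and the order of the checks are hypothetical illustrations chosen for this example, not measurable criteria from the paper; turning the taxonomy into testable criteria is listed below as an open question. In particular, Level 5 is approximated here by "no human oversight," whereas the paper defines it by super-human capability.

```python
from dataclasses import dataclass
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Levels 0-5 as summarized above (labels paraphrase the paper)."""
    MANUAL = 0            # humans do everything with simple tools
    AI_ASSISTED = 1       # AI helps with basic tasks; humans decide
    AGENTIC_VIRTUAL = 2   # AI agents research online with human guidance
    EMBODIED_COLLAB = 3   # AI + robots run physical experiments with humans
    SEMI_INDEPENDENT = 4  # AGIR: minimal supervision
    FULLY_AUTONOMOUS = 5  # ASIR: a future aspiration


@dataclass
class SystemProfile:
    """Hypothetical capability flags; NOT criteria defined by the paper."""
    uses_ai: bool
    acts_independently_online: bool
    runs_physical_experiments: bool
    human_oversight: str  # hypothetical values: "full", "collaborative", "minimal", "none"


def classify(p: SystemProfile) -> AutonomyLevel:
    """Map a profile to a level using a hypothetical decision order."""
    if not p.uses_ai:
        return AutonomyLevel.MANUAL
    if p.runs_physical_experiments:
        # Physical experimentation puts a system at Level 3 or above;
        # the degree of human oversight decides how far above.
        if p.human_oversight == "none":
            return AutonomyLevel.FULLY_AUTONOMOUS
        if p.human_oversight == "minimal":
            return AutonomyLevel.SEMI_INDEPENDENT
        return AutonomyLevel.EMBODIED_COLLAB
    if p.acts_independently_online:
        return AutonomyLevel.AGENTIC_VIRTUAL
    return AutonomyLevel.AI_ASSISTED


lab_bot = SystemProfile(True, True, True, "collaborative")
print(classify(lab_bot).name)  # EMBODIED_COLLAB
```

Using `IntEnum` keeps the levels comparable (for example, `level >= AutonomyLevel.EMBODIED_COLLAB`), which is convenient if safeguards are tied to a minimum level.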

Main Findings and Why They Matter

This is a vision paper, so it doesn’t claim to have built the final AGS. Its main “results” are ideas and structures:

- A clear roadmap and levels of automation: This helps researchers and policymakers talk about progress in a shared, precise way.

- OS agents could make literature reviews fresher and more complete than traditional API-based tools.

- Combining AI “brains” with robot “hands” could:

  - Speed up science (fewer slow, manual steps).

  - Improve reproducibility (robots repeat tasks consistently).

  - Boost cross-field thinking (AI connects ideas from different areas).

- Key challenges are identified and organized: perception and manipulation (robots seeing and handling things), independent reasoning, knowledge transfer, safety near humans, smooth human-robot communication, and ethics and law. Naming these clearly helps the community focus on what to fix.

- Humanoid vs. non-humanoid robots: Human-like shapes work well in human labs, but other designs might be better in special settings.

- Extreme environments: Robot scientists could do research in space or other harsh places where humans struggle.

- Scaling laws: The authors hypothesize that if AGS becomes common and powerful, scientific discovery could follow new scaling laws, with its pace shaped by the number and capabilities of these systems. If that holds, it is a big promise for faster progress.
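Scaling laws are commonly written as power laws, D = a·N^b, where N is a resource (here, hypothetically, the number of deployed AGS systems) and D an outcome (for example, validated discoveries per year). Taking logarithms gives log D = log a + b·log N, which is a straight line, so ordinary least squares on the log-transformed data estimates the exponent b. The sketch below assumes this functional form; the paper does not specify one, and the input values are synthetic numbers generated from a known law, not measurements.

```python
import math


def fit_power_law(xs, ys):
    """Fit y = a * x**b by ordinary least squares in log-log space.

    Returns (a, b). All inputs must be strictly positive.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired points")
    if any(v <= 0 for v in list(xs) + list(ys)):
        raise ValueError("power-law fit requires positive values")
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((u - mean_x) ** 2 for u in lx)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(lx, ly))
    b = sxy / sxx                      # slope in log-log space = scaling exponent
    a = math.exp(mean_y - b * mean_x)  # back-transformed intercept = prefactor
    return a, b


# Synthetic, noise-free data generated from y = 3 * x**0.7 (illustrative only).
systems = [1, 2, 4, 8, 16, 32]
output = [3 * k ** 0.7 for k in systems]
a, b = fit_power_law(systems, output)
print(f"a = {a:.3f}, b = {b:.3f}")  # a = 3.000, b = 0.700
```

With real, noisy data the fitted exponent is only meaningful over the observed range of N, and checking whether a power law fits better than alternatives (such as a saturating curve) is part of the open question of quantifying these laws.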

Implications and Potential Impact

If this vision comes true, science could change a lot:

- Faster discoveries: More ideas tested quickly could lead to breakthroughs in health, climate, materials, and beyond.

- Fairer access: Automated writing and analysis could help researchers with fewer resources publish high-quality work.

- New roles for humans: People might focus more on big-picture questions, ethics, creativity, and interpreting results, while AI+robots handle routine or risky tasks.

- Stronger safety and trust systems: Clear rules, human oversight, and careful testing will be crucial to avoid mistakes and misuse.

- Exploration beyond Earth: Robot scientists could expand our reach, turning distant places into new research sites.

In short, the paper lays out a staged roadmap for building AI+robot systems that can act like generalist scientists. Done responsibly, this could transform how we discover new knowledge, and how fast we do it.


Knowledge Gaps, Limitations, and Open Questions

Below is a single list of unresolved gaps and limitations that future researchers could concretely address to validate, operationalize, and safely scale the proposed Autonomous Generalist Scientist (AGS) paradigm.

- Quantify the proposed “scaling laws of scientific discovery”: define variables (e.g., compute, robot-hours, instrumentation diversity, corpus size), target outcomes (novelty, correctness, reproducibility, citation impact), functional forms, and empirical protocols to test them.

- Operationalize the autonomy-level taxonomy (Levels 0–5) with measurable criteria, standardized task suites (virtual and physical), pass/fail thresholds, and benchmarking procedures enabling reproducible classification.

- Establish end-to-end evaluation KPIs for AGS (from literature review to manuscript submission), including throughput, error rates, cost, energy use, and scientific quality metrics validated against independent human assessments.

- Design robust, legally compliant literature-review agents: access/paywall strategies, retraction detection, provenance tracking, versioned snapshots of sources, and policies for minimizing web-scraping/legal risk.

- Build automated fact-checking pipelines for LLM outputs: multi-source corroboration, citation verification, hallucination auditing, and benchmark datasets to measure factuality in scientific contexts.

- Create originality and “idea plagiarism” detection for proposal generation: novelty metrics (e.g., semantic distance from prior art), prior-claim matching, and human-in-the-loop safeguards to prevent derivative or unethical proposals.

- Translate hypotheses into testable predictions automatically: power analysis, sample-size calculations, experimental control selection, and pre-registration workflows integrated with AGS planning.

- Develop a formal lab Protocol DSL that compiles natural-language procedures into verified, executable robot action plans with static analysis, simulation-based validation, and runtime safety guarantees.

- Demonstrate robotic manipulation generalization across diverse lab instruments and materials: sterile technique, microfluidics, hazardous chemicals/biologicals, calibration routines, and error recovery under real lab variability.

- Advance perception for lab environments: multi-sensor fusion under occlusions/reflective surfaces, fine-grained state estimation (e.g., microliter-level liquids), anomaly detection, and uncertainty quantification during procedures.

- Integrate world models and LLMs with explicit representations of physics, constraints, and uncertainty: planning under partial observability, active learning for experiment selection, and robust sim-to-real transfer protocols.

- Formalize comprehensive safety cases for AGS: permissioning models, hard safety constraints, kill-switches, risk modeling, near-miss logging, and third-party safety audits spanning cyber-physical operations.

- Address ethical and legal compliance at runtime: GLP/GMP adherence, BSL-level certification for bio work, IRB/animal protocols, export control/dual-use governance, and clear accountability/liability frameworks.

- Harden AGS security: adversarial prompt defenses, data-poisoning detection, secure instrument drivers/firmware, supply-chain integrity, and resilience to cyber-physical attacks.

- Standardize provenance and reproducibility artifacts: machine-readable metadata (e.g., RO-Crate), instrument logs, environment snapshots, digital twins, and cross-lab replication protocols with independent verification.

- Create cost and sustainability models: CAPEX/OPEX for AGS deployment, energy footprint of compute/robotics, material consumption, ROI analyses, and strategies for equitable access in resource-limited settings.

- Improve interoperability with lab infrastructure: standardized instrument APIs (e.g., SiLA, OPC UA), driver abstractions, protocol portability across vendors, and avoiding vendor lock-in via open interfaces.

- Manage continual learning and model updates: drift detection, safe rollback/versioning, gated deployment after offline evaluation, and transparent change logs affecting scientific conclusions.

- Design human-robot collaboration UX: interpretable plans, adjustable autonomy, escalation policies, trust calibration tools, and interfaces that make AGS decisions auditable and contestable by humans.

- Validate automated manuscript generation: statistical checks, methods compliance, figure integrity, non-fabrication guarantees, accurate citation linking, plagiarism screening, and transparent disclosure policies acceptable to journals.

- Calibrate AI-based peer review simulations: demonstrate predictive validity against actual journal outcomes, minimize echo-chamber effects, ensure reviewer diversity, and measure bias/error in automated critique.

- Specify aiXiv-like platform governance: submission standards, metadata/DOI flows, moderation and spam prevention, machine-human attribution, licensing, and integration with traditional publishers and indexing services.

- Compare humanoid vs. non-humanoid embodiments in labs: controlled studies of task success, speed, energy use, safety, retrofitting costs, and human-collaboration quality in standard lab environments.

- Engineer AGS for extreme environments (space, deep-sea, high-radiation): radiation-hardening, autonomy under long communication delays, in-situ resource utilization, redundancy/maintenance strategies, and planetary protection compliance.

- Define robust impact metrics beyond publication count: novelty, correctness, societal benefit/risk, long-term replicability, and anti-gaming mechanisms to discourage superficial productivity.

- Map domain boundaries of AGS applicability: identify fields requiring irreplaceable human oversight (e.g., clinical trials), and design governance interfaces for hybrid human–AGS workflows.

- Secure inclusive data access: partnerships for paywalled and proprietary datasets, legal/ethical frameworks for sensitive data, and mechanisms to avoid privileging well-indexed Anglophone literature.

- Mitigate bias and inequity: assess how training corpora skew AGS research agendas, implement debiasing methods, and ensure equitable topic selection across global, linguistic, and disciplinary diversity.

- Implement biosecurity safeguards: DNA synthesis screening, pathogen risk controls, real-time dual-use risk classifiers, and compliance with international biosafety/biosecurity standards.

- Establish calibration ladders and traceability: periodic instrument/model calibration with NIST-traceable standards, drift monitoring, and documented maintenance affecting data validity.

- Compile a failure-mode taxonomy and remediation playbooks: contamination handling, waste disposal, environmental compliance, sample salvage, and automatic safe shutdown procedures.

- Coordinate multi-robot laboratories: scheduling, resource contention resolution, inter-robot safety protocols, fault isolation, and real-time communications resilience.

- Govern data lifecycle: retention policies, privacy compliance (GDPR/HIPAA), anonymization pipelines, and validity of synthetic data used in analysis or training.

- Enable public transparency and audits: publish protocol logs, model cards, safety reports, red-teaming outcomes, and third-party replication packages to build trust and external accountability.

- Evaluate workforce and education impacts: define new competencies, training standards, and pathways for researchers to effectively collaborate with and supervise AGS systems.
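One gap above is translating hypotheses into testable predictions with power analysis and sample-size calculations. As a minimal sketch of that step, the function below computes the per-group sample size for a two-sided comparison of two means using the standard normal approximation n = 2(z_(1-α/2) + z_(1-β))² / d², where d is the standardized effect size (difference in means divided by the standard deviation). The function name and defaults (α = 0.05, 80% power) are illustrative choices, not part of the paper; an exact t-test calculation gives slightly larger values for small samples.

```python
import math
from statistics import NormalDist


def samples_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Per-group n for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})**2 / d**2,
    rounded up, where d is the standardized effect size.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    if not (0 < alpha < 1 and 0 < power < 1):
        raise ValueError("alpha and power must lie in (0, 1)")
    z = NormalDist()                   # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for a two-sided test
    z_beta = z.inv_cdf(power)           # quantile matching the desired power
    return math.ceil(2 * (z_alpha + z_beta) ** 2 / effect_size ** 2)


print(samples_per_group(0.5))  # 63
```

An AGS planner could call a check like this before committing robot time, so that an experiment too small to detect the hypothesized effect is flagged at the proposal stage.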

Practical Applications

Below is an overview of practical, real-world applications implied by the paper’s framework for an Autonomous Generalist Scientist (AGS)—a fusion of agentic AI and embodied robotics that automates the research lifecycle (literature → proposal → experimentation → manuscript → reflection). Applications are grouped by deployment horizon and annotated with sectors, example tools/workflows, and feasibility assumptions or dependencies.

Immediate Applications

- AI-powered literature review and evidence synthesis

- Sectors: pharmaceuticals, materials science, biotech, software/AI, public policy

- Tools/workflows: OS agents for web navigation (e.g., OS-Copilot), multimodal models such as GPT-4V, and agent benchmarks such as OSWorld and VisualWebArena; search+scrape pipelines; LLM-based summarization and clustering; “living reviews”

- Assumptions/dependencies: compliance with publisher ToS and data licensing; robust browser automation; institutional access to paywalled content; human oversight for critical facts

- Automated gap analysis, hypothesis generation, and proposal drafting

- Sectors: academia, corporate R&D, government labs and grant offices

- Tools/workflows: multi-agent “ResearchAgent” loops; topic modeling and novelty scoring; Gantt/budget auto-generation; iterative review via agent panels

- Assumptions/dependencies: domain-tuned LLMs to reduce hallucinations; alignment with agency/journal guidelines; final human approval

- LLM-guided experimental design and parameter optimization

- Sectors: chemistry, synthetic biology, materials, semiconductors, energy storage

- Tools/workflows: LLM-in-the-loop design of experiments (DOE), Bayesian optimization; protocol drafting from literature; integration with ELN/LIMS

- Assumptions/dependencies: safe action spaces; validated priors; high-quality historical data; alignment with safety SOPs

- Semi-automated wet-lab execution with existing robotics

- Sectors: pharma/biotech CROs, synthetic biology foundries, academic core facilities

- Tools/workflows: robot platforms for liquid handling and sample prep (e.g., Chemistry3D/ORGANA-like setups); ROS-LLM for task orchestration; camera and force feedback for QA

- Assumptions/dependencies: instrument APIs and calibration; compliant enclosures and guards; safety guardrails (e.g., SafeVLA); human-in-the-loop supervision

- Sim2Lab pre-screening (simulation before wet-lab)

- Sectors: materials discovery, drug screening, catalysis, battery development

- Tools/workflows: physics/ML simulators; automated hypothesis pruning; “Sim2Real” transfer checklists

- Assumptions/dependencies: validated models; compute availability; reproducible pipelines; uncertainty quantification

- Reproducibility-by-design research pipelines

- Sectors: academic journals, pharma QA, regulatory submissions

- Tools/workflows: automated logging of parameters, sensor data, and versioned code/protocols; executable “protocol DSLs”; robot-executed reruns for reproducibility

- Assumptions/dependencies: shared metadata standards and ontologies; standardized lab interfaces; secure audit trails

- Manuscript automation suite (data-to-paper)

- Sectors: academia, industry research communications, publishers

- Tools/workflows: automated figure/table generation (e.g., MatPlotAgent, Data Interpreter), section drafting, reference normalization, journal-specific formatting and submission management

- Assumptions/dependencies: human fact-checking; access to citation databases; journal API integration; authorship/attribution policies

- Internal AI “peer review” and compliance checks

- Sectors: universities, journals, funders, corporate R&D governance

- Tools/workflows: agent panels critiquing statistics, methods, ethics; pre-registration and reporting checklists; journal-style decision simulation

- Assumptions/dependencies: domain-calibrated evaluators; explainability and audit logs; mechanisms to prevent model bias amplification

- Safety and compliance layers for lab autonomy

- Sectors: lab automation vendors, EHS departments, regulators

- Tools/workflows: policy-as-code; force/torque limits; real-time risk assessment; fail-safes and emergency stops; SafeVLA-like safety-aligned vision-language-action (VLA) models

- Assumptions/dependencies: reliable sensors; standards compliance (e.g., ISO/IEC); validated risk models; periodic safety audits

- Autonomy taxonomy for procurement and governance

- Sectors: regulators, funding bodies, RFP/RFI processes, institutional review boards

- Tools/workflows: adoption of Levels 0–5 autonomy in capability declarations; evaluation rubrics tied to level-specific safeguards

- Assumptions/dependencies: community consensus on definitions; mapping to liability and compliance frameworks

- Research education assistants (Auto-TA for methods)

- Sectors: higher education, research training programs

- Tools/workflows: guided literature reviews, proposal scaffolds, data visualization tutoring, writing support

- Assumptions/dependencies: academic integrity policies; detection/attribution tooling; instructor oversight

- Knowledge management: AI-curated lab notebooks and living protocols

- Sectors: all research labs; tech transfer offices

- Tools/workflows: ELN/LIMS integration; automated protocol updates; “diffs” and provenance tracking

- Assumptions/dependencies: vendor integrations; privacy and IP protection; role-based access control
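The “policy-as-code” safety layer listed above can be pictured as a gate that every proposed robot action must pass before execution. The sketch below checks an action against hard limits on force and on distance to people (the kinds of constraints the glossary mentions for AutoRT) plus a reagent deny-list. The `Action` schema, its field names, and all numeric thresholds are hypothetical placeholders, not values from any standard or from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A proposed robot action (hypothetical schema for illustration)."""
    name: str
    max_force_n: float           # peak commanded force, newtons
    min_human_distance_m: float  # closest predicted distance to a person, metres
    reagent: str = ""


@dataclass
class SafetyPolicy:
    """Hard limits; these numbers are placeholders, not certified values."""
    force_limit_n: float = 50.0
    human_clearance_m: float = 0.5
    banned_reagents: frozenset = frozenset({"unapproved_pathogen"})


def check(action: Action, policy: SafetyPolicy) -> list[str]:
    """Return every violated rule; an empty list means the action may proceed."""
    violations = []
    if action.max_force_n > policy.force_limit_n:
        violations.append(
            f"force {action.max_force_n} N exceeds {policy.force_limit_n} N")
    if action.min_human_distance_m < policy.human_clearance_m:
        violations.append("too close to a human collaborator")
    if action.reagent in policy.banned_reagents:
        violations.append(f"reagent '{action.reagent}' is not permitted")
    return violations


policy = SafetyPolicy()
pipette = Action("aspirate", max_force_n=5.0, min_human_distance_m=1.2)
print(check(pipette, policy))  # []
gripper = Action("open_cap", max_force_n=80.0, min_human_distance_m=0.3)
print(len(check(gripper, policy)))  # 2
```

Returning the full list of violations, rather than stopping at the first one, gives the human supervisor or the planning agent enough context to revise the plan.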

Long-Term Applications

- Semi-independent AGS-driven labs (Level 4)

- Sectors: pharma, advanced materials, energy R&D, national labs

- Tools/workflows: 24/7 autonomous hypothesis → experiment → analysis cycles; multi-robot orchestration; autonomous resource scheduling

- Assumptions/dependencies: general-purpose dexterous manipulation; robust world models; regulatory acceptance of minimal human oversight

- Fully autonomous robot scientists (Level 5) discovering new principles

- Sectors: frontier science; national innovation programs

- Tools/workflows: self-directed cross-domain research; autonomous exploration of vast hypothesis spaces; continual learning flywheels

- Assumptions/dependencies: artificial superintelligence, which remains speculative; stringent ethical and governance frameworks; fail-safe design

- Extreme-environment research (space, deep sea, polar, nuclear)

- Sectors: space agencies, energy, environmental science, defense

- Tools/workflows: radiation-hardened, pressure/temperature-tolerant platforms; long-latency autonomy; on-site sample analysis and fabrication

- Assumptions/dependencies: ruggedized hardware; reliable power; autonomous maintenance; comms intermittency management

- aiXiv-like platforms for machine-generated research and proposals

- Sectors: scholarly publishing, funding agencies, policy

- Tools/workflows: hybrid AI+human review pipelines; machine-readable methods and verification badges; attribution/provenance ledgers

- Assumptions/dependencies: accepted credit and responsibility models; tamper-resistant verification; community buy-in

- Humanoid lab technicians for human-centric facilities

- Sectors: lab automation providers, clinical labs, hospitals

- Tools/workflows: anthropomorphic bimanual manipulation; tool use without retrofits; natural human-robot collaboration

- Assumptions/dependencies: reliable dexterity and safety certification; cost-effective hardware; HRI fluency

- Cross-domain generalist experimentation via world models + VLMs

- Sectors: contract research organizations; on-demand “lab-as-a-service”

- Tools/workflows: rapid adaptation to new protocols from language and few-shot demos; plug-and-play lab modules; semantic affordance libraries

- Assumptions/dependencies: strong generalization and transfer; standardized instrument semantics; continual learning without catastrophic forgetting

- Regulatory sandboxes and international standards for AGS

- Sectors: governments, standards bodies (ISO/IEC), insurers

- Tools/workflows: liability and safety frameworks; graded autonomy permissions; data provenance and audit standards; ethics-by-design toolkits

- Assumptions/dependencies: multi-stakeholder consensus; harmonization across jurisdictions; effective enforcement mechanisms

- Discovery scaling networks (auto-innovation flywheels)

- Sectors: industry consortia, national labs, open-science alliances

- Tools/workflows: federated AGS nodes sharing hypotheses, datasets, and protocols; reproducibility incentives; automated negative result capture

- Assumptions/dependencies: interoperable ontologies; IP and data-sharing agreements; cybersecurity and trust frameworks

- Patient-specific research and rapid translational pipelines

- Sectors: precision medicine, diagnostics, personalized therapeutics

- Tools/workflows: on-demand assays and compound synthesis; closed-loop from clinical data → hypothesis → bench tests

- Assumptions/dependencies: CLIA/GMP compliance; clinical validation; data privacy and consent

- Autonomous micro-labs for education and workforce development

- Sectors: K–12, higher ed, vocational training

- Tools/workflows: safe, low-cost robot labs; guided inquiry curricula; remote access for underserved communities

- Assumptions/dependencies: hardware cost declines; safety and robustness; curricular standards and teacher training

- Closed-loop R&D-to-manufacturing integration

- Sectors: automotive, aerospace, electronics, advanced manufacturing

- Tools/workflows: digital thread from CAD/CAE → materials/process discovery → pilot lines; automated DOE on production tooling

- Assumptions/dependencies: MES/PLM integration; robust metrology feedback; change-control governance

- Ethical co-authorship, provenance, and credit systems

- Sectors: academia, publishers, research funders

- Tools/workflows: contribution ledgers (e.g., ORCID extensions), data/model/code provenance chains, audit-ready author declarations

- Assumptions/dependencies: community standards and infrastructure; verifiable attribution; incentive alignment for adoption
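Attribution, provenance, and contribution ledgers appear in several long-term applications above. One minimal way to make such a ledger tamper-evident is a hash chain: each record stores the SHA-256 hash of the previous record, so editing any earlier entry breaks every later link. The sketch below uses only Python's standard library; the record fields (`contributor`, `role`, `artifact`) and the example values are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(entry: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so equal content hashes equally.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(ledger: list, contributor: str, role: str, artifact: str) -> dict:
    """Append a contribution record linked to the previous record's hash."""
    prev = ledger[-1]["hash"] if ledger else GENESIS
    entry = {"contributor": contributor, "role": role,
             "artifact": artifact, "prev": prev}
    entry["hash"] = _digest(entry)  # hash covers every field except "hash" itself
    ledger.append(entry)
    return entry


def verify(ledger: list) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev = GENESIS
    for entry in ledger:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


ledger = []
append(ledger, "AGS-agent-1", "experiment", "run-042.csv")
append(ledger, "Dr. Example", "review", "manuscript-v2.pdf")
print(verify(ledger))  # True
ledger[0]["artifact"] = "run-043.csv"  # simulate tampering
print(verify(ledger))  # False
```

A hash chain only detects edits: anyone who rewrites the whole chain can recompute every hash, so a real system would also need signatures or periodic anchoring to an external, trusted record.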

Notes on cross-cutting feasibility factors

- Reliability and safety: Requires strong guardrails, formal risk models, and standards compliance to operate around people and hazardous materials.

- Data and access: Publisher access, high-quality training data, and secure instrument APIs are critical; privacy and IP constraints must be managed.

- Human oversight and ethics: Persistent need for human governance, especially for attribution, accountability, and societal impact.

- Interoperability: Success depends on shared ontologies, metadata schemas, and instrument/control standards across vendors and labs.

- Cultural adoption: Journals, regulators, educators, and institutions must accept machine-assisted workflows and outputs, with transparent auditing.

Glossary

- AIXIV: A specialized platform concept for coordinating AI/robot-generated research with traditional publishers. "AIXIV (Fig.~\ref{aixiv_framework}) represents a potential intermediary framework connecting autonomous research systems with established scientific publishers."

- Agentic AI: AI systems designed to act autonomously with goal-directed behavior and decision-making. "We envision an autonomous generalist scientist (AGS) system, a fusion of agentic AI and embodied robotics, that redefines the research lifecycle."

- AGI Robots (AGIR): Semi-independent robots with general intelligence capable of conducting sophisticated research across domains. "Level 4 introduces semi-independent systems (AGIR - AGI Robots) capable of conducting sophisticated research across both virtual and physical domains with minimal oversight, generating novel insights through cross-disciplinary data integration."

- Anthropomorphic design: Human-like robot form factor that aids intuitive human-robot collaboration. "anthropomorphic design facilitates intuitive human-robot collaboration through familiar physical characteristics and movement patterns."

- Artificial SuperIntelligence Robots (ASIR): Fully autonomous robotic systems surpassing human capabilities, able to drive groundbreaking research independently. "Finally, Level 5 represents fully autonomous Artificial SuperIntelligence Robots (ASIR) that exceed human capabilities, independently driving groundbreaking research and potentially discovering new scientific principles, though this level presents significant technical and ethical challenges and remains a future aspiration."

- Asilomar AI Principles: A set of widely referenced ethical guidelines for responsible AI development and deployment. "System development must adhere to established ethical guidelines, like the 23 Asilomar AI Principles, while maintaining compliance with applicable regulatory structures and international standards for advanced AI applications."

- AutoRT: A DeepMind system that uses foundation models to orchestrate fleets of robots performing real-world tasks, with safety rules (a “robot constitution”) and runtime constraints governing what the robots may do. "Advanced systems including DeepMind's AutoRT \cite{ahn2024autort} implement comprehensive safety protocols, such as force limitation mechanisms and human-proximity operational constraints."

- Autonomous Generalist Scientist (AGS): An integrated AI-robotic system intended to automate the entire research lifecycle across digital and physical domains. "We envision an autonomous generalist scientist (AGS) system, a fusion of agentic AI and embodied robotics, that redefines the research lifecycle."

- Bilateral manipulation capabilities: Robotic ability to coordinate two manipulators/hands for precise experimental procedures. "Advanced bilateral manipulation capabilities enable precise experimental procedures across diverse scientific disciplines, while anthropomorphic design facilitates intuitive human-robot collaboration through familiar physical characteristics and movement patterns."

- Chain of Thought reasoning: A prompting technique that elicits step-by-step reasoning in LLMs. "The framework incorporates Chain of Thought reasoning \cite{wei2022chain} to break down complex experimental procedures into logical, sequential operations, ensuring methodological coherence throughout the process."

- Computational Simulation: Pre-experimental virtual modeling used to test scenarios before physical implementation. "Computational Simulation: Pre-experimental virtual modeling, similar to approaches in \cite{jablonka202314}, enables scenario testing before physical implementation."

- Embodied robotics: Robotic systems capable of physical interaction and manipulation integrated with AI reasoning. "We envision an autonomous generalist scientist (AGS) system, a fusion of agentic AI and embodied robotics, that redefines the research lifecycle."

- Foundation models: Large pretrained models with broad, general-purpose capabilities that can be adapted across tasks and domains. "The autonomous generalist scientist presents a groundbreaking framework harnessing the comprehensive interdisciplinary knowledge within foundation models, while synergizing AI agent capabilities with embodied robotic systems to automate scientific inquiry across digital and physical domains."

- Flywheel effect: A reinforcing feedback loop where progress accelerates as accumulated knowledge and capability grow. "AGS could unlock new scaling laws and a flywheel effect in knowledge growth (Fig.\ref{ags_paradigm}), transforming how science is done."

- Humanoid design: Robot morphology modeled on human form, advantageous for human-centric environments and tools. "The necessity of humanoid design for AGS presents an intriguing technological consideration."

- Iterative Enhancement Mechanisms: Systematic self-improvement processes driven by continuous evaluation and adjustment. "Iterative Enhancement Mechanisms: Through systematic self-evaluation integration, the research platform progressively refines its operational capabilities."

- LLMs (large language models): Deep neural models trained on vast text corpora that enable advanced language understanding, generation, and reasoning. "Recent advances in AI, particularly LLMs ~\cite{wang2024survey}, offer a game-changer."

- LLM-based embodied AI: Integration of LLMs with embodied agents to connect high-level reasoning with physical interaction. "LLM-based embodied AI represents a promising path toward real-world AGI by connecting high-level reasoning with physical interaction."

- Multimodal agents: Agents that process and act on multiple modalities (e.g., vision and text) in complex digital environments. "Multimodal agents, such as VisualWebArena ~\cite{koh2024visualwebarena} and OSWorld ~\cite{xie2024osworld}, navigate sites and fetch up-to-date data directly."

- Operational Security: Safety practices and risk-aware behaviors ensuring robots operate safely near humans and in unstructured environments. "Operational Security. Human-robot collaboration introduces potential safety concerns, particularly in unstructured environments \cite{wu2024safety}."

- OS agents: Software agents that interact with operating systems and web interfaces like a human to perform searches and manipulations. "To overcome these hurdles, OS agents mimic human-like interactions with digital platforms, moving beyond static API limits."

- Reproducibility: The ability to consistently replicate experimental outcomes across conditions and implementations. "Scientific Reproducibility Assurance: The framework prioritizes experimental reproducibility and accuracy as scientific cornerstones."

- ROS-LLM: An integration approach combining Robot Operating System (ROS) with LLMs to enhance robotic planning and reasoning. "Integration frameworks such as ROS-LLM \cite{mower2024ros} demonstrate how LLMs can enhance robotic systems for structured experimental reasoning and dynamic decision processes."

- SafeVLA: A safety-alignment framework for vision-language-action (VLA) models, designed to protect environments, hardware, and human collaborators. "The SafeVLA framework \cite{zhang2025safevla} integrates safety considerations into vision-language architectures to protect environmental elements, hardware systems, and human collaborators."

- Scaling laws: Empirical relationships describing how capabilities or discovery rates scale with resources, data, or model size. "We foresee a future where scientific discovery follows new scaling laws, driven by the proliferation and sophistication of such systems."

- Topic modeling: An unsupervised NLP technique for discovering latent themes in large text corpora. "Using topic modeling and clustering, the system narrows broad topics into focused queries, ensuring the research is both timely and set to advance the field."

- Vision-language architectures: Model designs that jointly process visual and textual inputs to reason and act. "The SafeVLA framework \cite{zhang2025safevla} integrates safety considerations into vision-language architectures to protect environmental elements, hardware systems, and human collaborators."

- Vision-LLMs: AI models that combine visual and textual modalities for perception, understanding, and decision-making. "Vision-LLMs \cite{ma2024survey} and public robotics datasets \cite{padalkar2023open} support this development but face generalizability limitations."

- World model research: Study of learned environment representations enabling agents to predict outcomes and plan actions. "Concurrently, world model research focuses on environmental interaction learning, enabling robots to form internal representations of surroundings and predict action outcomes."
