- The paper presents an explainable AI (XAI) approach for UNet2, a deep learning weather forecasting model, to improve its interpretability and operational usefulness.

- It employs Integrated Gradients for feature attribution and Local Temperature Scaling to calibrate output confidence and mitigate overconfidence.

- A user-centered design process produces an interface system, evaluated in a study with meteorological experts, that makes precipitation predictions more transparent.

"Explainable AI-Based Interface System for Weather Forecasting Model" (2504.00795)

Introduction

Combining Explainable AI (XAI) with weather forecasting models can make deep learning predictions, particularly precipitation predictions, easier for forecasters to interpret and trust, and therefore more usable in real-world forecasting. This summary examines the development of a user-centered XAI interface that aims to improve the operational value of a weather prediction model by pairing each user need with a suitable explanation method.

Model and Data

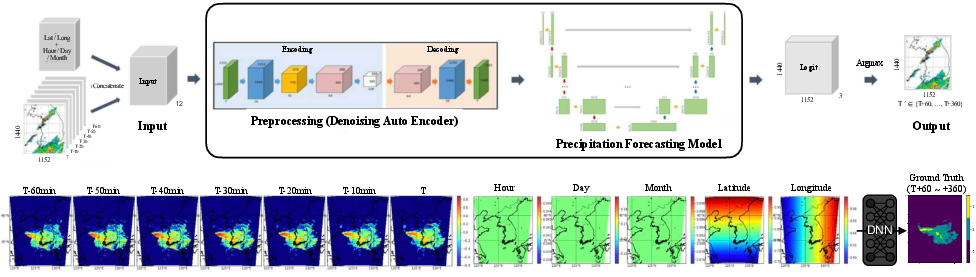

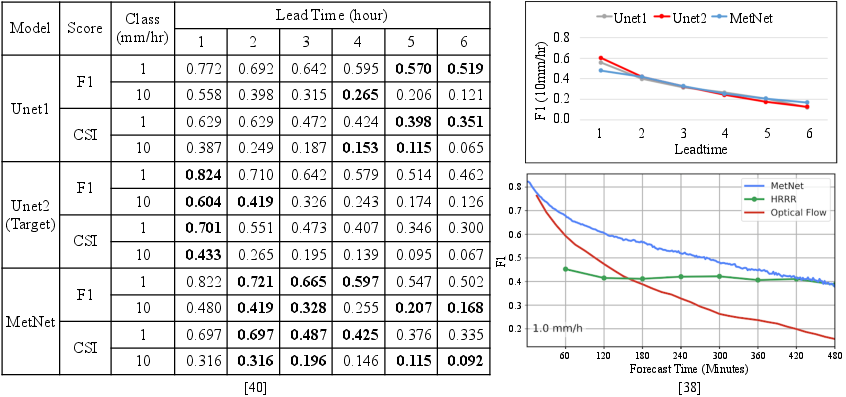

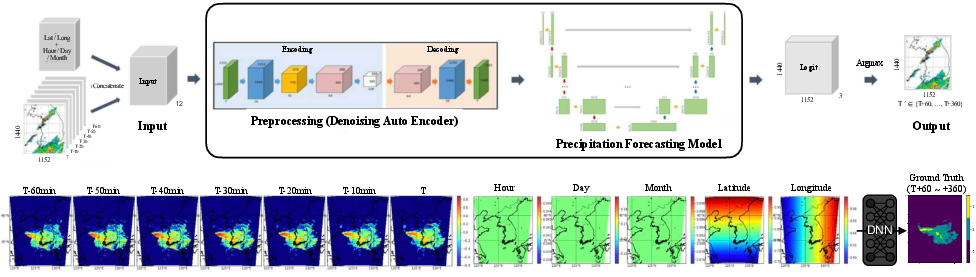

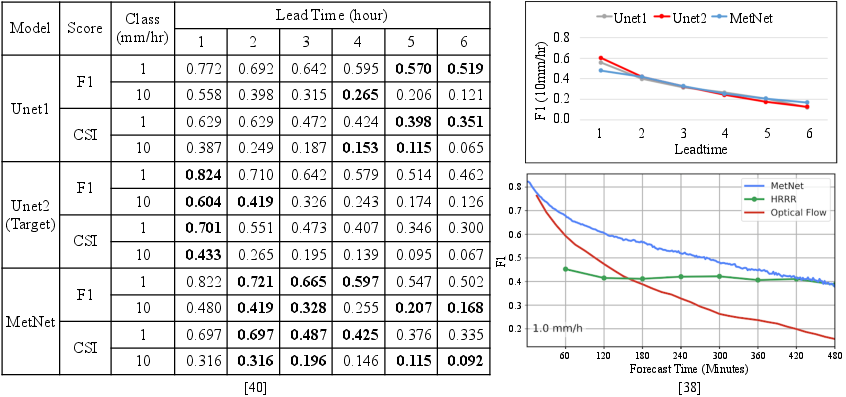

The research centers on UNet2, a UNet-based model for short-term rainfall intensity prediction. It is trained on radar synthesis data from 2020 and takes a multi-channel input that combines spatial and temporal features. UNet2 predicts rainfall in three intensity classes (no rain, light rain, and heavy rain) at hourly intervals over a six-hour horizon. Its performance is reported as competitive, with F1 scores comparable to those of MetNet and the HRRR numerical model, particularly in heavy rainfall scenarios.

Figure 1: The target precipitation forecasting model and data. The data consists of radar hybrid scan reflectivity.

Figure 2: The performance of the target model. UNet1 and UNet2, built by NIMS, are comparable to MetNet and the HRRR numerical model for very short-term predictions.
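To make the F1 comparison concrete, the sketch below computes a confusion matrix and per-class F1 scores for the three intensity classes. It is a minimal illustration on hand-made toy labels, not the paper's evaluation code; the function names `confusion_matrix` and `per_class_f1` and the label arrays are assumptions introduced here.

```python
import numpy as np

# Class indices follow the three intensity classes named in the text.
CLASSES = ["no rain", "light rain", "heavy rain"]

def confusion_matrix(y_true, y_pred, n_classes=3):
    """Count matrix with true classes on rows and predicted classes on columns."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)  # accumulates repeated index pairs
    return cm

def per_class_f1(cm):
    """F1 = 2*TP / (2*TP + FP + FN) for each class; 0 where undefined."""
    tp = np.diag(cm).astype(float)
    fp = cm.sum(axis=0) - tp  # predicted as the class, but wrong
    fn = cm.sum(axis=1) - tp  # belongs to the class, but missed
    denom = 2 * tp + fp + fn
    return np.divide(2 * tp, denom, out=np.zeros_like(tp), where=denom > 0)

# Toy labels (hypothetical, for illustration only).
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 2, 2, 2])
y_pred = np.array([0, 0, 0, 1, 1, 1, 2, 2, 2, 0])

cm = confusion_matrix(y_true, y_pred)
f1 = per_class_f1(cm)
for name, score in zip(CLASSES, f1):
    print(f"{name}: F1 = {score:.3f}")
```

Reporting F1 per class rather than overall accuracy matters for this task because heavy rain is typically rare, so a model could score high accuracy while missing most heavy rain events.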

Explanation Requirements and XAI Methodology

User-Centric Explanation Framework

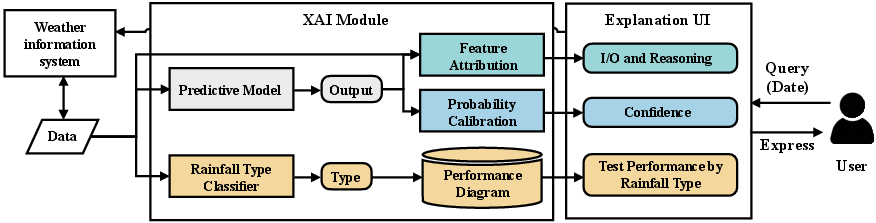

User feedback shapes the XAI framework, which centers on three user needs: model performance by rainfall type, the reasoning behind an output, and the confidence of an output. Each need is mapped to an XAI method chosen to address it, so that every explanation answers a question users actually asked.

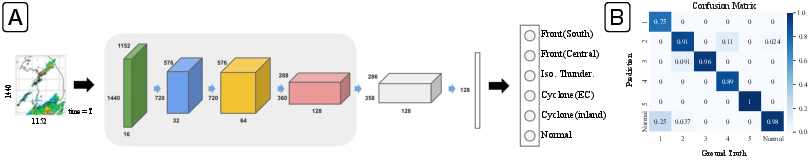

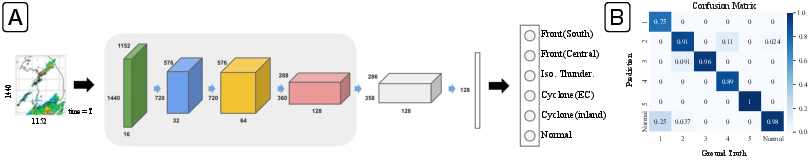

A classifier categorizes rainfall scenarios so that model performance can be compared across precipitation types. This breakdown exposes model biases and shows how consistent performance is across different weather patterns.

Figure 3: The structure of the precipitation classifier (A) and the resulting confusion matrix (B).
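One way to read the performance-by-type idea is as a grouped average: each sample gets a rainfall type from the classifier, and the forecast model's score is averaged within each type. The sketch below assumes integer type IDs and a per-sample correctness flag; the helper `score_by_type`, the type names, and the toy arrays are hypothetical and do not come from the paper.

```python
import numpy as np

def score_by_type(type_ids, correct, n_types):
    """Mean accuracy and sample count per rainfall type.

    type_ids: integer type assigned to each sample by a scenario classifier.
    correct:  1 if the forecast model was right on that sample, else 0.
    Types with no samples get NaN instead of a misleading 0.
    """
    type_ids = np.asarray(type_ids)
    correct = np.asarray(correct, dtype=float)
    counts = np.bincount(type_ids, minlength=n_types)
    hits = np.bincount(type_ids, weights=correct, minlength=n_types)
    acc = np.full(n_types, np.nan)
    seen = counts > 0
    acc[seen] = hits[seen] / counts[seen]
    return acc, counts

# Placeholder type names; the paper's actual categories are not listed here.
TYPE_NAMES = ["type A", "type B", "type C"]
type_ids = [0, 0, 0, 0, 1, 1, 2, 2, 2, 2]
correct = [1, 1, 1, 0, 1, 0, 1, 1, 1, 1]

acc, counts = score_by_type(type_ids, correct, n_types=len(TYPE_NAMES))
for name, a, n in zip(TYPE_NAMES, acc, counts):
    print(f"{name}: accuracy = {a:.2f} over {n} samples")
```

Showing the sample count next to each score helps a forecaster judge whether a weak score reflects a real bias or just a small group.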

Output Reasoning Through Feature Attribution

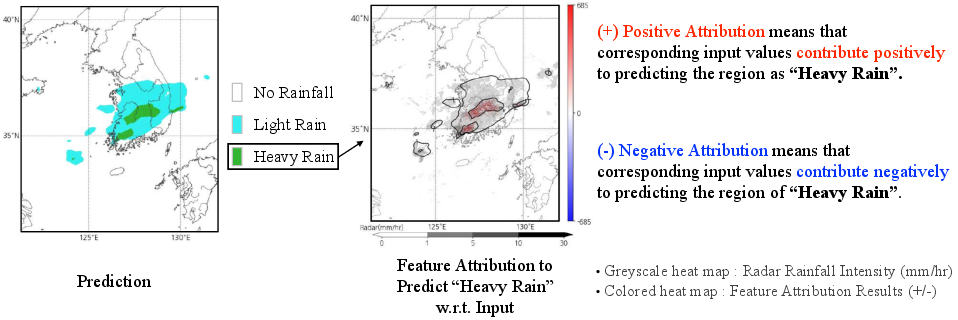

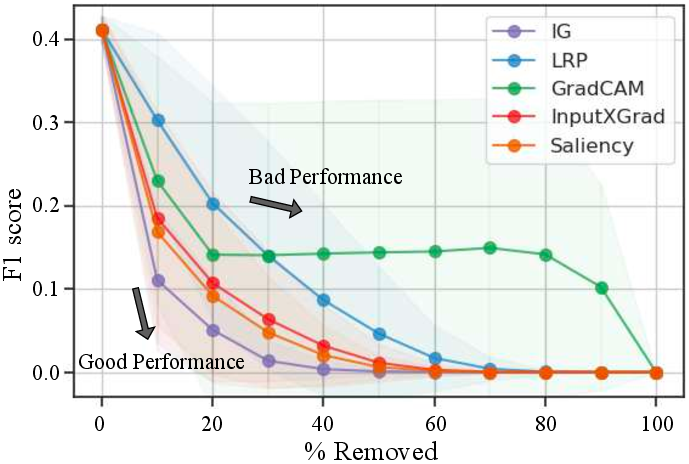

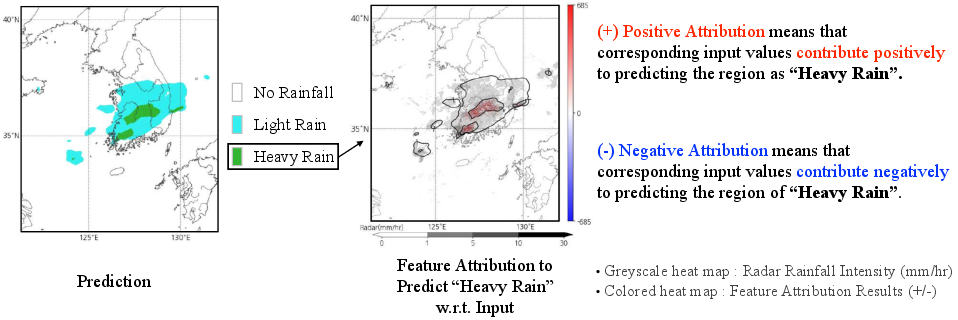

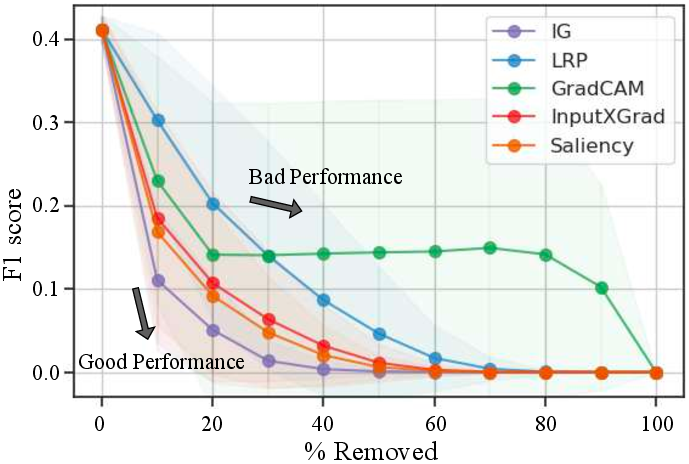

Feature attribution explains a prediction by estimating how much each input feature contributed to it. Among the attribution methods compared, Integrated Gradients performs best in both the quantitative and the qualitative evaluation. The resulting heatmaps show which input regions drove a prediction, which lets forecasters relate the model's output to the underlying meteorological phenomena.

Figure 4: Feature attribution. The heatmap describes the location and the degree of relevance of the inputs as the cause of the trained model prediction.

Figure 5: Quantitative evaluation of the output reasoning explanation from different feature attribution methods.
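As a rough illustration of how Integrated Gradients works, the sketch below averages the model's input gradients along a straight path from a baseline to the input and multiplies the result by the input difference. It assumes a toy logistic model with an analytic gradient in place of UNet2, an all-zero baseline, and a midpoint Riemann sum; the weights, inputs, and function names are made up for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def model(x, w, b):
    """Toy differentiable model: a single probability from a flat input."""
    return sigmoid(x @ w + b)

def model_grad(x, w, b):
    """Analytic gradient of the toy model with respect to its input."""
    p = model(x, w, b)
    return p * (1.0 - p) * w

def integrated_gradients(x, baseline, w, b, steps=200):
    """Approximate the path integral of gradients with a midpoint Riemann sum."""
    alphas = (np.arange(steps) + 0.5) / steps            # midpoints in (0, 1)
    path = baseline + alphas[:, None] * (x - baseline)   # (steps, n_features)
    grads = np.stack([model_grad(p, w, b) for p in path])
    return (x - baseline) * grads.mean(axis=0)

# Hypothetical weights and input; feature 2 has zero weight on purpose.
w = np.array([2.0, -1.0, 0.0, 0.5])
b = -0.5
x = np.array([1.0, 0.5, 3.0, 2.0])
baseline = np.zeros_like(x)

attr = integrated_gradients(x, baseline, w, b)
gap = model(x, w, b) - model(baseline, w, b)
print("attributions:", np.round(attr, 4))
print("sum of attributions:", round(float(attr.sum()), 4), "output change:", round(float(gap), 4))
```

The attributions sum to approximately the change in model output between the baseline and the input (the completeness property), which gives a simple sanity check for any implementation. For an image-like radar input, the same per-feature values would be reshaped into the heatmap form described above.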

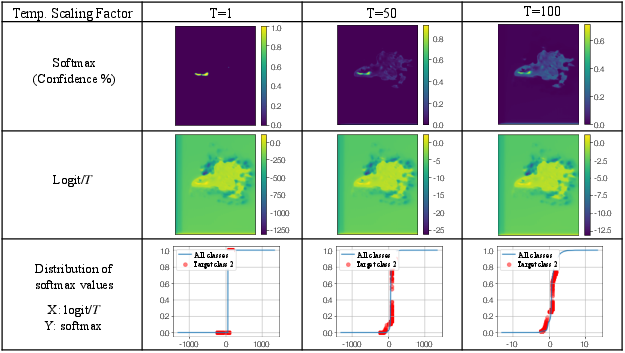

Confidence Calibration

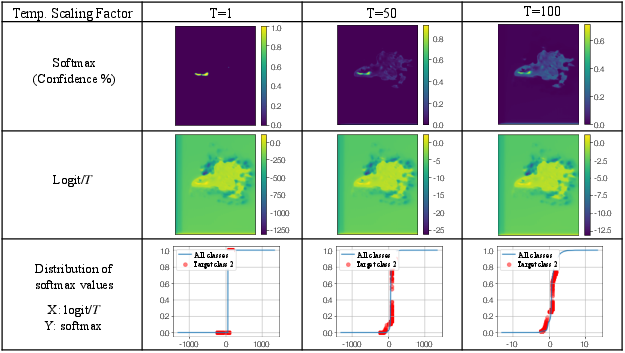

Deep neural networks are often overconfident: the probabilities they report are higher than the frequency with which they are actually correct. The study applies temperature scaling, specifically Local Temperature Scaling (LTS), to calibrate the model's confidence so that it better matches observed outcomes. Because dividing the logits by a positive temperature does not change which class scores highest, the predicted classes, and therefore the performance metrics, are unaffected.

Figure 6: The principle of temperature scaling. The softmax probability is scaled by a scalar parameter to reduce overconfidence.
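The mechanism can be sketched in a few lines: the logits are divided by a temperature before the softmax, and a temperature above 1 flattens the resulting probabilities. The example below assumes logits of shape (H, W, C) and accepts either a single scalar temperature or one temperature per location, which is the idea behind the local variant. The temperature values here are hand-picked for illustration; in practice they would be fitted on held-out data.

```python
import numpy as np

def softmax(logits, axis=-1):
    """Numerically stable softmax."""
    z = logits - logits.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def temperature_scale(logits, temperature):
    """Softmax of logits / T for logits of shape (H, W, C).

    temperature: a positive scalar (global scaling) or an (H, W) array
    with one temperature per location (local scaling).
    """
    t = np.asarray(temperature, dtype=float)
    if np.any(t <= 0):
        raise ValueError("temperature must be positive")
    if t.ndim > 0:
        t = t[..., None]  # broadcast each location's T over the class axis
    return softmax(logits / t, axis=-1)

# One row of two pixels, three classes (toy logits).
logits = np.array([[[4.0, 1.0, 0.0],
                    [0.5, 2.5, 0.0]]])
local_t = np.array([[2.0, 1.0]])  # soften pixel 0, leave pixel 1 unchanged

raw = softmax(logits)
calibrated = temperature_scale(logits, local_t)
print("raw max confidence:       ", raw.max(axis=-1))
print("calibrated max confidence:", calibrated.max(axis=-1))
```

In this toy case the first pixel's top-class confidence drops while its predicted class stays the same, and the second pixel is untouched because its temperature is 1.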

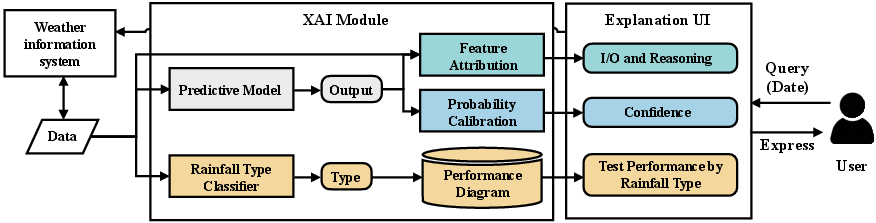

XAI Interface System Development

The interface system combines the XAI explanations with user-friendly visualizations. Its interactive components present model performance, output reasoning, and output confidence, with the aim of improving user trust and understanding. The user study with meteorological experts highlights the need for explanations that are intuitive and quick to interpret, which is essential in high-stakes decision-making.

Figure 7: Use case diagram for user interface and XAI modules.

Conclusion

This research shows the value of user-centered design when deploying XAI systems for meteorological applications. By aligning explanation methods with user requirements, the study makes a weather prediction model more useful in operation. Challenges remain in meeting the diverse needs of users and in improving model accuracy, but the study provides a practical framework for integrating XAI into forecasting systems. Future work can build on this foundation to strengthen the link between AI systems and meteorological expertise.