- The paper demonstrates that integrating Hebbian learning with self-organized criticality (SOC) yields emergent connectome dynamics consistent with empirical neuronal avalanches.

- The model uses a sandpile-based approach on hierarchical modular networks with directed, weighted connections to mimic realistic neural behavior.

- Avalanche sizes and edge weights follow power-law distributions, while node degree and strength follow lognormal trends, matching statistical features of experimental connectome data.

Learning and Criticality in a Self-Organizing Model of Connectome Growth

Introduction

The paper presents a computational framework for simulating brain-like networks that self-organize and learn, with the aim of exploring features of structural and functional brain connectivity. It extends sandpile models, commonly used to study self-organized criticality (SOC), to more realistic network architectures that reflect neuronal connectivity, and it employs Hebbian learning to capture the relationship between neuron-firing dynamics and network connectivity.

Model Description

The model implements a sandpile-based approach on hierarchical modular networks (HMNs), aiming to replicate the avalanche-like dynamics observed in neuronal systems. It departs from traditional lattice-based implementations by using directed, weighted connections that represent synaptic strengths. Hebbian learning plays a central role: it operationalizes the principle of "fire together, wire together" by strengthening or weakening synaptic connections according to activation patterns.
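The ingredients described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: a random directed graph stands in for the hierarchical modular network, and the toppling `threshold`, the `dissipation` probability, the learning rate `lr`, and the weight `decay` are hypothetical parameters chosen only to make the example run.

```python
import numpy as np

def run_sandpile(n_nodes=50, n_grains=1000, threshold=4, p_edge=0.1,
                 lr=0.01, decay=1e-4, dissipation=0.05, seed=0):
    """Toy sandpile on a directed, weighted graph with a Hebbian-style rule.

    All parameter names and default values are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    # Assumption: a random directed graph replaces the hierarchical modular network.
    adj = rng.random((n_nodes, n_nodes)) < p_edge
    np.fill_diagonal(adj, False)
    weights = adj.astype(float)           # weights[i, j] is the strength of edge i -> j
    load = np.zeros(n_nodes, dtype=int)   # grains currently held by each node
    sizes = []                            # number of topplings per avalanche

    for _ in range(n_grains):
        load[rng.integers(n_nodes)] += 1  # slow drive: drop one grain on a random node
        toppled = 0
        active = np.flatnonzero(load >= threshold)
        while active.size:
            for i in active:
                load[i] -= threshold
                toppled += 1
                targets = np.flatnonzero(adj[i])
                if targets.size == 0:
                    continue              # no outgoing edges: the grains leave the system
                p = weights[i, targets] / weights[i, targets].sum()
                for _ in range(threshold):
                    if rng.random() < dissipation:
                        continue          # grain is lost, so avalanches stay finite
                    j = rng.choice(targets, p=p)
                    load[j] += 1
                    weights[i, j] += lr   # strengthen the edge that carried activity
            active = np.flatnonzero(load >= threshold)
        weights[adj] *= 1.0 - decay       # slow decay weakens edges that stay unused
        if toppled:
            sizes.append(toppled)
    return np.array(sizes), weights

sizes, weights = run_sandpile(n_nodes=30, n_grains=300)
print(len(sizes), "avalanches recorded")
```

Routing grains in proportion to the current weights is one simple way to couple dynamics and structure; whether it matches the paper's update rule is not established by this summary.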

Avalanche Dynamics

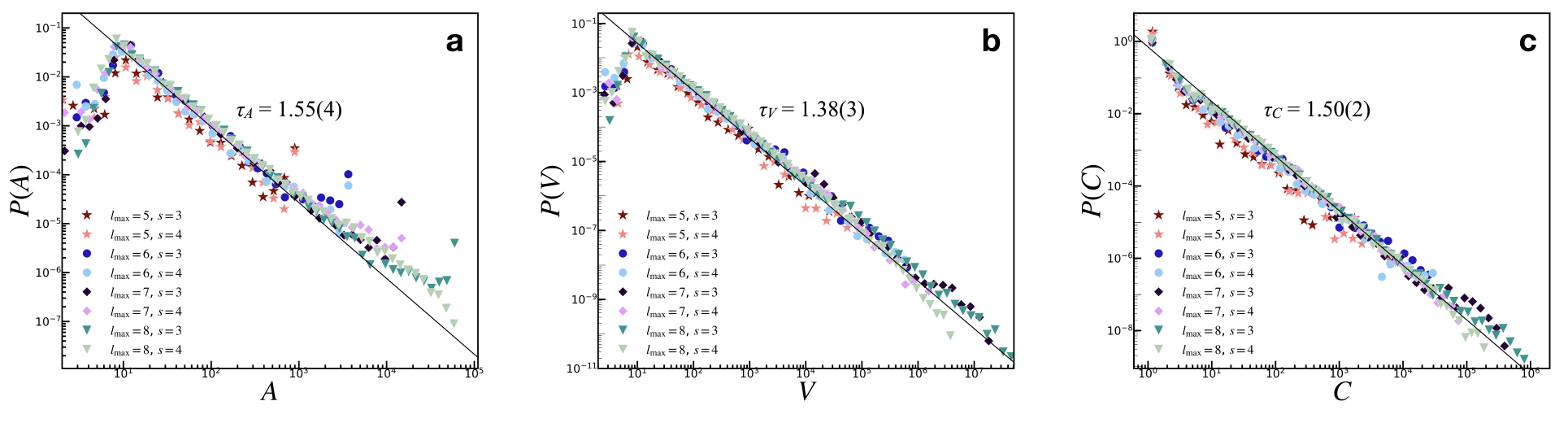

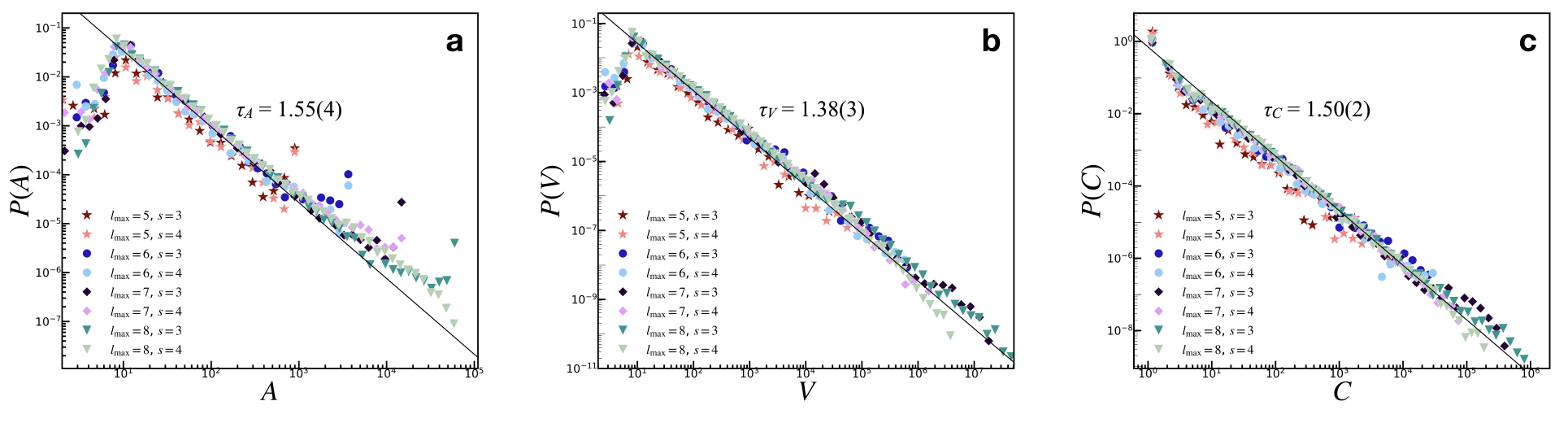

Figure 1: Avalanche size distributions showing power-law (PL) tails with exponent $3/2$ for the number of toppled sites, in line with empirical observations of neuronal avalanches.

The model produces characteristic avalanche size distributions, recovering the critical exponent of $3/2$ associated with neuronal avalanches. This is consistent across various avalanche measures, such as area (A), activation (V), and toppled sites count (C), confirming that critical behavior is preserved even in the more complex network setup. The inclusion of Hebbian learning allows the model to capture dynamic adjustment of connectivity, thereby supporting ongoing criticality.
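A quick way to check an exponent such as $3/2$ on simulated avalanche sizes is a maximum-likelihood estimate. The sketch below uses the standard continuous power-law estimator $\hat{\alpha} = 1 + n / \sum_i \ln(x_i / x_{\min})$ and tests it on synthetic samples; the function names and the choice of `x_min` are assumptions made for illustration, and the continuous formula is only an approximation for integer-valued avalanche sizes.

```python
import numpy as np

def powerlaw_mle(samples, x_min):
    """Continuous power-law MLE for the exponent alpha of p(x) ~ x^(-alpha), x >= x_min."""
    x = np.asarray(samples, dtype=float)
    x = x[x >= x_min]                      # only the tail above x_min is fitted
    return 1.0 + x.size / np.log(x / x_min).sum()

def sample_powerlaw(alpha, x_min, size, seed=0):
    """Draw synthetic power-law samples by inverse-transform sampling."""
    rng = np.random.default_rng(seed)
    u = rng.random(size)                   # uniform on [0, 1)
    return x_min * (1.0 - u) ** (-1.0 / (alpha - 1.0))

# Synthetic check: samples generated with alpha = 3/2 should give an estimate near 1.5.
synthetic = sample_powerlaw(alpha=1.5, x_min=1.0, size=100_000)
print("estimated exponent:", powerlaw_mle(synthetic, x_min=1.0))
```

On real simulation output, the estimate depends on where `x_min` is placed, so it is worth repeating the fit for several cutoffs.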

Edge Weight Distributions

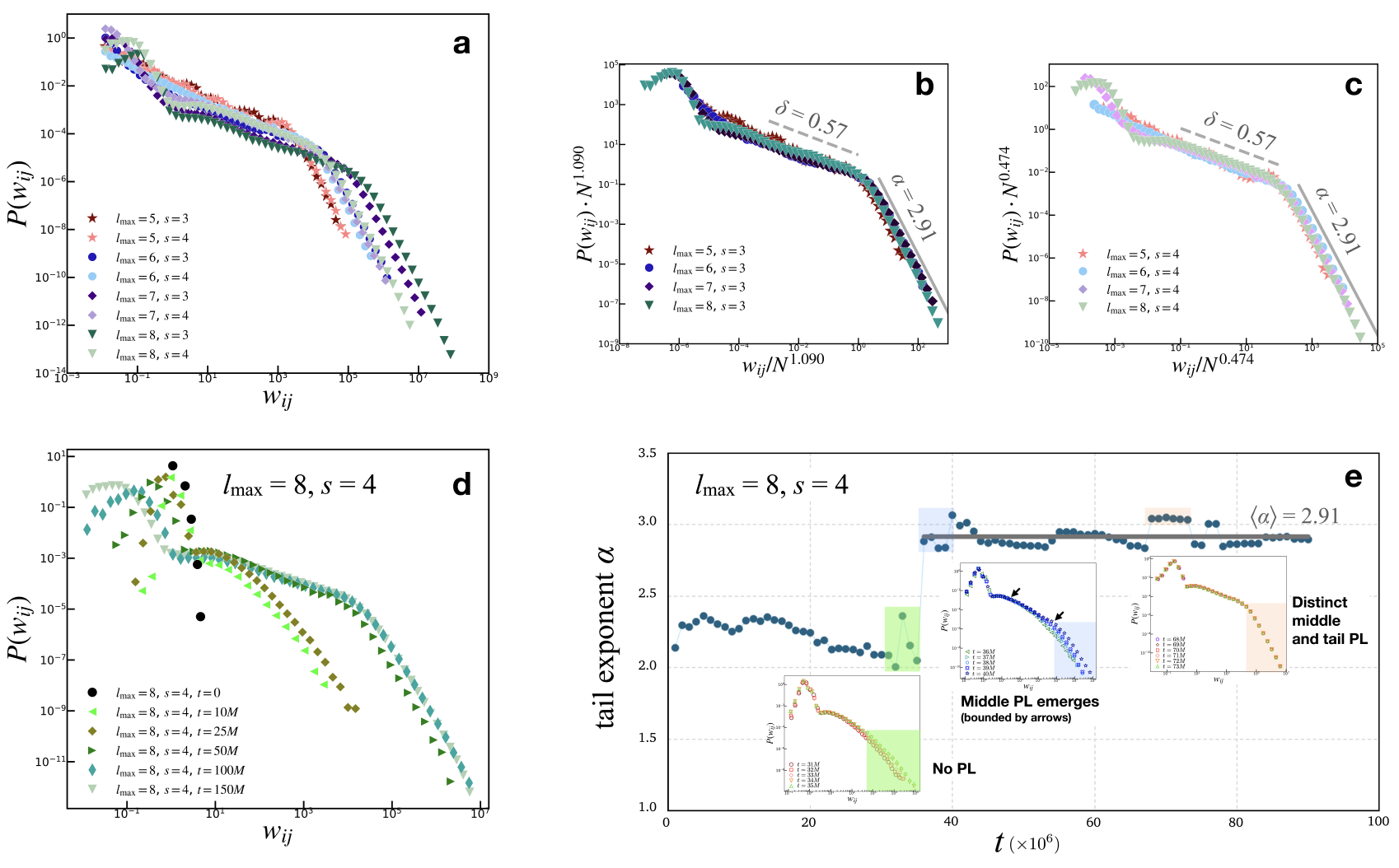

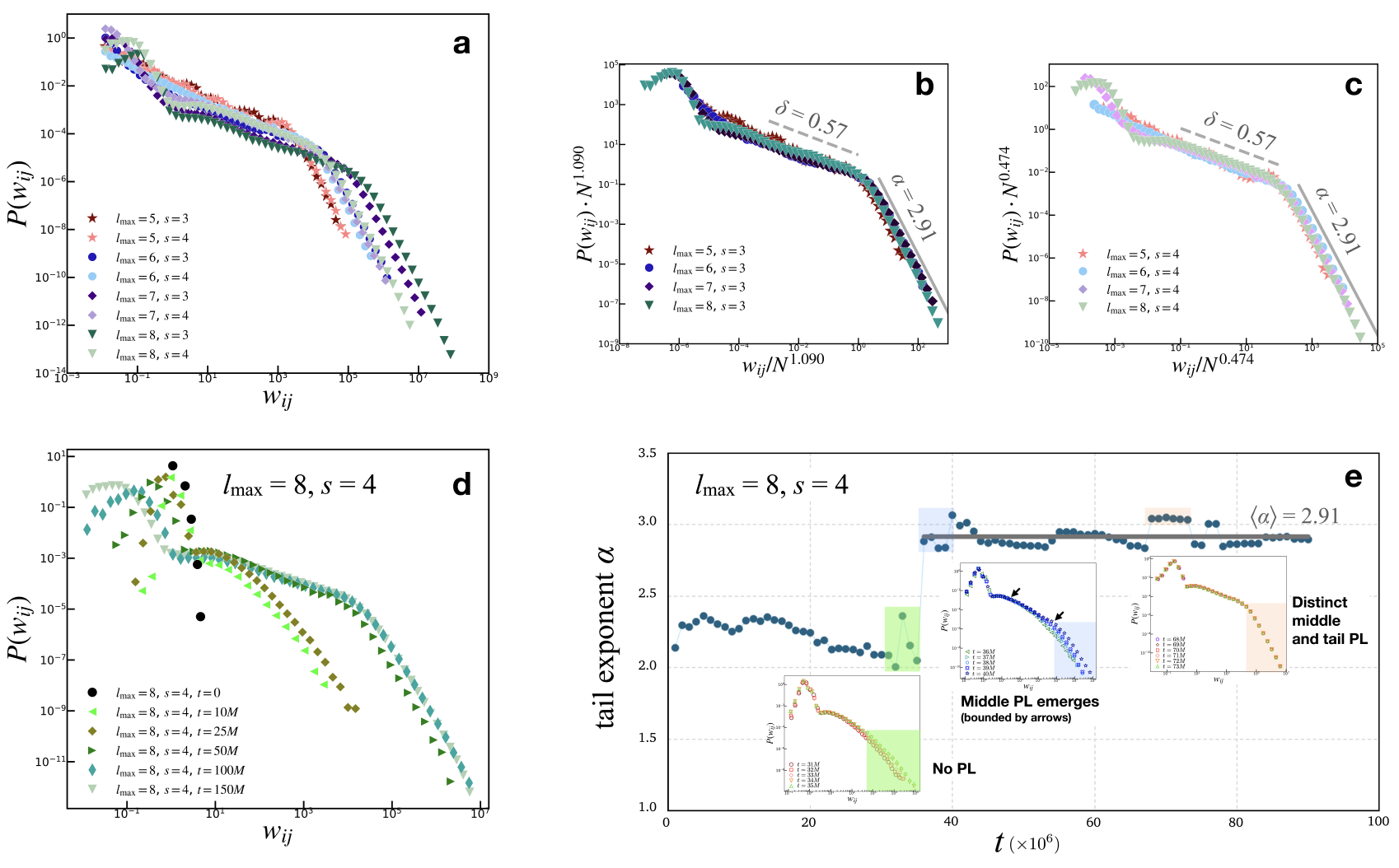

Figure 2: Global edge weight distributions showing distinct PL regimes in the intermediate and tail regions, suggesting universal scaling.

The model robustly reproduces PL distributions of edge weights with exponents close to those of empirical connectomes, suggesting that the structural properties of neuronal networks emerge from the underlying critical dynamics. The edge weights evolve to exhibit two distinct PL regimes: a gentle slope for intermediate weights and a steeper tail with an exponent approaching $3$, similar to empirical connectomes.
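When a distribution has more than one scaling regime, the slope has to be measured separately within each window of weights. The sketch below estimates a local log-log slope from a logarithmically binned histogram; the function name, window bounds, and bin count are hypothetical choices, and least-squares fits on log-log axes are known to be less reliable than likelihood-based estimates, so this is only a rough diagnostic.

```python
import numpy as np

def loglog_slope(values, lo, hi, n_bins=12):
    """Least-squares slope of a log-binned density on log-log axes, restricted to [lo, hi]."""
    values = np.asarray(values, dtype=float)
    edges = np.logspace(np.log10(lo), np.log10(hi), n_bins + 1)
    density, _ = np.histogram(values, bins=edges, density=True)
    centers = np.sqrt(edges[:-1] * edges[1:])   # geometric bin centers
    keep = density > 0                          # empty bins cannot be log-transformed
    slope, _ = np.polyfit(np.log10(centers[keep]), np.log10(density[keep]), 1)
    return slope

# Synthetic check: weights drawn from p(w) ~ w^(-3) should give a slope near -3.
rng = np.random.default_rng(0)
w = (1.0 - rng.random(200_000)) ** (-0.5)
print("slope in [1, 20]:", loglog_slope(w, lo=1.0, hi=20.0))
```

Applying the same function to an intermediate window and to a tail window of the same data is one way to compare the two regimes.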

Node Degree and Strength Distributions

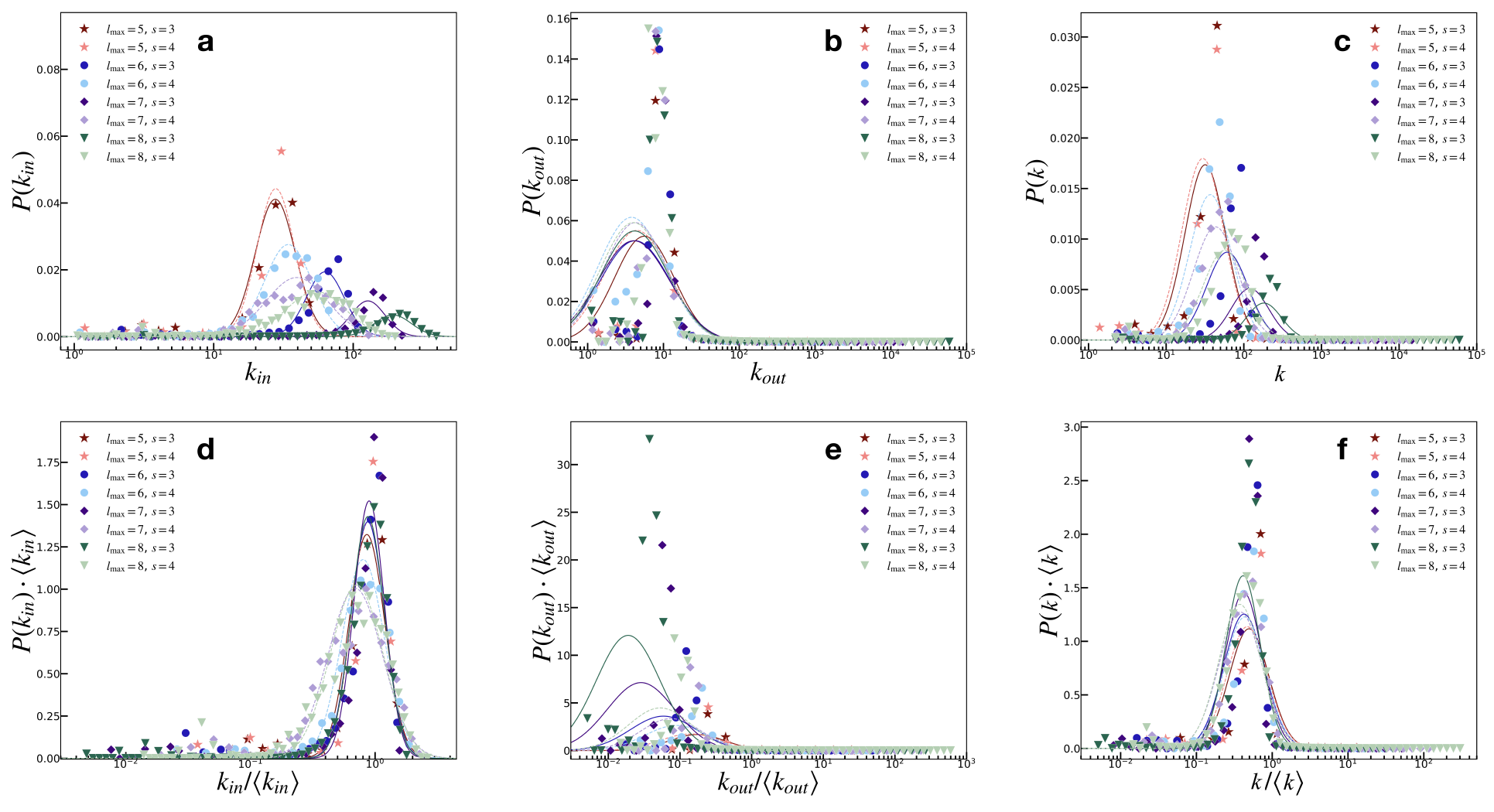

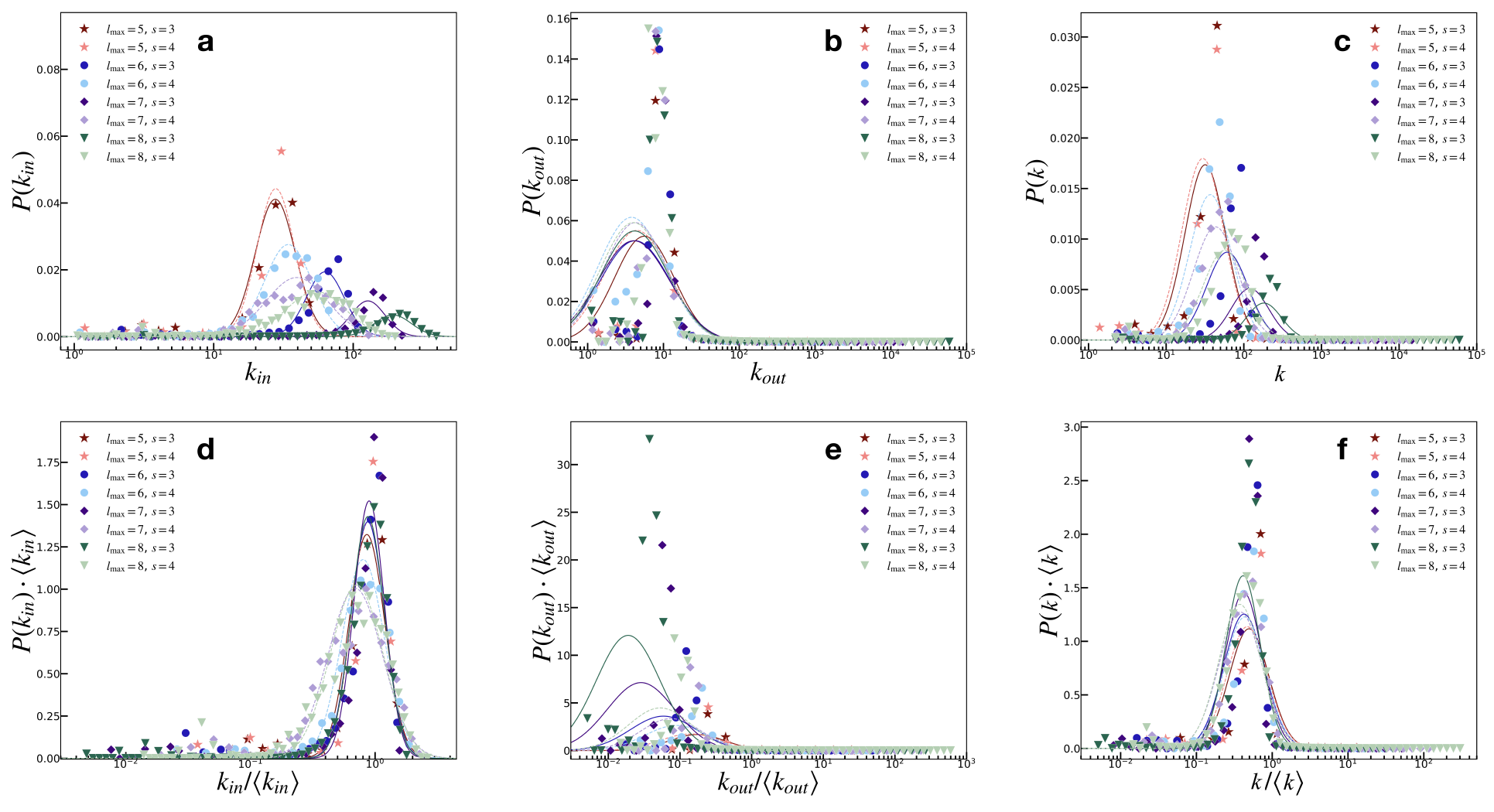

Figure 3: Node degree distributions demonstrate lognormal properties, consistent with empirical observations of brain networks.

Node degree and strength distributions follow lognormal trends, consistent with constraints on neuronal networks such as spatial and metabolic costs. The model suggests that lognormal distributions, rather than purely random or scale-free ones, arise naturally from structural constraints and the dynamic adjustments made by learning.
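Degree and strength can be read directly off a weight matrix, and a lognormal fit reduces to the mean and standard deviation of the logarithms. The sketch below assumes the convention that `weights[i, j]` is the weight of the edge from node `i` to node `j`; the function names are illustrative and not taken from the paper.

```python
import numpy as np

def degree_and_strength(weights):
    """In/out degree and strength for a directed weight matrix (rows are sources)."""
    w = np.asarray(weights, dtype=float)
    present = w > 0                        # an edge exists where the weight is positive
    return {
        "in_degree": present.sum(axis=0),
        "out_degree": present.sum(axis=1),
        "in_strength": w.sum(axis=0),
        "out_strength": w.sum(axis=1),
    }

def fit_lognormal(values):
    """Estimate lognormal parameters (mu, sigma) from the logs of the positive values."""
    x = np.asarray(values, dtype=float)
    logs = np.log(x[x > 0])                # zeros carry no information about a lognormal
    return logs.mean(), logs.std(ddof=1)

# Small worked example on a 3-node directed graph.
w = np.array([[0.0, 2.0, 0.0],
              [1.0, 0.0, 3.0],
              [0.0, 0.0, 0.0]])
stats = degree_and_strength(w)
print(stats["out_degree"], stats["in_strength"])
```

Comparing the fitted parameters against alternatives, such as a power-law fit on the same data, would be needed before concluding that a lognormal describes the distribution better.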

Discussion and Future Directions

The model successfully integrates SOC principles and Hebbian learning within a network topology representative of brain connectivity, replicating key statistical features observed in empirical connectome data. By combining simple rules of SOC with realistic learning and network structures, the approach offers insights into the adaptive and emergent properties of neuronal networks.

Further exploration may include additional biological factors, such as further forms of synaptic plasticity and long-range connections, to enhance realism and capture more complex brain dynamics. Accounting for reciprocity in link formation may also bring model predictions closer to empirical data, particularly for complex systems like human connectomes.

Conclusion

This framework provides a powerful tool for understanding the intersection of structure and dynamics in brain networks, demonstrating that statistical features of connectomes can emerge from simple criticality principles combined with biologically plausible learning and growth mechanisms. The model highlights the potential of self-organizing systems to reveal the fundamental operations underlying complex neural architectures.