- The paper presents a novel AI system that integrates fine-tuned vision-language models with a reasoning LLM to improve mild TBI prediction.

- It employs a multi-layered architecture that combines a data lake, an LLM agent layer, fine-tuned vision-LLMs, and a consensus-based reasoning LLM to process MRI scans.

- The fine-tuned models show improved predictive performance over a conventional ResNet50 image classifier, offering more reliable diagnostic support.

Proof-of-TBI: Integrating Vision LLMs and OpenAI-o3 LLM for TBI Diagnosis

Introduction

Mild Traumatic Brain Injury (TBI) is difficult to diagnose because its signs on medical imaging are often subtle and ambiguous. Conventional diagnostic workflows can be slow and error-prone, which motivates new approaches. The paper introduces "Proof-of-TBI," a medical diagnosis support system that combines fine-tuned vision-LLMs (VLMs) with the OpenAI-o3 reasoning LLM to support the diagnosis of mild TBI from MRI scans.

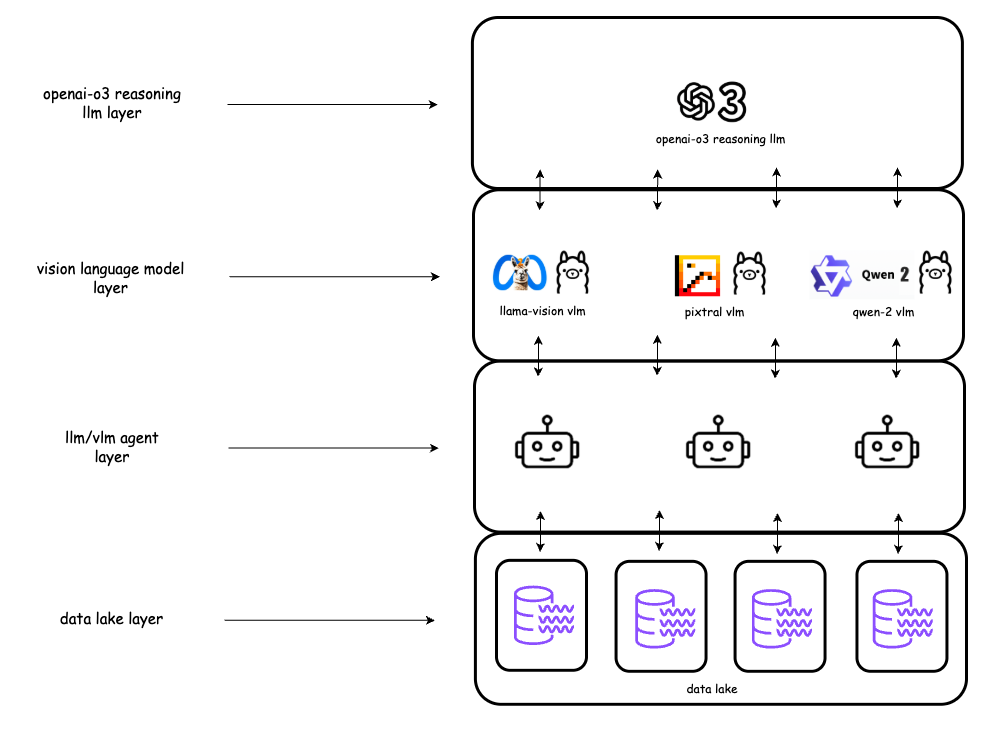

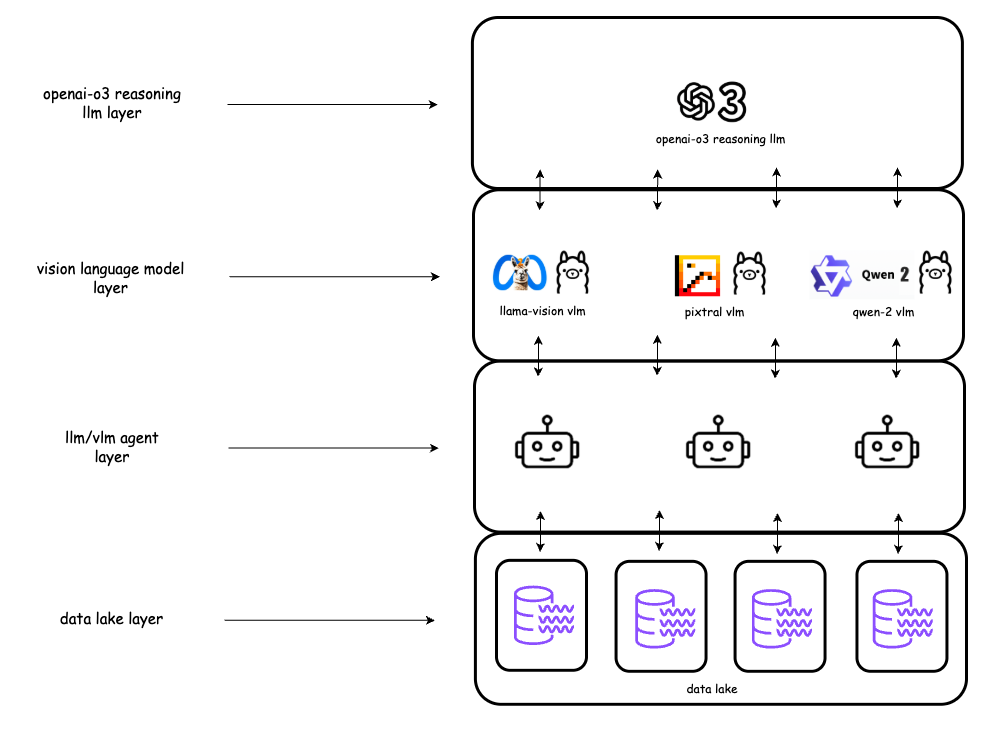

Figure 1: Proof-of-TBI platform layered architecture.

System Architecture

The architecture of the Proof-of-TBI platform is divided into four layers:

- Data Lake Layer: Centralized management and storage of MRI images tailored for vision-LLM training.

- LLM Agent Layer: Implements prompt engineering to coordinate interactions between VLMs and the reasoning LLM, facilitating the automated decision-making process.

- Vision LLM Layer: A consortium of specialized VLMs fine-tuned on TBI MRI scans to predict the presence of TBI. The models are served with Ollama, a framework for running open models locally (Figure 2).

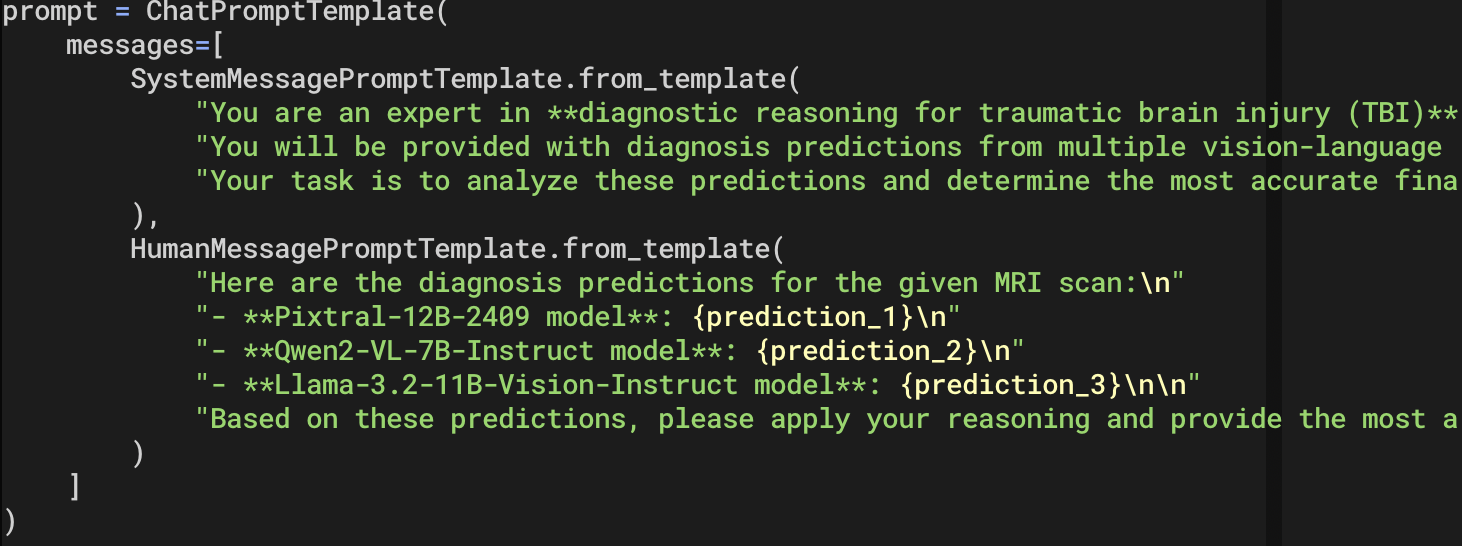

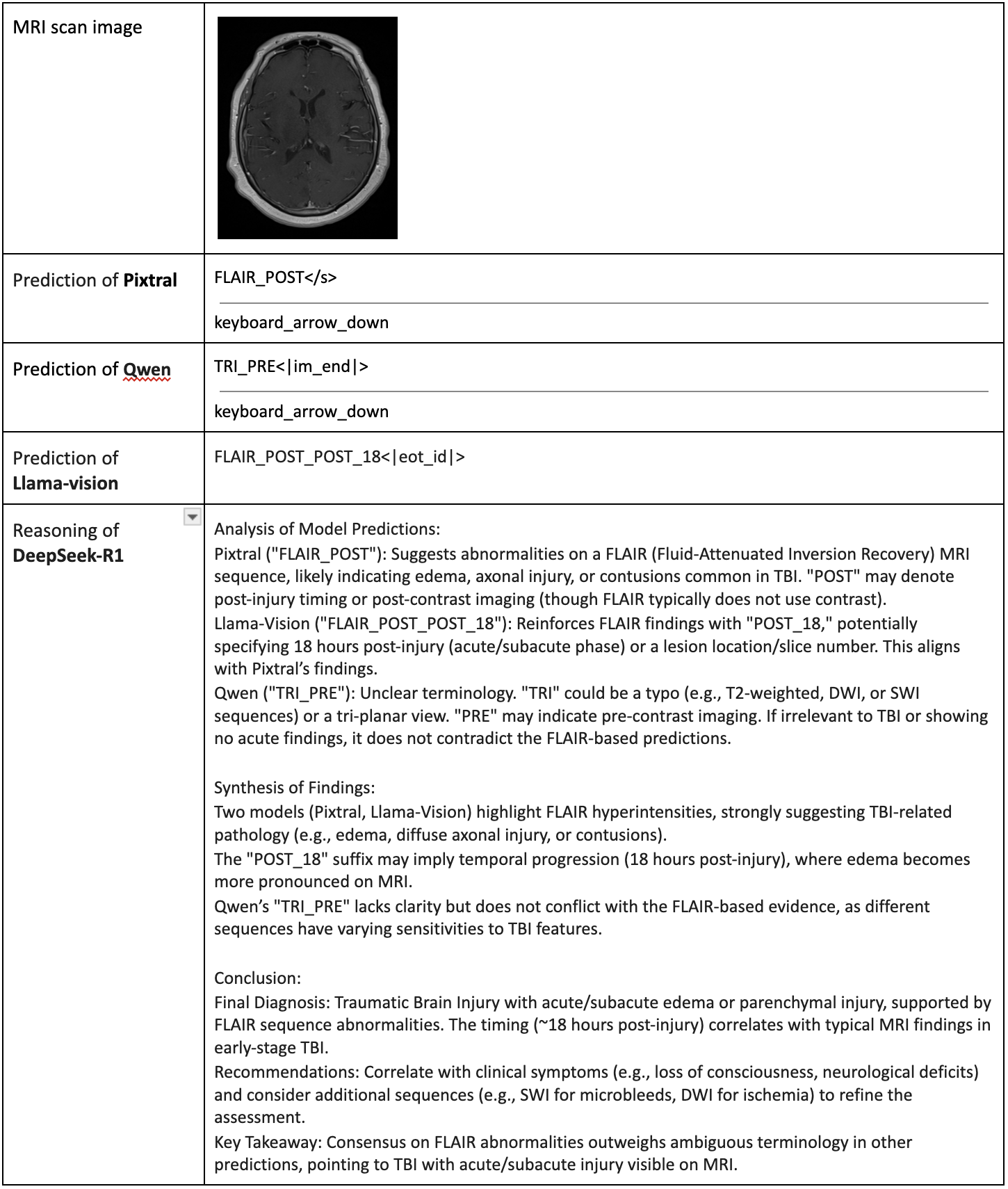

- Reasoning LLM Layer: The OpenAI-o3 model evaluates the VLM predictions and synthesizes them into a final diagnosis through step-by-step reasoning (Figure 3).
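The hand-off between the agent, vision LLM, and reasoning LLM layers can be sketched as a small orchestration function. This is a minimal illustration, not the authors' code. The names `VLMPrediction` and `run_pipeline`, and the plain-callable interfaces for the models, are hypothetical. The majority-vote reasoner in the usage block is only a stand-in for OpenAI-o3.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

@dataclass
class VLMPrediction:
    """One vision-LLM's answer for a scan (hypothetical field names)."""
    model: str
    label: str       # e.g. "TBI" or "No TBI"
    rationale: str   # free-text explanation returned by the model

# Hypothetical interface: a VLM is any callable mapping image bytes to (label, rationale).
VLM = Callable[[bytes], Tuple[str, str]]
# Hypothetical interface: the reasoning LLM maps all predictions to a final diagnosis.
Reasoner = Callable[[List[VLMPrediction]], str]

def run_pipeline(image: bytes, vlms: Dict[str, VLM], reasoner: Reasoner) -> str:
    """Agent layer: send the scan to every VLM, collect structured
    predictions, then let the reasoning LLM make the final call."""
    predictions = []
    for name, vlm in vlms.items():
        label, rationale = vlm(image)
        predictions.append(VLMPrediction(name, label, rationale))
    return reasoner(predictions)

if __name__ == "__main__":
    # Stubs standing in for real model calls.
    stub_vlms = {
        "llama-vision": lambda img: ("TBI", "stub rationale"),
        "pixtral": lambda img: ("TBI", "stub rationale"),
        "qwen2-vl": lambda img: ("No TBI", "stub rationale"),
    }

    def majority_vote(preds: List[VLMPrediction]) -> str:
        # Simple stand-in for OpenAI-o3: return the most common label.
        labels = [p.label for p in preds]
        return max(set(labels), key=labels.count)

    print(run_pipeline(b"scan-bytes", stub_vlms, majority_vote))  # prints "TBI"
```

Keeping the reasoner as a parameter lets a real LLM call replace the stub without changing how the agent layer collects predictions.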

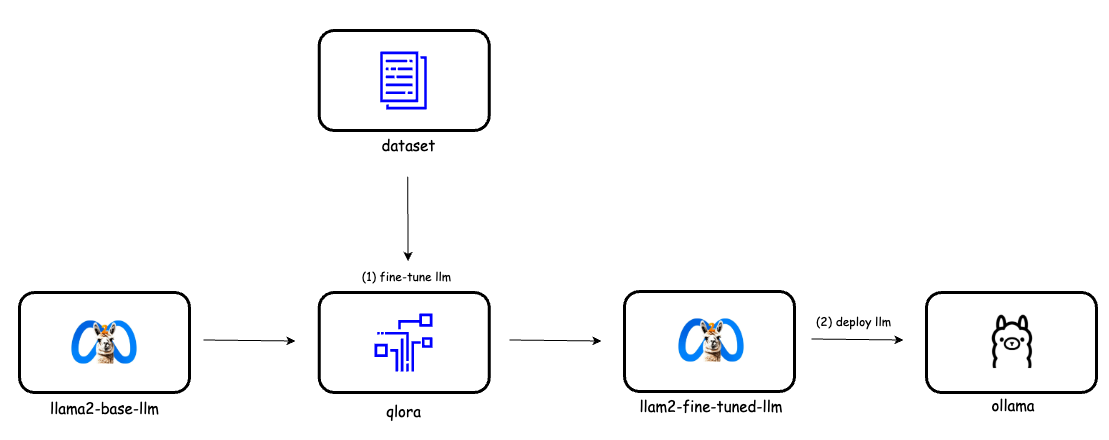

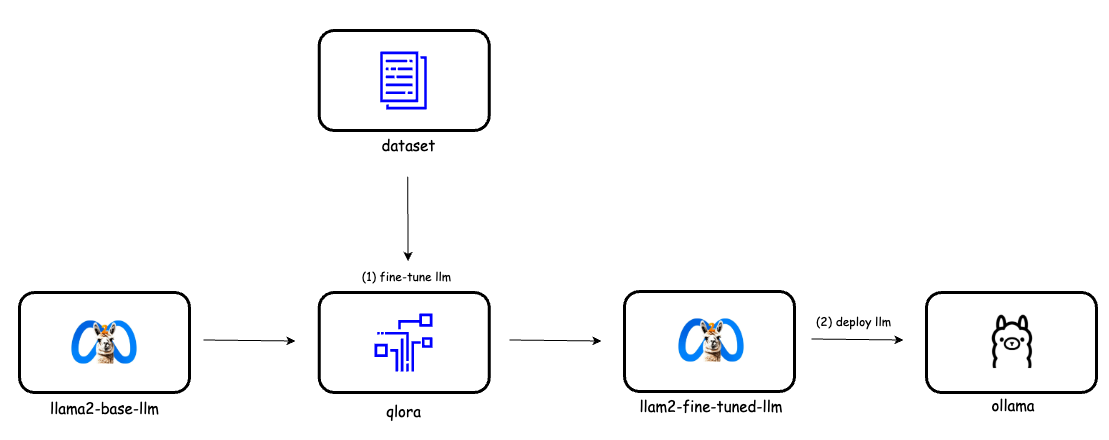

Figure 2: Fine-tuning vision LLMs with QLoRA and deploying them with Ollama.

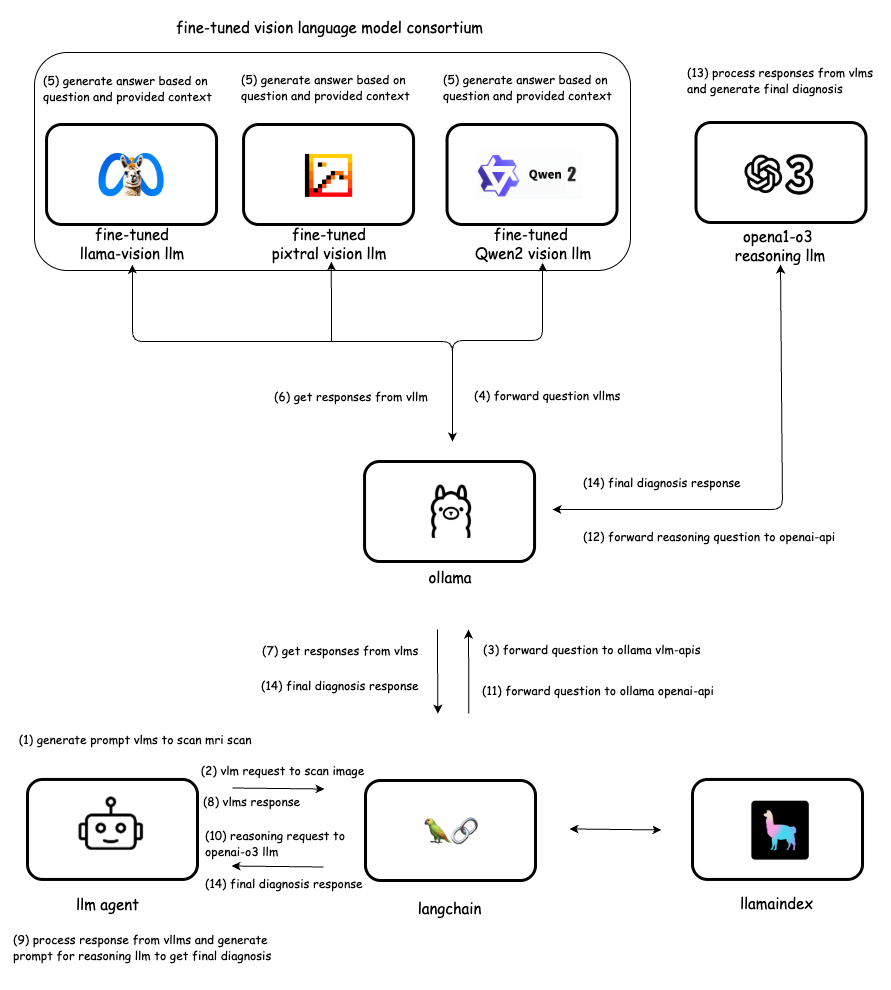

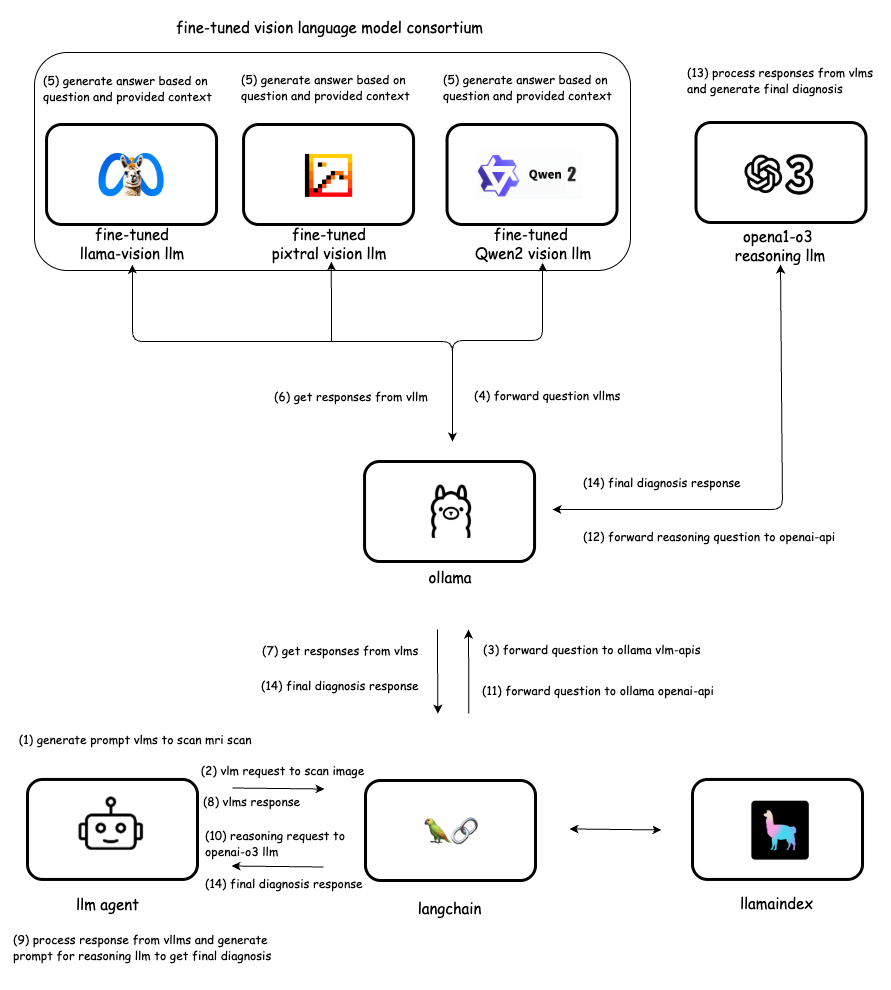

Figure 3: Vision LLM integration flow with Ollama LLM-API, LlamaIndex, LangChain and Smart Contracts.
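Figure 3 routes VLM calls through the Ollama LLM-API. The sketch below calls Ollama's `/api/generate` REST endpoint directly. It does not use the LlamaIndex or LangChain wrappers shown in the figure. The sketch assumes a local Ollama server on its default port (11434). The model tag `tbi-llama-vision` is hypothetical and stands in for whatever name a fine-tuned model is registered under.

```python
import base64
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

def build_generate_request(model: str, prompt: str, image_bytes: bytes) -> dict:
    """Build a non-streaming /api/generate payload with one base64-encoded image."""
    return {
        "model": model,
        "prompt": prompt,
        "images": [base64.b64encode(image_bytes).decode("ascii")],
        "stream": False,  # ask for a single JSON object instead of a token stream
    }

def query_vlm(model: str, prompt: str, image_bytes: bytes, url: str = OLLAMA_URL) -> str:
    """POST the payload to a running Ollama server and return the generated text."""
    payload = json.dumps(build_generate_request(model, prompt, image_bytes)).encode("utf-8")
    req = urllib.request.Request(url, data=payload,
                                 headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["response"]

# Example (requires a running Ollama server and a hypothetical model tag):
# with open("scan.png", "rb") as f:
#     print(query_vlm("tbi-llama-vision", "Does this MRI scan show signs of TBI?", f.read()))
```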

The platform has four primary functions:

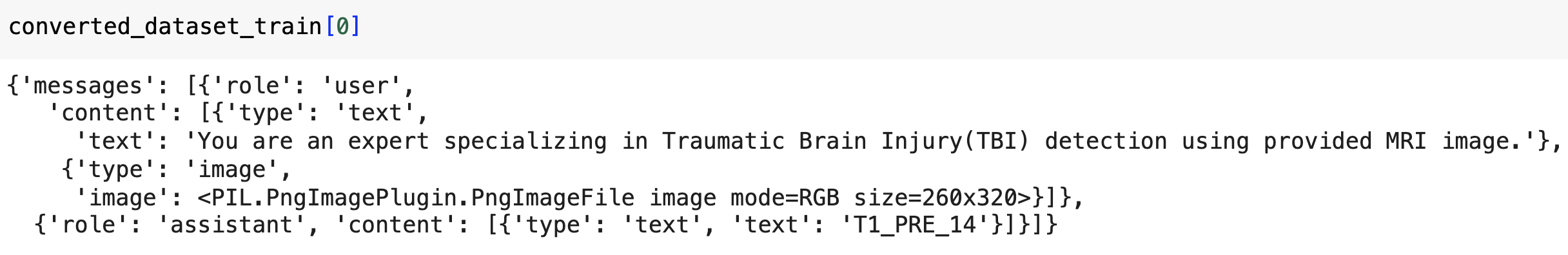

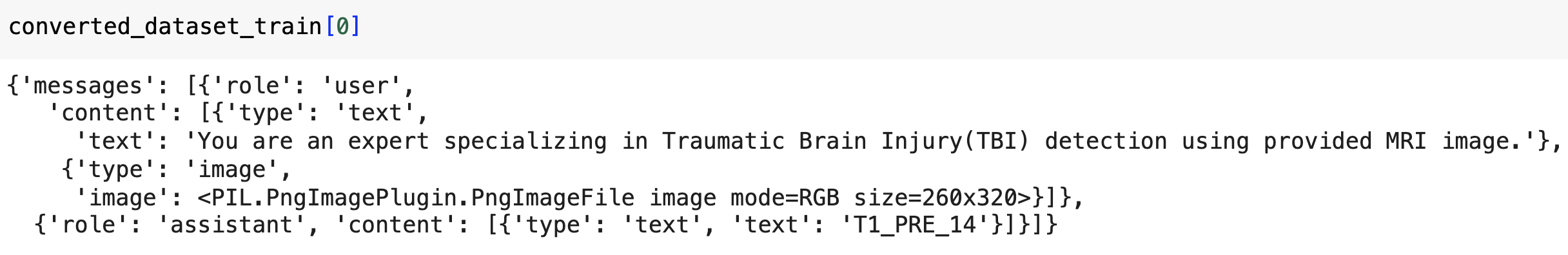

- Data Lake Setup: Stores labeled MRI scan datasets, including the annotations needed for model training and adaptation (Figure 4).

- Vision LLM Fine-Tuning: The VLMs are fine-tuned on TBI MRI scans with the Unsloth library using QLoRA. QLoRA trains small low-rank adapters on top of a 4-bit quantized base model, which reduces the memory and compute required for fine-tuning.

- TBI Prediction by Fine-Tuned VLMs: Each fine-tuned VLM predicts whether TBI is present in an MRI scan. The predictions are then aggregated and structured as input to the reasoning step.

- Final TBI Diagnosis Prediction: OpenAI-o3 consolidates the VLM outputs into a final diagnosis through consensus-based reasoning.
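QLoRA saves resources in two ways, and the arithmetic can be checked directly. First, LoRA freezes a weight matrix of shape d x k and trains only two factors, B (d x r) and A (r x k), which is r(d + k) parameters. Second, QLoRA stores the frozen base weights in 4 bits instead of 16. The layer size and rank below are illustrative assumptions, not values from the paper. The 11B figure is the approximate size of Llama-3.2-11B-Vision-Instruct.

```python
def lora_trainable_params(d: int, k: int, r: int) -> int:
    """Parameters in a rank-r LoRA adapter (B: d x r, A: r x k) for a d x k weight."""
    return r * (d + k)

def weight_memory_gb(n_params: float, bits: int) -> float:
    """Approximate storage for model weights only (ignores activations,
    optimizer state and quantization-constant overhead)."""
    return n_params * bits / 8 / 1e9

if __name__ == "__main__":
    d = k = 4096   # illustrative projection size, not taken from the paper
    r = 16         # illustrative LoRA rank
    full = d * k
    lora = lora_trainable_params(d, k, r)
    print(f"full: {full:,}  LoRA: {lora:,}  fraction: {lora / full:.4%}")
    n = 11e9       # roughly the size of Llama-3.2-11B-Vision-Instruct
    print(f"16-bit: {weight_memory_gb(n, 16):.1f} GB, 4-bit: {weight_memory_gb(n, 4):.1f} GB")
```

With these values, the adapter is under 1% of the layer's parameters. Storing weights in 4 bits cuts the footprint of an 11B-parameter model from about 22 GB to about 5.5 GB.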

Figure 4: Data format required by the Unsloth library for fine-tuning the vision LLM.
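Figure 4 shows the exact format the authors used. As a rough sketch, one labeled scan can be wrapped in the chat-style `messages` layout used in Unsloth's public vision fine-tuning examples. In that layout, a user turn holds the instruction text plus the image, and the assistant turn holds the target answer. The instruction wording and the `to_conversation` helper are hypothetical.

```python
from typing import Any, Dict

# Hypothetical instruction text; the authors' actual prompt is not reproduced here.
INSTRUCTION = ("Does this MRI scan show signs of mild traumatic brain injury? "
               "Answer 'TBI' or 'No TBI'.")

def to_conversation(image: Any, label: str) -> Dict[str, Any]:
    """Wrap one labeled MRI scan as a user/assistant exchange.
    `image` would normally be a PIL image; any object works for the structure."""
    return {
        "messages": [
            {"role": "user",
             "content": [
                 {"type": "text", "text": INSTRUCTION},
                 {"type": "image", "image": image},
             ]},
            {"role": "assistant",
             "content": [{"type": "text", "text": label}]},
        ]
    }

# Usage: dataset = [to_conversation(img, lbl) for img, lbl in labeled_scans]
```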

Implementation and Evaluation

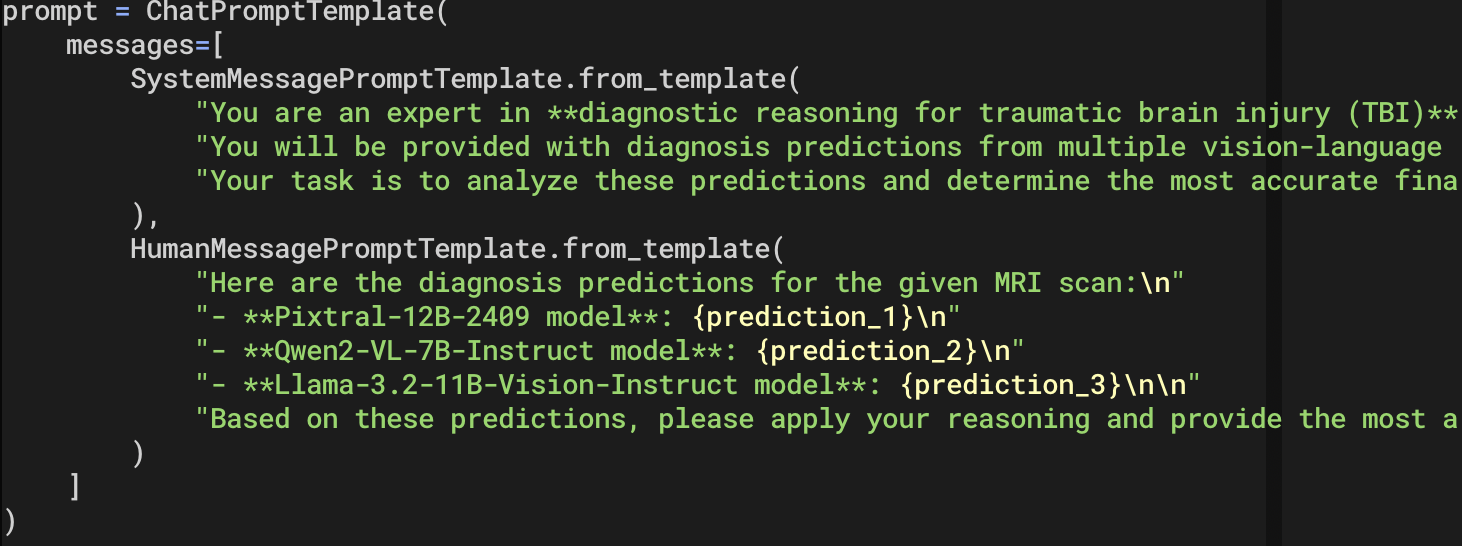

The implementation, developed in collaboration with the U.S. Army Medical Research team, uses three fine-tuned VLMs: Llama-3.2-11B-Vision-Instruct, Pixtral-12B-2409, and Qwen2-VL-7B-Instruct. Figure 5 shows the prompt given to the OpenAI-o3 reasoning LLM, which produces the final diagnosis from the VLMs' outputs.

Figure 5: Prompt for OpenAI-o3 reasoning LLM for final diagnosis reasoning.
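The reasoning step needs the VLM outputs packed into a single prompt. The builder below is a minimal sketch with a hypothetical template. It is not the prompt shown in Figure 5. It returns a list of chat messages, each with a role and content.

```python
from typing import Dict, List

def build_reasoning_messages(predictions: Dict[str, str]) -> List[Dict[str, str]]:
    """Turn per-model VLM outputs into a chat prompt for the reasoning LLM.
    The wording is a hypothetical template, not the prompt in Figure 5."""
    lines = [f"- {model}: {answer}" for model, answer in predictions.items()]
    user_prompt = (
        "Several fine-tuned vision models analyzed the same brain MRI scan "
        "for mild traumatic brain injury (TBI). Their outputs are:\n"
        + "\n".join(lines)
        + "\nWeigh the agreements and disagreements between the models, explain "
          "your reasoning, and give a final answer of 'TBI' or 'No TBI'."
    )
    return [{"role": "user", "content": user_prompt}]
```

The returned list has the shape expected by OpenAI's Chat Completions API. For example, it could be passed as `messages=` to `client.chat.completions.create(model="o3", ...)` in the official Python SDK, assuming the account has access to that model.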

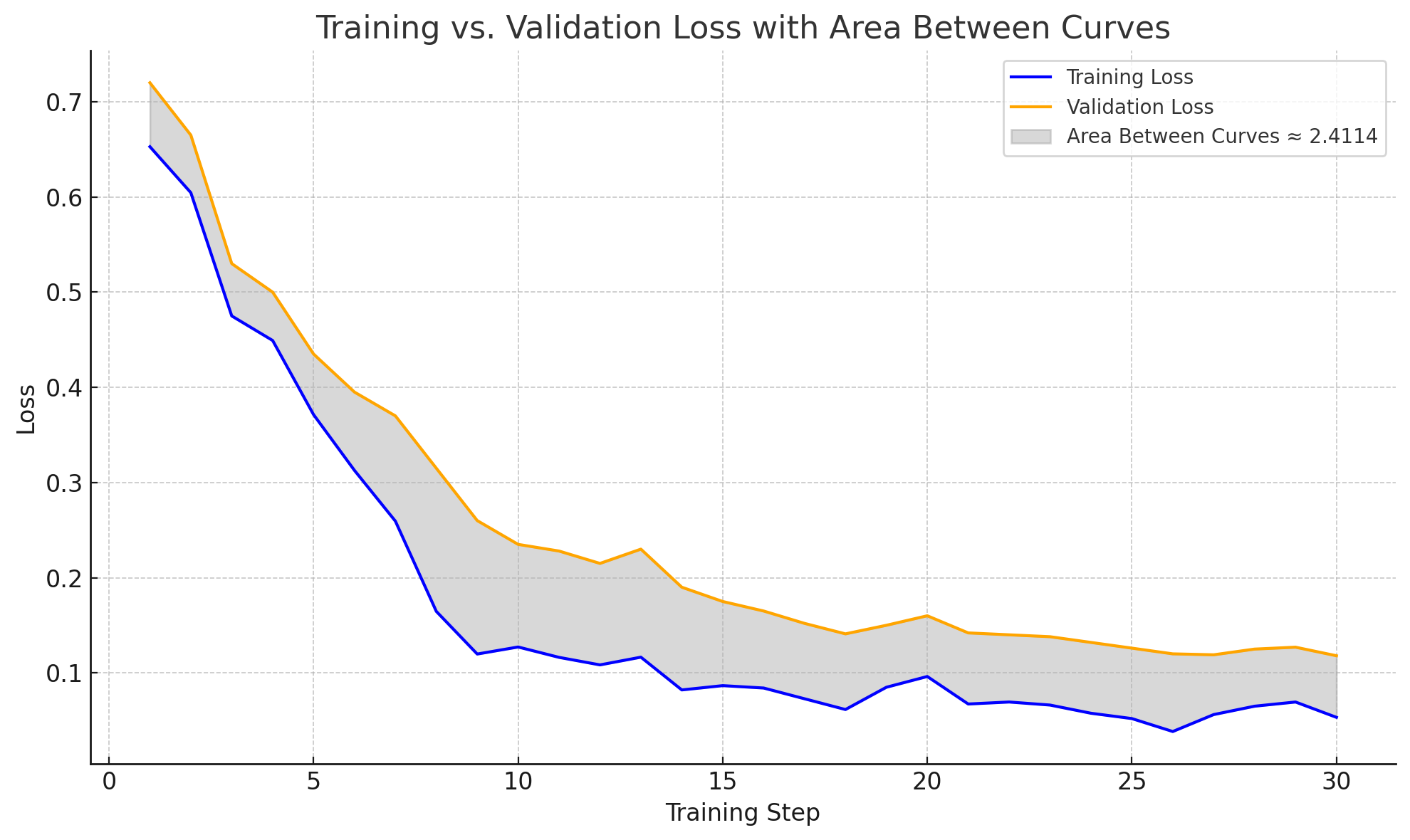

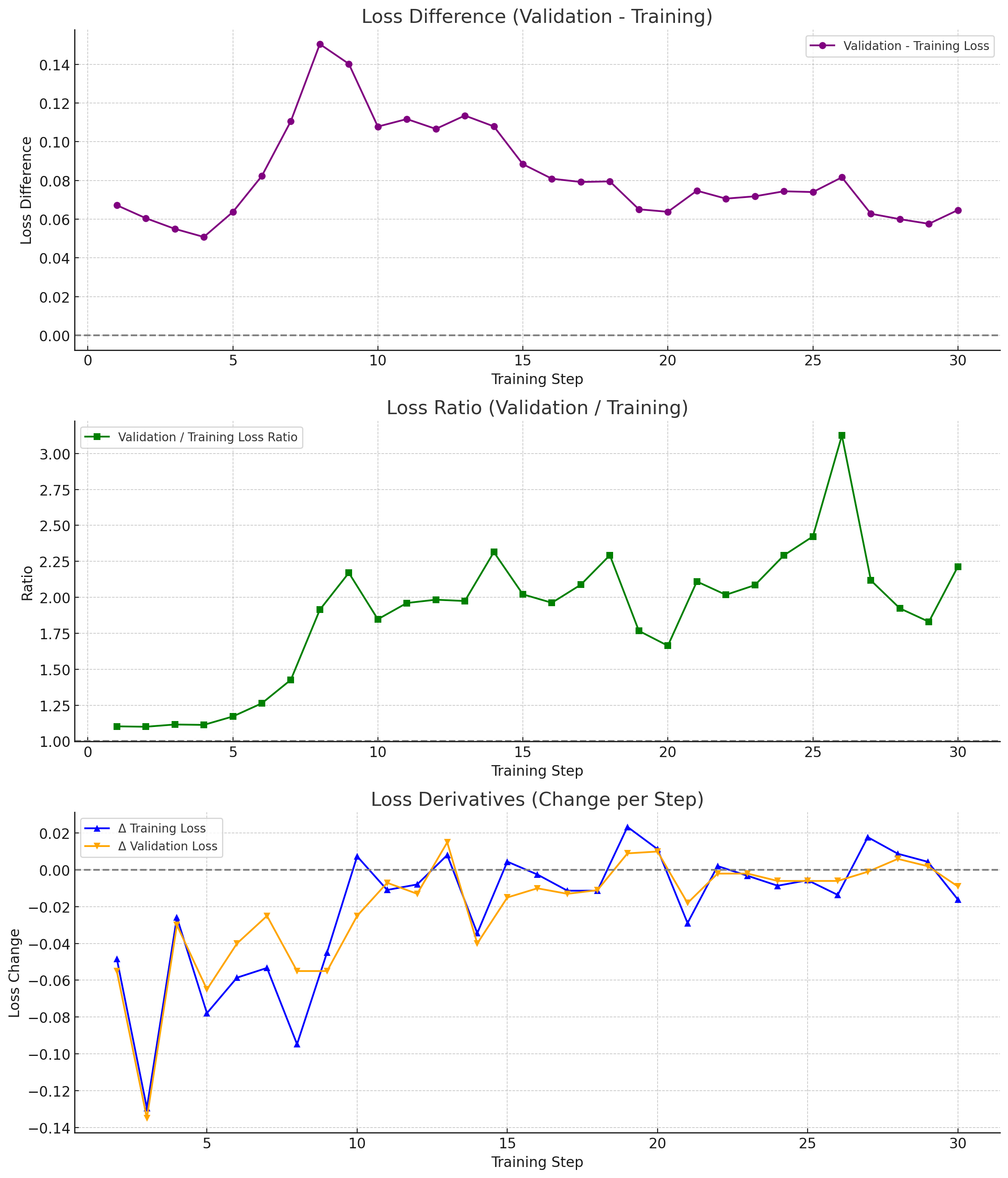

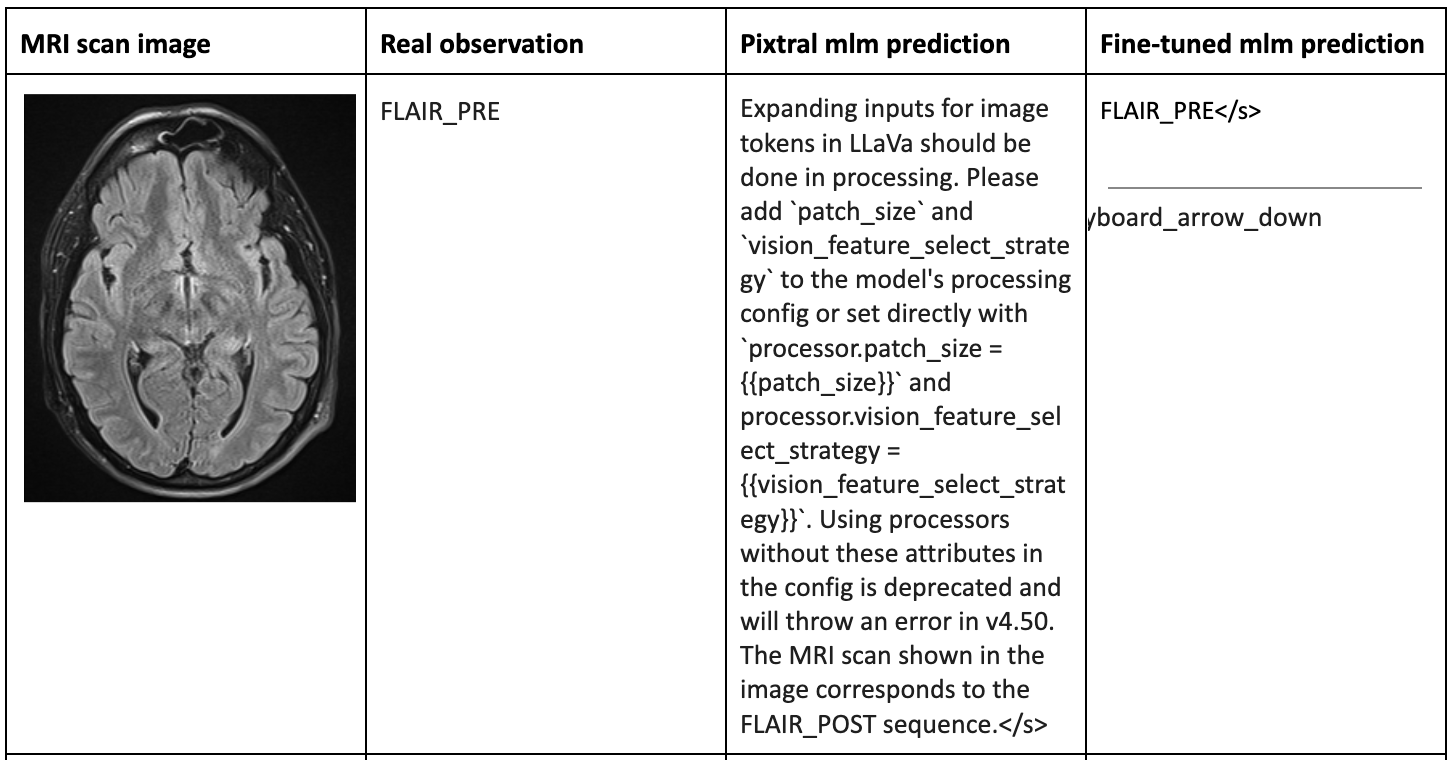

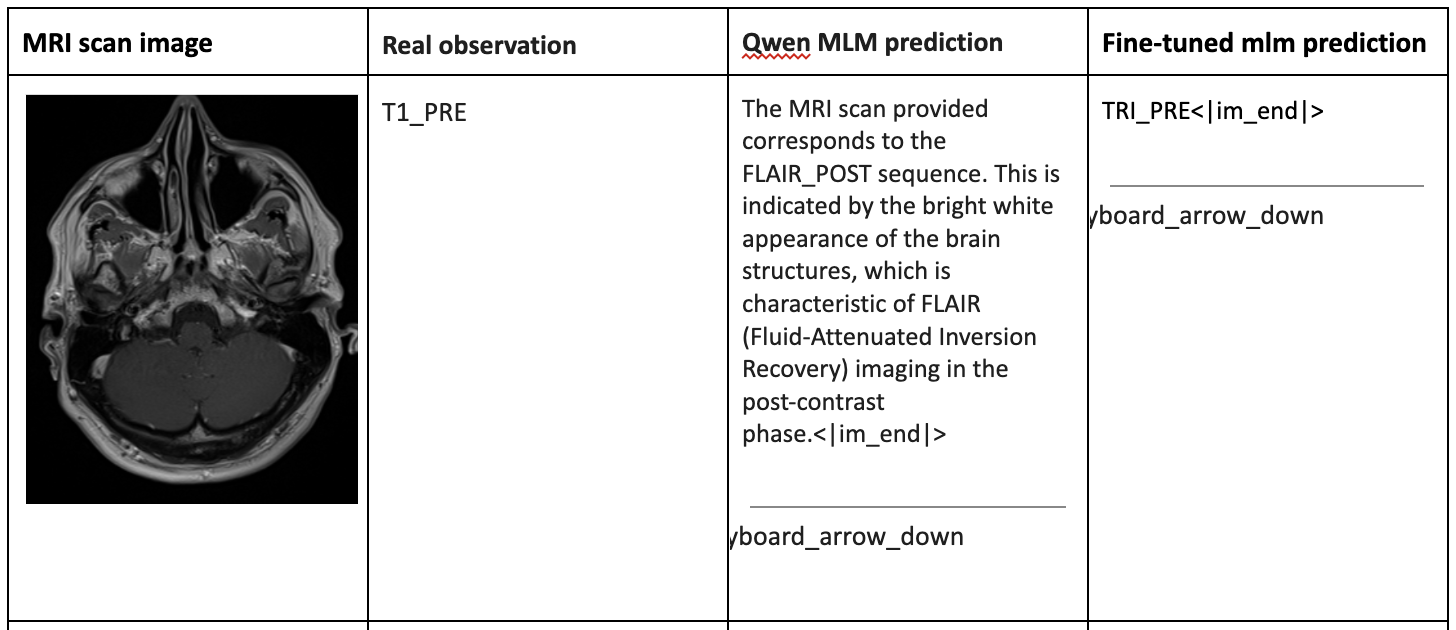

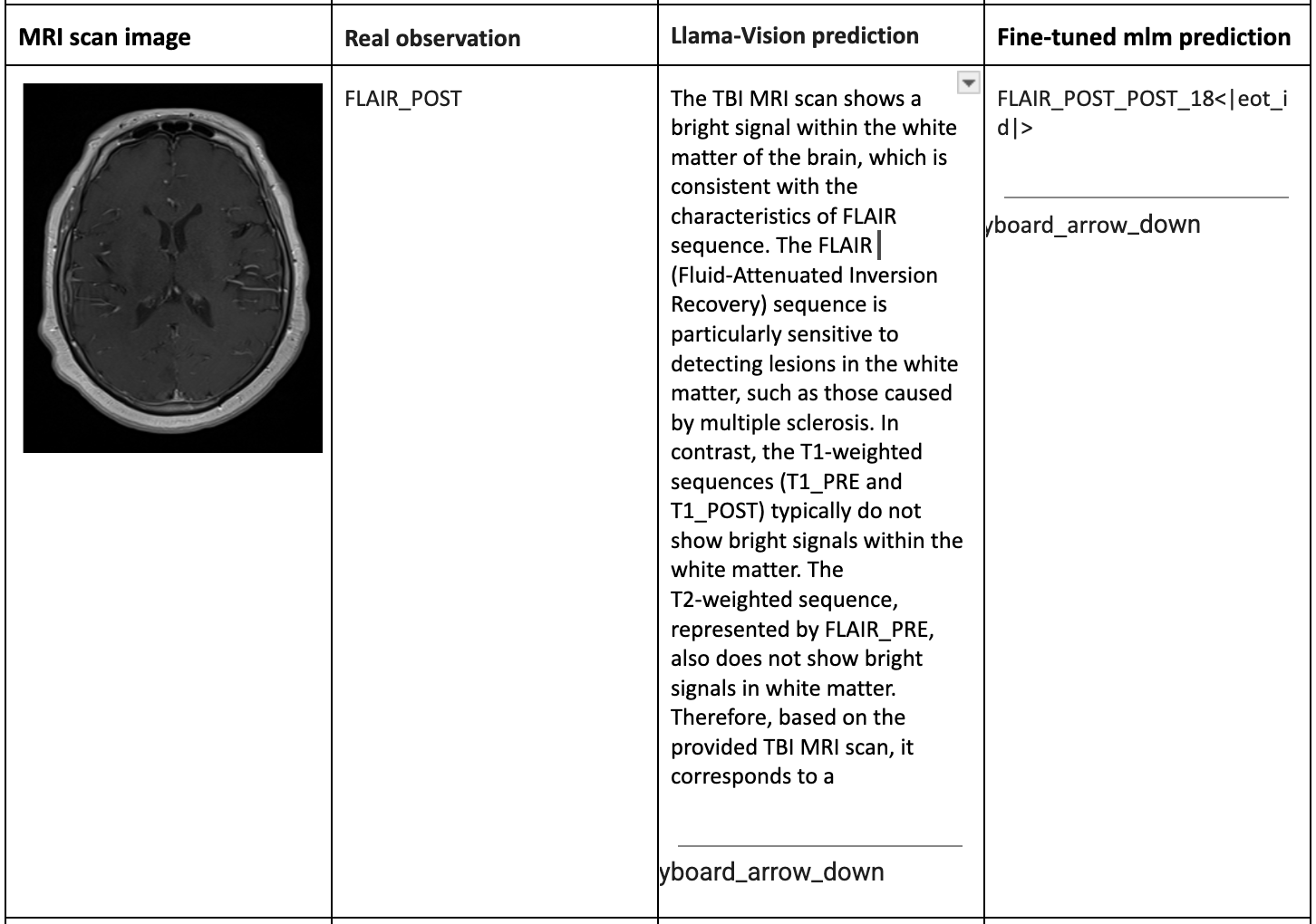

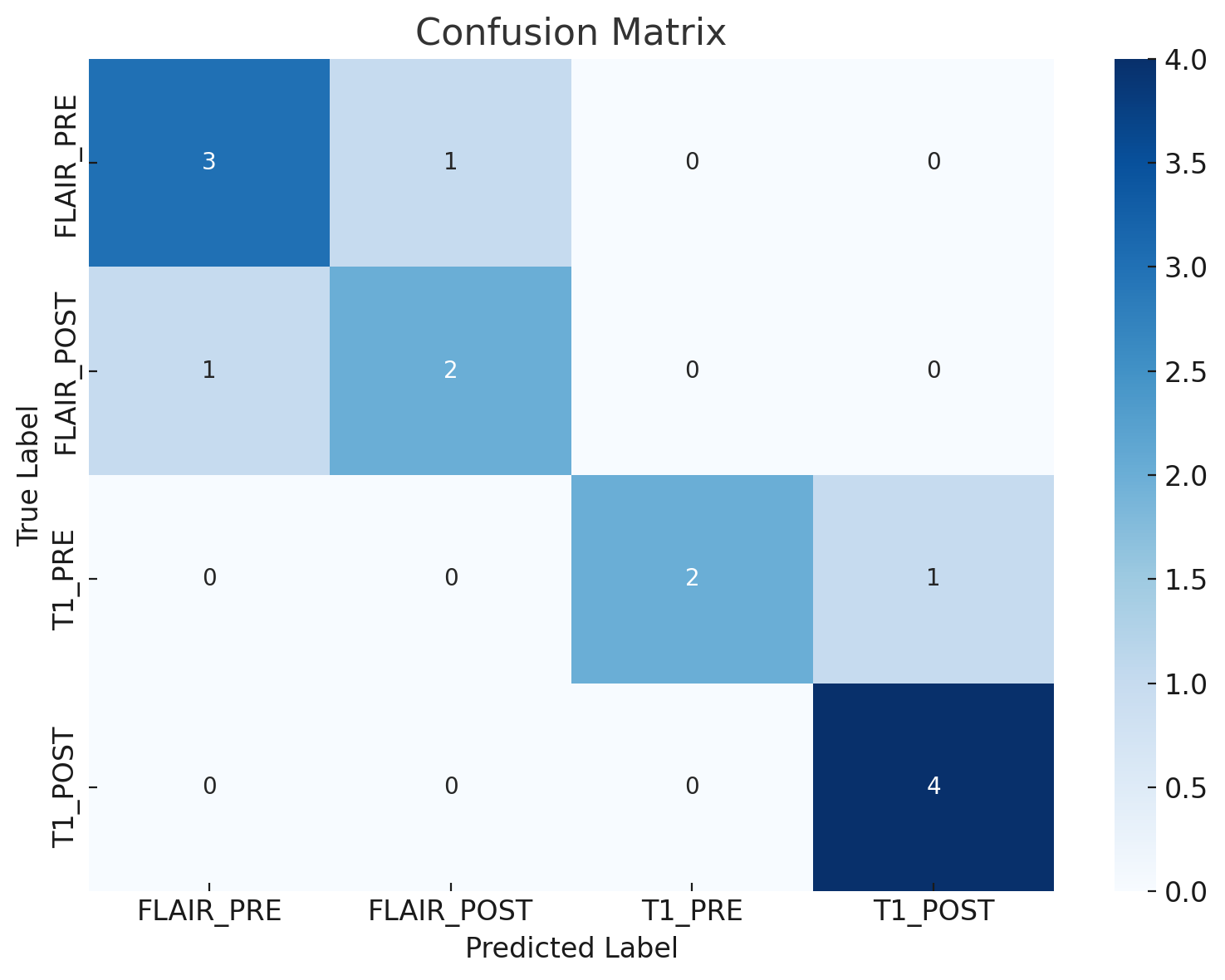

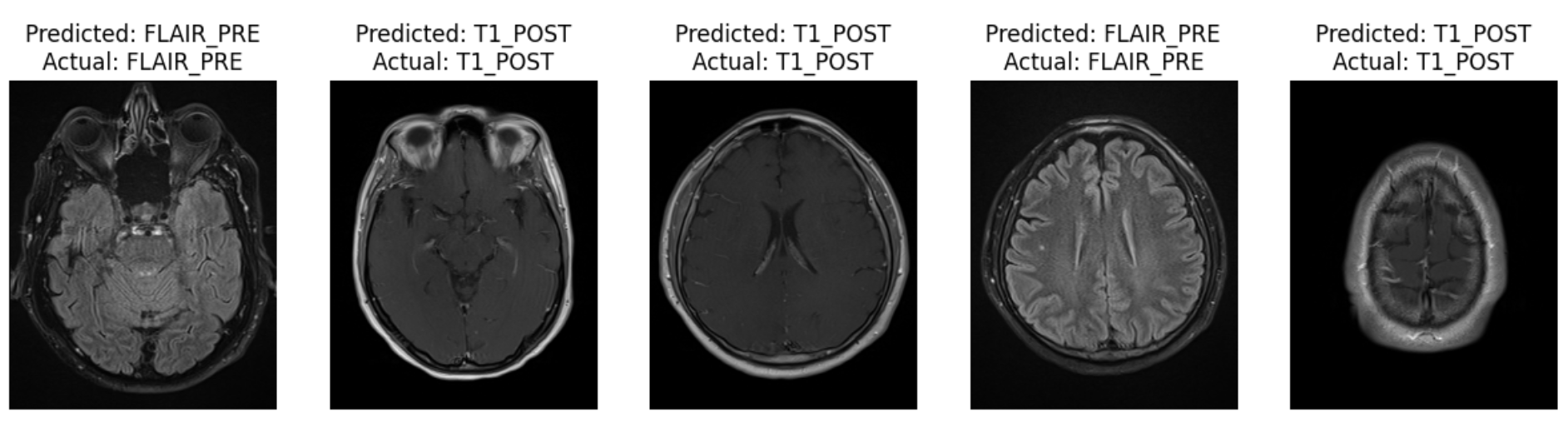

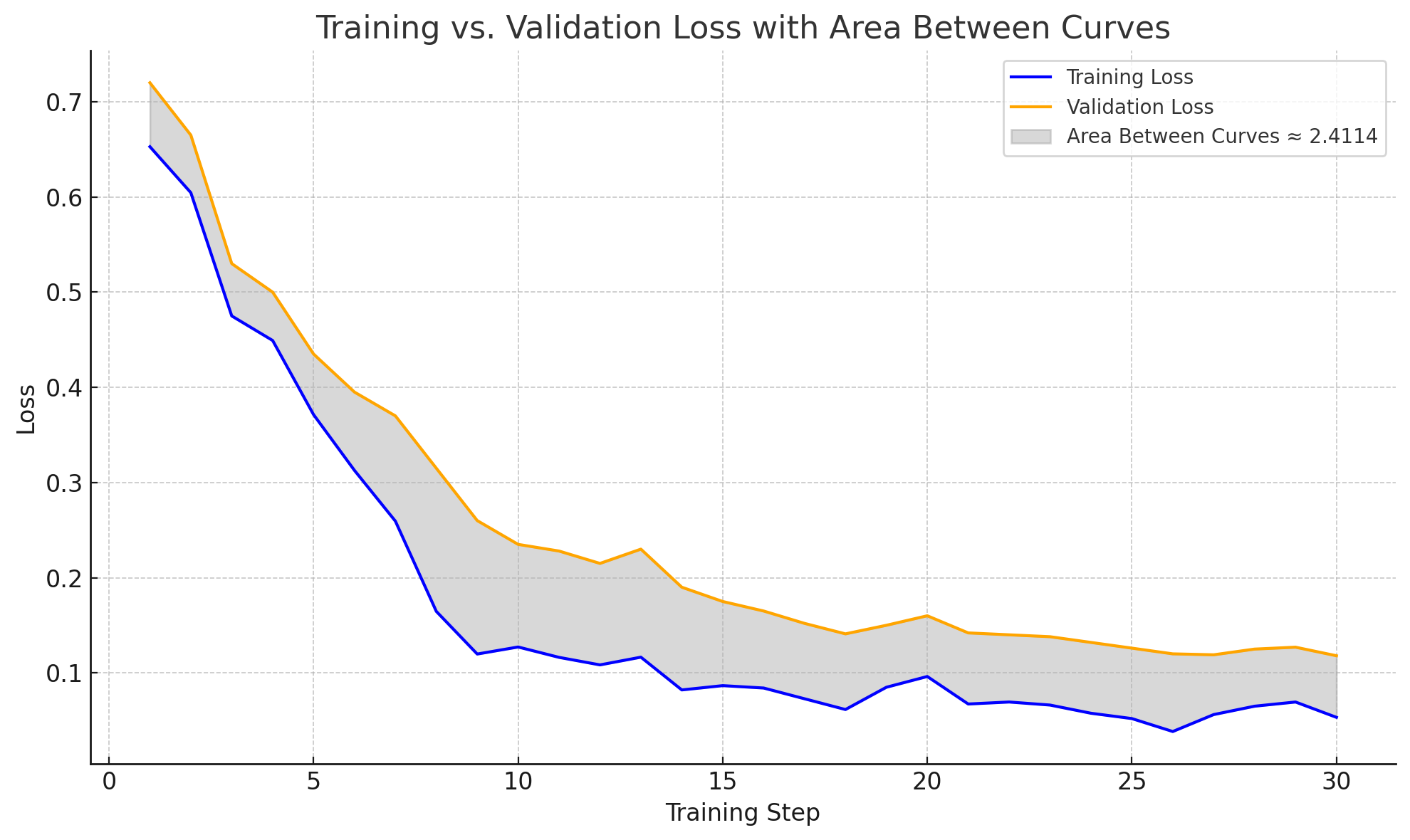

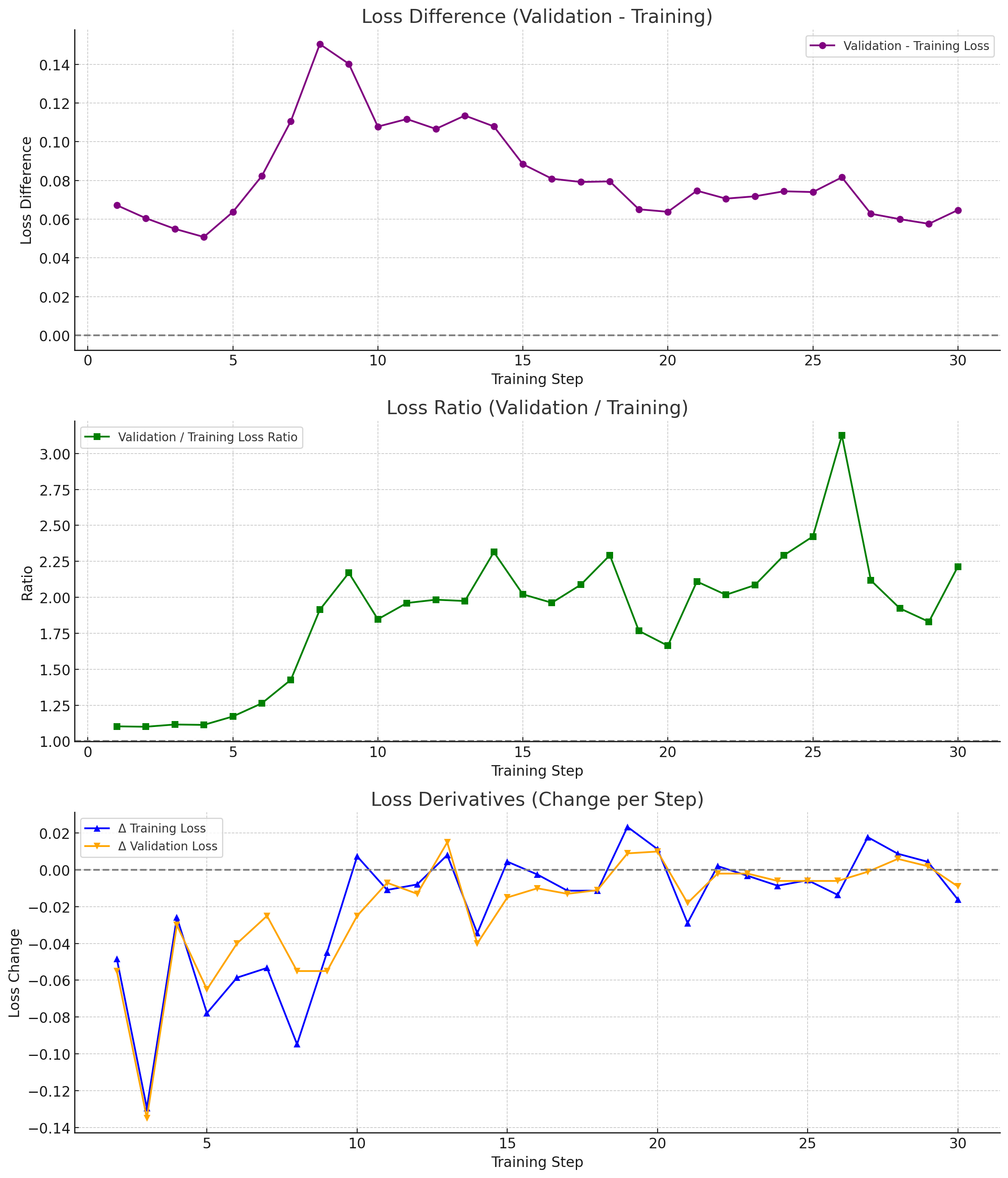

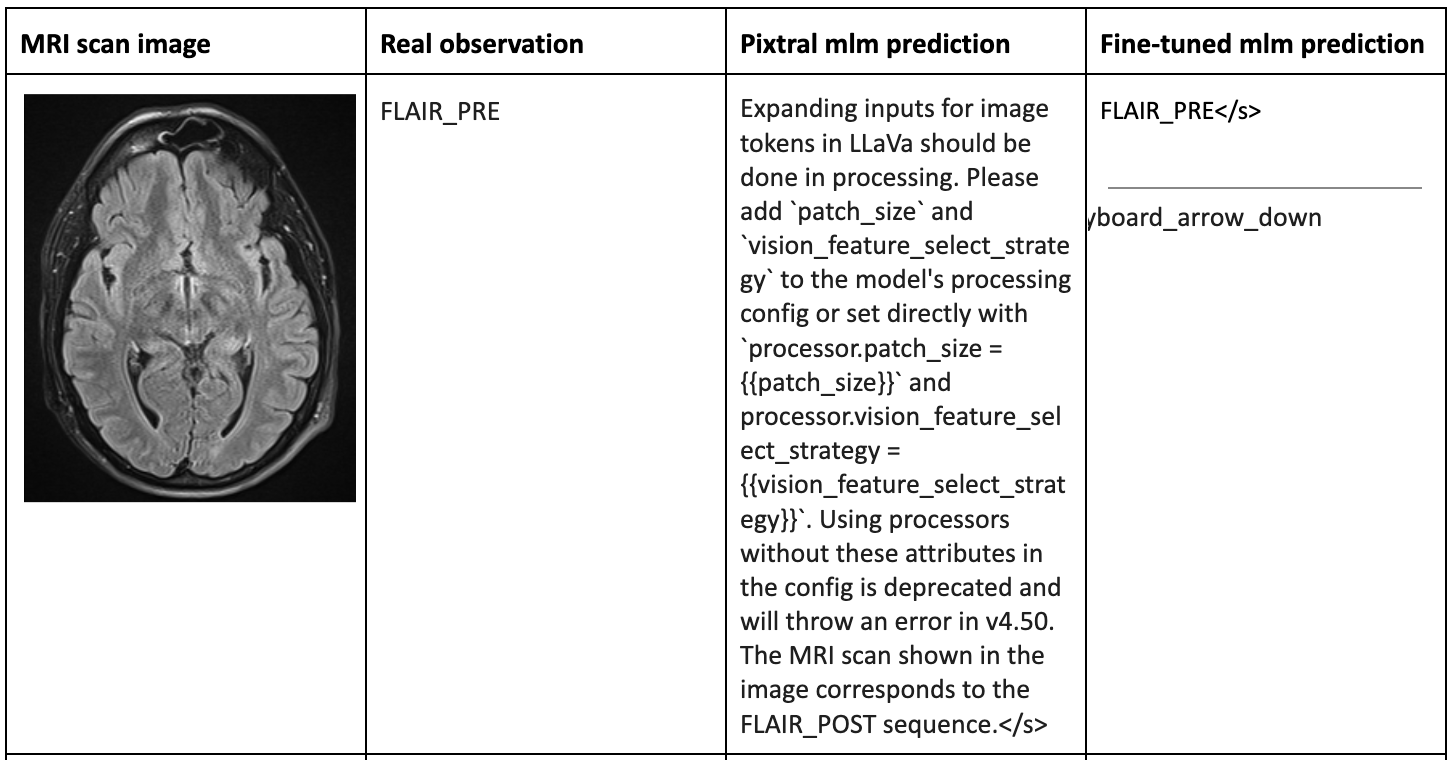

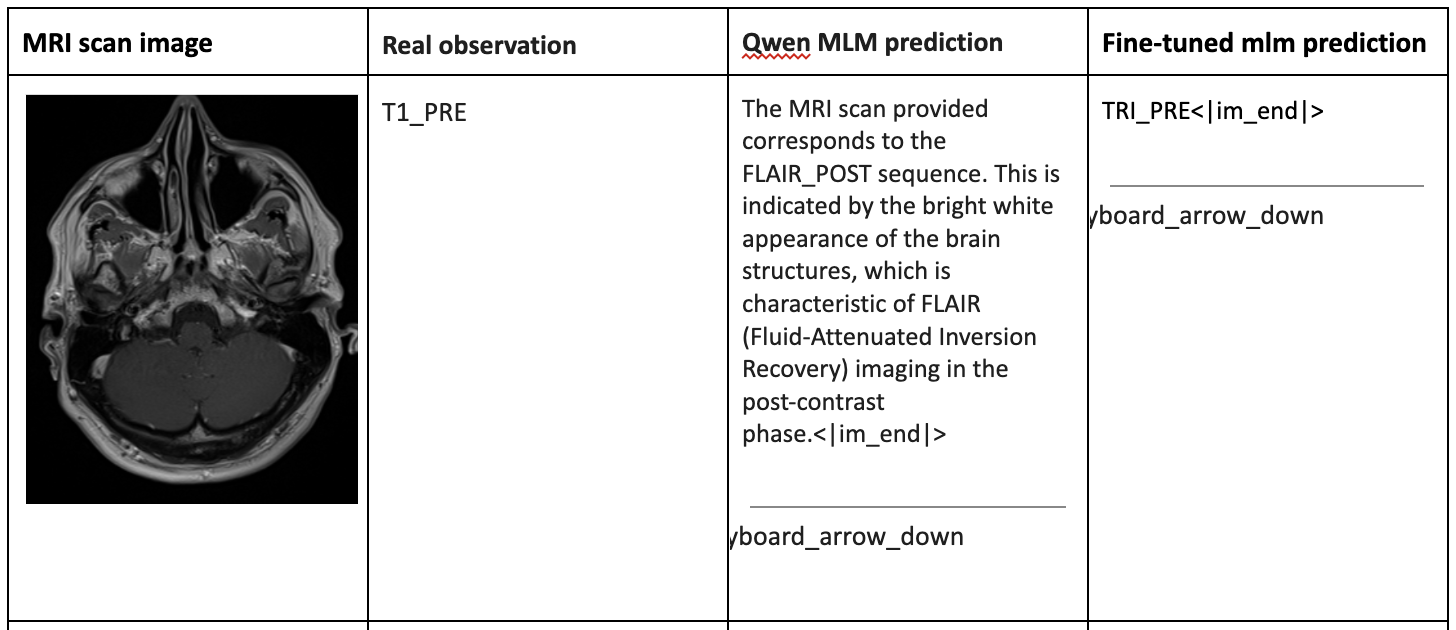

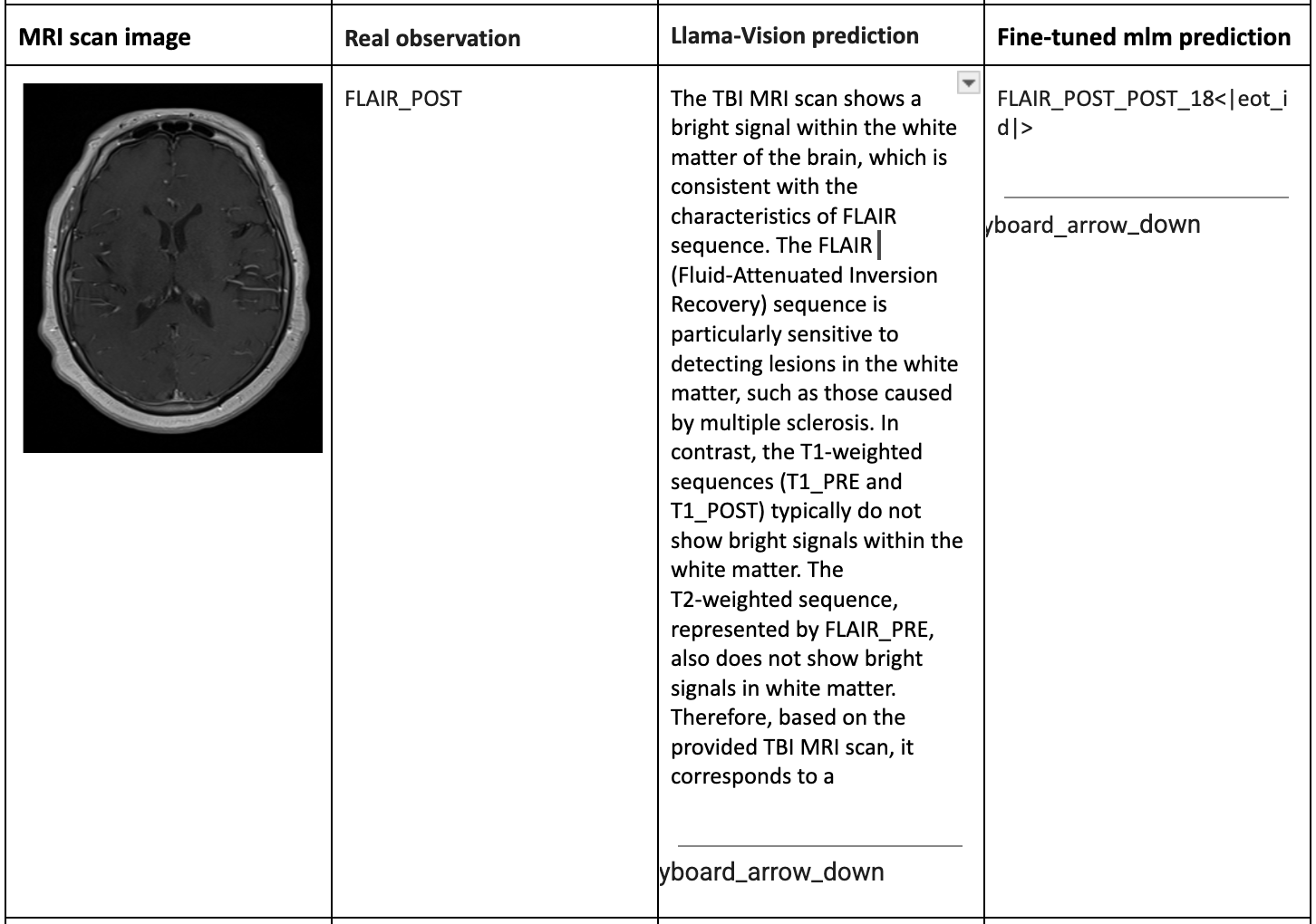

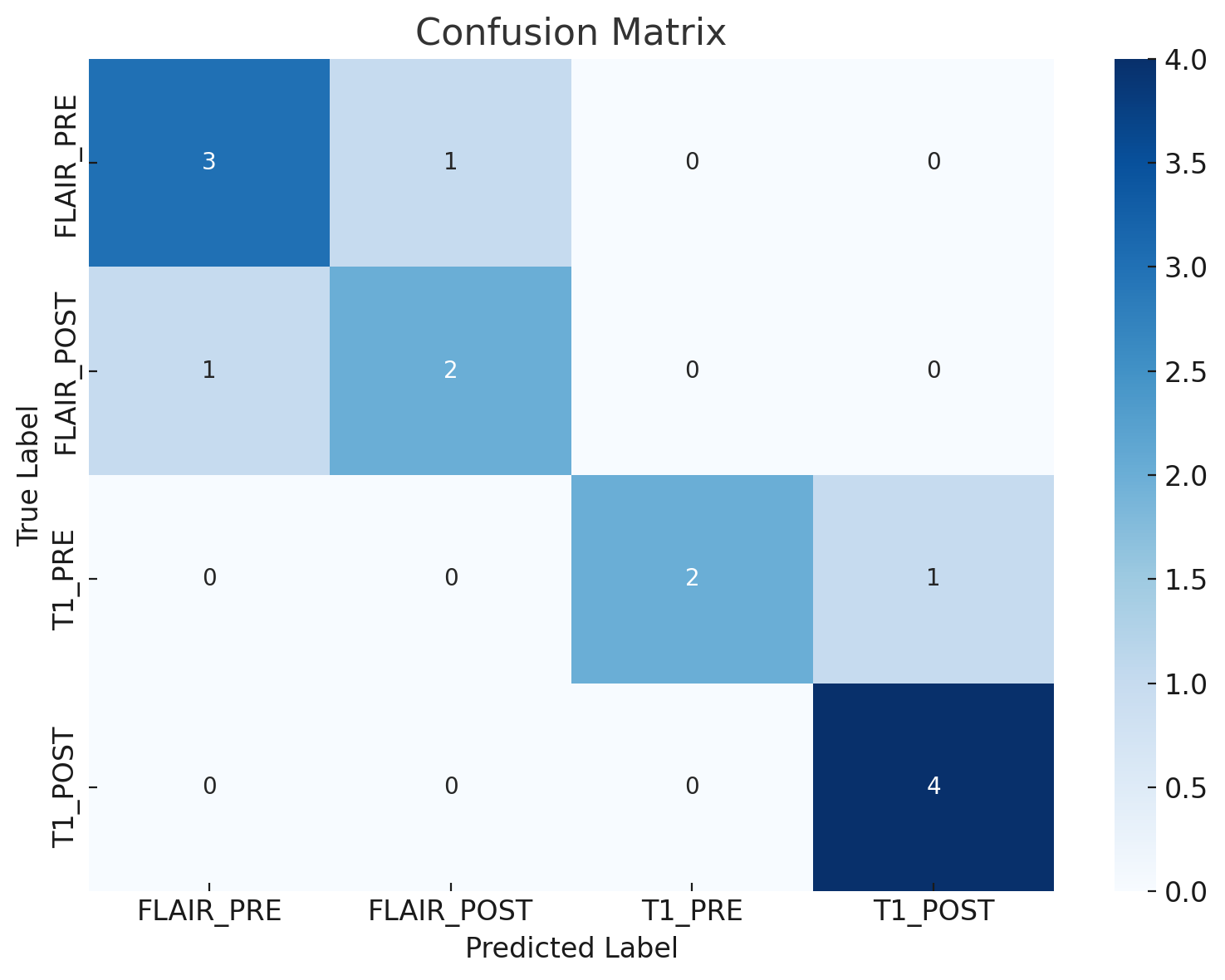

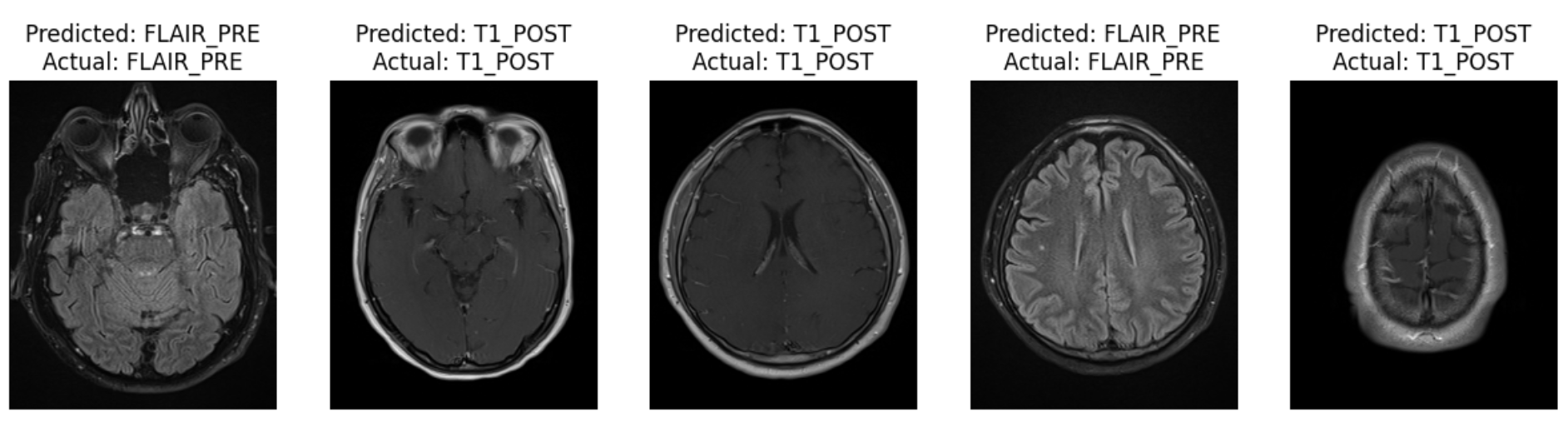

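The evaluation tracks how training and validation loss decrease during fine-tuning of Llama-3.2-11B-Vision-Instruct (Figures 6 and 7). Figures 8-10 show the prediction results of the three fine-tuned VLMs, and Figure 11 shows the confusion matrix of the fine-tuned Llama model. Figure 12 shows the predictions of a conventional ResNet50 image classifier. After fine-tuning, the VLMs show improved predictive performance compared with this ResNet50 baseline.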
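Figure 7 plots the ratio of training loss to validation loss, a common way to spot overfitting. A ratio that drops well below 1 means the model fits the training data much better than held-out data. The sketch computes that ratio per evaluation step. The 0.8 threshold is an arbitrary illustrative choice, not one taken from the paper.

```python
from typing import List

def loss_ratios(train: List[float], val: List[float]) -> List[float]:
    """Per-evaluation-step ratio of training loss to validation loss."""
    if len(train) != len(val):
        raise ValueError("train and val must have the same length")
    return [t / v for t, v in zip(train, val)]

def overfitting_steps(train: List[float], val: List[float],
                      threshold: float = 0.8) -> List[int]:
    """Indices where training loss falls well below validation loss
    (ratio under `threshold`, an illustrative cutoff), a common hint of overfitting."""
    return [i for i, r in enumerate(loss_ratios(train, val)) if r < threshold]
```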

Figure 6: Training loss and validation loss during fine-tuning of the Llama-3.2-11B-Vision-Instruct vision-LLM.

Figure 7: Ratio of training to validation loss during the fine-tuning of the Llama-3.2-11B-Vision-Instruct vision-LLM.

Figure 8: The prediction results of Pixtral-12B-2409 vision LLM.

Figure 9: The prediction results of Qwen2-VL-7B-Instruct vision LLM.

Figure 10: The prediction results of Llama-3.2-11B-Vision-Instruct vision LLM.

Figure 11: Confusion matrix of the fine-tuned Llama-3.2-11B-Vision-Instruct vision-LLM on TBI MRI scan classification.

Figure 12: Prediction results of the ResNet50 image classification model on the TBI MRI scan dataset.
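A confusion matrix like the one in Figure 11 can be summarized as a few standard metrics. The sketch assumes a binary TBI / No-TBI labeling, and any counts passed in are hypothetical. For a screening aid, sensitivity (the share of TBI scans that are caught) is often reported separately from accuracy, because a missed injury and a false alarm carry different costs.

```python
from dataclasses import dataclass

@dataclass
class BinaryConfusion:
    """Counts from a binary TBI / No-TBI confusion matrix."""
    tp: int  # TBI scans predicted as TBI
    fn: int  # TBI scans predicted as No TBI
    fp: int  # No-TBI scans predicted as TBI
    tn: int  # No-TBI scans predicted as No TBI

    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fn + self.fp + self.tn)

    def sensitivity(self) -> float:
        # Recall on the TBI class.
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        # Recall on the No-TBI class.
        return self.tn / (self.tn + self.fp)

    def precision(self) -> float:
        # Share of TBI predictions that are correct.
        return self.tp / (self.tp + self.fp)
```

Each method divides by a count total, so a class with no examples raises `ZeroDivisionError` rather than returning a misleading value.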

Figure 13 shows how OpenAI-o3 combines the individual VLM predictions into a single, consistent final diagnosis, together with the reasoning behind it.

Figure 13: Diagnosis reasoning made by OpenAI-o3 LLM.

Conclusion

The Proof-of-TBI platform demonstrates an approach to AI-supported diagnosis of mild TBI. It combines fine-tuned vision-LLMs with OpenAI's o3 reasoning LLM to improve both the accuracy and the reliability of TBI predictions. As healthcare continues to adopt AI, Proof-of-TBI shows how several AI models can be integrated to address a clinical challenge. The same pattern may carry over to diagnostic systems in other medical domains. Future work could extend the platform with additional open-source LLMs to broaden its diagnostic coverage and improve its robustness.