- The paper introduces MCD-TSF, a novel diffusion-based framework that leverages timestamps and textual data to significantly improve forecasting accuracy.

- It details a multimodal encoding and fusion approach using Timestamp-Assisted Attention and Text-Time series Fusion to integrate diverse data types effectively.

- Experimental results on multiple domains reveal that MCD-TSF outperforms models like PatchTST and CSDI in terms of MSE and MAE.

Multimodal Conditioned Diffusive Time Series Forecasting

The paper "Multimodal Conditioned Diffusive Time Series Forecasting" presents MCD-TSF, a diffusion-based approach to time series forecasting (TSF) that conditions on multimodal inputs. It aims to improve forecast accuracy by using timestamps and textual data to guide the diffusion process.

Introduction and Motivation

Time series forecasting is crucial for various industries, assisting in efficient decision-making by predicting future values based on historical data. Traditional methods have relied on statistical models and neural networks, but recent advancements focus on leveraging deep learning techniques, particularly transformers, to capture complex temporal dependencies.

Despite these advances, diffusion-based forecasters typically model only unimodal numerical sequences and ignore the multimodal information, such as textual descriptions and timestamps, that real-world datasets often contain. This paper addresses this gap by proposing the MCD-TSF model, which integrates these modalities to improve TSF performance.

MCD-TSF Model Architecture

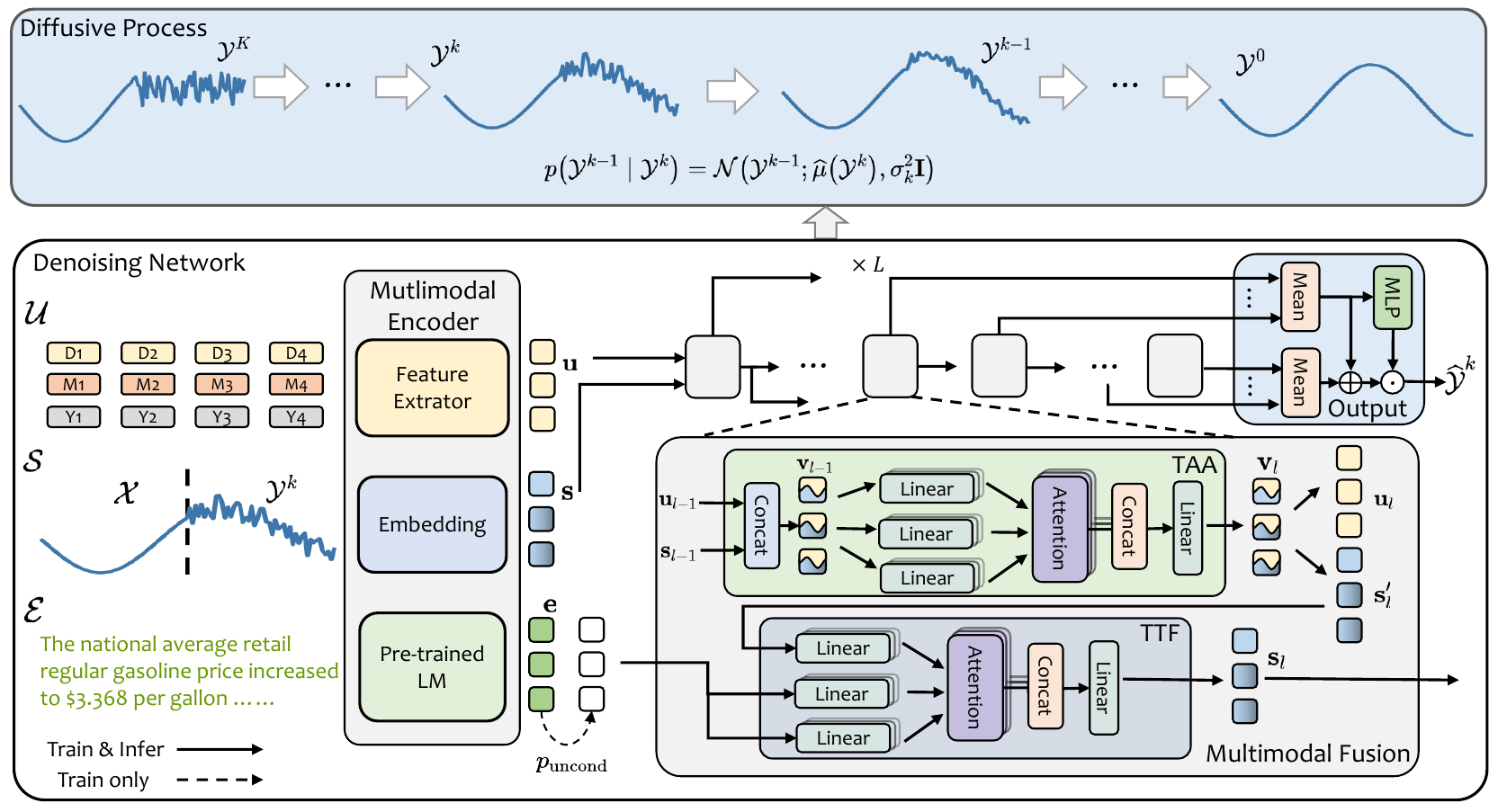

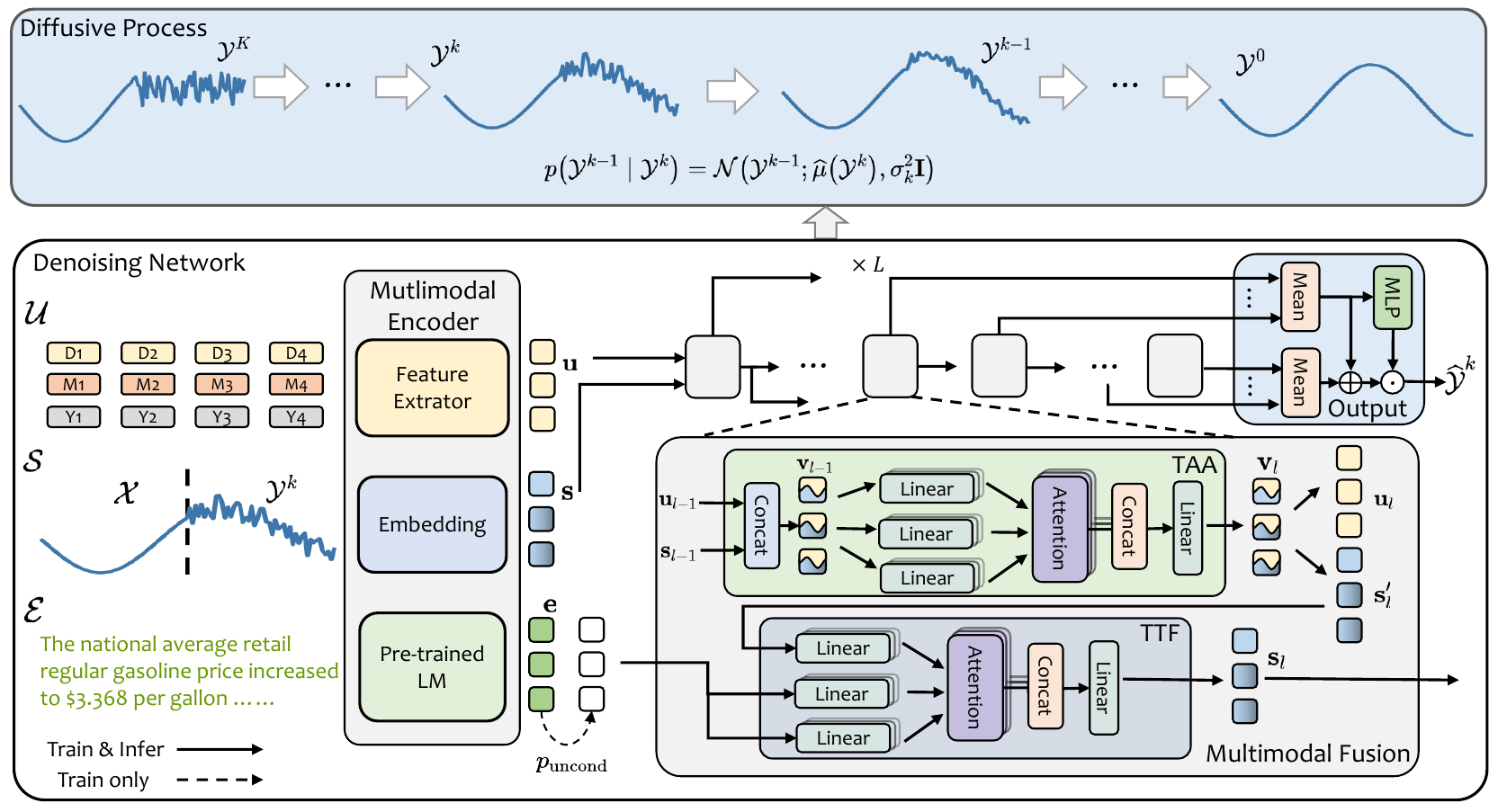

The MCD-TSF architecture takes three inputs: historical time series, timestamps, and textual descriptions. It comprises a diffusion framework, a multimodal encoder, and a fusion module; the fusion module contains Timestamp-Assisted Attention (TAA) and Text-Time series Fusion (TTF).

Figure 1: The overall architecture of the proposed MCD-TSF.

Multimodal Encoding and Fusion

The model encodes each input type into feature vectors: time series data through a 1×1 convolution, timestamps via hierarchical temporal feature extraction, and text through a pretrained language model such as BERT.
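To make two of these encoders concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the paper's implementation: the embedding width of 16, the random weights, and the choice of calendar fields (month, day, weekday, hour) are all hypothetical, and the text encoder is omitted because it would require loading a pretrained model.

```python
from datetime import datetime
import numpy as np

def conv1x1(series, weight, bias):
    """A 1x1 convolution over time is the same linear map applied at every step.

    series: (length, in_channels); weight: (in_channels, out_channels).
    """
    return series @ weight + bias

def calendar_features(stamps):
    """Hypothetical hierarchical timestamp features, each scaled to [0, 1)."""
    return np.array([
        [(t.month - 1) / 12, (t.day - 1) / 31, t.weekday() / 7, t.hour / 24]
        for t in stamps
    ])

rng = np.random.default_rng(0)
history = rng.normal(size=(24, 1))                    # 24 hourly steps, 1 variable
stamps = [datetime(2024, 1, 1, h) for h in range(24)]

# Random weights stand in for learned parameters (assumption).
series_emb = conv1x1(history, rng.normal(size=(1, 16)), np.zeros(16))
stamp_feats = calendar_features(stamps)

print(series_emb.shape)   # (24, 16)
print(stamp_feats.shape)  # (24, 4)
```

Because the kernel has width 1, the convolution mixes channels but never mixes neighbouring time steps; temporal interaction is left to the later attention layers.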

In the fusion module, TAA first merges timestamp features with the time series features to strengthen temporal understanding. TTF then incorporates textual information, using the pretrained model's representations to adaptively adjust the time series features.
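The two-stage order (timestamps first, then text) can be sketched with plain scaled dot-product cross-attention. This is a generic stand-in and not the paper's exact TAA or TTF formulation: there are no learned projections, the residual updates are an assumption, and all feature matrices are random placeholders.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, context):
    """Each query row attends over all context rows (no learned projections)."""
    d = queries.shape[-1]
    weights = softmax(queries @ context.T / np.sqrt(d))
    return weights @ context, weights

rng = np.random.default_rng(0)
series = rng.normal(size=(24, 16))  # time series features, one row per step
stamps = rng.normal(size=(24, 16))  # timestamp features, same length
text = rng.normal(size=(8, 16))     # text token features (placeholder values)

# Stage 1 (TAA-like): enrich the series with timestamp context.
stamp_ctx, _ = cross_attention(series, stamps)
fused = series + stamp_ctx

# Stage 2 (TTF-like): the timestamp-aware series attends over text tokens.
text_ctx, text_weights = cross_attention(fused, text)
fused = fused + text_ctx

print(fused.shape)         # (24, 16)
print(text_weights.shape)  # (24, 8)
```

Each row of `text_weights` sums to 1, so it can be read as how much each time step draws on each text token.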

Diffusion Process

MCD-TSF forecasts by denoising: starting from Gaussian noise, it refines the future values step by step under a conditioned reverse process. The diffusion network takes timestamp and textual data as conditions, and classifier-free guidance (CFG) is used to dynamically balance the influence of these modalities.
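A toy sketch of guided reverse sampling follows. It uses the standard DDPM update and one common CFG convention; the paper's exact parameterisation of the guidance strength may differ. The denoiser is a hypothetical stand-in for a trained network, `cond` stands in for the conditioning signal, and the noise schedule, horizon, and step count are arbitrary choices.

```python
import numpy as np

def guided_noise(eps_cond, eps_uncond, w):
    """Classifier-free guidance: w=0 ignores the condition, w=1 uses it as is,
    and w>1 extrapolates further in the conditional direction."""
    return eps_uncond + w * (eps_cond - eps_uncond)

def toy_denoiser(x, t, cond):
    """Hypothetical stand-in for a trained noise-prediction network."""
    target = 0.0 if cond is None else cond
    return x - target

def sample(cond, horizon=12, steps=50, w=1.5, seed=0):
    rng = np.random.default_rng(seed)
    betas = np.linspace(1e-4, 0.02, steps)  # linear noise schedule (assumption)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    x = rng.normal(size=horizon)            # start from Gaussian noise
    for t in reversed(range(steps)):
        eps = guided_noise(toy_denoiser(x, t, cond), toy_denoiser(x, t, None), w)
        # DDPM posterior mean given the predicted noise
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        noise = rng.normal(size=horizon) if t > 0 else 0.0  # no noise at the last step
        x = mean + np.sqrt(betas[t]) * noise
    return x

forecast = sample(cond=2.0)
print(forecast.shape)  # (12,)
```

Each step evaluates the network twice, once with and once without the condition, which is the usual cost of classifier-free guidance.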

Experimentation and Results

MCD-TSF was evaluated on the Time-MMD benchmark encompassing various domains such as energy, agriculture, and health, showing superior performance over existing models like PatchTST and CSDI in terms of Mean Squared Error (MSE) and Mean Absolute Error (MAE).
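For reference, both metrics are simple averages over the forecast horizon. The snippet below computes them in plain Python on made-up numbers; the values are illustrative only and are not taken from the paper.

```python
def mse(y_true, y_pred):
    """Mean Squared Error: average of squared differences."""
    return sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)

def mae(y_true, y_pred):
    """Mean Absolute Error: average of absolute differences."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)

actual = [1.0, 2.0, 3.0, 4.0]
forecast = [1.0, 2.5, 2.0, 6.0]

print(mse(actual, forecast))  # 1.3125
print(mae(actual, forecast))  # 0.875
```

MSE penalises the single large miss (the last point) much more heavily than MAE does, which is why the two metrics are usually reported together.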

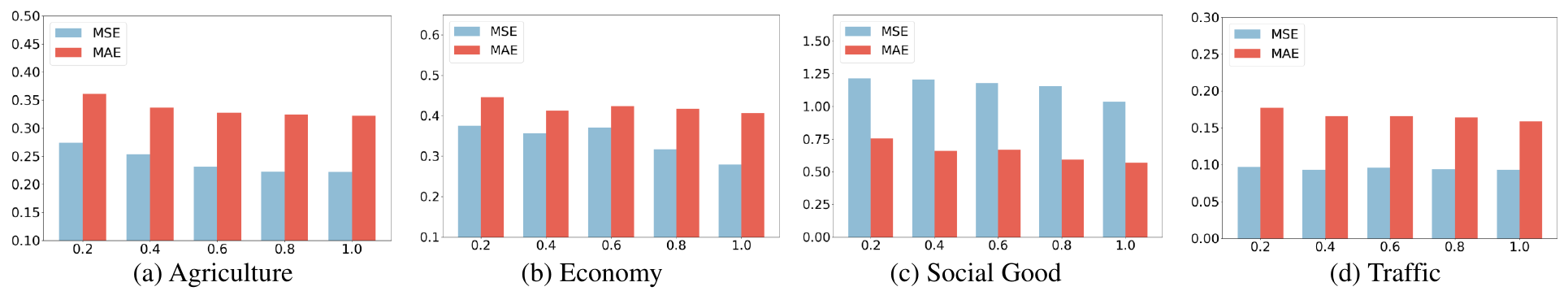

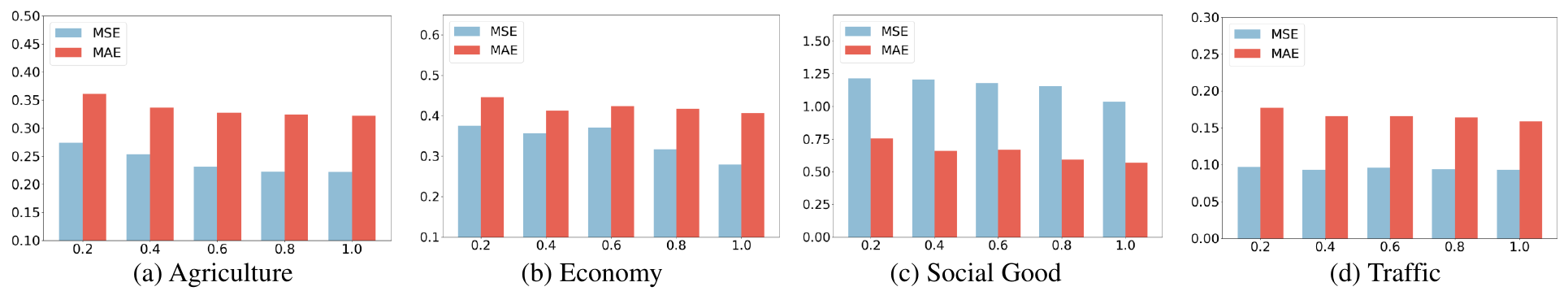

The experiments show significant improvements when both timestamps and textual information are used. An analysis of the timestamp weight λ and the text guidance strength w indicates that accuracy depends on how strongly each modality is weighted, so these settings need to be chosen with care.

Figure 2: Results of our approach on different domains with various timestamp weights.

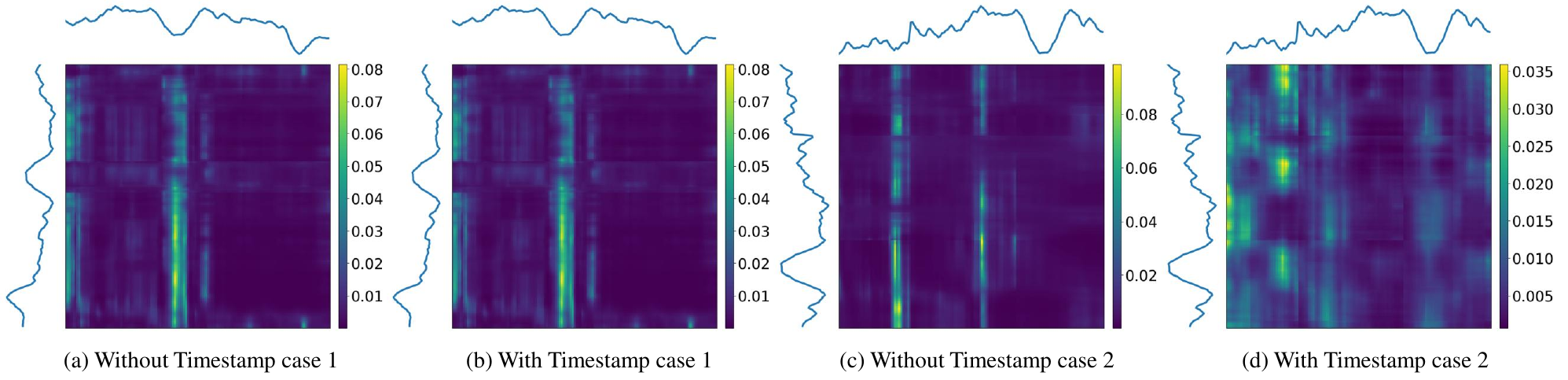

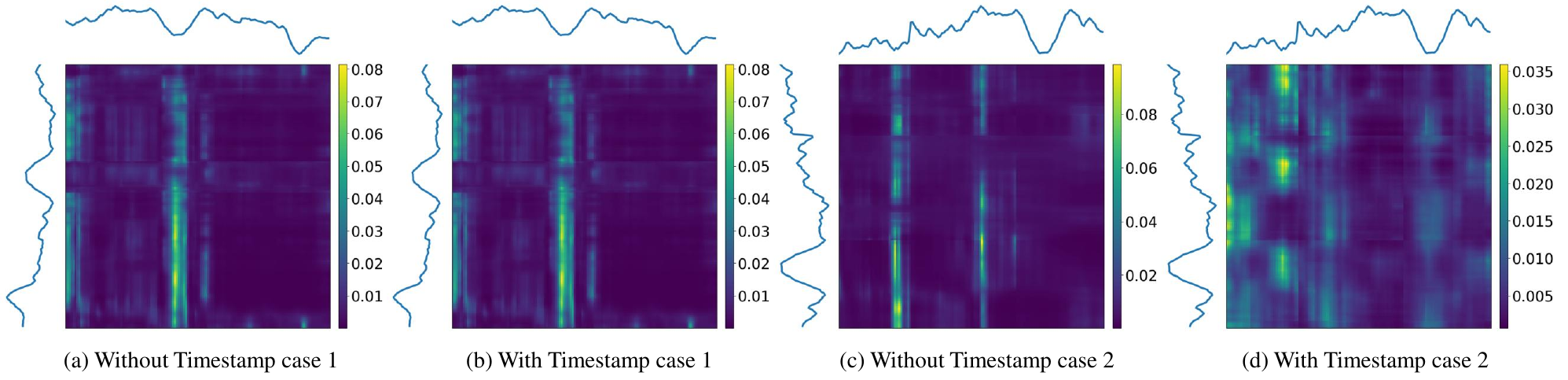

Figure 3: Attention heatmaps illustrating the timestamp effect on temporal focus.

Conclusions

The study highlights MCD-TSF as an effective framework for leveraging multimodal data in time series forecasting, achieving state-of-the-art results across multiple domains. By effectively integrating and balancing timestamp and textual data, the model not only improves forecast accuracy but also provides a robust methodology adaptable to varying data modalities.

Future research is expected to explore further applications and extensions of multimodal diffusion models in other complex forecasting tasks, with MCD-TSF serving as a foundation for multimodal time series analysis.