Automatic Legal Writing Evaluation of LLMs

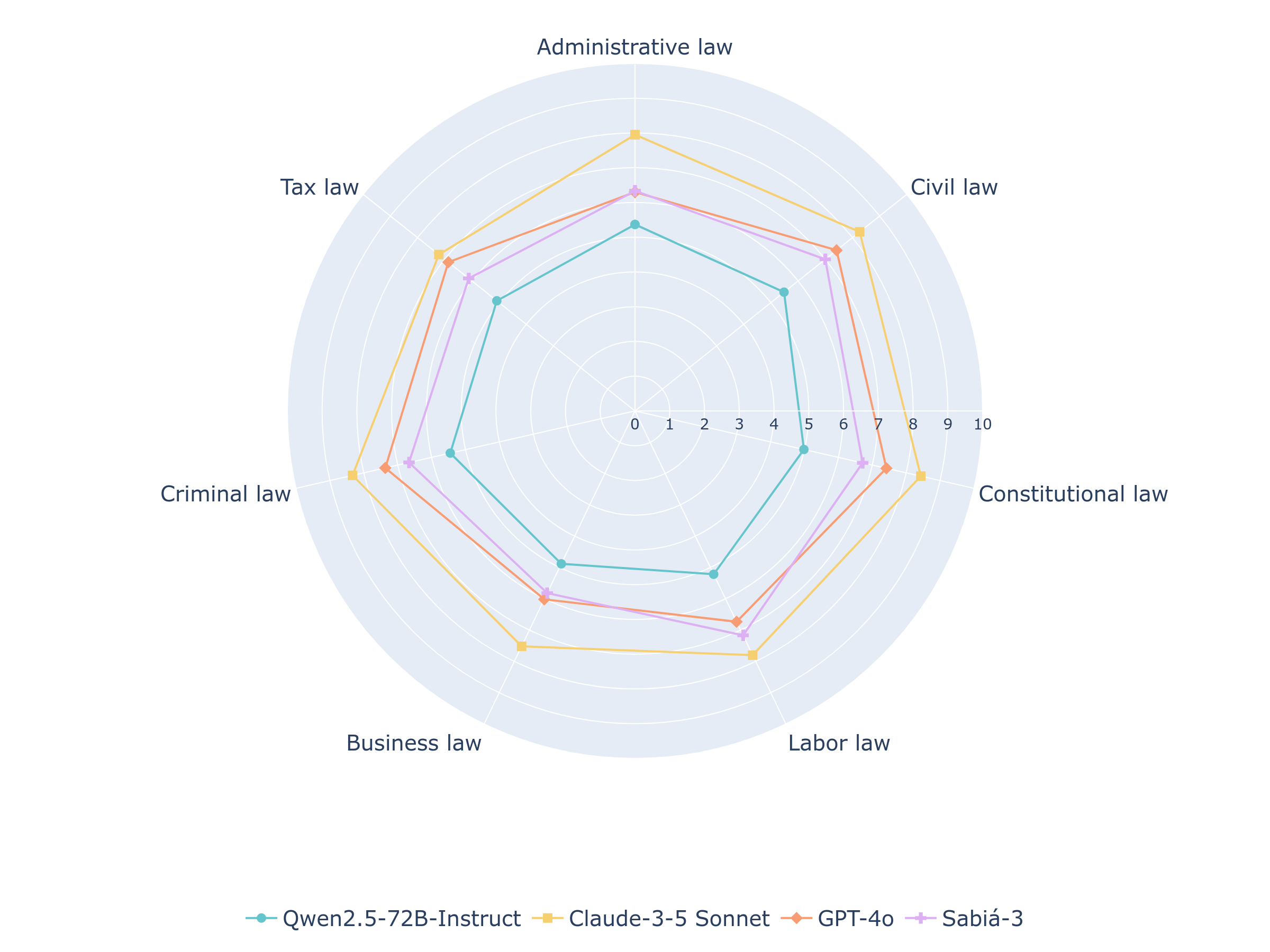

Abstract: Despite recent advances in LLMs, benchmarks for evaluating legal writing remain scarce because open-ended responses in this domain are inherently difficult to assess. A key challenge in evaluating LLMs on domain-specific tasks is finding test datasets that are public, frequently updated, and accompanied by comprehensive evaluation guidelines. The Brazilian Bar Examination meets these requirements. We introduce oab-bench, a benchmark comprising 105 questions across seven areas of law from recent editions of the exam. The benchmark includes the comprehensive evaluation guidelines and reference materials that human examiners use to ensure consistent grading. We evaluate the performance of four LLMs on oab-bench and find that Claude-3.5 Sonnet achieves the best results, with an average score of 7.93 out of 10, passing all 21 exams. We also investigate whether LLMs can serve as reliable automated judges for evaluating legal writing. Our experiments show that frontier models like OpenAI's o1 achieve a strong correlation with human scores when evaluating approved exams, suggesting their potential as reliable automated evaluators despite the inherently subjective nature of legal writing assessment. The source code and the benchmark -- containing questions, evaluation guidelines, model-generated responses, and their respective automated evaluations -- are publicly available.

Glossary

- AlpacaEval: A benchmark used to assess chatbot-style open-ended performance via model comparisons. "chatbot benchmarks such as AlpacaEval"

- Analytical grading: An evaluation approach that awards points for specific components (e.g., presentation, argumentation, legal basis) rather than only the final result. "The OAB exam grading is analytical."

- Benchmark saturation: The phenomenon where established benchmarks no longer differentiate model performance because scores approach the ceiling. "concerns about benchmark saturation"

- Brazilian Bar Examination: The national licensing exam (OAB) required to practice law in Brazil. "The Brazilian Bar Examination meets these requirements."

- Chain-of-thought prompting: A prompting technique that elicits step-by-step reasoning from LLMs before final answers. "chain of thought prompting"

- Commented answer: The official explanatory answer key detailing expected legal reasoning and acceptable argumentation. "commented answer"

- Criminal Procedure Code: The Brazilian legal code governing criminal procedural rules. "Criminal Procedure Code"

- Criminal review: A judicial remedy that allows revision of a criminal conviction after judgment. "Criminal review is admissible even after the death of the convicted person"

- Data contamination: The unintended overlap between evaluation data and a model’s training corpus, risking biased assessments. "data contamination"

- Extinction of punishability: A legal concept where criminal liability is extinguished (e.g., by the death of the convicted person). "extinction of punishability"

- FGV (Fundação Getúlio Vargas): The Brazilian institution that organizes the OAB exam. "Fundação Getúlio Vargas (FGV)"

- Frontier models: The most advanced, high-capability LLMs at the cutting edge of performance. "frontier models like OpenAI's o1 achieve a strong correlation with human scores"

- Inter-annotator agreement: A measure of consistency among human evaluators assessing the same items. "inter-annotator agreement"

- Jurisprudence: Case law and judicial precedents used to support legal arguments. "applying relevant legislation and jurisprudence to the presented case"

- LegalBench: A large, interdisciplinary legal NLP benchmark reflecting real-world legal tasks. "LegalBench"

- Legal theses: Specific legal arguments or doctrinal positions evaluated as items within the rubric. "items representing legal theses"

- LLM judge: An LLM used as an automated evaluator to grade open-ended responses. "LLM judge"

- Machine-readable format: A structured representation enabling automated parsing and analysis. "machine-readable format"

- Mean Absolute Error (MAE): An error metric equal to the average absolute difference between predicted and true values. "Mean Absolute Error (MAE)"

- Multi-turn judge prompt: An evaluation prompt that considers prior turns to assess interdependent sub-answers. "multi-turn judge prompt"

- oab-bench: A benchmark derived from OAB exams for evaluating LLMs on legal writing tasks. "We introduce oab-bench, a benchmark comprising 105 questions across seven areas of law from recent editions of the exam."

- Open-ended legal QA: Legal question answering tasks requiring free-form, reasoned responses. "open-ended legal QA"

- Pact of San José, Costa Rica: A regional human rights treaty (American Convention on Human Rights). "the Pact of San José, Costa Rica"

- peça prático-profissional: The practical-professional legal essay component of the OAB exam. "peça prático-profissional"

- Position bias: Systematic bias in evaluations due to the ordering or placement of options/responses. "position bias"

- Principle of non-transferability of punishment: Legal principle stating criminal penalties do not transfer to others (e.g., heirs). "principle of non-transferability of punishment"

- Reasoning model: An LLM designed to perform extended internal reasoning before producing an answer. "a reasoning model released by OpenAI"

- Reference-guided grading modes: Evaluation modes where the judge uses official references or guidelines to assess answers. "reference-guided grading modes"

- Retrieval-Augmented Generation (RAG): A method that augments generation with retrieved documents to improve factuality and alignment. "Retrieval-Augmented Generation (RAG)"

- Score distribution table: The official rubric specifying how points are allocated across parts of an answer. "score distribution table"

- Standardized exams: Tests with fixed formats and scoring procedures used to compare performance across cohorts and models. "standardized exams"

- UltraFeedback: A post-training dataset providing preference signals to improve LLM alignment via reinforcement learning. "UltraFeedback"
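
As an illustration of the Mean Absolute Error entry above, the following is a minimal sketch of how MAE could be computed between scores assigned by an LLM judge and scores assigned by human examiners. The function name `mean_absolute_error` and the score lists are hypothetical, chosen for illustration only; they are not taken from the oab-bench source code or its results.

```python
from typing import Sequence


def mean_absolute_error(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Average absolute difference between paired predicted and true values."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if len(predicted) == 0:
        raise ValueError("at least one pair of scores is required")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


# Hypothetical scores on a 0-10 scale (illustrative values, not real data).
judge_scores = [7.5, 6.0, 8.25, 5.0]   # scores given by an LLM judge
human_scores = [7.0, 6.5, 8.25, 4.0]   # scores given by human examiners

mae = mean_absolute_error(judge_scores, human_scores)
print(f"MAE between judge and human scores: {mae}")
```

A lower MAE means the judge's scores sit closer to the human scores on average. Unlike a correlation coefficient, MAE is expressed in the same units as the scores themselves, so the two measures answer different questions about agreement.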
