Optimization of embeddings storage for RAG systems using quantization and dimensionality reduction techniques

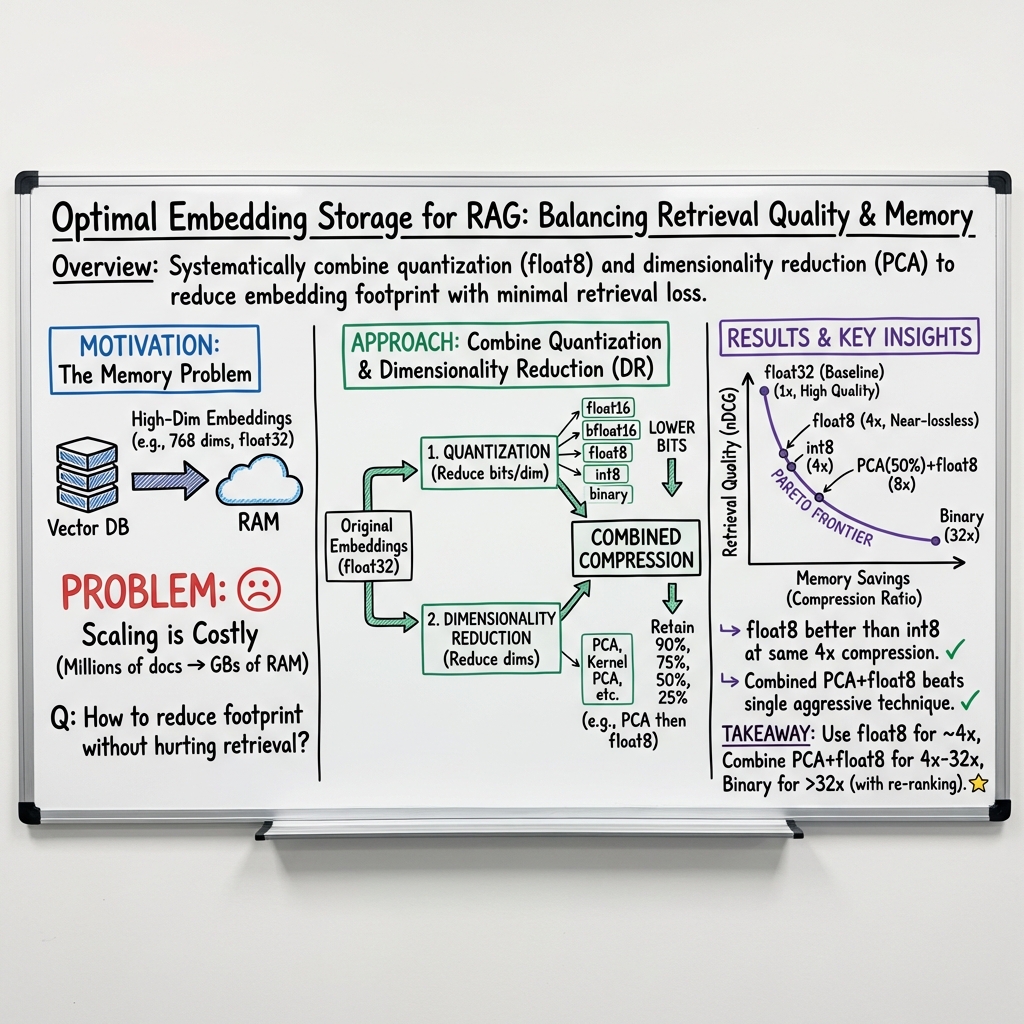

Abstract: Retrieval-Augmented Generation (RAG) enhances LLMs by retrieving relevant information from external knowledge bases, relying on high-dimensional vector embeddings typically stored in float32 precision. However, storing these embeddings at scale presents significant memory challenges. To address this issue, we systematically investigate two complementary optimization strategies on the MTEB benchmark: quantization, evaluating standard formats (float16, int8, binary) and low-bit floating-point types (float8); and dimensionality reduction, assessing methods such as PCA, Kernel PCA, UMAP, Random Projections, and Autoencoders. Our results show that float8 quantization achieves a 4x storage reduction with minimal performance degradation (<0.3%), significantly outperforming int8 quantization at the same compression level while being simpler to implement. PCA emerges as the most effective dimensionality reduction technique. Crucially, combining moderate PCA (e.g., retaining 50% of dimensions) with float8 quantization offers an excellent trade-off, achieving 8x total compression with less performance impact than using int8 alone (which provides only 4x compression). To facilitate practical application, we propose a methodology based on visualizing the performance-storage trade-off space to help practitioners identify the configuration that maximizes performance within their specific memory constraints.

Explain it Like I'm 14

What this paper is about

This paper looks at how to store “embeddings” more efficiently in computer systems that use RAG (Retrieval-Augmented Generation). RAG is a way to help AI chatbots and tools answer questions by first searching for helpful facts in a big library and then using those facts to write a better answer. The problem is that the “embeddings” used for this search are big and take up a lot of memory. The paper tests different ways to shrink these embeddings so they use less space but still work well.

The main questions the paper asks

- Can we reduce the size of embeddings without hurting search quality too much?

- Which shrinking methods work best: changing number precision (quantization) or reducing the number of dimensions (dimensionality reduction)?

- What happens if we combine both methods?

- How can teams choose the best settings for their memory limits while keeping good performance?

How the researchers tested their ideas

Think of an embedding like a set of numbers that represent the meaning of a sentence—like coordinates on a map that help you find similar sentences nearby. The bigger and more precise these numbers are, the better the map, but the more memory it uses.

The paper tests two types of “shrinking”:

- Quantization: This is like rounding numbers and storing fewer digits to save space.

  - Examples they tried: float16 and bfloat16 (about half the size of normal float32), float8 (about one quarter the size), int8 (also one quarter), and binary (extremely small, but very rough).

- Dimensionality reduction: This is like summarizing a long list by keeping only the most important parts.

  - Examples they tried: PCA (Principal Component Analysis), Kernel PCA, UMAP, Autoencoders, and Random Projections.
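To make the "rounding" idea concrete, here is a minimal NumPy sketch of three of the quantization formats. It is not the paper's code: the random vectors stand in for real embeddings, and the per-dimension min/max scaling used for int8 is one common scheme assumed here for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
# Stand-in for real embeddings: 1,000 random unit vectors with 384 dimensions.
emb = rng.standard_normal((1000, 384)).astype(np.float32)
emb /= np.linalg.norm(emb, axis=1, keepdims=True)

# float16: a plain cast, 2 bytes per value instead of 4.
emb_f16 = emb.astype(np.float16)

# int8: map each dimension's observed [min, max] range onto [-128, 127].
# The ranges must be learned from calibration data; here (an assumption)
# the corpus itself plays that role.
lo = emb.min(axis=0)
hi = emb.max(axis=0)
scale = (hi - lo) / 255.0
emb_i8 = (np.round((emb - lo) / scale) - 128).astype(np.int8)

# binary: keep only the sign of each value, packed 8 values per byte.
emb_bin = np.packbits(emb > 0, axis=1)

for name, arr in [("float32", emb), ("float16", emb_f16),
                  ("int8", emb_i8), ("binary", emb_bin)]:
    print(f"{name:8s} uses {arr.nbytes / emb.nbytes:.4f} of the original size")
```

Note that the int8 path has to keep `lo` and `scale` around to map values back to floats, which is the extra calibration step that a plain cast to a floating-point format avoids.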

They used two popular embedding models:

- bge-small-en-v1.5 (384 dimensions, smaller)

- nomic-embed-text-v1.5 (768 dimensions, larger)

They measured performance using nDCG@10, which you can think of as a score for “How good are the top 10 search results?” Higher scores mean better search quality. They ran tests on standard retrieval datasets (MTEB benchmark) and compared everything to the normal, uncompressed setup (float32).
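For readers who want to see how such a score is computed, here is a small sketch of nDCG@10 in plain Python. It assumes the common linear-gain formulation (relevance divided by log2 of the rank plus one); the function names are illustrative, and benchmark toolkits may differ in details such as tie handling.

```python
import math

def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain over the first k results (rank 1 first)."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))

def ndcg_at_k(ranked_relevances, all_relevances, k=10):
    """DCG of the returned ranking divided by the DCG of the ideal ranking."""
    ideal = dcg_at_k(sorted(all_relevances, reverse=True), k)
    return dcg_at_k(ranked_relevances, k) / ideal if ideal > 0 else 0.0

# Two relevant documents exist; the search returned them at ranks 1 and 3.
print(round(ndcg_at_k([1, 0, 1], [1, 1]), 4))  # 0.9197
```

A perfect ranking scores 1.0, and pushing a relevant document further down the list lowers the score, which is why compression that scrambles the top results shows up directly in this metric.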

What they found and why it matters

Here are the key results, explained simply:

- Float8 quantization is a big win.

  - It shrinks storage by 4x and loses less than 0.3% performance on average.

  - It beats int8 at the same compression level and is easier to use because it doesn’t need extra calibration data.

- PCA is the best way to reduce dimensions.

  - Among several methods, PCA keeps search quality the highest while cutting dimensions.

  - It’s also simple and fast to use compared to more complex methods like Kernel PCA or Autoencoders.

- Combining PCA and float8 is even better.

  - Keeping about 50% of the dimensions with PCA, then using float8, gives about 8x total compression.

  - This combo performs better than using int8 alone (which gives only 4x compression).

- Binary (1-bit) is tiny but risky.

  - It cuts size by 32x, but the performance drop is much larger. It can be useful only if you re-rank the top results later with higher-precision vectors.

- Bigger embeddings handle shrinking better.

  - The 768-dimension model loses less performance when compressed than the 384-dimension one. More dimensions carry more “backup” information.
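The compression figures above follow from simple arithmetic: storage per vector is the number of dimensions times the bits per value. The short sketch below reproduces the 4x, 8x, and 32x ratios for a 768-dimension embedding; the helper names are made up for this example.

```python
# Bits used to store one value in each format.
BITS = {"float32": 32, "float16": 16, "float8": 8, "int8": 8, "binary": 1}

def bytes_per_vector(dims, dtype):
    """Storage for one embedding: dimensions x bits per value, in bytes."""
    return dims * BITS[dtype] / 8

def compression_ratio(orig_dims, kept_dims, dtype):
    """How many times smaller a vector is than the float32 original."""
    return bytes_per_vector(orig_dims, "float32") / bytes_per_vector(kept_dims, dtype)

print(compression_ratio(768, 768, "float8"))  # 4.0  (float8 alone)
print(compression_ratio(768, 384, "float8"))  # 8.0  (PCA to 50% + float8)
print(compression_ratio(768, 768, "binary"))  # 32.0 (1 bit per value)
```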

To help teams pick the best setup, the authors suggest making a simple chart: plot performance on the vertical axis and storage size on the horizontal axis. Then choose the configuration with the highest performance that fits under your memory limit. This makes the trade-off easy to see.
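The same selection rule can be written in a few lines of code. This is only a sketch of the idea: the byte counts follow from dimensions times bits for a 768-dimension model, but the scores are placeholders invented for illustration, not results from the paper, and `best_under_budget` is a hypothetical helper name.

```python
# Scores below are PLACEHOLDERS, not measurements from the paper.
# Replace them with nDCG@10 values measured on your own data.
configs = [
    {"name": "float32, all dims", "bytes_per_vector": 3072, "score": 0.50},
    {"name": "float8, all dims", "bytes_per_vector": 768, "score": 0.49},
    {"name": "PCA 50% + float8", "bytes_per_vector": 384, "score": 0.48},
    {"name": "binary, all dims", "bytes_per_vector": 96, "score": 0.40},
]

def best_under_budget(configs, budget_bytes):
    """Return the highest-scoring configuration that fits the per-vector budget."""
    feasible = [c for c in configs if c["bytes_per_vector"] <= budget_bytes]
    return max(feasible, key=lambda c: c["score"]) if feasible else None

choice = best_under_budget(configs, budget_bytes=500)
print(choice["name"] if choice else "nothing fits")
```

Plotting the same list as points (storage on the horizontal axis, score on the vertical axis) gives the chart described above; the function simply picks the highest point to the left of the budget line.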

Why this is useful

- Lower cost and easier deployment: Using float8 or combining float8 with PCA lets you store many more embeddings in the same memory. This is great for big knowledge bases and cheaper cloud bills.

- Better fit for edge devices and browsers: Smaller embeddings can run on devices with limited memory.

- Simple changes, big impact: Switching to float8 and using PCA does not require re-training models and is straightforward to implement.

- Clear decision-making: The “performance vs. storage” chart helps pick the right balance for your needs.

Takeaway

If you’re building a RAG system and want to cut memory usage without losing much search quality:

- First try float8 for a quick 4x reduction with almost no performance loss.

- If you need more compression, add PCA (keep around 50% of dimensions) and still use float8 to get about 8x total reduction with strong performance.

- Avoid int8 unless you must, and be careful with binary unless you plan to re-rank results.
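As a rough illustration of the second step, here is a NumPy sketch that applies PCA and then float8-style rounding. Several things are assumptions rather than details from the paper: the data is synthetic, PCA is fitted with a plain SVD, and float8 is taken to mean the E4M3 layout (3 mantissa bits, largest value 448). The rounding function only simulates the precision loss and still returns float32 values; actually storing one byte per value needs a real 8-bit floating-point type, such as the ones provided by the `ml_dtypes` package.

```python
import numpy as np

def fit_pca(x, n_components):
    """Learn the mean and the top principal directions from sample vectors."""
    mean = x.mean(axis=0)
    # Rows of vt are principal directions, sorted by explained variance.
    _, _, vt = np.linalg.svd(x - mean, full_matrices=False)
    return mean, vt[:n_components]

def apply_pca(x, mean, components):
    """Project vectors onto the kept principal directions."""
    return (x - mean) @ components.T

def simulate_float8_e4m3(x):
    """Round values to the nearest number representable in float8 E4M3.

    Only the precision loss is simulated: the result is still float32.
    The E4M3 layout is an assumption made for this sketch.
    """
    x = np.asarray(x, dtype=np.float32)
    _, exp = np.frexp(x)
    # Spacing between neighbouring float8 values near x (constant below 2**-6).
    step = np.ldexp(1.0, np.maximum(exp, -5) - 4)
    return np.clip(np.round(x / step) * step, -448.0, 448.0).astype(np.float32)

rng = np.random.default_rng(0)
# Synthetic stand-in for embeddings: 128 dimensions with low-rank structure.
docs = (rng.standard_normal((500, 32)) @ rng.standard_normal((32, 128))).astype(np.float32)

mean, components = fit_pca(docs, n_components=64)  # keep 50% of the dimensions
compressed = simulate_float8_e4m3(apply_pca(docs, mean, components))

print(docs.shape, "->", compressed.shape)
```

Queries have to go through the same `mean` and `components` before searching, so both need to be saved alongside the compressed vectors.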

This approach makes RAG systems more efficient, cheaper, and easier to run at scale while keeping answers accurate and helpful.
