4bit-Quantization in Vector-Embedding for RAG

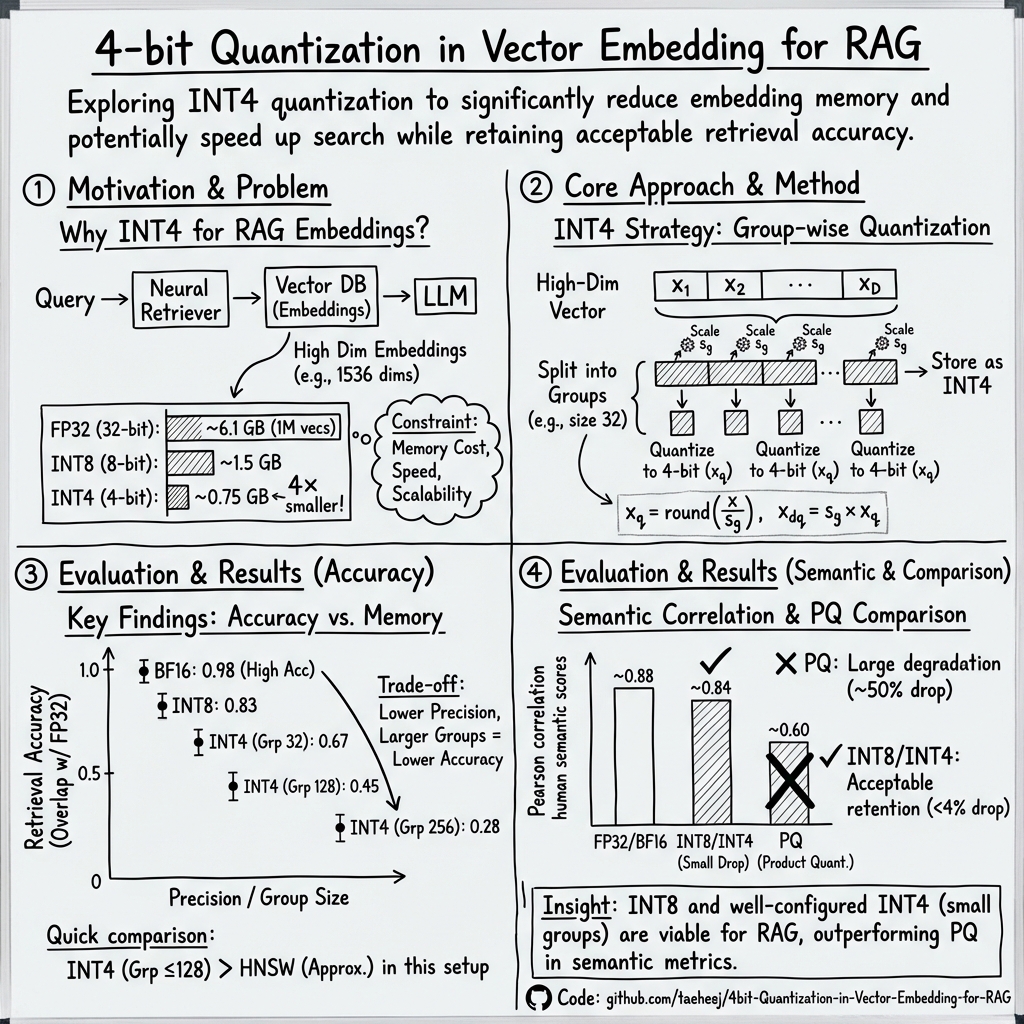

Abstract: Retrieval-augmented generation (RAG) is a promising technique that has shown great potential in addressing some of the limitations of LLMs. LLMs have two major limitations: they can contain outdated information due to their training data, and they can generate factually inaccurate responses, a phenomenon known as hallucinations. RAG aims to mitigate these issues by leveraging a database of relevant documents, which are stored as embedding vectors in a high-dimensional space. However, one of the challenges of using high-dimensional embeddings is that they require a significant amount of memory to store. This can be a major issue, especially when dealing with large databases of documents. To alleviate this problem, we propose the use of 4-bit quantization to store the embedding vectors. This involves reducing the precision of the vectors from 32-bit floating-point numbers to 4-bit integers, which can significantly reduce the memory requirements. Our approach has several benefits. Firstly, it significantly reduces the memory storage requirements of the high-dimensional vector database, making it more feasible to deploy RAG systems in resource-constrained environments. Secondly, it speeds up the searching process, as the reduced precision of the vectors allows for faster computation. Our code is available at https://github.com/taeheej/4bit-Quantization-in-Vector-Embedding-for-RAG

Explain it Like I'm 14

What this paper is about

This paper looks at a way to make a special kind of AI system, called a RAG system, run with much less memory. RAG stands for “Retrieval-Augmented Generation.” It helps large language models (LLMs) answer questions using up-to-date information stored in a searchable database. The paper tests storing the database in a very compact form using 4-bit numbers (instead of standard 32-bit numbers) and checks how much memory that saves and how it affects accuracy. A speed-up is expected too, but it was not measured.

The big questions the paper asks

To make the explanation easier to follow, here are the main questions the researchers wanted to answer:

- Can we store the RAG system’s “embedding vectors” (the math-y representations of documents) using 8-bit or even 4-bit numbers to save a lot of memory?

- If we do that, how much does the accuracy of search and matching go down?

- Is there a smart way (called “group-wise quantization”) to make 4-bit storage work better?

- How does this simple quantization approach compare against another popular compression method called Product Quantization?

How the system works (in everyday language)

What is RAG?

Think of a helpful librarian plus a writer. The librarian quickly finds the most relevant articles or documents for your question. The writer (the LLM) reads those documents and gives you a well-formed answer. RAG does exactly this: it retrieves useful info and then generates a response based on that info.

What are “embedding vectors”?

Imagine each document is turned into a very long list of numbers, like a barcode but with hundreds or thousands of slots. This list (called a vector) captures the meaning of the document. Similar documents have similar vectors.

What does “vector search” mean?

Vector search is like finding the nearest “neighbors” in a big map of these number-lists. If your question is turned into a vector, the system looks for document vectors that are most similar—like finding arrows pointing in a similar direction.

- Cosine similarity: A common way to measure similarity. Think of each vector as an arrow. If two arrows point in the same direction, their cosine similarity is high; if they point in opposite directions, it’s low.
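To make the "arrows" idea concrete, here is a minimal sketch of cosine similarity and an exact (brute-force) nearest-neighbor search. The function names and the tiny 3-number vectors are made up for illustration and are not taken from the paper; real embeddings have hundreds or thousands of numbers.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between a and b: 1 = same direction, -1 = opposite."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query, docs, k):
    """Indices of the k documents most similar to the query (exact search)."""
    scored = [(cosine_similarity(query, d), i) for i, d in enumerate(docs)]
    scored.sort(key=lambda s: s[0], reverse=True)
    return [i for _, i in scored[:k]]

# Toy "embeddings" (illustrative values only).
docs = [
    [1.0, 0.0, 0.0],   # points almost the same way as the query
    [0.9, 0.1, 0.0],   # also close
    [0.0, 1.0, 0.0],   # roughly perpendicular
    [-1.0, 0.0, 0.0],  # opposite direction
]
query = [1.0, 0.05, 0.0]

print(top_k(query, docs, k=2))  # -> [0, 1]
```

Exact search like this compares the query against every stored vector, which is why the size of each stored number matters so much for memory.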

What is “quantization”?

Quantization means storing numbers with fewer bits (fewer “steps” on your measuring stick). For example:

- 32-bit floating point: very precise, takes a lot of space.

- 8-bit integer: less precise, takes much less space.

- 4-bit integer: even less precise, takes very little space.

Analogy: Imagine you have rulers with different levels of detail. A 32-bit ruler has ultra-fine markings; a 4-bit ruler has only a few big marks. You can measure faster and store less, but your measurement isn’t as exact.
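The ruler analogy can be sketched in a few lines. This example assumes a simple symmetric scheme in which the largest absolute value in the list is mapped to the largest integer code; the summary does not say exactly which scheme the paper uses, so treat this as one common option rather than the authors' method. The names `quantize` and `dequantize` are hypothetical.

```python
def quantize(values, bits):
    """Map floats to signed integer codes in [-qmax, qmax] with one shared scale.

    Assumption: symmetric "absmax" scaling (not confirmed by the source).
    """
    qmax = 2 ** (bits - 1) - 1           # 127 for 8-bit, 7 for 4-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Turn integer codes back into approximate floats."""
    return [c * scale for c in codes]

values = [0.70, -0.35, 0.10, 0.02]
for bits in (8, 4):
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    worst_error = max(abs(v - r) for v, r in zip(values, restored))
    print(f"{bits}-bit codes: {codes}, worst error: {worst_error:.4f}")
```

With 4 bits there are only 15 usable "marks" on the ruler (codes -7 to 7), so small values such as 0.02 can round all the way to zero.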

What is “group-wise quantization”?

If you try to compress a whole long vector with the same small ruler, some parts will get measured poorly. Group-wise quantization breaks the long vector into smaller chunks (groups). Each chunk gets its own mini-ruler (its own scale factor). This usually improves accuracy when you compress very hard, like with 4 bits.
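A rough sketch of why separate mini-rulers help: below, one large value forces a coarse ruler on everything that shares its group, so smaller groups protect the small values. As before, the symmetric scaling and the function names are assumptions for illustration, and the 8-number vector stands in for a real 1536-number embedding.

```python
import math

def quantize_groupwise(vector, bits, group_size):
    """Quantize each chunk of `group_size` values with its own scale factor."""
    qmax = 2 ** (bits - 1) - 1
    codes, scales = [], []
    for start in range(0, len(vector), group_size):
        group = vector[start:start + group_size]
        absmax = max(abs(v) for v in group)
        scale = absmax / qmax if absmax > 0 else 1.0
        scales.append(scale)
        codes.extend(max(-qmax, min(qmax, round(v / scale))) for v in group)
    return codes, scales

def dequantize_groupwise(codes, scales, group_size):
    """Rebuild approximate floats using the scale of each value's group."""
    return [c * scales[i // group_size] for i, c in enumerate(codes)]

def rmse(a, b):
    """Root-mean-square error between two equal-length lists."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

# One outlier (4.0) at the front, small values everywhere else.
vector = [4.0, 0.3, -0.2, 0.1, 0.05, -0.04, 0.03, -0.02]

errors = {}
for group_size in (8, 4):
    codes, scales = quantize_groupwise(vector, 4, group_size)
    restored = dequantize_groupwise(codes, scales, group_size)
    errors[group_size] = rmse(vector, restored)
    print(f"group size {group_size}: RMSE = {errors[group_size]:.4f}")
```

The trade-off is that every group needs its own stored scale factor, so smaller groups cost a little extra memory in exchange for lower error.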

What the researchers did (their approach)

To keep things understandable, here’s how they tested their ideas:

- They used a big set of real document embeddings (1 million items, each with 1536 numbers).

- They tested different storage types:

  - BF16 (a 16-bit format often used in AI)

  - INT8 (8-bit integer)

  - INT4 (4-bit integer), with different group sizes (like splitting a 1536-long vector into groups of 32, 64, 128, or 256 numbers per group)

- They measured:

  - How much the similarity scores changed compared to the original 32-bit scores (using RMSE, a way to measure average error).

  - How many of the top-10 most relevant documents you still find when you search with quantized vectors, compared to the original.

- They compared their method against:

  - HNSW (a popular fast-but-approximate search method).

  - Product Quantization (PQ), a technique that compresses vectors by splitting them and clustering parts. PQ often compresses a lot but can lose exactness.
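The two measurements described above (RMSE of the similarity scores and top-10 overlap) can be sketched on synthetic data. Everything here is an assumption made for illustration: the vectors are random rather than real embeddings, only the stored document vectors are quantized while the query stays at full precision, and one scale factor is used per vector. The numbers it prints say nothing about the paper's actual results.

```python
import math
import random

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def quantize_dequantize(vec, bits):
    """Round-trip a vector through symmetric integer codes (one scale per vector)."""
    qmax = 2 ** (bits - 1) - 1
    scale = (max(abs(v) for v in vec) / qmax) or 1.0
    return [round(v / scale) * scale for v in vec]

def top_k(scores, k):
    """Indices of the k highest scores."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]

random.seed(0)
dim, n_docs, k = 64, 200, 10
docs = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(n_docs)]
query = [random.gauss(0, 1) for _ in range(dim)]

# Reference: scores and top-k computed from the original floats.
exact_scores = [cosine(query, d) for d in docs]
exact_top = set(top_k(exact_scores, k))

results = {}
for bits in (8, 4):
    approx_scores = [cosine(query, quantize_dequantize(d, bits)) for d in docs]
    score_rmse = math.sqrt(
        sum((e - a) ** 2 for e, a in zip(exact_scores, approx_scores)) / n_docs
    )
    overlap = len(exact_top & set(top_k(approx_scores, k))) / k
    results[bits] = (score_rmse, overlap)
    print(f"INT{bits}: score RMSE = {score_rmse:.5f}, top-{k} overlap = {overlap:.0%}")
```

An overlap of 100% means the quantized search returned exactly the same top-10 documents as the full-precision search.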

What they found and why it matters

Here are the most important results explained in simple terms:

- Memory savings can be huge:

  - Using 32-bit numbers for 1 million vectors of length 1536 needs about 6.1 GB just for the numbers.

  - Using 8-bit drops that to about 1.5 GB.

  - Using 4-bit drops it further to about 0.77 GB.

- Accuracy drops as you use fewer bits:

  - BF16 and INT8 kept accuracy fairly high; the average error (RMSE) was small.

  - INT4 had more noticeable errors, but group-wise quantization helped. Smaller group sizes (like 32 or 64) were more accurate than large ones (like 256).

- Retrieval accuracy (finding the right top-10 documents) stayed surprisingly good under certain 4-bit settings:

  - INT8: strong accuracy with only a small drop.

  - INT4: lower accuracy overall, but with group sizes 32–128 it still beat the popular HNSW approximate search on their test.

- Product Quantization (PQ) had much bigger accuracy loss in their “exact top-10” tests and in semantic similarity tests:

  - When they checked how cosine similarity lined up with human judgments of sentence similarity, INT8/INT4 lost only up to ~4% compared to the original.

  - PQ lost much more, sometimes around 50%.
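The memory figures in the first bullet follow from simple arithmetic: number of vectors × numbers per vector × bits per number ÷ 8. The sketch below reproduces them (using 1 GB = 10^9 bytes and ignoring the small extra cost of storing scale factors) and shows one possible way to fit two 4-bit codes into a single byte. The packing layout and the function names are assumptions for illustration; the source does not describe how the authors store their 4-bit values.

```python
def embedding_bytes(num_vectors, dim, bits_per_value):
    """Raw storage for the embedding values alone, in bytes."""
    return num_vectors * dim * bits_per_value // 8

for name, bits in [("FP32", 32), ("BF16", 16), ("INT8", 8), ("INT4", 4)]:
    gb = embedding_bytes(1_000_000, 1536, bits) / 1e9
    print(f"{name}: {gb:.2f} GB")
# FP32: 6.14 GB
# BF16: 3.07 GB
# INT8: 1.54 GB
# INT4: 0.77 GB

def pack_int4(codes):
    """Pack signed 4-bit codes (-8..7) two per byte, low nibble first."""
    padded = list(codes) + [0] * (len(codes) % 2)
    return bytes((lo & 0xF) | ((hi & 0xF) << 4)
                 for lo, hi in zip(padded[0::2], padded[1::2]))

def unpack_int4(packed, count):
    """Recover `count` signed 4-bit codes from packed bytes."""
    codes = []
    for byte in packed:
        for nibble in (byte & 0xF, byte >> 4):
            codes.append(nibble - 16 if nibble >= 8 else nibble)
    return codes[:count]

codes = [7, -7, 0, -1, 3]
packed = pack_int4(codes)
print(len(packed), unpack_int4(packed, len(codes)))  # -> 3 [7, -7, 0, -1, 3]
```

Python has no native 4-bit type, and most hardware does not either, which is part of why the expected speed-ups depend on tooling support.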

Why it matters:

- If you can store embeddings in 4-bit or 8-bit form with reasonable accuracy, you can fit many more documents into memory (or use cheaper hardware).

- This can make RAG systems faster and more affordable, especially on devices or servers with limited resources.

Limitations

- Speed was not directly measured in this paper. Even though using fewer bits usually speeds up search, the hardware and software need to support 4-bit math well. Many popular tools currently support 8-bit but not 4-bit operations.

- So, while they expect speed-ups, they didn’t show timing results yet.

What this could mean for the future

- RAG systems could become more widely available and cheaper to run if embeddings can be safely stored in 8-bit or even 4-bit form.

- With smart 4-bit group-wise quantization, you can get big memory savings and still keep accuracy high enough for many tasks.

- If hardware and AI frameworks add strong support for 4-bit operations, we might see noticeable speed boosts.

- Storing more documents in the same memory means RAG systems can search a bigger knowledge base, potentially giving more accurate and up-to-date answers.

Summary in plain words

This paper shows a way to pack the “meaning maps” of documents into very small boxes (4-bit or 8-bit) so that a RAG system can store more information and, with the right hardware support, potentially search faster. Doing this carefully (especially with group-wise quantization) keeps accuracy surprisingly good. It doesn’t match full precision, but in the paper’s tests it beat HNSW approximate search and Product Quantization on accuracy while using far less memory than 32-bit storage. This could help build smarter, faster, and more affordable AI helpers that stay accurate with large, fresh knowledge bases.
