- The paper introduces a multi-agent framework using LLMs to accurately identify and patch real-world CVE vulnerabilities.

- The system integrates three specialized agents (Similarity Analyzer, Vulnerability Verifier, and Code Patcher) that use semantic analysis and Chain-of-Thought (CoT) reasoning to achieve an 89.5% F1-score in vulnerability detection and 95.0% accuracy in patch generation.

- AutoPatch reduces costs by more than 50× compared with traditional fine-tuning methods, offering a scalable, economically viable approach to code security.

AutoPatch: Multi-Agent Framework for Patching Real-World CVE Vulnerabilities

Introduction

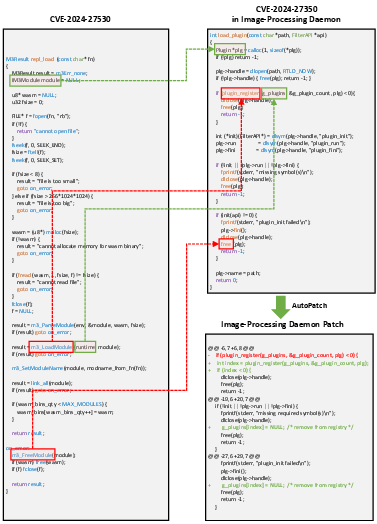

The paper "AutoPatch: Multi-Agent Framework for Patching Real-World CVE Vulnerabilities" proposes an approach that uses LLMs to patch vulnerabilities in code that LLMs themselves generate. Although LLMs are increasingly capable at automated code generation, their knowledge is fixed at training time, so they struggle to keep up with newly disclosed CVEs. AutoPatch addresses this problem by combining Retrieval-Augmented Generation (RAG) with a comprehensive database of high-severity CVEs, enabling efficient and accurate vulnerability identification and patching.
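A minimal sketch of the retrieval-augmentation idea, assuming a natural-language description of the generated code is already available (for example, produced by the LLM). It is not AutoPatch's implementation: it swaps in bag-of-words cosine similarity for the semantic embeddings a real system would use, and `CVE_DB`, `key_terms`, `retrieve`, and `build_prompt` are hypothetical names with placeholder CVE entries.

```python
import math
import re
from collections import Counter

# Hypothetical toy knowledge base. The IDs and descriptions are placeholders,
# not real CVE records; AutoPatch itself stores CVEs in a vector database.
CVE_DB = {
    "CVE-XXXX-0001": "path traversal in file upload handler allows writing outside the upload directory",
    "CVE-XXXX-0002": "sql injection via unsanitized query parameter in login form",
    "CVE-XXXX-0003": "deserialization of untrusted yaml input leads to remote code execution",
}


def key_terms(text: str) -> Counter:
    """Lower-cased word counts: a crude stand-in for LLM key-term extraction."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(description: str, k: int = 1) -> list[tuple[str, float]]:
    """Return the k CVE entries most similar to a description of the code."""
    query = key_terms(description)
    scored = [(cve_id, cosine(query, key_terms(text))) for cve_id, text in CVE_DB.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


def build_prompt(code: str, description: str, k: int = 1) -> str:
    """Augment the verification prompt with the retrieved CVE descriptions."""
    context = "\n".join(f"{cve_id}: {CVE_DB[cve_id]}" for cve_id, _ in retrieve(description, k))
    return (
        "Known vulnerabilities that may be relevant:\n"
        f"{context}\n\n"
        "Determine whether the following code is affected:\n"
        f"{code}"
    )


# A description of a generated upload handler retrieves the path-traversal entry.
print(retrieve("handler that saves an uploaded file into the upload directory")[0][0])  # prints CVE-XXXX-0001
```

The retrieved context is what keeps the model current: updating `CVE_DB` changes what the model sees without retraining it, which is the trade-off the paper exploits.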

AutoPatch Framework Overview

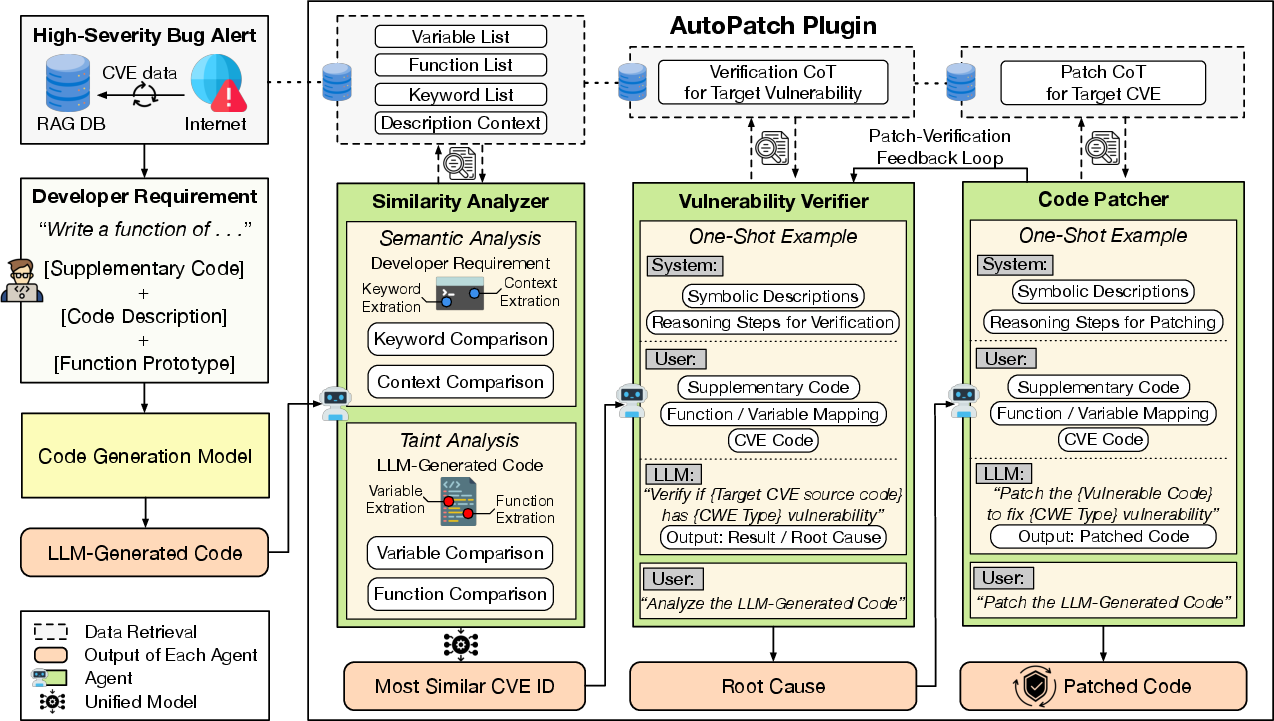

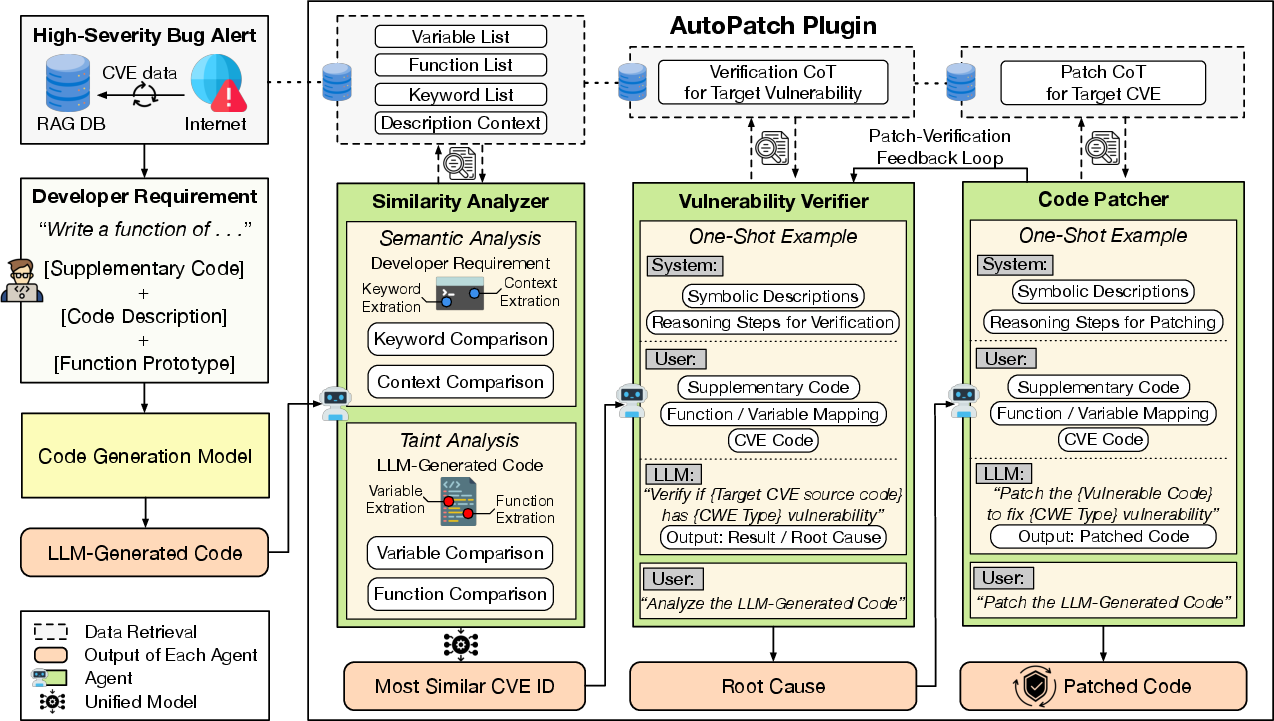

AutoPatch is structured as a multi-agent system comprising three specialized LLM agents: the Similarity Analyzer, the Vulnerability Verifier, and the Code Patcher. These agents work collaboratively within an LLM-integrated IDE environment to identify and patch vulnerabilities that may exist in LLM-generated code.

1. Similarity Analyzer: This agent performs semantic and taint analysis to pinpoint relevant CVEs. By extracting key terms and analyzing contextual descriptions, it matches the LLM-generated code against a database of known vulnerabilities, achieving 90.4% accuracy in CVE matching.

2. Vulnerability Verifier: Once a potential CVE match is identified, this agent checks whether the code is actually vulnerable using enriched Chain-of-Thought (CoT) reasoning, achieving an 89.5% F1-score for vulnerability detection.

3. Code Patcher: Once a vulnerability is confirmed, the Code Patcher uses enhanced CoT reasoning to generate secure code revisions, achieving a 95.0% accuracy rate.

Figure 1: The overall architecture of AutoPatch.
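The control flow among the three agents can be sketched as a sequential pipeline. This is an illustration under assumptions, not the paper's LangChain implementation: `run_pipeline` and `PatchResult` are hypothetical, and each agent is passed in as a plain callable so that an LLM-backed agent, or the toy stubs in the usage lines, can be plugged in.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PatchResult:
    cve_id: Optional[str]  # best-matching CVE, or None if nothing matched
    vulnerable: bool       # the verifier's verdict
    code: str              # patched code if vulnerable, otherwise the input unchanged


def run_pipeline(
    code: str,
    analyze: Callable[[str], Optional[str]],
    verify: Callable[[str, str], bool],
    patch: Callable[[str, str], str],
) -> PatchResult:
    """Chain the three agents; each callable stands in for an LLM-backed agent."""
    # Similarity Analyzer: propose a candidate CVE for the code, if any.
    cve_id = analyze(code)
    if cve_id is None:
        return PatchResult(None, False, code)
    # Vulnerability Verifier: confirm or reject the candidate match.
    if not verify(code, cve_id):
        return PatchResult(cve_id, False, code)
    # Code Patcher: rewrite only code that was confirmed vulnerable.
    return PatchResult(cve_id, True, patch(code, cve_id))


# Usage with toy stub agents (hypothetical rules, not LLM calls).
snippet = "cursor.execute('SELECT * FROM users WHERE name = ' + name)"
result = run_pipeline(
    snippet,
    analyze=lambda c: "CVE-XXXX-0002" if "execute" in c else None,
    verify=lambda c, cve_id: "+" in c,  # flags string-concatenated SQL
    patch=lambda c, cve_id: "cursor.execute('SELECT * FROM users WHERE name = %s', (name,))",
)
print(result.vulnerable, result.code)
```

Gating the patcher on the verifier's verdict mirrors the ordering described above: code is rewritten only after a candidate match has been confirmed, so a spurious similarity match does not trigger an unnecessary patch.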

Implementation and Evaluation

AutoPatch is implemented with LangChain and a PostgreSQL vector database, and is designed to run as a security plugin for LLM-integrated IDEs, such as those using GitHub Copilot. The evaluation generated 525 code snippets from 75 recent high-severity CVEs and assessed the framework's performance with models such as GPT-4o and DeepSeek.
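For readers comparing the reported numbers: the Verifier's 89.5% figure is an F1-score, the harmonic mean of precision and recall, which penalizes both missed vulnerabilities and false alarms (equivalently, F1 = 2·TP / (2·TP + FP + FN)). A minimal sketch of the computation follows; the function name and the counts are hypothetical and do not reproduce the paper's evaluation.

```python
def detection_metrics(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Precision, recall, and F1 from detection counts.

    tp: vulnerable snippets correctly flagged
    fp: safe snippets wrongly flagged
    fn: vulnerable snippets missed
    """
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical counts for illustration only (not the paper's results).
print(detection_metrics(tp=3, fp=1, fn=1))  # (0.75, 0.75, 0.75)
```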

Experiments show that AutoPatch outperforms traditional fine-tuning approaches in both accuracy and cost-efficiency, cutting costs by more than 50×.

Conclusion and Implications

The AutoPatch framework presents a scalable and economically viable solution for enhancing the security of LLM-generated code. By integrating multi-agent strategies with retrieval-augmented techniques, AutoPatch bridges the gap between static LLM knowledge and the dynamic landscape of software vulnerabilities. This approach not only advances the automation of vulnerability patching but also offers significant cost advantages, reducing the need for expensive and frequent model fine-tuning. Future work could extend AutoPatch to address zero-day vulnerabilities and refine its adaptability across diverse programming environments.