Gated Attention for Large Language Models: Non-linearity, Sparsity, and Attention-Sink-Free

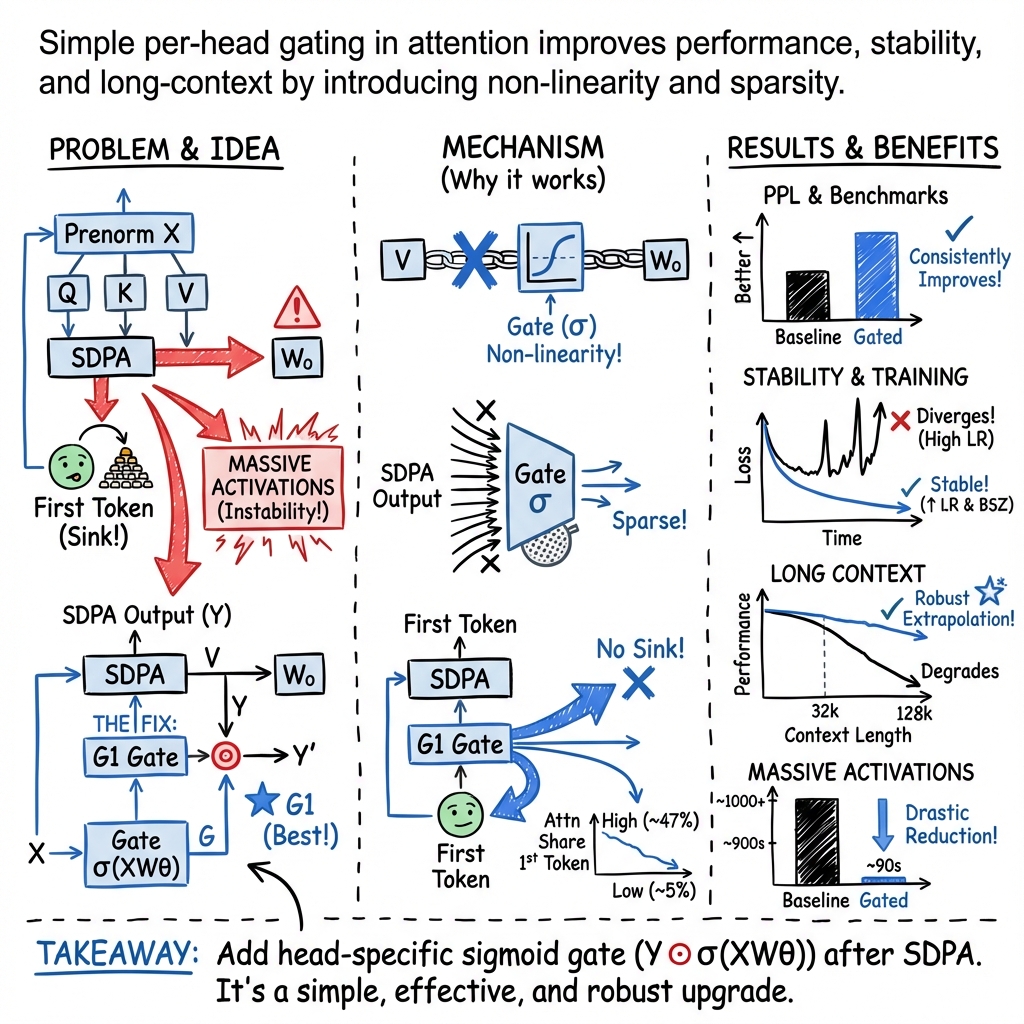

Abstract: Gating mechanisms have been widely utilized, from early models like LSTMs and Highway Networks to recent state space models, linear attention, and also softmax attention. Yet, existing literature rarely examines the specific effects of gating. In this work, we conduct comprehensive experiments to systematically investigate gating-augmented softmax attention variants. Specifically, we perform a comprehensive comparison over 30 variants of 15B Mixture-of-Experts (MoE) models and 1.7B dense models trained on a 3.5 trillion token dataset. Our central finding is that a simple modification, applying a head-specific sigmoid gate after the Scaled Dot-Product Attention (SDPA), consistently improves performance. This modification also enhances training stability, tolerates larger learning rates, and improves scaling properties. By comparing various gating positions and computational variants, we attribute this effectiveness to two key factors: (1) introducing non-linearity upon the low-rank mapping in the softmax attention, and (2) applying query-dependent sparse gating scores to modulate the SDPA output. Notably, we find this sparse gating mechanism mitigates 'attention sink' and enhances long-context extrapolation performance. We also release the related [code](https://github.com/qiuzh20/gated_attention) and [models](https://huggingface.co/QwQZh/gated_attention) to facilitate future research.


Explain it Like I'm 14

What is this paper about?

This paper looks at a simple idea to make LLMs stronger and more stable: add a small “gate” to the attention part of the model. The attention part decides what words to focus on while reading a sentence. The gate works like a smart filter or volume knob that turns parts of the attention output up or down, depending on what the current word needs.

The authors show that placing this gate in just the right spot, right after the attention calculation, consistently makes models more accurate, more stable during training, and better at handling long texts.

What questions did the researchers ask?

They wanted to understand:

- Does adding a gate to attention really help, and where should it go?

- Which kind of gate works best (per-head vs shared, small vs big, additive vs multiplicative)?

- Why does the gate help? Is it because it adds non-linearity (more “bending power” to the math) or because it creates sparsity (filters most things out and keeps only what’s needed)?

- Can the gate fix a known problem called “attention sink,” where the model pays too much attention to the first token?

- Does it help the model understand much longer texts without breaking?

How did they study it?

The team ran a lot of experiments on very large models and huge datasets:

- Models:

  - A 15-billion-parameter Mixture-of-Experts (MoE) model (think: a model with many specialists, but only a few are active at a time).

  - A 1.7-billion-parameter “dense” model (all parts active).

- Data: Trained on up to 3.5 trillion tokens (that’s an enormous amount of text).

- They tested over 30 variations of where and how to add the gate:

  - After the attention math (Scaled Dot-Product Attention, or SDPA).

  - After the “value” part of attention.

  - After the “query” and “key” parts.

  - After the final output of the attention block.

- Gate types:

  - Head-specific vs head-shared (different gates per attention head vs one gate for all).

  - Elementwise vs headwise (fine-grained vs coarse-grained).

  - Multiplicative (scales the output) vs additive (adds to the output).

  - Different activation functions (sigmoid vs SiLU).
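The gate variants listed above can be sketched in a few lines of NumPy. This is only an illustration: the function name `apply_gate`, the tensor shapes, and the exact way the additive variant combines the scores with the output are assumptions made for this example, not details taken from the authors' code.

```python
import numpy as np

def sigmoid(x):
    # Squashes any real number into the open interval (0, 1).
    return 1.0 / (1.0 + np.exp(-x))

def silu(x):
    # SiLU is x * sigmoid(x): it can be negative and is unbounded above.
    return x * sigmoid(x)

def apply_gate(sdpa_out, gate_logits, combine="multiplicative", activation=sigmoid):
    """Combine attention output with gate scores (hypothetical helper).

    sdpa_out:    (n_heads, seq, d_head) output of the attention calculation
    gate_logits: (n_heads, seq, d_head) for an elementwise gate, or
                 (n_heads, seq, 1) for a headwise gate (one score per head,
                 broadcast across that head's d_head channels)
    """
    scores = activation(gate_logits)
    if combine == "multiplicative":
        return sdpa_out * scores   # scale the output
    if combine == "additive":
        return sdpa_out + scores   # add to the output
    raise ValueError(f"unknown combine mode: {combine}")
```

With a sigmoid activation and multiplicative combination, every score lies between 0 and 1, so the gate can only shrink the attention output. SiLU has no such bound, which is one way to see why the two activations behave differently.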

To keep things fair, they also compared against models that just had more parameters (like more attention heads or more experts) without any gates.

They measured results using common benchmarks (like MMLU for general knowledge, GSM8k for math, HumanEval for coding) and checked language modeling quality through perplexity (PPL), where lower is better. They also looked at training stability (fewer “loss spikes” is better), and how well the model handles longer contexts.
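For readers who want to see what perplexity means numerically, here is a minimal sketch using the standard definition: the exponential of the average negative log-probability that the model assigned to each correct next token. The function name `perplexity` and its list-based input are assumptions for this example; the paper's evaluation pipeline is not described in this summary.

```python
import math

def perplexity(token_log_probs):
    """Perplexity from natural-log probabilities of the correct next tokens."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)

# A model that gives every correct token probability 0.25 is, on average,
# as uncertain as a uniform guess among 4 options, so its perplexity is about 4.
example = perplexity([math.log(0.25)] * 4)
```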

What did they discover?

The biggest and most consistent win came from a simple choice:

- Put a small, head-specific, multiplicative “sigmoid gate” right after the attention calculation (after SDPA).

- This gate boosted accuracy across tasks, lowered perplexity, and made training smoother.
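A minimal NumPy sketch of this setup is shown below, assuming an elementwise gate computed from the same hidden state that produces the query. The function name `gated_attention`, the weight names, and the shapes are assumptions for illustration; this is not the authors' released implementation.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def gated_attention(x, w_q, w_k, w_v, w_o, w_gate, n_heads):
    """Causal multi-head attention with a sigmoid gate applied after SDPA.

    x: (seq, d_model); every weight matrix here is (d_model, d_model).
    Returns the block output (seq, d_model) and the gate scores.
    """
    seq, d_model = x.shape
    d_head = d_model // n_heads

    def split(t):
        # (seq, d_model) -> (n_heads, seq, d_head)
        return t.reshape(seq, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)

    # Scaled dot-product attention with a causal mask.
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)     # (n_heads, seq, seq)
    future = np.triu(np.ones((seq, seq), dtype=bool), k=1)  # True above the diagonal
    scores = np.where(future, -np.inf, scores)
    sdpa_out = softmax(scores, axis=-1) @ v                 # (n_heads, seq, d_head)

    # The gate is computed from each token's own hidden state, so every head
    # gets its own scores and they depend on the current (query) token.
    gate = sigmoid(split(x @ w_gate))
    gated = sdpa_out * gate

    merged = gated.transpose(1, 0, 2).reshape(seq, d_model)
    return merged @ w_o, gate
```

The only additions relative to plain attention are the extra projection `w_gate` and one elementwise multiplication, which is why the change is described as small.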

Key takeaways:

- Performance gains: Adding the gate after SDPA often reduced PPL by more than 0.2 and improved benchmark scores (like +2 points on MMLU), which is quite notable for such a small change.

- Training stability: Models with the gate had fewer training “spikes,” handled larger learning rates and bigger batches without breaking, and scaled better.

- Best gate style:

  - Head-specific > head-shared (each attention head benefits from its own gate).

  - Multiplicative > additive (scaling the output works better than adding to it).

  - Sigmoid > SiLU (the sigmoid gate’s 0–1 range helps create sparsity).

- Why it works (two reasons):

  - Non-linearity: Attention normally applies two linear steps in a row (the value projection and the output projection), which together act like a single “low-rank” linear map (a bit limited). The gate adds a bend (non-linearity) between them, making the mapping more expressive.

  - Sparsity: Most gate scores stay near zero, so only the information most important for the current word gets through. This input-dependent filtering reduces noise.
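The non-linearity argument can be checked numerically. The sketch below is a deliberately simplified, single-head setup that ignores the attention weights (as if a token attended only to itself). All names and sizes are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_head = 16, 4

w_v = rng.normal(size=(d_model, d_head))     # value projection for one head
w_o = rng.normal(size=(d_head, d_model))     # that head's slice of the output projection
w_gate = rng.normal(size=(d_model, d_head))  # hypothetical gate projection

# Without a gate, the two projections collapse into one d_model x d_model
# matrix whose rank cannot exceed d_head.
combined = w_v @ w_o
rank = np.linalg.matrix_rank(combined)

def ungated(x):
    return (x @ w_v) @ w_o

def gated(x):
    g = 1.0 / (1.0 + np.exp(-(x @ w_gate)))  # sigmoid gate scores
    return ((x @ w_v) * g) @ w_o

a, b = rng.normal(size=d_model), rng.normal(size=d_model)

# A linear map satisfies f(a + b) == f(a) + f(b); the gated map does not.
ungated_is_additive = np.allclose(ungated(a + b), ungated(a) + ungated(b))
gated_is_additive = np.allclose(gated(a + b), gated(a) + gated(b))
```

Here `rank` is bounded by `d_head`, and only the ungated map passes the additivity check. Both results are consistent with the claim that the gate inserts a non-linearity between two otherwise mergeable linear layers.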

A major bonus:

- It dramatically reduces “attention sink” (when the model focuses too much on the first token). With the gate, attention sink nearly disappears.

- It improves long-context performance, especially when the context window is extended up to 128k tokens, so the model handles much longer texts more reliably.
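One simple way to quantify attention sink is to average how much attention weight lands on the first token. The helper below is an illustrative metric with an assumed name and input layout. The paper's exact measurement is not described in this summary.

```python
import numpy as np

def first_token_attention_share(attn):
    """Average attention weight placed on key position 0.

    attn: (n_heads, seq, seq) causal attention weights whose rows sum to 1.
    Query position 0 is skipped because it can only attend to itself.
    """
    attn = np.asarray(attn, dtype=float)
    return float(attn[:, 1:, 0].mean())

# Two toy patterns for a 4-token sequence and a single head.
seq = 4
uniform = np.tril(np.ones((seq, seq)))
uniform = uniform / uniform.sum(axis=1, keepdims=True)  # spread evenly over visible tokens
sink = np.zeros((seq, seq))
sink[:, 0] = 1.0                                        # everything goes to token 0

uniform_share = first_token_attention_share(uniform[None])
sink_share = first_token_attention_share(sink[None])
```

A value close to 1 means the head is spending almost all of its attention on the first token, which is the pattern the gate is reported to remove.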

Why does this simple gate help?

Think of attention like a spotlight scanning a page. Without a gate, the spotlight’s output flows straight into the next step through mostly linear math, like two flat lenses stacked together. That limits what the model can express, and standard attention also tends to over-focus on the first word (attention sink).

The gate acts like:

- A smart dimmer: It turns down unhelpful parts of the attention output (sparsity).

- A flexible lens: It introduces a bend in the math (non-linearity), improving the model’s ability to represent complex relationships.

Two especially important details:

- Query-dependent gating: The gate uses the current word’s state to decide what to pass through, so it filters the context based on what this word needs right now.

- Head-specific gating: Different attention heads focus on different patterns; giving each head its own gate lets them specialize better.

Together, these make the model more precise and stable.

What could this mean in practice?

- Better LLMs with minimal changes: You can improve accuracy and stability without changing the whole architecture or adding lots of parameters.

- Smoother training: Fewer spikes and crashes mean you can push training harder (larger learning rates, bigger batches), saving time and money.

- Longer context handling: Models become more robust when stretched to read longer documents, which helps tasks like long story understanding, codebases, legal texts, and research papers.

- Open resources: The authors released code and models to help the community build on this.

Summary in everyday terms

Imagine you’re reading a book and highlighting the most important parts. Standard attention sometimes highlights the very first word too much (attention sink), and the highlighting tool is a bit stiff (too linear). The gate is like a smarter highlighter that mostly stays off but turns on when the current sentence really needs certain parts. This makes the highlighting more accurate and avoids wasting ink on the first word every time. As a result, the model reads better, learns more steadily, and handles longer books without getting overwhelmed.

Extra: Key terms explained simply

- Attention: The part of the model that decides which words matter most for the current word.

- Gate: A small filter/knob that controls how much of the attention output gets through.

- Non-linearity: A “bend” in the math that lets the model represent more complex patterns.

- Sparsity: Most values are turned way down (near zero), keeping only the helpful bits.

- Attention sink: When the model pays too much attention to the first token by default.

- MoE (Mixture-of-Experts): A model with many specialist parts; only a few are active per input.

- Perplexity (PPL): A measure of how well a model predicts the next word; lower is better.
