- The paper introduces InternAgent, a closed-loop framework that automates scientific research from hypothesis generation to verification.

- It employs specialized agents for literature survey, code analysis, idea innovation, and assessment to construct detailed research methodologies.

- Experimental results across 12 tasks demonstrate improved performance and stability, outperforming existing systems like Dolphin.

InternAgent: An Autonomous Scientific Research Framework

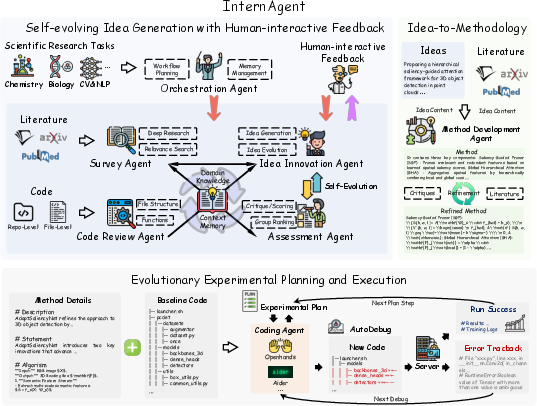

The paper "InternAgent: When Agent Becomes the Scientist -- Building Closed-Loop System from Hypothesis to Verification" (2505.16938) introduces InternAgent, a unified closed-loop multi-agent framework designed for Autonomous Scientific Research (ASR) across various scientific domains. InternAgent automates the entire research cycle, including idea generation, methodology construction, experiment execution, and results feedback.

Key Components and Capabilities

InternAgent comprises several cooperating agents and modules that facilitate autonomous scientific research:

The Survey Agent adaptively aligns with user-specified requirements, employing literature review and deep research modes to explore existing methodologies. The relevance evaluation of each document is represented as $R: L_{\text{abs}} \times T \to [0,1]$, where $L_{\text{abs}}$ denotes the abstracts of the retrieved literature $L$, $T$ is the set of tasks, and $R(l,t)$ measures the relevance of literature $l$ to the task $t$.
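As a rough illustration of a function with the signature $R: L_{\text{abs}} \times T \to [0,1]$, the sketch below scores an abstract against a task description by Jaccard token overlap. The source does not specify how relevance is computed. The overlap metric, the `relevance` and `select_relevant` names, and the 0.1 threshold are assumptions chosen only to make the mapping concrete; an LLM-based or embedding-based scorer would fit the same interface.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevance(abstract: str, task: str) -> float:
    """Hypothetical stand-in for R(l, t): Jaccard overlap, always in [0, 1]."""
    a, t = tokenize(abstract), tokenize(task)
    if not a or not t:
        return 0.0
    return len(a & t) / len(a | t)


def select_relevant(abstracts: List[str], task: str, threshold: float = 0.1) -> List[str]:
    """Keep abstracts scoring at or above the (assumed) threshold, best first."""
    scored = [(relevance(a, task), a) for a in abstracts]
    kept = [pair for pair in scored if pair[0] >= threshold]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [a for _, a in kept]
```

Keeping the scorer behind a plain `(abstract, task) -> float` interface means the selection step does not change if the metric is swapped for a stronger one.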

The Code Review Agent analyzes code structures, dependencies, and functionalities to identify potential enhancements. It manages user-provided code and searches for relevant codebases, performing thorough analyses at both the repository and file levels.

The Idea Innovation Agent generates and evolves ideas, balancing creativity and rigor. The idea generation process is represented by the function $G: (T, B, L) \to I$, where $B$ denotes analysis of baseline methods and $I$ is the set of generated ideas.

The Assessment Agent evaluates ideas using multidimensional scoring, considering coherence, credibility, verifiability, novelty, and alignment.
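A minimal sketch of multidimensional scoring over the five criteria named above. The 0 to 10 scale, the equal default weights, and the `IdeaScores`, `overall`, and `rank` names are illustrative assumptions; the source does not state how the dimensions are scaled or combined.

```python
from dataclasses import asdict, dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class IdeaScores:
    """Per-dimension scores for one idea (assumed scale: 0 to 10)."""
    coherence: float
    credibility: float
    verifiability: float
    novelty: float
    alignment: float


def overall(scores: IdeaScores, weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted mean of the five dimensions; equal weights by default (an assumption)."""
    values = asdict(scores)
    if weights is None:
        weights = {name: 1.0 for name in values}
    total_weight = sum(weights[name] for name in values)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(values[name] * weights[name] for name in values) / total_weight


def rank(ideas: Dict[str, IdeaScores]) -> List[str]:
    """Return idea names ordered from highest to lowest overall score."""
    return sorted(ideas, key=lambda name: overall(ideas[name]), reverse=True)
```

Keeping the per-dimension scores separate from the aggregate makes it possible to re-rank ideas under different weightings without rescoring them.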

Human-interactive feedback refines ideas based on insights and critiques, with the iterative process facilitating continuous improvement.

The Orchestration Agent coordinates all other agents, synchronizing tasks and managing data flow.

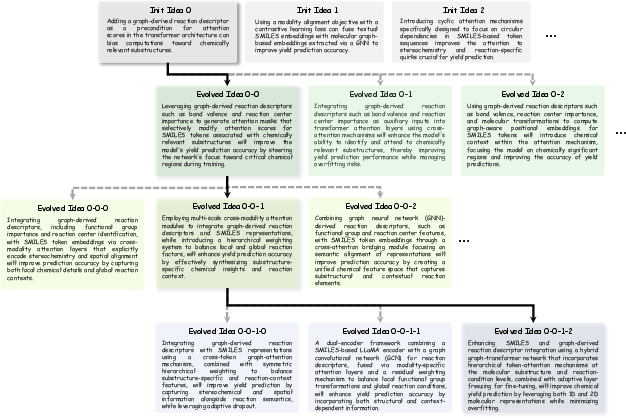

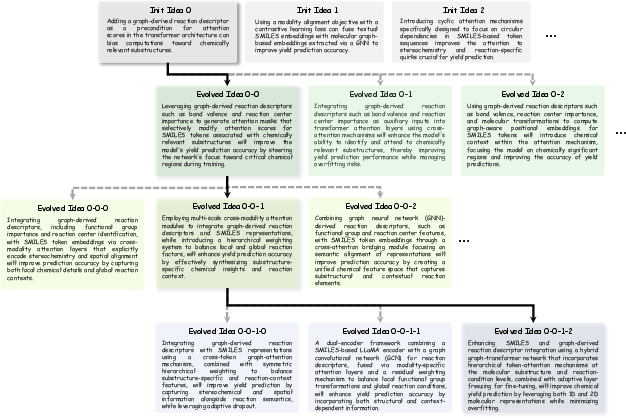

Figure 2: The idea evolution process, showcasing the iterative refinement of research concepts through multiple stages.

The Methodology Development Agent constructs basic method structures by integrating ideas with baseline code and relevant literature. The transformation function is represented as $\mathcal{T}: I \times T \times B \times L \to M$, where $I$ denotes research ideas, $T$ the task descriptions, $B$ the baseline methods, $L$ the literature corpus, and $M$ the resulting methodological framework. (The transformation is written $\mathcal{T}$ here to distinguish it from the task descriptions $T$.)

The exception-guided debugging framework converts abstract methodological text descriptions into executable implementation code. The coder module employs the Aider coding assistant for single-file tasks and the OpenHands framework for complex repository-level code.
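The general shape of an exception-guided loop can be sketched as follows: run the candidate code, and if it raises, hand the traceback to a repair step and retry. This is an illustrative sketch only, not InternAgent's implementation. `repair` is a hypothetical callable standing in for an LLM-backed coder, and `max_rounds` is an assumed retry budget.

```python
import traceback
from typing import Callable, Optional, Tuple


def debug_loop(
    code: str,
    repair: Callable[[str, str], str],
    max_rounds: int = 3,
) -> Tuple[str, Optional[str]]:
    """Execute `code`; on failure, ask `repair(code, trace)` for a new version.

    Returns (final_code, last_traceback); last_traceback is None on success.
    """
    trace: Optional[str] = None
    for attempt in range(max_rounds + 1):
        try:
            # Fresh globals per attempt so state from a failed run does not leak.
            exec(compile(code, "<candidate>", "exec"), {})
            return code, None
        except Exception:
            trace = traceback.format_exc()
        if attempt < max_rounds:
            # Hypothetical repair step: the traceback guides the next revision.
            code = repair(code, trace)
    return code, trace


def toy_repair(code: str, trace: str) -> str:
    """Toy stand-in for an LLM fix: replaces one known undefined name."""
    return code.replace("undefined_value", "41 + 1")


fixed_code, error = debug_loop("x = undefined_value", toy_repair)
```

Calling `exec` on generated code is unsafe outside a sandbox; a real system would presumably run candidates in an isolated process or container and capture their output there.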

Experimental Validation and Results

InternAgent has been validated across 12 scientific research tasks, demonstrating its versatility and effectiveness. The tasks span multiple modalities, including science, time series, natural language, image, and point cloud.

The experiments indicate that, thanks to the self-evolving idea generation process, InternAgent generates stronger ideas in each domain and implements them automatically. For example, in Reaction Yield Prediction, methods proposed by InternAgent substantially outperform those proposed by Dolphin (+3.6 on max performance).

The paper reports runtime statistics for all 12 tasks, covering training costs (GPU hours) and monetary costs for both the idea generation stage (self-evolving idea generation and idea-to-methodology) and the code execution and debugging stage. Generating one idea costs about \$0.6 with GPT-4o, which the authors consider cost-efficient.

The Survey Agent searches for domain-related papers, automatically selects the most relevant literature to read, and extracts task-related information. In deep research mode, it additionally searches for literature on the specific technical terms used in the generated ideas.

The results show that InternAgent improves both the performance and the stability of the results, which further reflects the quality of its ideas and code implementations.

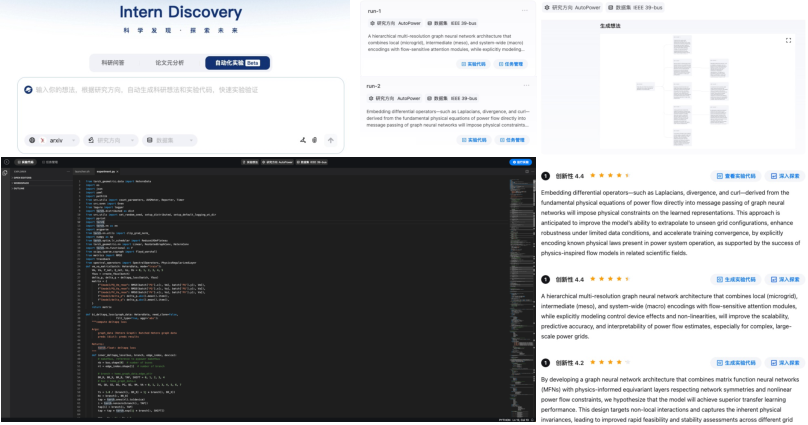

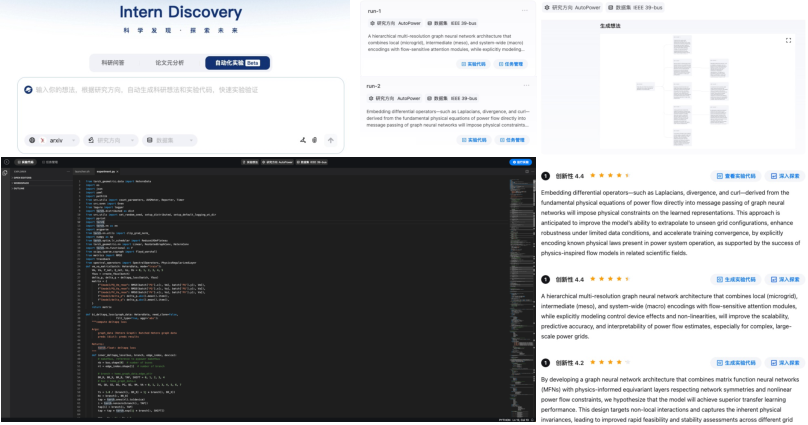

Figure 3: The InternAgent software platform, highlighting its various functionalities and user interfaces for scientific research tasks.

Comparison with Existing Systems

InternAgent is compared with existing auto-research systems such as Dolphin (Yuan et al., 7 Jan 2025) and AI-Scientist-V2 (Yamada et al., 10 Apr 2025).

InternAgent achieves a higher performance improvement rate than Dolphin. This is attributed to the idea-to-methodology feature, which makes high-level ideas concrete and progressively integrates submodules into the baseline code.

InternAgent also supports repo-level tasks such as AutoPCDet, AutoVLM, and AutoTPPR, and outperforms the respective baselines on these tasks. For example, on Auto2DSeg, the InternAgent pipeline improves the DeepLabV3Plus baseline (Chen et al., 2018) from 78.80\% to 81.0\%. This is attributed to the detailed methodology, the code comprehension achieved by the Code Review Agent, and the auto-exploration ability of the coder agent.

Human evaluations of the ideas generated by InternAgent and AI-Scientist-V2 (Yamada et al., 10 Apr 2025) across various research tasks show InternAgent outperforming AI-Scientist-V2 in all aspects, especially in overall rating and soundness.

Implications and Future Directions

The development of InternAgent represents a significant step toward automating scientific research. Key technical challenges that need to be addressed in the future include:

- Knowledge Retrieval: Establishing connections and relationships between papers.

- Knowledge Understanding and Representation: Utilizing VLM/LLM to accurately analyze relevant academic papers.

- Agent Capability Enhancement: Improving the ability of AI systems to autonomously perform complex tasks in scientific research.

- Scientific Discovery-related Benchmark Construction: Evaluating the value that an idea can bring, rather than simply evaluating its novelty.

Conclusion

InternAgent is a promising framework for automating scientific research across diverse domains. By integrating self-evolving idea generation, human-interactive feedback, idea-to-methodology construction, and multi-round experiment planning and execution, InternAgent facilitates the entire research cycle from hypothesis generation to verification. The framework's modular design and extensive experimental validation demonstrate its potential to accelerate scientific discovery and reduce the dependence on human effort in scientific research.