- The paper presents Facial Basis, a data-driven coding system that models facial expressions as additive combinations of localized components.

- It employs 3DMM fitting and sparse dictionary learning to decouple facial movements from pose and identity, ensuring robust analysis.

- The study reports better performance in predicting autism than automated FACS coding, highlighting potential clinical applications.

Beyond FACS: Data-driven Facial Expression Dictionaries, with Application to Predicting Autism

The paper "Beyond FACS: Data-driven Facial Expression Dictionaries, with Application to Predicting Autism" introduces a facial expression coding system termed "Facial Basis," which aims to provide a comprehensive, data-driven alternative to the traditional Facial Action Coding System (FACS). The authors report advantages over automated FACS coding systems, particularly in predicting clinical conditions such as autism spectrum disorder (ASD).

Introduction

The Facial Action Coding System (FACS) has long been the gold standard for analyzing facial expressions to infer emotional and psychological states. However, manual FACS coding is labor-intensive, and automated FACS coding has limited accuracy, which has prompted the exploration of new methods. The paper addresses these issues with a data-driven approach that uses unsupervised learning to create facial expression dictionaries composed of localized and interpretable units.

Expression Coding System: Facial Basis

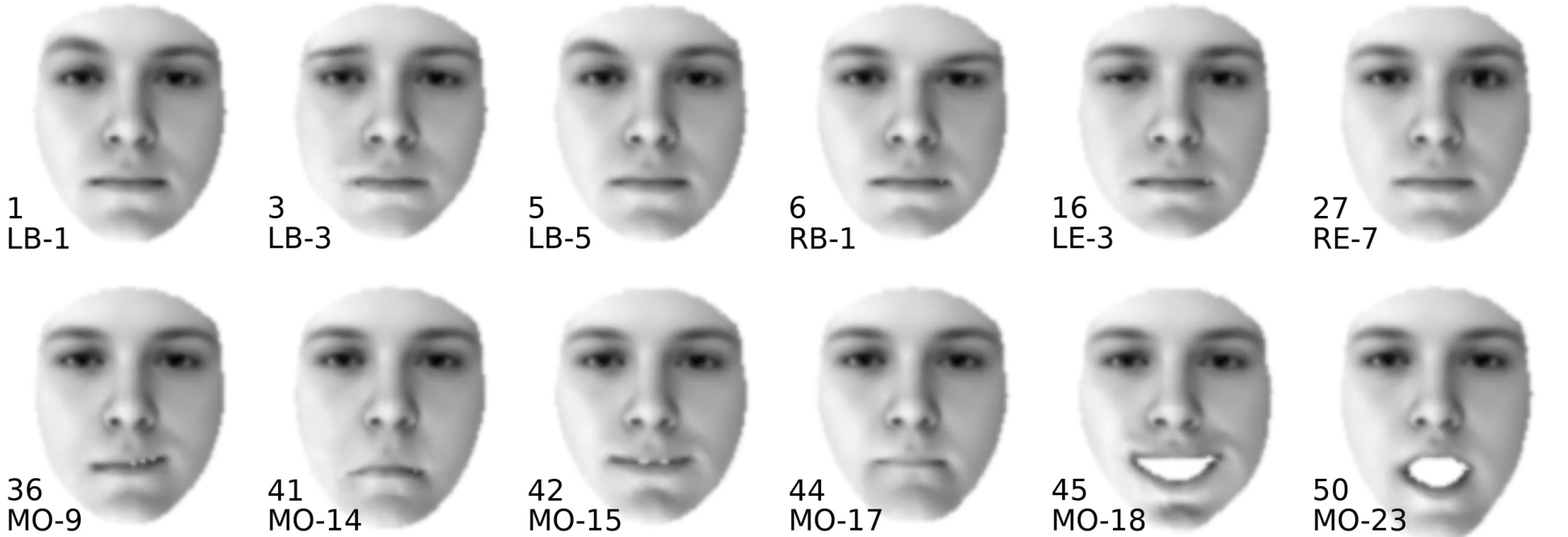

The "Facial Basis" coding system is designed to model facial expressions as linear combinations of localized components, termed Basis Units (BUs). These units correspond to distinct 3D facial movements and are learned through sparse dictionary learning techniques. Unlike FACS action units (AUs), which may overlap and exhibit non-additive behaviors, the BUs provide a streamlined, additive representation, permitting robust encoding of all observable movements without relying on pre-defined AUs.
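
To make the additive property concrete, the following is a minimal sketch of how an expression could be synthesized from Basis Units. It is not the paper's code: the function name `compose_expression`, the two toy units, and the four-coordinate "face" are all hypothetical, chosen only to show that activating two units together gives the sum of their separate displacements.

```python
def compose_expression(neutral, basis_units, coefficients):
    """Return neutral + sum_k coefficients[k] * basis_units[k]."""
    shape = list(neutral)
    for unit, coeff in zip(basis_units, coefficients):
        for j, delta in enumerate(unit):
            shape[j] += coeff * delta
    return shape

# Hypothetical toy face with 4 coordinates: two "brow" and two "mouth" values.
neutral = [0.0, 0.0, 0.0, 0.0]
brow_raise = [1.0, 1.0, 0.0, 0.0]    # localized: touches only the brow coordinates
mouth_open = [0.0, 0.0, -1.0, -1.0]  # localized: touches only the mouth coordinates
units = [brow_raise, mouth_open]

both = compose_expression(neutral, units, [0.5, 2.0])
only_brow = compose_expression(neutral, units, [0.5, 0.0])
only_mouth = compose_expression(neutral, units, [0.0, 2.0])

# Additivity: the combined expression equals the sum of the individual ones.
summed = [b + m for b, m in zip(only_brow, only_mouth)]
print(both, summed)
```

Because each toy unit affects a disjoint set of coordinates, the coefficients can be read independently, which is the kind of interpretability the additive, localized design is meant to provide.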

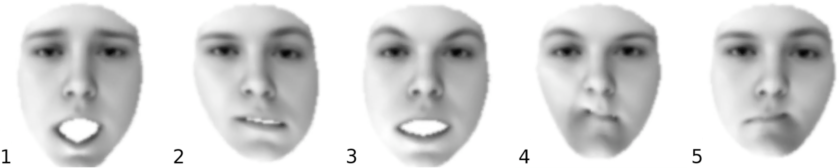

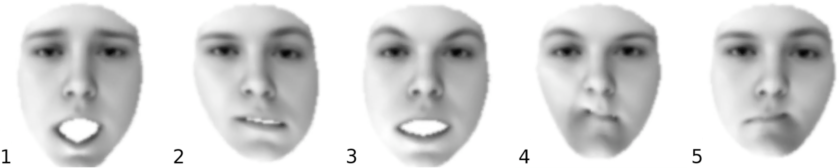

Figure 1: The first five components of the expression model used for 3DMM fitting [cao13].

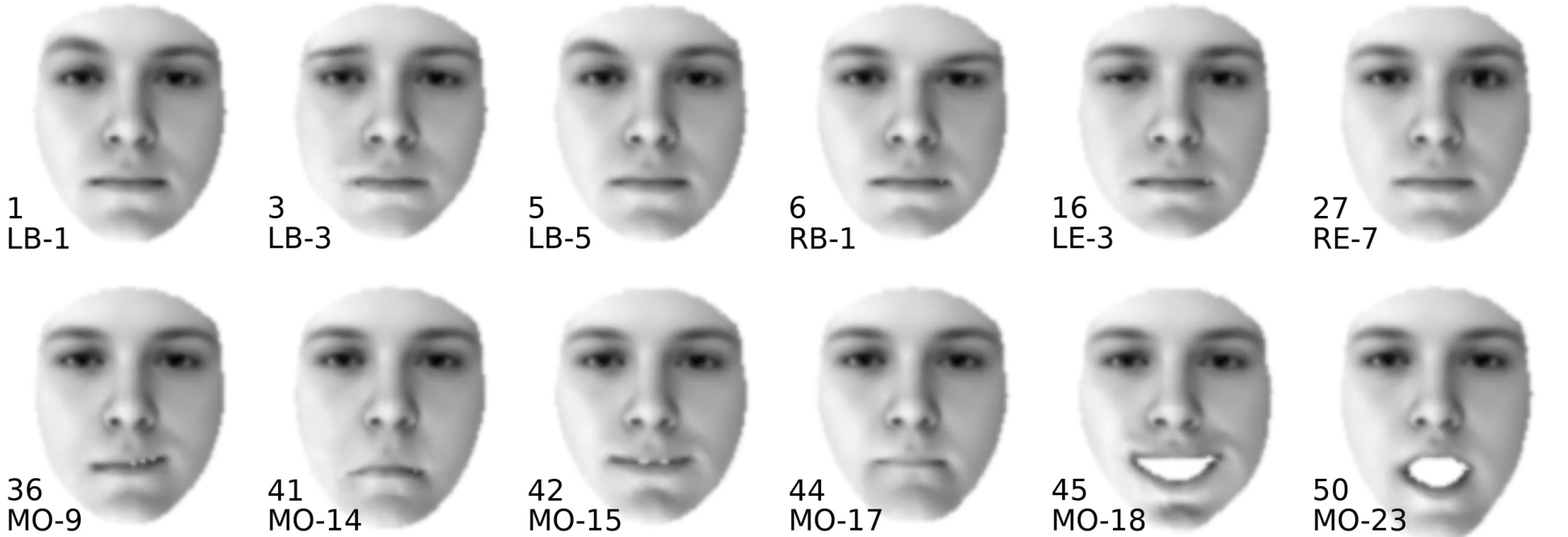

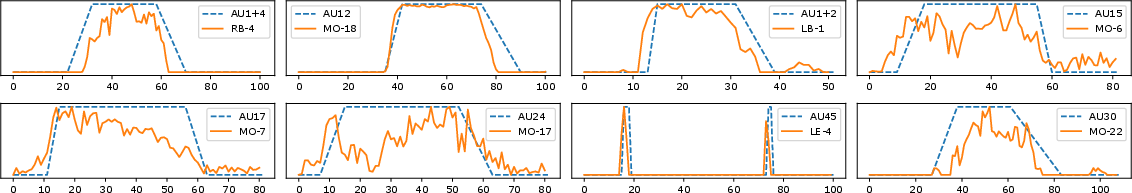

Figure 2: The expression encoded by some Facial Basis Units (BUs).

Methodology

3DMM Fitting

The approach begins with 3D Morphable Model (3DMM) fitting to reconstruct the 3D face shape in target images. This model decouples facial expressions from head pose and identity, ensuring unbiased expression analysis even under varying poses.
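
The decoupling idea can be illustrated with a small sketch. It assumes that the fitting step has already estimated the head pose (a rotation `R` and translation `t`) and the person's neutral shape, which is the hard part and is not shown here. All names and the three-vertex toy face are hypothetical. The sketch only shows that, once pose and identity are removed, the same expression observed under two different head poses yields the same displacement.

```python
import math

def yaw_rotation(angle_rad):
    """Rotation matrix for a head turn (yaw) about the vertical y-axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def apply_pose(R, t, points):
    """Map canonical-frame points into the camera frame: R @ p + t."""
    return [[sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
            for p in points]

def remove_pose(R, t, points):
    """Invert the rigid transform: R^T @ (p - t)."""
    return [[sum(R[j][i] * (p[j] - t[j]) for j in range(3)) for i in range(3)]
            for p in points]

def expression_offsets(observed, R, t, neutral_shape):
    """Per-vertex expression displacement in the pose-free canonical frame."""
    canonical = remove_pose(R, t, observed)
    return [[c - n for c, n in zip(cp, npt)]
            for cp, npt in zip(canonical, neutral_shape)]

# Hypothetical 3-vertex face: this person's neutral shape (mean + identity).
neutral_shape = [[-1.0, 1.0, 0.0], [1.0, 1.0, 0.0], [0.0, -1.0, 0.2]]
# One expression (the chin vertex drops by 0.3), to be seen under two poses.
true_offsets = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, -0.3, 0.0]]
expressive = [[n + d for n, d in zip(npt, dpt)]
              for npt, dpt in zip(neutral_shape, true_offsets)]

frontal = (yaw_rotation(0.0), [0.0, 0.0, 5.0])
turned = (yaw_rotation(math.radians(30)), [0.4, -0.1, 5.0])

# Recover the expression from each view; both should match true_offsets.
recovered = [expression_offsets(apply_pose(R, t, expressive), R, t, neutral_shape)
             for R, t in (frontal, turned)]
```

In a real pipeline the pose and identity estimates are noisy, so the recovered displacements would agree only approximately across poses.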

Localized Basis Learning

To learn the BUs, a sparse dictionary is constructed from extensive datasets of facial expressions captured from a variety of scenarios. This unsupervised process minimizes manual annotation requirements and captures a wide spectrum of expression components, ensuring robustness and adaptability.
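
As an illustration of what sparse dictionary learning involves, here is a minimal generic sketch, not the paper's algorithm. It assumes the common formulation that minimizes squared reconstruction error plus an L1 penalty on the coefficients, and it alternates an ISTA step on the codes with a projected gradient step on the units. The function names, the penalty weight `lam`, the initialization from the first samples, and the four-dimensional toy data (standing in for 3D mesh displacements) are all assumptions made for the example.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def soft_threshold(v, t):
    """Proximal operator of the L1 norm: shrink v toward zero by t."""
    if v > t:
        return v - t
    if v < -t:
        return v + t
    return 0.0

def reconstruct(code, D):
    """Additive synthesis of one sample: sum_k code[k] * D[k]."""
    return [sum(c * unit[j] for c, unit in zip(code, D)) for j in range(len(D[0]))]

def residuals(X, C, D):
    return [[r - x for r, x in zip(reconstruct(c, D), xs)] for xs, c in zip(X, C)]

def objective(X, C, D, lam):
    """0.5 * squared reconstruction error + lam * L1 norm of the codes."""
    err = sum(dot(r, r) for r in residuals(X, C, D))
    return 0.5 * err + lam * sum(abs(v) for c in C for v in c)

def learn_dictionary(X, n_units, lam=0.05, n_iters=100):
    # Assumption: initialise units from the first samples, scaled to unit norm.
    D = [[v / dot(x, x) ** 0.5 for v in x] for x in X[:n_units]]
    C = [[0.0] * n_units for _ in X]
    for _ in range(n_iters):
        # Sparse coding: one ISTA step per sample with a conservative step size.
        step = 1.0 / sum(dot(d, d) for d in D)
        R = residuals(X, C, D)
        C = [[soft_threshold(c[k] - step * dot(D[k], r), step * lam)
              for k in range(n_units)] for c, r in zip(C, R)]
        # Dictionary update: one projected gradient step on all units.
        energy = sum(dot(c, c) for c in C)
        if energy == 0.0:
            continue
        R = residuals(X, C, D)
        new_D = []
        for k in range(n_units):
            grad = [sum(c[k] * r[j] for c, r in zip(C, R)) for j in range(len(D[0]))]
            d = [v - g / energy for v, g in zip(D[k], grad)]
            norm = dot(d, d) ** 0.5
            # Project back onto the unit ball so the units cannot grow unboundedly.
            new_D.append([v / norm for v in d] if norm > 1.0 else d)
        D = new_D
    return D, C

# Toy data: mixtures of a "brow" pattern (first two dims) and a "mouth" pattern.
X = [[1.0, 1.0, 0.0, 0.0], [0.0, 0.0, 2.0, 2.0], [1.0, 1.0, 1.0, 1.0],
     [0.5, 0.5, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0], [2.0, 2.0, 0.5, 0.5]]
D, C = learn_dictionary(X, n_units=2)
baseline = objective(X, [[0.0, 0.0] for _ in X], D, 0.05)
print(objective(X, C, D, 0.05), "<", baseline)
```

The step sizes are deliberately conservative so that each update cannot increase the objective. A production system would more likely use an established solver and would add whatever locality or anatomical constraints the method requires.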

Optimization Strategy

The optimization framework employed constrains the BUs to adhere to facial anatomical semantics, thereby ensuring that the learned units represent plausible facial movements.

Experimental Results

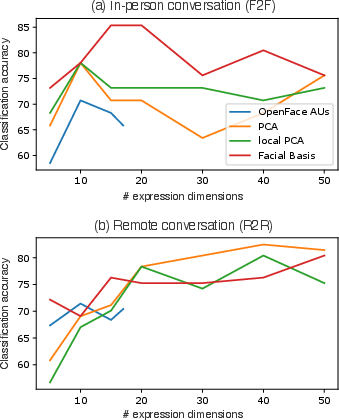

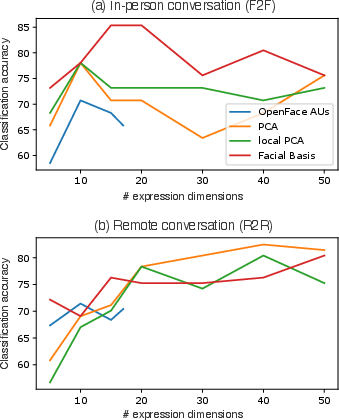

The proposed system is evaluated through comparative analysis with automated FACS coders such as OpenFace. The Facial Basis is reported to outperform these tools in classifying individuals with autism versus neurotypical controls in both in-person and remote conversational settings.

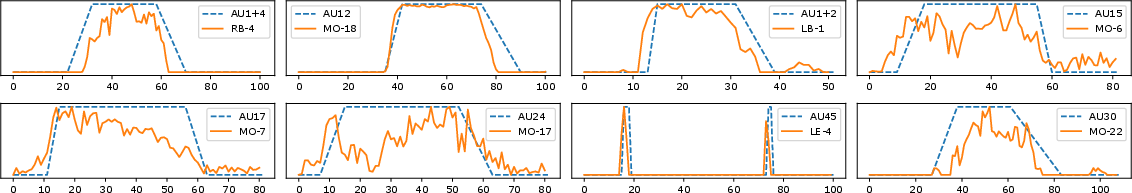

Figure 3: The AU labels (ground truth) from videos of the MMI dataset and the BU coefficients of the corresponding expression units, plotted over time. Results suggest that both the FACS AUs and the Facial BUs can be used to infer localized movements.

Figure 4: Autism (AUT) vs. neurotypical (NT) classification results of the compared coding systems with respect to the number of expression components used per coding system.

Additionally, the study reveals that the Facial Basis can capture unique asymmetric facial behaviors not detectable by AUs alone, which is pivotal for detecting subtle cues in ASD.

Clinical Implications

By reporting increased accuracy in predicting autism from facial behavior, this study points toward a role for automated facial analysis in clinical assessment. The Facial Basis holds promise for broader clinical and behavioral research applications, providing a scalable, interpretable, and comprehensive tool for understanding facial cues.

Limitations and Future Work

Despite its advantages, the Facial Basis depends on the quality and scope of its training data. Moreover, some learned units are not anatomically plausible, which highlights the need for further refinement. Future work could focus on integrating more extensive datasets and refining the learning algorithms to improve anatomical plausibility and interpretability.

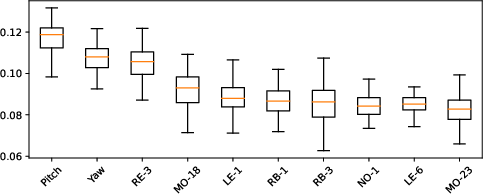

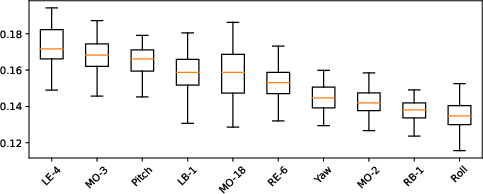

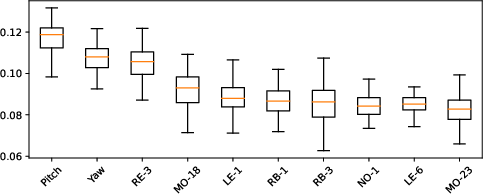

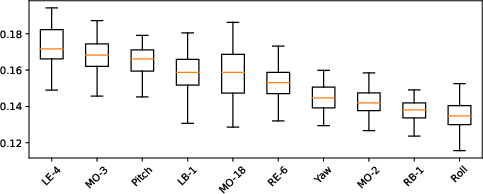

Figure 5: Average feature weights of behavioral components that include head movement (Pitch, Yaw, Roll angles) as well as Facial BUs. Top: in-person sample (F2F); bottom: remote (R2R) sample.

Conclusion

The "Beyond FACS" study exemplifies a significant innovation in facial expression analysis by constructing a data-driven, unsupervised alternative to traditional methods. The Facial Basis system not only advances autism spectrum disorder research but also sets a precedent for future endeavors in AI-driven facial expression analysis, emphasizing interpretability and comprehensive behavioral encoding.