- The paper introduces AutoCT, a framework that integrates LLMs with MCTS to autonomously generate and refine predictive features for clinical trial outcomes.

- On clinical prediction tasks, the automatically engineered features achieve ROC-AUC scores competitive with state-of-the-art models.

- The framework reduces human intervention in feature engineering, and it relies on interpretable models and SHAP values to support the reliability of its clinical trial predictions.

Automating Interpretable Clinical Trial Prediction with LLMs

The paper "AUTOCT: Automating Interpretable Clinical Trial Prediction with LLM Agents" proposes a framework that automates clinical trial outcome prediction by integrating LLMs with classical machine learning techniques. The framework aims to make these predictions more interpretable and reliable by generating and refining features autonomously, without human intervention.

Framework Overview

AUTOCT introduces an innovative integration of LLMs into a structured machine learning pipeline, optimizing the feature engineering process through Monte Carlo Tree Search (MCTS). The framework leverages LLMs for feature suggestion and evaluation, enabling a dynamic and scalable approach to feature discovery and refinement.

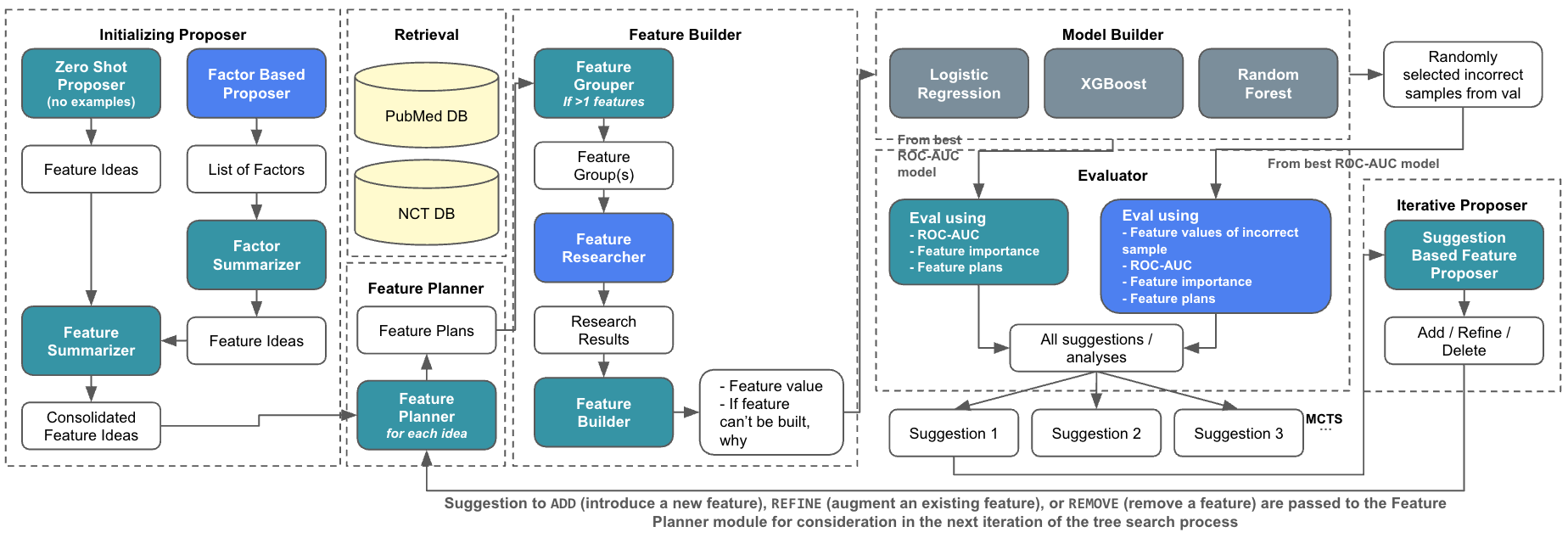

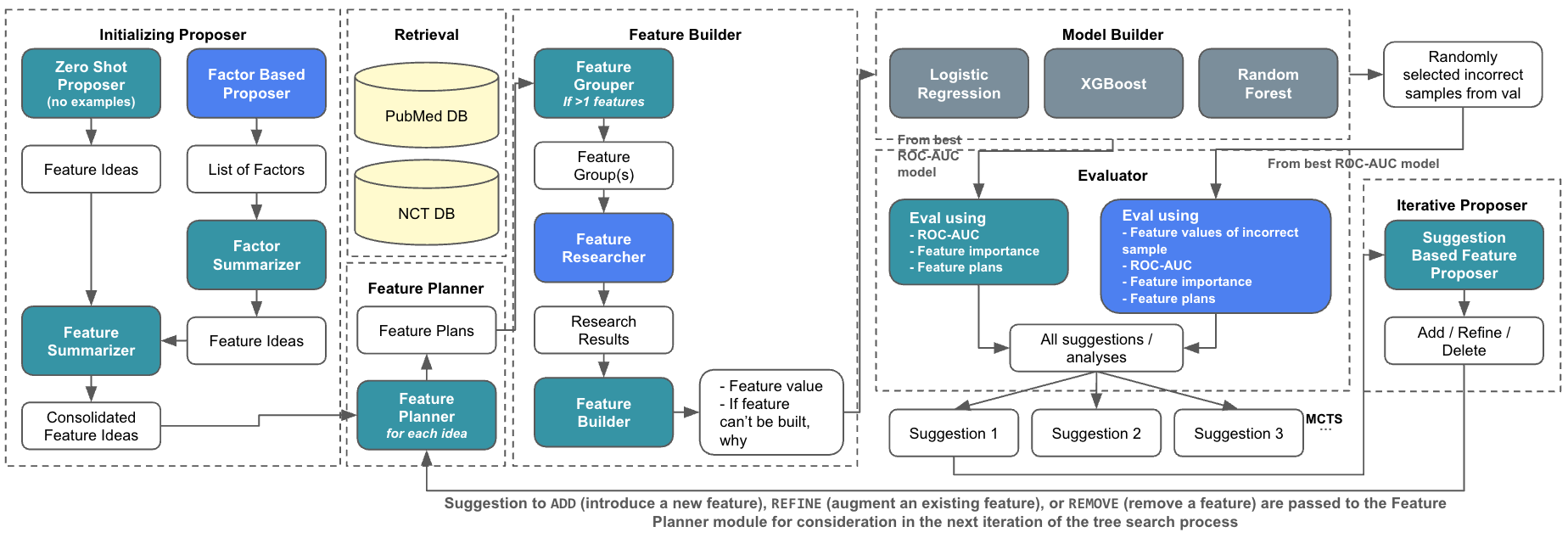

Figure 1: Overview of the AutoCT Framework. Turquoise boxes indicate components using LLMs with Chain-of-Thought (CoT) reasoning. Blue boxes represent components using LLMs with ReAct-style reasoning (interleaving reasoning and action). White boxes denote inputs and outputs, while gray boxes correspond to standard function calls without LLM involvement.

The system is composed of several components, including the Feature Proposer, Planner, Builder, and Evaluator, each fulfilling a unique role in transforming abstract feature ideas into executable entities. The Evaluator's feedback and error analysis fuel the MCTS, thereby optimizing feature sets for predictive tasks.

Methodology

Feature Generation and Optimization

At the core of AUTOCT is the use of LLMs to autonomously propose features. Using a combination of Zero-shot and Factor-based proposers, the system generates candidate feature sets. These are refined iteratively through suggestions from the Evaluator, which examines model performance (measured by ROC-AUC) together with SHAP values that attribute predictions to individual features.
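
To make the ROC-AUC metric concrete, the following is a minimal sketch of its pairwise definition: the fraction of (positive, negative) example pairs in which the positive example receives the higher score, with ties counted as half. The function name `roc_auc` and the toy data are assumptions for illustration and are not taken from the paper, whose evaluation code is not shown here.

```python
def roc_auc(labels, scores):
    """Pairwise ROC-AUC for binary labels (1 = positive, 0 = negative).

    Illustrative only: this is the O(n_pos * n_neg) textbook definition,
    not the paper's evaluation code.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative example")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half a win
    return wins / (len(pos) * len(neg))


# Toy example (hypothetical data): 3 of the 4 positive/negative pairs are ordered correctly.
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print(roc_auc(labels, scores))  # 0.75
```

In practice a library routine such as scikit-learn's `roc_auc_score` would normally be used instead; the explicit loop is only meant to show what the number measures.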

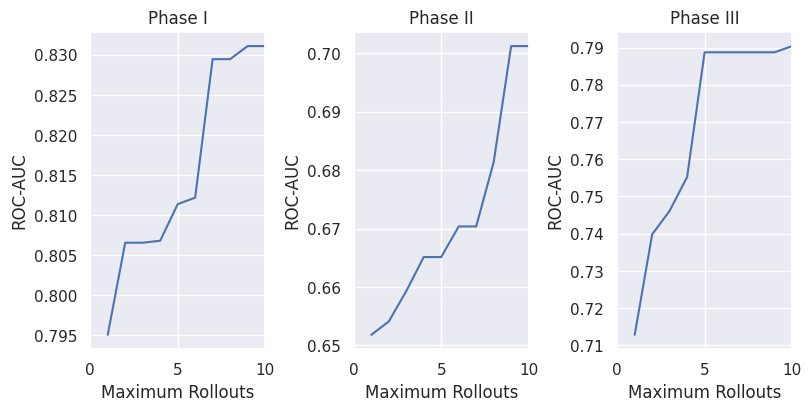

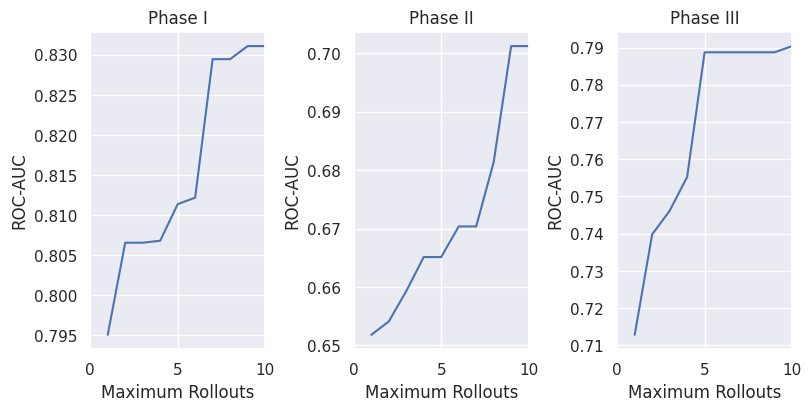

Figure 2: Average test set ROC-AUC of the top 5 models under varying maximum rollout limits in MCTS. Models are ranked by test set performance to smooth out noise and illustrate overall trends.

Monte Carlo Tree Search

MCTS is employed to explore the feature space, balancing exploration and exploitation through the UCT (Upper Confidence bounds applied to Trees) criterion. The algorithm evaluates candidate feature sets through rollouts guided by the Evaluator's recommendations. This process facilitates the discovery of robust features that improve the model's predictive performance and interpretability.
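
The UCT criterion mentioned above can be sketched as follows. This is a generic illustration of standard UCT selection and backpropagation, not the paper's implementation: the `Node` class, the choice of a feature tuple as node state, the exploration constant `c = sqrt(2)`, and the use of a score such as ROC-AUC as the reward are all assumptions made for the example.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    """A search-tree node; here it stands for a candidate feature set (assumed)."""
    features: tuple
    visits: int = 0
    total_reward: float = 0.0  # e.g. summed ROC-AUC over rollouts (assumed reward)
    children: list = field(default_factory=list)


def uct_score(child, parent_visits, c=math.sqrt(2)):
    """Mean reward (exploitation) plus a bonus for rarely visited nodes (exploration)."""
    if child.visits == 0:
        return math.inf  # always try unvisited children first
    exploit = child.total_reward / child.visits
    explore = c * math.sqrt(math.log(parent_visits) / child.visits)
    return exploit + explore


def select_child(parent, c=math.sqrt(2)):
    """Pick the child with the highest UCT score."""
    return max(parent.children, key=lambda ch: uct_score(ch, parent.visits, c))


def backpropagate(path, reward):
    """Update visit counts and rewards along the path from the root to the evaluated node."""
    for node in path:
        node.visits += 1
        node.total_reward += reward


# Hypothetical tree: one well-explored child and one less-explored child.
root = Node(("phase",), visits=10, total_reward=6.0)
a = Node(("phase", "enrollment"), visits=8, total_reward=5.6)  # mean reward 0.7
b = Node(("phase", "sponsor"), visits=2, total_reward=1.2)     # mean reward 0.6
root.children = [a, b]

print(select_child(root).features)         # exploration bonus favors the rarely visited child b
print(select_child(root, c=0.0).features)  # with no exploration, the higher mean (a) wins
```

With `c = 0` the rule reduces to greedy selection on mean reward; larger values of `c` spend more rollouts on feature sets that have been tried less often.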

Experimental Results

AUTOCT is tested on the Trial Approval Prediction task within the TrialBench benchmark dataset, achieving competitive ROC-AUC scores across various trial phases. These results indicate that it performs on par with state-of-the-art models, supporting its utility in clinical settings.

Furthermore, results on tasks such as Patient Dropout, Mortality, and Adverse Event prediction show that the framework adapts to diverse prediction scenarios and remains robust across them.

Implications and Future Directions

The AUTOCT framework advances the automation of clinical trial prediction, showing potential to reduce human labor and improve predictive accuracy while keeping models interpretable. Future research could enhance retrieval systems, optimize hyperparameters, and expand the framework to more complex pipelines.

The ability to leverage LLMs for autonomous feature engineering, coupled with the systematic exploration capabilities of MCTS, positions AUTOCT as a promising tool for high-stakes biomedical applications. Continued development could improve its efficiency, accuracy, and application scope, further bridging the gap between traditional machine learning methodologies and modern interpretability requirements.