- The paper introduces LLM-First Search (LFS), a novel method where LLMs autonomously guide both exploration and evaluation in complex search tasks.

- Unlike traditional methods such as MCTS, LFS needs no fixed heuristics or exploration constants, and in the reported experiments it uses fewer tokens on average.

- Experimental results demonstrate that LFS scales effectively with model strength, showcasing superior performance in complex reasoning and planning.

LLM-First Search: Self-Guided Exploration of the Solution Space

The paper "LLM-First Search: Self-Guided Exploration of the Solution Space" (2506.05213) introduces LLM-First Search (LFS), a novel LLM-guided recursive search method. This approach eliminates pre-defined heuristics and hyperparameters, allowing the LLM to drive both exploration and evaluation autonomously during complex search tasks such as reasoning and planning. The paper argues that existing methods such as Monte Carlo Tree Search (MCTS) are rigid and computationally expensive, and it reports experiments supporting the adaptability and efficiency of LFS.

Introduction to LLM-First Search (LFS)

LFS departs from conventional search algorithms by letting the LLM itself decide, step by step, how to balance exploration and exploitation. Whereas MCTS balances the two with a fixed exploration constant, LFS relies on the LLM's own evaluations of candidate actions to choose which paths to pursue. This is particularly useful across tasks of varying difficulty: the search adapts to context, and less manual tuning is needed.

Critical Analysis of Conventional Methods

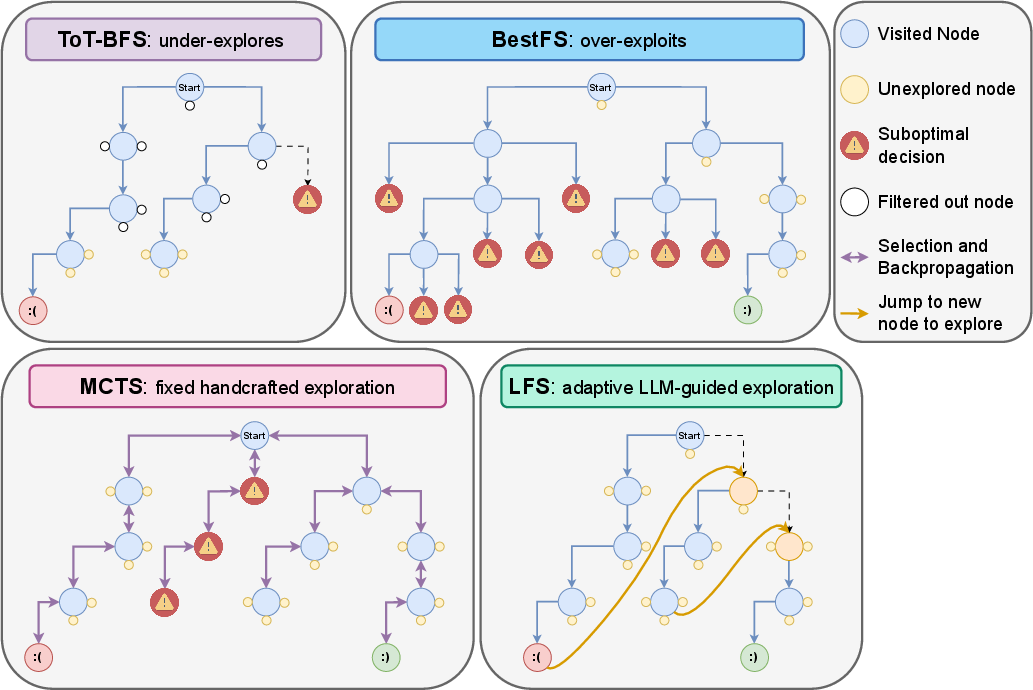

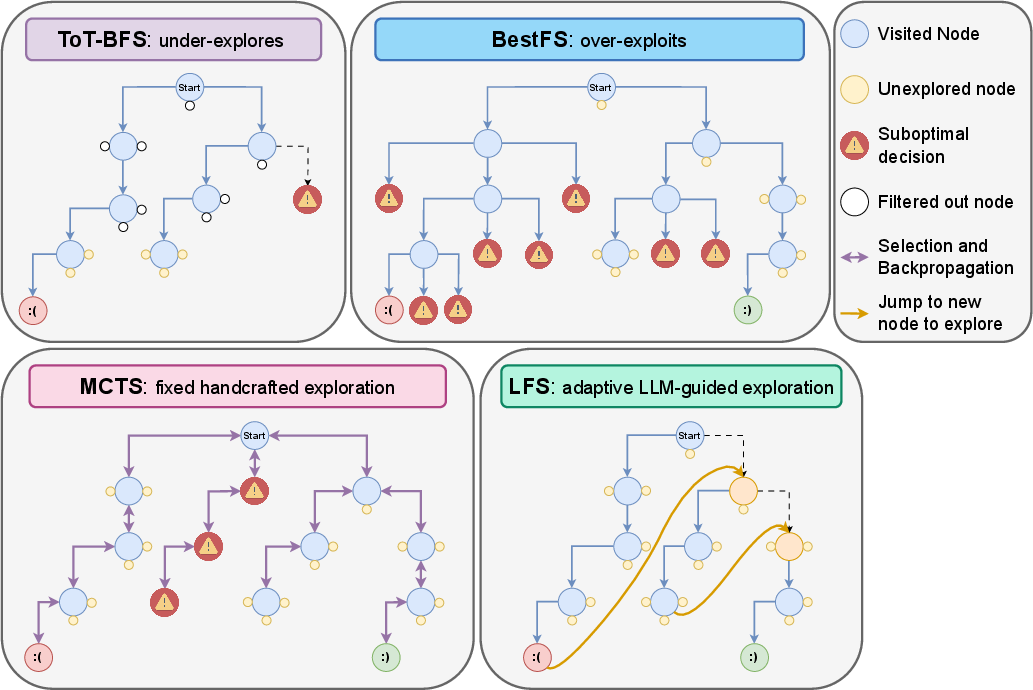

Existing search strategies such as Tree of Thoughts Breadth-First Search (ToT-BFS), Best-First Search (BestFS), and MCTS have delivered state-of-the-art results across various domains. However, their performance is often limited by sensitivity to hyperparameters and by how they handle the exploration-exploitation trade-off. For instance, the effectiveness of MCTS depends heavily on its exploration constant, which is typically task-specific and must be tuned carefully [coulom2006efficient, kocsis2006bandit]. LFS addresses these challenges by placing the LLM at the center of the search process, letting the model make these trade-offs itself rather than relying on externally tuned parameters.
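To make the role of the exploration constant concrete, here is a minimal sketch of the common UCB1-style UCT score used for child selection in MCTS: mean value plus c * sqrt(ln N_parent / n). The child statistics are made-up numbers for illustration, not results from the paper, and the function names are hypothetical. The point is only that changing `c` alone flips which child gets selected.

```python
import math

def uct_score(total_value, visits, parent_visits, c):
    """UCB1-style UCT score for one child; c is the exploration constant."""
    if visits == 0:
        return math.inf  # unvisited children are always tried first
    exploit = total_value / visits                             # mean value observed so far
    explore = c * math.sqrt(math.log(parent_visits) / visits)  # bonus for rarely visited children
    return exploit + explore

def select_child(children, parent_visits, c):
    """Return the name of the child with the highest UCT score."""
    return max(children, key=lambda name: uct_score(*children[name], parent_visits, c))

# Hypothetical statistics: name -> (total_value, visits)
children = {"a": (6.0, 10), "b": (1.0, 2)}
parent_visits = 12

print(select_child(children, parent_visits, c=0.1))  # a  (exploitation dominates)
print(select_child(children, parent_visits, c=2.0))  # b  (exploration bonus dominates)
```

The "right" value of `c` differs from task to task, which is the tuning burden the paper attributes to MCTS. LFS has no such constant; the corresponding choice is delegated to the model.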

Implementing LLM-First Search (LFS)

LFS operates within a Markov Decision Process (MDP) framework, where the LLM functions as the policy agent, continuously interacting with the environment. It performs two primary operations: Explore and Evaluate. The exploration decision involves assessing whether to continue along the current path or switch to an alternative based on the LLM’s evaluations.

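```python
def LFS_search(LLM, initial_state, max_tokens):
    """Simplified LFS loop; `LLM` is assumed to expose the methods used below."""
    current_state = initial_state
    token_count = 0
    # Keep searching until the token budget is spent.
    # Note: this simplified loop stops only on budget; a full search
    # would also stop as soon as a solved state is reached.
    while token_count < max_tokens:
        # Explore/continue decision made by the LLM itself:
        # a truthy result is read here as "switch to an alternative path".
        if LLM.evaluate_path(current_state):
            next_state = LLM.explore_alternatives(current_state)
        else:
            next_state = LLM.continue_path(current_state)
        current_state = next_state
        # Assumes token_usage() reports the tokens consumed in this iteration.
        token_count += LLM.token_usage()
    return current_state
```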

Exploration Decision

At each decision point, the LLM autonomously evaluates whether the current path continues to show promise or whether unexplored paths hold greater potential. This is accomplished without pre-defined heuristics.
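A minimal sketch of how this decision could be delegated to the model, assuming the LLM is reachable through a plain text-in/text-out callable. The prompt wording, the CONTINUE/EXPLORE reply format, and the function names are all hypothetical illustrations, not the paper's actual prompts. The code applies no numeric rule of its own; it only formats the question and parses the answer.

```python
from typing import Callable, Sequence

def build_decision_prompt(current_path: Sequence[str], alternatives: Sequence[str]) -> str:
    """Hypothetical prompt asking the model whether to continue or switch paths."""
    lines = ["Current path: " + " -> ".join(current_path), "Unexplored alternatives:"]
    lines += [f"  {i}. {alt}" for i, alt in enumerate(alternatives, start=1)]
    lines.append("Reply CONTINUE to extend the current path or EXPLORE to switch.")
    return "\n".join(lines)

def should_explore(ask_llm: Callable[[str], str],
                   current_path: Sequence[str],
                   alternatives: Sequence[str]) -> bool:
    """True if the model chooses to switch; the choice itself is left to the model."""
    if not alternatives:
        return False  # nothing to switch to, so keep extending the current path
    reply = ask_llm(build_decision_prompt(current_path, alternatives))
    # Anything other than an explicit EXPLORE is treated as CONTINUE.
    return reply.strip().upper().startswith("EXPLORE")

# Stand-in for a real model call (hypothetical): always asks to switch.
fake_llm = lambda prompt: "EXPLORE: alternative 1 reaches the target directly"
print(should_explore(fake_llm, ["25 + 5 = 30"], ["25 * 4 = 100"]))  # True
```

Treating unparseable replies as CONTINUE is one defensive choice among several; a real system might instead re-ask the model.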

Evaluate

The LLM scores each available action in the current state, and these scores determine which path forward looks most promising.
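One way to picture the Evaluate step is the sketch below. It assumes the model returns a JSON object mapping each action to a score in [0, 1], and that scored but unexplored actions are kept in a priority queue so the best one can be retrieved when the model decides to explore. The JSON reply format, the prompt, and the helper names are hypothetical, and the "model" is a fixed stub.

```python
import heapq
import itertools
import json
from typing import Callable

_tie = itertools.count()  # tie-breaker so heapq never has to compare states directly

def evaluate_and_push(ask_llm: Callable[[str], str], state: str,
                      actions: list[str], frontier: list) -> dict:
    """Ask the model to score every action from `state`, then add each to the frontier."""
    prompt = (f"State: {state}\nActions: {json.dumps(actions)}\n"
              "Return a JSON object mapping each action to a score in [0, 1].")
    scores = json.loads(ask_llm(prompt))
    for action in actions:
        score = float(scores.get(action, 0.0))  # actions the model skipped default to 0
        # heapq is a min-heap, so store the negated score to pop the best entry first.
        heapq.heappush(frontier, (-score, next(_tie), state, action))
    return scores

def pop_best(frontier: list):
    """Remove and return (score, state, action) for the highest-scored entry."""
    neg_score, _, state, action = heapq.heappop(frontier)
    return -neg_score, state, action

fake_llm = lambda prompt: '{"25 * 4": 0.9, "25 - 4": 0.2}'  # hypothetical model reply
frontier = []
evaluate_and_push(fake_llm, "numbers [25, 4], target 100", ["25 * 4", "25 - 4"], frontier)
print(pop_best(frontier))  # (0.9, 'numbers [25, 4], target 100', '25 * 4')
```

The counter in each heap entry keeps ordering stable for equal scores and avoids comparing state objects, which may not be orderable in a real implementation.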

Experimental Results

The paper reports experiments on Countdown and Sudoku problem sets using two models, GPT-4o and o3-mini. Across these tasks, LFS showed competitive performance, better computational efficiency, and better scaling with stronger models.
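For readers unfamiliar with Countdown, the sketch below checks a candidate answer. It assumes the common variant in which each given number must be used exactly once with +, -, *, and / to reach the target. The exact rules used in the paper (for example, whether intermediate results must be integers or non-negative) are not modeled here, and the function names are hypothetical. The expression is parsed with Python's `ast` module rather than `eval`, and arithmetic is done exactly with `Fraction`.

```python
import ast
from collections import Counter
from fractions import Fraction

_OPS = {ast.Add: lambda a, b: a + b, ast.Sub: lambda a, b: a - b,
        ast.Mult: lambda a, b: a * b, ast.Div: lambda a, b: a / b}

def _eval(node, used):
    """Evaluate +, -, *, / over integer literals exactly, recording each literal used."""
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        left, right = _eval(node.left, used), _eval(node.right, used)
        if isinstance(node.op, ast.Div) and right == 0:
            raise ValueError("division by zero")
        return _OPS[type(node.op)](left, right)
    if isinstance(node, ast.Constant) and type(node.value) is int:
        used.append(node.value)
        return Fraction(node.value)
    raise ValueError(f"unsupported syntax: {ast.dump(node)}")

def check_countdown(numbers, target, expression):
    """True if `expression` uses each given number exactly once and equals `target`."""
    used = []
    try:
        value = _eval(ast.parse(expression, mode="eval").body, used)
    except (SyntaxError, ValueError):
        return False
    return Counter(used) == Counter(numbers) and value == target

print(check_countdown([25, 4, 2], 50, "25 * 4 / 2"))  # True
print(check_countdown([25, 4, 2], 50, "25 * 2"))      # False: 4 is unused
```

A checker like this only scores final answers; the search methods compared in the paper differ in how they explore the space of partial expressions before reaching one.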

Figure 1: Illustrative comparison of search strategies. This figure visualises how different methods expand the search tree during reasoning.

Key Findings

- Enhanced Performance on Complex Tasks: LFS outperformed the other search methods, and its advantage grew as task difficulty increased.

- Improved Efficiency: Comparative analysis showed LFS requiring fewer tokens on average (Figures 5-8, 20-21).

- Scalability with Model Strength: LFS gained more than the alternative methods when run with the stronger model (o3-mini rather than GPT-4o).

Discussion

The LFS framework changes how LLMs are used to search for solutions to complex reasoning tasks. By having the model handle both exploration and evaluation, it places search decisions directly with the model and avoids the static rules and manual configuration that conventional algorithms require.

Practical Implications

LFS may be most useful in applications where adaptability and resource efficiency are critical, such as real-time decision systems and autonomous agents operating in dynamic environments. Because it requires no task-specific tuning and scales across task complexities and computational budgets, it may also suit deployment in diverse settings.

Conclusion

The proposed LLM-First Search method represents a significant improvement in leveraging LLMs for complex search tasks. It provides a more dynamic, flexible, and efficient framework, thereby addressing limitations inherent in conventional search strategies. Future work should explore extending LFS to additional reasoning domains and integrating this approach into wider AI applications where adaptable exploration strategies could enhance problem-solving efficacy.

In summary, LFS gives the LLM a central role in structured search by integrating exploration and evaluation, which may open the way to more general and scalable approaches. Although the experiments cover only Countdown and Sudoku, the method is not tied to these domains, which suggests promising directions for future AI research and development.