- The paper demonstrates that activation probes can detect high-stakes interactions in LLMs with performance comparable to medium-sized prompted or finetuned LLM baselines.

- It details probe architectures such as Attention, Softmax, and Mean, showing robust AUROC scores across diverse out-of-distribution datasets.

- It proposes cascade systems that combine probes with larger models to optimize cost efficiency and monitoring accuracy in real-world applications.

Summary and Implications of Detecting High-Stakes Interactions with Activation Probes

Introduction

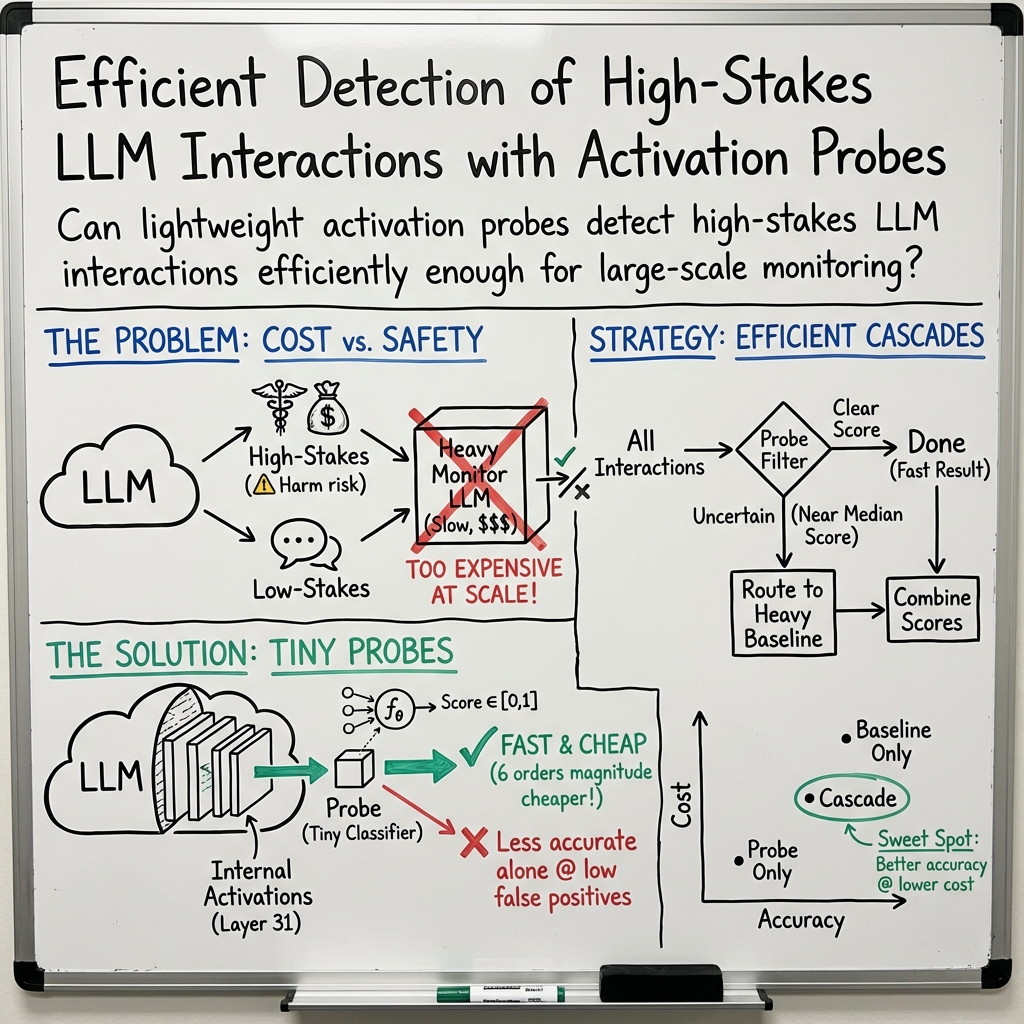

The paper "Detecting High-Stakes Interactions with Activation Probes" (2506.10805) explores activation probes as a way to identify high-stakes interactions, that is, interactions in which significant harm could result if an LLM misbehaves. The probes examine activations within the LLM, which makes them an efficient way to monitor interactions for risky behavior. The paper reports substantial cost savings while maintaining generalization and performance comparable to more computationally expensive approaches such as prompting or finetuning medium-sized LLMs.

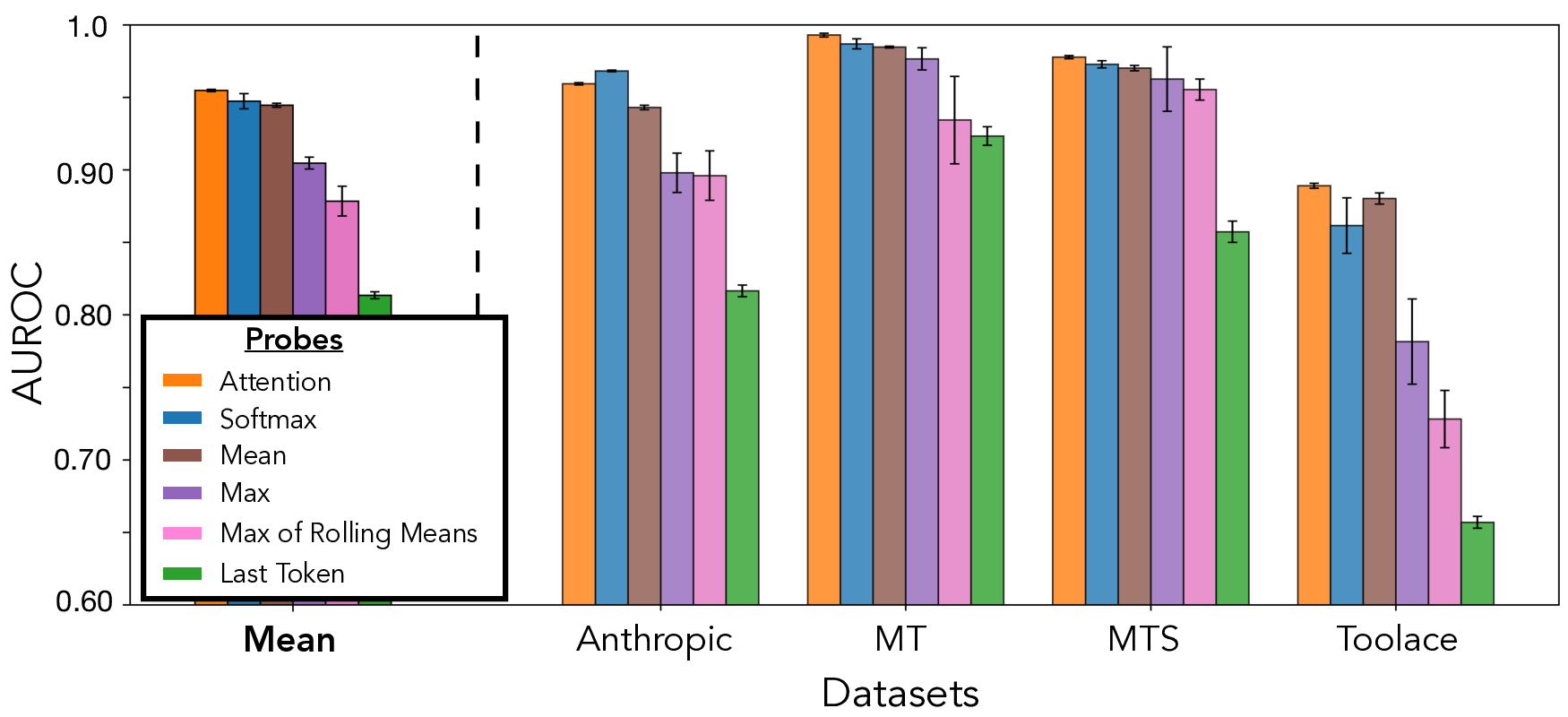

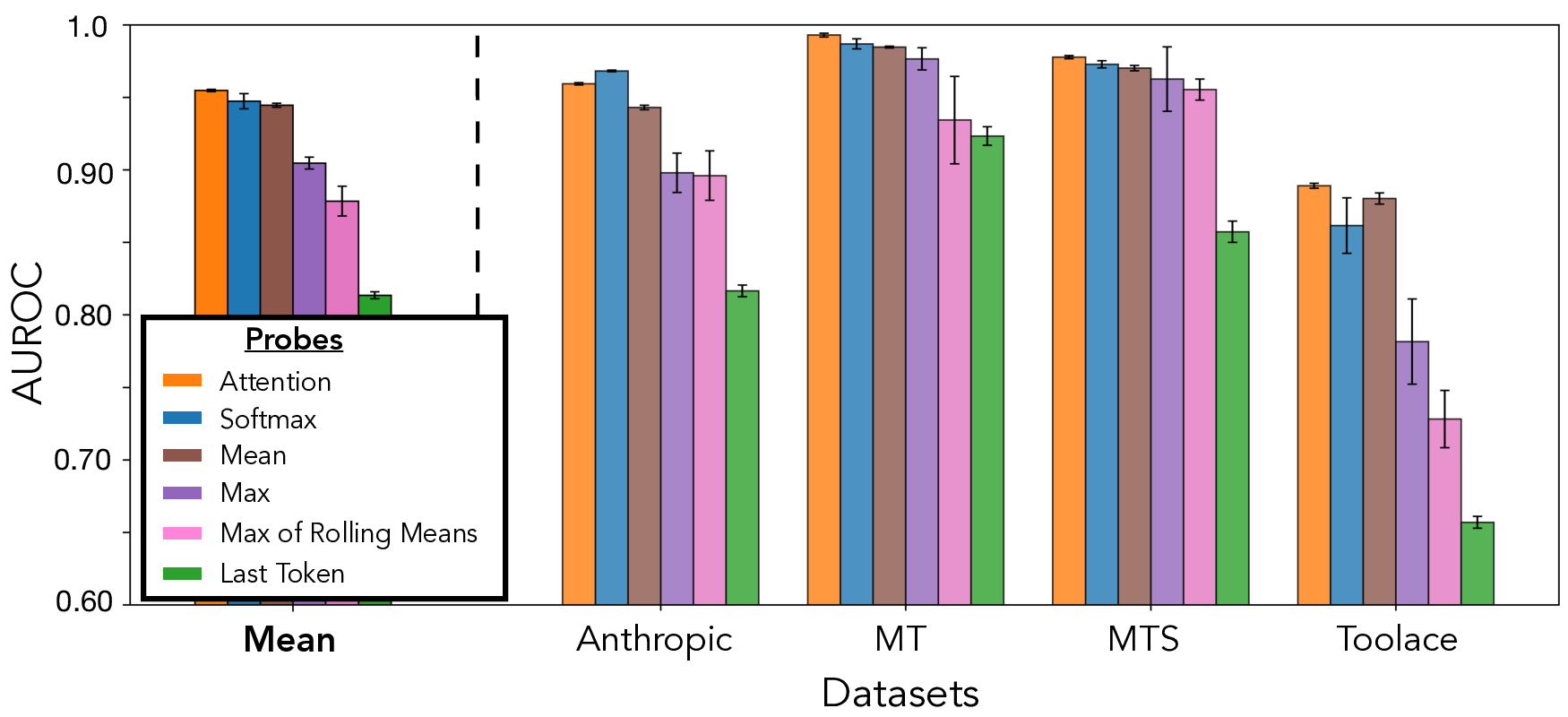

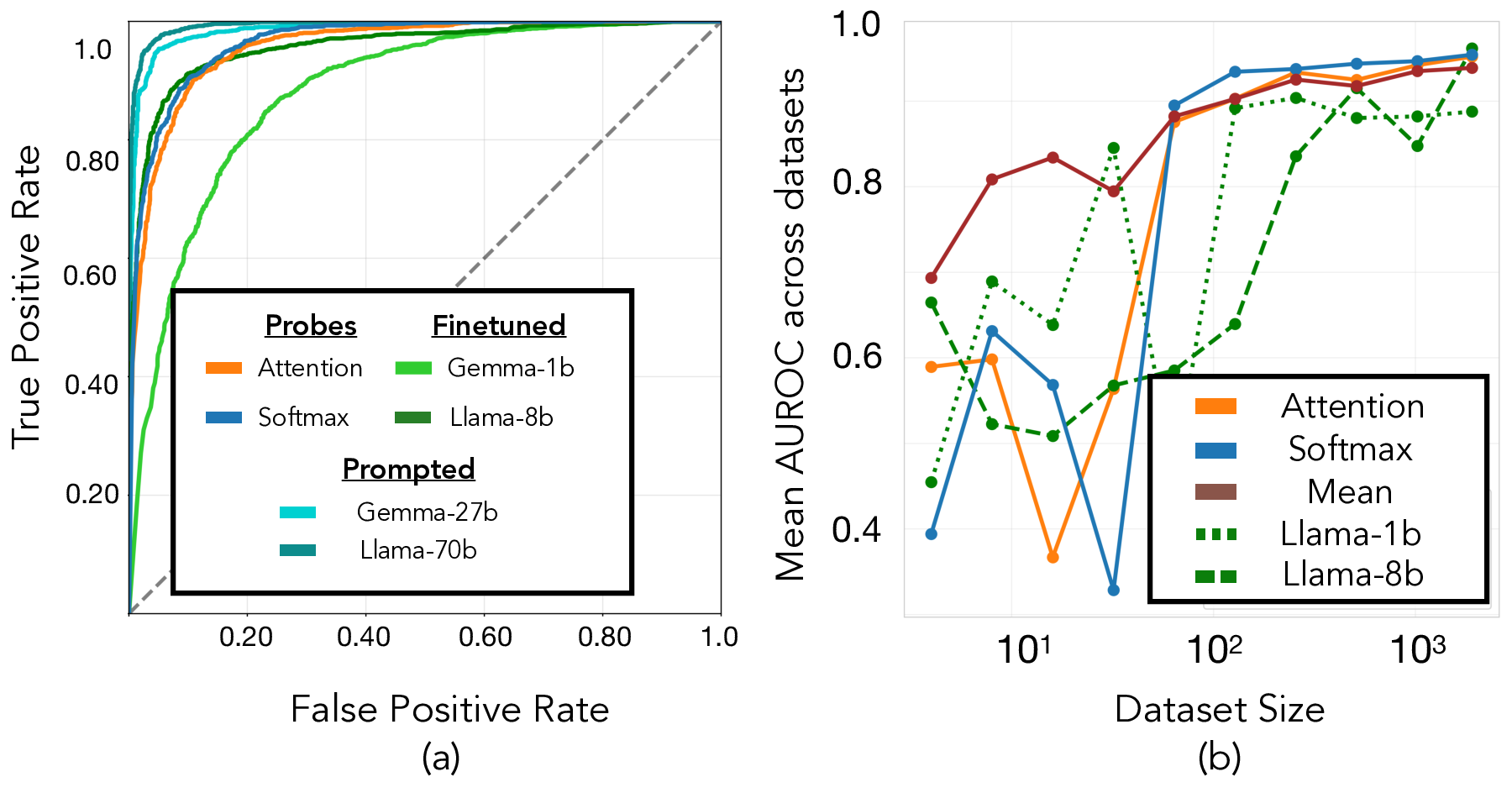

The paper evaluates various probe architectures, demonstrating their effectiveness in monitoring potential high-stakes situations. Among the examined designs, Attention, Softmax, and Mean probes emerged as the most effective, as illustrated in the performance comparison in Figure 1.

Figure 1: Attention probes are overall most effective. We compare the performance of various common probe approaches. Each probe is trained on the training split of our synthetic dataset and evaluated on all OOD evaluation datasets.

These probes achieved AUROC scores comparable to those of medium-sized prompted and finetuned baselines, and they generalized across diverse out-of-distribution datasets. Given their low computational demands, this suggests probes can serve as a first line of monitoring defense.
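To make the difference between the Mean, Softmax, and Attention probes concrete, here is a minimal NumPy sketch of how each one could turn per-token activations into a single score. The exact formulations, the function names, and the parameters (`w`, `query`, `value`, `temperature`) are illustrative assumptions rather than the paper's implementation; in practice the weights would be learned.

```python
import numpy as np

def _softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def mean_probe(acts, w, b=0.0):
    """Average the token activations, then apply one linear readout.

    acts: array of shape (num_tokens, hidden_dim); w: shape (hidden_dim,).
    """
    return float(acts.mean(axis=0) @ w + b)

def softmax_probe(acts, w, b=0.0, temperature=1.0):
    """Score every token, then take a softmax-weighted average of the scores.

    Assumption: weights come from the token scores themselves, so
    high-scoring tokens dominate more than they would under a plain mean.
    """
    token_scores = acts @ w + b
    weights = _softmax(token_scores / temperature)
    return float(weights @ token_scores)

def attention_probe(acts, query, value, b=0.0):
    """Weight tokens with a query vector, read out with a separate value vector.

    Assumption: a single attention head with hypothetical `query` and
    `value` parameters of shape (hidden_dim,).
    """
    weights = _softmax(acts @ query)
    return float(weights @ (acts @ value) + b)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    acts = rng.normal(size=(5, 8))  # stand-in for real LLM activations
    w = rng.normal(size=8)
    print(mean_probe(acts, w), softmax_probe(acts, w),
          attention_probe(acts, rng.normal(size=8), w))
```

Under these assumptions, the Mean probe treats all tokens equally, while the Softmax and Attention variants let a few salient tokens drive the score.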

Comparative Analysis With Baselines

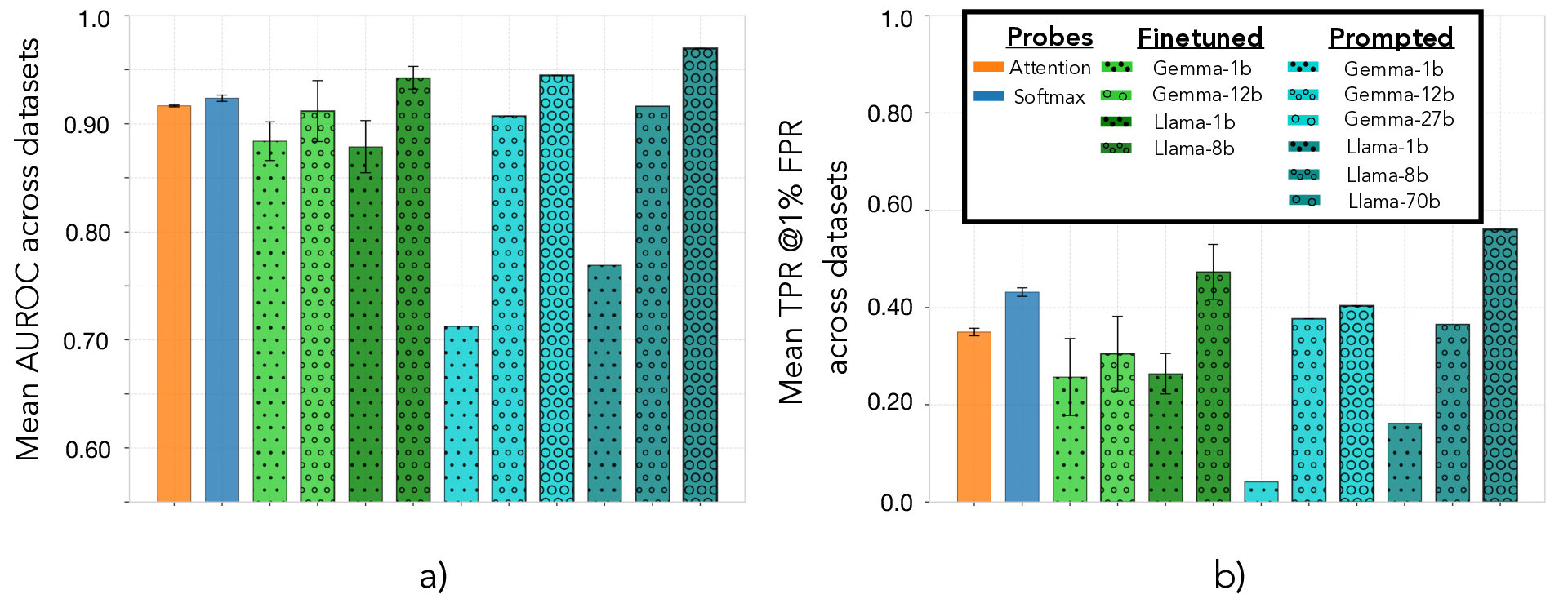

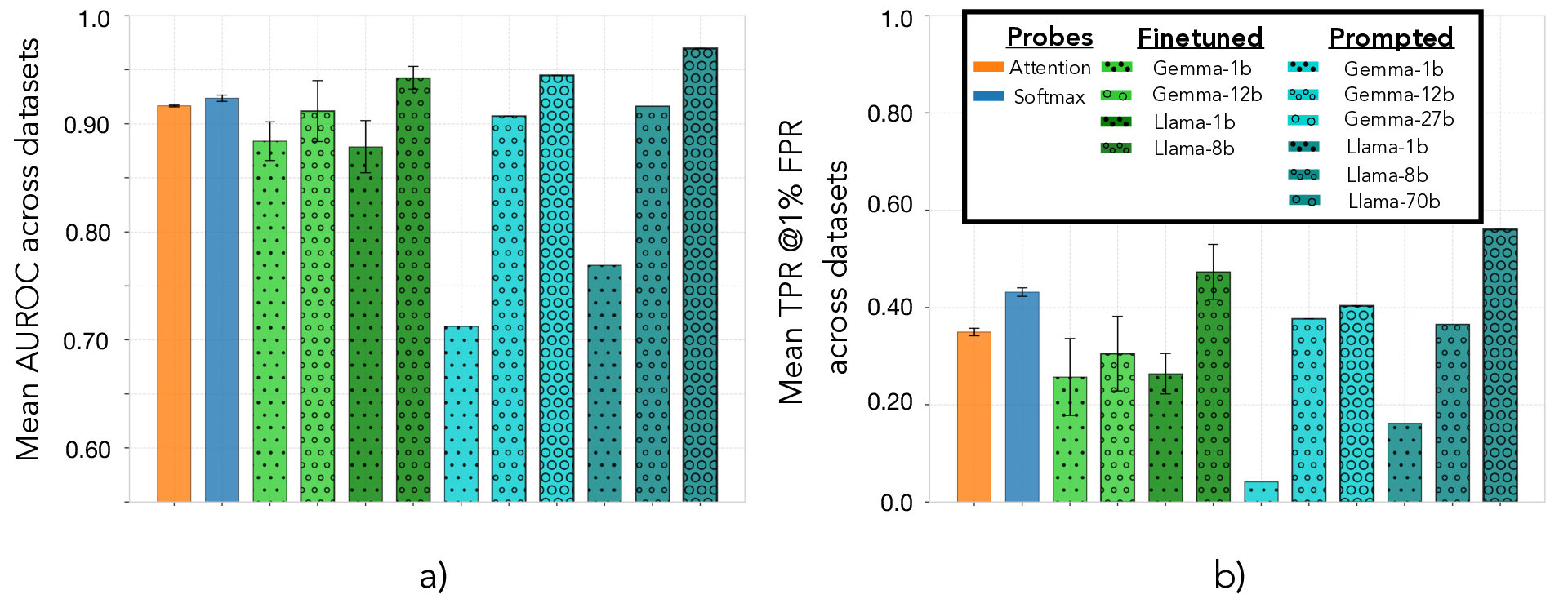

The paper compares probe methods against finetuned and prompted baselines. Probes outperform the smaller baseline models, and they do so at a significantly lower computational cost.

Figure 2: Probes achieve a Mean AUROC across test datasets comparable to the medium (8b-12b) finetuned baselines.

Attention probes, for instance, achieved performance close to that of medium-sized LLMs while offering substantial computational savings. These results support the use of activation probes as a cost-effective option for real-time, large-scale monitoring of LLM interactions.
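The mean AUROC across test datasets used in this comparison can be illustrated with a short sketch. The pairwise definition of AUROC below is standard; the dataset names and the `mean_auroc` helper are hypothetical, and the numbers are toy values, not results from the paper.

```python
import numpy as np

def auroc(labels, scores):
    """AUROC as the probability that a random positive outscores a random
    negative, counting ties as one half (pairwise Mann-Whitney form)."""
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[labels], scores[~labels]
    if len(pos) == 0 or len(neg) == 0:
        raise ValueError("AUROC needs at least one positive and one negative")
    diff = pos[:, None] - neg[None, :]  # every positive vs. every negative
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return float(wins / (len(pos) * len(neg)))

def mean_auroc(datasets):
    """Unweighted mean of per-dataset AUROC.

    datasets: dict mapping a dataset name to a (labels, scores) pair.
    """
    return float(np.mean([auroc(y, s) for y, s in datasets.values()]))

if __name__ == "__main__":
    toy = {
        "dataset_a": ([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]),
        "dataset_b": ([0, 1], [0.2, 0.9]),
    }
    print(mean_auroc(toy))
```

The pairwise form is quadratic in dataset size, which is fine for a sketch; a rank-based implementation would be preferable for large evaluation sets.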

Cascade Systems for Efficient Monitoring

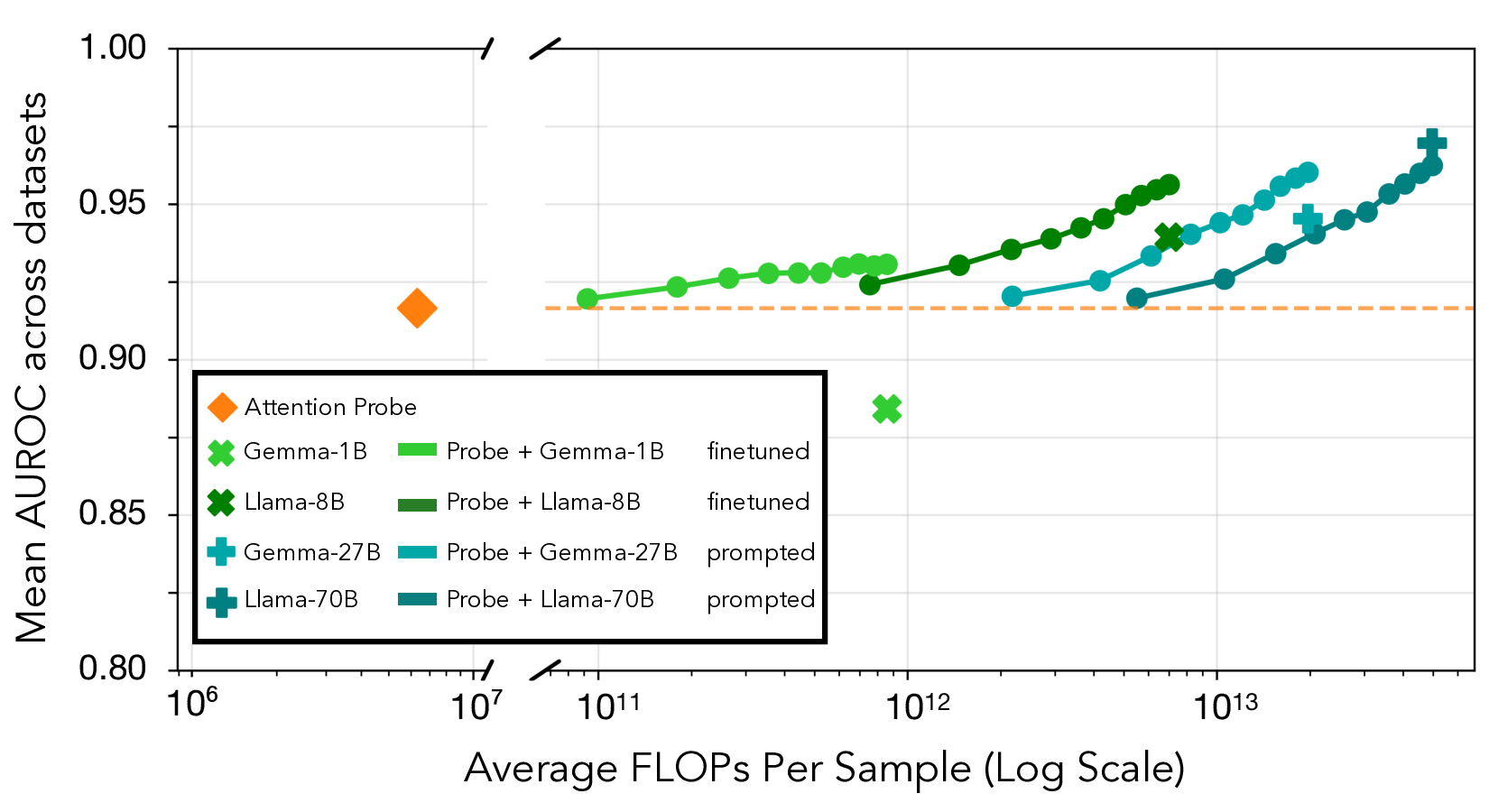

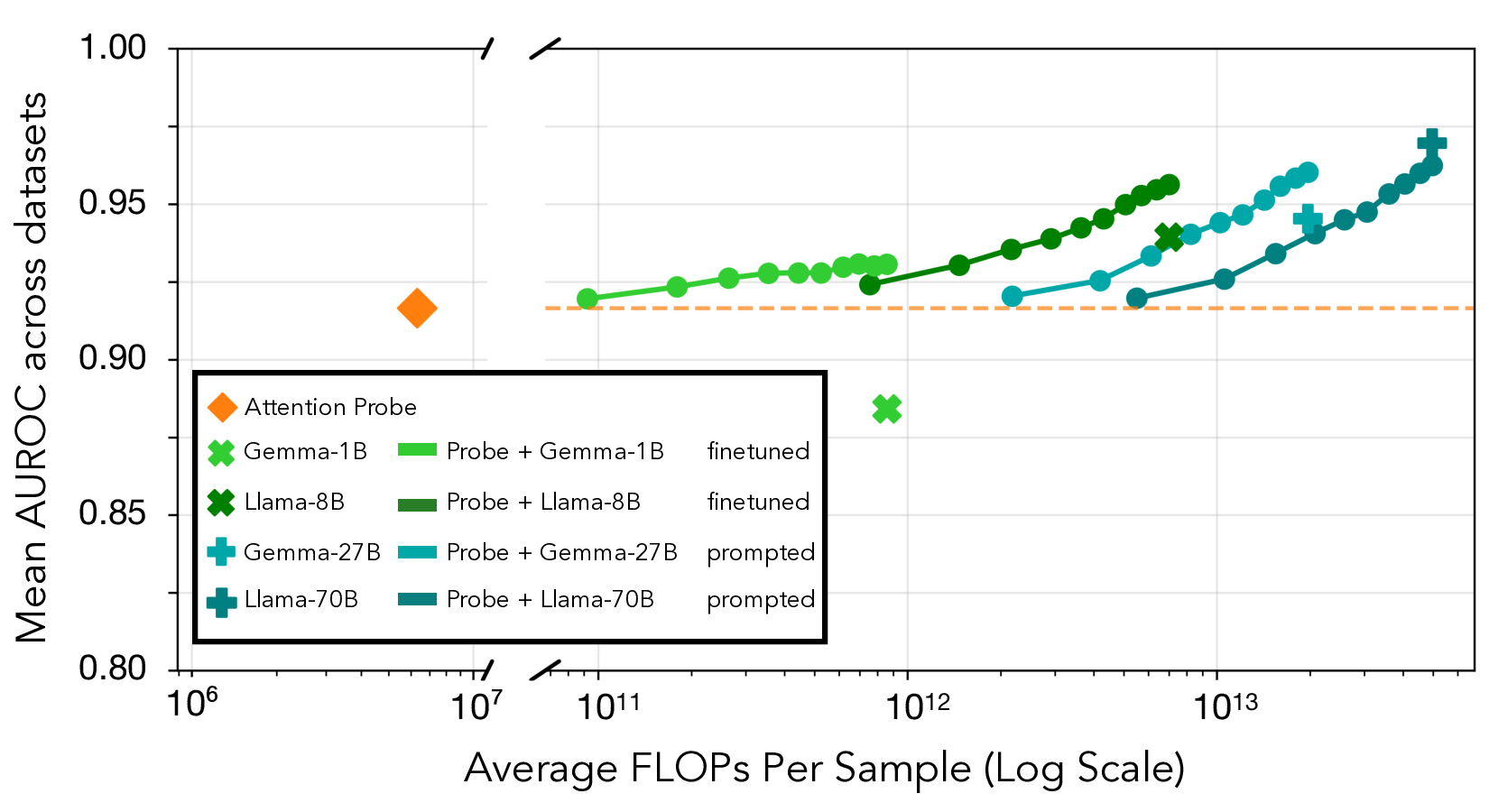

Using activation probes within cascade systems shows promise for combining the efficiency of probes with the precision of larger LLM monitors. In this hybrid setup, the probe scores every sample, and samples with uncertain scores are passed to a more expensive model for further analysis, which optimizes both performance and cost.

Figure 3: Combining probe and LLM baselines outperforms either method alone for all but the most expensive baseline (Llama-70b).

The analysis shows that cascades outperform either standalone method for all but the most expensive baseline (Llama-70b). Pairing probes with computationally intensive baselines in this way balances thorough monitoring against manageable computational overhead.
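The cascade idea can be sketched in a few lines. This is a simplified illustration, not the paper's procedure: the uncertainty band (`low`, `high`), the choice to replace the probe score with the expensive monitor's score, and the `expensive_monitor` callable are all assumptions made for the example.

```python
from typing import Callable, List, Sequence, Tuple

def cascade_scores(
    samples: Sequence[str],
    probe_scores: Sequence[float],
    expensive_monitor: Callable[[str], float],
    low: float = 0.3,
    high: float = 0.7,
) -> Tuple[List[float], List[int]]:
    """Keep confident probe scores; escalate uncertain ones.

    A probe score inside [low, high] is treated as uncertain, and the
    sample is re-scored by the (hypothetical) expensive monitor.
    Returns the final scores and the indices that were escalated.
    """
    final: List[float] = []
    escalated: List[int] = []
    for i, (sample, score) in enumerate(zip(samples, probe_scores)):
        if low <= score <= high:
            final.append(expensive_monitor(sample))  # costly call, used sparingly
            escalated.append(i)
        else:
            final.append(score)  # cheap probe score is trusted as-is
    return final, escalated

if __name__ == "__main__":
    # Stand-in for a prompted or finetuned LLM monitor.
    def dummy_monitor(text: str) -> float:
        return 0.9 if "urgent" in text else 0.1

    texts = ["hello", "urgent medical question", "weather today"]
    scores, sent = cascade_scores(texts, [0.05, 0.5, 0.6], dummy_monitor)
    print(scores, sent)
```

Widening the band sends more traffic to the expensive monitor, so the band width acts as the knob that trades cost against accuracy.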

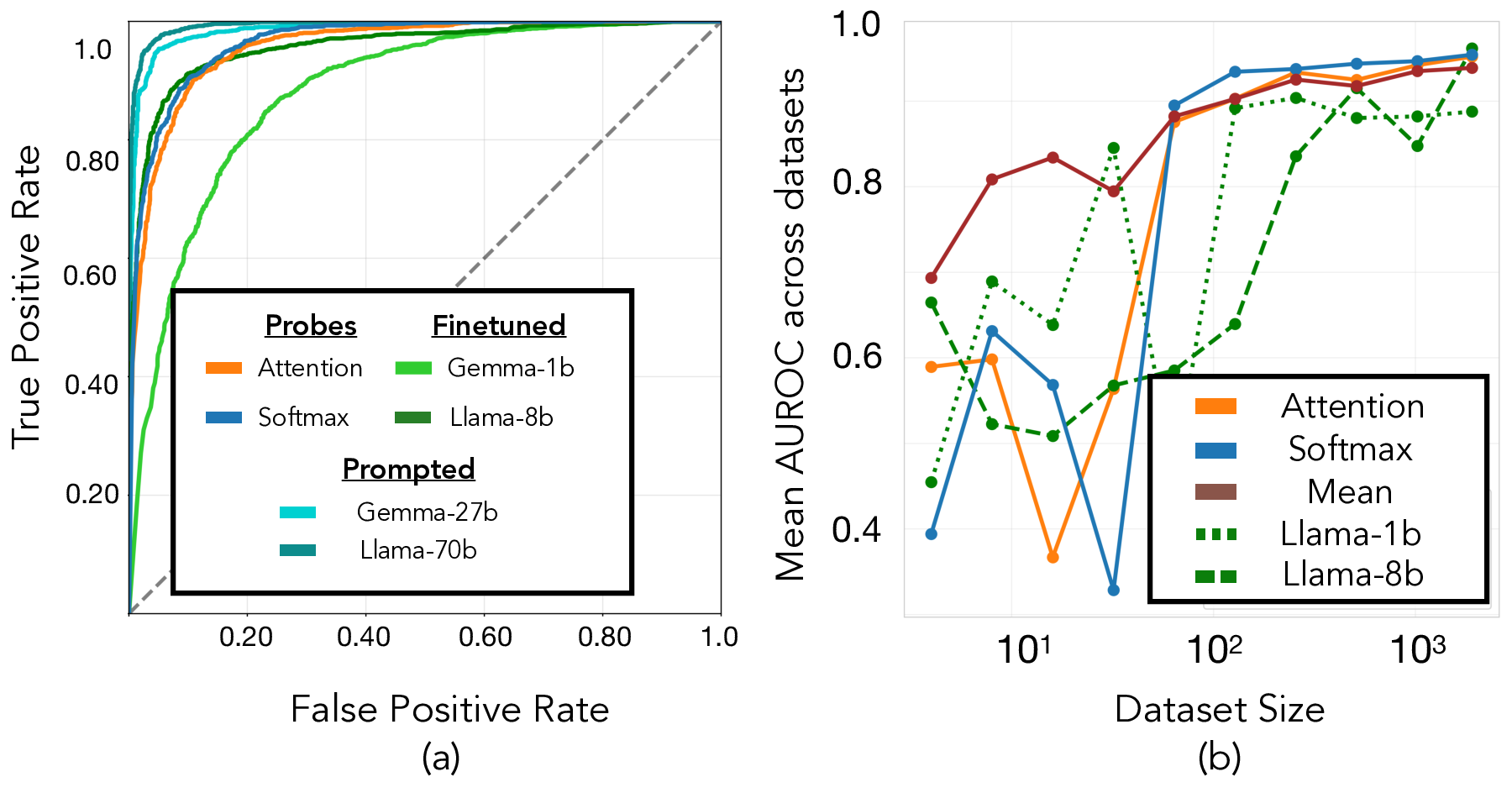

Training on Real-world Data

The paper underscores the importance of training probes on deployment samples: doing so adapts them to a specific context and improves performance beyond what synthetic data alone achieves. This gives monitoring systems a practical way to adapt to changing real-world environments and stay responsive to domain-specific requirements.
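One simple way to picture this is to refit a linear probe on synthetic features combined with a small set of labeled deployment features. The sketch below assumes each sample has already been reduced to a fixed-size feature vector (for example, mean activations) and uses plain gradient-descent logistic regression; the function names and hyperparameters are hypothetical, and the paper's actual training setup may differ.

```python
import numpy as np

def fit_linear_probe(features, labels, lr=0.1, steps=500, l2=1e-3):
    """Fit a logistic-regression probe with batch gradient descent."""
    X = np.asarray(features, dtype=float)
    y = np.asarray(labels, dtype=float)
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))  # predicted probabilities
        grad = p - y                             # gradient of the log loss
        w -= lr * (X.T @ grad / len(y) + l2 * w)
        b -= lr * grad.mean()
    return w, b

def fit_with_deployment_data(synth_X, synth_y, deploy_X, deploy_y, **kwargs):
    """Train on synthetic data plus labeled deployment samples.

    Assumption: the two sources are simply concatenated with equal weight.
    """
    X = np.concatenate([np.asarray(synth_X, dtype=float),
                        np.asarray(deploy_X, dtype=float)])
    y = np.concatenate([np.asarray(synth_y, dtype=float),
                        np.asarray(deploy_y, dtype=float)])
    return fit_linear_probe(X, y, **kwargs)
```

In this toy setup, a feature direction that only varies in deployment data receives zero weight when the probe is trained on synthetic data alone, which is one intuition for why deployment samples can help.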

Figure 4: The prompted baseline shows a strong performance vs. probes on the Anthropic HH dataset.

Conclusion

Overall, the paper positions activation probes as a cost-effective and efficient solution for monitoring high-stakes interactions in LLMs. Their scalability and integration potential in cascade systems offer practical implications for AI safety, aligning monitoring practices with the evolving nature of LLM applications. Future research should continue to explore hybrid monitoring architectures and expand the scope of probe applications to enhance their utility and adaptability.

Future Work

The study encourages further exploration into improving the robustness of probe-based monitoring systems, particularly how they can seamlessly integrate into existing AI deployments to handle complex risk assessments autonomously. Additionally, understanding the interplay between diverse probes and methodologies in layered monitoring contexts will strengthen their contribution to safe AI deployment practices.