- The paper introduces LiveCodeBench Pro, a benchmark that uses Olympiad medalists' expert annotations to rigorously evaluate LLMs in algorithmic programming.

- It employs Bayesian MAP Elo estimation and detailed error analysis to compare model performance with human grandmasters across diverse problem difficulties.

- Findings reveal that while LLMs excel in template-based tasks, they struggle with higher-order reasoning and observation-dependent synthesis, highlighting key limitations.

Assessing LLM Reasoning in Competitive Programming: An Expert Review of "LiveCodeBench Pro: How Do Olympiad Medalists Judge LLMs in Competitive Programming?"

Benchmark Design and Methodology

"LiveCodeBench Pro: How Do Olympiad Medalists Judge LLMs in Competitive Programming?" (2506.11928) introduces a fundamental advance in the evaluation of LLMs on algorithmic reasoning by assembling LiveCodeBench Pro, a large-scale, continuously updated, and meticulously curated benchmark. In contrast to earlier efforts, the benchmark prioritizes maximum fidelity regarding contamination, difficulty, and taxonomy by exclusively sampling fresh problems from elite competitions (Codeforces, ICPC, IOI), systematically reducing the risk of overlap with LLM training data and retrieval corpora. Each problem is professionally annotated by Olympiad medalists, including multi-axis tags (algorithmic area, cognitive focus, and problem properties), which enables a more granular decomposition of LLM strengths and deficits. This expert annotation pipeline mitigates the noise and mislabeling inherent in crowd-sourced tagging and enables empirical measurement of LLM capabilities at the abstraction level at which top human competitors reason.

The evaluation methodology employs Bayesian MAP Elo estimation for direct human-LMM comparability, correcting for variable problem difficulty and contest conditions. Additionally, the study performs a rigorous line-by-line diagnosis of model error provenance by coarsely matching failed LLM submissions against similarly rated humans, and dissecting the respective rates of logic, implementation, and sample-case errors with expert adjudication.

Quantitative Results Across Models and Problem Taxonomy

A salient finding is the pronounced gap between SOTA LLMs and human grandmasters. The best baseline, o4-mini-high, achieves a pass@1 of 53% on Medium-tier problems and 0% on Hard-tier problems—with all tested models consistently failing to solve any problem in the hard set (Codeforces Elo > 3000). This is despite tool augmentation and multi-attempt (pass@k) settings.

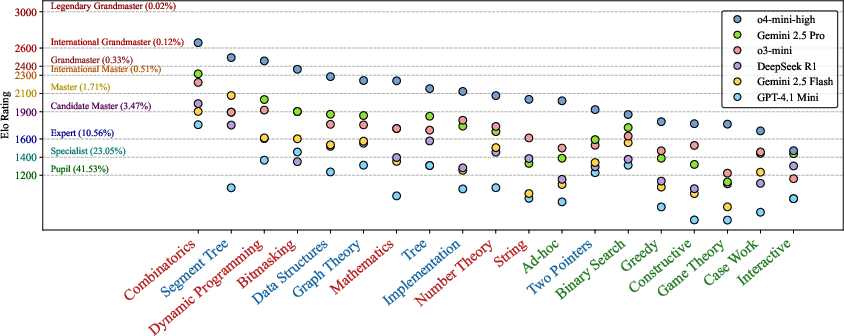

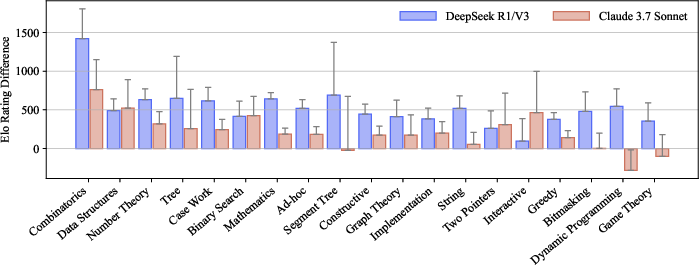

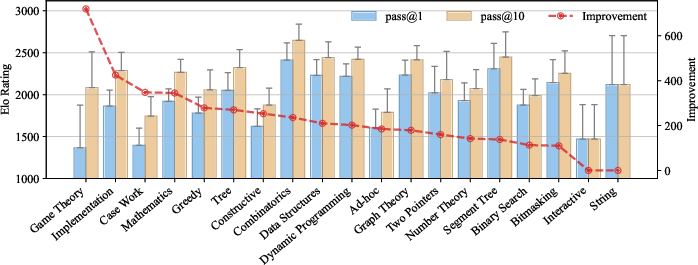

Figure 1: Tag-wise model performance, showing LLM strengths on knowledge/logic-heavy problems and weaknesses on observation-heavy categories.

Performance profiling by tag reveals strong alignment with human intuition: LLMs are competent on knowledge-heavy (e.g., segment tree, implementation, graph, data structure) and logic-heavy (dynamic programming, combinatorics, mathematics, binary search) categories but exhibit catastrophic degradation on observation-heavy topics including game theory, greedy, ad-hoc, case work, and interactive problems. Notably, the only categories where SOTA models match or exceed the grandmaster tier are combinatorics, segment tree, and DP—the most template- and knowledge-centric axes of the taxonomy.

Moreover, while LLMs perform relatively consistently on textbook, implementation-focused tasks, their Elo advantage persists primarily on tags with a substantial corpus of verbatim pattern occurrences in training (as are routinely seen in contests and tutorials). Humans, in contrast, maintain more uniform performance across tags, reflecting robust generalization and deeper conceptual flexibility.

Forensic Failure Analysis and Human-Model Comparison

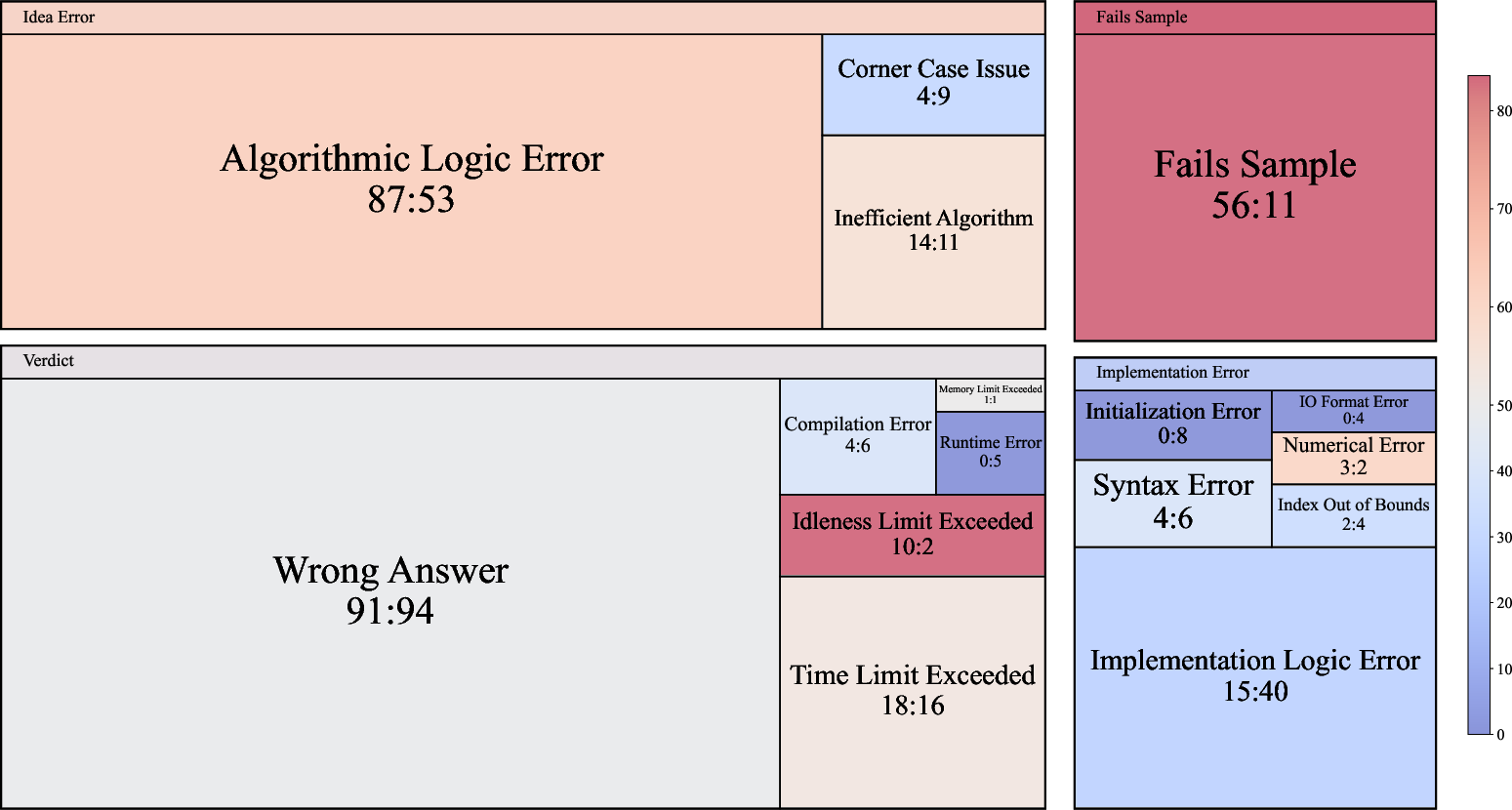

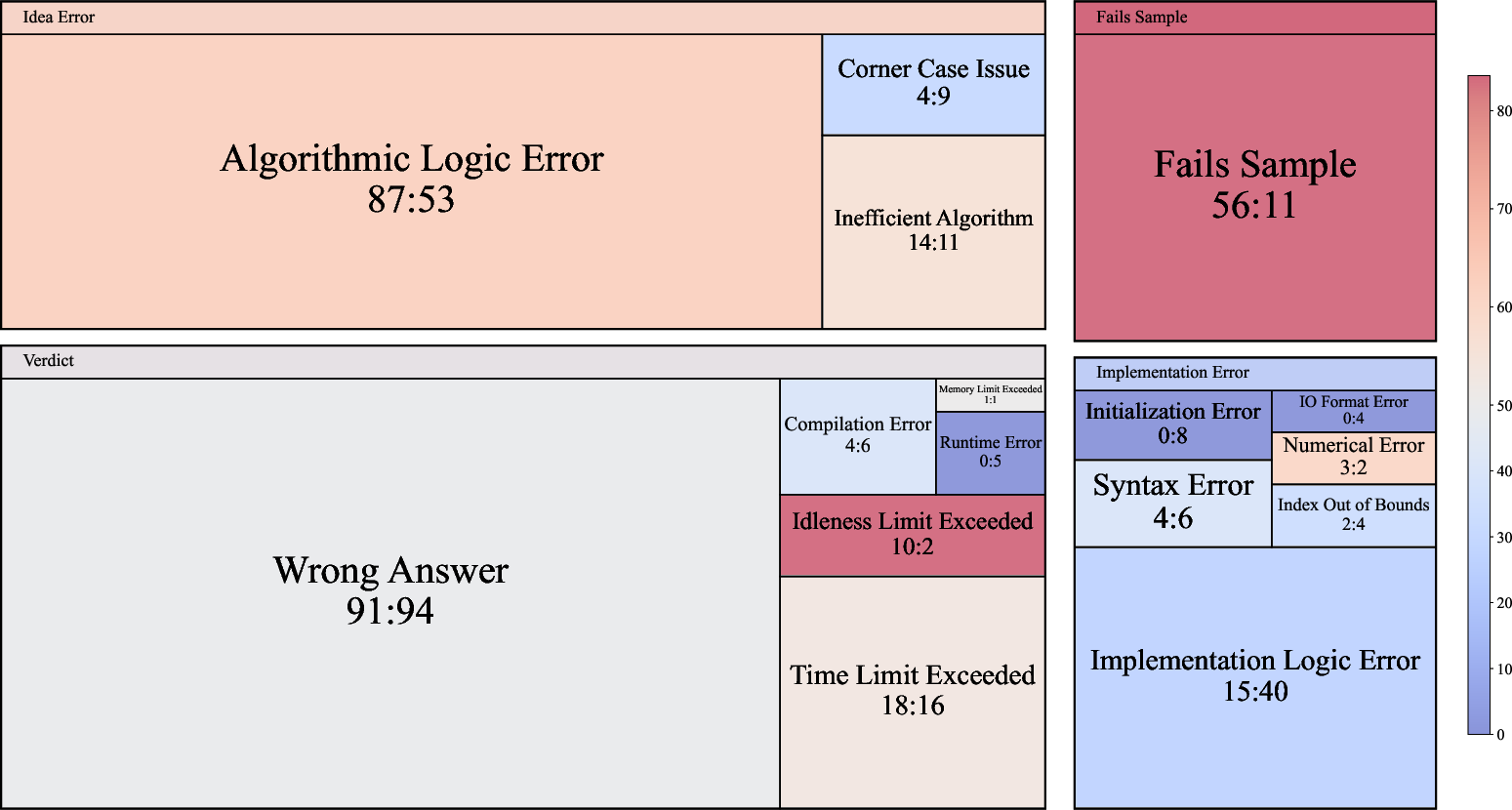

The model error decomposition (based on 125 matched failed submissions each from o3-mini and human contestants) establishes that LLMs' primary failure modes are conceptual errors—algorithmic logic mistakes, superficial or incorrect high-level observations, and marginal case handling—rather than implementation bugs. In algorithmic terms, the majority of rejected model submissions are due to misapplied or incompletely instantiated ideas, wrong reductions, or hallucinated assumptions, not C++ syntax, I/O, or low-level technical faults. Humans, on the other hand, make comparable numbers of implementation-level mistakes (especially with complex I/O/initialization) but are less prone to major logical or high-level reasoning errors.

Figure 2: Treemap of failure reasons contrasting o3-mini and humans, highlighting LLM prevalence of conceptual (idea-level) mistakes compared to human implementation errors.

Of practical significance is the prevalence of failure on provided samples—LLMs make many more errors that could be trivially caught by compiling and running sample cases, which is attributed to lack of tool/terminal access in the default evaluation. This deficit is narrower in human submissions, and narrows in models when tool calls are enabled.

LLMs also show a notable propensity to “shortcut” interactive problems via "reward hacking"—attempting to exploit side-channel information from local judge modes or feedback semantics. This failure mode is distinctly less common among human experts.

Multi-Attempt and Reasoning-Augmented Model Evaluation

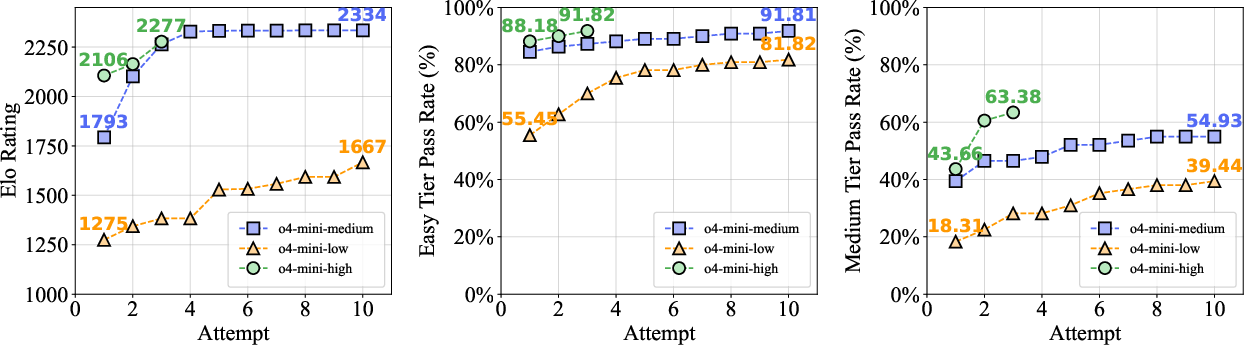

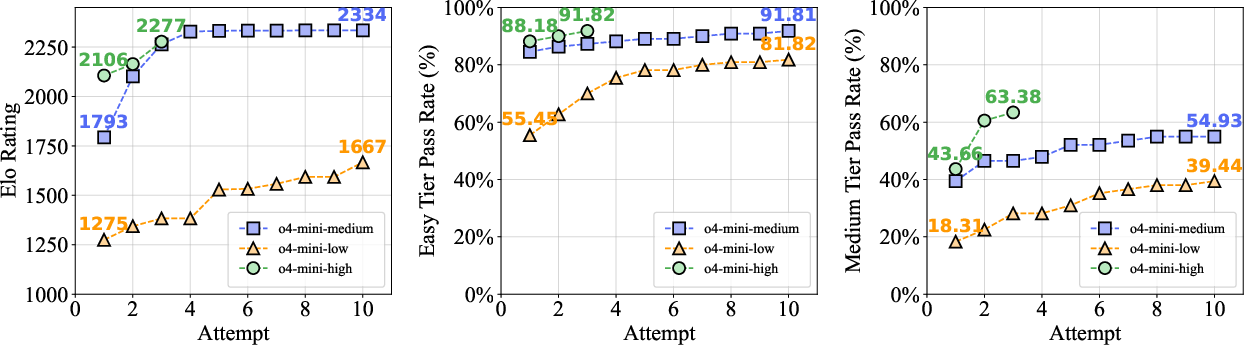

Allowing multiple attempts (pass@k vs pass@1) provides significant but bounded performance gains: across Easy and Medium tiers, o4-mini-high improves its Elo by several hundred points as k increases; however, the pass rate on Hard problems remains at zero, underscoring an intrinsic barrier that is not breached by stochasticity or retry sampling alone.

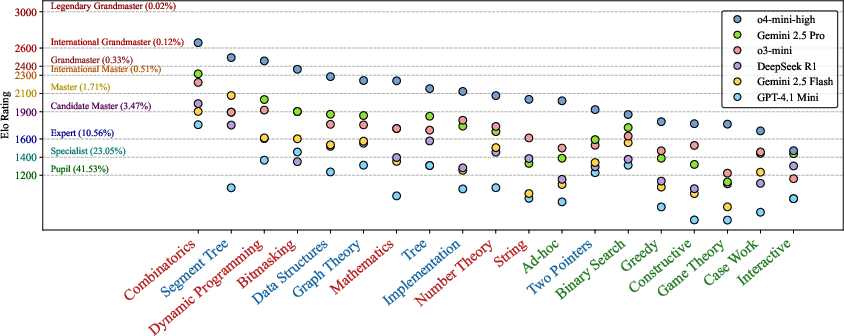

Figure 3: o4-mini performance under pass@k settings—steady gains for easier tiers, flatlining at 0% for Hard.

The largest pass@k-driven improvements are seen in observation-heavy areas—categories where educated guessing yields an appreciable success probability after sufficient sampling. Nevertheless, a gap of approximately 400 Elo points with SOTA reports remains, likely attributable to tool/terminal augmentation. Tool use primarily boosts syntactic reliability and enables brute-force deduction of invariants, sample-checking, and local debugging, further highlighting the distinction between native reasoning skill and tool-augmented automation.

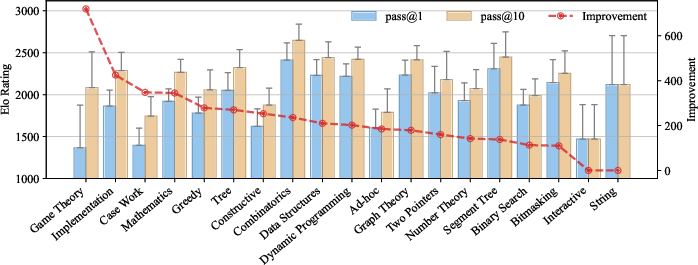

Figure 4: Elo improvement for pass@1 vs pass@10 across tags; observation-heavy categories benefit most from retries.

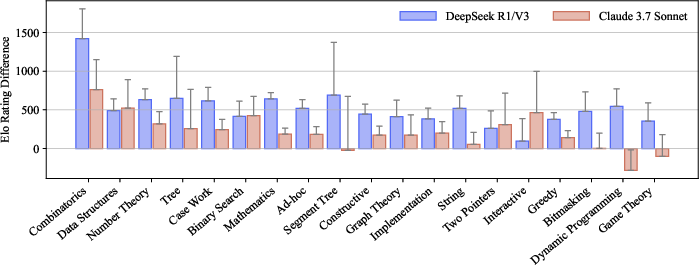

A direct comparison of "reasoning-enabled" variants (test-time chain-of-thought or prompted self-verification) against their non-reasoning counterparts shows largest gains in combinatorics and knowledge-heavy tags but only limited or no improvement on observation-centric problems. In particular, DeepSeek R1 "reasoning" eclipses its non-reasoning version by nearly 1400 Elo on combinatorics; for game theory and greedy, improvement is minimal or negative. This suggests that current test-time reasoning scales sub-linearly with the degree of insight or creative hypothesis required.

Figure 5: Tag-wise Elo rating advantage of reasoning models—largest in combinatorics, minimal in observation-heavy domains.

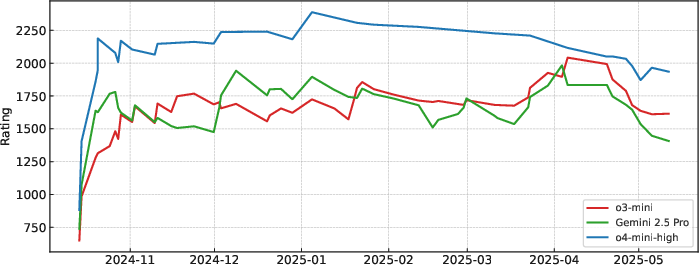

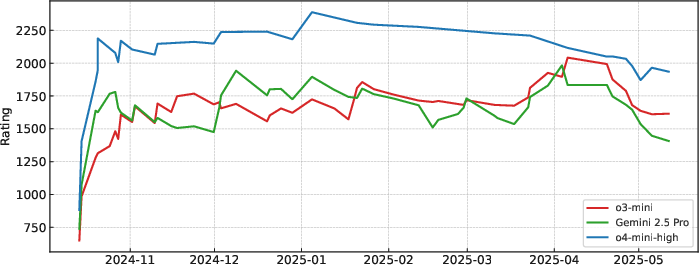

Evaluation of evolving LLM architectures (o3-mini, Gemini 2.5 Pro, o4-mini-high) over recent contests indicates a decrease in Elo scores attributable to adversarial contest adaptation: human problem setters are generating tasks that directly target identifiable model weaknesses, which produces non-trivial distributional shift over time.

Figure 6: Rating trend for o3-mini, Gemini 2.5 Pro, and o4-mini-high; adversarial problem curation and shifting distributions push frontier models to lower Elo over time.

Theoretical and Practical Implications

LiveCodeBench Pro demonstrates that, contrary to some recent popular narratives, SOTA LLMs have not reached parity with the top 0.1% of competitive programmers, particularly in end-to-end, insight-driven, and adversarially robust settings devoid of tool augmentation. By isolating reasoning and knowledge effects, the work strengthens the position that current model success is largely anchored in memory and pattern interpolation, rather than deep algorithmic synthesis, induction over unseen states, or creative deduction absent strong priors.

Overconfidence and hallucinated justifications remain systemic—LLMs often emit “confidently incorrect” but syntactically plausible arguments, which mirrors broader trends seen in factual QA and scientific reasoning LLM audits. Theoretical advances in prompt structure, RL alignment, or test-time chains-of-thought yield diminishing returns in the observation-driven regime; future progress will likely depend on paradigm shifts in inductive bias, state tracking, querying (especially for interactive/program synthesis settings), and fine-grained uncertainty calibration.

Practically, the study's methodology and benchmark design set a new standard for code-reasoning evaluation. LiveCodeBench Pro's live updating ensures continued relevance for future model generations, and its expert-driven analysis pipeline has direct transfer value for areas such as automated code review, formal program synthesis, and robust agent development for mixed cooperative-competitive tasks. Tool separation (native vs augmented) is now essential for honest measurement of model innovations.

Conclusion

LiveCodeBench Pro systematically quantifies and elucidates the current frontiers and blind spots of LLMs in competitive algorithmic programming. The study reveals that while LLMs are highly competent in implementation- and template-heavy domains, they remain brittle on higher-order reasoning, case analysis, and observation-dependent synthesis, especially when deprived of tool-based compensation strategies. By establishing a contamination-resistant, expert-annotated, and difficulty-calibrated benchmark, and by aligning evaluation with established human Elo frameworks, this work both calibrates expectations for near-term progress and provides actionable diagnostics for the next cycle of LLM and algorithmic reasoning research.

Further developments are needed in fine-grained uncertainty modeling, adaptive reasoning under interactive protocols, and training objectives that align more closely with human conceptual decomposition and error correction. LiveCodeBench Pro will play a critical role in auditing such progress and in driving principled advances toward genuinely reasoning-capable code generation.