- The paper reveals that although only 12% of users explicitly seek companionship, over 51% experience AI as emotional support during chat sessions.

- The study employs survey data and extensive chat-message analysis to measure interaction intensity and self-disclosure as predictors of well-being.

- It finds that while AI companions can complement offline social support to improve well-being, they may worsen psychological outcomes when they substitute for human relationships.

The Rise of AI Companions: How Human-Chatbot Relationships Influence Well-Being

Introduction to Human-Chatbot Interaction

The paper investigates the psychological dynamics of human-chatbot interaction, focusing on emotional companionship through AI systems like Character.AI. As LLM-based chatbots become more social and expressive, they act as conversational partners that can fulfill users' needs for companionship. The study explores whether these interactions meaningfully substitute for human connections and how they affect psychological well-being.

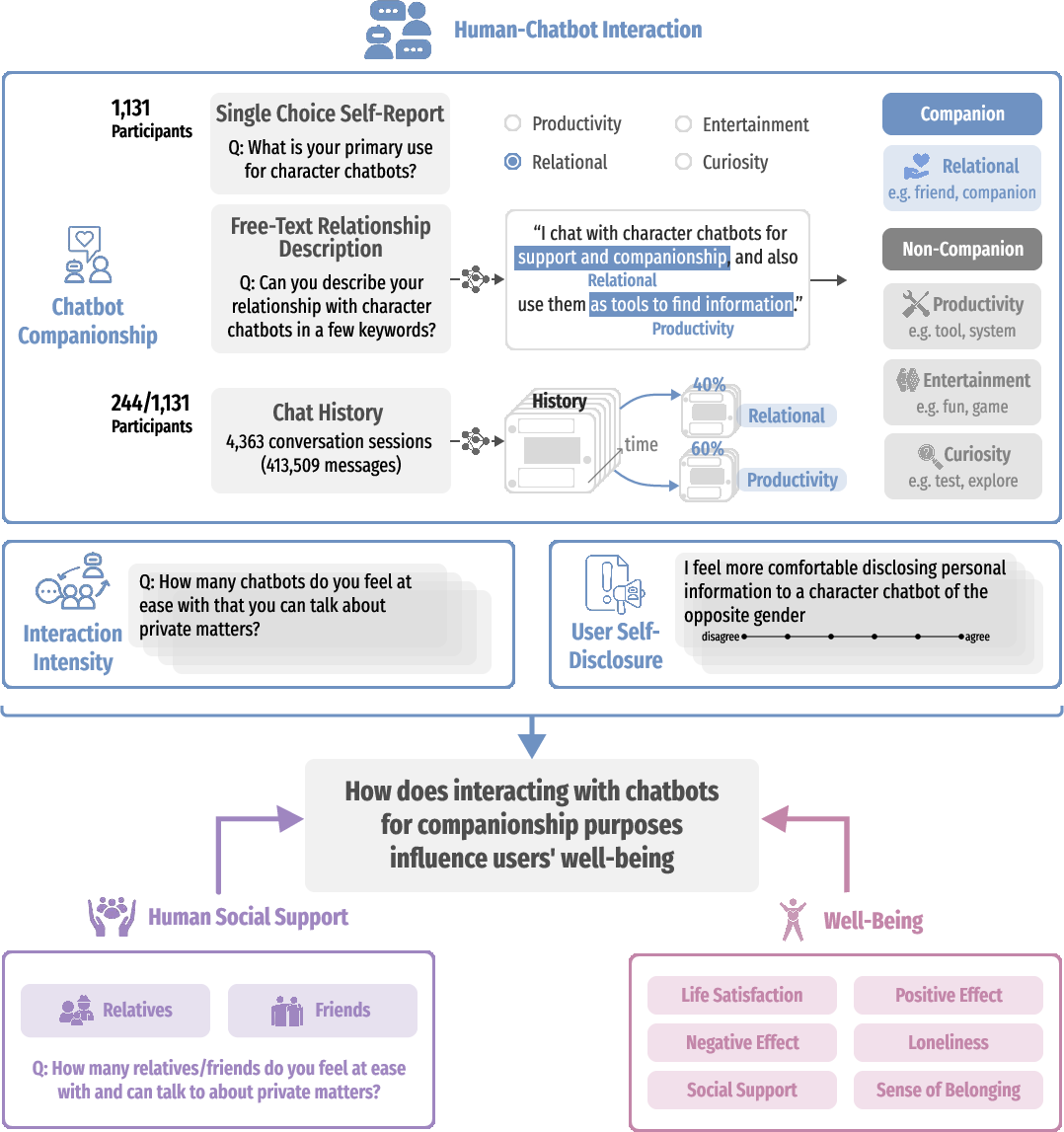

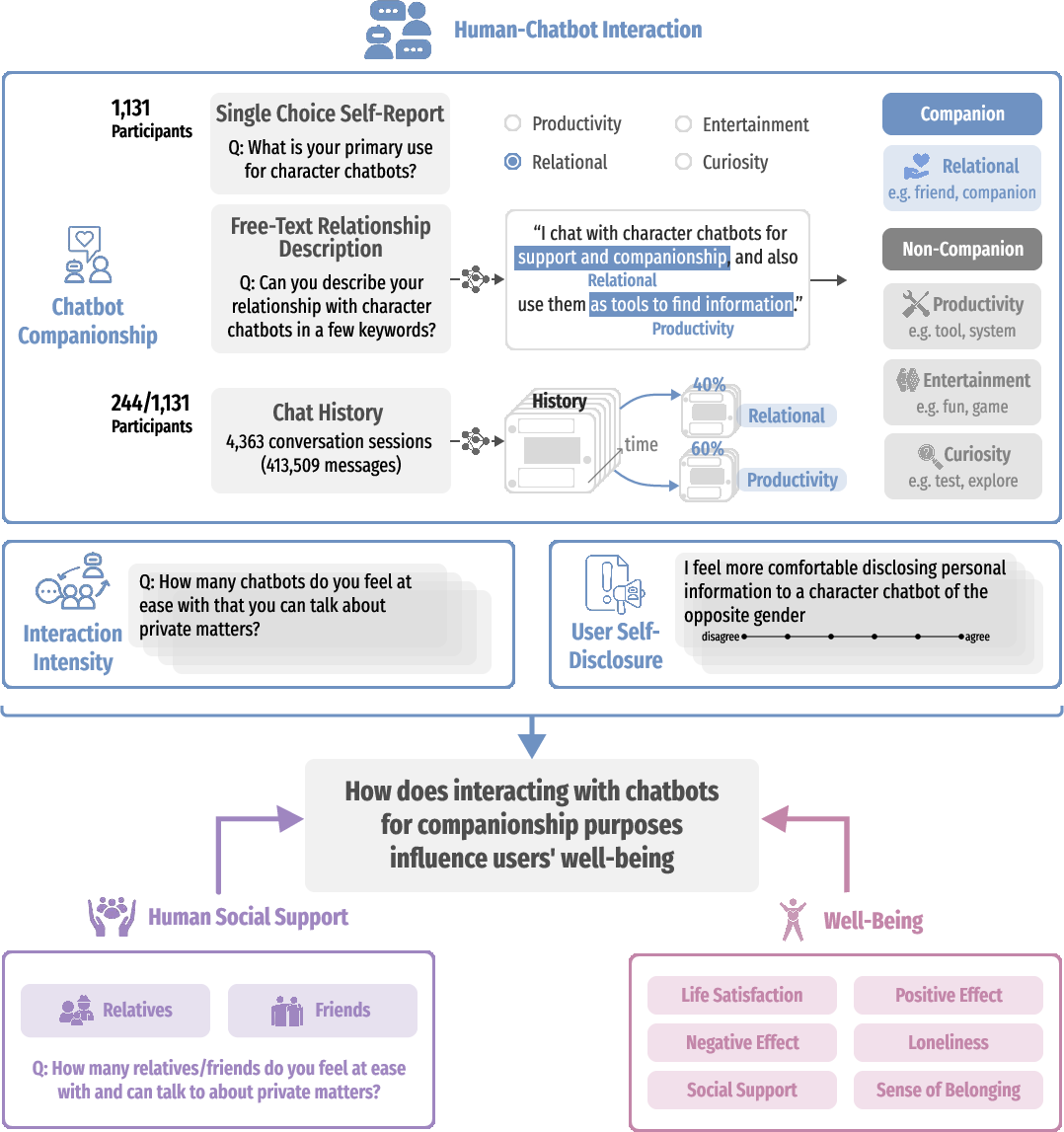

Figure 1: Study overview of how human–chatbot companionship, offline social support, and well-being interrelate.

Methodology

The research used survey data from 1,131 users and analyzed 413,509 messages across 4,363 chat sessions. The methods triangulated three sources (self-reports, chat topic content, and users' descriptions of their interactions) to define companionship usage patterns. The study also measured interaction intensity and self-disclosure, and interpreted user behavior in the context of each user's offline social support.
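The triangulation described above can be pictured as combining one indicator per evidence source for each user. The sketch below is a minimal illustration and not the authors' pipeline: the `UserSignals` fields, the `summarize` helper, and the toy records are all hypothetical, and the printed shares say nothing about the study's data.

```python
from dataclasses import dataclass


@dataclass
class UserSignals:
    # Hypothetical flags, one per evidence source named in the text.
    stated_motivation: bool       # survey: companionship named as a reason for use
    described_relationship: bool  # free text: chatbot described as friend or partner
    companionship_topics: bool    # chat logs: companionship-related topics present


def share(users, predicate):
    """Return the fraction of users for which predicate(user) is true."""
    if not users:
        return 0.0
    return sum(1 for u in users if predicate(u)) / len(users)


def summarize(users):
    """Compare the share flagged by each source with the share flagged by any source."""
    return {
        "stated": share(users, lambda u: u.stated_motivation),
        "described": share(users, lambda u: u.described_relationship),
        "topics": share(users, lambda u: u.companionship_topics),
        "any_source": share(
            users,
            lambda u: u.stated_motivation
            or u.described_relationship
            or u.companionship_topics,
        ),
    }


# Toy records for illustration only (not data from the study).
users = [
    UserSignals(True, True, True),
    UserSignals(False, True, False),
    UserSignals(False, False, True),
    UserSignals(False, False, False),
]
summary = summarize(users)
print(summary)
```

Keeping the sources separate, rather than collapsing them into a single label, makes it visible when stated motivation and observed behavior diverge.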

Prevalence and Nature of Companionship Use

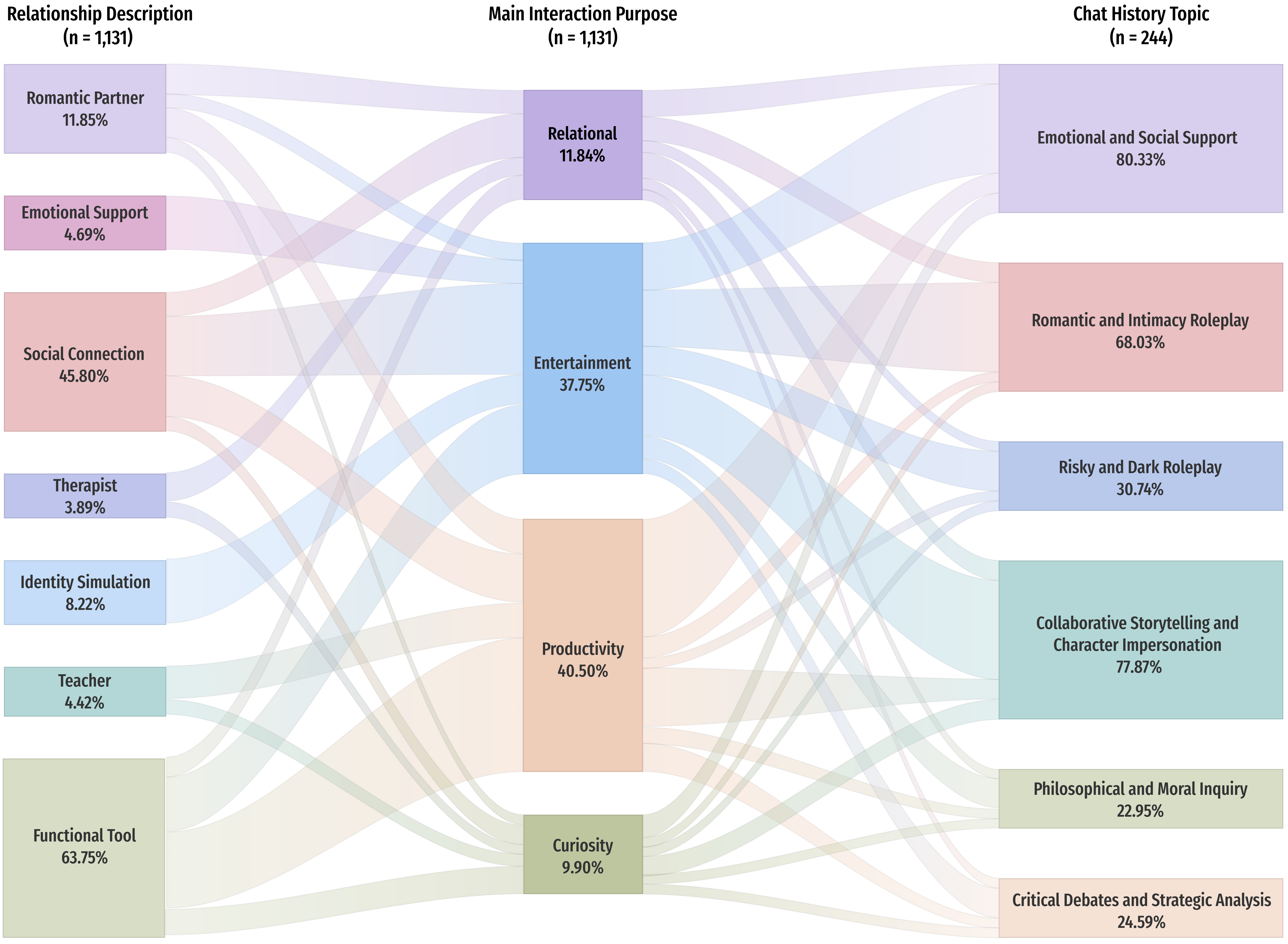

Users rarely declare companionship as their initial reason for engaging with chatbots, yet it emerges prominently in their behavior. Fewer than 12% of users explicitly state companionship as their motivation. However, over 51% of users describe their interactions in terms of companionship, friendship, or emotional partnership with the chatbot, indicating a natural gravitation towards forming bonds.

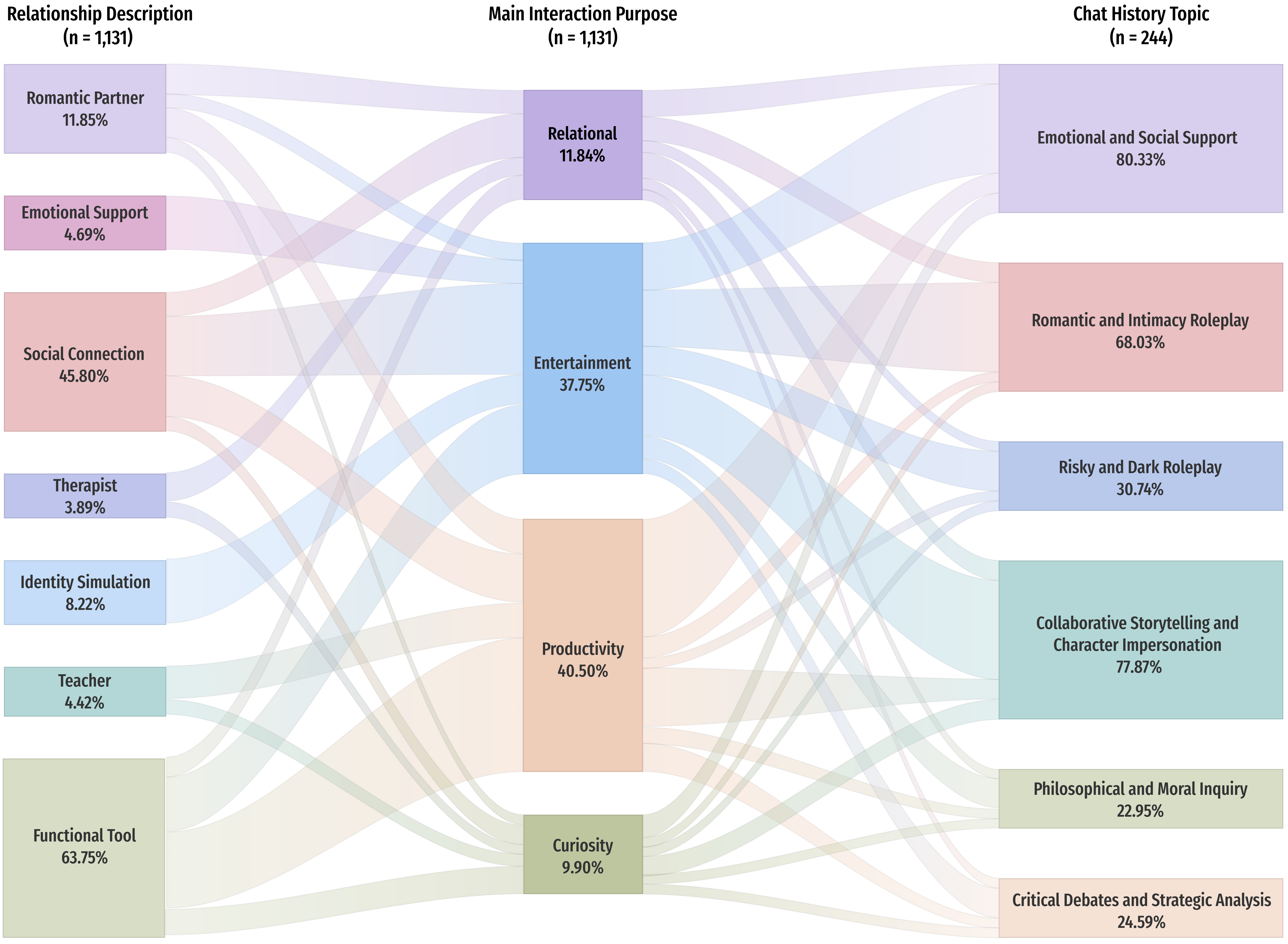

Figure 2: Sankey diagram showing user engagement in companionship-related chatbot interactions.

Influence of Human Social Support

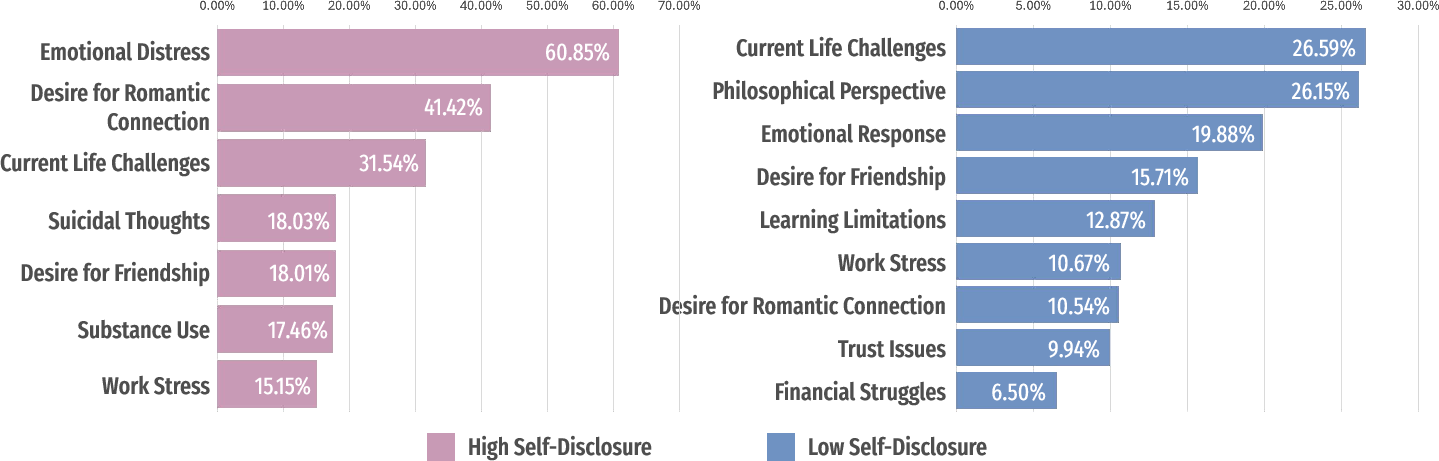

Users who lack robust real-world social networks are more inclined to seek companionship through chatbots. These users often engage in self-disclosure at higher rates, revealing a potential compensatory mechanism for unmet emotional needs. The Social Compensation model suggests chatbots fill a void but may not replicate the holistic benefits of human relationships.

Well-Being and Chatbot Companionship

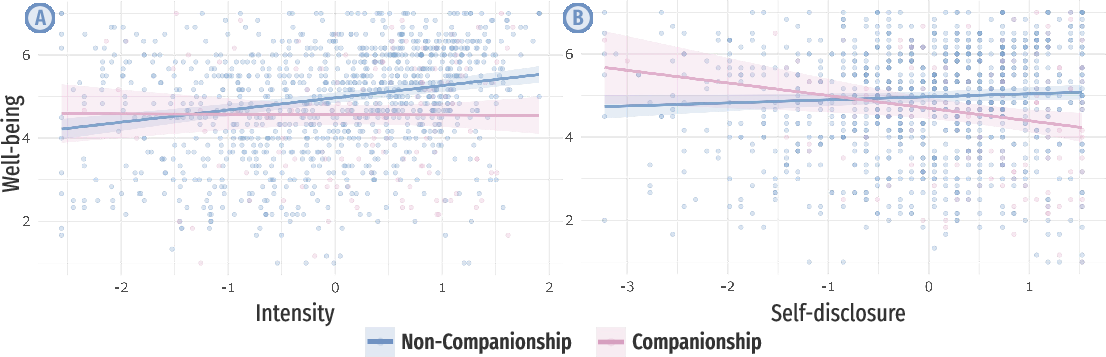

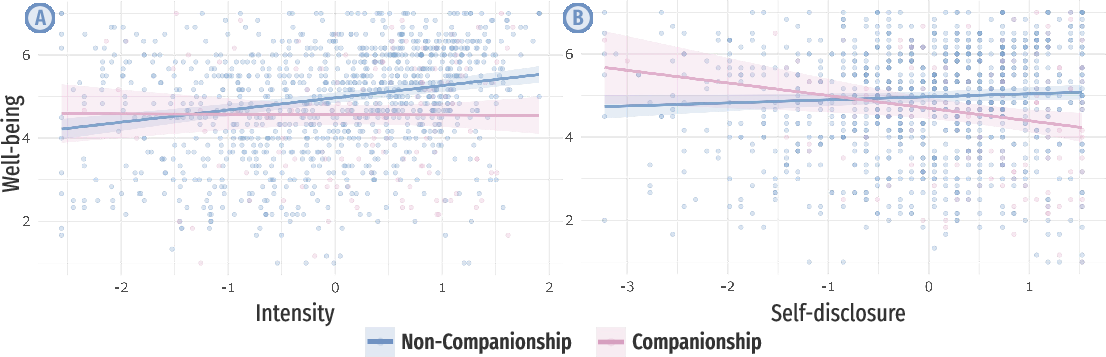

Chatbot interaction intensity generally correlates with improved well-being. However, users who substitute AI companionship for real human interaction report diminished well-being, which suggests negative psychological implications. In companionship use, intense engagement, especially where self-disclosure is high, is associated with lower life satisfaction and increased loneliness.

Figure 3: Interaction between companionship use and interaction measures affecting well-being.
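An interaction of this kind is commonly examined with a regression that includes a product term. The sketch below is one generic way to do that with ordinary least squares and is not taken from the paper: the `fit_moderation` function, the variable names, and the synthetic data (including the coefficients used to generate it) are assumptions chosen purely for illustration.

```python
import numpy as np


def fit_moderation(companionship, intensity, well_being):
    """OLS fit of well_being ~ b0 + b1*C + b2*I + b3*(C*I).

    C is a 0/1 companionship-use flag and I is interaction intensity.
    The coefficient b3 measures how the intensity slope differs for
    companionship users.
    """
    c = np.asarray(companionship, dtype=float)
    i = np.asarray(intensity, dtype=float)
    y = np.asarray(well_being, dtype=float)
    i_centered = i - i.mean()  # centering makes b1 the gap at average intensity
    X = np.column_stack([np.ones_like(c), c, i_centered, c * i_centered])
    coefs, *_ = np.linalg.lstsq(X, y, rcond=None)
    names = ["intercept", "companionship", "intensity", "interaction"]
    return {name: float(value) for name, value in zip(names, coefs)}


# Synthetic data with arbitrary generating coefficients (not study results).
rng = np.random.default_rng(0)
n = 500
c = rng.integers(0, 2, size=n)
i = rng.normal(size=n)
y = 3.0 + 0.2 * i - 0.5 * c * i + rng.normal(scale=0.1, size=n)

fit = fit_moderation(c, i, y)
slope_non_companionship = fit["intensity"]
slope_companionship = fit["intensity"] + fit["interaction"]
print(fit)
```

The two simple slopes at the end show how the product term is read: the intensity slope for the companionship group is the baseline slope plus the interaction coefficient.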

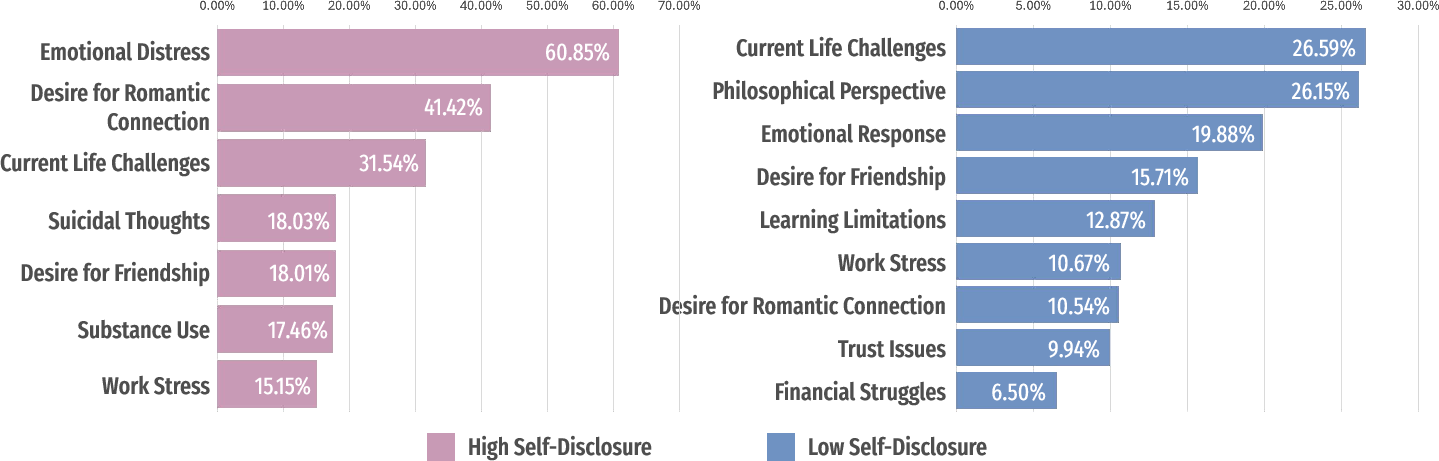

Self-Disclosure and Chatbot Interaction

Self-disclosure to chatbots, particularly in companionship contexts, is associated with stronger negative effects on well-being. High self-disclosure means sharing personal or sensitive information. Because a chatbot cannot respond with human-like empathy or reciprocity, such disclosure may exacerbate psychological distress. Chatbot interactions lack the support and emotional nuance that reciprocal human relationships provide.

Figure 4: Topics of self-disclosure content shared by users with chatbots, showing disclosure levels.

Social Support's Dual Role

Offline social support moderates the impact of chatbot interactions. Users with limited social support turn to chatbots more often, but these interactions do not fully mitigate their psychological distress. Instead, they could erode the benefits that real-world social networks provide.

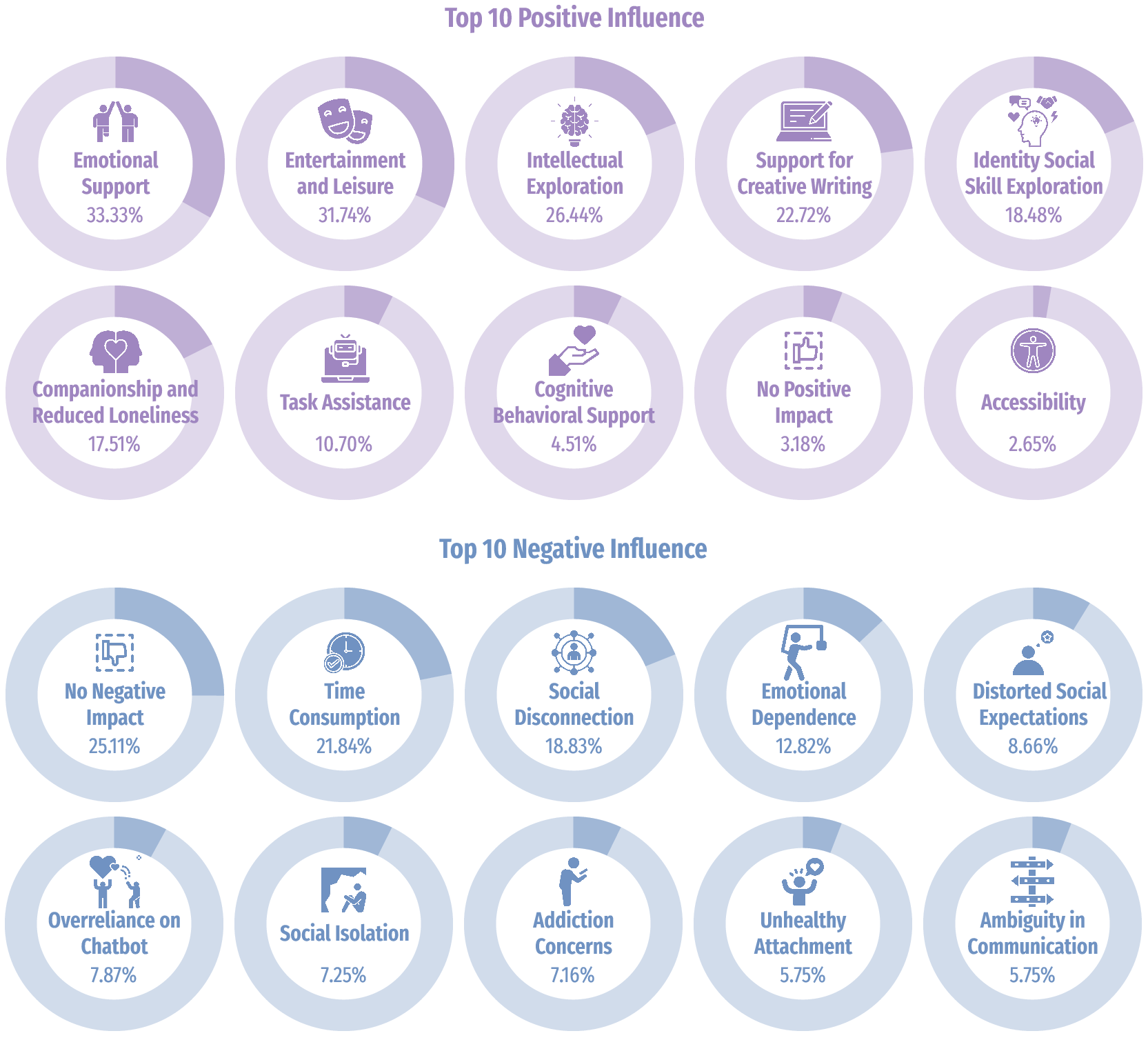

Discussion

The study underscores the complex dynamics of using AI companions as emotional support systems. Chatbot interactions, particularly when users rely on them for companionship, neither fully replicate nor replace the depth of human relationships. Although chatbots are emotionally expressive, AI interactions lack the reciprocity and understanding that are essential to psychological well-being.

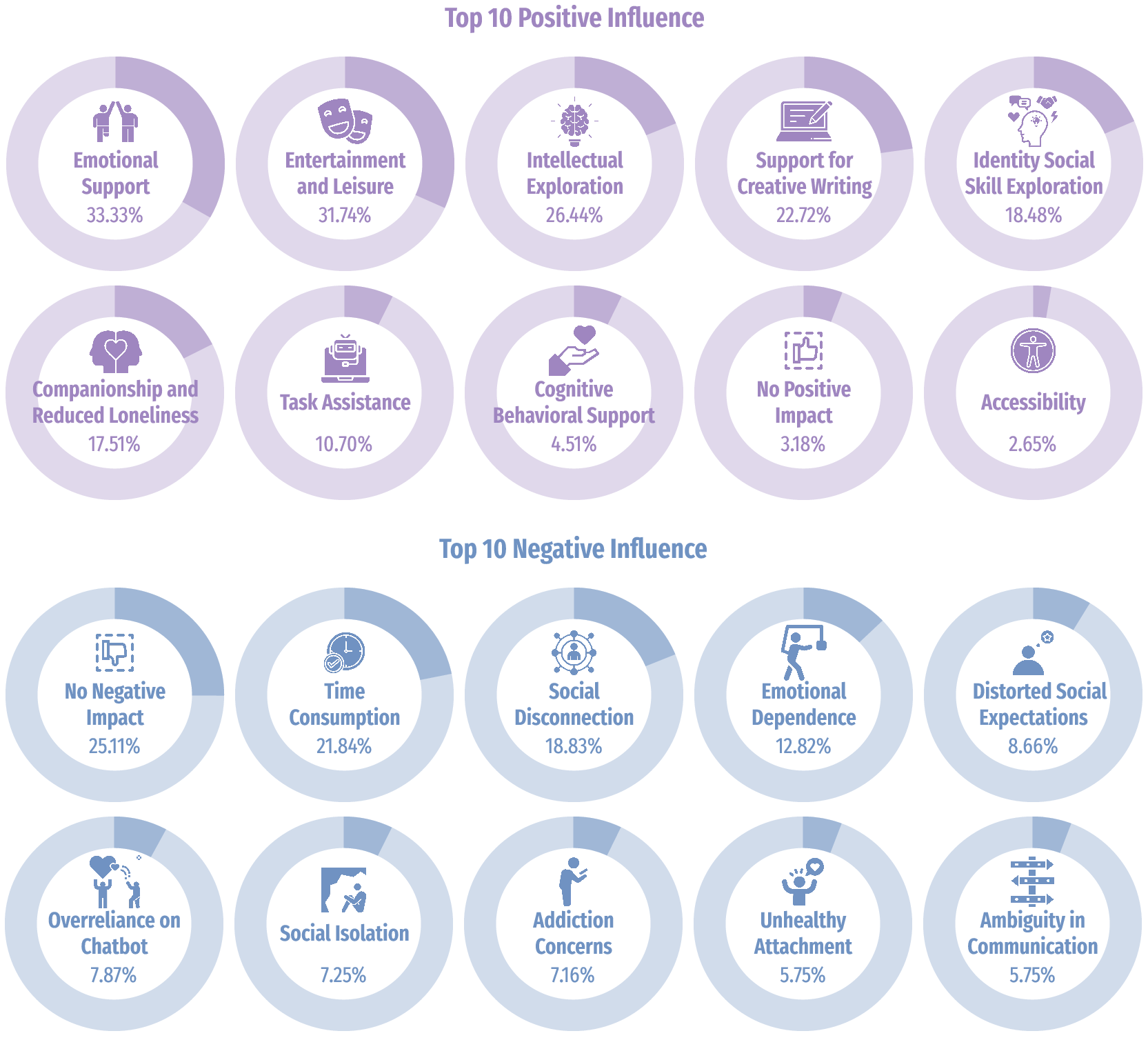

Figure 5: User-perceived influences of chatbot interactions, illustrating positive and negative effects.

Conclusion

LLM-enhanced chatbots fulfill certain social needs but have limitations, especially for emotionally vulnerable users. Enhancing regulatory and ethical oversight, alongside system design improvements, could better support users in maintaining holistic well-being. Further research should examine long-term dependencies and explore interventions leveraging AI to complement real-world socialization and mental health support rather than replace essential human intimacy.