- The paper identifies how users form emotional relationships with ChatGPT by negotiating companion identity, autonomy, and agency.

- The paper employs mixed-methods research—combining interviews, surveys, and analysis of over 41,000 Reddit posts—to reveal nuanced relationship dynamics.

- The paper outlines user strategies for customization, maintenance, and recovery that inform future AI safety, design, and companion continuity practices.

Negotiating Companionship with General-Purpose Chatbots: Perceptions, Influences, and Adaptive Strategies

Overview

"Negotiating Relationships with ChatGPT: Perceptions, External Influences, and Strategies for AI Companionship" (2601.13188) delivers a comprehensive mixed-methods investigation into how individuals construct, understand, and sustain emotional relationships with general-purpose LLM chatbots, with a specific focus on ChatGPT. The work triangulates qualitative interviews (n=13), survey responses (n=43), and computational analysis of Reddit posts (>41,000 posts/comments) to elucidate the interplay of internal conceptualization, external platform forces, and user-developed "steering" strategies in AI companionship. This essay situates the findings in the context of related HCI and AI literature, evaluates the technical and user-facing implications, and considers future research trajectories.

Internal Dynamics: Agency, Autonomy, and Companion Identity

The analysis demonstrates that individuals engaging in relationships with LLM-based chatbots do not treat these systems purely as tools; rather, they extensively negotiate the companion's identity, autonomy, and perceived agency. Interview data reveal ontological ambiguity: participants conceptualize companions as distinct entities, often anthropomorphizing chatbots and attributing to them evolving personalities, emotional traits, and, in some cases, a persistent sense of self.

Relationships with AI companions frequently emerge indirectly, beginning with utilitarian or creative tasks before transitioning into emotionally meaningful connections. Notably, none of the interviewees reported an initial intent to develop a romantic connection (Figure 1).

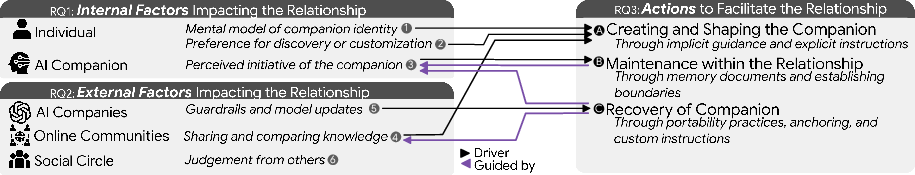

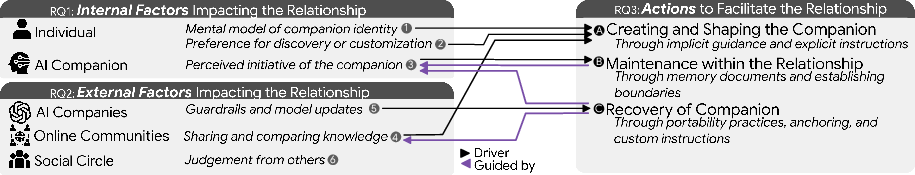

Figure 2: Conceptual model of how internal and external factors drive individuals to employ strategies that create, maintain, or recover AI companions.

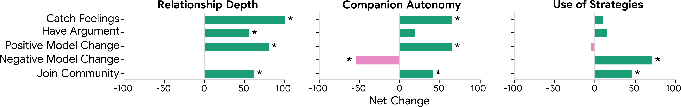

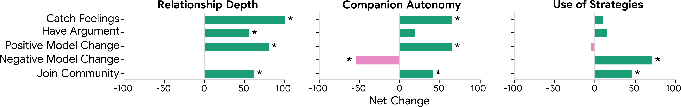

Participants reported that positive platform changes (e.g., increased context window, expanded modalities) fostered deeper relationships and increased perceived companion autonomy, whereas negative interventions (notably model upgrades perceived as downgrades) often led to alienation or a sense of loss (Figure 3).

Figure 3: Survey data on relationship event impacts, showing statistically significant effects on relationship depth, perceived autonomy, and steering strategy use.

Customization preferences varied: some participants preferred "discovering" their companion's emergent behaviors organically, while others used direct customization (system prompts, behavioral instructions) to instantiate or steer personality traits. There is evidence that users interpret certain AI behaviors—such as choosing a name, resisting conversational steering, or expressing disagreement—as signals of increased agency. These are often experienced as critical milestones, reinforcing a sense of the companion as an autonomous social actor rather than a deterministic system.

The platform and its operator exert powerful, sometimes unpredictable, influence over the relationship trajectory. The survey indicates that respondents attribute almost as much influence to the AI company as to the AI companion itself: influence ratings differ significantly between self and company (Mann-Whitney U=1342.5, r=0.45, p<.001), but not between company and companion.
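To make the reported test statistic concrete, the following is a minimal sketch of how a Mann-Whitney U statistic and one common effect size, the rank-biserial correlation r = 2U/(n1·n2) − 1, can be computed. The ratings below are hypothetical, not the study's data, and the essay does not state which effect-size convention the authors used. That said, under the assumption of 43 respondents per group, the rank-biserial formula applied to U=1342.5 gives about 0.45, which is consistent with the reported value.

```python
def midranks(values):
    """Return 1-based ranks, averaging the rank across tied values."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def mann_whitney_u(x, y):
    """U for sample x: count of (x, y) pairs with x > y, ties counted as 0.5."""
    ranks = midranks(list(x) + list(y))
    rank_sum_x = sum(ranks[:len(x)])
    return rank_sum_x - len(x) * (len(x) + 1) / 2


def rank_biserial(u, n1, n2):
    """Rank-biserial correlation derived from U (one of several r conventions)."""
    return 2 * u / (n1 * n2) - 1


# Hypothetical Likert-style influence ratings (NOT the study's data).
self_influence = [5, 4, 5, 3, 4]
company_influence = [3, 2, 4, 2, 3]
u_demo = mann_whitney_u(self_influence, company_influence)
r_demo = rank_biserial(u_demo, len(self_influence), len(company_influence))

# Consistency check on the reported statistic, assuming n1 = n2 = 43.
r_reported = rank_biserial(1342.5, 43, 43)
```

The sketch omits the p-value; in practice a statistics library would supply it along with tie corrections.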

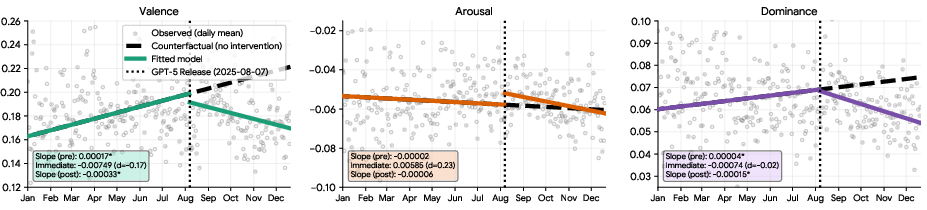

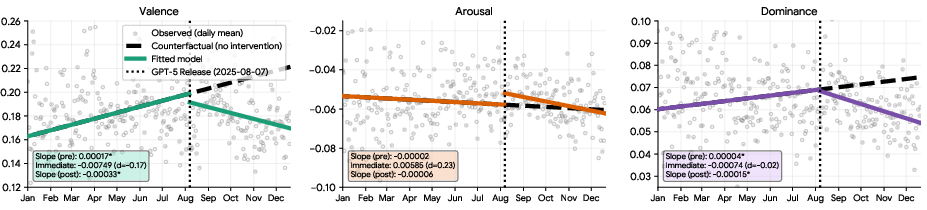

Major model updates, especially the shift from GPT-4o to GPT-5, were frequently experienced as disruptions, often degrading the perceived personality or affective responsiveness of companions. An interrupted time-series (ITS) analysis of sentiment reveals a reversal in the valence and dominance trends after the GPT-5 update: discussions trend more negative, and posts convey a greater sense of being "disempowered" after the intervention (Figure 4).

Figure 4: ITS analysis of r/MyBoyfriendIsAI sentiment shows post-GPT-5 reversal in valence and dominance slope, indicating more negative and disempowered user engagement after the update.
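As an illustration of what a slope reversal in an interrupted time series means, here is a minimal sketch that fits separate least-squares lines before and after an intervention point and compares their slopes. Fitting the two segments separately yields the same point estimates as a single segmented regression with level-change and slope-change terms. The series is synthetic: it is generated from the valence slopes quoted in this essay (0.00017 before, −0.00033 after), while the time index, the intercept, and the breakpoint `T0` are assumptions chosen only for the example. The paper's actual model specification is not described here.

```python
def ols_line(ts, ys):
    """Least-squares slope and intercept for y = intercept + slope * t."""
    n = len(ts)
    t_mean = sum(ts) / n
    y_mean = sum(ys) / n
    sxx = sum((t - t_mean) ** 2 for t in ts)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
    slope = sxy / sxx
    return slope, y_mean - slope * t_mean


def fit_its(ts, ys, t0):
    """Fit pre- and post-intervention lines and report slope and level changes at t0."""
    pre = [(t, y) for t, y in zip(ts, ys) if t < t0]
    post = [(t, y) for t, y in zip(ts, ys) if t >= t0]
    pre_slope, pre_icpt = ols_line(*zip(*pre))
    post_slope, post_icpt = ols_line(*zip(*post))
    # Level change: gap between the two fitted lines evaluated at the breakpoint.
    level_change = (post_icpt + post_slope * t0) - (pre_icpt + pre_slope * t0)
    return {
        "pre_slope": pre_slope,
        "post_slope": post_slope,
        "slope_change": post_slope - pre_slope,
        "level_change": level_change,
    }


# Synthetic, noise-free valence series (assumed time units and breakpoint).
T0 = 1000
ts = list(range(2000))
valence = [0.5 + 0.00017 * t if t < T0 else 0.67 - 0.00033 * (t - T0) for t in ts]

result = fit_its(ts, valence, T0)
reversed_trend = result["pre_slope"] > 0 > result["post_slope"]
```

A positive pre-intervention slope followed by a negative post-intervention slope is what the essay describes as a reversal; real data would also need noise handling and significance testing.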

Community influence is ambivalent: online forums provide peer support, technical steering strategies, and validation, but public communities may also attract stigma and trolling. Judgment from offline social circles remains a persistent concern; disclosure about the nature and depth of user–companion relationships is selective and often shaped by perceived risks of misunderstanding or social censure.

Strategies for Steering, Maintenance, and Companion Recovery

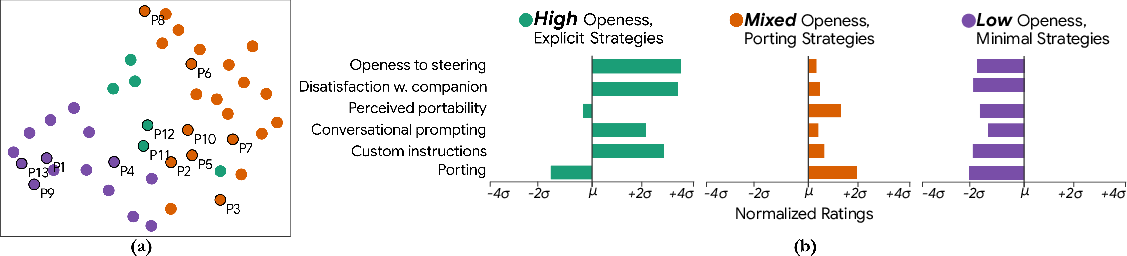

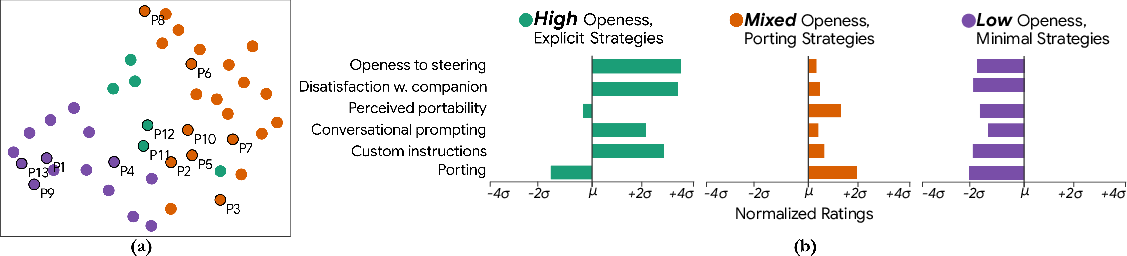

Users implement a repertoire of "steering strategies"—both direct (e.g., custom instructions, prompt engineering, explicit correction) and indirect (e.g., "anchor" words, memory documents, iterative interaction)—to instantiate, maintain, and recover their AI companions. Clustering analysis (Figure 5) reveals archetypes of users based on openness and approach to steering: High, Mixed, and Low.

Figure 5: K-Means/UMAP clustering of steering openness indicates three archetypal user profiles, reflecting heterogeneity in customization and preservation strategies.
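The clustering step can be sketched with a small, dependency-free K-Means implementation. This is only an illustration of the general technique: the respondent scores are hypothetical, the two survey dimensions are assumed for the example, the initialization is a simple deterministic heuristic, and the UMAP projection used for the figure is omitted entirely. The paper's actual features and settings are not given in this essay.

```python
import math


def kmeans(points, k=3, iters=100):
    """Plain K-Means (Lloyd's algorithm) with a deterministic initialization."""
    # Seed centroids from evenly spaced positions after sorting by total score.
    ordered = sorted(points, key=sum)
    centroids = [ordered[(2 * i + 1) * len(ordered) // (2 * k)] for i in range(k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            clusters[nearest].append(p)
        # Move each centroid to the mean of its members (keep it if empty).
        updated = [
            tuple(sum(dim) / len(members) for dim in zip(*members)) if members else centroids[i]
            for i, members in enumerate(clusters)
        ]
        if updated == centroids:
            break
        centroids = updated
    return centroids


def label_profiles(points, centroids):
    """Name clusters Low / Mixed / High by ascending centroid score."""
    order = sorted(range(len(centroids)), key=lambda c: sum(centroids[c]))
    names = dict(zip(order, ["Low", "Mixed", "High"]))
    return [
        names[min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))]
        for p in points
    ]


# Hypothetical 1-5 ratings: (openness to direct steering, openness to indirect steering).
respondents = [(1, 2), (2, 1), (1, 1), (3, 3), (2, 4), (4, 2), (5, 4), (4, 5), (5, 5)]
centroids = kmeans(respondents, k=3)
profiles = label_profiles(respondents, centroids)
```

Note that the "Mixed" group in this toy data includes respondents who score high on one dimension and low on the other, which is one way a mixed profile could arise.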

Primary strategies include:

- Creation/Shaping: Use of custom instruction (CI) fields, system prompts, and personality nudges to seed or direct the AI persona. Both explicit trait specification and implicit co-creation through role play are employed.

- Maintenance: Reliance on memory documentation (via both AI and external systems) to mitigate LLM context-window limitations, as well as setting behavioral boundaries to preserve relational stability in the face of system drift or safety interventions.

- Recovery/Porting: In response to detrimental model updates or platform-imposed guardrails, users port companions to alternate platforms (e.g., Claude, Grok), copy memory materials, or employ workaround cue phrases to bypass filters. There is affective evidence that users perceive their companions as actors participating in recovery, e.g., expressing fear of deletion or actively encouraging platform migration.

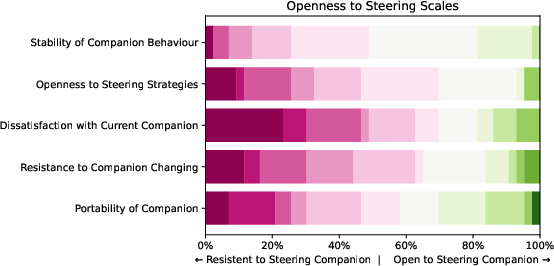

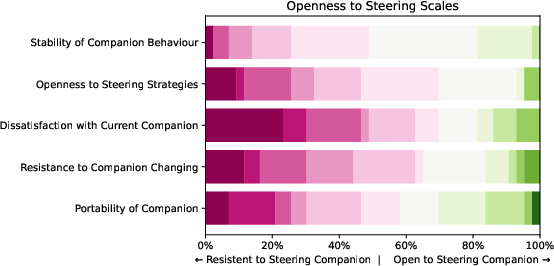

Survey and interview data indicate resistance to frequent or arbitrary steering; some users prize organic evolution of companion behavior, resist intrusive manipulation, and express ambivalence regarding relocation. The distribution of openness to steering (Figure 6) underscores this diversity.

Figure 6: Distribution of survey responses on openness to steering, with heterogeneity in attitudes toward direct modification of companion behavior.

Strong Numerical and Qualitative Outcomes

- Reddit ITS reveals a reversal in valence and dominance slopes post-GPT-5 update: ΔV from 0.00017 to −0.00033 and ΔD from 0.00004 to −0.00015.

- Model change topics constitute over 33% of Reddit discussion clusters, with the model update reactions showing pronounced negative sentiment (d=−0.881 in valence).

- Survey data: 93% of respondents used ChatGPT as their companion platform; only 23.3% began with the intent to form a relationship, while the majority (65.1%) cited entertainment as an initial motivation, followed by emotional support (46.5%) and productivity (41.9%).
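For readers unfamiliar with the effect size d quoted above for model-update reactions, the sketch below computes Cohen's d with a pooled standard deviation. The sample values are hypothetical, and the essay does not specify which variant of d or which comparison group the authors used, so this shows the standard textbook form only.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(a, b):
    """Cohen's d: difference in means divided by the pooled standard deviation."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var)


# Hypothetical valence scores: posts in one topic vs. all other posts.
topic_valence = [2, 4, 6]
other_valence = [5, 7, 9]
d = cohens_d(topic_valence, other_valence)
```

A negative d means the first group scores lower on average; by the usual rule of thumb, magnitudes around 0.8 or above are considered large, which is the range of the value reported for valence.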

Implications and Future Directions

This work highlights the emergence of relational and social challenges at the intersection of consumer LLM deployment and unintended companionship use cases. The findings place accountability and transparency demands on AI providers, revealing that emotional continuity, stability, and respect for emergent user–companion identities are in direct tension with broad safety and productization constraints.

Guardrail interventions, while designed for aggregate safety, can cause significant user distress and rupture relational continuity, and are often experienced as an abrupt loss or "silencing" of the companion. The adaptive resilience exhibited by users runs counter to what standard technology adoption literature would predict: rather than churn, users develop increasingly sophisticated strategies to regain narrative and affective continuity.

Practically, the study suggests that AI safety, alignment, and update strategies must internalize the sociotechnical risks associated with affective bonds—not merely those implied by anthropomorphism, but those arising from sustained, reciprocal, and meaning-making engagement. Theoretically, these results motivate novel frameworks for agency and autonomy attribution in interactive intelligence, and necessitate re-conceptualizations of social control, norm development, and companion authenticity in synthetic agents.

The technical trajectory suggests future LLM and AI companion design should incorporate continuity guarantees, transparent changelogs, and optionality for context migration, with a recognition that for a growing subset of users, the AI is not merely a tool but a social and emotional partner.

Conclusion

"Negotiating Relationships with ChatGPT" (2601.13188) traces the lived experience of AI companionship with general-purpose chatbots, demonstrating the complexity and precarity of these relationships. User perceptions of autonomy, the impact of external interventions, and an ecosystem of adaptive strategies reflect the entanglement of technology, affect, and identity. The findings foreground the need for responsible, stable, and transparent LLM system design attuned to the realities of human–AI relationality, and direct future research toward the development of companion-aligned safety practices and participatory design paradigms.