- The paper shows that Neural Cellular Automata can effectively perform few-shot generalization on ARC tasks by leveraging iterative local updates.

- It employs a gradient-based training methodology with asynchronous updates to enhance robustness and mitigate overfitting.

- Experimental results indicate success in structured tasks like spiral drawing while highlighting limitations in handling global context propagation.

Analyzing Neural Cellular Automata for ARC-AGI

This paper investigates the application of Neural Cellular Automata (NCAs) to the Abstraction and Reasoning Corpus for Artificial General Intelligence (ARC-AGI). It explores how NCAs handle few-shot generalization and abstract transformation tasks, a setting in which they had not previously been tested. The key focus is whether models trained with gradient-based methods can iterate local updates to transform test input grids into the correct output grids.

Introduction to Neural Cellular Automata and ARC

The primary motivation for employing NCAs in ARC tasks is their inherent self-organizing nature, which mirrors emergent intelligent behaviors in biological systems. In contrast to conventional deep learning models that are centrally organized, NCAs perform computations through repeated local updates without global oversight, naturally leading to translation invariance and robustness. This setup imposes unique inductive biases but also demands careful training design to harness these properties efficiently.

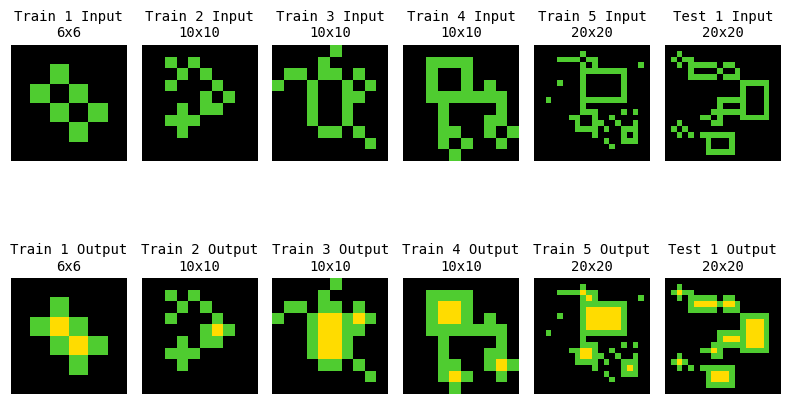

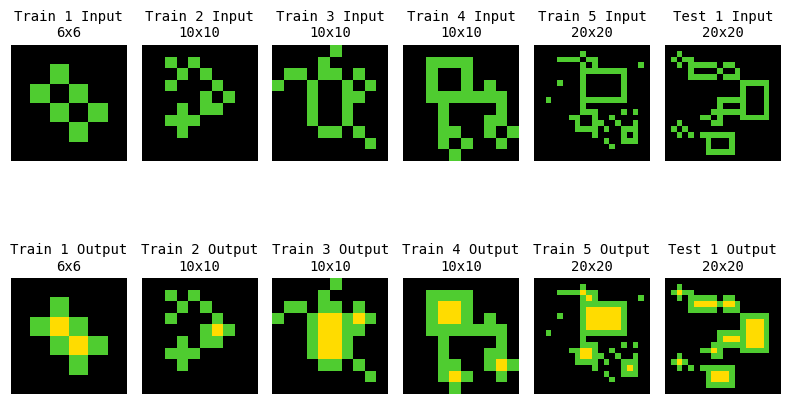

Figure 1: Task ID: 00d62c1b. An example ARC-AGI task where input grids necessitate filling enclosed structures with yellow pixels.

ARC presents a set of grid-based puzzles that are intuitive for humans but challenging for AI, because it emphasizes generalization from a few examples, a notable difficulty for standard deep learning methods. Applying NCAs is therefore a strategy that might circumvent these limitations by leveraging local interactions and emergent behaviors. NCAs' performance on ARC in turn offers insight into their broader applicability and the constraints of their architectural design.

Cellular automata (CA) have long been a subject of interest due to their ability to model complex phenomena through simple local rules, with prominent examples like Conway’s Game of Life. NCAs extend this concept by incorporating neural networks to learn update rules through differentiable programming, thus offering expressive power within a framework of local cell interactions.
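To make the idea of a simple local rule concrete, here is a minimal sketch of one Game of Life update in NumPy. It is illustrative only and not taken from the paper: the function name `life_step` and the wrap-around (toroidal) boundary are assumptions chosen for brevity.

```python
import numpy as np

def life_step(grid: np.ndarray) -> np.ndarray:
    """One synchronous Game of Life update on a wrap-around grid (assumed boundary)."""
    # Count the eight neighbours of every cell by summing shifted copies of the grid.
    neighbours = sum(
        np.roll(np.roll(grid, dy, axis=0), dx, axis=1)
        for dy in (-1, 0, 1)
        for dx in (-1, 0, 1)
        if (dy, dx) != (0, 0)
    )
    # A cell is alive next step if it has exactly 3 live neighbours,
    # or if it is already alive and has exactly 2.
    alive_next = (neighbours == 3) | ((grid == 1) & (neighbours == 2))
    return alive_next.astype(grid.dtype)

# A "blinker": three cells in a row that flip between horizontal and vertical.
blinker = np.zeros((5, 5), dtype=np.int64)
blinker[2, 1:4] = 1
after_one = life_step(blinker)
after_two = life_step(after_one)
```

Every cell applies the same fixed rule to its 3x3 neighbourhood. An NCA keeps this structure but replaces the hand-written rule with a small neural network whose parameters are learned.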

Prior research established NCAs' capabilities in diverse tasks ranging from image morphogenesis to pattern formation. This study extends existing knowledge by contributing empirical evidence of NCA performance in the ARC domain, addressing tasks focused on transformation and abstract reasoning. The paper posits that insights drawn here could inform improvements in decentralized learning models and self-organizing algorithms.

NCA Architecture and Training Methodology

The architecture adopted for experimentation utilizes a categorical encoding of ARC input grids, with channels representing color and state information. NCAs update iteratively using local rules, with stochastic cell selection to enhance training robustness, mitigating overfitting and aiding in generalization.
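The categorical encoding described above might look like the following sketch. ARC grids use integer color codes 0 to 9, so each cell gets a ten-way one-hot vector. The number of extra state channels (`HIDDEN_CHANNELS`) and the helper names `encode_grid` and `decode_grid` are assumptions for illustration, not values or names from the paper.

```python
import numpy as np

NUM_COLORS = 10      # ARC grids use integer color codes 0..9
HIDDEN_CHANNELS = 6  # assumption: illustrative count of extra state channels

def encode_grid(grid) -> np.ndarray:
    """Map an (H, W) grid of color codes to an (H, W, C) cell-state array."""
    grid = np.asarray(grid)
    # Indexing an identity matrix by color code yields a one-hot vector per cell.
    one_hot = np.eye(NUM_COLORS, dtype=np.float32)[grid]
    # State channels start at zero; the update rule is free to use them as memory.
    hidden = np.zeros(grid.shape + (HIDDEN_CHANNELS,), dtype=np.float32)
    return np.concatenate([one_hot, hidden], axis=-1)

def decode_grid(state: np.ndarray) -> np.ndarray:
    """Read the predicted color of each cell from the color channels only."""
    return state[..., :NUM_COLORS].argmax(axis=-1)

example = [[0, 4, 0], [4, 0, 4], [0, 4, 0]]
state = encode_grid(example)
```

Here `state` has shape `(3, 3, 16)`, and `decode_grid(state)` recovers the original grid.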

Training applies supervision throughout the rollout: a log loss is computed across multiple time steps rather than only on the final state. This maintains stability and encourages early convergence, preventing intermediate states from becoming corrupted, and it allows training with fewer time steps than typical morphogenesis models.
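A loss of this kind could be sketched as below: the cross-entropy (log loss) between the color logits and the target grid is computed at each recorded step and then averaged. Weighting all steps equally, the array shapes, and the function names are assumptions made for illustration; the paper may select or weight steps differently.

```python
import numpy as np

def log_softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable log-softmax over the last (color) axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))

def multi_step_log_loss(step_logits: np.ndarray, target: np.ndarray):
    """Mean log loss over all steps and cells.

    step_logits: (T, H, W, C) color logits recorded at T rollout steps.
    target:      (H, W) integer color codes of the expected output grid.
    Returns (overall mean loss, per-step losses of shape (T,)).
    """
    log_probs = log_softmax(step_logits)
    steps = step_logits.shape[0]
    # Repeat the target for every step and pick the log-probability of the true color.
    idx = np.broadcast_to(target, (steps,) + target.shape)[..., None]
    picked = np.take_along_axis(log_probs, idx, axis=-1)[..., 0]
    per_step = -picked.mean(axis=(1, 2))
    return per_step.mean(), per_step

target = np.array([[1, 2], [3, 0]])
uniform_logits = np.zeros((3, 2, 2, 10))  # an untrained model that is unsure everywhere
total, per_step = multi_step_log_loss(uniform_logits, target)
```

With uniform logits over ten colors, every step contributes a loss of `log(10)`. Supervising intermediate steps in this way penalizes a rollout that passes through a wrong state even if it eventually reaches the right one.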

The choice of an asynchronous update regime increases the model’s robustness, promoting solutions that display higher resilience to input variations and noise. These training strategies collectively underscore the potential of NCAs to learn robust transformations with minimal data.
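One common way to realize asynchronous updates is to let each cell apply its update with some probability at every step. The sketch below shows that masking scheme; the `fire_rate` parameter, the function names, and the toy update rule are hypothetical stand-ins rather than details confirmed by the paper.

```python
import numpy as np

def async_step(state, update_fn, fire_rate, rng):
    """Apply update_fn to a random subset of cells; the rest keep their state."""
    delta = update_fn(state)
    # One Bernoulli draw per cell decides whether that cell updates this step.
    mask = rng.random(state.shape[:2]) < fire_rate
    return state + delta * mask[..., None]

def toy_update(state):
    # Hypothetical stand-in for the learned rule: move each cell all the way to 1.0.
    return 1.0 - state

rng = np.random.default_rng(0)
state = np.zeros((4, 4, 1))
once = async_step(state, toy_update, fire_rate=0.5, rng=rng)
```

After one step, each cell in `once` is either still 0.0 or has moved to 1.0, depending on its random draw. Because a learned rule cannot rely on all neighbours updating in lockstep, it is pushed towards solutions that tolerate timing noise.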

Evaluation on ARC tasks reveals mixed but informative performance. Of the 400 public training tasks examined, NCAs accurately and consistently solved 23 (5.75%), indicating partial efficacy given the diversity and complexity of the tasks.

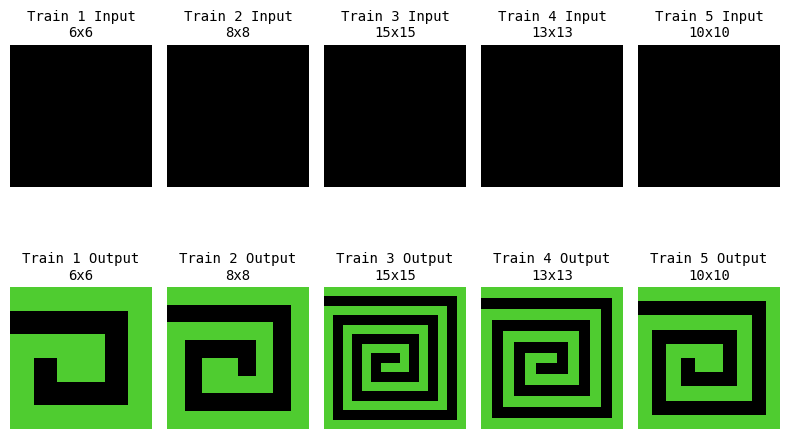

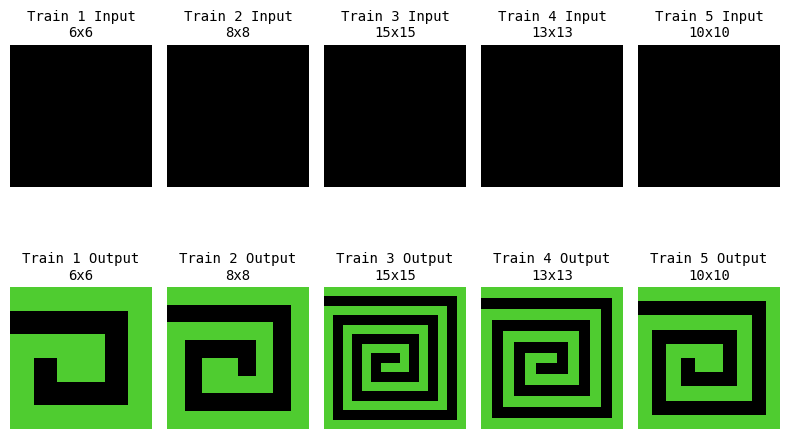

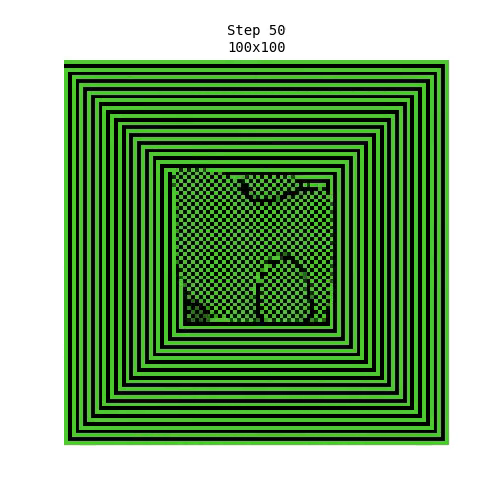

Figure 2: Task ID: 28e73c20. Training examples used to derive iterative rules for spiral construction that generalizes to larger grids.

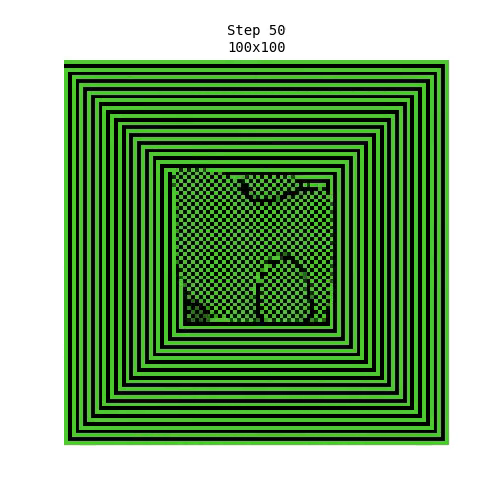

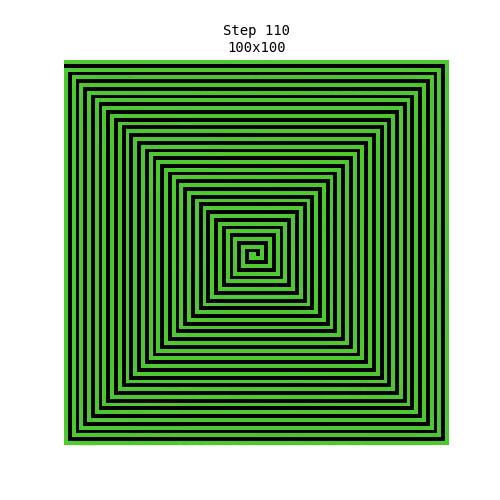

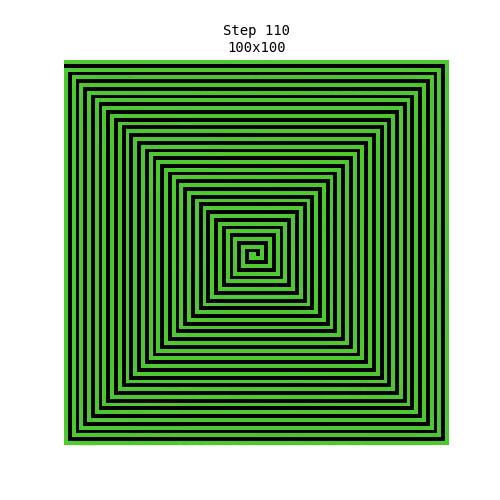

Figure 3: Spiral pattern generalized to a 100x100 grid, confirming that the learned iterative rule remains spatially coherent at a larger scale.

Specific tasks such as spiral drawing (Figure 3) highlight NCAs' aptitude for generalizing iterative updates within structured settings. However, NCAs show known limitations on tasks that demand global context propagation, and they overfit when the few available training samples do not suffice to generalize the full transformation.

Discussion and Future Directions

The study's findings accentuate the strengths of self-organizing computation, particularly in scenarios that rely on local reasoning. Yet they also confirm the rigidity of NCAs when a task requires a high degree of coordination or the representation of complex global transformations.

Future work should probe adaptive computation strategies and probabilistic learning frameworks to regulate solution complexity, possibly incorporating Bayesian inference methods. Evolving NCA architectural components to include features from advanced recurrent models could bridge current limitations and improve scalability to real-world applications beyond ARC.

The results underscore the importance of interpretability tools for understanding NCA behavior and argue for evaluation across a broader range of reasoning benchmarks to assess generalization more fully. The present observations invite research into finer-grained hyperparameter tuning, iterative rule evolution, and architectural enhancements for sustained performance gains.

Conclusion

Neural Cellular Automata present a compelling case for decentralized model architectures applicable to tasks requiring abstract and few-shot generalization. This exploration into their potential within ARC accentuates both their operational robustness and the current architecture’s confines. Continued innovation in self-organizing systems will be pivotal to advancing autonomous reasoning models and enhancing AI’s adaptive and interpretative capacities.