- The paper demonstrates a developmental approach using standard NCA and EngramNCA with memory mechanisms to tackle abstraction and reasoning in ARC tasks.

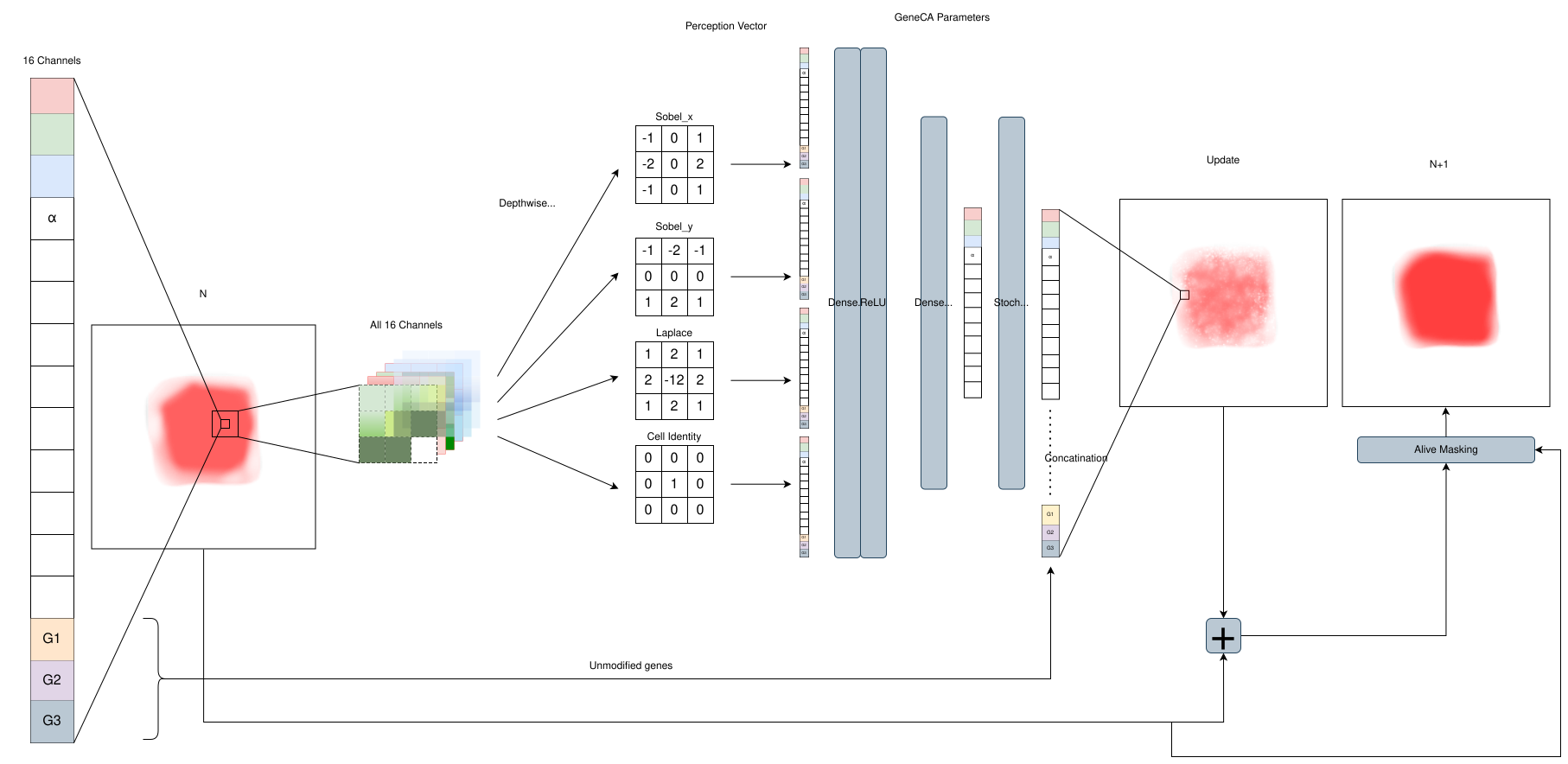

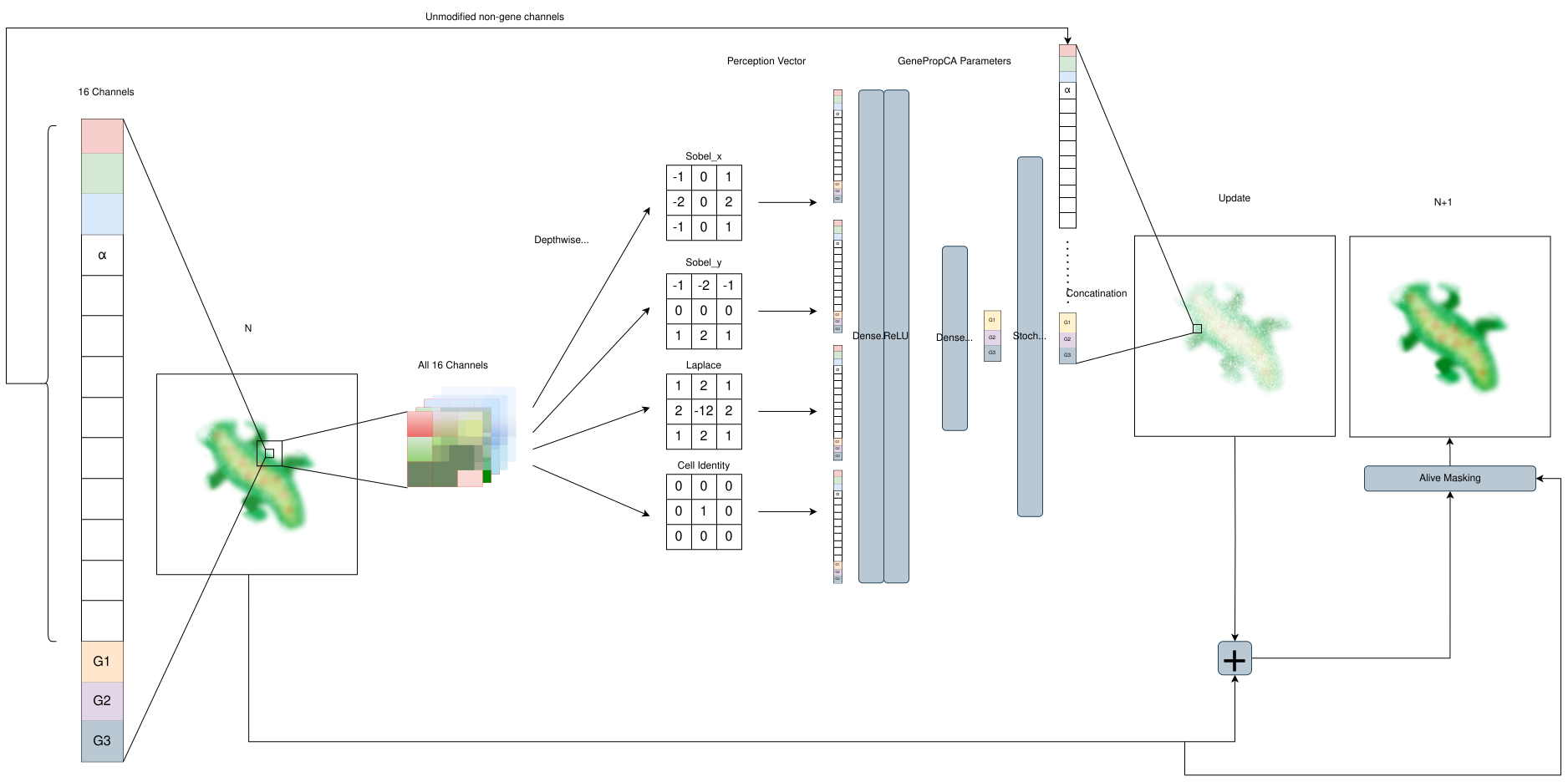

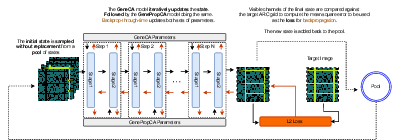

- It introduces modular architectures, GeneCA and GenePropCA, for encoding genetic primitives and propagating critical information across spatial lattices.

- Experimental results reveal that ARC-NCA models can match or exceed LLM performance on ARC puzzles while significantly reducing computational cost.

Developmental Neural Cellular Automata for ARC Reasoning: A Technical Essay on ARC-NCA

Introduction

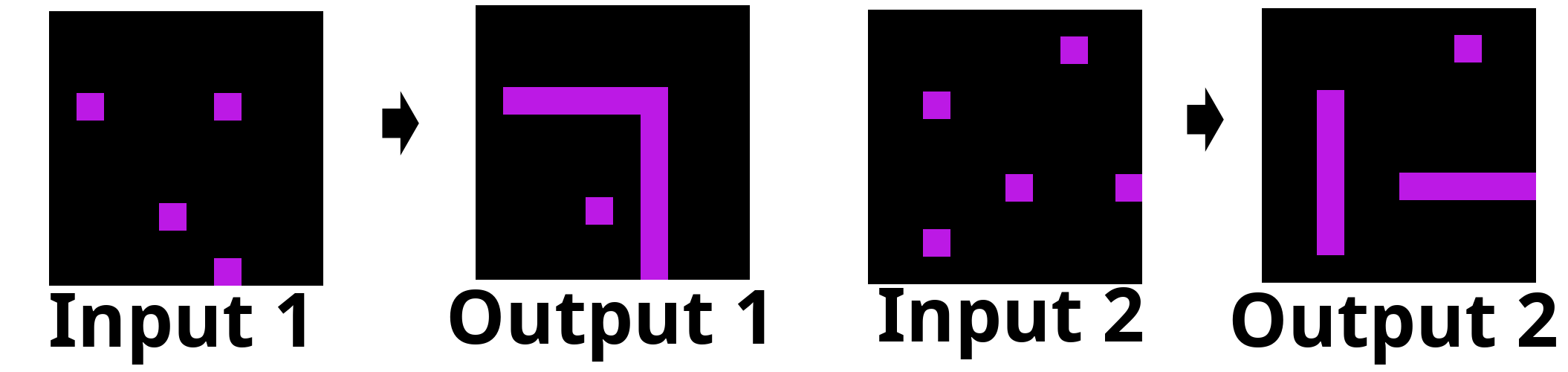

ARC-NCA presents a distinctly developmental approach to addressing the Abstraction and Reasoning Corpus (ARC), a benchmark designed to evaluate artificial general intelligence with stringent requirements for abstraction, generalization, and reasoning under few-shot supervision. This framework explores the capabilities of standard Neural Cellular Automata (NCA) and an enhanced variant, EngramNCA, which incorporates hidden memory mechanisms, for solving ARC tasks. The central motivation is to harness the emergent, self-organizing dynamics of NCAs to emulate cognitive processes observed during biological development, thereby advancing program synthesis and reasoning in computational models.

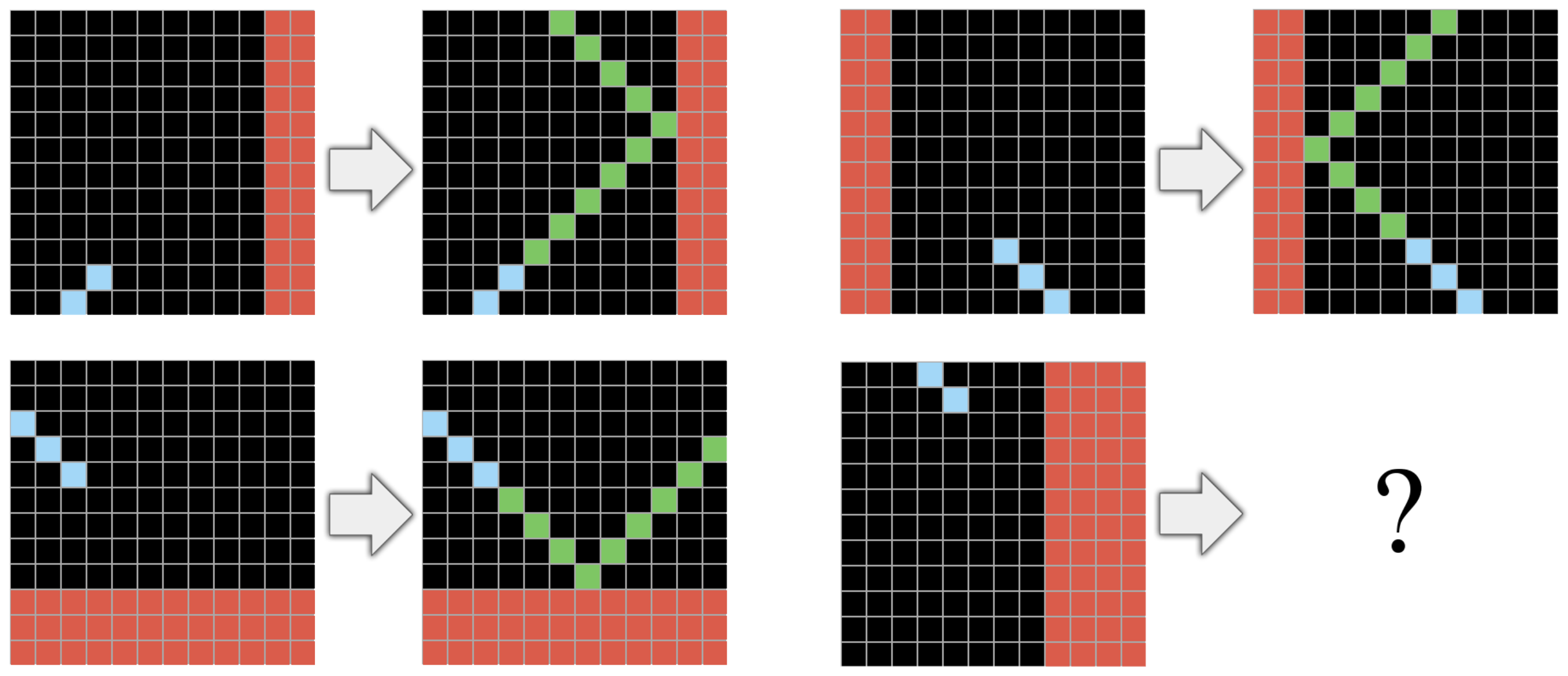

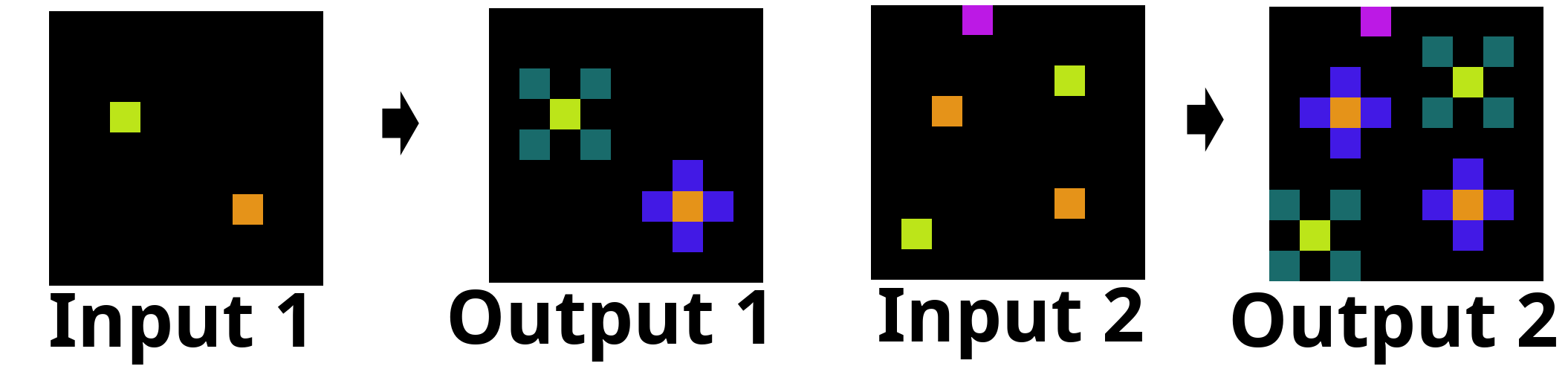

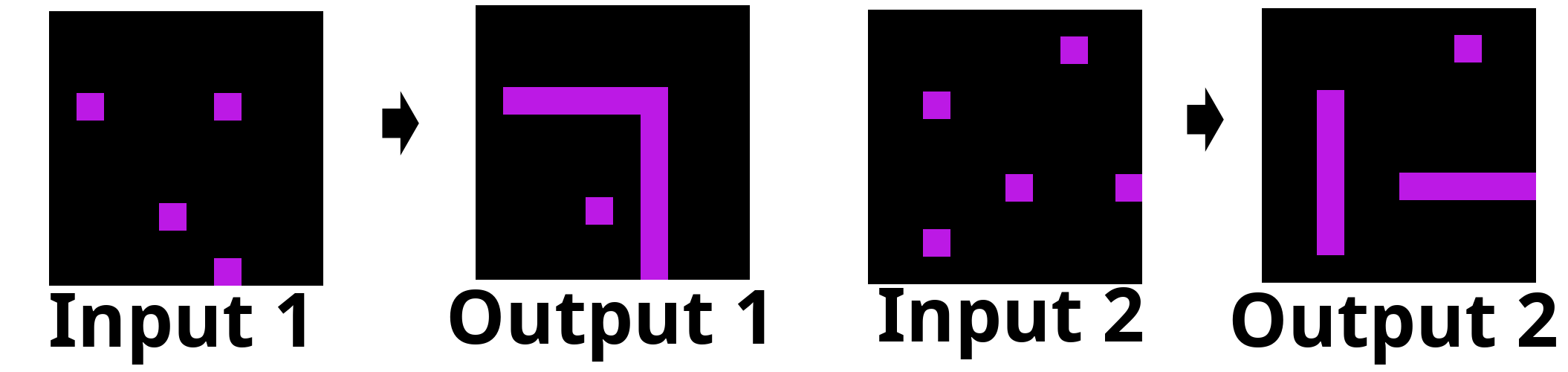

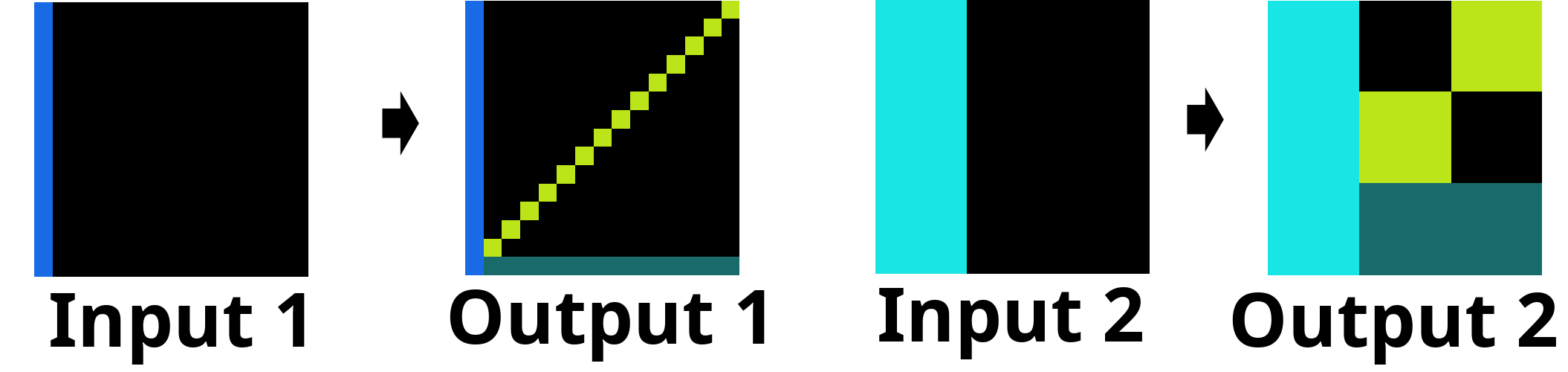

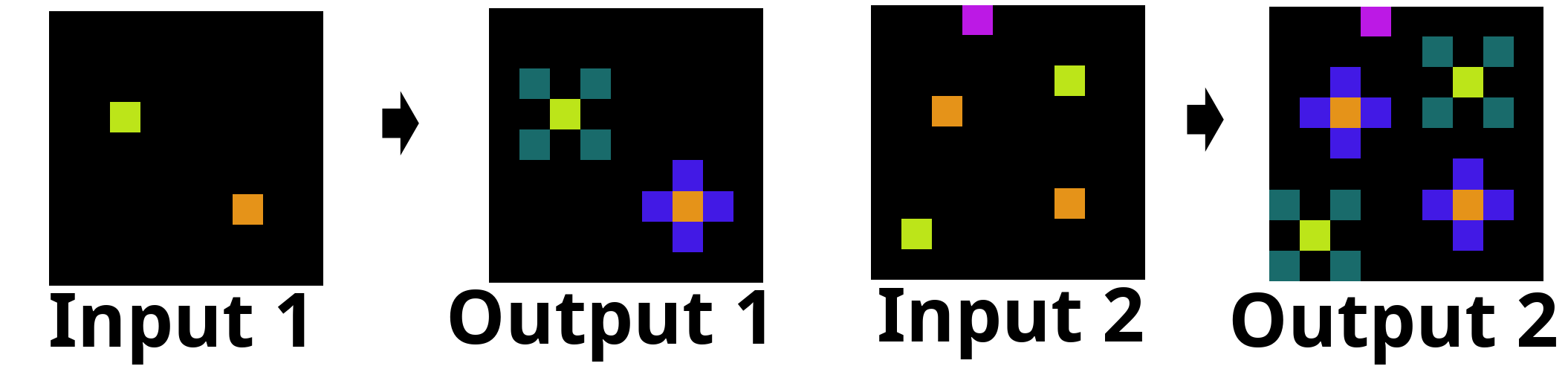

Figure 1: Example ARC task, demonstrating the complexity and variety of visual reasoning required.

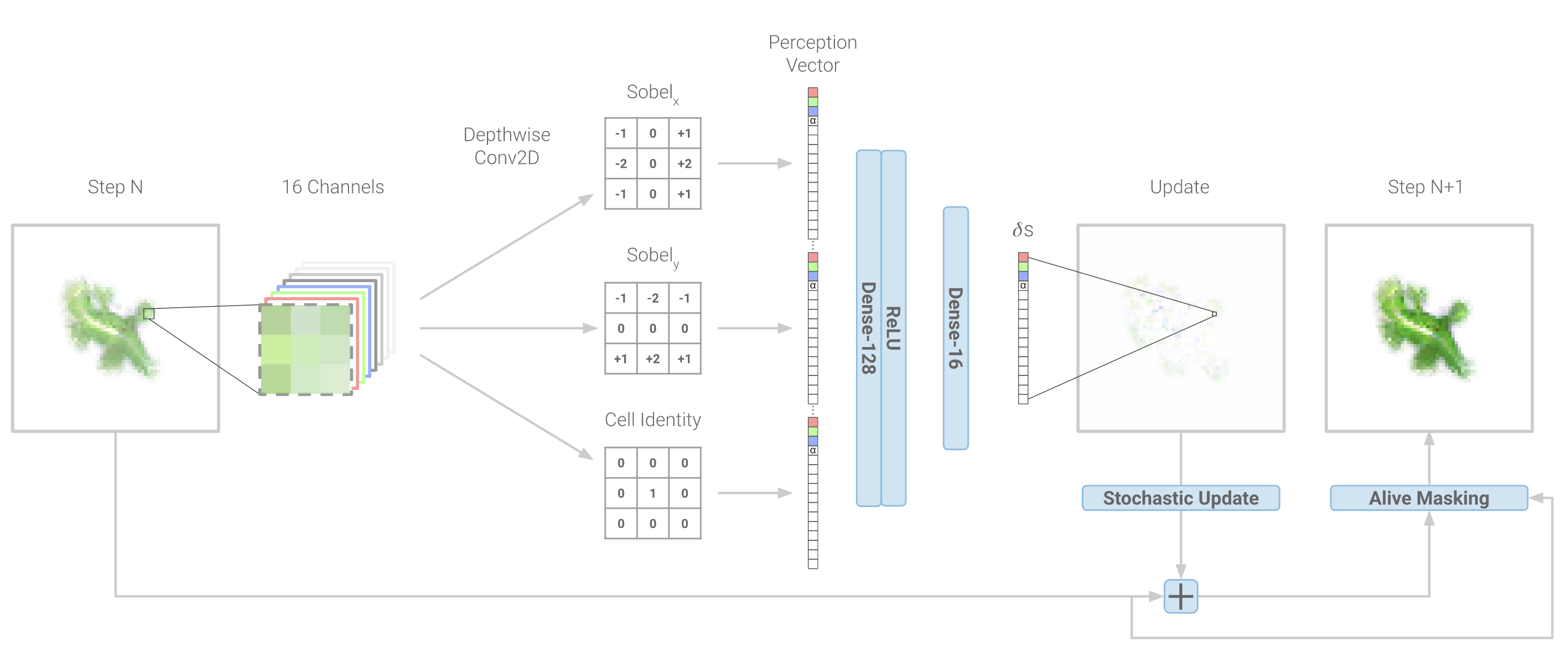

Neural Cellular Automata Architectures

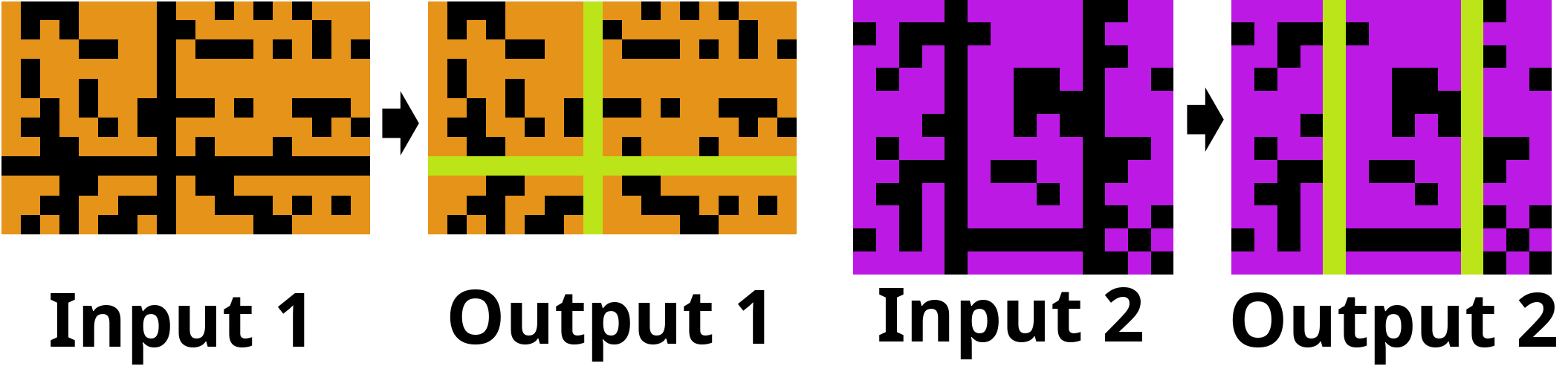

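The ARC-NCA method integrates two major NCA classes: the standard NCA and EngramNCA, an enhanced variant that incorporates hidden memory mechanisms.

EngramNCA is structured as an ensemble of two modules: GeneCA, which encodes genetic primitives, and GenePropCA, which propagates critical information across the spatial lattice.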

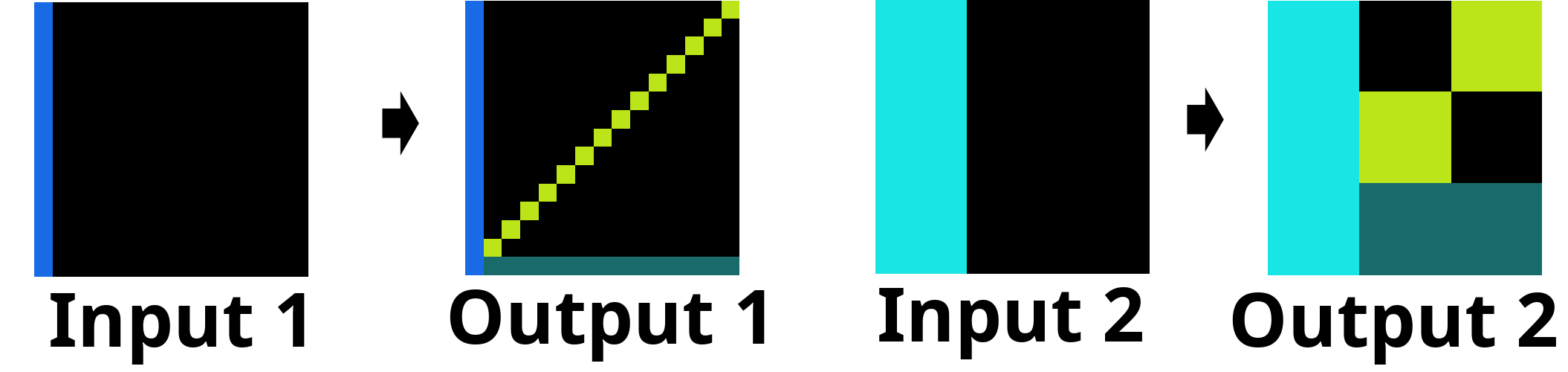

Multiple augmented variants of EngramNCA were evaluated (e.g., v2-v4). These introduce mechanisms such as learnable sensing filters (replacing the biologically inspired Sobel and Laplacian filters), attention-driven local-global processing, and toroidal or non-toroidal lattice behavior to accommodate ARC’s task variability.
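The fixed sensing stage that the learnable filters replace can be illustrated with a minimal NumPy sketch. The kernel values and normalization below are the ones commonly used in NCA work and are an assumption here, as are the function names `conv3x3` and `perceive`; the summarized paper may use different constants. The `toroidal` flag switches between wrap-around and zero-padded borders.

```python
import numpy as np

# Fixed 3x3 sensing kernels (assumed values, common in NCA literature).
SOBEL_X = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=float) / 8.0
SOBEL_Y = SOBEL_X.T
LAPLACIAN = np.array([[1, 2, 1], [2, -12, 2], [1, 2, 1]], dtype=float) / 16.0


def conv3x3(channel, kernel, toroidal):
    """Cross-correlate one 2D channel with a 3x3 kernel, keeping its shape."""
    # "wrap" gives a toroidal lattice; "constant" pads the border with zeros.
    mode = "wrap" if toroidal else "constant"
    padded = np.pad(channel, 1, mode=mode)
    h, w = channel.shape
    out = np.zeros((h, w), dtype=float)
    for dy in range(3):
        for dx in range(3):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out


def perceive(state, toroidal=True):
    """Map a (H, W, C) state to (H, W, 4*C) perception features.

    For each channel the features are: identity, Sobel-x, Sobel-y, Laplacian.
    """
    feats = []
    for c in range(state.shape[-1]):
        ch = state[..., c]
        feats += [
            ch,
            conv3x3(ch, SOBEL_X, toroidal),
            conv3x3(ch, SOBEL_Y, toroidal),
            conv3x3(ch, LAPLACIAN, toroidal),
        ]
    return np.stack(feats, axis=-1)
```

On a uniform lattice the toroidal version senses no gradients anywhere, while the zero-padded version sees an artificial edge at the border. This is one reason the choice of boundary behavior can matter for tasks whose patterns touch the grid edge.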

Methodology and Data Representation

ARC tasks (visual transformation problems using grids of color-coded integers) are mapped onto NCAs by converting integer grids into real-valued RGB-α lattices via HSL-based color quantization, with an extension for binary channel encoding. This preprocessing enables seamless integration with NCA frameworks, which operate inherently on image-like, continuously valued tensors.
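As a rough illustration of this preprocessing, the sketch below maps ARC integers to RGB-α vectors through evenly spaced hues and decodes a lattice back by nearest palette color. The palette (fixed lightness 0.5, saturation 1.0, alpha set to 1) and the names `build_palette`, `encode_grid`, and `decode_lattice` are assumptions for illustration, not the quantization used in the paper.

```python
import colorsys

import numpy as np

NUM_COLORS = 10  # ARC cells hold integers 0..9


def build_palette(num_colors=NUM_COLORS):
    """Hypothetical palette: evenly spaced hues, fixed lightness/saturation."""
    palette = np.zeros((num_colors, 4), dtype=np.float32)
    for k in range(num_colors):
        # colorsys takes arguments in (hue, lightness, saturation) order.
        r, g, b = colorsys.hls_to_rgb(k / num_colors, 0.5, 1.0)
        palette[k] = (r, g, b, 1.0)  # alpha = 1 is an assumed "live cell" flag
    return palette


def encode_grid(grid, palette):
    """Integer grid (H, W) -> real-valued lattice (H, W, 4)."""
    return palette[np.asarray(grid, dtype=int)]


def decode_lattice(lattice, palette):
    """Lattice (H, W, 4) -> integer grid, using the nearest palette RGB."""
    diff = lattice[..., None, :3] - palette[None, None, :, :3]
    return np.argmin((diff ** 2).sum(axis=-1), axis=-1)
```

Decoding by nearest color means a lattice that has drifted slightly from the exact palette values during NCA updates still maps back to a valid ARC integer.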

A key challenge is the variable input/output grid size within ARC. The solution employs either maximal grid padding (up to 30x30, with unique padding tokens) or grid exclusion for non-conforming examples, maintaining compatibility with NCA constraints.
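The maximal-padding option can be sketched as follows. The token value `10`, the top-left anchoring of the original grid, and the helper names `pad_to_max` and `unpad` are assumptions; the source only states that grids are padded up to 30x30 with a unique padding token.

```python
import numpy as np

MAX_SIZE = 30   # largest ARC grid side
PAD_TOKEN = 10  # hypothetical extra symbol, distinct from colors 0..9


def pad_to_max(grid, max_size=MAX_SIZE, pad_token=PAD_TOKEN):
    """Place the grid in the top-left corner of a max_size x max_size canvas."""
    grid = np.asarray(grid, dtype=int)
    h, w = grid.shape
    if h > max_size or w > max_size:
        raise ValueError(f"grid of shape {grid.shape} exceeds {max_size}x{max_size}")
    out = np.full((max_size, max_size), pad_token, dtype=int)
    out[:h, :w] = grid
    return out


def unpad(padded, pad_token=PAD_TOKEN):
    """Crop back to the smallest top-left rectangle holding all non-pad cells."""
    mask = padded != pad_token
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    if rows.size == 0:
        return padded[:0, :0]
    return padded[: rows[-1] + 1, : cols[-1] + 1]
```

Because the padding token is a separate symbol, the model can in principle learn where the output grid ends, which matters when input and output sizes differ.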

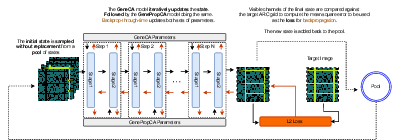

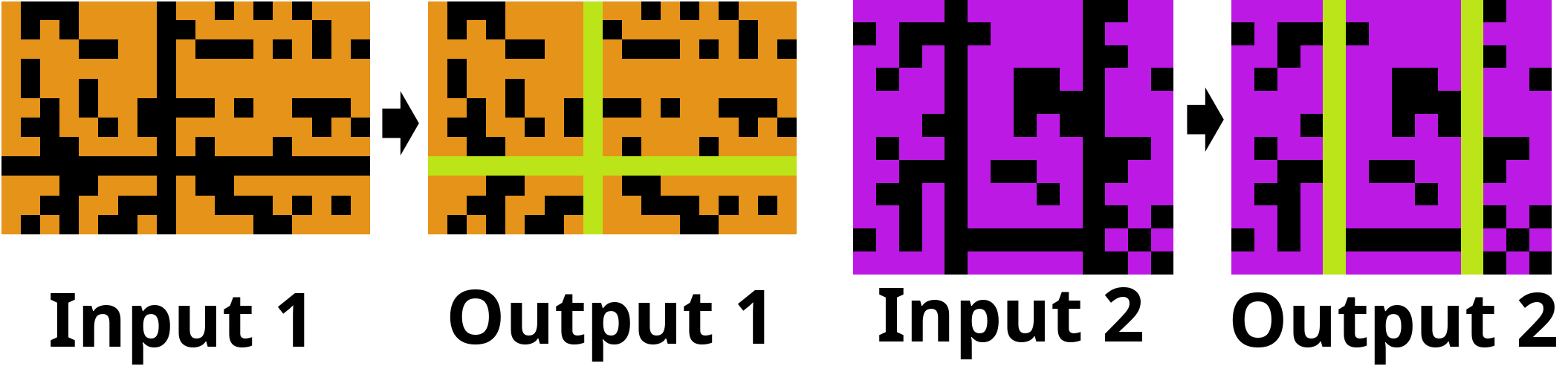

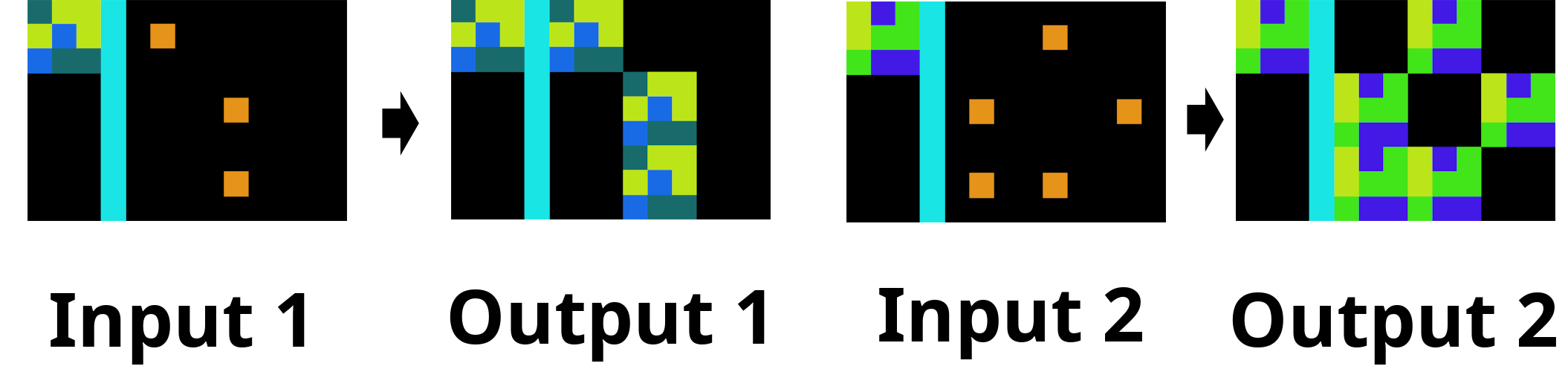

Figure 5: Backpropagation step in EngramNCA training, demonstrating gradient flow through GeneCA and GenePropCA for a single ARC task.

Each ARC problem is treated as a distinct training episode, with individual NCAs initialized and fine-tuned per problem ("test-time training") using available examples, mirroring the program synthesis paradigm.

Experimental Results

Across several ARC-NCA variants and unions thereof, the quantitative metrics were pixel-wise log-loss and direct problem solve rate. The best models (notably EngramNCA v3) achieved solve rates up to 12.9% under strict criteria, and union strategies raised this to 17.6%. These rates are comparable to those of state-of-the-art LLMs (ChatGPT 4.5 at roughly 10.3%), at roughly three orders of magnitude (~1000x) lower computational cost per task.
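A union solve rate counts a task as solved if at least one model in the group solves it. The sketch below shows that bookkeeping on made-up task identifiers; the model names, task ids, and counts are purely illustrative and are not results from the paper.

```python
def solve_rate(solved_ids, num_tasks):
    """Fraction of tasks solved by a single model."""
    return len(set(solved_ids)) / num_tasks


def union_solve_rate(solved_by_model, num_tasks):
    """Fraction of tasks solved by at least one model in the group."""
    union = set().union(*solved_by_model.values())
    return len(union) / num_tasks


# Toy data (hypothetical): which task ids each model solved out of 10 tasks.
NUM_TASKS = 10
solved_by_model = {
    "model_a": {"t1", "t2"},
    "model_b": {"t2", "t3"},
    "model_c": {"t4"},
}

best_single = max(solve_rate(ids, NUM_TASKS) for ids in solved_by_model.values())
union_rate = union_solve_rate(solved_by_model, NUM_TASKS)
```

The union rate can never fall below the best individual rate, and it grows only to the extent that the models solve different tasks. A gap between the two figures therefore indicates that the variants have complementary strengths.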

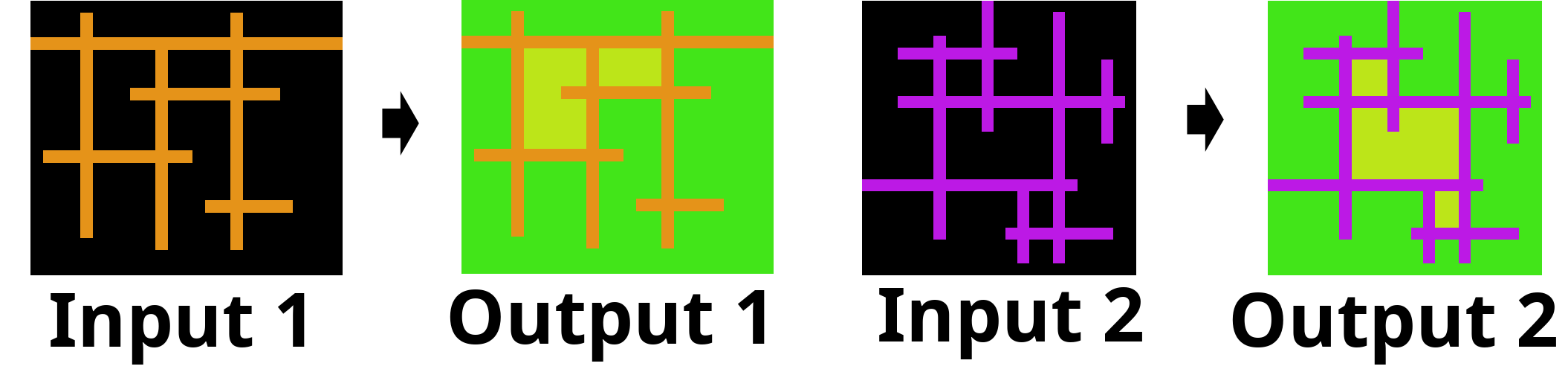

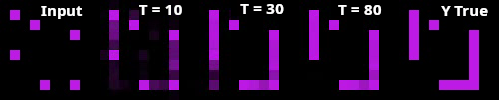

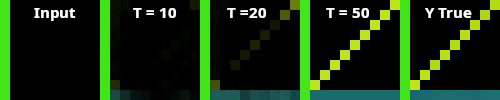

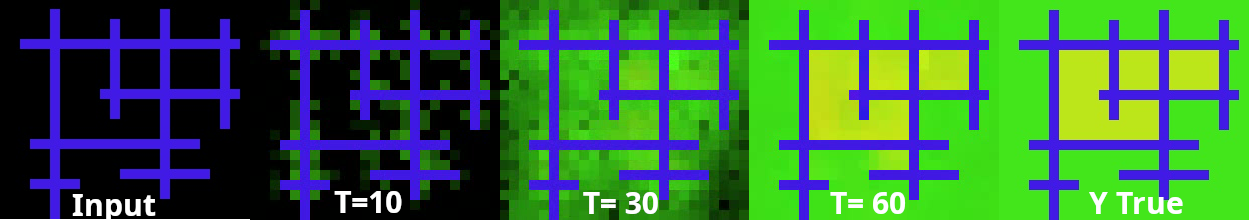

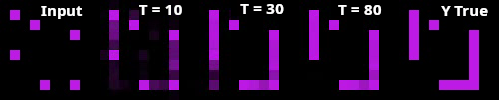

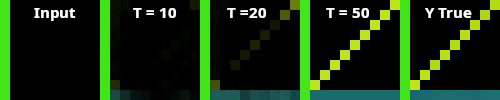

Qualitative analysis showed that NCAs build up the solution lattice incrementally and interpretably, consistent with developmental growth strategies, producing both exact and "almost solved" outputs.

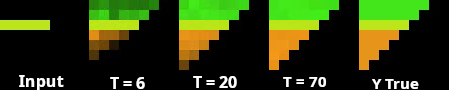

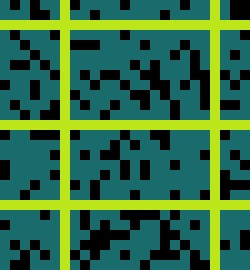

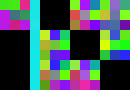

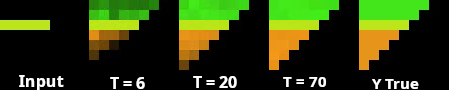

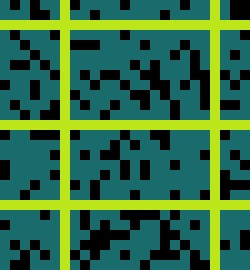

Figure 6: Example ARC solution generated by the standard NCA, demonstrating successful generalization to novel spatial locations.

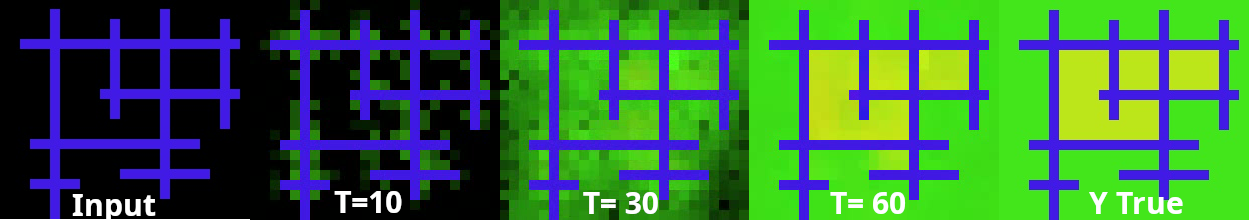

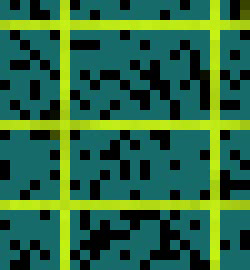

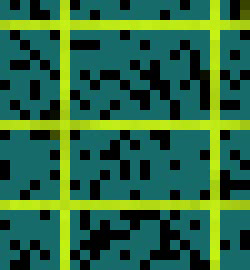

Figure 7: Example solution from EngramNCA v1, displaying intrinsic boundary-aware color filling and region differentiation.

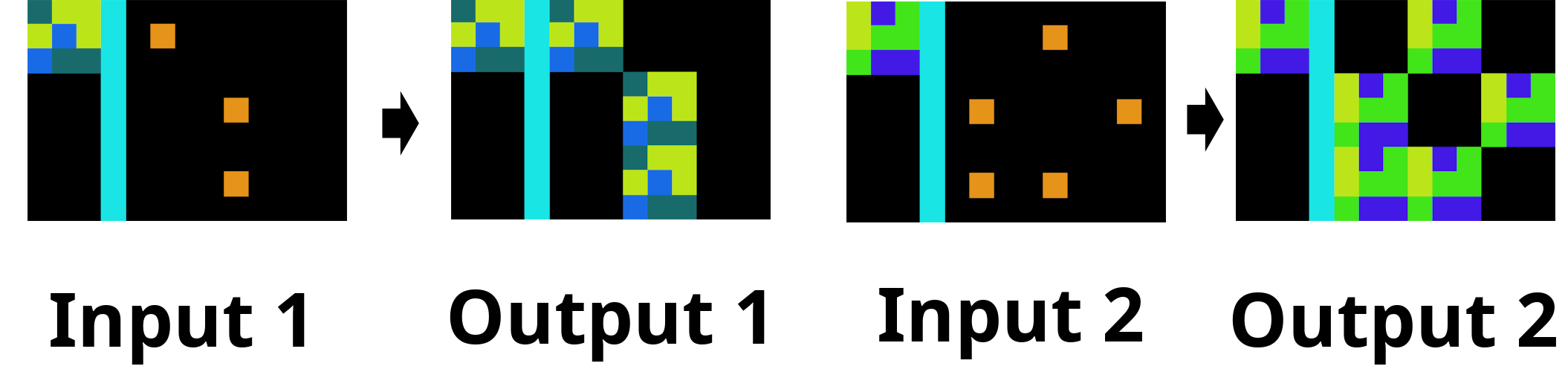

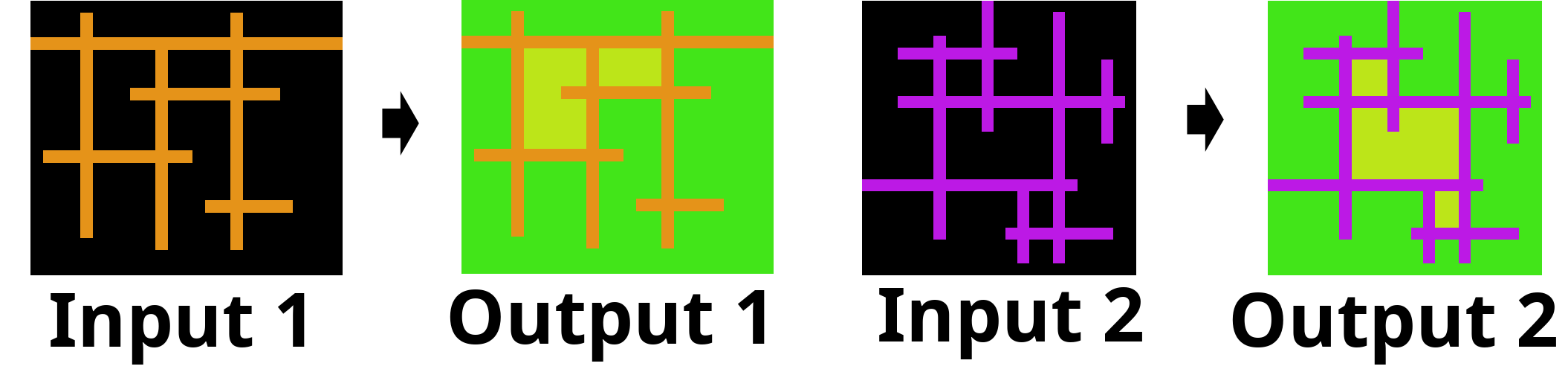

Figure 8: Example solution from EngramNCA v3, illustrating line growth and adaptive error correction at boundaries.

Figure 9: EngramNCA v4 solution, showing robust diagonal and horizontal line formation across grid sizes.

When loss thresholds are relaxed (to allow near-perfect solutions), solve rates increase: individual models approach 16–17%, while unions reach 24%. Furthermore, increasing hidden state dimensionality or employing maximal padding enables even higher coverage (up to 27%).
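The effect of relaxing the threshold can be sketched by treating a task as solved when its final loss falls at or below a cutoff. The loss definition here (base-10 logarithm of the mean squared error), the threshold values, and the task ids are all assumptions for illustration; the paper's exact criterion may differ.

```python
import math


def pixel_log_loss(pred, target, eps=1e-12):
    """Assumed metric: log10 of the mean squared error over all cell values."""
    flat_p = [v for row in pred for v in row]
    flat_t = [v for row in target for v in row]
    mse = sum((p - t) ** 2 for p, t in zip(flat_p, flat_t)) / len(flat_t)
    return math.log10(mse + eps)  # eps keeps the log finite for exact matches


def solved_tasks(losses, threshold):
    """Task ids whose final log-loss is at or below the threshold."""
    return {task_id for task_id, loss in losses.items() if loss <= threshold}


# Toy final losses per task (hypothetical values).
losses = {"t1": -9.0, "t2": -6.5, "t3": -2.0}
strict = solved_tasks(losses, threshold=-7.0)   # only near-exact outputs
relaxed = solved_tasks(losses, threshold=-6.0)  # also admits "almost solved"
```

Raising the threshold can only add tasks to the solved set, so the relaxed solve rate is an upper bound on the strict one for the same runs.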

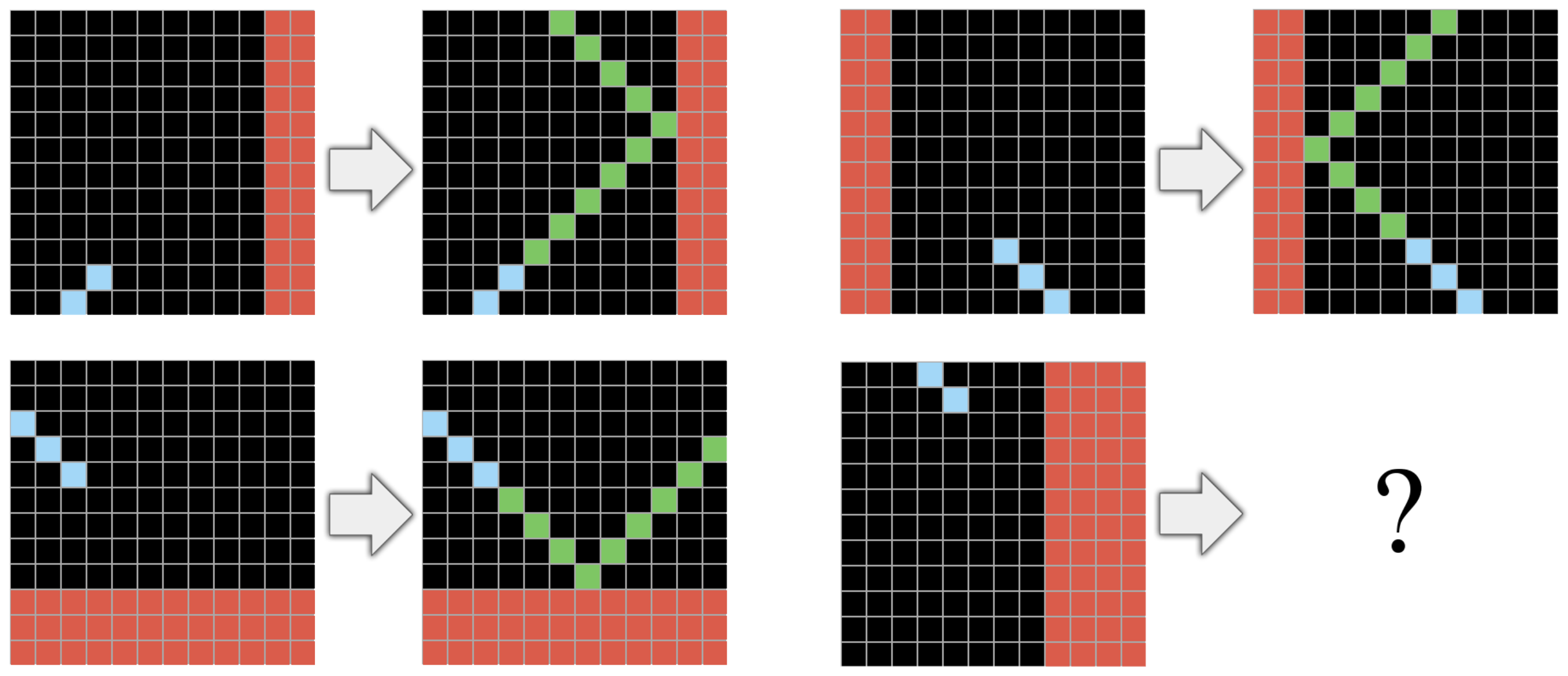

Visual inspection of partial and failed solutions exposes reasoning failure modes, such as incomplete generalization or local color misassignment, providing diagnostic insights for architectural refinement.

Figure 10: Input visual for analysis of partial solution failure modes.

Figure 11: Input demonstrating edge case reasoning pitfalls in EngramNCA v4 related to spatial patterning.

Figure 12: Input grid highlighting asynchronous NCA development and single-pixel loss errors.

Discussion and Implications

ARC-NCA demonstrates that developmental computation paradigms, specifically Neural Cellular Automata with embedded memory and regulatory mechanisms, may serve as viable alternatives to transformer-based architectures for abstraction and visual reasoning tasks that require rapid adaptation from limited examples. The program synthesis approach (per-task NCA fine-tuning) offers flexibility and interpretability, with potential synergies when combined with LLM-driven architectural search or error correction.

Strong claims substantiated by the study:

- ARC-NCA models can match or exceed the performance of major LLMs (e.g., ChatGPT 4.5) on ARC at roughly one thousandth of the computational cost.

- Partial solutions suggest even higher attainable accuracy, highlighting the potential for robust refinement and ensemble strategies.

- Developmental modularity (GeneCA/GenePropCA) facilitates the separation of low-level morphogenesis and high-level reasoning, a promising direction for symbolic and compositional learning tasks.

Practical implications encompass scalable, energy-efficient program synthesis for complex reasoning benchmarks, while theoretical implications pertain to the role of self-organized developmental computation in general intelligence.

With the advent of ARC-AGI-2, which evaluates advanced facets such as symbolic interpretation and compositional reasoning, ARC-NCA’s developmental principles may extend toward more challenging domains. The evidence supports further investigation into criticality pre-training, NCA/LLM integration, and latent-space developmental architectures.

Future Directions

- Pre-training strategies for developmental models: Criticality-based or latent abstraction pre-training to facilitate transfer and generalization.

- LLM/NCA hybrid reasoning: Hierarchical interaction where LLMs guide NCA architecture and hyperparameter selection or correct near-complete outputs.

- Latent representation NCAs: Tasking EngramNCA or similar models with abstraction at latent levels, enabling compositional and symbolic manipulation.

- Stability and reproducibility analyses: Multi-run statistics, robust union ensembles, and official ARC-AGI leaderboard submission for broad validation.

Conclusion

ARC-NCA introduces a principled, computationally efficient developmental framework for ARC reasoning, leveraging the local interaction and emergent pattern formation of Neural Cellular Automata. Comparative results underscore its competitiveness with LLMs at a far lower computational cost, together with its capacity for adaptive abstraction. This study motivates further inquiry into developmental computation for artificial general intelligence and highlights its value for visual reasoning benchmarks at the intersection of artificial life and deep learning.