- The paper introduces a novel neuro-symbolic architecture that builds multi-layer knowledge graphs to extract core knowledge from ARC tasks.

- It employs abductive filtering and a Domain-Specific Language for synthesizing interpretable solution programs.

- Experimental results demonstrate significant improvements in grid dimension and color prediction, validating the efficacy of combining symbolic reasoning with human-like abduction.

Abductive Symbolic Reasoning on ARC: Knowledge Graph Extraction, Core Knowledge Specification, and Program Synthesis

Introduction

"Abductive Symbolic Solver on Abstraction and Reasoning Corpus" (2411.18158) develops a neuro-symbolic reasoning architecture intended to make machine reasoning more logical, with a focus on the Abstraction and Reasoning Corpus (ARC). Unlike prior work that prioritizes direct grid transitions (often yielding opaque, sometimes spurious solutions), this solver mimics the abductive patterns of human problem-solving: it observes, hypothesizes, and justifies task-specific regularities in a way that keeps its decision path interpretable. The crux of the approach is the explicit symbolic representation of visual input as layered knowledge graphs that capture objects and their relations, followed by core knowledge extraction through abductive filtering and the synthesis of Domain-Specific Language (DSL) programs as solutions.

ARC and the Motivation for Symbolic, Abductive Reasoning

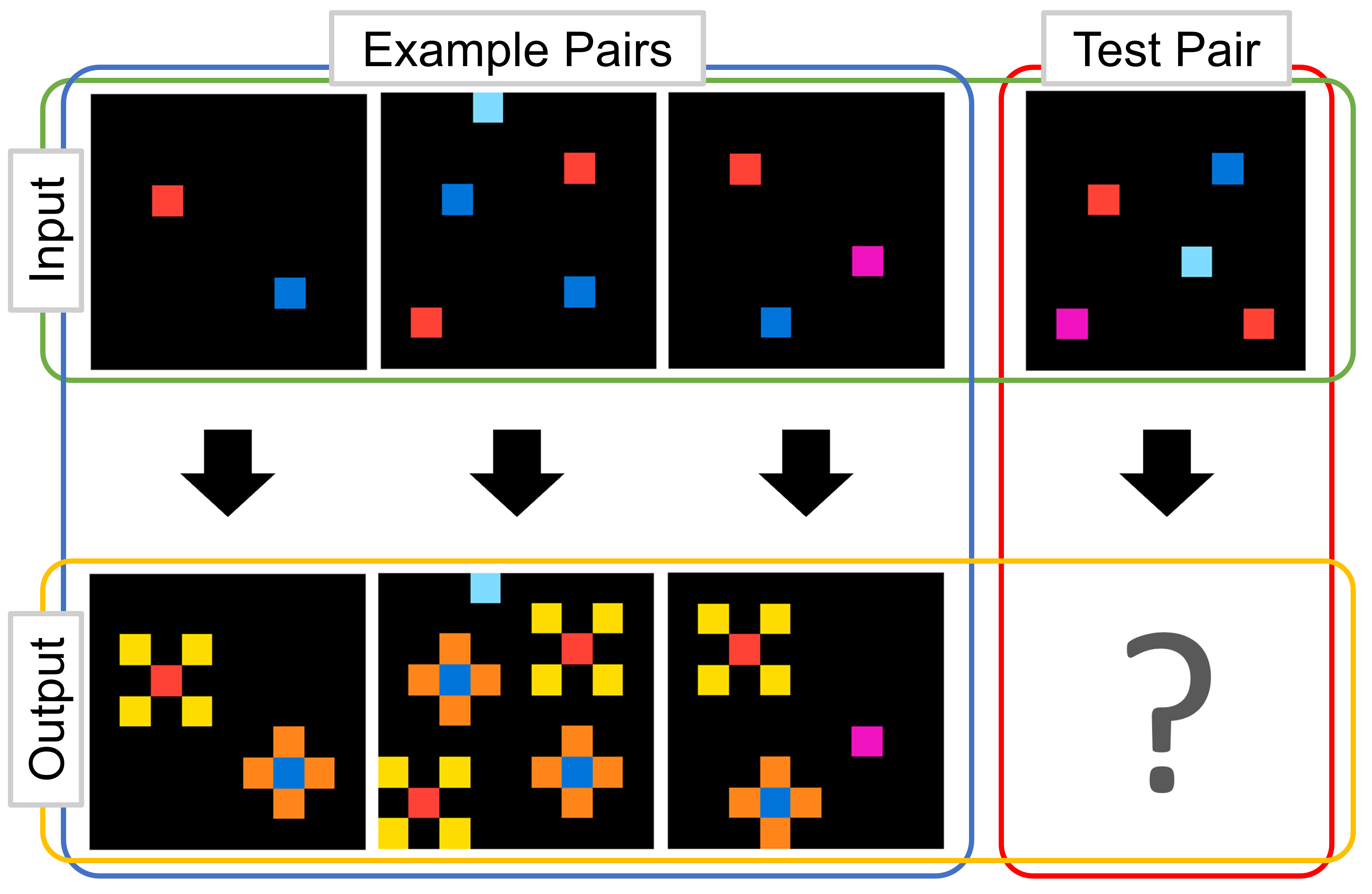

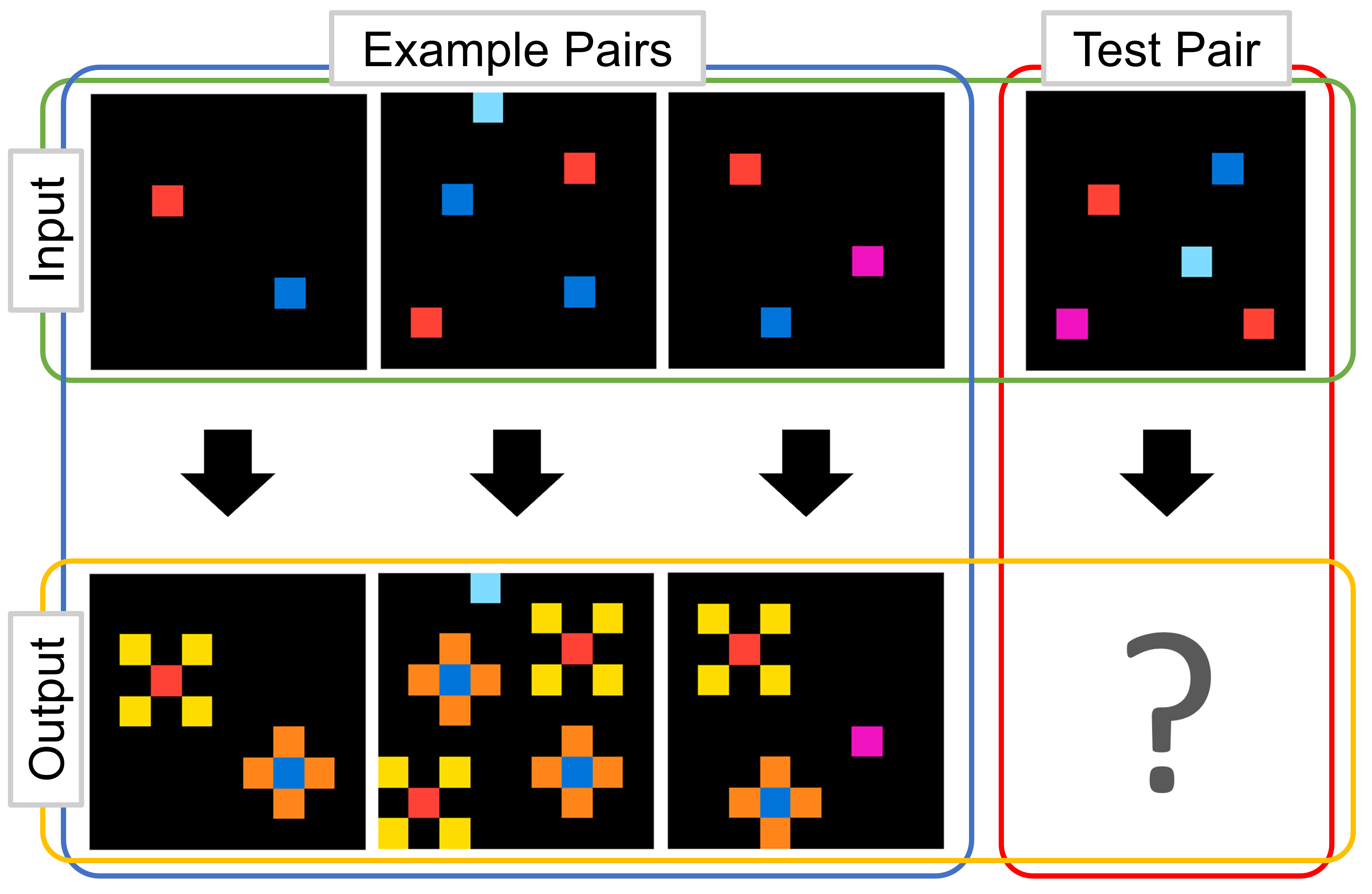

ARC poses a collection of complex visual reasoning tasks, each supplied with only a handful of input-output grid pairs. The solver must extract an underlying transformation or principle and apply it to a withheld test input. Human proficiency in such tasks rests partly on our capacity for abduction: observing effects, hypothesizing causes, and choosing explanations consistent with limited data. ARC tasks typically require sensitivity to "core knowledge" priors (objectness, goal-directedness, numeracy, and spatial topology). Previous symbolic approaches, especially those leveraging DSLs [hodeldsl, icecuber], excelled by maintaining explicit referents for these concepts; however, they lacked systematic frameworks for hypothesis filtering or feature abduction. Transformer-based black-box solutions, conversely, offer little means of explaining or debugging their behavior.

Abductive Symbolic ARC Solver: System Architecture

The solver consists of three sequential modules:

- Knowledge Graph Construction: Each provided ARC example pair is converted into a four-layer knowledge graph. The layers progress from pixel nodes (Pnodes), to objects (Onodes), to grids (Gnodes), and finally to input-output grid pairs (Vnodes). Nodes are annotated with symbolic properties via a set of Property DSLs, and edges capture key relationships (e.g., spatial containment, shared color, adjacency).

- Core Knowledge Extraction (Specifier): By intersecting over all example pairs, the Specifier isolates features and object candidates that recur across the observed transitions. This abductive step implements targeted filtering: only those nodes whose features and relational signatures persist become “core knowledge”, serving as ultimate arguments for synthesized solution programs.

- Symbolic Solution Synthesis (Synthesizer): Using the output from the Specifier, the Synthesizer performs a brute-force search for compositions of Transformation DSL primitives—operations such as get_height, get_width, get_number_of_colorset—to map the input to the output grid. Hypotheses are paths in a search tree, whose leaves represent solution candidates. Only paths validated across all example pairs are retained, ensuring the synthesized program generalizes maximally under minimal supervision.

Figure 1: Example ARC task, requiring solvers to extract and generalize a transformational pattern given limited input-output pairs.

The construction and filtering pipeline produces a compressed and explicable search space, supporting both the efficiency of the symbolic program synthesis and the interpretability of the resulting solutions.
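The Synthesizer's validate-on-every-pair search can be illustrated with a minimal sketch. The primitive names `get_height`, `get_width`, and `get_number_of_colorset` come from the text, but their bodies here are assumptions, and `double`, `increment`, `run_path`, and `synthesize` are hypothetical helpers introduced only for illustration. For brevity the sketch applies primitives directly to raw grids rather than to core knowledge supplied by the Specifier, so it is not the paper's implementation.

```python
from itertools import product
from typing import Callable, Dict, List, Optional, Sequence, Tuple

Grid = List[List[int]]

# Grid-level primitives named in the text; the bodies are assumptions.
def get_height(grid: Grid) -> int:
    return len(grid)

def get_width(grid: Grid) -> int:
    return len(grid[0]) if grid else 0

def get_number_of_colorset(grid: Grid) -> int:
    return len({color for row in grid for color in row})

# Hypothetical integer primitives, added only so that paths can be composed.
def double(n: int) -> int:
    return 2 * n

def increment(n: int) -> int:
    return n + 1

ROOT_DSLS: Dict[str, Callable[[Grid], int]] = {
    "get_height": get_height,
    "get_width": get_width,
    "get_number_of_colorset": get_number_of_colorset,
}
STEP_DSLS: Dict[str, Callable[[int], int]] = {"double": double, "increment": increment}

def run_path(path: Tuple[str, ...], grid: Grid) -> int:
    """Apply the root primitive to the grid, then each step primitive in order."""
    value = ROOT_DSLS[path[0]](grid)
    for name in path[1:]:
        value = STEP_DSLS[name](value)
    return value

def synthesize(
    examples: Sequence[Tuple[Grid, int]], max_depth: int = 3
) -> Optional[Tuple[str, ...]]:
    """Return the shortest path (at most max_depth primitives) that reproduces
    the target on every example pair, or None if no such path exists."""
    for extra_steps in range(max_depth):  # shorter programs are tried first
        for root in ROOT_DSLS:
            for tail in product(STEP_DSLS, repeat=extra_steps):
                path = (root, *tail)
                if all(run_path(path, grid) == target for grid, target in examples):
                    return path
    return None

# Toy task: the target is twice the input height.
examples = [
    ([[1, 0, 0], [0, 1, 0]], 4),
    ([[2, 2, 2], [0, 0, 0], [2, 0, 2]], 6),
]
print(synthesize(examples))  # ('get_height', 'double')
```

Because a path is kept only if it matches every example, a primitive that fits one pair by coincidence is discarded. The depth bound keeps the enumeration finite.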

Technical Details: DSL Taxonomy, Knowledge Graph Layers, and Abductive Search

The employed DSLs are methodically categorized as either Property DSLs (for annotating graph nodes and generating edges) or Transformation DSLs (used in program synthesis to predict outputs). The data types managed include several node types (Pnode, Onode, Gnode, Vnode), Edge, Color, and various list and set structures for efficient symbolic manipulation.

The four-layer structure serves to modularize abstraction:

- Pnodes index pixels,

- Onodes index objects formed from connected pixel regions,

- Gnodes abstract over an entire grid,

- Vnodes link input and output graphs for each example.

Edges annotate relationships at every layer, and their number can reach the millions for complex grids.
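The layering can be sketched with a few small data classes. The class names mirror the node types in the text, but the fields, the 4-connectivity rule, the background color `0`, and the helpers `build_gnode`, `build_vnode`, and `same_color_edges` are all assumptions made for illustration. The paper's Property DSLs annotate far more than is shown here.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

Grid = List[List[int]]

@dataclass(frozen=True)
class Pnode:  # one pixel
    row: int
    col: int
    color: int

@dataclass
class Onode:  # one object: a connected region of same-colored pixels
    pixels: List[Pnode]

    @property
    def color(self) -> int:
        return self.pixels[0].color

    @property
    def size(self) -> int:
        return len(self.pixels)

@dataclass
class Gnode:  # one whole grid
    height: int
    width: int
    objects: List[Onode]

@dataclass
class Vnode:  # one input-output example pair
    input_grid: Gnode
    output_grid: Gnode

def build_gnode(grid: Grid, background: int = 0) -> Gnode:
    """Group non-background pixels into 4-connected, same-color objects."""
    height = len(grid)
    width = len(grid[0]) if grid else 0
    seen: Set[Tuple[int, int]] = set()
    objects: List[Onode] = []
    for r in range(height):
        for c in range(width):
            if (r, c) in seen or grid[r][c] == background:
                continue
            color = grid[r][c]
            stack = [(r, c)]
            seen.add((r, c))
            pixels: List[Pnode] = []
            while stack:  # iterative flood fill
                y, x = stack.pop()
                pixels.append(Pnode(y, x, color))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < height and 0 <= nx < width
                    if inside and (ny, nx) not in seen and grid[ny][nx] == color:
                        seen.add((ny, nx))
                        stack.append((ny, nx))
            objects.append(Onode(pixels))
    return Gnode(height, width, objects)

def build_vnode(input_grid: Grid, output_grid: Grid) -> Vnode:
    return Vnode(build_gnode(input_grid), build_gnode(output_grid))

def same_color_edges(gnode: Gnode) -> List[Tuple[int, int]]:
    """One example relation: index pairs of distinct objects sharing a color."""
    objs = gnode.objects
    return [
        (i, j)
        for i in range(len(objs))
        for j in range(i + 1, len(objs))
        if objs[i].color == objs[j].color
    ]
```

For instance, `build_gnode([[1, 1, 0], [0, 0, 2], [1, 0, 2]])` yields three objects in this sketch, and `same_color_edges` links the two objects of color 1.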

The Specifier’s intersectional filtering is motivated by the abductive restriction principle: only explanations that minimally and consistently account for all observed phenomena are considered. In practice, this yields constraints that guard against spurious feature matches and overfitted solutions. The Synthesizer’s program search is bounded by the specified DSL set and a maximum path depth, and the resulting programs are interpretable because each one is an explicit composition of symbolic primitives.
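The intersectional filtering can be sketched as a set intersection over per-pair node signatures. Everything below is an assumption made for illustration: nodes are plain dictionaries of properties, and `signature`, `specify`, and `select_core_nodes` are hypothetical names rather than the paper's API.

```python
from typing import Dict, FrozenSet, List, Sequence, Set, Tuple

Node = Dict[str, object]  # hypothetical node record: property name -> value
Signature = FrozenSet[Tuple[str, object]]

def signature(node: Node, keys: Sequence[str]) -> Signature:
    """Project a node onto the chosen properties (values must be hashable)."""
    return frozenset((key, node[key]) for key in keys)

def specify(pairs: Sequence[Sequence[Node]], keys: Sequence[str]) -> Set[Signature]:
    """Keep only the signatures that occur in every example pair."""
    per_pair = [{signature(node, keys) for node in nodes} for nodes in pairs]
    if not per_pair:
        return set()
    return set.intersection(*per_pair)

def select_core_nodes(
    nodes: Sequence[Node], core: Set[Signature], keys: Sequence[str]
) -> List[Node]:
    """Return the nodes of one pair whose signature survived the intersection."""
    return [node for node in nodes if signature(node, keys) in core]

# Toy data: two example pairs, each with two candidate objects.
pairs = [
    [
        {"color": 1, "size": 4, "touches_border": True},
        {"color": 2, "size": 1, "touches_border": False},
    ],
    [
        {"color": 1, "size": 9, "touches_border": True},
        {"color": 3, "size": 2, "touches_border": False},
    ],
]
keys = ("color", "touches_border")
core = specify(pairs, keys)
```

Here only the color-1, border-touching object recurs in both pairs, so it alone would be passed on as an argument candidate. Objects that match in a single pair are dropped.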

Figure 2: Schematic depiction of the ARC knowledge graph, showing multi-layered node/edge hierarchy for a single grid pair.

Figure 3: Visual summary of the symbolic solution synthesis process, illustrating how core knowledge and transformation paths are systematically combined and pruned.

Experimental Results and Quantitative Evaluation

Two key hypotheses were evaluated:

- H1: Symbolic knowledge graphs improve human-like performance and solution quality.

- H2: Increasing the set of Transformation DSLs used by the Synthesizer enhances solver accuracy.

The experimental protocol involved two settings: one using the full knowledge graph pipeline and another ablated condition without graph construction (directly applying DSLs to grid elements). Metrics of interest included accuracy on three major ARC targets: grid height, grid width, and color set prediction.

The reported results favor the knowledge graph pipeline on every target:

- For grid dimension prediction (height/width), the knowledge graph approach achieved approximately 91% accuracy, compared with 80% for the baseline.

- For color set prediction, a proxy for deeper symbolic understanding, the knowledge graph method scored 74.5% versus 40.5% without the knowledge graph, highlighting the significance of relational “core knowledge” in non-trivial cases.

- The combined targets (height and width, HW; height, width, and color set, HWC) show similar, consistent improvements.

Moreover, increasing the number of available Transformation DSLs (from 5 to 10) yielded a substantial boost, especially in tasks involving composite targets: multi-DSL Synthesizers achieved up to 66.5% accuracy in joint height/width/color tasks, while restricted DSLs yielded just 21%. This demonstrates not only the necessity of a well-equipped symbolic vocabulary but also the value of compositional abductive search.
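The per-target and joint accuracies above can be computed as in the following sketch. It assumes that a joint target such as HW or HWC counts as correct only when every component prediction is correct. That scoring rule, the record layout, and the `accuracy` helper are assumptions rather than details confirmed by the source, and the toy records are not the paper's data.

```python
from typing import Dict, Sequence

Record = Dict[str, object]  # hypothetical per-task record: target name -> value

def accuracy(
    predictions: Sequence[Record], truths: Sequence[Record], targets: Sequence[str]
) -> float:
    """Fraction of tasks on which all listed targets are predicted correctly."""
    if not truths:
        return 0.0
    correct = sum(
        all(pred[t] == truth[t] for t in targets)
        for pred, truth in zip(predictions, truths)
    )
    return correct / len(truths)

# Toy records for two tasks (illustrative only).
predictions = [
    {"height": 3, "width": 3, "colors": frozenset({1, 2})},
    {"height": 2, "width": 5, "colors": frozenset({1})},
]
truths = [
    {"height": 3, "width": 3, "colors": frozenset({1})},
    {"height": 2, "width": 4, "colors": frozenset({1})},
]

hw = accuracy(predictions, truths, ("height", "width"))
hwc = accuracy(predictions, truths, ("height", "width", "colors"))
```

Under this rule a joint score can never exceed the lowest of its component scores, which may help explain why the composite HWC target is the hardest to satisfy.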

Figure 4: Experimental schema for DSL-based solution search without knowledge graph, omitting symbolic core knowledge extraction and resulting in observable accuracy loss.

Figure 5: Comparison of solver performances with and without knowledge graph, indicating clear benefit of explicit symbolic structure for color and multidimensional predictions.

Implications and Future Directions

The proposed abductive symbolic solver reifies the stages of human-like reasoning into a modular system: explicit graph-based feature abstraction, intersectional filtering via abduction, and program induction over compositional DSLs. This yields not only transparent solutions readily amenable to inspection or debugging, but also empirical improvements on 400 diverse ARC tasks. The strong performance even with a naive, depth-limited Synthesizer suggests considerable headroom: expansion of the DSL set, integration of richer graph representations, and possible hybridization with neural modules could further enhance generality and robustness.

Practically, this architecture is relevant for visual reasoning agents where interpretability, reliability, and minimum-data generalization are requisite—settings ranging from scientific discovery to automated theorem proving and next-generation AI benchmarks. Theoretically, it offers a systematic framework for incorporating core-knowledge priors and logical abduction into neuro-symbolic reasoning systems, providing a research template for more cognitively-aligned machine intelligence.

Conclusion

This paper advances the state of the art in symbolic reasoning on ARC by placing abduction and core knowledge extraction at the center of the solution pipeline. The multi-layer knowledge graph, the Specifier, and the compositional Synthesizer together yield a system that performs well on the reported benchmarks and is interpretable by construction. The empirical results underscore the importance of explicit symbolic structure and abduction-driven constraint induction in tasks that demand human-like generalization from scarce data. Future work should explore extending these principles to content prediction, more sophisticated relational DSLs, and integration into scalable neuro-symbolic architectures.