RocketStack: Level-aware deep recursive ensemble learning framework with adaptive feature fusion and model pruning dynamics

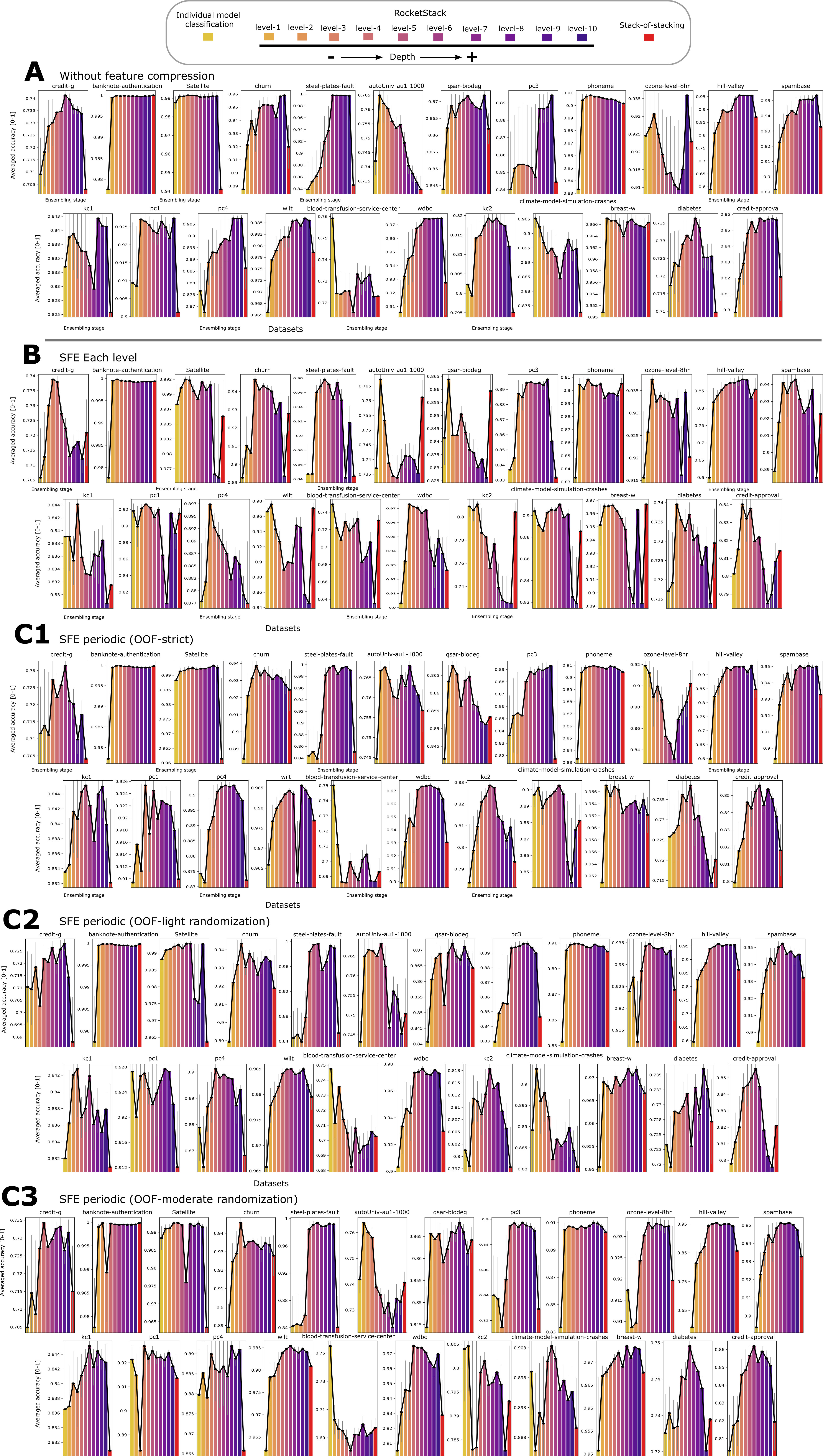

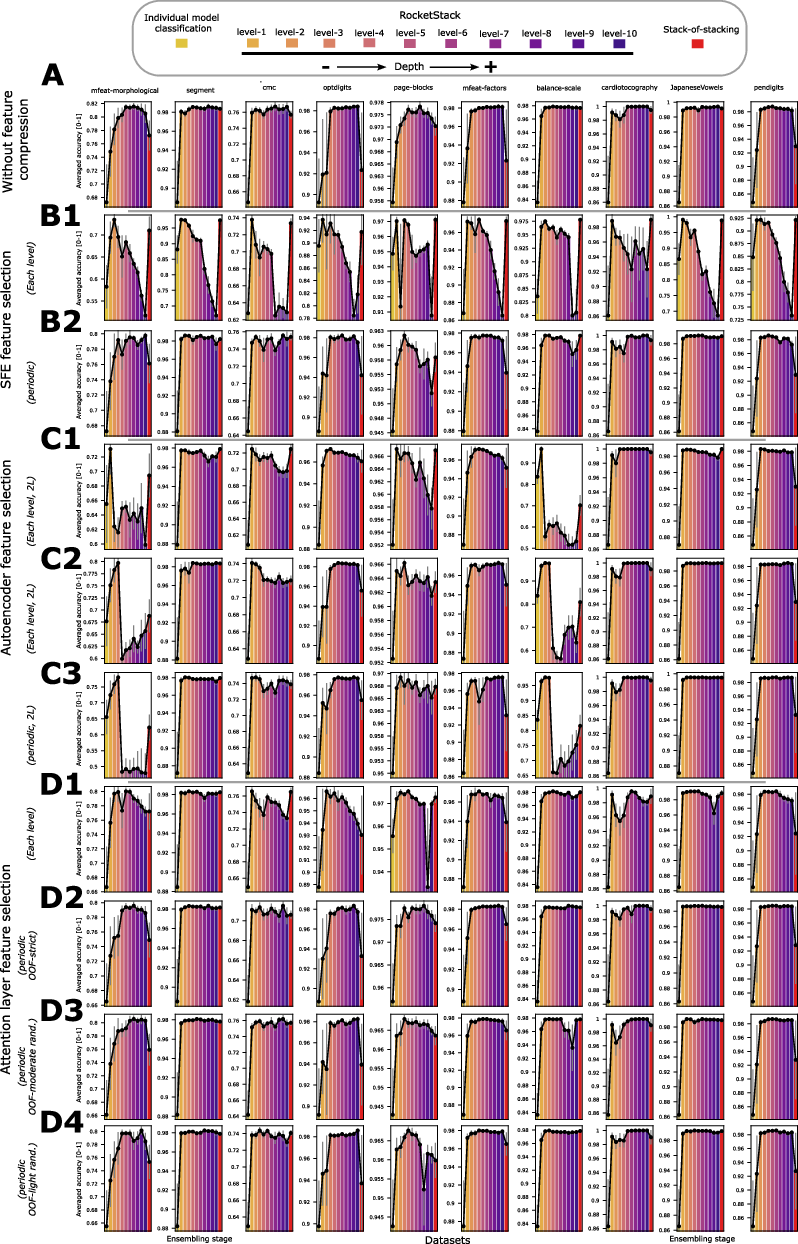

Abstract: Ensemble learning remains a cornerstone of machine learning, with stacking used to integrate predictions from multiple base learners through a meta-model. However, deep stacking remains rare, as most designs prioritize horizontal diversity over recursive depth due to model complexity, feature redundancy, and computational burden. To address these challenges, RocketStack, a level-aware recursive ensemble framework, is introduced and explored up to ten stacking levels, extending beyond prior architectures. The framework incrementally prunes weaker learners at each level, enabling deeper stacking without excessive complexity. To mitigate early performance saturation, mild Gaussian noise is added to out-of-fold (OOF) scores before pruning, and compared against strict OOF pruning. Further both per-level and periodic feature compressions are explored using attention-based selection, Simple, Fast, Efficient (SFE) filter, and autoencoders. Across 33 datasets (23 binary, 10 multi-class), linear-trend tests confirmed rising accuracy with depth in most variants, and the top performing meta-model at each level increasingly outperformed the strongest standalone ensemble. In the binary subset, periodic SFE with mild OOF-score randomization reached 97.08% at level 10, 5.14% above the strict-pruning configuration and cut runtime by 10.5% relative to no compression. In the multi-class subset, periodic attention selection reached 98.60% at level 10, exceeding the strongest baseline by 6.11%, while reducing runtime by 56.1% and feature dimensionality by 74% compared to no compression. These findings highlight mild randomization as an effective regularizer and periodic compression as a stabilizer. Echoing the design of multistage rockets in aerospace (prune, compress, propel) RocketStack achieves deep recursive ensembling with tractable complexity.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.