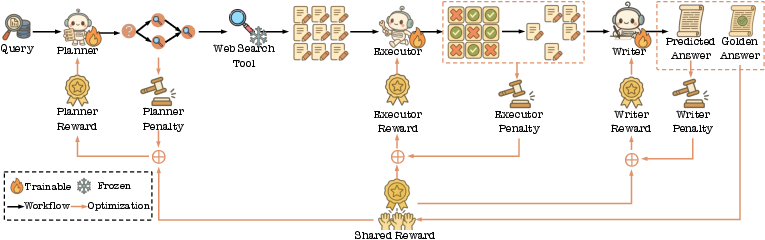

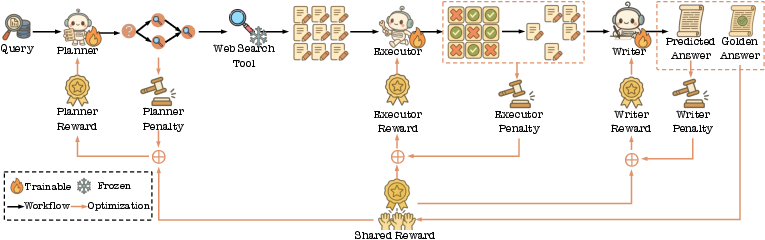

- The paper introduces a modular multi-agent architecture that uses Master, Planner, Executor, and Writer agents to decompose and address complex search queries.

- It employs dynamic DAG-based task planning and iterative re-planning to overcome the limitations of traditional IR systems and static RAG methods.

- Experimental evaluations on real-world data show significant improvements in user engagement metrics, including reduced query changes and increased page views.

Multi-Agent Framework for Next-Generation Search Systems

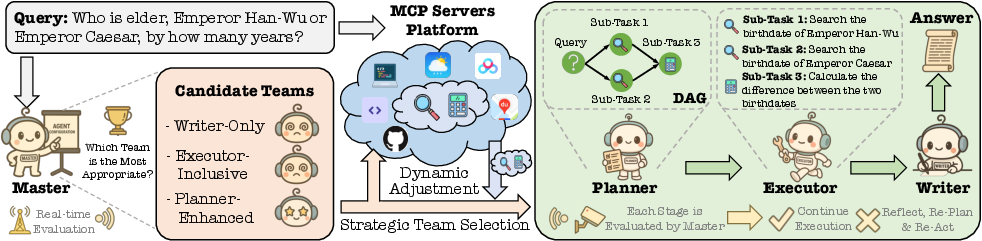

This paper introduces the AI Search Paradigm, an LLM-driven multi-agent system designed to emulate human information processing for next-generation search systems. It addresses the limitations of current information retrieval (IR) systems in handling complex, multi-stage reasoning tasks. These include lexical models, learning-to-rank (LTR) methods, and retrieval-augmented generation (RAG) systems. The core innovation is a modular architecture of four LLM-powered agents (Master, Planner, Executor, and Writer) that dynamically adapt to a wide spectrum of information needs.

System Overview and Agent Roles

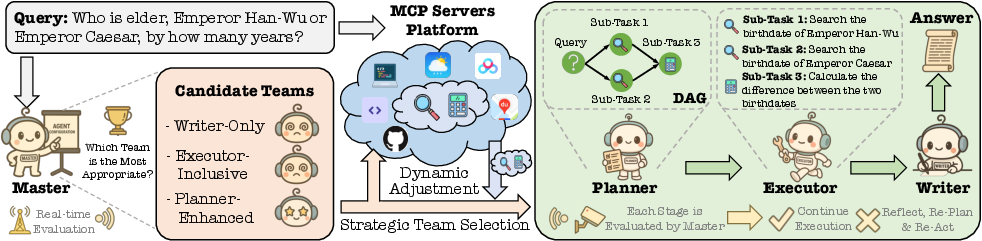

The AI Search Paradigm coordinates specialized agents, each responsible for a distinct phase of the information-seeking process (Figure 1). These agents collaborate to deliver context-rich answers, mirroring human-level inquiry. Compared with a single-agent configuration, the multi-agent setting improves efficiency and avoids bottlenecks.

Figure 1: Overview of the AI Search Paradigm.

- Master Agent: Acts as the initial point of contact. It assesses query complexity, coordinates the subsequent agents, continuously evaluates their performance, and guides re-planning and re-execution when needed.

- Planner Agent: Invoked for complex queries. It selects tools from a Model Context Protocol (MCP) servers platform and decomposes the overarching query into a structured set of sub-tasks represented as a Directed Acyclic Graph (DAG).

- Executor Agent: Executes simple queries or individual sub-tasks. It invokes the external tools selected by the Planner and evaluates the outcomes.

- Writer Agent: Synthesizes information from the completed sub-tasks to generate a coherent, contextually rich response to the user's original query.

The system supports three team configurations: Writer-Only, Executor-Inclusive, and Planner-Enhanced, adapting to different levels of reasoning and execution complexity.
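The following minimal Python sketch makes the three configurations concrete by showing how a Master might route a query to a team. It assumes the following reading of the configurations:

- Writer-Only answers from the LLM's own knowledge.
- Executor-Inclusive adds a tool or retrieval step without planning.
- Planner-Enhanced adds DAG-based decomposition.

The keyword heuristic in `assess_query` is a hypothetical stand-in for the Master's LLM-based complexity assessment. All names are illustrative, not the paper's API.

```python
from enum import Enum


class Team(Enum):
    # Agent line-up for each team configuration, in invocation order (illustrative encoding).
    WRITER_ONLY = ("Writer",)
    EXECUTOR_INCLUSIVE = ("Executor", "Writer")
    PLANNER_ENHANCED = ("Planner", "Executor", "Writer")


def assess_query(query: str) -> dict:
    """Hypothetical keyword heuristic standing in for the Master's LLM-based assessment."""
    q = f" {query.lower()} "
    needs_external_info = any(w in q for w in ("today", "latest", "price", "weather"))
    needs_decomposition = any(w in q for w in (" and ", "compare", " then "))
    return {"external": needs_external_info, "multi_step": needs_decomposition}


def select_team(query: str) -> Team:
    """Route a query to the smallest team that can handle it."""
    a = assess_query(query)
    if a["multi_step"]:
        return Team.PLANNER_ENHANCED      # decompose into a DAG of sub-tasks first
    if a["external"]:
        return Team.EXECUTOR_INCLUSIVE    # one retrieval/tool step, no planning
    return Team.WRITER_ONLY               # answer directly from the LLM's own knowledge


for query in ("What is the capital of France?",
              "What is the weather in Beijing today?",
              "Compare the GDP growth of Japan and Germany"):
    print(f"{query!r} -> {select_team(query).value}")
```

Routing to the smallest sufficient team keeps simple queries cheap and reserves planning for queries that need it.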

Task Planner: Orchestrating Complex Queries

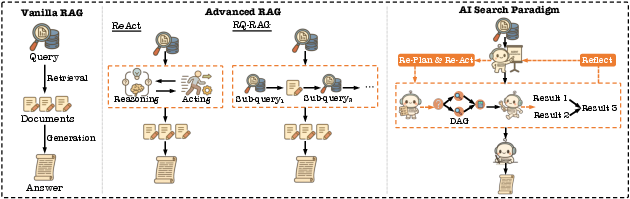

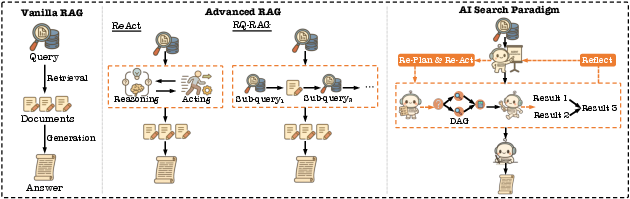

The Planner decomposes complex queries into structured sub-tasks and orchestrates their execution through appropriate tools. Traditional RAG systems, by contrast, rely on static retrieval followed by a fixed response-generation step. The Planner enables dynamic task planning, effective management of multiple tools, and adaptive decision-making. Figure 2 compares the proposed system with existing RAG frameworks.

Figure 2: Comparison of RAG frameworks.

- Left: Vanilla RAG conducts one-shot retrieval followed by direct answer generation.
- Middle: Advanced RAG methods, such as ReAct and RQ-RAG, involve reasoning-action cycles or sequential sub-query execution.
- Right: The AI search paradigm introduces a multi-agent system. The Master guides the Planner to formulate a plan based on the input query. It also continuously evaluates the execution status and completeness of sub-task results, and performs reflection and re-planning when necessary. The Planner constructs a DAG of sub-tasks and dynamically selects the appropriate tools, enabling structured and adaptive multi-step execution. The Executor carries out the specific sub-tasks using these tools, and finally the Writer generates the final answer.

Dynamic Capability Boundary

The system introduces the concept of a Dynamic Capability Boundary: the LLM's reasoning ability combined with a subset of tools selected for the input query, so that each plan is tailored to that query. The system also uses the following tool-management methods:

- DRAFT, an iterative refinement method that optimizes tool documentation for LLM interpretability so that tool descriptions are precise.
- k-means++ clustering of tool APIs by function. This makes the system resilient: when a tool API fails, it can be immediately replaced by a functionally similar one from the same cluster.
- COLT, a retrieval method enhanced by pre-trained language models (PLMs), for tool retrieval. COLT integrates both the semantic and the collaborative dimensions of tool functionality.
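The failover idea can be illustrated with a small, dependency-free sketch. It clusters tool embeddings with k-means++ seeding plus standard Lloyd iterations, then falls back to another tool in the same cluster when a call fails. The 2-D embeddings, tool names, and the `call_with_fallback` helper are hypothetical. The paper does not specify here how tool APIs are represented before clustering.

```python
import random


def _dist2(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans_pp(points, k, iters=20, seed=0):
    """k-means++ seeding followed by Lloyd iterations; returns one cluster label per point."""
    rng = random.Random(seed)
    centers = [list(rng.choice(points))]
    while len(centers) < k:
        # Sample the next center with probability proportional to the squared
        # distance from each point to its nearest existing center.
        d2 = [min(_dist2(p, c) for c in centers) for p in points]
        total = sum(d2)
        if total == 0:                      # every point already coincides with a center
            centers.append(list(rng.choice(points)))
            continue
        r, acc = rng.uniform(0, total), 0.0
        for p, w in zip(points, d2):
            acc += w
            if acc >= r:
                centers.append(list(p))
                break
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: nearest center for every point.
        labels = [min(range(k), key=lambda j: _dist2(p, centers[j])) for p in points]
        # Update step: move each non-empty cluster's center to its members' mean.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centers[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def call_with_fallback(tool_names, labels, primary, call):
    """Call `primary`; if it raises, try the other tools in the same functional cluster."""
    cluster = labels[tool_names.index(primary)]
    candidates = [primary] + [t for t, lab in zip(tool_names, labels)
                              if lab == cluster and t != primary]
    for name in candidates:
        try:
            return name, call(name)
        except RuntimeError:
            continue
    raise RuntimeError(f"every tool in the cluster of {primary!r} failed")


# Toy 2-D "embeddings" of tool descriptions (hypothetical values).
tools = ["weather_api_a", "weather_api_b", "stock_quote_a", "stock_quote_b"]
embeddings = [[0.0, 1.0], [0.1, 0.9], [1.0, 0.0], [0.9, 0.1]]
labels = kmeans_pp(embeddings, k=2)


def flaky_call(name):
    if name == "weather_api_a":
        raise RuntimeError("timeout")       # simulate an outage of the primary tool
    return f"{name}: ok"


print(call_with_fallback(tools, labels, "weather_api_a", flaky_call))
```

Because clustering happens offline, substitution at failure time is a cheap lookup rather than a new retrieval.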

DAG-based Task Planning and Master-Guided Re-Action

The AI Search Paradigm addresses complex queries with a DAG-based dynamic reasoning framework, using a chain-of-thought → structured-sketch prompting strategy. A Master-guided reflection, re-planning, and re-action mechanism monitors sub-task execution in real time and evaluates intermediate results. The paper also proposes a rule-based reward function made up of four terms: final-answer, user, formatting, and intermediate-execution rewards. The Planner is optimized against these rewards with GRPO (Group Relative Policy Optimization).
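As an illustration of DAG-based execution, the sketch below schedules a hypothetical plan with Kahn's topological sort. It groups sub-tasks into layers so that every sub-task runs after the sub-tasks it depends on, and tasks within one layer could run in parallel. It covers only scheduling. The prompting strategy and the Master's re-planning loop are not modeled, and `fake_execute` is a stand-in for the Executor.

```python
def topological_layers(deps):
    """Kahn's algorithm, grouped by layer. `deps` maps every sub-task to the list of
    sub-tasks it depends on (all of which must also be keys). Raises on a cycle."""
    indegree = {t: len(parents) for t, parents in deps.items()}
    children = {t: [] for t in deps}
    for t, parents in deps.items():
        for p in parents:
            children[p].append(t)
    layer = sorted(t for t, d in indegree.items() if d == 0)
    layers, scheduled = [], 0
    while layer:
        layers.append(layer)
        scheduled += len(layer)
        ready = []
        for t in layer:
            for c in children[t]:
                indegree[c] -= 1
                if indegree[c] == 0:
                    ready.append(c)
        layer = sorted(ready)
    if scheduled != len(deps):
        raise ValueError("sub-task graph has a cycle, so it is not a DAG")
    return layers


def run_plan(deps, execute):
    """Execute sub-tasks layer by layer, passing each one its parents' results."""
    results = {}
    for layer in topological_layers(deps):
        for task in layer:  # tasks in one layer are independent and could run in parallel
            results[task] = execute(task, {p: results[p] for p in deps[task]})
    return results


# Hypothetical plan for a comparison query: two independent searches, then a comparison.
plan = {"search_a": [], "search_b": [], "compare": ["search_a", "search_b"]}


def fake_execute(task, inputs):
    """Stand-in for the Executor; records which upstream results it received."""
    return f"{task}({', '.join(sorted(inputs))})"


print(topological_layers(plan))                 # [['search_a', 'search_b'], ['compare']]
print(run_plan(plan, fake_execute)["compare"])  # compare(search_a, search_b)
```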

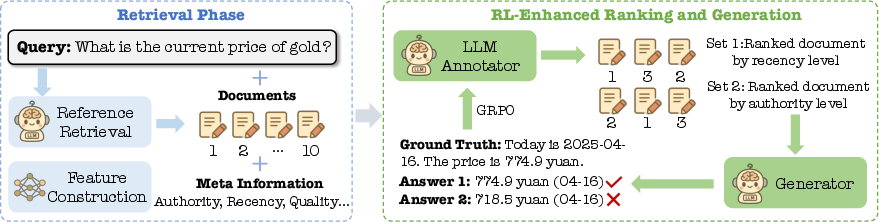
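The Planner's reward and GRPO step described earlier in this section can be sketched as follows. The sketch assumes the four reward terms are combined as a weighted sum; the weights and the numeric form of each term are hypothetical, not taken from the paper. The second function shows GRPO's group-relative advantage: several plans are sampled for the same query, and each reward is normalized by the group mean and standard deviation. GRPO's clipped policy-gradient update and KL term are omitted.

```python
import statistics


def planner_reward(answer_correct, user_score, format_ok, subtask_success_rate,
                   weights=(1.0, 0.5, 0.2, 0.3)):
    """Hypothetical weighted sum of the four rule-based reward terms.
    answer_correct / format_ok are booleans; user_score and subtask_success_rate lie in [0, 1]."""
    terms = (float(answer_correct), user_score, float(format_ok), subtask_success_rate)
    return sum(w * t for w, t in zip(weights, terms))


def group_relative_advantages(rewards, eps=1e-6):
    """GRPO advantage: normalize each sampled plan's reward against its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Four plans sampled for the same query and scored with the rule-based reward.
group = [
    planner_reward(True, 0.9, True, 1.0),
    planner_reward(True, 0.4, True, 0.5),
    planner_reward(False, 0.2, True, 0.5),
    planner_reward(False, 0.0, False, 0.0),
]
print([round(a, 2) for a in group_relative_advantages(group)])
```

Normalizing within the group removes the need for a separate value network, which is the main design motivation behind GRPO.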

Task Executor: Aligning with LLM Preferences

The Executor leverages LLM capabilities, shifting from PLM-based methods toward alignment with LLM preferences. Three signals guide its training: LLM labeling (via RankGPT and TourRank), reference selection, and generation rewards. Distillation transfers the LLM's deep ranking reasoning to an efficient student model. A lightweight retrieval-and-ranking system efficiently processes the massive volume of natural-language sub-queries. The Executor also incorporates LLM-augmented features to capture latent user intentions and contextual relevance.
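One common way to distill an LLM's ranking into a student scorer is a pairwise, RankNet-style loss over the teacher's ordering. The sketch below assumes that choice. The paper's actual distillation objective may differ, and the documents and scores are toy values.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def pairwise_distill_loss(teacher_order, student_scores):
    """RankNet-style loss: for each pair (i above j) in the teacher's ranking,
    add log(1 + exp(-(s_i - s_j))), which is small when the student agrees."""
    total, pairs = 0.0, 0
    for a, i in enumerate(teacher_order):
        for j in teacher_order[a + 1:]:
            total += softplus(-(student_scores[i] - student_scores[j]))
            pairs += 1
    return total / max(pairs, 1)


# The teacher (e.g. an LLM listwise ranker) ordered three documents as d2 > d0 > d1.
teacher = ["d2", "d0", "d1"]
agreeing_student = {"d2": 3.0, "d0": 2.0, "d1": 1.0}
disagreeing_student = {"d2": 1.0, "d0": 2.0, "d1": 3.0}
print(pairwise_distill_loss(teacher, agreeing_student))
print(pairwise_distill_loss(teacher, disagreeing_student))
```

The student only needs to produce a scalar score per document, so at serving time it avoids the LLM's listwise inference cost.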

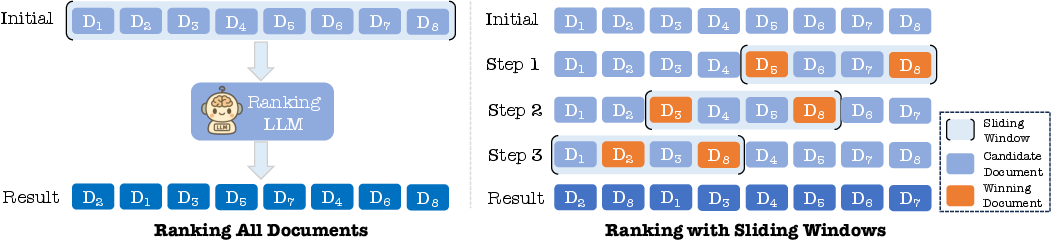

LLM-based Ranking Methods

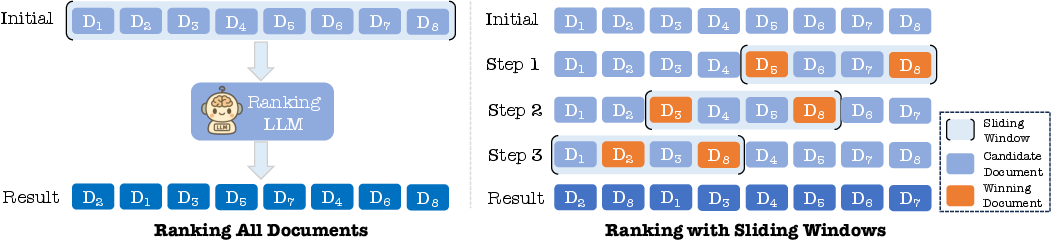

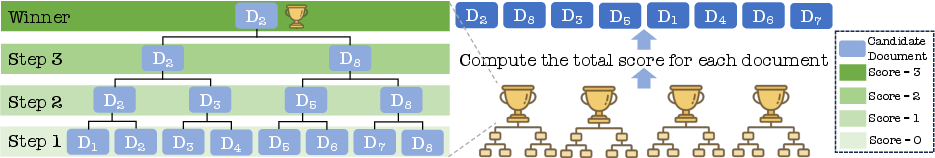

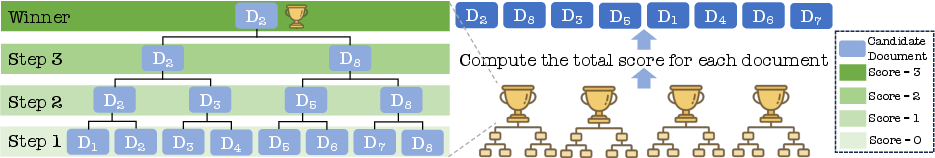

The paper details two listwise ranking methods, RankGPT and TourRank. RankGPT uses a sliding-window approach (Figure 3) to re-rank large candidate sets efficiently without exceeding the LLM's context window. TourRank, inspired by sports tournaments, uses a group-stage format to speed up the ranking process (Figure 4).

Figure 3: The illustration of ranking all documents and ranking with sliding windows.
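The sliding-window procedure of Figure 3 can be sketched in a few lines. `oracle_rank` is a toy stand-in for the LLM call that reorders one window. The small `window` and `step` values are chosen for readability only.

```python
def sliding_window_rerank(docs, rank_window, window=4, step=2):
    """Re-rank `docs` (initial retrieval order) by sliding a window from the back of
    the list to the front. `rank_window` reorders one window by relevance; in RankGPT
    that is a single LLM call."""
    assert 0 < step <= window
    docs = list(docs)
    end = len(docs)
    start = max(end - window, 0)
    while True:
        docs[start:end] = rank_window(docs[start:end])
        if start == 0:
            break
        # Overlapping windows: the best documents of this window stay inside the next one.
        end -= step
        start = max(end - window, 0)
    return docs


# Toy stand-in for the LLM: relevance is simply the document's id (higher is better).
def oracle_rank(window_docs):
    return sorted(window_docs, reverse=True)


print(sliding_window_rerank(list(range(10)), oracle_rank))
# -> [9, 8, 1, 0, 3, 2, 5, 4, 7, 6]
```

With `step` smaller than `window`, the top `window - step` documents of each window are carried into the next one. A single back-to-front pass therefore guarantees that the top `window - step` positions of the final list are correct (here, 9 and 8), while the rest is only partially sorted.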

Figure 4: The illustration of ranking with a tournament strategy.
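A simplified group-stage tournament in the spirit of TourRank is sketched below. It works as follows:

1. Documents are shuffled into groups.
2. A selector advances the best few documents per group. In TourRank this is an LLM call; here it is the toy `oracle_select`.
3. Survivors earn points.
4. Points from several independently shuffled tournaments determine the final ranking.

The group size, number of advancing documents, and stage schedule are assumptions for illustration, not the paper's settings.

```python
import random


def tournament_rank(docs, select_top, group_size=4, advance=2, tournaments=3, seed=0):
    """Simplified TourRank-style ranking. Each tournament runs group stages: candidates
    are shuffled into groups, `select_top(group, m)` picks the m most relevant per
    group, and every survivor earns one point. Points summed over several
    independently shuffled tournaments give the final order."""
    assert group_size > advance >= 1   # guarantees each stage shrinks the field
    rng = random.Random(seed)
    points = {d: 0 for d in docs}
    for _ in range(tournaments):
        alive = list(docs)
        while len(alive) > 1:
            # Final stage: once one group holds everyone, keep only the single winner.
            m = advance if len(alive) > group_size else 1
            rng.shuffle(alive)
            winners = []
            for g in range(0, len(alive), group_size):
                group = alive[g:g + group_size]
                winners.extend(select_top(group, min(m, len(group))))
            for d in winners:
                points[d] += 1
            alive = winners
    return sorted(docs, key=lambda d: points[d], reverse=True)


# Toy stand-in for the LLM: relevance is the document's id (higher is better).
def oracle_select(group, m):
    return sorted(group, reverse=True)[:m]


print(tournament_rank(list(range(16)), oracle_select)[:4])
```

Repeating the tournament with different shuffles reduces the influence of unlucky group draws. This is one reason to accumulate points rather than trust a single bracket.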

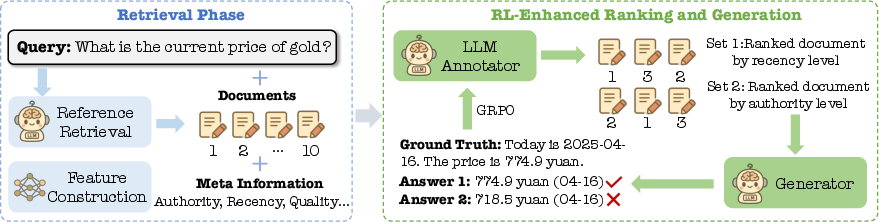

The Executor's ranking models are further enhanced with semantic features derived from LLMs. Figure 5 illustrates the generation reward used to guide the Executor's training.

Figure 5: The illustration of generation reward.

Robust LLM-based Generation

The paper presents the following techniques for robust generation:

- ATM, a method that combines a multi-agent setup with adversarial tuning to make the generator more robust.
- PA-RAG, a preference alignment technique for the RAG scenario. It aligns LLMs comprehensively with RAG requirements through multi-perspective preference optimization, focusing on response informativeness, response robustness, and citation quality.
- Reinforcement Learning with Human Behaviors (RLHB), an LLM alignment method proposed after exploring how to align LLMs directly with online human behavior.
- The Multi-Agent PPO (MAPPO) algorithm, which the AI search system uses to align the individual objectives of all modules with the shared goal of maximizing the quality and accuracy of generated answers (Figure 6).

Figure 6: The illustration of MMOA-RAG, which leverages the Multi-Agent PPO algorithm to align the individual objectives of all modules with the shared goal of maximizing the quality and accuracy of generated answers.
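The core of MAPPO as used here is that all modules are trained toward one shared reward. The sketch below shows the PPO clipped surrogate objective and credits every module with the same team advantage: the shared reward minus a centralized baseline. It is a single-step simplification. The critic, GAE, and the module names (`query_rewriter`, `doc_selector`, `generator`) are hypothetical and not taken from MMOA-RAG's implementation.

```python
import math


def clipped_surrogate(logp_new, logp_old, advantages, clip_eps=0.2):
    """PPO clipped surrogate objective (to be maximized), averaged over actions."""
    total = 0.0
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(lp_new - lp_old)                 # pi_new(a|s) / pi_old(a|s)
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        total += min(ratio * adv, clipped * adv)          # pessimistic bound
    return total / len(advantages)


def mappo_objectives(agent_batches, shared_reward, baseline, clip_eps=0.2):
    """Every module (agent) is credited with the same team advantage, computed from the
    shared answer-quality reward minus a centralized baseline (critic estimate)."""
    team_advantage = shared_reward - baseline
    return {
        name: clipped_surrogate(new, old, [team_advantage] * len(new), clip_eps)
        for name, (new, old) in agent_batches.items()
    }


# Hypothetical modules of a RAG pipeline, each with log-probs of its own actions.
batches = {
    "query_rewriter": ([-0.9], [-1.0]),
    "doc_selector": ([-0.5, -0.7], [-0.6, -0.7]),
    "generator": ([-2.0, -1.5, -1.1], [-2.1, -1.4, -1.2]),
}
print(mappo_objectives(batches, shared_reward=0.8, baseline=0.5))
```

Sharing one advantage across modules is what ties each module's update to the final answer quality rather than to a module-local proxy.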

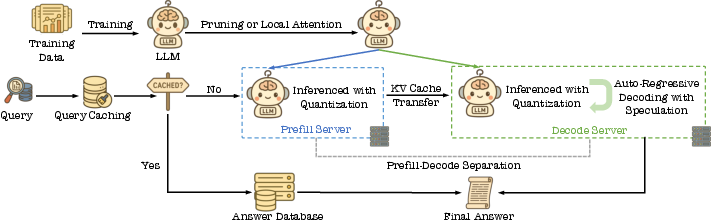

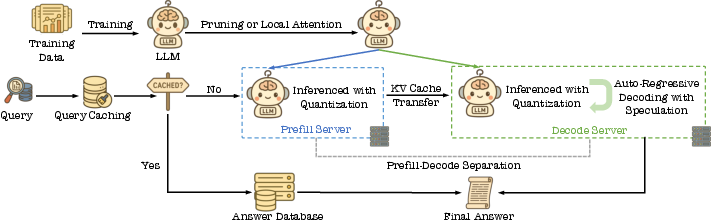

Light-Weighting LLM Generation

To optimize LLM inference, the paper explores light-weighting methods in two categories:

- Algorithmic-level optimizations, which include local attention and pruning.
- Infrastructure-level optimizations, which include:
  - exploiting input-output similarity;
  - reducing output length;
  - reusing answers for semantically similar queries (semantic caching);
  - quantization;
  - prefill-decode separation;
  - speculative decoding.

Figure 7 illustrates the technical pipeline of Lightning LLM’s generation.
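Semantic caching can be illustrated with a minimal sketch: embed each query, and reuse a stored answer when a new query's cosine similarity to a cached query reaches a threshold. The bag-of-words `toy_embed`, the 0.9 threshold, and the linear scan are assumptions for illustration. A production cache would likely use a learned encoder and an approximate nearest-neighbor index.

```python
import math


def cosine(u, v):
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


class SemanticCache:
    """Reuse a stored answer when a new query is semantically close to a cached one."""

    def __init__(self, embed, threshold=0.9):
        self.embed = embed            # query -> vector; any sentence encoder would do
        self.threshold = threshold    # minimum cosine similarity that counts as a hit
        self.entries = []             # list of (embedding, answer); linear scan for clarity

    def get(self, query):
        q = self.embed(query)
        best_answer, best_sim = None, -1.0
        for emb, ans in self.entries:
            sim = cosine(q, emb)
            if sim > best_sim:
                best_answer, best_sim = ans, sim
        return best_answer if best_sim >= self.threshold else None

    def put(self, query, answer):
        self.entries.append((self.embed(query), answer))


def answer(query, cache, generate):
    """Serve from the cache when possible; otherwise call the LLM and cache the result."""
    hit = cache.get(query)
    if hit is not None:
        return hit
    result = generate(query)
    cache.put(query, result)
    return result


# Toy bag-of-words embedding over a tiny fixed vocabulary (hypothetical).
VOCAB = ["weather", "beijing", "tomorrow", "stock", "price"]


def toy_embed(query):
    words = set(query.lower().split())
    return [1.0 if w in words else 0.0 for w in VOCAB]


llm_calls = []


def fake_llm(query):
    llm_calls.append(query)
    return f"generated answer for: {query}"


cache = SemanticCache(toy_embed)
answer("weather in beijing tomorrow", cache, fake_llm)
answer("tomorrow beijing weather", cache, fake_llm)   # cache hit, no LLM call
answer("stock price", cache, fake_llm)                # cache miss
print(len(llm_calls))
```

The threshold trades hit rate against the risk of serving an answer to a query that only looks similar.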

Figure 7: The technical pipeline of Lightning LLM’s generation.
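Speculative decoding can be sketched in its greedy form. A cheap draft model proposes several tokens, and the target model verifies them, keeping the longest prefix it agrees with plus one token of its own. The output is therefore identical to decoding with the target alone. The toy digit models are hypothetical. In this sequential sketch the target is still called once per token; the real speedup comes from verifying all proposed tokens in one batched forward pass, as noted in the code.

```python
def greedy_decode(model, prompt, n):
    """Reference: plain greedy decoding with a single model."""
    seq = list(prompt)
    for _ in range(n):
        seq.append(model(seq))
    return seq


def speculative_decode(target, draft, prompt, n, k=4):
    """Greedy speculative decoding. `target` and `draft` map a token sequence to the
    next (greedy) token. The cheap draft proposes up to k tokens; the target verifies
    them and keeps the longest agreeing prefix, so the output equals greedy_decode(target)."""
    seq = list(prompt)
    goal = len(prompt) + n
    while len(seq) < goal:
        proposal = []
        for _ in range(min(k, goal - len(seq))):
            proposal.append(draft(seq + proposal))
        # In a real system all these target checks happen in ONE batched forward pass.
        accepted = []
        for tok in proposal:
            expected = target(seq + accepted)
            accepted.append(expected)
            if tok != expected:
                break            # first disagreement: keep the target's token, drop the rest
        else:
            if len(seq) + len(accepted) < goal:
                accepted.append(target(seq + accepted))  # free extra token when all agree
        seq.extend(accepted)
    return seq


# Toy models over digits: the target counts upward mod 10; the draft errs after a 4.
def target_model(seq):
    return (seq[-1] + 1) % 10


def draft_model(seq):
    return 0 if seq[-1] == 4 else (seq[-1] + 1) % 10


print(speculative_decode(target_model, draft_model, [0], 12))
```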

Evaluation and Results

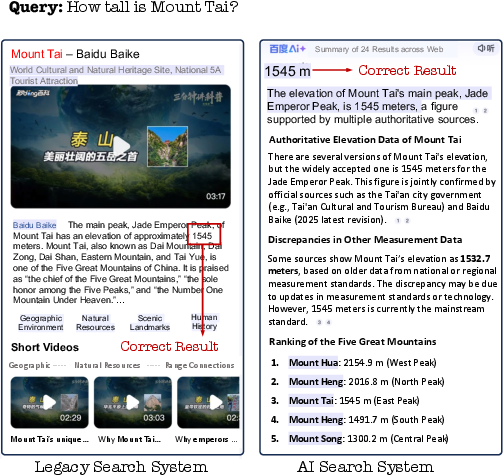

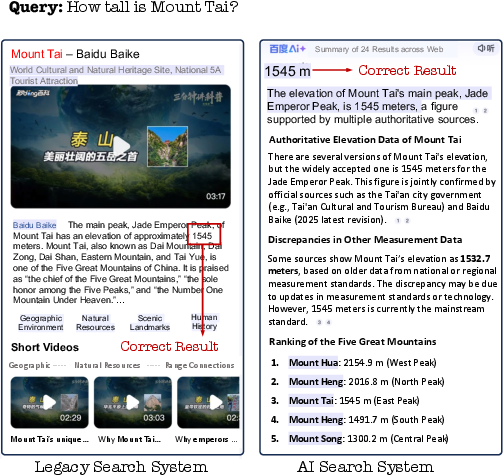

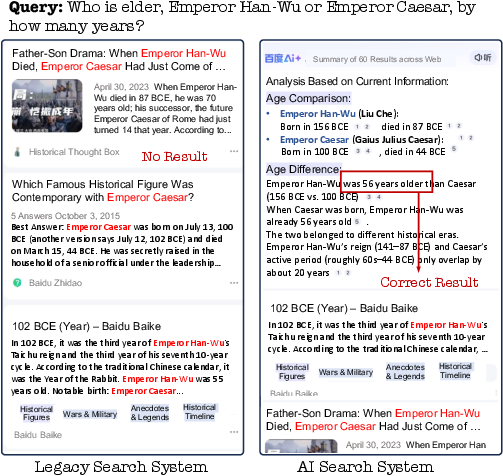

The paper presents experimental results obtained from real-world traffic at Baidu Search. Human evaluations demonstrate that the AI Search system delivers substantial improvements, particularly for moderately complex and complex queries. Online A/B tests reveal significant enhancements in user engagement, including a decrease in change query rate (CQR) and an increase in page views (PV), daily active users (DAU), and dwell time.

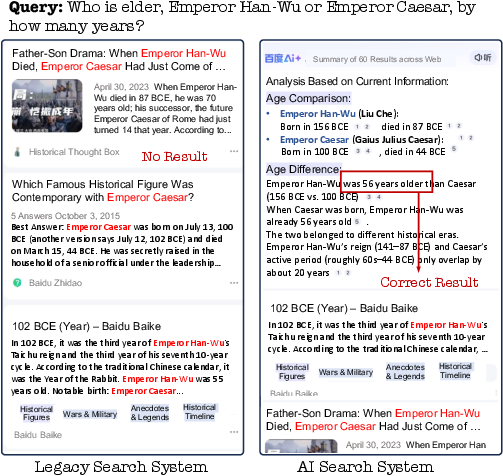

The case study in Figure 8 compares the AI search system and the legacy system for both simple and complex queries, highlighting the advantages of the AI search system in effectively addressing complex queries.

Figure 8: Online case comparisons of the AI search system to the legacy search system.

Conclusion

The AI Search Paradigm introduces a novel approach to information seeking, leveraging a modular, multi-agent architecture. The proposed system addresses the limitations of traditional IR and RAG systems through proactive planning, dynamic tool integration, and iterative reasoning. The paper presents a structured blueprint for future research in AI-driven information seeking, highlighting opportunities for optimizing collaborative agents and seamless tool integration.