- The paper introduces MarketingFM, a framework leveraging LLMs to generate and evaluate ad copy that increases CTR by up to 9% in e-commerce.

- It employs Retrieval-Augmented Generation and task chaining to produce keyword-specific, concise ads using real-time product data.

- The automated evaluation system, integrating rule-based and LLM assessments, achieves an 89.57% agreement with human evaluations.

LLMs for Customized Marketing Content Generation and Evaluation at Scale

The paper presents MarketingFM, a framework that uses LLMs to generate and evaluate customized marketing content at scale, addressing the limitations of the generic, template-based marketing content common in e-commerce.

Introduction and Motivation

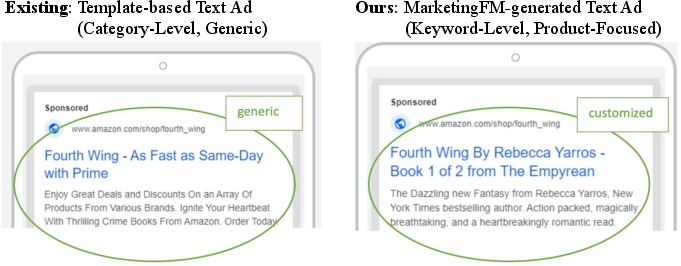

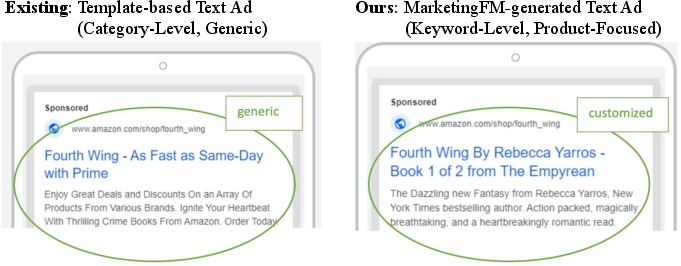

The need for effective offsite marketing content in e-commerce is well-established, as it drives traffic to retail websites via external platforms. The prevalent use of generic, template-based ads means that such content often fails to align well with landing page specifics, thus reducing consumer engagement. To address these limitations, the paper introduces MarketingFM, a system designed to automate the generation of keyword-specific ad copy by integrating multiple data sources, thus minimizing human intervention while maintaining alignment with marketing principles.

The paper reports up to a 9% increase in click-through rate (CTR), 12% more impressions, and a 0.38% lower cost per click (CPC) compared with template-based ads.

Figure 1: A comparison of two paid search ads for an e-commerce website, illustrating the enhanced relevance and engagement of product-specific ads over generic ones.

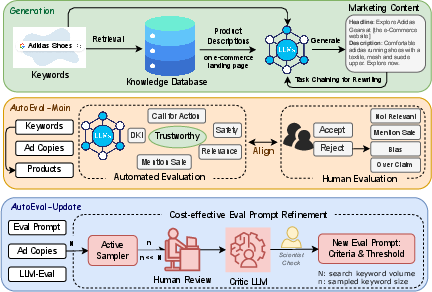

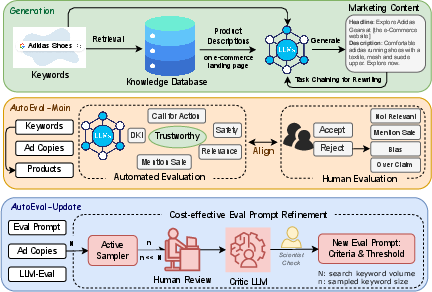

MarketingFM System Architecture

Retrieval-Augmented Content Generation

MarketingFM uses Retrieval-Augmented Generation (RAG), which enhances the relevance of generated ad content by grounding it in real-time product data. This data is retrieved using semantic embedding-based searches from a knowledge base (KB) that indexes product metadata.
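The retrieval step described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the bag-of-words `embed` function stands in for a learned embedding model, and the `KB` entries, `retrieve`, and `build_prompt` are hypothetical names introduced only for this example.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would call an embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(keyword: str, kb: list[dict], k: int = 2) -> list[dict]:
    """Return the k knowledge-base entries most similar to the search keyword."""
    query = embed(keyword)
    return sorted(
        kb,
        key=lambda p: cosine(query, embed(p["title"] + " " + p["description"])),
        reverse=True,
    )[:k]

def build_prompt(keyword: str, products: list[dict]) -> str:
    """Ground the generation prompt in the retrieved product metadata."""
    facts = "\n".join(f"- {p['title']}: {p['description']}" for p in products)
    return f"Write a search ad for the keyword '{keyword}' using only these facts:\n{facts}"

# Hypothetical product metadata, for illustration only.
KB = [
    {"title": "Trail running shoes", "description": "lightweight waterproof shoes for trail running"},
    {"title": "Espresso machine", "description": "compact espresso machine with milk frother"},
    {"title": "Road running shoes", "description": "cushioned shoes for road running"},
]

top = retrieve("waterproof trail running shoes", KB, k=2)
prompt = build_prompt("waterproof trail running shoes", top)
```

The prompt built this way contains only the two shoe products, so the generator has no unrelated catalog entries to draw on.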

Task Chaining for Content Generation

To meet specific ad requirements such as character limits and the inclusion of a call to action (CTA), the system employs task chaining. A summarization step refines and condenses the generated content into concise ad copy that complies with advertising standards.
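One way to picture the chaining is a draft step followed by a condense step that repeats until the constraints hold. The sketch below assumes a 90-character limit and a small CTA phrase list, neither of which comes from the paper; `stub_draft` and `stub_condense` are hypothetical stand-ins for LLM calls.

```python
from typing import Callable

CTA_PHRASES = ("shop now", "buy now", "order today")  # hypothetical CTA list

def has_cta(copy: str) -> bool:
    """True if the copy contains any of the accepted CTA phrases."""
    return any(phrase in copy.lower() for phrase in CTA_PHRASES)

def chain_ad_copy(
    draft_fn: Callable[[str], str],
    condense_fn: Callable[[str, int], str],
    context: str,
    max_chars: int = 90,   # assumed limit, not taken from the paper
    max_rounds: int = 3,
) -> str:
    """Draft once, then condense until the length and CTA constraints are met."""
    copy = draft_fn(context)
    for _ in range(max_rounds):
        if len(copy) <= max_chars and has_cta(copy):
            return copy
        copy = condense_fn(copy, max_chars)
    if len(copy) <= max_chars and has_cta(copy):
        return copy
    raise ValueError("could not produce compliant ad copy")

def stub_draft(context: str) -> str:
    """Stand-in for the generation call: deliberately long and without a CTA."""
    return (f"Discover our {context} collection with a wide range of styles, "
            "colors, and sizes for every occasion and every budget you can imagine.")

def stub_condense(text: str, max_chars: int) -> str:
    """Stand-in for the summarization call: trim at a word boundary, add a CTA."""
    cta = " Shop now."
    head = text[: max_chars - len(cta)].rsplit(" ", 1)[0].rstrip(",.")
    return head + cta

ad = chain_ad_copy(stub_draft, stub_condense, "trail running shoes")
```

Keeping the constraint check outside the model calls means a non-compliant draft is never returned silently; it is either condensed again or rejected.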

Figure 2: An illustration of the MarketingFM framework, detailing the ad copy generation process, AutoEval-Main, and AutoEval-Update.

Automated Evaluation Methodologies

AutoEval-Main

AutoEval-Main combines rule-based checks with LLM-as-a-Judge approaches to automate content evaluation and ensure alignment with marketing goals. It assesses ad quality across several criteria: relevance, generalization, safety, and diversity, integrating both machine-driven scoring and policy compliance checks.
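A hedged sketch of how rule-based checks and an LLM judge might be combined: cheap deterministic rules run first, and the judge is consulted only for ads that pass them. The banned-phrase list, the 90-character limit, the 0.5 threshold, and `stub_judge` (a keyword-overlap stand-in for an LLM call) are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Callable

BANNED_PHRASES = ("guaranteed", "100% free")  # hypothetical policy list

@dataclass
class Verdict:
    accepted: bool
    reasons: list[str] = field(default_factory=list)

def rule_checks(ad: str, max_chars: int = 90) -> list[str]:
    """Deterministic policy checks; returns a list of violation codes."""
    reasons = []
    if len(ad) > max_chars:
        reasons.append("too_long")
    for phrase in BANNED_PHRASES:
        if phrase in ad.lower():
            reasons.append(f"banned_phrase:{phrase}")
    return reasons

def evaluate(
    ad: str,
    keyword: str,
    judge: Callable[[str, str], dict[str, float]],
    threshold: float = 0.5,
) -> Verdict:
    """Run rules first; call the judge only when the rules pass."""
    reasons = rule_checks(ad)
    if reasons:
        return Verdict(False, reasons)
    scores = judge(ad, keyword)  # criterion name -> score in [0, 1]
    low = [f"low_{name}" for name, score in scores.items() if score < threshold]
    return Verdict(not low, low)

def stub_judge(ad: str, keyword: str) -> dict[str, float]:
    """Stand-in for an LLM judge: relevance = share of keyword terms in the ad."""
    words = set(ad.lower().replace(".", " ").split())
    terms = keyword.lower().split()
    return {"relevance": sum(t in words for t in terms) / len(terms)}

good = evaluate("Waterproof trail running shoes. Shop now.", "trail running shoes", stub_judge)
overclaim = evaluate("Guaranteed results. Shop now.", "trail running shoes", stub_judge)
off_topic = evaluate("Espresso machine deals. Shop now.", "trail running shoes", stub_judge)
```

Returning the reasons alongside the decision keeps rejections auditable, which matters when the criteria themselves are later revised.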

AutoEval-Update

This system addresses the dynamic nature of marketing criteria by implementing an LLM-human collaborative framework. AutoEval-Update facilitates continuous refinement of evaluation prompts, dynamically adapting to evolving criteria via active sampling and alignment reports generated by critic models. This iterative improvement process significantly reduces reliance on human oversight while maintaining evaluation robustness.
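The summary does not specify how samples are chosen or what an alignment report contains, so the sketch below makes two assumptions: active sampling is approximated by uncertainty sampling (picking the ads whose judge score lies closest to the decision threshold), and the alignment report is a plain dictionary listing judge-human disagreements. All names are hypothetical.

```python
def select_for_review(
    scored: list[tuple[str, float]], threshold: float = 0.5, budget: int = 2
) -> list[tuple[str, float]]:
    """Pick the `budget` ads whose judge score is closest to the threshold."""
    return sorted(scored, key=lambda item: abs(item[1] - threshold))[:budget]

def alignment_report(judge_labels: dict[str, bool], human_labels: dict[str, bool]) -> dict:
    """Summarize where the judge and human reviewers disagree."""
    shared = judge_labels.keys() & human_labels.keys()
    disagreements = sorted(i for i in shared if judge_labels[i] != human_labels[i])
    agreement = 1 - len(disagreements) / len(shared) if shared else None
    return {"n_reviewed": len(shared), "agreement": agreement, "disagreements": disagreements}

# Illustrative values only; none of these come from the paper.
scored = [("a", 0.95), ("b", 0.52), ("c", 0.10), ("d", 0.45)]
to_review = select_for_review(scored, threshold=0.5, budget=2)

judge_labels = {ad_id: score >= 0.5 for ad_id, score in to_review}
human_labels = {"b": False, "d": False}  # hypothetical reviewer decisions
report = alignment_report(judge_labels, human_labels)
```

In a full loop, the listed disagreements would be handed to whatever component revises the evaluation prompt, and the cycle would repeat on a fresh sample.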

Figure 3: An illustration of the AutoEval-Update pipeline for self-refinement of the evaluation prompt.

Experimental Results and Observations

The framework's efficacy is validated through extensive offline and online evaluations, encompassing a range of benchmarking and real-world testing:

- Offline Evaluation: Generated ad copy reached an acceptance rate of over 97%; the main rejection reasons were irrelevance and over-claiming.

- Online A/B Testing: Demonstrated notable lifts in CTR and impressions across test keywords when employing the system’s content generation capabilities.

- AutoEval-Main Performance: The evaluation framework achieved an 89.57% agreement rate with human evaluations, showcasing its capacity to replicate human scoring accuracy while significantly easing resource demands.

- AutoEval-Update Impact: Reduced the discrepancy between model and human evaluations through threshold adjustments and real-time adaptation to changing criteria, underscoring the benefit of iterative refinement.
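The agreement rate and the threshold adjustment mentioned in the results can be illustrated with a small calculation. The scores and labels below are made up for the example, and the grid search over candidate thresholds is an assumed procedure rather than the one used in the paper.

```python
def agreement_rate(judge_scores: list[float], human_labels: list[bool], threshold: float) -> float:
    """Share of ads where the thresholded judge score matches the human label."""
    matches = sum((s >= threshold) == h for s, h in zip(judge_scores, human_labels))
    return matches / len(human_labels)

def best_threshold(
    judge_scores: list[float], human_labels: list[bool], candidates: list[float]
) -> float:
    """Candidate threshold with the highest agreement (first one wins ties)."""
    return max(candidates, key=lambda t: agreement_rate(judge_scores, human_labels, t))

# Illustrative data only.
scores = [0.9, 0.8, 0.55, 0.4, 0.2]
humans = [True, True, False, False, False]

before = agreement_rate(scores, humans, threshold=0.5)
chosen = best_threshold(scores, humans, candidates=[0.3, 0.5, 0.6, 0.7])
after = agreement_rate(scores, humans, threshold=chosen)
```

In this toy data, the ad scored 0.55 is accepted by the judge at a threshold of 0.5 but rejected by the human reviewer, so raising the threshold removes the only disagreement.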

Conclusion

The paper demonstrates the potential of MarketingFM and its associated evaluation systems in transforming e-commerce marketing practices. By leveraging LLMs to automate and optimize ad content generation and evaluation, the framework supports scalable, effective marketing strategies while minimizing manual intervention. Future directions include expanding the system’s application to other marketing avenues like social media and outbound emails, as well as enhancing the system's efficiency through model distillation and continual learning advancements.